叩丁狼 K8s: Operations and Management


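I: The Helm Package Manager

1: What is Helm

Helm is the most widely used package manager in the Kubernetes ecosystem, often described as the "apt/yum" of K8s. Its core idea is to package, deploy, upgrade, and manage complex applications in a standard way through Charts, which greatly reduces the operational complexity of multi-resource, multi-environment setups.

Helm is a tool for managing Kubernetes packages called charts.

Helm can do the following:

- Create new charts from scratch
- Package charts into archive (tgz) files
- Interact with repositories where charts are stored
- Install and uninstall charts in an existing Kubernetes cluster
- Manage the release cycle of charts installed with Helm

Helm has three important concepts:

- chart: the bundle of information needed to create an instance of a Kubernetes application.
- config: configuration that can be merged into a packaged chart to create a releasable object.
- release: a running instance of a chart combined with a specific config.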

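2: Positioning and value

Helm addresses three pain points of plain Kubernetes deployments:

- Fragmented configuration: dozens of YAML files (Deployment, Service, ConfigMap, ...) are bundled into one reusable package.
- Hard-to-adapt environments: one set of templates plus several values files covers development, testing, and production.
- Uncontrolled versions: every release keeps a revision history that supports upgrades, rollbacks, and (with the --atomic flag) all-or-nothing upgrades.

Helm is a CNCF graduated project. The current mainstream version is Helm 3, which removed the server-side Tiller component and is a pure client-side architecture.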

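3: Core concepts

3.1: Chart

A chart is Helm's "installation package": a standardized directory layout containing all of an application's resource definitions, templates, configuration, and metadata.

plaintext

# A typical directory layout:
my-chart/
├── Chart.yaml          # metadata (name, version, description, ...)
├── values.yaml         # default configuration (can be overridden)
├── charts/             # dependent sub-charts
├── templates/          # Go template files (rendered into the final YAML)
│   ├── deployment.yaml
│   ├── service.yaml
│   └── _helpers.tpl    # reusable template helpers
└── README.md           # usage notes

3.2: Release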

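A release is a uniquely named running instance of a chart in the cluster. One chart can be installed as many releases.

Each release has its own revision history, configuration, and lifecycle (install / upgrade / rollback / uninstall).

3.3: Repository

A repository is where charts are stored and distributed, similar to Docker Hub or a Maven repository.

- Public: Artifact Hub (the official index, which replaced the retired Helm Hub), Bitnami, and others.
- Private: ChartMuseum, Harbor, JFrog Artifactory, and others (for use inside an organization).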

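3.4: Values

values.yaml holds a chart's default configuration.

At deploy time it can be overridden with --set or with a custom file such as values-prod.yaml, which is what makes "package once, reuse across environments" possible.

Precedence, highest first: command-line --set > custom values files (when several -f files are given, later ones win) > the chart's default values.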
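The precedence rule can be pictured as a layered dictionary merge. The following is a minimal Python sketch of that idea, not Helm's implementation: the `deep_merge` helper and all three sample dictionaries are hypothetical, and it only models the common case where nested maps merge key by key and scalars are replaced.

```python
from copy import deepcopy


def deep_merge(base: dict, override: dict) -> dict:
    """Return a new dict in which `override` wins; nested dicts merge key by key."""
    merged = deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical layers, lowest precedence first.
chart_defaults = {"replicaCount": 1, "image": {"tag": "1.0.0", "pullPolicy": "IfNotPresent"}}
values_prod = {"replicaCount": 3, "image": {"tag": "1.2.0"}}  # like -f values-prod.yaml
set_flags = {"image": {"tag": "1.2.1"}}                       # like --set image.tag=1.2.1

effective = deep_merge(deep_merge(chart_defaults, values_prod), set_flags)
print(effective)
```

Keys that no higher layer mentions (here `image.pullPolicy`) keep their chart default, which is why an environment file only needs to list the settings that differ.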

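3.5: Templates

Templates are written in the Go template language and rendered together with the values to produce the Kubernetes YAML manifests.

They support conditionals, loops, variables, functions, and reusable named templates, so one chart can adapt to different scenarios.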

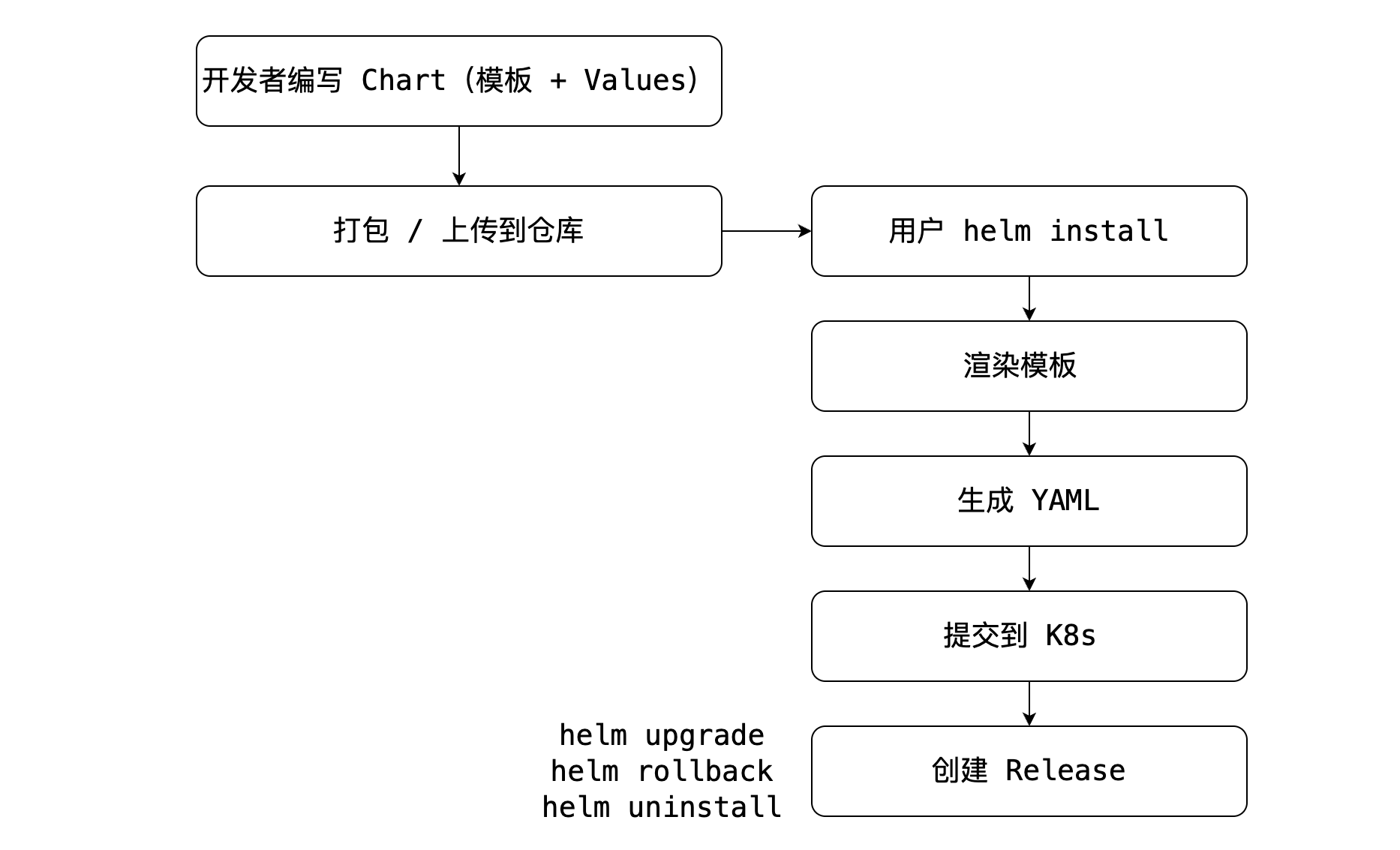

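4: Workflow and a MySQL test run

(The chart/release relationship feels a lot like Docker's image/container relationship.)

A test run using MySQL as the example: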

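bash

# ----- Install Helm and configure repositories -----

# Install Helm (macOS example)
brew install helm

# Add public repositories (e.g. Bitnami)
helm repo add bitnami https://charts.bitnami.com/bitnami
helm repo add aliyun https://apphub.aliyuncs.com/stable
helm repo add azure http://mirror.azure.cn/kubernetes/charts

# List the configured repositories
helm repo list

# Refresh the repository index
helm repo update

# Search for a chart and read its README
helm search repo mysql
helm show readme bitnami/mysql

# ----- Install and manage a release -----

# Install (release name, chart version, custom values file)
helm install my-mysql bitnami/mysql --version 9.10.1 -f values-prod.yaml

# List all releases
helm list

# Show release status and history
helm status my-mysql
helm history my-mysql

# Upgrade (change configuration or version); add --reuse-values or repeat
# -f values-prod.yaml to keep the overrides given at install time
helm upgrade my-mysql bitnami/mysql --set auth.rootPassword=newpass

# Roll back to revision 1
helm rollback my-mysql 1

# Uninstall (keep the release history)
helm uninstall my-mysql --keep-history

Chart development and debugging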

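shell

# Create a new chart
helm create my-chart

# Render the templates locally (nothing is deployed)
helm template my-chart ./my-chart

# Debug install (preview the final YAML)
helm install --dry-run --debug my-chart ./my-chart

# Package the chart
helm package ./my-chart

5: Comparison with plain YAML and advantages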

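Simpler deployment and reuse

- Deploy complex applications (PostgreSQL, a Redis cluster, ELK, ...) with one command instead of hand-writing large amounts of YAML.
- Charts can be shared within a team or the community, which avoids reinventing the wheel.

Flexible configuration and multi-environment support

- One set of templates adapts to development, testing, and production through different values files.
- Conditional rendering and adjustable resources (replica count, resource limits, image version, and so on).

Strong version management

- Atomic upgrades: with the --atomic flag, a failed upgrade is rolled back automatically, keeping the cluster consistent.
- Release history: helm history lists every revision, and helm rollback returns to any of them.
- Dependency management: sub-charts are resolved and installed automatically, with nested dependencies and conditional activation.

Security and ecosystem

- No Tiller, so permissions are easier to control; chart signature verification is supported.
- Public repositories offer thousands of vendor- and community-maintained charts covering databases, middleware, monitoring, CI/CD, and more.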

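| Approach | Pros | Cons |

|---|---|---|

| Plain YAML | Simple, direct, no extra tooling | Scattered configuration, hard to manage across environments, no release versioning, error-prone |

| Helm Chart | Standardized, reusable, multi-environment, versioning and rollback, dependency management | Requires learning charts and Go templates |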

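6: A practical deployment example

Suppose we want a complete Helm chart for a Spring Boot 3 (Java 17) application that depends on MySQL 8 and Redis 7. The application JAR is already built but has not been deployed to any server yet.

6.1: Approach

Create the basic chart skeleton: generate the standard directory layout with a Helm command as the starting point.

Customize the core template files:

- Deployment: the Spring Boot container (Java 17 runtime, mounting or pulling the JAR, JVM arguments).
- Service: exposes the application port for internal or external access.
- ConfigMap: application configuration (such as database and Redis connection settings).
- Secret: sensitive data (such as the MySQL and Redis passwords).

Manage the dependent services: pull in the Bitnami MySQL and Redis charts as Helm dependencies so everything is deployed together.

Configure values.yaml: define the tunable parameters (ports, resource limits, dependency settings, and so on).

Test and deploy: debug the templates locally, install the chart, and verify that the application runs.

6.2: Environment preparation

Helm 3 is installed (verify with helm version).

Access to the K8s cluster is configured (verify with kubectl get nodes).

Application JAR: assumed to be named my-app-1.0.0.jar.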

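6.3: Building the chart step by step

1️⃣ Create the chart skeleton

shell

# Create the chart
helm create my-app

# Enter the chart directory (the files below are edited inside it)
cd my-app

Helm has now generated the chart skeleton:

plaintext

my-app/
├── Chart.yaml            # chart metadata
├── values.yaml           # main configuration file
├── charts/               # directory for the dependent MySQL/Redis charts
├── templates/            # template directory
│   ├── deployment.yaml   # application Deployment template (core)
│   ├── service.yaml      # Service template
│   ├── _helpers.tpl      # helper templates
│   └── NOTES.txt         # post-install notes
└── .helmignore           # ignore rules

helm create also generates further templates (such as ingress.yaml, hpa.yaml, serviceaccount.yaml, and tests/), and the generated NOTES.txt reads some of the same values. This walkthrough replaces values.yaml, so delete the templates it does not use or keep the value blocks they read; otherwise rendering can fail on missing values.

2️⃣ Edit Chart.yaml (metadata and dependencies)

Add the application information and the MySQL/Redis dependencies: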

yaml

apiVersion: v2
name: my-app
description: A Helm chart for Spring Boot 3 (Java 17) app with MySQL 8 and Redis 7
type: application
version: 1.0.0        # chart version (SemVer 2.0)
appVersion: "1.0.0"   # application version

# MySQL and Redis dependencies (charts pulled from the Bitnami repository)
dependencies:
  - name: mysql
    version: 9.16.0   # a chart version that ships MySQL 8
    repository: https://charts.bitnami.com/bitnami
    condition: mysql.enabled   # values can switch the MySQL deployment on or off
  - name: redis
    version: 17.12.0  # a chart version that ships Redis 7
    repository: https://charts.bitnami.com/bitnami
    condition: redis.enabled   # values can switch the Redis deployment on or off

After adding the dependencies, run the following command to download the dependent charts into charts/:

shell

helm dependency update

3️⃣ Customize values.yaml (core configuration)
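The `condition` fields in Chart.yaml are dotted paths looked up in the parent chart's values. The Python sketch below illustrates that lookup under the documented rule that a missing path has no effect; the `lookup` and `enabled_dependencies` helpers are hypothetical names, not part of Helm.

```python
def lookup(values, dotted_path):
    """Walk a dotted path such as 'mysql.enabled'; return None if any part is missing."""
    node = values
    for part in dotted_path.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node


def enabled_dependencies(dependencies, values):
    """Names of the dependencies that stay enabled for the given parent values."""
    enabled = []
    for dep in dependencies:
        condition = dep.get("condition")
        flag = lookup(values, condition) if condition else None
        # Only an explicit boolean False disables a dependency;
        # a missing path leaves it enabled.
        if flag is False:
            continue
        enabled.append(dep["name"])
    return enabled


dependencies = [
    {"name": "mysql", "condition": "mysql.enabled"},
    {"name": "redis", "condition": "redis.enabled"},
]

print(enabled_dependencies(dependencies, {"mysql": {"enabled": True}, "redis": {"enabled": False}}))
print(enabled_dependencies(dependencies, {}))
```

In practice this means an external database can be used by setting `mysql.enabled=false`, as long as the application's connection settings are pointed elsewhere at the same time.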

Replace the contents of values.yaml with settings for Spring Boot + MySQL + Redis; every key parameter can be overridden.

yaml

# -------------------------- Application basics --------------------------
replicaCount: 1   # number of application replicas

image:
  # Option 1: plain Java 17 base image (the local JAR is mounted later)
  repository: eclipse-temurin
  tag: 17-jre-alpine   # lightweight Java 17 runtime
  pullPolicy: IfNotPresent
  # Option 2: if the JAR is baked into a custom image, point to that image instead
  # repository: your-registry/my-app
  # tag: 1.0.0

# JAR settings (used by option 1)
jar:
  name: my-app-1.0.0.jar   # JAR file name
  mountPath: /app          # where the JAR is mounted in the container

# Application port (matches Spring Boot's server.port)
service:
  type: ClusterIP   # in-cluster access; use NodePort/LoadBalancer for external access
  port: 8080
  targetPort: 8080

# JVM arguments (tune for the application)
jvm:
  args: "-Xms512m -Xmx1024m -Dspring.profiles.active=prod"

# Resource limits
resources:
  limits:
    cpu: 1000m
    memory: 1024Mi   # same size as -Xmx above; leave headroom for non-heap memory in real use
  requests:
    cpu: 500m
    memory: 512Mi

# -------------------------- MySQL --------------------------
mysql:
  enabled: true   # deploy MySQL or not
  auth:
    rootPassword: MyApp@123456   # root password (override with --set at deploy time)
    database: my_app_db          # database used by the application
    username: my_app_user        # database user for the application
    password: MyAppDB@123456     # password of that user
  primary:
    service:
      port: 3306
    resources:
      limits:
        cpu: 500m
        memory: 512Mi
      requests:
        cpu: 200m
        memory: 256Mi

# -------------------------- Redis --------------------------
redis:
  enabled: true   # deploy Redis or not
  auth:
    enabled: true
    password: MyAppRedis@123456   # Redis password
  master:
    service:
      port: 6379
    resources:
      limits:
        cpu: 200m
        memory: 256Mi
      requests:
        cpu: 100m
        memory: 128Mi

# -------------------------- Application config (rendered into the ConfigMap) --------------------------
# The host names below follow the <release>-mysql / <release>-redis-master pattern
# and assume the release name my-app-release used in the install step.
app:
  config:
    spring:
      datasource:
        url: jdbc:mysql://my-app-release-mysql:3306/my_app_db?useUnicode=true&characterEncoding=utf8&useSSL=false&serverTimezone=Asia/Shanghai
        username: my_app_user
        driver-class-name: com.mysql.cj.jdbc.Driver
      redis:
        host: my-app-release-redis-master
        port: 6379
        password: MyAppRedis@123456

4️⃣ Modify the template files
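The database and Redis host names in values.yaml, and the `my-app.fullname` helper used by the templates, all derive from the release name. The Python sketch below mirrors the naming rule that `helm create` writes into `_helpers.tpl`; treating the Bitnami sub-charts as following the same rule is an assumption, and the `fullname` function is a hypothetical stand-in for the template.

```python
def fullname(release: str, chart: str, max_len: int = 63) -> str:
    """Mimic the default fullname helper: reuse the release name when it already
    contains the chart name, otherwise join them; cap at 63 characters."""
    name = release if chart in release else f"{release}-{chart}"
    return name[:max_len].removesuffix("-")


for release in ("my-app", "my-app-release"):
    print(release, "->", fullname(release, "my-app"), fullname(release, "mysql"), fullname(release, "redis"))
```

Under this rule a host name such as `my-app-mysql` only resolves when the release itself is called `my-app`; with the release name `my-app-release` the MySQL Service would be `my-app-release-mysql`.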

Modify templates/deployment.yaml (core)

Replace the default deployment.yaml so that it starts the Spring Boot application, mounts the JAR, and injects the configuration:

yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: {{ include "my-app.fullname" . }}
  labels:
    {{- include "my-app.labels" . | nindent 4 }}
spec:
  replicas: {{ .Values.replicaCount }}
  selector:
    matchLabels:
      {{- include "my-app.selectorLabels" . | nindent 6 }}
  template:
    metadata:
      labels:
        {{- include "my-app.selectorLabels" . | nindent 8 }}
    spec:
      containers:
        - name: {{ .Chart.Name }}
          image: "{{ .Values.image.repository }}:{{ .Values.image.tag }}"
          imagePullPolicy: {{ .Values.image.pullPolicy }}
          # Run from the JAR directory so Spring Boot also reads ./config/
          workingDir: {{ .Values.jar.mountPath }}
          # Spring Boot start command (mounted-JAR approach). jvm.args holds several
          # flags in one string, so a shell splits it into separate arguments.
          command: ["sh", "-c"]
          args:
            - exec java {{ .Values.jvm.args }} -jar {{ .Values.jar.mountPath }}/{{ .Values.jar.name }}
          # Container port
          ports:
            - name: http
              containerPort: {{ .Values.service.targetPort }}
              protocol: TCP
          # Environment variables (values injected from Secrets)
          env:
            # Database password
            - name: SPRING_DATASOURCE_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: {{ include "my-app.fullname" . }}-mysql
                  key: password
            # Redis password (Spring Boot 3 reads spring.data.redis.*)
            - name: SPRING_DATA_REDIS_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: {{ include "my-app.fullname" . }}-redis
                  key: password
          volumeMounts:
            # Application config from the ConfigMap
            - name: app-config
              mountPath: /app/config
              readOnly: true
            # Local JAR (option 1: upload the JAR to the node and create the PV/PVC first)
            - name: jar-volume
              mountPath: {{ .Values.jar.mountPath }}
          # Health checks (Spring Boot Actuator must be enabled in the application)
          livenessProbe:
            httpGet:
              path: /actuator/health/liveness
              port: http
            initialDelaySeconds: 60
            periodSeconds: 10
          readinessProbe:
            httpGet:
              path: /actuator/health/readiness
              port: http
            initialDelaySeconds: 30
            periodSeconds: 5
          resources:
            {{- toYaml .Values.resources | nindent 12 }}
      volumes:
        # Application config volume (ConfigMap)
        - name: app-config
          configMap:
            name: {{ include "my-app.fullname" . }}-config
        # JAR volume (option 1: requires an existing PVC named jar-pvc)
        - name: jar-volume
          persistentVolumeClaim:
            claimName: jar-pvc

Create templates/configmap.yaml (application config)
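One detail of the Deployment's start command deserves a closer look: Kubernetes hands each item of `command` and `args` to the container as a single argument, with no shell word splitting. A string that holds several JVM flags, like `jvm.args` in values.yaml, therefore has to be split before `java` sees it. The Python sketch below only illustrates the difference; the variable names are hypothetical.

```python
import shlex

jvm_args = "-Xms512m -Xmx1024m -Dspring.profiles.active=prod"  # value of jvm.args
jar_path = "/app/my-app-1.0.0.jar"

# One list item per YAML entry: the three flags arrive as ONE argument containing spaces.
argv_single = ["java", jvm_args, "-jar", jar_path]

# What the JVM needs: one argument per flag, which is what a shell's word splitting produces.
argv_split = ["java", *shlex.split(jvm_args), "-jar", jar_path]

print(len(argv_single), argv_single[1])
print(len(argv_split), argv_split[1:4])
```

Running the command through `sh -c`, listing each flag as its own `args` item, or passing the flags through the `JAVA_TOOL_OPTIONS` environment variable are all ways to get the split form.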

Create templates/configmap.yaml to hold the non-sensitive application configuration that is injected into Spring Boot:

yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: {{ include "my-app.fullname" . }}-config
  labels:
    {{- include "my-app.labels" . | nindent 4 }}
data:
  application-prod.yml: |
    server:
      port: {{ .Values.service.targetPort }}
    spring:
      datasource:
        url: {{ .Values.app.config.spring.datasource.url | quote }}
        username: {{ .Values.app.config.spring.datasource.username }}
        # a key containing "-" cannot be reached with dot notation, hence index
        driver-class-name: {{ index .Values.app.config.spring.datasource "driver-class-name" }}
      data:
        # Spring Boot 3 reads spring.data.redis.* (spring.redis.* was the 2.x name)
        redis:
          host: {{ .Values.app.config.spring.redis.host }}
          port: {{ .Values.app.config.spring.redis.port }}

Create templates/secret.yaml (sensitive data)

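Create templates/secret.yaml to hold sensitive data such as the MySQL and Redis passwords. The Bitnami sub-charts usually create their own Secrets named <release>-mysql and <release>-redis, so the names below are likely to collide with them when the release name already contains my-app; if the install reports a conflict, use different names here and in deployment.yaml, or reference the sub-chart Secrets directly.

yaml

apiVersion: v1
kind: Secret
metadata:
  name: {{ include "my-app.fullname" . }}-mysql
  labels:
    {{- include "my-app.labels" . | nindent 4 }}
type: Opaque
data:
  password: {{ .Values.mysql.auth.password | b64enc }}   # Base64-encoded (an encoding, not encryption)
---
apiVersion: v1
kind: Secret
metadata:
  name: {{ include "my-app.fullname" . }}-redis
  labels:
    {{- include "my-app.labels" . | nindent 4 }}
type: Opaque
data:
  password: {{ .Values.redis.auth.password | b64enc }}   # Base64-encoded (an encoding, not encryption)

Modify service.yaml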
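The `b64enc` calls in secret.yaml deserve one caveat: Base64 is a reversible encoding, not encryption. The short Python sketch below round-trips one of the sample passwords from values.yaml to make that concrete; it uses only the standard library.

```python
import base64

password = "MyAppDB@123456"  # sample value taken from values.yaml

encoded = base64.b64encode(password.encode("utf-8")).decode("ascii")
decoded = base64.b64decode(encoded).decode("utf-8")

print(encoded)              # what ends up in the Secret's data field
print(decoded == password)  # anyone who can read the Secret can reverse it
```

Protection of the value therefore comes from who may read the Secret (RBAC) and from encryption at rest in the cluster, not from the encoding itself.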

Keep the default service.yaml and only confirm the port settings:

yaml

apiVersion: v1
kind: Service
metadata:
  name: {{ include "my-app.fullname" . }}
  labels:
    {{- include "my-app.labels" . | nindent 4 }}
spec:
  type: {{ .Values.service.type }}
  ports:
    - port: {{ .Values.service.port }}
      targetPort: {{ .Values.service.targetPort }}
      protocol: TCP
      name: http
  selector:
    {{- include "my-app.selectorLabels" . | nindent 4 }}

5️⃣ Handling the JAR

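Option 1: mount a local JAR (suitable for testing or temporary deployments)

Create a directory and upload the JAR on every cluster node the Pod may be scheduled to (a hostPath volume is local to one node):

shell

# Create the directory on the node
mkdir -p /data/my-app/jar

# Upload the JAR into it (for example with scp)
scp my-app-1.0.0.jar root@node-ip:/data/my-app/jar/

Create a PV/PVC (PersistentVolume / PersistentVolumeClaim) for the Deployment to mount: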

yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: jar-pv
spec:
  capacity:
    storage: 1Gi   # volume size
  accessModes:
    - ReadWriteOnce
  hostPath:
    path: /data/my-app/jar   # directory on the node
  storageClassName: manual
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: jar-pvc
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  storageClassName: manual

bash

kubectl apply -f jar-pv.yaml

Option 2: build the JAR into a custom image (recommended for production)

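Create a Dockerfile in the same directory as the JAR:

dockerfile

FROM eclipse-temurin:17-jre-alpine
WORKDIR /app
COPY my-app-1.0.0.jar /app/
EXPOSE 8080
CMD ["java", "-Xms512m", "-Xmx1024m", "-jar", "my-app-1.0.0.jar"]

Build the image and push it to a private registry:

bash

# Build the image
docker build -t your-registry/my-app:1.0.0 .

# Push the image
docker push your-registry/my-app:1.0.0

Then change the image settings in values.yaml to point to the custom image, and remove the option 1 JAR-mount configuration (the jar values, and the jar-volume mount and start command in deployment.yaml).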

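6️⃣ Test and deploy the chart

The commands below reference the chart as ./my-app, so run them from the parent directory of my-app/ (or replace ./my-app with . when inside the chart directory).

Local debugging:

bash

# Render the templates and inspect the final YAML (nothing is deployed)
helm template my-app ./my-app --debug

# Simulated install (checks that the configuration is valid)
helm install --dry-run --debug my-app-release ./my-app

Actual deployment:

bash

# Install the chart (release name: my-app-release)
# Override the sensitive passwords with --set instead of hard-coding them
helm install my-app-release ./my-app \
  --set mysql.auth.rootPassword=YourStrongRootPass123 \
  --set mysql.auth.password=YourStrongDBPass123 \
  --set redis.auth.password=YourStrongRedisPass123

# Check the deployment
helm list
kubectl get pods   # the my-app, mysql, and redis Pods should all be Running
kubectl get svc    # check the Service ports

Verify that the application is running:

bash

# Follow the application logs
kubectl logs -f $(kubectl get pods -l app.kubernetes.io/name=my-app -o jsonpath="{.items[0].metadata.name}")

# Test the endpoint (if the Service type is NodePort)
curl http://node-ip:node-port/actuator/health

Upgrade, roll back, and uninstall:

bash

# Upgrade (after changing the configuration)
helm upgrade my-app-release ./my-app --set replicaCount=2

# Roll back (for example to revision 1)
helm rollback my-app-release 1

# Uninstall
helm uninstall my-app-release

II: K8s Cluster Monitoring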

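Prometheus is an open-source monitoring and alerting system built around a time-series database. It was originally developed at SoundCloud and, as more companies adopted it, became an independent open-source project. Since then many companies and organizations have used Prometheus as their monitoring and alerting tool.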

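1: Traditional (manifest-based) deployment

Prerequisites

- A running K8s cluster (v1.20+)
- kubectl installed and configured with access to the cluster
- At least one schedulable node (2 CPU cores and 4 GB of memory or more recommended)

1️⃣ Create a namespace so monitoring resources stay separate from business workloads

bash

kubectl create namespace monitoring

2️⃣ Create the Prometheus ConfigMap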

The ConfigMap stores Prometheus's core configuration (scrape targets, rule files, alerting settings, and so on). Create the file prometheus-config.yaml:

yaml

apiVersion: v1

kind: ConfigMap # 指定资源清单类型为config-map

metadata: # 元数据信息,指定config-map的名称和所处的命名空间

name: prometheus-config

namespace: monitoring

data: # 具体config, 就是指定prometheus的核心配置

prometheus.yml: |

global:

scrape_interval: 15s # 全局采集间隔

evaluation_interval: 15s # 规则评估间隔

# 告警规则文件(后续会挂载)

rule_files:

- "/etc/prometheus/rules/*.yml"

# 采集目标配置

scrape_configs:

# 1. 采集Prometheus自身指标

- job_name: 'prometheus'

static_configs:

- targets: ['localhost:9090']

# 2. 采集K8s apiserver(自动发现)

- job_name: 'kubernetes-apiservers'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

# 3. Scrape each node's kubelet metrics through the API server proxy (this job does not scrape node-exporter)

- job_name: 'kubernetes-nodes'

kubernetes_sd_configs:

- role: node

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

# 4. 采集Pod指标(自动发现)

- job_name: 'kubernetes-pods'

kubernetes_sd_configs:

- role: pod

relabel_configs:

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

action: replace

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

target_label: __address__

bash

# Create the ConfigMap
kubectl apply -f prometheus-config.yaml

3️⃣ Deploy Prometheus (Deployment + Service)
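The last relabel rule of the `kubernetes-pods` job in the ConfigMap above rewrites `__address__` so that Prometheus scrapes the port named in the `prometheus.io/port` annotation. The Python sketch below reproduces that rewrite with the same regular expression. It relies on two documented Prometheus behaviors: the source labels are joined with `;`, and the regex must match the whole joined string. The function name and sample addresses are hypothetical.

```python
import re

ADDRESS_RE = re.compile(r"([^:]+)(?::\d+)?;(\d+)")


def rewrite_address(address: str, port_annotation: str) -> str:
    """Return the scrape address after the relabel rule has been applied."""
    joined = f"{address};{port_annotation}"  # source_labels joined with ';'
    match = ADDRESS_RE.fullmatch(joined)     # the regex is anchored at both ends
    if match is None:
        return address                       # no match: the label is left unchanged
    return f"{match.group(1)}:{match.group(2)}"  # replacement: $1:$2


print(rewrite_address("10.244.1.7:8080", "9102"))
print(rewrite_address("10.244.1.7", "9102"))
print(rewrite_address("10.244.1.7:8080", ""))
```

A Pod without the port annotation keeps its discovered address, which is why the annotation is only needed when metrics are served on a port other than the discovered one.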

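Create prometheus-deployment.yaml, which deploys Prometheus and mounts the ConfigMap. The kubernetes_sd_configs jobs in that ConfigMap query the Kubernetes API, so the Pod's ServiceAccount needs permission to list and watch nodes, pods, services, and endpoints. The manifest below runs under the namespace's default ServiceAccount, so bind a suitable ClusterRole to it (or set a dedicated serviceAccountName); otherwise service discovery requests are rejected.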

yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: prometheus-server

namespace: monitoring

spec:

replicas: 1

selector:

matchLabels:

app: prometheus-server

template:

metadata:

labels:

app: prometheus-server

spec:

containers:

- name: prometheus

image: prom/prometheus:v2.45.0 # 稳定版本

args:

- "--config.file=/etc/prometheus/prometheus.yml"

- "--storage.tsdb.path=/prometheus/"

- "--web.enable-lifecycle" # 支持热加载配置

ports:

- containerPort: 9090

volumeMounts:

# 挂载ConfigMap中的prometheus.yml

- name: prometheus-config-volume

mountPath: /etc/prometheus

# 挂载规则文件目录(后续创建)

- name: prometheus-rules-volume

mountPath: /etc/prometheus/rules

# 持久化存储指标数据

- name: prometheus-storage

mountPath: /prometheus

resources:

requests:

cpu: 100m

memory: 256Mi

limits:

cpu: 500m

memory: 512Mi

volumes:

- name: prometheus-config-volume

configMap:

name: prometheus-config # 关联前面创建的ConfigMap

- name: prometheus-rules-volume

emptyDir: {} # 临时存储,生产建议用ConfigMap/持久卷

- name: prometheus-storage

emptyDir: {} # 生产建议替换为PersistentVolumeClaim

---

# 创建Service暴露Prometheus(NodePort方式,方便访问)

apiVersion: v1

kind: Service

metadata:

name: prometheus-service

namespace: monitoring

spec:

type: NodePort

selector:

app: prometheus-server

ports:

- port: 8080

targetPort: 9090

nodePort: 30090 # port for access from outside the cluster: <node IP>:30090

Deploy it:

bash

kubectl apply -f prometheus-deployment.yaml

# Verify: a prometheus-server Pod should be Running
kubectl get pods -n monitoring

4️⃣ Deploy Node Exporter (node metrics)

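Prometheus relies on Node Exporter to collect CPU, memory, disk, and other metrics from the K8s nodes. None of the scrape jobs defined earlier targets port 9100 directly: the kubernetes-pods job only picks up Pods annotated with prometheus.io/scrape: "true". To have these metrics collected, annotate the DaemonSet's Pod template accordingly (with prometheus.io/port: "9100") or add a dedicated scrape job.

Create node-exporter-daemonset.yaml: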

yaml

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: node-exporter

namespace: monitoring

spec:

selector:

matchLabels:

app: node-exporter

template:

metadata:

labels:

app: node-exporter

spec:

hostNetwork: true

hostPID: true

containers:

- name: node-exporter

image: prom/node-exporter:v1.6.1

args:

- "--path.procfs=/host/proc"

- "--path.sysfs=/host/sys"

- "--collector.filesystem.ignored-mount-points=^/(sys|proc|dev|host|etc)($|/)"

ports:

- containerPort: 9100

volumeMounts:

- name: proc

mountPath: /host/proc

readOnly: true

- name: sys

mountPath: /host/sys

readOnly: true

resources:

requests:

cpu: 100m

memory: 100Mi

limits:

cpu: 200m

memory: 200Mi

volumes:

- name: proc

hostPath:

path: /proc

- name: sys

hostPath:

path: /sys

---

# 暴露Node Exporter服务

apiVersion: v1

kind: Service

metadata:

name: node-exporter

namespace: monitoring

spec:

selector:

app: node-exporter

ports:

- port: 9100

targetPort: 9100

type: ClusterIP

Deploy it:

bash

kubectl apply -f node-exporter-daemonset.yaml

5️⃣ Write the Prometheus rules (alerts)

Create prometheus-rules.yaml (the rules are mounted as a ConfigMap):

yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-rules

namespace: monitoring

data:

node-alert.yml: |

groups:

- name: node-monitor-rules

rules:

# 1. 节点CPU使用率超过80%告警

- alert: NodeCPUUsageHigh

expr: 100 - (avg by (instance) (irate(node_cpu_seconds_total{mode="idle"}[5m])) * 100) > 80

for: 2m

labels:

severity: warning

annotations:

summary: "节点CPU使用率过高 ({{ $labels.instance }})"

description: "节点CPU使用率超过80% (当前值: {{ $value }}%)"

# 2. 节点内存使用率超过85%告警

- alert: NodeMemoryUsageHigh

expr: 100 - (node_memory_MemAvailable_bytes / node_memory_MemTotal_bytes * 100) > 85

for: 2m

labels:

severity: warning

annotations:

summary: "节点内存使用率过高 ({{ $labels.instance }})"

description: "节点内存使用率超过85% (当前值: {{ $value }}%)"

# 3. 节点磁盘使用率超过90%告警

- alert: NodeDiskUsageHigh

expr: 100 - (node_filesystem_avail_bytes{mountpoint="/"} / node_filesystem_size_bytes{mountpoint="/"} * 100) > 90

for: 2m

labels:

severity: critical

annotations:

summary: "节点磁盘使用率过高 ({{ $labels.instance }})"

description: "Root filesystem usage on the node is above 90% (current value: {{ $value }}%)"

Apply the rules, then update the Prometheus Deployment so that it mounts this ConfigMap:

bash

# 1. Create the rules ConfigMap
kubectl apply -f prometheus-rules.yaml

# 2. Edit the Prometheus Deployment to mount the rules ConfigMap (replacing the earlier emptyDir)
kubectl edit deployment prometheus-server -n monitoring
# Change prometheus-rules-volume under volumes to:
# - name: prometheus-rules-volume
#   configMap:
#     name: prometheus-rules

# 3. Editing the Deployment already rolls out a new Pod that loads the rules.
#    For later rule changes, hot-reload instead of restarting. The prom/prometheus
#    image is BusyBox-based and may not include curl, so this uses wget:
kubectl exec -n monitoring deploy/prometheus-server -- wget -q -O- --post-data='' http://localhost:9090/-/reload

6️⃣ Deploy Grafana

Create grafana-deployment.yaml; Prometheus is configured as its data source in the next step:

yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: grafana

namespace: monitoring

spec:

replicas: 1

selector:

matchLabels:

app: grafana

template:

metadata:

labels:

app: grafana

spec:

containers:

- name: grafana

image: grafana/grafana:9.5.2

ports:

- containerPort: 3000

env:

# 默认管理员账号密码(首次登录修改)

- name: GF_SECURITY_ADMIN_USER

value: "admin"

- name: GF_SECURITY_ADMIN_PASSWORD

value: "admin123"

volumeMounts:

- name: grafana-storage

mountPath: /var/lib/grafana

resources:

requests:

cpu: 100m

memory: 100Mi

limits:

cpu: 500m

memory: 512Mi

volumes:

- name: grafana-storage

emptyDir: {} # 生产建议用PersistentVolumeClaim

---

# 暴露Grafana服务(NodePort)

apiVersion: v1

kind: Service

metadata:

name: grafana-service

namespace: monitoring

spec:

type: NodePort

selector:

app: grafana

ports:

- port: 3000

targetPort: 3000

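nodePort: 30030 # access from outside the cluster: <node IP>:30030

Deploy it:

bash

kubectl apply -f grafana-deployment.yaml

7️⃣ Configure the Prometheus data source in Grafana and import dashboards

Open http://<cluster node IP>:30030 in a browser and log in as admin with the password admin123 (change the password after the first login).

Add the Prometheus data source:

- Left menu → Configuration → Data sources → Add data source → choose Prometheus.
- URL: http://prometheus-service.monitoring.svc:8080 (the in-cluster Service address).
- Click "Save & test"; the message "Data source is working" confirms the setup.

Import a monitoring dashboard:

- Left menu → Dashboards → Import → enter dashboard ID 1860 (Node Exporter Full, a widely used community dashboard).
- Select the Prometheus data source configured above → click "Import" to get the full node monitoring panel.
- Other suggested dashboard IDs: 7249 (K8s cluster monitoring), 3119 (Prometheus self-monitoring).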

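2: Deploying with the Helm package manager

Prerequisites

- A K8s cluster (1.20+) with kubectl access configured.
- Helm 3 installed (verify with helm version).
- metrics-server installed in the cluster (optional; provides Pod and node resource metrics):

bash

kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

# If metrics-server fails to start, add the --kubelet-insecure-tls argument
# (test clusters only: it skips verification of the kubelet certificates)
kubectl edit deployment metrics-server -n kube-system
# Add to args: --kubelet-insecure-tls

The directory layout is: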

plaintext

k8s-monitoring/                        # monitoring root directory
├── prometheus/                        # Prometheus configuration
│   ├── prometheus-values.yaml         # full values file for the Prometheus Helm deployment (config and alert rules)
│   └── scripts/                       # helper scripts
│       └── port-forward.sh            # port-forward script for temporary access to Prometheus
├── grafana/                           # Grafana configuration
│   └── grafana-values.yaml            # full values file for the Grafana Helm deployment
├── deploy.sh                          # one-click script that deploys Prometheus + Grafana
└── README.md                          # deployment notes

k8s-monitoring/prometheus/prometheus-values.yaml

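This file contains the Prometheus ConfigMap, persistence, RBAC, and alert rules. Before relying on it, compare the key names with the output of helm show values prometheus-community/prometheus: Helm silently ignores keys that a chart does not define. That chart generally expects server settings under server.* and the Prometheus configuration and rules under serverFiles, whereas PrometheusRule objects belong to the Prometheus Operator (kube-prometheus-stack) rather than to this chart.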

yaml

# Prometheus Helm 部署核心配置

# 覆盖 prometheus-community/prometheus Chart 的默认值

# 1. Prometheus 主配置(ConfigMap 形式的 prometheus.yml)

prometheus:

configMap:

# ConfigMap 名称(自动创建)

name: prometheus-server-config

# prometheus.yml 核心配置(采集 K8s 集群指标)

prometheus.yml: |

global:

scrape_interval: 15s

evaluation_interval: 15s

alerting:

alertmanagers:

- static_configs:

- targets: []

# 加载自定义监控规则

rule_files:

- /etc/prometheus/rules/prometheus-prometheus-rule-0.yaml

# 采集配置(K8s 集群组件 + Prometheus 自身)

scrape_configs:

# 采集 Prometheus 自身

- job_name: 'prometheus'

static_configs:

- targets: ['localhost:9090']

# 采集 K8s APIServer

- job_name: 'kubernetes-apiservers'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

# 采集 K8s Node(kubelet 指标)

- job_name: 'kubernetes-nodes'

kubernetes_sd_configs:

- role: node

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics

# 采集 K8s Pod(自动发现带注解的 Pod)

- job_name: 'kubernetes-pods'

kubernetes_sd_configs:

- role: pod

relabel_configs:

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

action: replace

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

target_label: __address__

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_pod_name]

action: replace

target_label: kubernetes_pod_name

# 2. 持久化配置(避免数据丢失)

persistence:

enabled: true

storageClassName: "manual" # 若集群无默认存储类,需提前创建(或改为 "" 使用默认)

size: 10Gi

# 3. RBAC 权限(必须开启,否则无法访问 K8s API)

rbac:

create: true

pspEnabled: false

# 4. 服务暴露(ClusterIP,供 Grafana 内部访问)

service:

type: ClusterIP

port: 9090

# 5. 资源限制(生产环境必配)

resources:

limits:

cpu: 2000m

memory: 4Gi

requests:

cpu: 1000m

memory: 2Gi

# 2. 自定义监控规则(PrometheusRule CRD)

prometheusRule:

enabled: true

additionalLabels:

prometheus: k8s

# 监控规则组(节点 + Pod 告警)

groups:

- name: node-monitoring.rules

rules:

- alert: NodeHighCPUUsage

expr: avg(rate(node_cpu_seconds_total{mode!="idle"}[5m])) by (node) > 0.8

for: 5m

labels:

severity: warning

annotations:

summary: "节点 {{ $labels.node }} CPU 使用率过高"

description: "节点 {{ $labels.node }} CPU 使用率超过 80% (当前值: {{ $value }})"

- alert: NodeHighMemoryUsage

expr: (1 - (node_memory_MemAvailable_bytes / node_memory_MemTotal_bytes)) * 100 > 85

for: 5m

labels:

severity: warning

annotations:

summary: "节点 {{ $labels.node }} 内存使用率过高"

description: "节点 {{ $labels.node }} 内存使用率超过 85% (当前值: {{ $value }})"

- name: pod-monitoring.rules

rules:

- alert: PodHighCPUUsage

expr: avg(rate(container_cpu_usage_seconds_total[5m])) by (pod, namespace) > 0.8

for: 5m

labels:

severity: warning

annotations:

summary: "Pod {{ $labels.pod }} ({{ $labels.namespace }}) CPU 使用率过高"

description: "Pod {{ $labels.pod }} CPU 使用率超过 80% (当前值: {{ $value }})"

# 3. Other server-level settings

server:

# Run the server as a StatefulSet

statefulSet:

enabled: true

# Data retention (default 15 days; 30 days suggested for production)

retention: 30d

k8s-monitoring/prometheus/scripts/port-forward.sh

Helper script

shell

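#!/bin/bash

# Temporary port-forward to reach the Prometheus UI.
# Assumes the prometheus-server Service listens on port 9090; confirm with
# "kubectl get svc -n monitoring" and adjust the right-hand port if it differs.

echo "=== Starting Prometheus port-forward ==="

echo "URL: http://localhost:9090"

echo "Press Ctrl+C to stop"

kubectl port-forward svc/prometheus-server 9090:9090 -n monitoring

k8s-monitoring/grafana/grafana-values.yaml

Grafana configuration

yaml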

# Grafana Helm 部署配置

# 覆盖 grafana/grafana Chart 的默认值

# 1. 持久化配置

persistence:

enabled: true

size: 5Gi

storageClassName: "manual" # 同 Prometheus 存储类

# 2. 服务暴露(NodePort 供外部访问,生产建议 Ingress)

service:

type: NodePort

nodePort: 30000 # 自定义 NodePort 端口(30000-32767 之间)

port: 3000

# 3. 管理员密码(生产环境建议改为复杂密码)

adminPassword: "Admin@123456"

# 4. 资源限制

resources:

limits:

cpu: 500m

memory: 1Gi

requests:

cpu: 200m

memory: 512Mi

# 5. 自动导入仪表盘(可选,提前下载 JSON 放到 dashboards/ 目录)

dashboard:

enabled: false # 先手动导入,熟悉后再开启自动导入

# 若开启,需配置 dashboardFiles 指向本地 JSON 文件

# dashboardFiles:

# - dashboards/k8s-cluster.json

# 6. 自动配置数据源(可选,替代手动配置)

datasources:

datasources.yaml:

apiVersion: 1

datasources:

- name: Prometheus

type: prometheus

url: http://prometheus-server.monitoring.svc:9090

access: proxy

isDefault: true

k8s-monitoring/deploy.sh

One-click deployment script

shell

#!/bin/bash

set -e # 出错立即停止执行

# 颜色输出函数(可选,提升可读性)

red() { echo -e "\033[31m$1\033[0m"; }

green() { echo -e "\033[32m$1\033[0m"; }

yellow() { echo -e "\033[33m$1\033[0m"; }

# 步骤 1:检查前置条件

green "=== 检查前置条件 ==="

if ! command -v helm &> /dev/null; then

red "错误:未安装 Helm 3,请先安装 Helm!"

exit 1

fi

if ! kubectl get nodes &> /dev/null; then

red "错误:kubectl 未配置 K8s 集群访问权限!"

exit 1

fi

# 步骤 2:创建监控命名空间

green "=== 创建 monitoring 命名空间 ==="

kubectl create namespace monitoring || yellow "命名空间已存在,跳过"

# 步骤 3:添加 Helm 仓库

green "=== 添加 Prometheus/Grafana Helm 仓库 ==="

helm repo add prometheus-community https://prometheus-community.github.io/helm-charts || yellow "Prometheus 仓库已添加,跳过"

helm repo add grafana https://grafana.github.io/helm-charts || yellow "Grafana 仓库已添加,跳过"

helm repo update

# 步骤 4:部署 Prometheus

green "=== 部署 Prometheus ==="

helm upgrade --install prometheus prometheus-community/prometheus \

-n monitoring \

-f ./prometheus/prometheus-values.yaml

# 步骤 5:部署 Grafana

green "=== 部署 Grafana ==="

helm upgrade --install grafana grafana/grafana \

-n monitoring \

-f ./grafana/grafana-values.yaml

# 步骤 6:验证部署状态

green "=== 验证部署状态 ==="

kubectl get pods -n monitoring

kubectl get svc -n monitoring

# 步骤 7:输出访问信息

green "=== 部署完成!==="

yellow "1. Prometheus 访问:执行 ./prometheus/scripts/port-forward.sh,然后访问 http://localhost:9090"

yellow "2. Grafana 访问:http://<K8s节点IP>:30000(用户名:admin,密码:Admin@123456)"

yellow "3. Grafana 数据源:已自动配置 Prometheus(http://prometheus-server.monitoring.svc:9090)"

bash

./deploy.sh

Accessing Prometheus

- Start the port-forward: ./prometheus/scripts/port-forward.sh
- Open http://localhost:9090
- Check the metrics: enter node_cpu_seconds_total in Graph and confirm that data is returned
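Once node_cpu_seconds_total returns data, the node CPU alert from the manifest-based section (`100 - avg(irate(...{mode="idle"}[5m])) * 100 > 80`) reduces to simple arithmetic over per-core idle rates. The Python sketch below works through that arithmetic with made-up idle rates; the function name and the sample numbers are hypothetical.

```python
def cpu_usage_percent(idle_rates):
    """idle_rates: per-core idle seconds per second (0..1), the kind of value
    irate(node_cpu_seconds_total{mode="idle"}[5m]) yields for each core."""
    avg_idle = sum(idle_rates) / len(idle_rates)
    return 100 - avg_idle * 100


busy_node = cpu_usage_percent([0.125, 0.25, 0.125, 0.0])  # cores mostly busy
calm_node = cpu_usage_percent([0.5, 0.5, 0.25, 0.25])     # cores mostly idle

for name, usage in (("busy", busy_node), ("calm", calm_node)):
    print(name, usage, "alert condition met" if usage > 80 else "below threshold")
```

The alert additionally requires the condition to hold for the whole `for:` window before it fires, which this sketch does not model.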

Accessing Grafana

- URL: http://<K8s node IP>:30000
- Username: admin
- Password: Admin@123456
- Dashboards to import:
  - Node monitoring: 1860 (Node Exporter Full)
  - K8s cluster monitoring: 7249 (Kubernetes Cluster Monitoring)

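III: ELK Log Management

The pipeline used here is Filebeat -> Logstash -> Elasticsearch -> Kibana, that is, the ELK stack with Filebeat as the log shipper.

1️⃣ First deploy Filebeat to collect the logs

yaml

# Create filebeat.yaml and apply it (the kube-logging namespace must already exist)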

---

apiVersion: v1

kind: ConfigMap

metadata:

name: filebeat-config

namespace: kube-logging

labels:

k8s-app: filebeat

data:

filebeat.yml: |-

filebeat.inputs:

- type: container

enabled: true

paths:

- /var/log/containers/*.log #这里是filebeat采集挂载到pod中的日志目录

processors:

- add_kubernetes_metadata: #添加k8s的字段用于后续的数据清洗

host: ${NODE_NAME}

matchers:

- logs_path:

logs_path: "/var/log/containers/"

#output.kafka: #如果日志量较大,es中的日志有延迟,可以选择在filebeat和logstash中间加入kafka

# hosts: ["kafka-log-01:9092", "kafka-log-02:9092", "kafka-log-03:9092"]

# topic: 'topic-test-log'

# version: 2.0.0

output.logstash: #因为还需要部署logstash进行数据的清洗,因此filebeat是把数据推到logstash中

hosts: ["logstash:5044"]

enabled: true

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: filebeat

namespace: kube-logging

labels:

k8s-app: filebeat

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: filebeat

labels:

k8s-app: filebeat

rules:

- apiGroups: [""] # "" indicates the core API group

resources:

- namespaces

- pods

verbs: ["get", "watch", "list"]

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: filebeat

subjects:

- kind: ServiceAccount

name: filebeat

namespace: kube-logging

roleRef:

kind: ClusterRole

name: filebeat

apiGroup: rbac.authorization.k8s.io

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: filebeat

namespace: kube-logging

labels:

k8s-app: filebeat

spec:

selector:

matchLabels:

k8s-app: filebeat

template:

metadata:

labels:

k8s-app: filebeat

spec:

serviceAccountName: filebeat

terminationGracePeriodSeconds: 30

containers:

- name: filebeat

image: docker.io/kubeimages/filebeat:7.9.3 #该镜像支持arm64和amd64两种架构

args: [

"-c", "/etc/filebeat.yml",

"-e","-httpprof","0.0.0.0:6060"

]

#ports:

# - containerPort: 6060

# hostPort: 6068

env:

- name: NODE_NAME

valueFrom:

fieldRef:

fieldPath: spec.nodeName

- name: ELASTICSEARCH_HOST

value: elasticsearch-logging

- name: ELASTICSEARCH_PORT

value: "9200"

securityContext:

runAsUser: 0

# If using Red Hat OpenShift uncomment this:

#privileged: true

resources:

limits:

memory: 1000Mi

cpu: 1000m

requests:

memory: 100Mi

cpu: 100m

volumeMounts:

- name: config #挂载的是filebeat的配置文件

mountPath: /etc/filebeat.yml

readOnly: true

subPath: filebeat.yml

- name: data #持久化filebeat数据到宿主机上

mountPath: /usr/share/filebeat/data

- name: varlibdockercontainers # mounts the host directory that holds the actual log files into the Filebeat container; if the Docker or containerd runtime still writes logs to its standard location, mountPath can be /var/lib

mountPath: /var/lib

readOnly: true

- name: varlog #这里主要是把宿主机上/var/log/pods和/var/log/containers的软链接挂载到filebeat容器中

mountPath: /var/log/

readOnly: true

- name: timezone

mountPath: /etc/localtime

volumes:

- name: config

configMap:

defaultMode: 0600

name: filebeat-config

- name: varlibdockercontainers

hostPath: # if the Docker or containerd runtime still writes logs to its standard location, path can be /var/lib

path: /var/lib

- name: varlog

hostPath:

path: /var/log/

# data folder stores a registry of read status for all files, so we don't send everything again on a Filebeat pod restart

- name: data

hostPath:

path: /data/filebeat-data

type: DirectoryOrCreate

- name: timezone

hostPath:

path: /etc/localtime

tolerations: #加入容忍能够调度到每一个节点

- effect: NoExecute

key: dedicated

operator: Equal

value: gpu

- effect: NoSchedule

operator: Exists2️⃣ 再部署logstach完成数据的过滤和收集

yaml

# Create logstash.yaml and deploy the service

---

apiVersion: v1
kind: Service
metadata:
  name: logstash
  namespace: kube-logging
spec:
  ports:
  - port: 5044
    targetPort: beats
  selector:
    type: logstash
  clusterIP: None

---

apiVersion: apps/v1
kind: Deployment
metadata:
  name: logstash
  namespace: kube-logging
spec:
  selector:
    matchLabels:
      type: logstash
  template:
    metadata:
      labels:
        type: logstash
        srv: srv-logstash
    spec:
      containers:
      - image: docker.io/kubeimages/logstash:7.9.3 # this image supports both arm64 and amd64
        name: logstash
        ports:
        - containerPort: 5044
          name: beats
        command:
        - logstash
        - '-f'
        - '/etc/logstash_c/logstash.conf'
        env:
        - name: "XPACK_MONITORING_ELASTICSEARCH_HOSTS"
          value: "http://elasticsearch-logging:9200"
        volumeMounts:
        - name: config-volume
          mountPath: /etc/logstash_c/
        - name: config-yml-volume
          mountPath: /usr/share/logstash/config/
        - name: timezone
          mountPath: /etc/localtime
        resources: # always set resource limits for Logstash so it cannot starve other workloads
          limits:
            cpu: 1000m
            memory: 2048Mi
          requests:
            cpu: 512m
            memory: 512Mi
      volumes:
      - name: config-volume
        configMap:
          name: logstash-conf
          items:
          - key: logstash.conf
            path: logstash.conf
      - name: timezone
        hostPath:
          path: /etc/localtime
      - name: config-yml-volume
        configMap:
          name: logstash-yml
          items:
          - key: logstash.yml
            path: logstash.yml

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: logstash-conf
  namespace: kube-logging
  labels:
    type: logstash
data:
  logstash.conf: |-
    input {
      beats {
        port => 5044
      }
    }
    filter {
      # Handle ingress logs
      if [kubernetes][container][name] == "nginx-ingress-controller" {
        json {
          source => "message"
          target => "ingress_log"
        }
        if [ingress_log][requesttime] {
          mutate {
            convert => ["[ingress_log][requesttime]", "float"]
          }
        }
        if [ingress_log][upstremtime] {
          mutate {
            convert => ["[ingress_log][upstremtime]", "float"]
          }
        }
        if [ingress_log][status] {
          mutate {
            convert => ["[ingress_log][status]", "float"]
          }
        }
        if [ingress_log][httphost] and [ingress_log][uri] {
          mutate {
            add_field => {"[ingress_log][entry]" => "%{[ingress_log][httphost]}%{[ingress_log][uri]}"}
          }
          mutate {
            split => ["[ingress_log][entry]","/"]
          }
          if [ingress_log][entry][1] {
            mutate {
              add_field => {"[ingress_log][entrypoint]" => "%{[ingress_log][entry][0]}/%{[ingress_log][entry][1]}"}
              remove_field => "[ingress_log][entry]"
            }
          } else {
            mutate {
              add_field => {"[ingress_log][entrypoint]" => "%{[ingress_log][entry][0]}/"}
              remove_field => "[ingress_log][entry]"
            }
          }
        }
      }
      # Handle logs from business services whose container name starts with "srv"
      if [kubernetes][container][name] =~ /^srv/ {
        json {
          source => "message"
          target => "tmp"
        }
        if [kubernetes][namespace] == "kube-logging" {
          drop{}
        }
        if [tmp][level] {
          mutate{
            add_field => {"[applog][level]" => "%{[tmp][level]}"}
          }
          if [applog][level] == "debug"{
            drop{}
          }
        }
        if [tmp][msg] {
          mutate {
            add_field => {"[applog][msg]" => "%{[tmp][msg]}"}
          }
        }
        if [tmp][func] {
          mutate {
            add_field => {"[applog][func]" => "%{[tmp][func]}"}
          }
        }
        if [tmp][cost]{
          if "ms" in [tmp][cost] {
            mutate {
              split => ["[tmp][cost]","m"]
              add_field => {"[applog][cost]" => "%{[tmp][cost][0]}"}
              convert => ["[applog][cost]", "float"]
            }
          } else {
            mutate {
              add_field => {"[applog][cost]" => "%{[tmp][cost]}"}
            }
          }
        }
        if [tmp][method] {
          mutate {
            add_field => {"[applog][method]" => "%{[tmp][method]}"}
          }
        }
        if [tmp][request_url] {
          mutate {
            add_field => {"[applog][request_url]" => "%{[tmp][request_url]}"}
          }
        }
        if [tmp][meta._id] {
          mutate {
            add_field => {"[applog][traceId]" => "%{[tmp][meta._id]}"}
          }
        }
        if [tmp][project] {
          mutate {
            add_field => {"[applog][project]" => "%{[tmp][project]}"}
          }
        }
        if [tmp][time] {
          mutate {
            add_field => {"[applog][time]" => "%{[tmp][time]}"}
          }
        }
        if [tmp][status] {
          mutate {
            add_field => {"[applog][status]" => "%{[tmp][status]}"}
            convert => ["[applog][status]", "float"]
          }
        }
      }
      mutate {
        rename => ["kubernetes", "k8s"]
        remove_field => "beat"
        remove_field => "tmp"
        remove_field => "[k8s][labels][app]"
      }
    }
    output {
      elasticsearch {
        hosts => ["http://elasticsearch-logging:9200"]
        codec => json
        index => "logstash-%{+YYYY.MM.dd}" # a new index named logstash-<date> is created each day
      }
    }

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: logstash-yml
  namespace: kube-logging
  labels:
    type: logstash
data:
  logstash.yml: |-
    http.host: "0.0.0.0"
    xpack.monitoring.elasticsearch.hosts: http://elasticsearch-logging:9200

3️⃣ Deploy Elasticsearch first

yaml

# Label the node that will hold the ES data in advance
kubectl label node <node name> es=data
# Create es.yaml

---

apiVersion: v1
kind: Service
metadata:
  name: elasticsearch-logging
  namespace: kube-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Elasticsearch"
spec:
  ports:
  - port: 9200
    protocol: TCP
    targetPort: db
  selector:
    k8s-app: elasticsearch-logging

---

# RBAC authn and authz
apiVersion: v1
kind: ServiceAccount
metadata:
  name: elasticsearch-logging
  namespace: kube-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
rules:
- apiGroups:
  - ""
  resources:
  - "services"
  - "namespaces"
  - "endpoints"
  verbs:
  - "get"
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: kube-logging
  name: elasticsearch-logging
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
subjects:
- kind: ServiceAccount
  name: elasticsearch-logging
  namespace: kube-logging
  apiGroup: ""
roleRef:
  kind: ClusterRole
  name: elasticsearch-logging
  apiGroup: ""

---

# Elasticsearch deployment itself
apiVersion: apps/v1
kind: StatefulSet # create the Pod with a StatefulSet
metadata:
  name: elasticsearch-logging # Pods created by a StatefulSet get ordered, numbered names based on this name
  namespace: kube-logging # namespace
  labels:
    k8s-app: elasticsearch-logging
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    srv: srv-elasticsearch
spec:
  serviceName: elasticsearch-logging # ties the StatefulSet to the Service; with a headless Service each Pod gets a stable DNS name of the form <pod-name>.<service-name>.<namespace>.svc.cluster.local
  replicas: 1 # number of replicas; single node
  selector:
    matchLabels:
      k8s-app: elasticsearch-logging # must match the labels in the pod template
  template:
    metadata:
      labels:
        k8s-app: elasticsearch-logging
        kubernetes.io/cluster-service: "true"
    spec:
      serviceAccountName: elasticsearch-logging
      containers:
      - image: docker.io/library/elasticsearch:7.9.3
        name: elasticsearch-logging
        resources:
          # need more cpu upon initialization, therefore burstable class
          limits:
            cpu: 1000m
            memory: 2Gi
          requests:
            cpu: 100m
            memory: 500Mi
        ports:
        - containerPort: 9200
          name: db
          protocol: TCP
        - containerPort: 9300
          name: transport
          protocol: TCP
        volumeMounts:
        - name: elasticsearch-logging
          mountPath: /usr/share/elasticsearch/data/ # mount point
        env:
        - name: "NAMESPACE"
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: "discovery.type" # run as a single-node cluster
          value: "single-node"
        - name: ES_JAVA_OPTS # JVM heap settings; increase as appropriate
          value: "-Xms512m -Xmx2g"
      volumes:
      - name: elasticsearch-logging
        hostPath:
          path: /data/es/
      nodeSelector: # add a nodeSelector if the Pod must land on the labeled data node
        es: data
      tolerations:
      - effect: NoSchedule
        operator: Exists
      # Elasticsearch requires vm.max_map_count to be at least 262144.
      # If your OS already sets up this number to a higher value, feel free
      # to remove this init container.
      initContainers: # steps that run before the main container starts
      - name: elasticsearch-logging-init
        image: alpine:3.6
        command: ["/sbin/sysctl", "-w", "vm.max_map_count=262144"] # raise the mmap count limit; too low a value can cause out-of-memory errors
        securityContext: # applies only to this container and does not affect volumes
          privileged: true # run as a privileged container
      - name: increase-fd-ulimit
        image: busybox
        imagePullPolicy: IfNotPresent
        command: ["sh", "-c", "ulimit -n 65536"] # raise the maximum number of file descriptors
        securityContext:
          privileged: true
      - name: elasticsearch-volume-init # initialize the ES data directory with 777 permissions
        image: alpine:3.6
        command:
        - chmod
        - -R
        - "777"
        - /usr/share/elasticsearch/data/
        volumeMounts:
        - name: elasticsearch-logging
          mountPath: /usr/share/elasticsearch/data/

# Create the namespace
kubectl create ns kube-logging
# Create the service
kubectl create -f es.yaml
# Check that the pod has started
kubectl get pod -n kube-logging

4️⃣ Then deploy Kibana for visualization

yaml

# A domain name is configured for Kibana here; without a real domain, add this entry to the local hosts file
192.168.113.121 kibana.wolfcode.cn
# Create kibana.yaml and create the service

---

apiVersion: v1
kind: ConfigMap
metadata:
  namespace: kube-logging
  name: kibana-config
  labels:
    k8s-app: kibana
data:
  kibana.yml: |-
    server.name: kibana
    server.host: "0"
    i18n.locale: zh-CN # set the default UI language to Chinese
    elasticsearch:
      hosts: ${ELASTICSEARCH_HOSTS} # ES connection address; everything here runs in Kubernetes in the same namespace, so the Service name can be used directly

---

apiVersion: v1
kind: Service
metadata:
  name: kibana
  namespace: kube-logging
  labels:
    k8s-app: kibana
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/name: "Kibana"
    srv: srv-kibana
spec:
  type: NodePort
  ports:
  - port: 5601
    protocol: TCP
    targetPort: ui
  selector:
    k8s-app: kibana

---

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kibana
  namespace: kube-logging
  labels:
    k8s-app: kibana
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
    srv: srv-kibana
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: kibana
  template:
    metadata:
      labels:
        k8s-app: kibana
    spec:
      containers:
      - name: kibana
        image: docker.io/kubeimages/kibana:7.9.3 # this image supports both arm64 and amd64
        resources:
          # need more cpu upon initialization, therefore burstable class
          limits:
            cpu: 1000m
          requests:
            cpu: 100m
        env:
        - name: ELASTICSEARCH_HOSTS
          value: http://elasticsearch-logging:9200
        ports:
        - containerPort: 5601
          name: ui
          protocol: TCP
        volumeMounts:
        - name: config
          mountPath: /usr/share/kibana/config/kibana.yml
          readOnly: true
          subPath: kibana.yml
      volumes:
      - name: config
        configMap:
          name: kibana-config

---

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: kibana
  namespace: kube-logging
spec:
  ingressClassName: nginx
  rules:
  - host: kibana.wolfcode.cn
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: kibana
            port:
              number: 5601

Open the Kibana UI and go to Stack Management in the menu to see the collected logs.

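To keep the logs from growing without bound and filling the disk, open the Index Lifecycle Policies page and click the Create policy button.

Set the policy name to logstash-history-ilm-policy and turn off the hot phase.

Enable the delete phase, set the retention period to 7 days, and save the configuration.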
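
The same retention rule can also be expressed through the Elasticsearch ILM REST API instead of the Kibana UI. The following is a minimal sketch, not the exact policy Kibana writes: it assumes the Elasticsearch address used in the manifests above, reuses the policy name logstash-history-ilm-policy, and invents a template name (`logstash-ilm`) purely for illustration. It only writes the request bodies to a temporary directory and prints the `curl` commands, so nothing is sent until you run them yourself.

```shell
# Assumption: same Elasticsearch Service address as in the manifests above.
ES_URL="${ES_URL:-http://elasticsearch-logging:9200}"
POLICY_NAME="logstash-history-ilm-policy"
WORK_DIR="$(mktemp -d)"

# ILM policy with only a delete phase: indices are deleted 7 days after creation.
cat > "$WORK_DIR/ilm-policy.json" <<'EOF'
{
  "policy": {
    "phases": {
      "delete": {
        "min_age": "7d",
        "actions": { "delete": {} }
      }
    }
  }
}
EOF

# Legacy index template that attaches the policy to new logstash-* indices.
# The template name "logstash-ilm" used below is hypothetical.
cat > "$WORK_DIR/index-template.json" <<'EOF'
{
  "index_patterns": ["logstash-*"],
  "settings": { "index.lifecycle.name": "logstash-history-ilm-policy" }
}
EOF

# Print the requests instead of sending them.
echo "curl -X PUT -H 'Content-Type: application/json' $ES_URL/_ilm/policy/$POLICY_NAME -d @$WORK_DIR/ilm-policy.json"
echo "curl -X PUT -H 'Content-Type: application/json' $ES_URL/_template/logstash-ilm -d @$WORK_DIR/index-template.json"
```

A template only affects indices created after it exists; indices that are already present would need the `index.lifecycle.name` setting applied to them separately.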

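IV: Kubernetes Visualization

KubeSphere is a distributed operating system / container management platform built on top of Kubernetes. It is open source, multi-tenant, pluggable, and works out of the box.

- Visualization: a web UI replaces complex kubectl commands, so newcomers can get started quickly.

- Full-stack capabilities: cluster management, DevOps, service mesh, monitoring / logging / alerting, an app store, multi-tenancy, and edge computing.

- Flexible deployment: supports All-in-One, multi-node, integration with an existing Kubernetes cluster, and offline installation.

- Extensible: v4.x uses an extension-component architecture, so DevOps, service mesh, observability, and other features can be enabled on demand.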

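Installation requirements

Hardware requirements (minimum)

- All-in-One (single node): 4 CPU cores, 8 GB memory, 100 GB storage, CentOS 7.9+ / Ubuntu 20.04+

- Multi-node: master ≥ 4 cores and 8 GB, worker ≥ 2 cores and 4 GB, with network connectivity between nodes

Software dependencies

- Disable the firewall / SELinux and synchronize the clocks

- Install socat, conntrack, ipset, and ebtables

- Optional: Docker/containerd (KubeKey can install it automatically)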
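
The software dependencies listed above can be checked on each node before installing. This is a small sketch under the assumption that each tool is installed under its usual command name; `missing_tools` is a hypothetical helper introduced here, not part of KubeSphere or KubeKey.

```shell
# Hypothetical helper: print every tool from the argument list that is not on PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Tools named in the dependency list above.
required="socat conntrack ipset ebtables"

# $required is deliberately unquoted so it splits into separate arguments.
missing="$(missing_tools $required)"

if [ -n "$missing" ]; then
  echo "missing tools:" $missing
else
  echo "all required tools found"
fi
```

On a CentOS node, anything reported as missing can then be installed with `yum install -y <tool>` before continuing.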

1: Dynamic PVCs on local storage

shell

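# Install the iSCSI initiator on all nodes (OpenEBS needs this protocol for storage support)
yum install iscsi-initiator-utils -y
# Enable at boot (--now also starts the service immediately)
systemctl enable --now iscsid
# Start the service
systemctl start iscsid
# Check the service status
systemctl status iscsid
# Install OpenEBS
kubectl apply -f https://openebs.github.io/charts/openebs-operator.yaml
# Check the status (pulling the images may take a while)
kubectl get all -n openebs
# Create the local storage class on the master node
kubectl apply -f default-storage-class.yaml

2: Installation and deployment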

bash

# Install the resources
kubectl apply -f https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/kubesphere-installer.yaml
kubectl apply -f https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/cluster-configuration.yaml
# Follow the installation log
kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l 'app in (ks-install, ks-installer)' -o jsonpath='{.items[0].metadata.name}') -f
# Check the port
kubectl get svc/ks-console -n kubesphere-system
# The default port is 30880; on a cloud provider, or with a firewall enabled, remember to open this port
# Log in to the console; account/password: admin/P@88w0rd