Optimizing Language Models for Inference Time Objectives using Reinforcement Learning

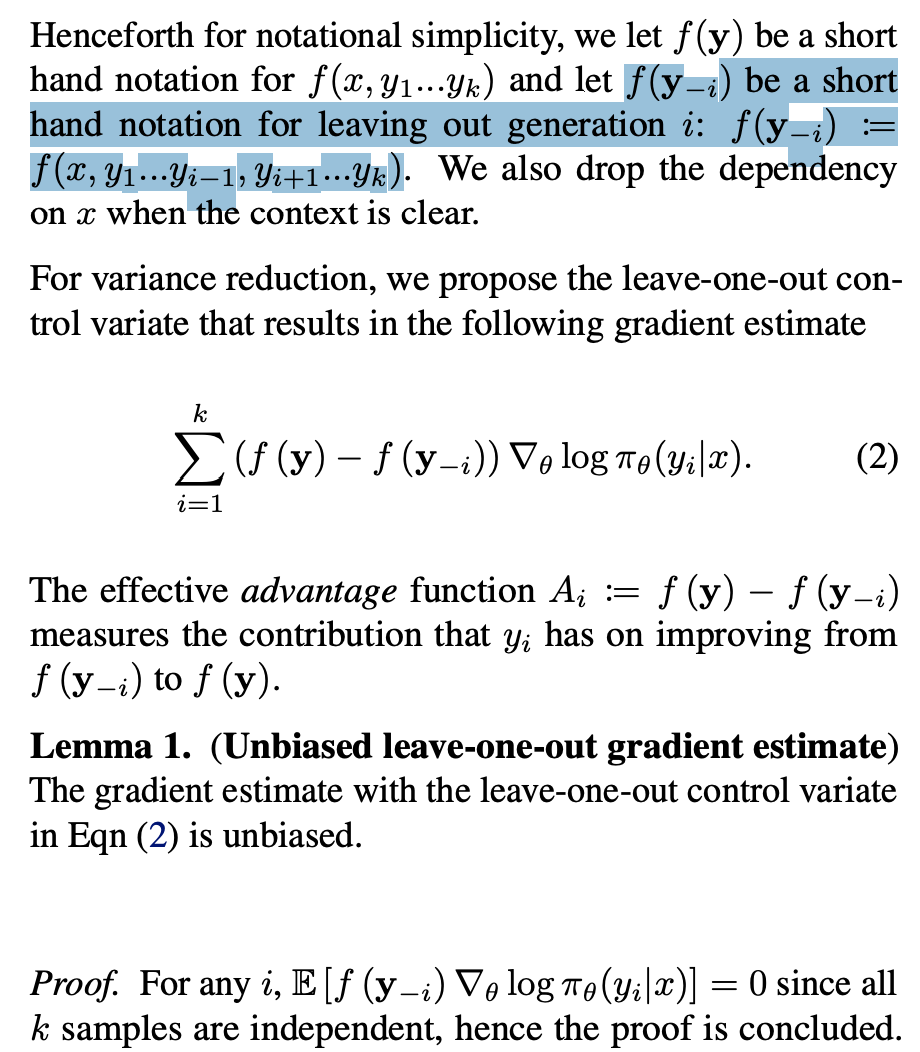

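From https://arxiv.org/pdf/2503.19595. VeRL implements the idea directly with the code below. In essence, a group yields a non-zero advantage only when there is a gap between its highest and second-highest scores; otherwise the advantage is 0. The paper explains where the highest and second-highest scores come from, starting from its definition of the leave-one-out advantage estimate: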

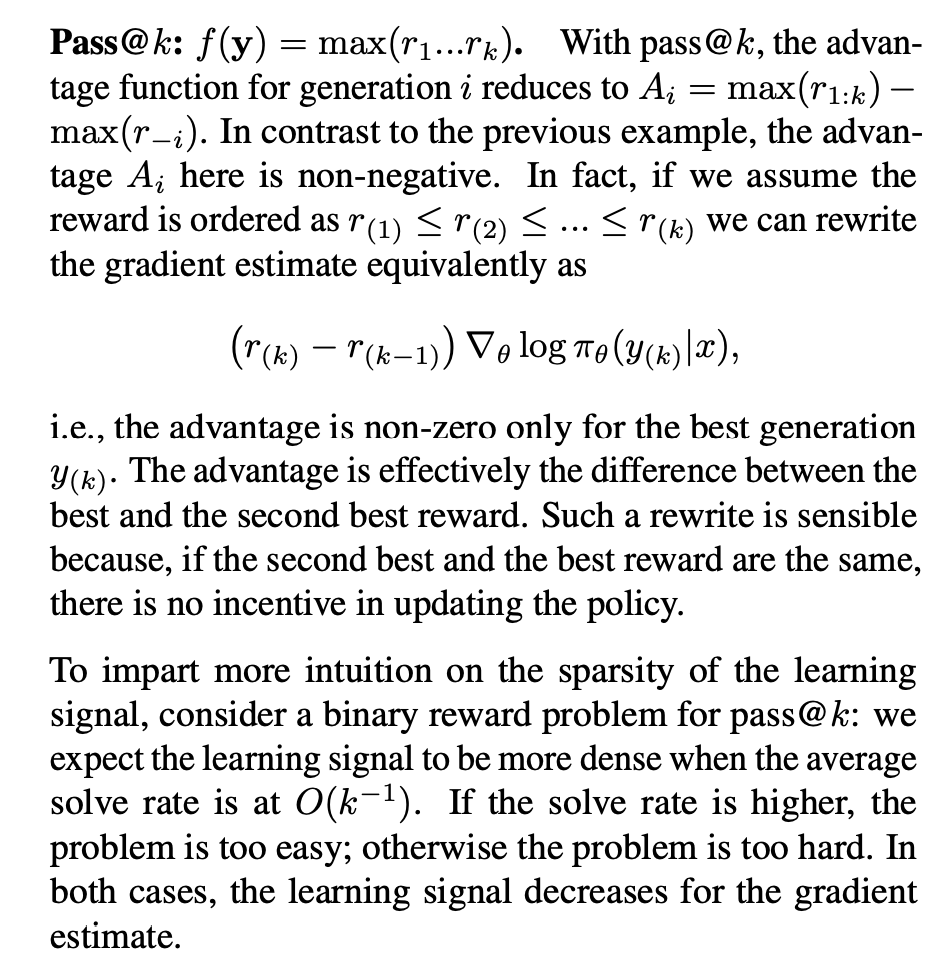

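The paper then discusses the average-reward, pass@k, and majority-voting cases. For pass@k, sorting the rewards reduces the estimate to the highest reward minus the second-highest, assigned to the top-scoring sample.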
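To make the pass@k case concrete, here is a minimal sketch. It assumes the leave-one-out estimate has the form A_i = f(r_1, ..., r_k) - f(all rewards except r_i), and that the pass@k objective f is the maximum reward in the group. The helper name `leave_one_out_advantages` is hypothetical and is not taken from the paper or from VeRL.

```python
def leave_one_out_advantages(rewards, objective):
    """Assumed form: A_i = f(all samples) - f(all samples except i).

    `rewards` is a list with at least 2 entries; `objective` maps a list
    of rewards to a scalar (here: max, standing in for pass@k).
    """
    full = objective(rewards)
    return [
        full - objective(rewards[:i] + rewards[i + 1:])
        for i in range(len(rewards))
    ]


# With f = max, removing any sample other than the best leaves the max
# unchanged, so only the best sample gets r_max - r_second_max.
print(leave_one_out_advantages([0.0, 1.0, 0.25, 0.5], max))  # [0.0, 0.5, 0.0, 0.0]

# If the top two rewards tie, removing either one still leaves the same
# max, so every advantage is zero.
print(leave_one_out_advantages([1.0, 1.0, 0.0], max))  # [0.0, 0.0, 0.0]
```

The second call shows the "no gap, no advantage" behaviour described above: a group with two equally good best samples contributes no learning signal under this estimate.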

In the experiments, the authors emphasize: "With language model training on reasoning datasets, we showcase the performance trade-off enabled by training with such objectives. When training on code generation tasks, we show that the approach significantly improves pass@k objectives compared to the baseline method." For the paper's review details, see https://openreview.net/forum?id=ZVWJO5YTz4

```python
from collections import defaultdict
from typing import Optional

import numpy as np
import torch

# register_adv_est, AdvantageEstimator, and AlgoConfig are provided by VeRL;
# this function is meant to sit alongside them in VeRL's advantage-estimator code.


@register_adv_est(AdvantageEstimator.GRPO_PASSK)  # or simply: @register_adv_est("grpo_passk")
def compute_grpo_passk_outcome_advantage(
    token_level_rewards: torch.Tensor,
    response_mask: torch.Tensor,
    index: np.ndarray,
    epsilon: float = 1e-6,
    norm_adv_by_std_in_grpo: bool = True,
    config: Optional[AlgoConfig] = None,
    **kwargs,
) -> tuple[torch.Tensor, torch.Tensor]:
    """
    Compute advantage for Pass@k using a GRPO-style outcome reward formulation.
    Only the best response per group gets a non-zero advantage: r_max - r_second_max.

    Implemented as described in https://arxiv.org/abs/2503.19595.

    Args:
        token_level_rewards: (bs, response_length)
        response_mask: (bs, response_length)
        index: (bs,) → group ID per sample
        epsilon: float for numerical stability
        config: (AlgoConfig) algorithm settings, which contains "norm_adv_by_std_in_grpo"

    Returns:
        advantages: (bs, response_length)
        returns: (bs, response_length)
    """
    assert config is not None
    # if True, normalize advantage by std within group
    # (note: this overrides the norm_adv_by_std_in_grpo argument)
    norm_adv_by_std_in_grpo = config.get("norm_adv_by_std_in_grpo", True)
    scores = token_level_rewards.sum(dim=-1)  # (bs,)
    advantages = torch.zeros_like(scores)

    id2scores = defaultdict(list)
    id2indices = defaultdict(list)

    with torch.no_grad():
        # Bucket sequence-level scores and batch positions by group ID
        bsz = scores.shape[0]
        for i in range(bsz):
            idx = index[i]
            id2scores[idx].append(scores[i])
            id2indices[idx].append(i)

        for idx in id2scores:
            rewards = torch.stack(id2scores[idx])  # (k,)
            if rewards.numel() < 2:
                raise ValueError(
                    f"Pass@k requires at least 2 samples per group. Got {rewards.numel()} for group {idx}."
                )
            topk, topk_idx = torch.topk(rewards, 2)
            r_max, r_second_max = topk[0], topk[1]
            # Map the within-group position of the best sample back to its batch index
            i_max = id2indices[idx][topk_idx[0].item()]
            advantage = r_max - r_second_max
            if norm_adv_by_std_in_grpo:
                std = torch.std(rewards)
                advantage = advantage / (std + epsilon)
            # Every other sample in the group keeps advantage 0
            advantages[i_max] = advantage

    # Broadcast the per-sequence advantage over valid response tokens
    advantages = advantages.unsqueeze(-1) * response_mask
    return advantages, advantages
```

Pass@k Training for Adaptively Balancing Exploration and Exploitation of Large Reasoning Models

```python
from collections import defaultdict

import numpy as np
import torch
from scipy.special import comb


def calc_adv(val, k):
    """Analytic Pass@k advantages for one group of binary (0/1) rewards."""
    c = len(np.where(val == 1)[0])  # number of correct responses
    n = len(val)  # group size
    # Probability that a random k-subset contains at least one correct response
    rho = 1 - comb(n - c, k) / comb(n, k)
    sigma = np.sqrt(rho * (1 - rho))
    # Advantage assigned to correct responses
    adv_p = (1 - rho) / (sigma + 1e-6)
    # Advantage assigned to incorrect responses
    adv_n = (1 - rho - comb(n - c - 1, k - 1) / comb(n - 1, k - 1)) / (sigma + 1e-6)
    new_val = np.where(val == 1, adv_p, val)
    new_val = np.where(new_val == 0, adv_n, new_val)
    return new_val


def compute_advantage(token_level_rewards, response_mask, index, K):
    scores = token_level_rewards.sum(dim=-1)

    id2score = defaultdict(list)
    uid2sid = defaultdict(list)

    with torch.no_grad():
        # Bucket sequence-level scores and batch positions by group ID
        bsz = scores.shape[0]
        for i in range(bsz):
            id2score[index[i]].append(scores[i].detach().item())
            uid2sid[index[i]].append(i)
        # Overwrite each score with its analytic Pass@k advantage
        for uid in id2score.keys():
            reward = np.array(id2score[uid])
            adv = calc_adv(reward, K)
            for i in range(len(uid2sid[uid])):
                scores[uid2sid[uid][i]] = adv[i]
        # Broadcast the per-sequence advantage over valid response tokens
        scores = scores.unsqueeze(-1) * response_mask
    return scores, scores
```
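One way to read the closed form in `calc_adv` is as a shortcut for enumerating every size-k subset of the n responses. Each subset gets reward 1 if it contains a correct response, subset rewards are standardized, and each response receives the average standardized reward of the subsets it belongs to. The sketch below checks that reading by brute force. It is an interpretation under these assumptions, not the authors' code; the function names are hypothetical, rewards are assumed binary, and 1 <= k <= n.

```python
from itertools import combinations
from math import comb, sqrt


def _comb(n, k):
    # math.comb rejects negative arguments; treat those cases as 0 ways
    return comb(n, k) if n >= 0 and k >= 0 else 0


def analytic_passk_advantages(rewards, k, eps=1e-6):
    """Closed form with the same structure as calc_adv (binary rewards)."""
    n = len(rewards)
    c = sum(1 for r in rewards if r == 1)
    rho = 1 - _comb(n - c, k) / _comb(n, k)  # mean subset reward
    sigma = sqrt(rho * (1 - rho))  # std of the 0/1 subset reward
    adv_pos = (1 - rho) / (sigma + eps)
    adv_neg = (1 - rho - _comb(n - c - 1, k - 1) / _comb(n - 1, k - 1)) / (sigma + eps)
    return [adv_pos if r == 1 else adv_neg for r in rewards]


def brute_force_passk_advantages(rewards, k, eps=1e-6):
    """Enumerate all k-subsets and average their standardized rewards."""
    n = len(rewards)
    subsets = list(combinations(range(n), k))
    # A subset "passes" if at least one of its members is correct
    subset_rewards = [max(rewards[i] for i in s) for s in subsets]
    mean = sum(subset_rewards) / len(subset_rewards)
    std = sqrt(sum((g - mean) ** 2 for g in subset_rewards) / len(subset_rewards))
    advantages = []
    for i in range(n):
        mine = [g for s, g in zip(subsets, subset_rewards) if i in s]
        advantages.append((sum(mine) / len(mine) - mean) / (std + eps))
    return advantages


rewards = [1, 0, 0, 0, 1, 0, 0, 0]  # n = 8 responses, c = 2 correct
closed = analytic_passk_advantages(rewards, k=4)
brute = brute_force_passk_advantages(rewards, k=4)
print(all(abs(a - b) < 1e-9 for a, b in zip(closed, brute)))
```

In this example, correct responses get a positive advantage and incorrect ones a small negative advantage. An incorrect response can still land in a passing subset, which is why its penalty is milder than it would be under per-sample rewards.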