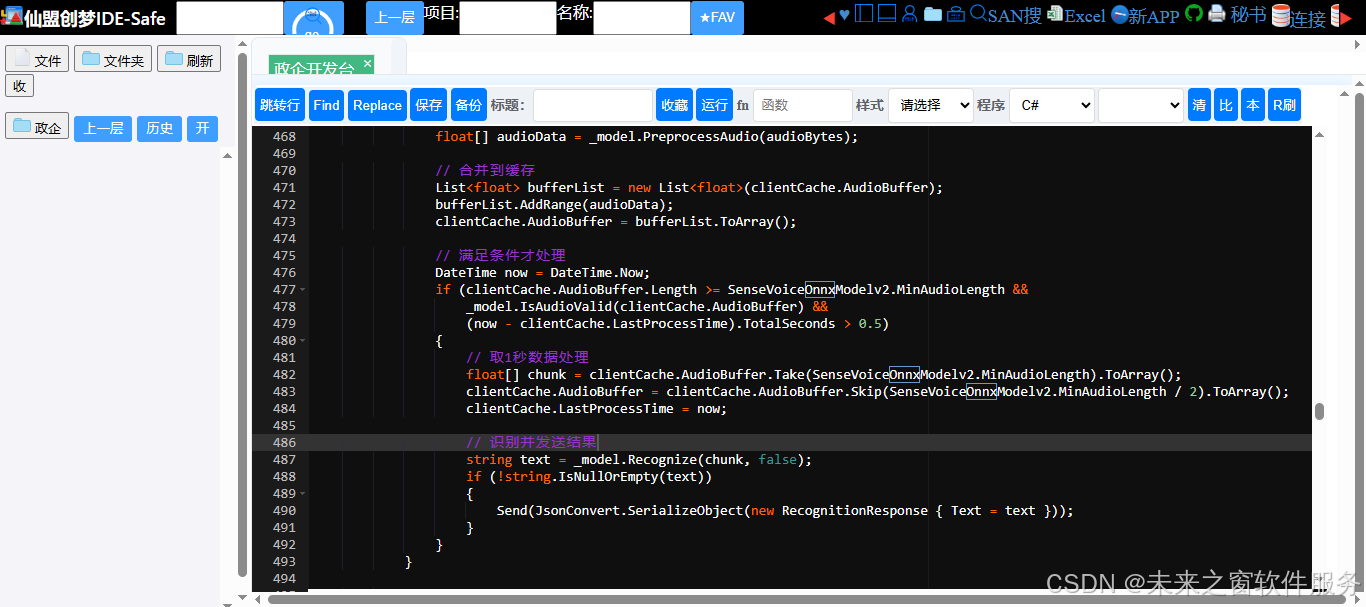

Core code

Cause of the AI error: incorrect ONNX parameters
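One way to narrow down a parameter error of this kind is to compare the input names the model declares with the names the code actually passes to `Run`. The sketch below is a minimal helper under stated assumptions: `OnnxInputCheck` and `Compare` are hypothetical names that do not appear in the full code, and the helper only looks at names, not at tensor shapes or element types.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical helper (not part of the original code): reports mismatches
// between the input names a model declares and the names the caller supplies.
public static class OnnxInputCheck
{
    // Returns one message per problem; an empty list means the names line up.
    public static List<string> Compare(IEnumerable<string> expected, IEnumerable<string> supplied)
    {
        var expectedSet = new HashSet<string>(expected, StringComparer.Ordinal);
        var suppliedSet = new HashSet<string>(supplied, StringComparer.Ordinal);
        var problems = new List<string>();

        // Inputs the model declares but the caller never provided
        foreach (var name in expectedSet.Where(n => !suppliedSet.Contains(n))
                                        .OrderBy(n => n, StringComparer.Ordinal))
        {
            problems.Add("missing input: " + name);
        }

        // Inputs the caller provided but the model does not declare
        foreach (var name in suppliedSet.Where(n => !expectedSet.Contains(n))
                                        .OrderBy(n => n, StringComparer.Ordinal))
        {
            problems.Add("unexpected input: " + name);
        }

        return problems;
    }
}
```

With ONNX Runtime, the expected names could come from `session.InputMetadata.Keys` and the supplied names from `inputs.Select(i => i.Name)`. An empty result means the names match, which is necessary but not sufficient for `Run` to succeed, since shapes and element types also have to agree.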

Full code

using System;

using System.Collections.Concurrent;

using System.Collections.Generic;

using System.IO;

using System.Linq;

using System.Net;

using System.Runtime.Remoting.Contexts;

using System.Text;

using System.Threading;

using System.Threading.Tasks;

using System.Numerics;

using System.Reflection;

using MathNet.Numerics;

using Microsoft.ML.OnnxRuntime;

using Microsoft.ML.OnnxRuntime.Tensors;

using NAudio.Wave;

using Newtonsoft.Json;

using WebSocketSharp;

using WebSocketSharp.Server;

namespace CyberWin_TradeTest_Sensvoice2026.CyberWin.VoiceServer.sensevoice

{

namespace CyberWin_TradeTest_Sensvoice2026.CyberWin.VoiceServer.sensevoice

{

#region Data models

/// <summary>

/// Recognition response model

/// </summary>

public class RecognitionResponse

{

[JsonProperty("text")]

public string Text { get; set; } = string.Empty;

[JsonProperty("error")]

public string Error { get; set; } = string.Empty;

}

/// <summary>

/// EOF signal model

/// </summary>

public class EofSignal

{

[JsonProperty("eof")]

public int Eof { get; set; } // no default of 1: JSON without an "eof" field must not be read as an EOF signal

}

/// <summary>

/// Per-client cache state

/// </summary>

public class ClientCache

{

public float[] AudioBuffer { get; set; } = Array.Empty<float>();

public DateTime LastProcessTime { get; set; } = DateTime.Now;

public bool IsFirst { get; set; } = true;

}

#endregion

#region ONNX model wrapper (core change: input names are detected automatically)

/// <summary>

/// SenseVoice ONNX model wrapper (targets .NET Framework 4.7)

/// </summary>

public class SenseVoiceOnnxModelv2 : IDisposable

{

// Configuration constants

public const int SampleRate = 16000;

public const int MinAudioLength = 16000; // 1 second at 16 kHz

public const float EnergyThreshold = 0.01f;

public const int BufferSize = 8192;

// Model input names read from the model metadata (core change)

private string _voiceInputName; // input name of the SenseVoice model

private string _vadInputName; // input name of the VAD model

private bool _hasIsFinalInput; // whether the model declares an "is_final" input

// ONNX Runtime

private readonly InferenceSession _voiceSession;

private readonly InferenceSession _vadSession;

private readonly object _lockObj = new object();

/// <summary>

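/// Initializes the models (input names are detected automatically)

/// </summary>

/// <param name="voiceModelPath">Path to the SenseVoice ONNX model</param>

/// <param name="vadModelPath">Path to the VAD ONNX model</param>

/// <param name="useGpu">Whether to use the GPU (currently ignored: the CPU provider is always used)</param>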

public SenseVoiceOnnxModelv2(string voiceModelPath, string vadModelPath, bool useGpu = false)

{

// Verify that both model files exist

if (!File.Exists(voiceModelPath))

throw new FileNotFoundException("SenseVoice模型文件不存在", voiceModelPath);

if (!File.Exists(vadModelPath))

throw new FileNotFoundException("VAD模型文件不存在", vadModelPath);

// Configure ONNX Runtime (CPU only, to avoid GPU compatibility problems; useGpu is ignored)

var sessionOptions = new SessionOptions();

sessionOptions.GraphOptimizationLevel = GraphOptimizationLevel.ORT_ENABLE_ALL;

sessionOptions.AppendExecutionProvider_CPU();

Console.WriteLine("使用CPU运行SenseVoice模型");

// Load each model once and read the input names from the live session,

// instead of opening a temporary session just to inspect the metadata

Console.WriteLine("正在加载SenseVoice模型...");

_voiceSession = new InferenceSession(voiceModelPath, sessionOptions);

Console.WriteLine("正在加载VAD模型...");

_vadSession = new InferenceSession(vadModelPath, sessionOptions);

Console.WriteLine("正在读取模型元数据...");

// Use the first declared input as the audio input of the SenseVoice model

_voiceInputName = _voiceSession.InputMetadata.Keys.FirstOrDefault();

if (string.IsNullOrEmpty(_voiceInputName))

throw new InvalidOperationException("SenseVoice模型未检测到输入节点");

// Check whether the model declares an "is_final" input

_hasIsFinalInput = _voiceSession.InputMetadata.ContainsKey("is_final");

Console.WriteLine($"SenseVoice模型输入名称:{_voiceInputName}");

Console.WriteLine($"是否包含is_final输入:{_hasIsFinalInput}");

// Same for the VAD model

_vadInputName = _vadSession.InputMetadata.Keys.FirstOrDefault();

if (string.IsNullOrEmpty(_vadInputName))

throw new InvalidOperationException("VAD模型未检测到输入节点");

Console.WriteLine($"VAD模型输入名称:{_vadInputName}");

// Warm up the model (uses the detected input names)

PreloadModel();

Console.WriteLine("模型加载完成!");

}

/// <summary>

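/// Warms up the model (avoids the latency of the first inference)

/// </summary>

private void PreloadModel()

{

var dummyInput = new DenseTensor<float>(new[] { 1, MinAudioLength });

var inputs = new List<NamedOnnxValue>

{

// Use the detected input name

NamedOnnxValue.CreateFromTensor(_voiceInputName, dummyInput)

};

// Supply "is_final" here as well when the model declares it, as Recognize does

if (_hasIsFinalInput)

{

inputs.Add(NamedOnnxValue.CreateFromTensor("is_final",

new DenseTensor<bool>(new[] { false }, new[] { 1 })));

}

lock (_lockObj)

{

using (var results = _voiceSession.Run(inputs))

{

// Nothing to do: the run itself warms up the session

}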

}

}

/// <summary>

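/// Checks whether the audio is long enough and loud enough to be worth processing

/// </summary>

/// <param name="audioData">Audio samples</param>

/// <returns>True if the audio is valid</returns>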

public bool IsAudioValid(float[] audioData)

{

if (audioData == null || audioData.Length < MinAudioLength / 2)

return false;

// Compute the RMS level of the audio

float energy = (float)Math.Sqrt(audioData.Average(x => x * x));

return energy > EnergyThreshold;

}

/// <summary>

/// Audio preprocessing: 16-bit PCM to float conversion and resampling to 16 kHz

/// </summary>

/// <param name="audioBytes">Raw audio bytes (assumed to be 16-bit mono PCM)</param>

/// <param name="sourceSampleRate">Source sample rate in Hz</param>

/// <returns>Preprocessed float samples</returns>

public float[] PreprocessAudio(byte[] audioBytes, int sourceSampleRate = SampleRate)

{

if (audioBytes == null || audioBytes.Length == 0)

return Array.Empty<float>();

// 1. Convert 16-bit PCM to float in the range [-1, 1)

float[] floatAudio = new float[audioBytes.Length / 2];

for (int i = 0; i < floatAudio.Length; i++)

{

short sample = BitConverter.ToInt16(audioBytes, i * 2);

floatAudio[i] = sample / 32768.0f;

}

// 2. Resample to 16 kHz

if (sourceSampleRate != SampleRate)

{

floatAudio = ResampleAudio(floatAudio, sourceSampleRate, SampleRate);

}

return floatAudio;

}

/// <summary>

/// Audio resampling (works on .NET Framework)

/// </summary>

/// <param name="audioData">Audio samples</param>

/// <param name="srcRate">Source sample rate in Hz</param>

/// <param name="dstRate">Target sample rate in Hz</param>

/// <returns>Resampled audio</returns>

private float[] ResampleAudio(float[] audioData, int srcRate, int dstRate)

{

if (srcRate == dstRate || audioData.Length == 0)

return audioData;

try

{

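// Convert the float samples back to 16-bit PCM bytes

byte[] pcmBytes = new byte[audioData.Length * 2];

for (int i = 0; i < audioData.Length; i++)

{

// Clamp to [-1, 1] first so out-of-range samples do not wrap around in the cast

float clamped = Math.Max(-1f, Math.Min(1f, audioData[i]));

short sample = (short)(clamped * 32767);

BitConverter.GetBytes(sample).CopyTo(pcmBytes, i * 2);

}

// Resample with NAudio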

using (var msIn = new MemoryStream(pcmBytes))

using (var rawReader = new RawSourceWaveStream(msIn, new WaveFormat(srcRate, 16, 1)))

using (var resampler = new WaveFormatConversionStream(new WaveFormat(dstRate, 16, 1), rawReader))

using (var msOut = new MemoryStream())

{

byte[] buffer = new byte[4096];

int bytesRead;

while ((bytesRead = resampler.Read(buffer, 0, buffer.Length)) > 0)

{

msOut.Write(buffer, 0, bytesRead);

}

// Convert back to float

byte[] resampledBytes = msOut.ToArray();

float[] resampledFloat = new float[resampledBytes.Length / 2];

for (int i = 0; i < resampledFloat.Length; i++)

{

short sample = BitConverter.ToInt16(resampledBytes, i * 2);

resampledFloat[i] = sample / 32767.0f;

}

return resampledFloat;

}

}

catch (Exception ex)

{

Console.WriteLine($"重采样失败:{ex.Message}");

return audioData;

}

}

/// <summary>

/// Speech recognition inference (uses the detected input name)

/// </summary>

/// <param name="audioData">Preprocessed audio samples</param>

/// <param name="isFinal">Whether this is the last segment</param>

/// <returns>Recognized text</returns>

public string Recognize(float[] audioData, bool isFinal = false)

{

if (!IsAudioValid(audioData))

return string.Empty;

lock (_lockObj)

{

try

{

// Build the input tensor with shape [1, length]

var inputTensor = new DenseTensor<float>(audioData, new[] { 1, audioData.Length });

var inputs = new List<NamedOnnxValue>

{

// Use the detected input name

NamedOnnxValue.CreateFromTensor(_voiceInputName, inputTensor)

};

// Add "is_final" only when the model declares that input

if (_hasIsFinalInput)

{

inputs.Add(NamedOnnxValue.CreateFromTensor("is_final",

new DenseTensor<bool>(new[] { isFinal }, new[] { 1 })));

}

// Run inference

using (var results = _voiceSession.Run(inputs))

{

// Accept either string or integer output (some models emit token IDs)

string text = string.Empty;

try

{

// Try string output first

var outputTensor = results.First().AsTensor<string>();

text = outputTensor.FirstOrDefault() ?? string.Empty;

}

catch

{

// Fall back to integer token IDs. This only concatenates the raw IDs;

// turning them into text needs the model's vocabulary, which this code does not load

var outputTensor = results.First().AsTensor<int>();

int[] tokens = outputTensor.ToArray();

text = string.Join("", tokens.Select(t => t.ToString()));

}

// Format the text

return FormatText(text);

}

}

catch (Exception ex)

{

Console.WriteLine($"推理错误:{ex.Message}");

return string.Empty;

}

}

}

/// <summary>

/// Voice activity detection (uses the detected VAD input name)

/// </summary>

/// <param name="audioData">Audio samples</param>

/// <returns>True if the audio contains speech</returns>

public bool DetectVoiceActivity(float[] audioData)

{

if (!IsAudioValid(audioData))

return false;

try

{

var inputTensor = new DenseTensor<float>(audioData, new[] { 1, audioData.Length });

var inputs = new List<NamedOnnxValue>

{

// Use the detected VAD input name

NamedOnnxValue.CreateFromTensor(_vadInputName, inputTensor)

};

using (var results = _vadSession.Run(inputs))

{

var output = results.First().AsTensor<float>();

return output.Average() > 0.5f;

}

}

catch (Exception ex)

{

Console.WriteLine($"VAD检测错误:{ex.Message}");

return true; // on error, assume speech is present

}

}

/// <summary>

/// Formats the recognized text

/// </summary>

/// <param name="text">Raw text</param>

/// <returns>Formatted text</returns>

private string FormatText(string text)

{

if (string.IsNullOrEmpty(text))

return string.Empty;

// Strip special tags of the form <|...|> and collapse extra whitespace

text = System.Text.RegularExpressions.Regex.Replace(text, @"<\|.*?\|>", "");

text = System.Text.RegularExpressions.Regex.Replace(text, @"\s+", " ").Trim();

return text;

}

public void Dispose()

{

_voiceSession?.Dispose();

_vadSession?.Dispose();

}

}

#endregion

#region WebSocket service (targets .NET Framework 4.7)

/// <summary>

/// Streaming recognition WebSocket service

/// </summary>

public class StreamingRecognitionServicev2 : WebSocketBehavior

{

private static readonly ConcurrentDictionary<string, ClientCache> _clientCache = new ConcurrentDictionary<string, ClientCache>();

private static SenseVoiceOnnxModelv2 _model;

private string _clientId;

/// <summary>

/// Sets the shared model instance

/// </summary>

/// <param name="model">Model instance</param>

public static void SetModel(SenseVoiceOnnxModelv2 model)

{

_model = model;

}

protected override void OnOpen()

{

_clientId = ID;

_clientCache.TryAdd(_clientId, new ClientCache());

Console.WriteLine($"客户端连接:{_clientId}");

}

protected override void OnMessage(MessageEventArgs e)

{

try

{

if (_model == null)

{

SendError("模型未初始化");

return;

}

ClientCache clientCache;

if (!_clientCache.TryGetValue(_clientId, out clientCache))

{

clientCache = new ClientCache();

_clientCache.TryAdd(_clientId, clientCache);

}

// Binary messages carry audio data

if (e.IsBinary)

{

ProcessAudioData(e.RawData, clientCache);

}

// Text messages carry the EOF signal

else if (e.IsText)

{

ProcessTextMessage(e.Data, clientCache);

}

}

catch (Exception ex)

{

Console.WriteLine($"消息处理错误:{ex.Message}");

SendError(ex.Message);

}

}

/// <summary>

/// Handles a block of audio data

/// </summary>

/// <param name="audioBytes">Audio bytes</param>

/// <param name="clientCache">Cache of the sending client</param>

private void ProcessAudioData(byte[] audioBytes, ClientCache clientCache)

{

if (audioBytes == null || audioBytes.Length == 0)

return;

// Preprocess the audio

float[] audioData = _model.PreprocessAudio(audioBytes);

// Append it to the client's buffer

List<float> bufferList = new List<float>(clientCache.AudioBuffer);

bufferList.AddRange(audioData);

clientCache.AudioBuffer = bufferList.ToArray();

// Run recognition only when there is at least 1 s of valid audio

// and more than 0.5 s has passed since the last run

DateTime now = DateTime.Now;

if (clientCache.AudioBuffer.Length >= SenseVoiceOnnxModelv2.MinAudioLength &&

_model.IsAudioValid(clientCache.AudioBuffer) &&

(now - clientCache.LastProcessTime).TotalSeconds > 0.5)

{

// Take a 1-second chunk and advance the buffer by 0.5 s (50% overlap between chunks)

float[] chunk = clientCache.AudioBuffer.Take(SenseVoiceOnnxModelv2.MinAudioLength).ToArray();

clientCache.AudioBuffer = clientCache.AudioBuffer.Skip(SenseVoiceOnnxModelv2.MinAudioLength / 2).ToArray();

clientCache.LastProcessTime = now;

// Recognize and send the result

string text = _model.Recognize(chunk, false);

if (!string.IsNullOrEmpty(text))

{

Send(JsonConvert.SerializeObject(new RecognitionResponse { Text = text }));

}

}

}

/// <summary>

/// Handles a text message

/// </summary>

/// <param name="text">Message text</param>

/// <param name="clientCache">Cache of the sending client</param>

private void ProcessTextMessage(string text, ClientCache clientCache)

{

try

{

EofSignal signal = JsonConvert.DeserializeObject<EofSignal>(text);

if (signal != null && signal.Eof == 1)

{

// Recognize whatever audio is left in the buffer

if (clientCache.AudioBuffer.Length > SenseVoiceOnnxModelv2.MinAudioLength / 2 &&

_model.IsAudioValid(clientCache.AudioBuffer))

{

string finalText = _model.Recognize(clientCache.AudioBuffer, true);

Send(JsonConvert.SerializeObject(new RecognitionResponse { Text = finalText }));

}

// Send the completion marker, then close the connection

Send(JsonConvert.SerializeObject(new RecognitionResponse { Text = "[识别完成]" }));

Context.WebSocket.Close();

}

}

catch

{

// Ignore text that cannot be parsed as an EOF signal

}

}

protected override void OnClose(CloseEventArgs e)

{

ClientCache cache;

_clientCache.TryRemove(_clientId, out cache);

Console.WriteLine($"客户端断开:{_clientId} - {e.Reason}");

}

protected override void OnError(WebSocketSharp.ErrorEventArgs e)

{

Console.WriteLine($"WebSocket错误:{e.Message}");

ClientCache cache;

_clientCache.TryRemove(_clientId, out cache);

}

/// <summary>

/// Sends an error message to the client

/// </summary>

/// <param name="error">Error message</param>

private void SendError(string error)

{

Send(JsonConvert.SerializeObject(new RecognitionResponse { Error = error }));

}

}

#endregion

#region HTTP service (targets .NET Framework 4.7)

/// <summary>

/// HTTP service that recognizes uploaded audio files

/// </summary>

public class HttpRecognitionServerv2

{

private readonly HttpListener _listener;

private readonly SenseVoiceOnnxModelv2 _model;

private readonly int _port;

private bool _isRunning;

/// <summary>

/// Initializes the HTTP service

/// </summary>

/// <param name="port">Port to listen on</param>

/// <param name="model">Model instance</param>

public HttpRecognitionServerv2(int port, SenseVoiceOnnxModelv2 model)

{

_port = port;

_model = model;

_listener = new HttpListener();

_listener.Prefixes.Add($"http://*:{port}/");

_isRunning = false;

}

/// <summary>

/// Starts the service

/// </summary>

public void Start()

{

if (_isRunning)

return;

_listener.Start();

_isRunning = true;

Console.WriteLine($"HTTP文件识别服务已启动:http://0.0.0.0:{_port}");

// Accept loop on a dedicated long-running task (works on .NET Framework)

Task.Factory.StartNew(() =>

{

while (_isRunning && _listener.IsListening)

{

try

{

HttpListenerContext context = _listener.GetContext();

ThreadPool.QueueUserWorkItem(ProcessRequest, context);

}

catch (HttpListenerException ex)

{

if (ex.ErrorCode != 995) // 995 = operation aborted, which is expected when the listener is stopped

Console.WriteLine($"HTTP监听错误:{ex.Message}");

break;

}

catch (Exception ex)

{

Console.WriteLine($"HTTP请求处理错误:{ex.Message}");

}

}

}, TaskCreationOptions.LongRunning);

}

/// <summary>

/// Stops the service

/// </summary>

public void Stop()

{

_isRunning = false;

_listener.Stop();

_listener.Close();

}

/// <summary>

/// Handles one HTTP request

/// </summary>

/// <param name="state">Request context</param>

private void ProcessRequest(object state)

{

HttpListenerContext context = state as HttpListenerContext;

if (context == null)

return;

HttpListenerResponse response = context.Response;

try

{

// Answer OPTIONS requests (CORS preflight)

if (context.Request.HttpMethod == "OPTIONS")

{

response.Headers.Add("Access-Control-Allow-Origin", "*");

response.Headers.Add("Access-Control-Allow-Methods", "POST, OPTIONS");

response.Headers.Add("Access-Control-Allow-Headers", "Content-Type");

response.StatusCode = 200;

response.Close();

return;

}

// Only POST requests are accepted

if (context.Request.HttpMethod != "POST")

{

response.StatusCode = 405;

WriteResponse(response, new RecognitionResponse { Error = "仅支持POST请求" });

return;

}

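// Read the whole request body. A single Read call may return fewer bytes than requested,

// and ContentLength64 is -1 when the length is unknown (for example, chunked uploads)

byte[] requestData;

using (var bodyStream = new MemoryStream())

{

context.Request.InputStream.CopyTo(bodyStream);

requestData = bodyStream.ToArray();

}

context.Request.InputStream.Close();

// Parse the WAV file (PreprocessAudio assumes 16-bit mono PCM samples)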

float[] audioData;

try

{

using (var ms = new MemoryStream(requestData))

using (var waveReader = new WaveFileReader(ms))

{

byte[] waveBytes = ReadAllBytes(waveReader);

audioData = _model.PreprocessAudio(waveBytes, waveReader.WaveFormat.SampleRate);

}

}

catch (Exception ex)

{

WriteResponse(response, new RecognitionResponse { Error = $"音频解析失败:{ex.Message}" });

return;

}

// Run recognition

string text = _model.Recognize(audioData, true);

WriteResponse(response, new RecognitionResponse { Text = text });

}

catch (Exception ex)

{

WriteResponse(response, new RecognitionResponse { Error = ex.Message });

}

finally

{

response.Close();

}

}

/// <summary>

/// Reads all sample bytes from the WAV reader

/// </summary>

private byte[] ReadAllBytes(WaveFileReader reader)

{

using (var ms = new MemoryStream())

{

byte[] buffer = new byte[4096];

int bytesRead;

while ((bytesRead = reader.Read(buffer, 0, buffer.Length)) > 0)

{

ms.Write(buffer, 0, bytesRead);

}

return ms.ToArray();

}

}

/// <summary>

/// Writes the JSON response

/// </summary>

private void WriteResponse(HttpListenerResponse response, RecognitionResponse data)

{

response.ContentType = "application/json";

response.Headers.Add("Access-Control-Allow-Origin", "*");

string json = JsonConvert.SerializeObject(data);

byte[] buffer = Encoding.UTF8.GetBytes(json);

response.ContentLength64 = buffer.Length;

response.OutputStream.Write(buffer, 0, buffer.Length);

response.OutputStream.Flush();

}

}

#endregion

#region WinForms service startup wrapper (fixes the thread-abort exception)

/// <summary>

/// WinForms service startup helper (fixes the thread-abort exception)

/// </summary>

public class SenseVoiceServiceHelper

{

private WebSocketServer _wsServer;

private HttpRecognitionServerv2 _httpServer;

private SenseVoiceOnnxModelv2 _model;

private Thread _serviceThread;

private System.Windows.Forms.TextBox _logTextBox; // control that receives the log output

/// <summary>

/// Initializes the service helper

/// </summary>

/// <param name="logTextBox">Text box that receives the log output</param>

public SenseVoiceServiceHelper(System.Windows.Forms.TextBox logTextBox)

{

_logTextBox = logTextBox;

}

/// <summary>

/// Appends a log line, marshalling to the UI thread when needed

/// </summary>

/// <param name="message">Log message</param>

private void UpdateLog(string message)

{

if (_logTextBox.InvokeRequired)

{

_logTextBox.BeginInvoke(new Action<string>(UpdateLog), message);

return;

}

_logTextBox.AppendText($"{DateTime.Now:yyyy-MM-dd HH:mm:ss} - {message}\r\n");

_logTextBox.ScrollToCaret();

}

}

#endregion

}

}

Eastern Fairy Alliance (东方仙盟): Embracing Open Knowledge, Building a New Digital Ecosystem Together

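Amid the waves of globalization and digitalization, the Eastern Fairy Alliance has consistently upheld the principles of open collaboration and knowledge sharing, and actively embraces open-source technology and open standards. We believe that only by breaking down technical barriers and pooling global expertise can the industry develop sustainably.

Empowering small and medium-sized merchants through open source: by open-sourcing core capabilities such as front-end anomaly detection and cross-system data interconnection, the Eastern Fairy Alliance offers small and medium-sized merchants worldwide low-cost, highly reliable technical solutions, so that more businesses can share equally in the benefits of digital transformation.

Building industry standards together: we take an active part in international technical communities and work with developers and partners worldwide to define open protocols and technical specifications, promoting system interoperability across cross-border retail, cultural tourism, catering and other business types, and building a fairer, more efficient digital ecosystem.

Accessible knowledge, shared growth: through open-source communities, technical documentation and training programs, the Eastern Fairy Alliance works to turn cutting-edge technology into practical industry applications, empowering partners worldwide, jointly cultivating innovative talent, and promoting inclusive growth of the digital economy.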

Axue's View on Technology

Hey folks, in this wild tech-driven world, why not dive headfirst into the whole tech-sharing scene? Don't just be the one reaping all the benefits; step up and be a contributor too. Whether you're tossing out your code snippets, hammering out some tech blogs, or getting your hands dirty with maintaining and sprucing up open-source projects, every little thing you do might just end up being a massive force that pushes tech forward. And guess what? The Eastern Fairy Alliance is this awesome place where we all come together. We're gonna team up and explore the whole silicon-based life thing, and in the process, we'll be fueling the growth of technology.