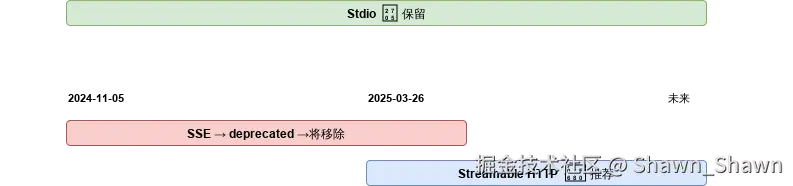

I. The three transports in detail

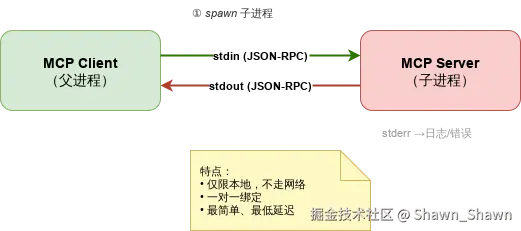

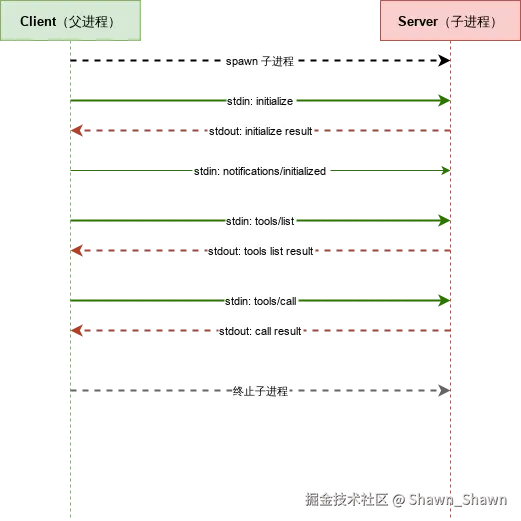

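1. Stdio transport

1.1 How it works

Stdio (standard input/output) is the simplest transport. The MCP client launches the server as a child process and exchanges messages with it in both directions over the process's stdin and stdout.

Key rules:

- Each JSON-RPC message is delimited by a newline (`\n`).
- stdout is reserved for protocol messages; logs and debug output must go to stderr.
- Client and server are bound one-to-one and share the same lifecycle.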
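The rules above can be sketched without the MCP SDK at all. The snippet below is a minimal illustration, not the SDK's implementation: the child "server" source, the `round_trip` helper, and the `ping`/`echo` payload are all hypothetical names chosen for this example. It shows one JSON message per line on stdin/stdout, with logging kept on stderr.

```python
import json
import subprocess
import sys

# Hypothetical child "server": reads one JSON-RPC message per line from stdin,
# logs to stderr, and writes exactly one JSON line per reply to stdout.
CHILD_SOURCE = r'''
import json, sys
for line in sys.stdin:
    request = json.loads(line)
    print("handling " + request["method"], file=sys.stderr)  # logs go to stderr
    reply = {"jsonrpc": "2.0", "id": request["id"], "result": {"echo": request["method"]}}
    sys.stdout.write(json.dumps(reply) + "\n")  # protocol messages go to stdout
    sys.stdout.flush()
'''


def round_trip(method: str) -> dict:
    """Start the child, send one newline-delimited request, and read one reply."""
    child = subprocess.Popen(
        [sys.executable, "-c", CHILD_SOURCE],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
        stderr=subprocess.DEVNULL,  # discarded here; a real client would typically show it as logs
        text=True,
    )
    try:
        request = {"jsonrpc": "2.0", "id": 1, "method": method}
        child.stdin.write(json.dumps(request) + "\n")
        child.stdin.flush()
        return json.loads(child.stdout.readline())
    finally:
        child.stdin.close()  # closing stdin ends the child's read loop
        child.wait(timeout=5)


if __name__ == "__main__":
    print(round_trip("ping"))
```

Because the client owns the child process, closing stdin is enough to shut the server down, which is what "shared lifecycle" means in practice.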

1.2 Architecture diagram (draw.io)

1.3 Interaction flow (draw.io sequence diagram)

1.4 Code example

Server side: stdio_server.py

```python
# stdio_server.py
import json
import logging
import urllib.parse

from mcp.server.fastmcp import FastMCP
from mcp.server.fastmcp.prompts import base

# logging writes to stderr by default, so stdout stays reserved for protocol messages
logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

# Create the MCP server instance
mcp = FastMCP(name="stdio-demo")


# A simple greeting tool
@mcp.tool()
def greeting(name: str = "World") -> str:
    """Return a greeting."""
    return f"Hello, {name}!"


# An addition tool
@mcp.tool()
def add(a: int, b: int) -> int:
    """Add two integers."""
    return a + b


@mcp.resource("models://")
def get_models() -> str:
    """Get information about available AI models"""
    logger.info("Retrieving available models")
    models_data = [
        {
            "id": "gpt-4",
            "name": "GPT-4",
            "description": "OpenAI's GPT-4 large language model",
        },
        {
            "id": "llama-3-70b",
            "name": "LLaMA 3 (70B)",
            "description": "Meta's LLaMA 3 with 70 billion parameters",
        },
        {
            "id": "claude-3-sonnet",
            "name": "Claude 3 Sonnet",
            "description": "Anthropic's Claude 3 Sonnet model",
        },
    ]
    return json.dumps({"models": models_data})


# A templated resource that builds a personalized greeting from the URI
@mcp.resource("greeting://{name}")
def get_greeting(name: str) -> str:
    """Return a greeting for the given name

    Args:
        name: The name to greet

    Returns:
        A personalized greeting message
    """
    # Decode URL-encoded name
    decoded_name = urllib.parse.unquote(name)
    logger.info(f"Generating greeting for {decoded_name}")
    return f"Hello, {decoded_name}!"


@mcp.resource("file://documents/{name}")
def read_document(name: str) -> str:
    """Read a document by name."""
    # This would normally read from disk
    return f"Content of {name}"


@mcp.prompt(title="Code Review")
def review_code(code: str) -> str:
    return f"Please review this code:\n\n{code}"


@mcp.prompt(title="Debug Assistant")
def debug_error(error: str) -> list[base.Message]:
    return [
        base.UserMessage("I'm seeing this error:"),
        base.UserMessage(error),
        base.AssistantMessage("I'll help debug that. What have you tried so far?"),
    ]


if __name__ == "__main__":
    mcp.run()  # stdio is the default transport
```

Client side: stdio_client.py

python

# client.py

import sys

import urllib

from mcp import stdio_client, StdioServerParameters

from mcp.client.session import ClientSession

import asyncio

import logging

import json

from mcp.types import TextContent, TextResourceContents

logging.basicConfig(level=logging.INFO)

logger = logging.getLogger(__name__)

async def main():

"""Main client function that demonstrates MCP client features"""

logger.info("Starting clean MCP client")

try:

logger.info("Connecting to server...")

params = StdioServerParameters(

command="python", # Executable

args=["sse-server.py"], # Server script

env=None, # Optional environment variables

)

async with stdio_client(params) as (reader, writer):

async with ClientSession(reader, writer) as session:

logger.info("Initializing session")

await session.initialize()

# 1. Call the add tool

logger.info("Testing calculator tool")

add_result = await session.call_tool("add", arguments={"a": 5, "b": 7})

if add_result and add_result.content:

text_content = next((content for content in add_result.content

if isinstance(content, TextContent)), None)

if text_content:

print(f"\n1. Calculator result (5 + 7) = {text_content.text}")

# 2. Get models resource

logger.info("Testing models resource")

models_response = await session.read_resource("models://")

if models_response and models_response.contents:

text_resource = next((content for content in models_response.contents

if isinstance(content, TextResourceContents)), None)

if text_resource:

models = json.loads(text_resource.text)

print("\n3. Available models:")

for model in models.get("models", []):

print(f" - {model['name']} ({model['id']}): {model['description']}")

# 4. Get greeting resource

logger.info("Testing greeting resource")

name = "MCP Explorer"

encoded_name = urllib.parse.quote(name)

greeting_response = await session.read_resource(f"greeting://{encoded_name}")

if greeting_response and greeting_response.contents:

text_resource = next((content for content in greeting_response.contents

if isinstance(content, TextResourceContents)), None)

if text_resource:

print(f"\n4. Greeting: {text_resource.text}")

# 5. Get document resource

logger.info("Testing document resource")

document_response = await session.read_resource("file://documents/example.txt")

if document_response and document_response.contents:

text_resource = next((content for content in document_response.contents

if isinstance(content, TextResourceContents)), None)

if text_resource:

print(f"\n5. Document content:")

print(f" {text_resource.text}")

# 6. Use code review prompt

logger.info("Testing code review prompt")

sample_code = "def hello_world():\n print('Hello, world!')"

prompt_response = await session.get_prompt("review_code", {"code": sample_code})

if prompt_response and prompt_response.messages:

message = next((msg for msg in prompt_response.messages if msg.content), None)

if message and message.content:

text_content = next((content for content in [message.content]

if isinstance(content, TextContent)), None)

if text_content:

print("\n6. Code review prompt:")

print(f" {text_content.text}")

except Exception:

logger.exception("An error occurred")

sys.exit(1)

if __name__ == "__main__":

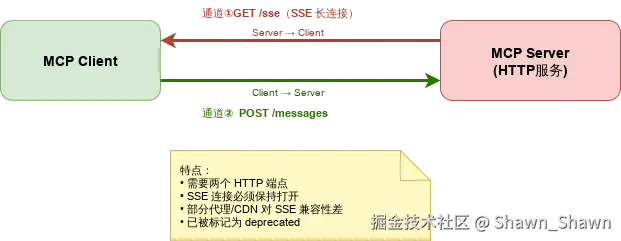

asyncio.run(main())2. SSE 传输

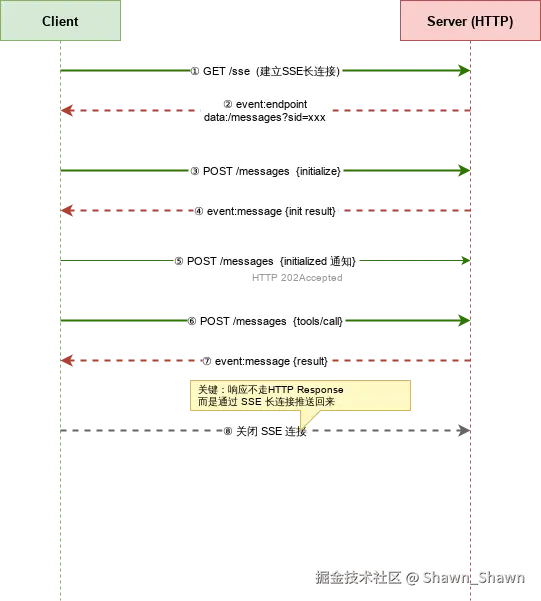

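2.1 How it works

The SSE (Server-Sent Events) transport runs over HTTP and uses two channels to achieve bidirectional communication:

| Channel | Direction | Method | Purpose |
|---|---|---|---|
| /sse | Server → Client | GET | Persistent SSE connection; the server pushes messages |
| /messages | Client → Server | POST | The client sends JSON-RPC requests |

⚠️ The SSE transport was marked as deprecated in MCP protocol version 2025-03-26; Streamable HTTP is the recommended replacement.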
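To make the two channels concrete, here is a minimal sketch of what arrives on the `/sse` channel and how a client might split it into events. It is a simplified parser written for illustration, not the one used by the MCP SDK; the `parse_sse` helper, the sample session id, and the exact POST path are assumptions. In the 2024-11-05 transport the first event is an `endpoint` event that tells the client where to POST; later `message` events carry JSON-RPC payloads.

```python
import json


def _field_value(line: str, field: str) -> str:
    """Return the text after 'field:', dropping one optional leading space."""
    value = line[len(field) + 1:]
    return value[1:] if value.startswith(" ") else value


def parse_sse(stream_text: str) -> list[tuple[str, str]]:
    """Split an SSE stream into (event, data) pairs; a blank line ends each event."""
    events = []
    event_name, data_lines = "message", []
    for line in stream_text.splitlines():
        if line == "":
            if data_lines:
                events.append((event_name, "\n".join(data_lines)))
            event_name, data_lines = "message", []  # reset for the next event
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        elif line.startswith("event:"):
            event_name = _field_value(line, "event")
        elif line.startswith("data:"):
            data_lines.append(_field_value(line, "data"))
    return events


# Hypothetical stream: the session id and path are made up for this example.
SAMPLE = (
    "event: endpoint\n"
    "data: /messages/?session_id=abc123\n"
    "\n"
    "event: message\n"
    'data: {"jsonrpc": "2.0", "id": 1, "result": {}}\n'
    "\n"
)

if __name__ == "__main__":
    for name, data in parse_sse(SAMPLE):
        payload = json.loads(data) if name == "message" else data
        print(name, payload)
```

The client keeps the GET stream open for the whole session and sends every request as a separate POST to the advertised endpoint, which is why the response to a request arrives on a different connection than the request itself.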

2.2 Architecture diagram (draw.io)

2.3 Interaction flow (draw.io)

2.4 Code example

Server side: sse_server.py

```python
# sse_server.py
import json
import logging
import urllib.parse

from mcp.server.fastmcp import FastMCP
from mcp.server.fastmcp.prompts import base

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

# Create the MCP server instance (HTTP server listens on port 8082)
mcp = FastMCP(name="sse-demo", port=8082)


# A simple greeting tool
@mcp.tool()
def greeting(name: str = "World") -> str:
    """Return a greeting."""
    return f"Hello, {name}!"


# An addition tool
@mcp.tool()
def add(a: int, b: int) -> int:
    """Add two integers."""
    return a + b


@mcp.resource("models://")
def get_models() -> str:
    """Get information about available AI models"""
    logger.info("Retrieving available models")
    models_data = [
        {
            "id": "gpt-4",
            "name": "GPT-4",
            "description": "OpenAI's GPT-4 large language model",
        },
        {
            "id": "llama-3-70b",
            "name": "LLaMA 3 (70B)",
            "description": "Meta's LLaMA 3 with 70 billion parameters",
        },
        {
            "id": "claude-3-sonnet",
            "name": "Claude 3 Sonnet",
            "description": "Anthropic's Claude 3 Sonnet model",
        },
    ]
    return json.dumps({"models": models_data})


# A templated resource that builds a personalized greeting from the URI
@mcp.resource("greeting://{name}")
def get_greeting(name: str) -> str:
    """Return a greeting for the given name

    Args:
        name: The name to greet

    Returns:
        A personalized greeting message
    """
    # Decode URL-encoded name
    decoded_name = urllib.parse.unquote(name)
    logger.info(f"Generating greeting for {decoded_name}")
    return f"Hello, {decoded_name}!"


@mcp.resource("file://documents/{name}")
def read_document(name: str) -> str:
    """Read a document by name."""
    # This would normally read from disk
    return f"Content of {name}"


@mcp.prompt(title="Code Review")
def review_code(code: str) -> str:
    return f"Please review this code:\n\n{code}"


@mcp.prompt(title="Debug Assistant")
def debug_error(error: str) -> list[base.Message]:
    return [
        base.UserMessage("I'm seeing this error:"),
        base.UserMessage(error),
        base.AssistantMessage("I'll help debug that. What have you tried so far?"),
    ]


if __name__ == "__main__":
    mcp.run(transport="sse")
```

Client side: sse_client.py

```python
# sse_client.py
import asyncio
import json
import logging
import sys
import urllib.parse

from mcp.client.session import ClientSession
from mcp.client.sse import sse_client
from mcp.types import TextContent, TextResourceContents

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)


async def main():
    """Main client function that demonstrates MCP client features"""
    logger.info("Starting clean MCP client")
    try:
        logger.info("Connecting to server...")
        async with sse_client(url="http://localhost:8082/sse") as (reader, writer):
            async with ClientSession(reader, writer) as session:
                logger.info("Initializing session")
                await session.initialize()

                # 1. Call the add tool
                logger.info("Testing calculator tool")
                add_result = await session.call_tool("add", arguments={"a": 5, "b": 7})
                if add_result and add_result.content:
                    text_content = next((content for content in add_result.content
                                         if isinstance(content, TextContent)), None)
                    if text_content:
                        print(f"\n1. Calculator result (5 + 7) = {text_content.text}")

                # 2. Get models resource
                logger.info("Testing models resource")
                models_response = await session.read_resource("models://")
                if models_response and models_response.contents:
                    text_resource = next((content for content in models_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        models = json.loads(text_resource.text)
                        print("\n2. Available models:")
                        for model in models.get("models", []):
                            print(f" - {model['name']} ({model['id']}): {model['description']}")

                # 3. Get greeting resource
                logger.info("Testing greeting resource")
                name = "MCP Explorer"
                encoded_name = urllib.parse.quote(name)
                greeting_response = await session.read_resource(f"greeting://{encoded_name}")
                if greeting_response and greeting_response.contents:
                    text_resource = next((content for content in greeting_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        print(f"\n3. Greeting: {text_resource.text}")

                # 4. Get document resource
                logger.info("Testing document resource")
                document_response = await session.read_resource("file://documents/example.txt")
                if document_response and document_response.contents:
                    text_resource = next((content for content in document_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        print("\n4. Document content:")
                        print(f" {text_resource.text}")

                # 5. Use code review prompt
                logger.info("Testing code review prompt")
                sample_code = "def hello_world():\n    print('Hello, world!')"
                prompt_response = await session.get_prompt("review_code", {"code": sample_code})
                if prompt_response and prompt_response.messages:
                    message = next((msg for msg in prompt_response.messages if msg.content), None)
                    if message and isinstance(message.content, TextContent):
                        print("\n5. Code review prompt:")
                        print(f" {message.content.text}")
    except Exception:
        logger.exception("An error occurred")
        sys.exit(1)


if __name__ == "__main__":
    asyncio.run(main())
```

3. Streamable HTTP transport

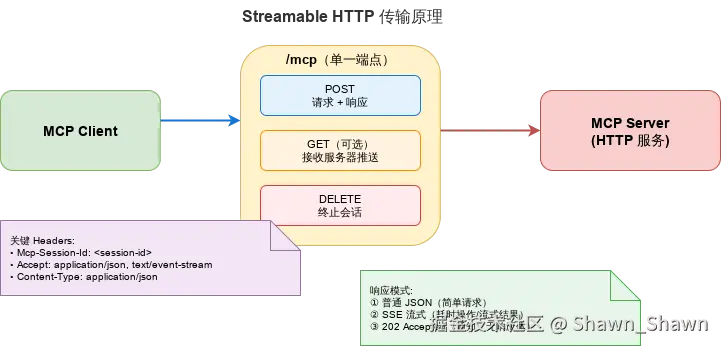

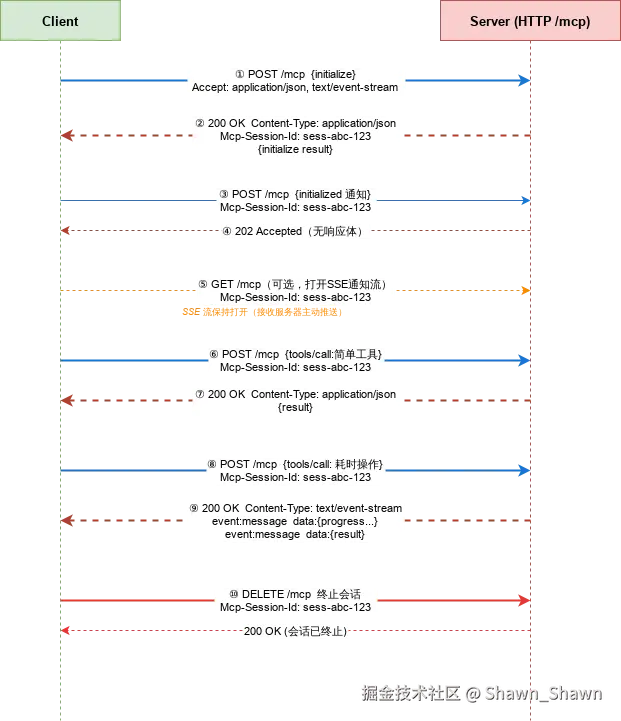

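3.1 How it works

Streamable HTTP is the transport introduced in MCP protocol version 2025-03-26 as the replacement for SSE.

Core design:

| Feature | Description |
|---|---|
| Single endpoint | All communication goes through one endpoint such as /mcp (the path is configurable); client messages are sent with POST |
| Flexible responses | The server may answer with plain JSON or with an SSE stream |
| Session management | Handled through the Mcp-Session-Id HTTP header; optional |
| Stateful / stateless | Supports both stateful (session-bound) and stateless (every request independent) modes |
| Server push | The client can open an SSE stream with GET /mcp to receive notifications |
| Session termination | The client ends a session explicitly with DELETE /mcp |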
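The table can be illustrated with two small helpers covering the client side of a single POST. This is a sketch under stated assumptions, not the SDK's code: `build_post_headers` and `decode_response` are hypothetical names, and the SSE handling is deliberately simplified (it only reads `data:` lines and assumes `\n` line endings). It shows the `Accept` header advertising both response formats, the optional `Mcp-Session-Id` header, and how one response body is decoded depending on its content type.

```python
import json
from typing import Optional


def build_post_headers(session_id: Optional[str] = None) -> dict[str, str]:
    """Headers for POSTing a JSON-RPC message to the single MCP endpoint."""
    headers = {
        "Content-Type": "application/json",
        # The client must be ready for either a JSON body or an SSE stream.
        "Accept": "application/json, text/event-stream",
    }
    if session_id is not None:
        headers["Mcp-Session-Id"] = session_id  # only present in stateful mode
    return headers


def decode_response(content_type: str, body: str) -> list[dict]:
    """Return the JSON-RPC messages carried by a response body."""
    media_type = content_type.split(";")[0].strip().lower()
    if media_type == "application/json":
        return [json.loads(body)]
    if media_type == "text/event-stream":
        messages = []
        for block in body.split("\n\n"):  # a blank line separates SSE events
            data = [line[5:].strip() for line in block.splitlines()
                    if line.startswith("data:")]
            if data:
                messages.append(json.loads("\n".join(data)))
        return messages
    raise ValueError(f"unexpected content type: {content_type}")


if __name__ == "__main__":
    print(build_post_headers("session-123"))
    print(decode_response("application/json", '{"jsonrpc": "2.0", "id": 1, "result": {}}'))
```

In stateless mode the client simply never receives or sends a session id, so each POST can be handled by any server instance.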

3.2 Architecture diagram (draw.io)

3.3 Interaction flow (draw.io)

3.4 Code example

Server side: streamable_http_server.py

```python
# streamable_http_server.py
import json
import logging
import urllib.parse

from mcp.server.fastmcp import FastMCP
from mcp.server.fastmcp.prompts import base

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

# Create the MCP server instance (stateful mode, HTTP server on port 8081)
mcp = FastMCP(name="streamable-demo", port=8081, stateless_http=False)


# A simple greeting tool
@mcp.tool()
def greeting(name: str = "World") -> str:
    """Return a greeting."""
    return f"Hello, {name}!"


# An addition tool
@mcp.tool()
def add(a: int, b: int) -> int:
    """Add two integers."""
    return a + b


@mcp.resource("models://")
def get_models() -> str:
    """Get information about available AI models"""
    logger.info("Retrieving available models")
    models_data = [
        {
            "id": "gpt-4",
            "name": "GPT-4",
            "description": "OpenAI's GPT-4 large language model",
        },
        {
            "id": "llama-3-70b",
            "name": "LLaMA 3 (70B)",
            "description": "Meta's LLaMA 3 with 70 billion parameters",
        },
        {
            "id": "claude-3-sonnet",
            "name": "Claude 3 Sonnet",
            "description": "Anthropic's Claude 3 Sonnet model",
        },
    ]
    return json.dumps({"models": models_data})


# A templated resource that builds a personalized greeting from the URI
@mcp.resource("greeting://{name}")
def get_greeting(name: str) -> str:
    """Return a greeting for the given name

    Args:
        name: The name to greet

    Returns:
        A personalized greeting message
    """
    # Decode URL-encoded name
    decoded_name = urllib.parse.unquote(name)
    logger.info(f"Generating greeting for {decoded_name}")
    return f"Hello, {decoded_name}!"


@mcp.resource("file://documents/{name}")
def read_document(name: str) -> str:
    """Read a document by name."""
    # This would normally read from disk
    return f"Content of {name}"


@mcp.prompt(title="Code Review")
def review_code(code: str) -> str:
    return f"Please review this code:\n\n{code}"


@mcp.prompt(title="Debug Assistant")
def debug_error(error: str) -> list[base.Message]:
    return [
        base.UserMessage("I'm seeing this error:"),
        base.UserMessage(error),
        base.AssistantMessage("I'll help debug that. What have you tried so far?"),
    ]


if __name__ == "__main__":
    mcp.run(transport="streamable-http")
```

Client side: streamable_http_client.py

```python
# streamable_http_client.py - Streamable HTTP Protocol MCP Client
import asyncio
import json
import logging
import sys
import urllib.parse

from mcp.client.session import ClientSession
from mcp.client.streamable_http import streamablehttp_client
from mcp.types import TextContent, TextResourceContents

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)


async def main():
    """Main client function that demonstrates MCP client features with streamable protocol"""
    logger.info("Starting Streamable MCP Client")
    try:
        # Connect to streamable HTTP server
        logger.info("Connecting to streamable server at http://localhost:8081/mcp...")
        # The third item is a callback that returns the session id; it is unused here
        async with streamablehttp_client("http://localhost:8081/mcp") as (reader, writer, _):
            async with ClientSession(reader, writer) as session:
                logger.info("Initializing session")
                await session.initialize()

                # 1. Call the greeting tool
                logger.info("Testing greeting tool")
                greeting_result = await session.call_tool("greeting", arguments={"name": "World"})
                if greeting_result and greeting_result.content:
                    text_content = next((content for content in greeting_result.content
                                         if isinstance(content, TextContent)), None)
                    if text_content:
                        print(f"\n1. Greeting: {text_content.text}")

                # 2. Call the add tool
                logger.info("Testing calculator tool")
                add_result = await session.call_tool("add", arguments={"a": 5, "b": 7})
                if add_result and add_result.content:
                    text_content = next((content for content in add_result.content
                                         if isinstance(content, TextContent)), None)
                    if text_content:
                        print(f"\n2. Calculator result (5 + 7) = {text_content.text}")

                # 3. Get models resource
                logger.info("Testing models resource")
                models_response = await session.read_resource("models://")
                if models_response and models_response.contents:
                    text_resource = next((content for content in models_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        models = json.loads(text_resource.text)
                        print("\n3. Available models:")
                        for model in models.get("models", []):
                            print(f" - {model['name']} ({model['id']}): {model['description']}")

                # 4. Get greeting resource
                logger.info("Testing greeting resource")
                name = "MCP Explorer"
                encoded_name = urllib.parse.quote(name)
                greeting_response = await session.read_resource(f"greeting://{encoded_name}")
                if greeting_response and greeting_response.contents:
                    text_resource = next((content for content in greeting_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        print(f"\n4. Greeting: {text_resource.text}")

                # 5. Get document resource
                logger.info("Testing document resource")
                document_response = await session.read_resource("file://documents/example.txt")
                if document_response and document_response.contents:
                    text_resource = next((content for content in document_response.contents
                                          if isinstance(content, TextResourceContents)), None)
                    if text_resource:
                        print("\n5. Document content:")
                        print(f" {text_resource.text}")

                # 6. Use code review prompt
                logger.info("Testing code review prompt")
                sample_code = "def hello_world():\n    print('Hello, world!')"
                prompt_response = await session.get_prompt("review_code", {"code": sample_code})
                if prompt_response and prompt_response.messages:
                    message = next((msg for msg in prompt_response.messages if msg.content), None)
                    if message and isinstance(message.content, TextContent):
                        print("\n6. Code review prompt:")
                        print(f" {message.content.text}")

                # 7. Use debug error prompt (multi-message format)
                logger.info("Testing debug assistant prompt")
                error_message = "AttributeError: 'NoneType' object has no attribute 'method'"
                debug_response = await session.get_prompt("debug_error", {"error": error_message})
                if debug_response and debug_response.messages:
                    print("\n7. Debug assistant prompt (multi-message):")
                    # Messages arrive as PromptMessage objects, so branch on msg.role
                    # rather than on the server-side UserMessage/AssistantMessage classes.
                    for idx, msg in enumerate(debug_response.messages, start=1):
                        text = msg.content.text if isinstance(msg.content, TextContent) else msg.content
                        print(f" [{msg.role} message {idx}]: {text}")
    except Exception:
        logger.exception("An error occurred")
        sys.exit(1)


if __name__ == "__main__":
    asyncio.run(main())
```

II. Comparison of the three transports

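| Feature | Stdio | SSE | Streamable HTTP |
|---|---|---|---|
| Protocol version | All versions | 2024-11-05 (deprecated) | 2025-03-26 (recommended) |
| Communication | Process stdin/stdout | HTTP GET + POST (two endpoints) | Unified HTTP POST (single endpoint) |
| Connection model | Bound to the process | Persistent SSE connection | Flexible (stateful or stateless) |
| Network support | ❌ Local only | ✅ Supported | ✅ Supported |
| Number of endpoints | N/A | 2 (/sse + /messages) | 1 (/mcp) |
| Streaming responses | ✅ Inherently streaming | ✅ SSE push | ✅ Optional JSON or SSE stream |
| Multiple clients | ❌ One-to-one | ✅ | ✅ |
| Stateless mode | ❌ | ❌ | ✅ |
| Session resumption | ❌ | ❌ Reconnecting is difficult | ✅ Supported |
| Infrastructure compatibility | N/A | ⚠️ SSE can have proxy compatibility issues | ✅ Standard HTTP |
| Best suited for | Local IDE / CLI | Legacy network services | First choice for new projects |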
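The "Best suited for" row can be condensed into a small decision helper. This is only a sketch of the table's recommendation: `choose_transport` and its two flags are hypothetical names, and real projects may weigh other factors. The returned strings match the values used with `mcp.run(transport=...)` in the examples above, where stdio is the default.

```python
def choose_transport(local_only: bool, server_is_legacy_sse: bool = False) -> str:
    """Pick a transport name following the comparison table's recommendations."""
    if local_only:
        return "stdio"  # local IDE / CLI: the client spawns the server as a child process
    if server_is_legacy_sse:
        return "sse"  # only to stay compatible with an existing SSE deployment
    return "streamable-http"  # first choice for new networked services


if __name__ == "__main__":
    print(choose_transport(local_only=True))
    print(choose_transport(local_only=False, server_is_legacy_sse=True))
    print(choose_transport(local_only=False))
```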