1 业务背景

GaussDB分布式与集中式数据库在数据存储、扩展性、数据一致性与执行计划的差异外,在数据库调优方面确认分布键与复制表的选择。

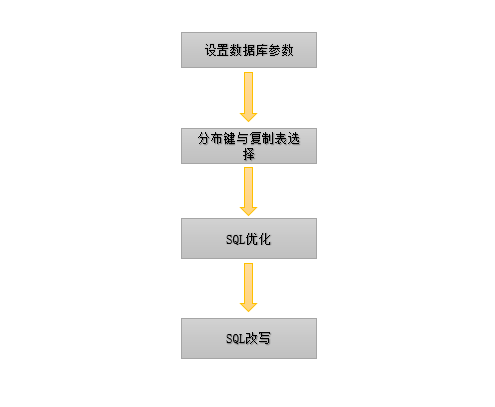

2 分布式数据库调优基本步骤

3 操作实践

3.1 数据库参数

只需要设置几个常规参数,特别的参数设置需要根据后面优化中遇到的问题针对性的设置即可。

常规参数如下:

- 流控参数

bash

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "audit_enabled=off";- 开启stream

bash

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "enable_stream_operator='on'";- 线程池:

bash

gs_guc set -Z datanode -Z coordinator -N all -I all -c "enable_thread_pool='on'"- arm 线程池 绑核

bash

gs_guc set -Z datanode -N all -I all -c "numa_distribute_mode='all'";

gs_guc set -Z datanode -N all -I all -c "thread_pool_attr='1024,4,(numabind: 0-31,32-63,64-95,96-127)'";- sql优化相关 (sql优化完成后正式压测需要关闭一下参数)

bash

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "track_stmt_parameter='on'"; ---显示PBE 入参值

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "instr_unique_sql_count=50000" --dbe_perf.summary_statement unique sql收集数量

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "track_activity_query_size=10000" --- dbe_perf.summary_statement query长度

gs_guc reload -Z datanode -Z coordinator -N all -I all -c "log_min_duration_statement=10"; --- 捕获大于10ms的sql记录到statement_history中 ------ 调试完后 调整为3S3.2 布键 和 复制表的选择

分布式数据库中在两表join中如果非分布键 就是产生 broadcast或者redistribute算子,这两种很影响sql的执行效率。特别是再并发的情况下。所有一般的做法是把整个业务的sql提前出来,把有join的sql中的表提取出来,根据表名来筛选看看这次跟哪些表有join,join的字段是什么,以此为依据选出最适合的分布键 和 复制表。

怎么提前分析业务sql:通过 summary_statement 视图来提取,分布式 业务sql耗时:

sql

select

node_name,

unique_sql_id ,

round(total_elapse_time/n_calls,2) as avg_time,

n_calls as n_calls,

round(plan_time/total_elapse_time,2)*100 as plan_time_rto,

replace(replace(query,CHR(10),''),' ',' ') as query,

last_updated

from dbe_perf.summary_statement

where user_name='xxx'

and n_calls>10 order by 3 desc;3.3 sql 优化

分布键 和 复制表的选择后,可以先跑一轮压测。通过上面的sql来提取 平均耗时最长的sql。找到平均耗时最长的sql后 可以通过unique_sql_id字段 反查只要知道sql的执行计划,入参值,等待时间等来分析:查看sql耗时详细内容需解析details:

sql

select pg_catalog.statement_detail_decode(details,'plaintext',true) as detail

from

(

select

*

from dbe_perf.get_global_full_sql_by_timestamp(now()-1/24,now())

) a

where a.unique_query_id=xxx

order by start_time desc limit 1;如果details 信息中 LockMgrLock 耗着占比很高。可以通过修改num_internal_lock_partitions 参数 减少 轻量级锁 耗时,只要修改 LOG2_LOCKTABLE_PART,FASTPATH_PART:

bash

gs_guc set -Z coordinator -Z datanode -N all -I all -c "num_internal_lock_partitions='CLOG_PART=256,CSNLOG_PART=512,LOG2_LOCKTABLE_PART=4,TWOPHASE_PART=1,FASTPATH_PART=150'";

通过上面的sql获取到入参值后:我们可以手动构成pbe ,来完成模拟java pbe执行,然后看具体的执行计划 (为啥要模拟java pbe的行为,因为在集中式中引入了sql执行计划自适应功能,再某些特定场景下执行计划会变,gplan和cplan的跳变。) :

sql

PREPARE p2(varchar(20),varchar(20),varchar(20))

as (

select a.AAZ007, a.AAZ617, a.AAE140, a.AAE002,

a.AAA039, a.AAE719, a.AAA028, a.AAZ010, a.AAE734, a.AAE145, a.AAE760, a.AAE008, a.AAE009, a.AAE010, a.AAA036, a.AAA345, a.AAA346,

a.AAE100, a.AAA079, a.AAE696, a.AAE101, a.AAZ661, a.AIC161, a.AAZ654, a.AAZ903, a.AAZ901, a.AAB001, a.AIC162, a.AAZ902, a.AAF002, a.AAC157,

a.AAF200, a.AAF216, a.AAF217, a.AAE041, a.AAE042, a.AAE013, a.AAE007, a.AAE006, a.BAZ002, a.AAE011, a.AAE036, a.AAB034, a.BZE011, a.BZE036,

a.AAA027, a.BZE300, a.AAB359, a.AAB360, a.AAZ692, a.AAA431, a.AAF018, a.AAA508, a.AAE930, c.AAC059, c.AAE668, d.AAC107

from xxxx1 a,

xxx2 b,

xxx_tc c,

xx_kz d

where a.aaz010 = b.aac001

and a.baz002 = b.baz002

and c.aaz257 = b.aaz257

and c.aaz649 = b.baz002

and c.aaz661 = a.aaz661

and c.aaz661 = d.aaz661

and c.aaz257 = d.aaz257

AND B.BZ = '1'

AND B.JOB_NAME = $1

AND B.PCH = $2

AND B.BAZ002 = $3);再分析执行计划:

sql

explain analyse

execute p2('200001260412803','1222','200001260412803');对于几毫秒以内的sql,且数据很小的场景,一般情况,我们都是通过 enable_stream_operator=off 来优化。 因为走stream gather算子对于几毫秒的sql底噪很大。

索引不优: 通过 ind_ac82_111 索引扫描后,Filter aaz649, Rows Removed by Filter: 4141249 。Filter的数据量太大,说明索引定位数据不好。

sql

--Index Scan using ind_ac82_111 on ac82 c

Index Cond: (c.aaz257 = xxxxx)

Filter: (aaz649 = xxxxx::numeric)

Rows Removed by Filter: 4141249 Join顺序 、 Join方式的、Scan方式 等等 根据实际情况我们都可以通过hint 的方式来优化。一般在现场都是通过后台绑定sql_patch处理。

sql

--- 创建 patch

select * from dbe_sql_util.create_hint_sql_patch('patch名称',&unique_query_id, 'set (enable_stream_operator off)');

sql

--- 删除 patch

select * from dbe_sql_util.drop_sql_patch('patch名称');

sql

select *from global_sql_patch_func(); -- 全局各个节点上的SQL PATCH信息,用于返回global_sql_patch视图的结果。3.4 sql改写

sql

INSTR((SELECT idpath FROM taarea WHERE areacode = (SELECT area FROM taorg WHERE customno = $5)),areaid) > ?

---改写前

explain analyse

select

BCA001 as bca001, ADA120 as ada120, ADA113 as ada113, BCA008 as bca008, BCA002 as bca002, BCA003 as bca003, BCA004 as bca004,

BCA005 as bca005, BCA006 as bca006, BCA007 as bca007, AAE030 as aae030, AAE031 as aae031, AAE013 as aae013,

AAE100 as aae100, AAE011 as aae011, YAE116 as yae116, AAE017 as aae017, AAE017_DSC as aae017Dsc, AAB302 as

aab302, AAB302_DSC as aab302Dsc, AAE036 as aae036, AAE163 as aae163

from xxx01

where aae100 = '1'

and ADA120 = 'JY198'

and BCA008 = 'JY198_1'

AND (aae017 = '220000000000354256' OR (bca003 = '1'

AND aab302 != (SELECT area FROM taorg WHERE customno = '220xxxx300051034442') AND aab302 IN

(SELECT areaid FROM xxx WHERE

INSTR((SELECT idpath FROM xxx WHERE areacode = (SELECT area FROM xxx WHERE customno = '2201840300051034442')),areaid) > 0

)))

order by aab302 desc;

id | operation | A-time | A-rows | E-rows | Peak Memory | A-width | E-width | E-costs

----+----------------------------------------------------------------------------------------------+--------+--------+--------+-------------+---------+---------+--------------------

1 | -> Sort | 48.172 | 1 | 80 | 41KB | | 654 | 3254.664..3254.864

2 | -> Hash Right Join (3, 7) | 48.150 | 1 | 80 | 33KB | | 654 | 2806.615..3249.852

3 | -> HashAggregate | 47.822 | 4 | 19623 | 280KB | | 13 | 2803.657..2999.887

4 | -> Seq Scan on taarea | 47.626 | 4 | 19623 | 20KB | | 13 | 0.000..2750.035

5 | -> Index Scan using index_taarea_2 on taarea [4, InitPlan 3 (returns $2)] | | | 1 | | | 66 | 2.282..4.565

6 | -> Index Scan using index_taorg_customno on taorg [5, InitPlan 2 (returns $1)] | | | 1 | | | 13 | 0.000..2.282

7 | -> Hash | 0.077 | 1 | 1 | 307KB | | 654 | 2.945..2.945

8 | -> Seq Scan on ca01 | 0.071 | 1 | 1 | 43KB | | 654 | 0.000..2.945

9 | -> Index Scan using index_taorg_customno on taorg [8, InitPlan 1 (returns $0)] | | | 1 | | | 13 | 0.000..2.282

(9 rows)

Predicate Information (identified by plan id)

------------------------------------------------------------------------------------------------------------------------------------------------------

9 --Index Scan using index_taorg_customno on taorg

Index Cond: ((customno)::text = '2201840300051034442'::text)

2 --Hash Right Join (3, 7)

Hash Cond: ((jy13kwsource.taarea.areaid)::text = (ca01.aab302)::text)

Filter: (((ca01.aae017)::text = '220000000000354256'::text) OR (((ca01.bca003)::text = '1'::text) AND ((ca01.aab302)::text <> ($0)::text) AND (jy13kwsource.taarea.areaid IS NOT NULL)))

5 --Index Scan using index_taarea_2 on taarea

Index Cond: ((areacode)::text = ($1)::text)

6 --Index Scan using index_taorg_customno on taorg

Index Cond: ((customno)::text = '2201840300051034442'::text)

4 --Seq Scan on taarea

Filter: (instr(($2)::text, (areaid)::text) > 1)

Rows Removed by Filter: 58865

8 --Seq Scan on ca01

Filter: (((aae100)::text = '1'::text) AND ((ada120)::text = 'JY198'::text) AND ((bca008)::text = 'JY198_1'::text) AND (((aae017)::text = '220000000000354256'::text) OR (((bca003)::text = '1'

::text) AND ((aab302)::text <> ($0)::text))))

Rows Removed by Filter: 53

(15 rows)

可能会用到的一些命令:

sql

select reset_unique_sql('GLOBAL','ALL',0); ---- 清空 summary_statement 视图

select pg_terminate_session(pid,sessionid) from pgxc_stat_activity a where application_name='PostgreSQL JDBC Driver'; ---- 杀掉所有应用链接4 简单总结

分布式数据库中在两表join中如果非分布键 就是产生 broadcast或者redistribute算子,这两种很影响sql的执行效率。

转载:华为云社区