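Deploying Prometheus and Related Monitoring Components on Kubernetes (copy-and-paste deployment, no parameter changes required)

1. Document Overview

1.1 Purpose

This document describes the complete process of deploying a Prometheus monitoring system on a Kubernetes (K8s) cluster: the deployment steps for every component, verification methods, key configuration notes, routine operations, and troubleshooting of common problems.

1.2 Monitoring System Architecture

The system consists of the following core components, which together monitor the K8s cluster and the services running on it:

- Metrics Server: collects core resource metrics (CPU, memory usage, and so on) for cluster nodes and Pods, and supplies the data used by features such as the HPA (Horizontal Pod Autoscaler).
- kube-state-metrics: collects state metrics for K8s resource objects (Deployment, Pod, Service, ConfigMap, and others), reflecting how those resources are running.
- node-exporter: deployed as a DaemonSet on every node; collects node-level system metrics (CPU, memory, disk I/O, network traffic, and so on).
- blackbox-exporter: provides black-box monitoring through HTTP/HTTPS, TCP, and ICMP probes to check service availability.
- Prometheus Server: the core component; scrapes, stores, queries, and analyzes metrics, with scrape rules and targets defined in its configuration file.

1.3 Scope

This document is based on a Kubernetes v1.23.17 environment. The deployed system covers K8s cluster infrastructure (nodes and containers), K8s resource objects, and service availability (HTTP/TCP/Ingress).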

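2. Environment Preparation

2.1 Basic Requirements

- Kubernetes cluster: the cluster is healthy (all nodes are Ready).
- Namespace: the monitoring namespace must exist in advance (it hosts every monitoring component except Metrics Server). If it does not exist, create it with the command in section 2.3.
- Image registry: the public image registry is reachable, and every node can pull images from it.
- Storage class: in production, confirm that a storage class is available for Prometheus data persistence so that the PVC can bind (the PVC manifest in this document references a class named local). In a test environment, an emptyDir volume can be used instead.
- Network: all cluster nodes can reach each other, and no firewall blocks the ports the monitoring components need (for example 9100, 9115, 9090, and 10250).
- Permissions: the deploying user needs cluster administrator rights (cluster-admin) to create, modify, and query cluster-level resources.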
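The "all nodes are Ready" requirement above can be checked mechanically rather than by eye. The following is a minimal sketch, not part of the original procedure: `count_not_ready` is a hypothetical helper name, and it assumes the standard column layout of `kubectl get nodes --no-headers`, where the second column is STATUS.

```shell
# Counts nodes whose STATUS column is not exactly "Ready".
# Input: the output of `kubectl get nodes --no-headers` on stdin.
# Note: a cordoned node shows "Ready,SchedulingDisabled" and is counted
# as not ready by this strict comparison.
count_not_ready() {
  awk '$2 != "Ready" { n++ } END { print n + 0 }'
}

# Usage on a live cluster (not executed here):
#   kubectl get nodes --no-headers | count_not_ready
```

A result of 0 means every node reports Ready; any other value is the number of nodes to investigate before deploying.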

2.2 部署文件清单

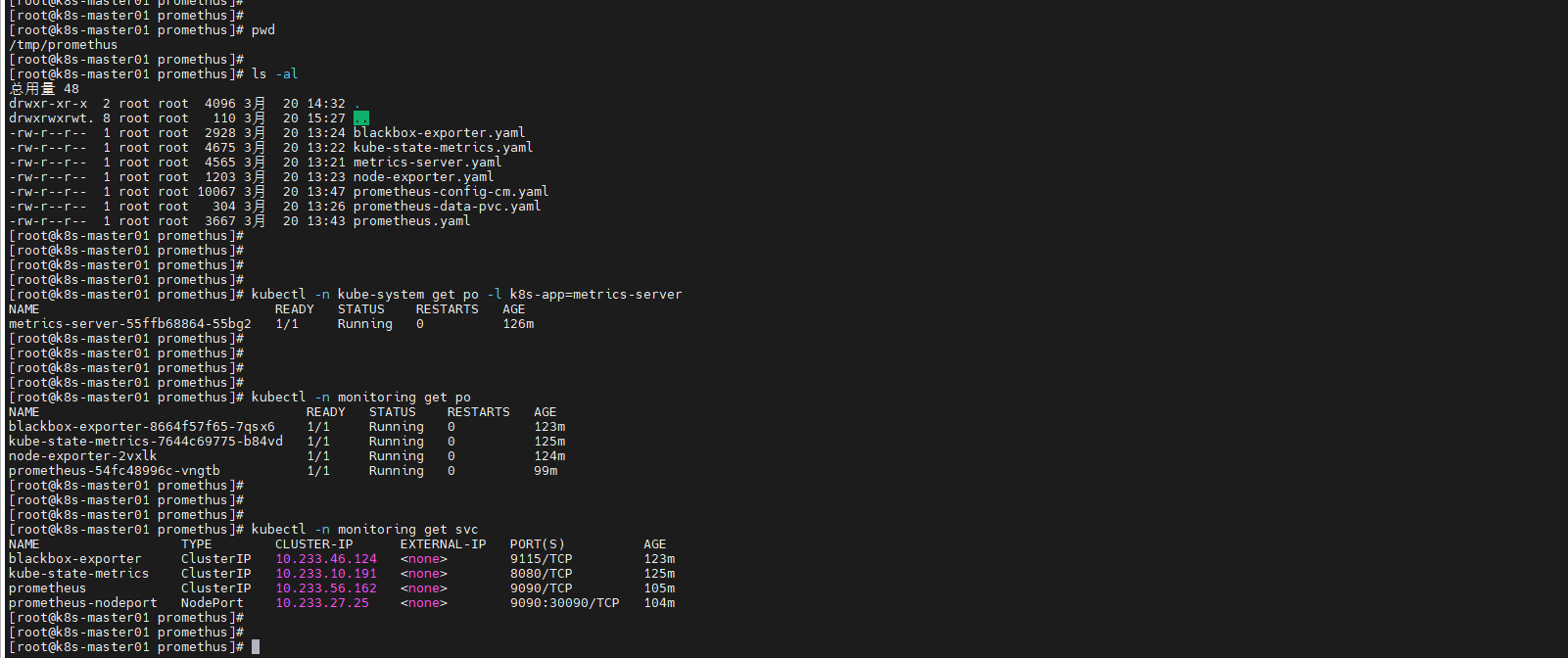

所有部署文件均位于master01节点的 /tmp/promethus 目录下(且后续所有操作都在该目录执行),文件清单及功能说明如下,部署时需确保所有文件齐全且未被修改,后续章节将完整嵌入各文件内容:

bash

# 后续所有未有明确说明的,都在master01节点的 /tmp/promethus 目录下执行:

mkdir -p /tmp/promethus

cd /tmp/promethus| 文件名 | 所属组件 | 部署命名空间 | 核心功能 |

|---|---|---|---|

| metrics-server.yaml | Metrics Server | kube-system | 采集Node、Pod的CPU、内存等核心资源指标 |

| kube-state-metrics.yaml | kube-state-metrics | monitoring | 采集K8s资源对象(Deployment、Pod等)的状态指标 |

| node-exporter.yaml | node-exporter | monitoring | 以DaemonSet部署,采集节点系统级指标 |

| blackbox-exporter.yaml | blackbox-exporter | monitoring | 黑盒监控,支持HTTP/TCP/ICMP/ES等探测 |

| prometheus-config-cm.yaml | Prometheus | monitoring | Prometheus核心配置(采集规则、目标、全局参数等) |

| prometheus-data-pvc.yaml | Prometheus | monitoring | Prometheus数据持久化PVC(20Gi)(临时测试可忽略) |

| prometheus.yaml | Prometheus | monitoring | Prometheus主服务、RBAC权限、Service配置 |
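Since the deployment depends on all seven files being present, a quick completeness check can catch a missing manifest before any `kubectl apply` runs. This is a sketch under assumptions: `MANIFESTS` and `missing_manifests` are hypothetical names introduced for illustration, and the file names are taken from the table above.

```shell
# File names from the deployment file list.
MANIFESTS="metrics-server.yaml kube-state-metrics.yaml node-exporter.yaml blackbox-exporter.yaml prometheus-config-cm.yaml prometheus-data-pvc.yaml prometheus.yaml"

# Prints the name of every expected manifest that is absent from the
# given directory (defaults to the current directory); prints nothing
# when all files are present.
missing_manifests() {
  dir=${1:-.}
  for f in $MANIFESTS; do
    [ -f "$dir/$f" ] || echo "$f"
  done
}

# Usage (not executed here):
#   missing_manifests /tmp/promethus
```

The check only confirms presence; it does not verify that a file's content is unmodified.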

2.3 Preliminary Steps

Complete the following steps before deploying:

- Check the K8s cluster state:

```bash
# Confirm that all nodes are Ready
kubectl get nodes
# Confirm that all Pods are Running
kubectl get pods -A
```

- Create the monitoring namespace (if it does not exist yet):

```bash
kubectl create namespace monitoring
```

- Check that the public image registry is reachable:

```bash
# The image should pull successfully; if it does not, check network connectivity.
docker pull registry.cn-shenzhen.aliyuncs.com/rmyyds/prometheus:v3.9.1
```

- Check the storage class (can be skipped for temporary tests):

```bash
# The storage class should be healthy and usable for PVC binding, as in the output below.
kubectl get sc
```

```bash
[root@k8s-master01 promethus]# kubectl get sc
NAME    PROVISIONER        RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
local   openebs.io/local   Delete          Immediate           true                   101d
```

3. Full Deployment Procedure (including all YAML files)

Deploy the components in the order given below, and verify each component after deploying it to make sure it is running before moving on.
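The recommended order can also be captured in a small script so that a failed step stops the run. This is an illustrative sketch rather than the document's own tooling: `deploy_order`, `deploy_all`, and the `KUBECTL` override variable are hypothetical names, and the optional PVC manifest is left out, matching the test setup used in this document.

```shell
# Prints the manifests in the order this chapter deploys them
# (the optional prometheus-data-pvc.yaml is omitted).
deploy_order() {
  printf '%s\n' \
    metrics-server.yaml \
    kube-state-metrics.yaml \
    node-exporter.yaml \
    blackbox-exporter.yaml \
    prometheus-config-cm.yaml \
    prometheus.yaml
}

# Applies each manifest in turn and stops at the first failure.
# KUBECTL is an illustrative override hook; it defaults to kubectl.
deploy_all() {
  for f in $(deploy_order); do
    echo "applying $f"
    ${KUBECTL:-kubectl} apply -f "$f" || return 1
  done
}

# Usage from /tmp/promethus (not executed here):
#   deploy_all
```

The script does not replace the per-component verification steps; it only enforces the ordering and fails fast when an apply is rejected.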

3.1 Deploy Metrics Server

3.1.1 Manifest (metrics-server.yaml)

```yaml
# metrics-server.yaml
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system # namespace
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - nodes/metrics
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system # namespace
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system # namespace
spec:
  ports:
  - appProtocol: https
    name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system # namespace
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=10250
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls
        image: registry.cn-shenzhen.aliyuncs.com/rmyyds/metrics-server:v0.8.1 # public registry image
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /livez
            port: https
            scheme: HTTPS
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 10250
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /readyz
            port: https
            scheme: HTTPS
          initialDelaySeconds: 20
          periodSeconds: 10
        resources:
          requests:
            cpu: 100m
            memory: 200Mi
          limits:
            cpu: 250m
            memory: 512Mi
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            drop:
            - ALL
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
          seccompProfile:
            type: RuntimeDefault
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system # namespace
  version: v1beta1
  versionPriority: 100
```

3.1.2 Deploy command

```bash
kubectl apply -f metrics-server.yaml
```

3.1.3 Verification

```bash
# Check the Pod status (it should be Running)
kubectl get pods -n kube-system -l k8s-app=metrics-server
# Verify metric collection (node resource usage should be printed)
kubectl top nodes
```

```bash
[root@k8s-master01 promethus]# kubectl -n kube-system get po -l k8s-app=metrics-server
NAME                              READY   STATUS    RESTARTS   AGE
metrics-server-55ffb68864-55bg2   1/1     Running   0          37m
[root@k8s-master01 promethus]# kubectl top no
NAME           CPU(cores)   CPU(%)   MEMORY(bytes)   MEMORY(%)
k8s-master01   158m         4%       1963Mi          27%
```

3.2 Deploy kube-state-metrics

3.2.1 Manifest (kube-state-metrics.yaml)

```yaml
# kube-state-metrics.yaml
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: kube-state-metrics
  namespace: monitoring # namespace
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    app.kubernetes.io/component: exporter
    app.kubernetes.io/name: kube-state-metrics
  name: kube-state-metrics # ClusterRole is cluster-scoped, so no namespace is set
rules:
- apiGroups:
  - ""
  resources:
  - configmaps
  - secrets
  - nodes
  - pods
  - services
  - serviceaccounts
  - resourcequotas
  - replicationcontrollers
  - limitranges
  - persistentvolumeclaims
  - persistentvolumes
  - namespaces
  - endpoints
  verbs:
  - list
  - watch
- apiGroups:
  - apps
  resources:
  - statefulsets
  - daemonsets
  - deployments
  - replicasets
  verbs:
  - list
  - watch
- apiGroups:
  - batch
  resources:
  - cronjobs
  - jobs
  verbs:
  - list
  - watch
- apiGroups:
  - autoscaling
  resources:
  - horizontalpodautoscalers
  verbs:
  - list
  - watch
- apiGroups:
  - authentication.k8s.io
  resources:
  - tokenreviews
  verbs:
  - create
- apiGroups:
  - authorization.k8s.io
  resources:
  - subjectaccessreviews
  verbs:
  - create
- apiGroups:
  - policy
  resources:
  - poddisruptionbudgets
  verbs:
  - list
  - watch
- apiGroups:
  - certificates.k8s.io
  resources:
  - certificatesigningrequests
  verbs:
  - list
  - watch
- apiGroups:
  - discovery.k8s.io
  resources:
  - endpointslices
  verbs:
  - list
  - watch
- apiGroups:
  - storage.k8s.io
  resources:
  - storageclasses
  - volumeattachments
  verbs:
  - list
  - watch
- apiGroups:
  - admissionregistration.k8s.io
  resources:
  - mutatingwebhookconfigurations
  - validatingwebhookconfigurations
  verbs:
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - networkpolicies
  - ingressclasses
  - ingresses
  verbs:
  - list
  - watch
- apiGroups:
  - coordination.k8s.io
  resources:
  - leases
  verbs:
  - list
  - watch
- apiGroups:
  - rbac.authorization.k8s.io
  resources:
  - clusterrolebindings
  - clusterroles
  - rolebindings
  - roles
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    app.kubernetes.io/component: exporter
    app.kubernetes.io/name: kube-state-metrics
  name: kube-state-metrics
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kube-state-metrics
subjects:
- kind: ServiceAccount
  name: kube-state-metrics
  namespace: monitoring
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: kube-state-metrics
  name: kube-state-metrics
  namespace: monitoring # namespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kube-state-metrics
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: kube-state-metrics
    spec:
      containers:
      - image: registry.cn-shenzhen.aliyuncs.com/rmyyds/kube-state-metrics:v2.18.0 # public registry image
        imagePullPolicy: IfNotPresent
        name: kube-state-metrics
        ports:
        - containerPort: 8080
          protocol: TCP
        resources:
          limits:
            cpu: 500m
            memory: 514Mi
          requests:
            cpu: 250m
            memory: 250Mi
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      serviceAccount: kube-state-metrics
      serviceAccountName: kube-state-metrics
      terminationGracePeriodSeconds: 30
      tolerations:
      - effect: NoSchedule
        key: node-role.kubernetes.io/master
        operator: Exists
---
apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/http-probe: "true"
    prometheus.io/http-probe-path: /healthz
    prometheus.io/http-probe-port: "8080"
    prometheus.io/scrape: "true"
  labels:
    app: kube-state-metrics
  name: kube-state-metrics
  namespace: monitoring # namespace
spec:
  ports:
  - name: kube-state-metrics
    port: 8080
    protocol: TCP
    targetPort: 8080
  selector:
    app: kube-state-metrics
  type: ClusterIP
```

3.2.2 Deploy command

```bash
kubectl apply -f kube-state-metrics.yaml
```

3.2.3 Verification

```bash
# Check the Pod status (it should be Running)
kubectl get pods -n monitoring -l app=kube-state-metrics
# Check the Service
kubectl get svc -n monitoring kube-state-metrics
```

3.3 Deploy node-exporter

3.3.1 Manifest (node-exporter.yaml)

```yaml
# node-exporter.yaml
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  labels:
    app: node-exporter
  name: node-exporter
  namespace: monitoring # namespace
spec:
  selector:
    matchLabels:
      app: node-exporter
  template:
    metadata:
      labels:
        app: node-exporter
      name: node-exporter
    spec:
      containers:
      - image: registry.cn-shenzhen.aliyuncs.com/rmyyds/node-exporter:v1.10.2 # public registry image
        imagePullPolicy: IfNotPresent
        name: node-exporter
        ports:
        - containerPort: 9100
          hostPort: 9100
          protocol: TCP
        resources:
          limits:
            cpu: 100m
            memory: 200Mi
          requests:
            cpu: 50m
            memory: 50Mi
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
      dnsPolicy: ClusterFirst
      hostNetwork: true
      hostPID: true
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      tolerations:
      - operator: Exists
  updateStrategy:
    rollingUpdate:
      maxSurge: 0
      maxUnavailable: 1
    type: RollingUpdate
```

3.3.2 Deploy command

```bash
kubectl apply -f node-exporter.yaml
```

3.3.3 Verification

```bash
# Check the DaemonSet (every node should have a Pod)
kubectl get ds -n monitoring node-exporter
# Check the Pods (one per node, all Running)
kubectl get pods -n monitoring -l app=node-exporter -o wide
```

3.4 Deploy blackbox-exporter

3.4.1 Manifest (blackbox-exporter.yaml)

```yaml
# blackbox-exporter.yaml
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: blackbox-exporter
  namespace: monitoring # namespace
data:
  blackbox.yml: |-
    modules:
      http_2xx:
        prober: http
        timeout: 10s
        http:
          # blackbox-exporter compares against the response protocol string, which is "HTTP/2.0" for HTTP/2
          valid_http_versions: ["HTTP/1.1", "HTTP/2.0"]
          valid_status_codes: []
          method: GET
          preferred_ip_protocol: "ip4"
      http_post_2xx:
        prober: http
        timeout: 10s
        http:
          valid_http_versions: ["HTTP/1.1", "HTTP/2.0"]
          method: POST
          preferred_ip_protocol: "ip4"
      tcp_connect:
        prober: tcp
        timeout: 10s
      icmp:
        prober: icmp
        timeout: 10s
        icmp:
          preferred_ip_protocol: "ip4"
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: blackbox-exporter
  namespace: monitoring # namespace
spec:
  progressDeadlineSeconds: 600
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app: blackbox-exporter
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: blackbox-exporter
    spec:
      containers:
      - args:
        - --config.file=/etc/blackbox_exporter/blackbox.yml
        - --web.listen-address=:9115
        image: registry.cn-shenzhen.aliyuncs.com/rmyyds/blackbox-exporter:v0.18.0 # public registry image
        imagePullPolicy: IfNotPresent
        name: blackbox-exporter
        ports:
        - containerPort: 9115
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          initialDelaySeconds: 15
          periodSeconds: 10
          successThreshold: 1
          tcpSocket:
            port: 9115
          timeoutSeconds: 5
        resources:
          limits:
            cpu: 200m
            memory: 500Mi
          requests:
            cpu: 100m
            memory: 50Mi
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        volumeMounts:
        - mountPath: /etc/blackbox_exporter
          name: config
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      terminationGracePeriodSeconds: 30
      tolerations:
      - effect: NoSchedule
        operator: Exists
      volumes:
      - configMap:
          defaultMode: 420
          name: blackbox-exporter
        name: config
---
apiVersion: v1
kind: Service
metadata:
  name: blackbox-exporter
  namespace: monitoring # namespace
spec:
  ports:
  - name: http
    port: 9115
    protocol: TCP
    targetPort: 9115
  selector:
    app: blackbox-exporter
```

3.4.2 Deploy command

```bash
kubectl apply -f blackbox-exporter.yaml
```

3.4.3 Verification

```bash
# Check the Pod status (it should be Running)
kubectl get pods -n monitoring -l app=blackbox-exporter
# Check the Service
kubectl get svc -n monitoring blackbox-exporter
```

3.5 Create the PVC for Prometheus Data Persistence (skip in test environments that do not use a PVC)

3.5.1 Manifest (prometheus-data-pvc.yaml)

```yaml
# prometheus-data-pvc.yaml
---
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: prometheus-data
  namespace: monitoring # namespace
spec:
  accessModes:
  - ReadWriteOnce
  storageClassName: local # storage class name
  resources:
    requests:
      storage: 20Gi # storage size
```

3.5.2 Deploy command

```bash
# kubectl apply -f prometheus-data-pvc.yaml
# Note: this test environment does not actually create the PVC; the Prometheus
# manifest later in this document mounts an emptyDir volume instead.
```

3.5.3 Verification

```bash
# Check the PVC status (STATUS should be Bound)
kubectl get pvc -n monitoring prometheus-data
```

```bash
[root@k8s-master01 ~]# kubectl -n monitoring get pvc
NAME              STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   VOLUMEATTRIBUTESCLASS   AGE
prometheus-data   Bound    pvc-b896a5ae-eb14-41fe-a45a-c0a34c4ec043   20Gi       RWO            local          <unset>                 56s
```

3.6 Create the Prometheus Configuration ConfigMap

3.6.1 Manifest (prometheus-config-cm.yaml)

```yaml
# prometheus-config-cm.yaml
# Replace 192.168.133.140 with the master01 IP of your cluster so the environment is easy to identify
---
apiVersion: v1
data:
  prometheus.yml: |
    global:
      scrape_interval: 10s
      scrape_timeout: 10s
      evaluation_interval: 10s
      external_labels:
        env: 测试环境
    rule_files:
    - "/etc/prometheus-rules/*.rules"
    scrape_configs:
    - job_name: 'node-exporter'
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      metrics_path: /metrics
      scheme: http
      enable_http2: true
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - source_labels: [__address__]
        separator: ;
        regex: (.+):(\d+)
        target_label: __address__
        replacement: $1:9100
        action: replace
      - source_labels: [__address__]
        regex: (.+):(\d+)
        target_label: instance
        replacement: $1
        action: replace
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - source_labels: [__meta_kubernetes_node_name]
        action: replace
        target_label: kubernetes_node_name
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
    - job_name: 'kube-apiservers'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        regex: default;kubernetes;https
        action: keep
    # kube-controller-manager container (Pod discovery)
    - job_name: 'kube-controller-manager'
      scheme: https # key point: port 10257 serves HTTPS
      tls_config:
        insecure_skip_verify: true # skip verification of the self-signed certificate
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: pod # Pod discovery
        namespaces:
          names: [kube-system]
      relabel_configs:
      - action: keep
        source_labels: [__meta_kubernetes_pod_label_component]
        regex: kube-controller-manager
      - action: replace
        source_labels: [__meta_kubernetes_pod_host_ip]
        target_label: __address__
        replacement: ${1}:10257
      - target_label: __metrics_path__
        replacement: /metrics
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_pod_name]
        target_label: pod_name
    # kube-scheduler container (Pod discovery)
    - job_name: 'kube-scheduler'
      scheme: https # key point: port 10259 serves HTTPS
      tls_config:
        insecure_skip_verify: true # skip verification of the self-signed certificate
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: pod # Pod discovery
        namespaces:
          names: [kube-system]
      relabel_configs:
      - action: keep
        source_labels: [__meta_kubernetes_pod_label_component]
        regex: kube-scheduler
      - action: replace
        source_labels: [__meta_kubernetes_pod_host_ip]
        target_label: __address__
        replacement: ${1}:10259
      - target_label: __metrics_path__
        replacement: /metrics
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_pod_name]
        target_label: pod_name
    # kube-proxy container (Node discovery). The kube-proxy configuration must bind metrics to
    # metricsBindAddress: 0.0.0.0:10249; by default it binds to the loopback address 127.0.0.1.
    - job_name: 'kube-proxy'
      scheme: http # port 10249 serves HTTP
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node # Node discovery
      relabel_configs:
      - action: replace
        source_labels: [__address__]
        regex: (.+):\d+
        replacement: ${1}:10249
        target_label: __address__
      - action: keep
        source_labels: [__meta_kubernetes_node_name]
        regex: .+
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - source_labels: [__meta_kubernetes_node_name]
        target_label: node_name
    - job_name: 'kubelet'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - action: replace
        target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics
    - job_name: 'kubernetes-cadvisor'
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: node
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - action: replace
        target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
      metric_relabel_configs:
      - source_labels: [id]
        action: replace
        regex: '^/machine\.slice/machine-rkt\\x2d([^\\]+)\\.+/([^/]+)\.service$'
        target_label: rkt_container_name
        replacement: '${2}-${1}'
      - source_labels: [id]
        action: replace
        regex: '^/system\.slice/(.+)\.service$'
        target_label: systemd_service_name
        replacement: '${1}'
    - job_name: 'kube-state-metrics'
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape, __meta_kubernetes_endpoint_port_name]
        regex: true;kube-state-metrics
        action: keep
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: (.+)(?::\d+);(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
    - job_name: 'kubernetes-service-http-probe'
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape, __meta_kubernetes_service_annotation_prometheus_io_http_probe]
        regex: true;true
        action: keep
      - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_namespace, __meta_kubernetes_service_annotation_prometheus_io_http_probe_port, __meta_kubernetes_service_annotation_prometheus_io_http_probe_path]
        action: replace
        target_label: __param_target
        regex: (.+);(.+);(.+);(.+)
        replacement: $1.$2:$3$4
      - action: replace
        target_label: __address__
        replacement: blackbox-exporter.monitoring.svc:9115
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_annotation_prometheus_io_app_info_(.+)
    - job_name: 'kubernetes-service-tcp-probe'
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [tcp_connect]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape, __meta_kubernetes_service_annotation_prometheus_io_tcp_probe]
        regex: true;true
        action: keep
      - source_labels: [__meta_kubernetes_service_name, __meta_kubernetes_namespace, __meta_kubernetes_service_annotation_prometheus_io_tcp_probe_port]
        regex: (.+);(.+);(.+)
        target_label: __param_target
        replacement: $1.$2:$3
        action: replace
      - action: replace
        target_label: __address__
        replacement: blackbox-exporter.monitoring.svc:9115
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_annotation_prometheus_io_app_info_(.+)
      - source_labels: []
        replacement: "192.168.133.140"
        action: replace
        target_label: k8s_vip
      - source_labels: []
        replacement: "prd_k8s"
        target_label: cluster_name
        action: replace
    - job_name: kubelet-probes
      kubernetes_sd_configs:
      - role: node
      metrics_path: /metrics
      scheme: https
      enable_compression: true
      authorization:
        type: Bearer
        credentials_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        insecure_skip_verify: false
      follow_redirects: true
      enable_http2: true
      relabel_configs:
      - separator: ;
        regex: __meta_kubernetes_node_label_(.+)
        replacement: $1
        action: labelmap
      - separator: ;
        regex: (.*)
        target_label: __address__
        replacement: kubernetes.default.svc:443
        action: replace
      - source_labels: [__meta_kubernetes_node_name]
        separator: ;
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/probes
        action: replace
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitoring
```

3.6.2 Deploy command

```bash
kubectl apply -f prometheus-config-cm.yaml
```

3.6.3 Verification

```bash
# The ConfigMap being listed means it was created successfully
kubectl get cm -n monitoring prometheus-config
```

3.7 Create the Prometheus Application (Deployment)

3.7.1 Manifest (prometheus.yaml)

```yaml
# prometheus.yaml
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring # namespace
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    app.kubernetes.io/component: server
    app.kubernetes.io/instance: prometheus
    app.kubernetes.io/name: prometheus
    app.kubernetes.io/part-of: prometheus
  name: prometheus
rules:
- apiGroups:
  - "*"
  resources:
  - nodes
  - nodes/proxy
  - nodes/metrics
  - services
  - endpoints
  - pods
  - ingresses
  - configmaps
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - networking.k8s.io
  resources:
  - ingresses/status
  - ingresses
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - discovery.k8s.io
  resources:
  - endpointslices
  verbs:
  - get
  - list
  - watch
- nonResourceURLs:
  - /metrics
  verbs:
  - get
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: monitoring # namespace
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: monitoring # namespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
      name: prometheus
    spec:
      containers:
      - args:
        - --storage.tsdb.path=/prometheus
        - --storage.tsdb.retention.time=7d # data retention time
        - --config.file=/etc/prometheus/prometheus.yml
        - --web.enable-lifecycle
        image: registry.cn-shenzhen.aliyuncs.com/rmyyds/prometheus:v3.9.1 # public registry image
        imagePullPolicy: IfNotPresent
        name: prometheus
        ports:
        - containerPort: 9090
          protocol: TCP
        resources:
          limits:
            cpu: 500m
            memory: 1024Mi
          requests:
            cpu: 200m
            memory: 512Mi
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        volumeMounts:
        - mountPath: /etc/prometheus
          name: config
        - mountPath: /etc/prometheus-rules
          name: rules
        - mountPath: /prometheus
          name: prometheus
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext:
        fsGroup: 65534
      serviceAccount: prometheus
      serviceAccountName: prometheus
      terminationGracePeriodSeconds: 30
      tolerations:
      - effect: NoSchedule
        operator: Exists
      volumes:
      - configMap:
          defaultMode: 420
          name: prometheus-config
        name: config
      - name: rules
        emptyDir: {}
      - name: prometheus
        emptyDir: {}
        # persistentVolumeClaim:
        #   claimName: prometheus-data
        # In production, replace emptyDir with the PVC mount above so the data persists
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: monitoring # namespace
spec:
  ports:
  - name: prometheus
    port: 9090
    protocol: TCP
    targetPort: 9090
  selector:
    app: prometheus
  type: ClusterIP
---
# Optional NodePort Service; change 30090 to the port you actually want
#apiVersion: v1
#kind: Service
#metadata:
#  labels:
#    app: prometheus
#  name: prometheus-nodeport
#  namespace: monitoring # namespace
#spec:
#  ports:
#  - name: prometheus
#    port: 9090
#    protocol: TCP
#    targetPort: 9090
#    nodePort: 30090 # NodePort port
#  selector:
#    app: prometheus
#  type: NodePort
```

3.7.2 Deploy command

```bash
kubectl apply -f prometheus.yaml
```

3.7.3 Verification

```bash
# Check the Pod status (Running, with READY 1/1)
kubectl get pods -n monitoring -l app=prometheus
# Check the Service (TYPE should be ClusterIP and PORT(S) 9090/TCP)
kubectl get svc -n monitoring prometheus
# Optional: if the NodePort Service is enabled, check it as well
kubectl -n monitoring get svc prometheus-nodeport
```

```bash
[root@k8s-master01 promethus]# kubectl -n monitoring get po
NAME                                  READY   STATUS    RESTARTS   AGE
blackbox-exporter-8664f57f65-7qsx6    1/1     Running   0          28m
kube-state-metrics-7644c69775-b84vd   1/1     Running   0          30m
node-exporter-2vxlk                   1/1     Running   0          28m
prometheus-54fc48996c-vngtb           1/1     Running   0          4m56s
[root@k8s-master01 promethus]# kubectl -n monitoring get svc
NAME                  TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)          AGE
blackbox-exporter     ClusterIP   10.233.46.124   <none>        9115/TCP         28m
kube-state-metrics    ClusterIP   10.233.10.191   <none>        8080/TCP         30m
prometheus            ClusterIP   10.233.56.162   <none>        9090/TCP         9m59s
prometheus-nodeport   NodePort    10.233.27.25    <none>        9090:30090/TCP   9m12s
```

4. Verifying the Monitoring System as a Whole

所有组件部署完成后,需进行整体验证,确保Prometheus能正常采集所有目标指标,确认监控系统可正常运行。

4.1 访问Prometheus Web UI

根据Service类型选择对应访问方式,验证Web UI可正常打开且无报错:

-

ClusterIP类型(默认):需通过集群内节点或端口转发访问

# 在master01节点执行端口转发,将Prometheus 9090端口转发到本地9090端口 kubectl port-forward svc/prometheus 9090:9090 -n monitoring# 转发成功后,在本地浏览器访问:http://localhost:9090 -

NodePort类型(若启用):直接通过集群任意节点IP+NodePort端口访问

# 访问地址格式:http://节点IP:30090(30090为配置的NodePort端口,需替换为实际端口)

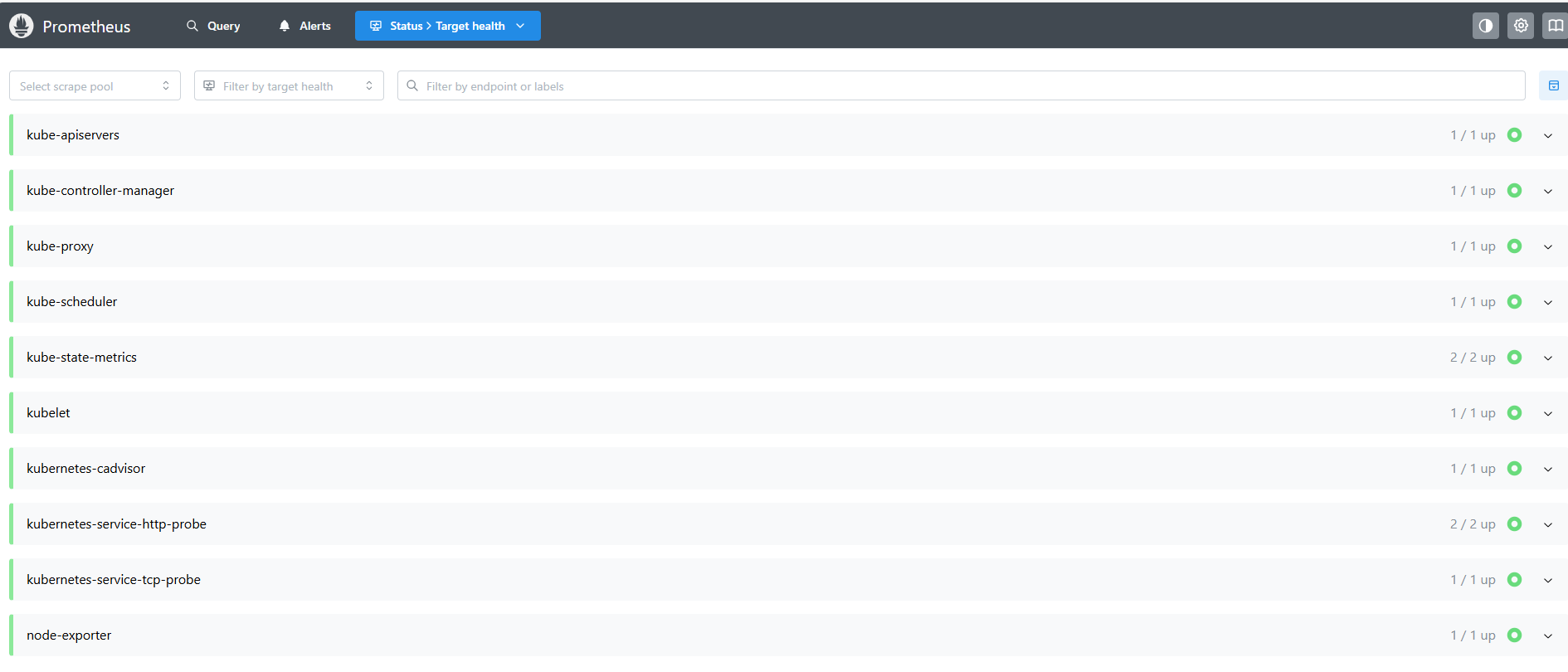

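4.2 Verify Metric Collection

In the Prometheus Web UI, open "Status → Targets" and check that every scrape target has the State "UP" and none is "DOWN". If a target is DOWN, investigate using the logs of the corresponding component.

```bash
# If some targets are DOWN, the Prometheus Pod log can help locate the problem
kubectl logs -n monitoring -l app=prometheus -f
```

Checklist of key scrape targets:

- node-exporter: node-level metrics are collected from every node.
- kube-state-metrics: K8s resource object metrics are collected.
- kube-apiservers, kube-controller-manager, and similar jobs: K8s core component metrics are collected.
- blackbox-exporter: HTTP/TCP probe targets (if configured) are collected.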
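The same check can be scripted against the Prometheus HTTP API, whose `/api/v1/targets` endpoint reports a `health` field for each active target. The sketch below makes two assumptions: `count_down_targets` is a hypothetical helper name, and the response is compact JSON without spaces around the colon, so a plain `grep` is enough and no JSON parser is needed.

```shell
# Counts targets reported as "down" in a /api/v1/targets response
# read from stdin. Relies on compact JSON ("health":"down").
count_down_targets() {
  grep -o '"health":"down"' | wc -l | tr -d ' '
}

# Usage with the port-forward from section 4.1 running (not executed here):
#   curl -s http://localhost:9090/api/v1/targets | count_down_targets
```

A result of 0 matches the "all targets UP" condition; for a non-zero result, the `lastError` field of the same response usually names the cause.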

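4.3 Verify Queries

On the "Graph" page of the Prometheus Web UI, enter some common queries and confirm that they return data:

```promql
# 1. CPU utilization of each node (%): 100 minus the average idle share across cores
100 - avg by (instance) (rate(node_cpu_seconds_total{mode="idle"}[5m])) * 100

# 2. Memory usage of each Pod (MiB); container!="" skips the Pod-level cgroup series to avoid double counting
sum(container_memory_usage_bytes{namespace!="kube-system", container!=""}) by (pod) / 1024 / 1024

# 3. Running state of the kube-state-metrics component
up{job="kube-state-metrics"}
```

5. Routine Operations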

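5.1 Data Backup and Cleanup

When Prometheus data is persisted through the PVC (section 3.5), back it up regularly to avoid data loss; with the emptyDir volume used in the test setup, the data is lost whenever the Pod is recreated. The retention time can also be adjusted as needed.

- Data backup:

```bash
# Find the Prometheus Pod, which mounts the data volume
POD_NAME=$(kubectl get pods -n monitoring -l app=prometheus -o jsonpath="{.items[0].metadata.name}")
# Copy the data to a directory on master01 (example: /backup/prometheus)
mkdir -p /backup/prometheus
kubectl cp -n monitoring "$POD_NAME":/prometheus "/backup/prometheus/$(date +%Y%m%d)"
```

- Retention time: change the `--storage.tsdb.retention.time` argument in prometheus.yaml (set to 7d in this document; units such as d, h, and m are supported, so 30d keeps 30 days of data), then redeploy Prometheus:

```bash
kubectl apply -f prometheus.yaml
```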
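When changing the retention time it helps to estimate whether the volume (20Gi in this document's PVC) is large enough. The Prometheus storage documentation gives the rule of thumb: needed disk space = retention time in seconds × ingested samples per second × bytes per sample, with roughly 1 to 2 bytes per sample on average. The sketch below applies that formula; `estimate_tsdb_bytes` is a hypothetical helper name, and the sample rate in the usage example is an illustrative figure, not a measurement from this cluster.

```shell
# estimate_tsdb_bytes RETENTION_DAYS SAMPLES_PER_SECOND [BYTES_PER_SAMPLE]
# Prints the estimated TSDB size in bytes; bytes per sample defaults to 2,
# the upper end of the 1-2 byte range.
estimate_tsdb_bytes() {
  days=$1
  rate=$2
  bps=${3:-2}
  echo $(( days * 86400 * rate * bps ))
}

# Example: 7 days of retention at an assumed 10000 samples per second.
estimate_tsdb_bytes 7 10000
```

With these inputs the estimate is 12096000000 bytes, about 11.3 GiB. The actual ingestion rate of a running server can be read with the query `rate(prometheus_tsdb_head_samples_appended_total[5m])`.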

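5.2 Restarting and Upgrading Components

5.2.1 Restarting a component

When a component misbehaves (for example a hung Pod or failed metric collection), restarting its Pods often resolves the problem:

```bash
# Restart the Prometheus Pod
kubectl delete pods -n monitoring -l app=prometheus
# Restart node-exporter (a DaemonSet, so the Pods on all nodes restart)
kubectl rollout restart daemonset node-exporter -n monitoring
# Restart other components (for example kube-state-metrics)
kubectl rollout restart deployment kube-state-metrics -n monitoring
```

5.2.2 Upgrading a component

To upgrade a component, change the image version in its YAML file and redeploy (Prometheus is used as the example):

```bash
# 1. Edit prometheus.yaml and set the image field to the target version (for example v3.9.1)
vi prometheus.yaml
# 2. Redeploy
kubectl apply -f prometheus.yaml
```

Note: before upgrading, back up the component's configuration files and data so that a failed upgrade does not cause data loss or a broken configuration.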

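5.3 Changing the Configuration

To change Prometheus scrape rules, global parameters, or other settings, edit prometheus-config-cm.yaml, reapply it, and hot-reload Prometheus:

```bash
# 1. Edit the configuration file
vi prometheus-config-cm.yaml
# 2. Reapply the configuration
kubectl apply -f prometheus-config-cm.yaml
# 3. Hot-reload the Prometheus configuration (no Pod restart needed).
#    The kubelet can take up to about a minute to sync the updated ConfigMap into the Pod,
#    so wait briefly before reloading.
#    The upstream Prometheus image is BusyBox-based and ships wget rather than curl.
POD_NAME=$(kubectl get pods -n monitoring -l app=prometheus -o jsonpath="{.items[0].metadata.name}")
kubectl exec -n monitoring "$POD_NAME" -- wget -qO- --post-data='' http://localhost:9090/-/reload
```

6. Troubleshooting Common Problems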

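6.1 Pod Deployment Failure (Pending/Error state)

- Pending, cause 1: insufficient resources (not enough CPU or memory). Check node resource usage and adjust the Pod's resources.requests/limits.

```bash
# Check node resource usage
kubectl top nodes
# Edit the corresponding YAML file, adjust the resource settings, and redeploy
vi xxx.yaml
kubectl apply -f xxx.yaml
```

- Pending, cause 2: the PVC failed to bind. Check that the storage class is healthy and that enough storage is available.

```bash
# Check the storage class
kubectl get sc
# Check the PVC; if it is Pending, investigate the storage class
kubectl get pvc -n monitoring
```

- Error: read the Pod log to find the specific cause.

```bash
kubectl logs -n monitoring <pod-name> -f
```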

6.2 指标采集失败(Targets状态为DOWN)

-

检查组件Pod是否正常运行:

kubectl get pods -n monitoring -l app=<组件名称> # 组件名称如node-exporter、kube-state-metrics -

检查网络端口是否通畅(组件所需端口未被防火墙拦截):

`# 示例:检查node-exporter的9100端口是否能访问

telnet <节点IP> 9100

-

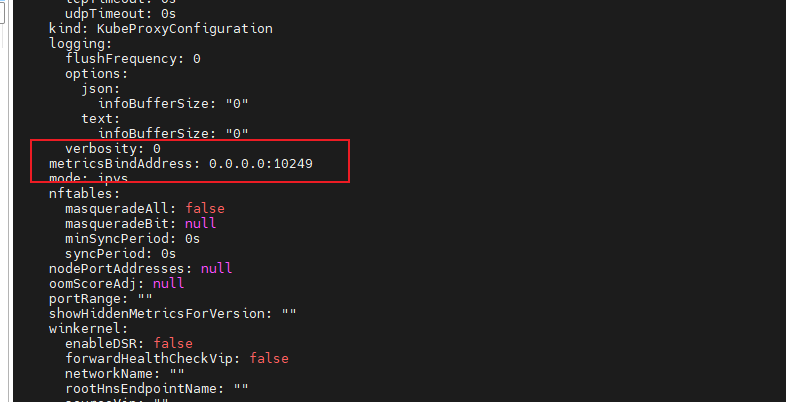

容器守护进程部署的kube-proxy Targets状态为DOWN :

a. 登录 对应 节点查看 kube-proxy的 10249 是否已打开,以及该端口使用的是http协议。

telnet <节点IP> 10249 # 要是端口不通,需要确认是否绑在本地回环地址

telnet 127.0.0.1 10249 # 使用回环地址就通,使用节点IP不通

b. 修改 kube-proxy 配置文件configMap,metricsBindAddress: 0.0.0.0:10249 # 核心修改:开启 10249 端口

kubectl -n kube-system edit cm kube-proxy

c. 修改完成后,重启 kube-proxy守护进程即可

kubectl -n kube-system rollout restart ds kube-proxy

若无法访问,关闭节点防火墙或开放对应端口

systemctl stop firewalld # 临时关闭

systemctl disable firewalld # 永久关闭`

- 检查Prometheus配置是否正确:确认采集目标的地址、端口配置无误,重新应用配置并热加载。
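For the kube-proxy case above, the bind address can also be read from the ConfigMap instead of probing with telnet. This is a minimal sketch, assuming the ConfigMap text contains a `metricsBindAddress:` line as in a typical kubeadm-style kube-proxy configuration; `metrics_bind_is_loopback` is a hypothetical helper name. An empty or missing value is treated as loopback, since the default binding is 127.0.0.1 as noted in the scrape configuration comments.

```shell
# Exit status 0 when the kube-proxy configuration on stdin leaves the
# metrics endpoint on the loopback address (empty, missing, 127.0.0.1
# or localhost); exit status 1 when it is bound elsewhere, e.g. 0.0.0.0.
metrics_bind_is_loopback() {
  addr=$(sed -n 's/^[[:space:]]*metricsBindAddress:[[:space:]]*//p' \
    | head -n 1 | tr -d '"'\''')
  case "$addr" in
    ""|127.0.0.1*|localhost*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage on a live cluster (not executed here):
#   kubectl -n kube-system get cm kube-proxy -o yaml | metrics_bind_is_loopback \
#     && echo "kube-proxy metrics are loopback-only; apply steps b and c"
```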

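6.3 Prometheus Web UI Is Unreachable

- Check that the Prometheus Pod and Service are running:

```bash
kubectl get pods -n monitoring -l app=prometheus
kubectl get svc -n monitoring prometheus
```

- For a ClusterIP Service, confirm that port forwarding is running; for a NodePort Service, confirm that the port is not already in use and that the node IP is reachable.
- Read the Prometheus log and look for startup errors:

```bash
kubectl logs -n monitoring -l app=prometheus -f
```

7. Summary

This document covers the complete deployment of a Prometheus monitoring system on a Kubernetes cluster: environment preparation, component deployment, overall verification, routine operations, and troubleshooting. Following the steps gives monitoring coverage of K8s cluster infrastructure, resource objects, and service availability.

From here, the monitoring scope can be extended to match business needs (for example by adding custom service monitoring), and alerting rules can be configured together with Alertmanager to round out the monitoring setup and help keep the cluster stable.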