I. Build the Harbor image registry

1. Install Docker

[root@harbor ~]# cat > /etc/yum.repos.d/docker.repo <<EOF

[docker]

name = docker

baseurl = https://mirrors.aliyun.com/docker-ce/linux/rhel/9.6/x86_64/stable/

gpgcheck = 0

EOF

[root@harbor ~]# dnf install docker-ce-3:28.5.2-1.el9 -y

# Enable the kernel network options

[root@harbor ~]# echo br_netfilter > /etc/modules-load.d/docker_mod.conf

[root@harbor ~]# modprobe -a br_netfilter

[root@harbor ~]# vim /etc/sysctl.d/docker.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

[root@harbor ~]# sysctl --system

[root@harbor ~]# vim /lib/systemd/system/docker.service

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --iptables=true

[root@harbor ~]# systemctl daemon-reload

[root@harbor ~]# systemctl enable --now docker

2. Generate the key and certificate

[root@harbor ~]# mkdir /data/certs -p

[root@harbor ~]# openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout /data/certs/timinglee.org.key \

-addext "subjectAltName = DNS:reg.timinglee.org" \

-x509 -days 365 -out /data/certs/timinglee.org.crt

You are about to be asked to enter information that will be incorporated

into your certificate request.

What you are about to enter is what is called a Distinguished Name or a DN.

There are quite a few fields but you can leave some blank

For some fields there will be a default value,

If you enter '.', the field will be left blank.

-----

Country Name (2 letter code) [XX]:CN

State or Province Name (full name) []:Shannxi

Locality Name (eg, city) [Default City]:Xi'an

Organization Name (eg, company) [Default Company Ltd]:kubernetes

Organizational Unit Name (eg, section) []:harbor

Common Name (eg, your name or your server's hostname) []:reg.timinglee.org

Email Address []:admin@timinglee.org

3. Edit the Harbor configuration file

[root@harbor ~]# tar zxf harbor-offline-installer-v2.5.4.tgz -C /opt/

[root@harbor ~]# cd /opt/harbor/

[root@harbor harbor]# ls

common.sh harbor.v2.5.4.tar.gz harbor.yml.tmpl install.sh LICENSE prepare

[root@harbor harbor]# cp harbor.yml.tmpl harbor.yml

[root@harbor harbor]# vim harbor.yml

hostname: reg.timinglee.org

certificate: /data/certs/timinglee.org.crt

private_key: /data/certs/timinglee.org.key

harbor_admin_password: lee

[root@harbor harbor]# ./install.sh --with-chartmuseum

4. Start and verify

[root@harbor harbor]# mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

[root@harbor harbor]# cp /data/certs/timinglee.org.crt /etc/docker/certs.d/reg.timinglee.org/ca.crt

[root@harbor harbor]# vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.170.200 harbor reg.timinglee.org

[root@harbor harbor]# systemctl restart docker

[root@harbor harbor]# docker compose up -d

[root@harbor harbor]# docker login reg.timinglee.org -u admin

Password:

WARNING! Your credentials are stored unencrypted in '/root/.docker/config.json'.

Configure a credential helper to remove this warning. See

https://docs.docker.com/go/credential-store/

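Login Succeeded

II. Prepare the hosts required for the Kubernetes deployment

| Hostname | IP | Resources | Role |
|---|---|---|---|
| harbor.timinglee.org | 192.168.170.200 | 1 CPU, 1 GB memory | Harbor registry |
| master-node | 192.168.170.100 | 4 CPUs, more than 4 GB memory | master, Kubernetes control-plane node |
| node1 | 192.168.170.10 | 2 CPUs, 2 GB memory | worker, Kubernetes worker node |
| node2 | 192.168.170.20 | 2 CPUs, 2 GB memory | worker, Kubernetes worker node |

1. Configuration for all hosts

Disable swap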

bash

systemctl disable --now swap.target

systemctl mask swap.target

sed '/swap/s/^/#/g' -i /etc/fstab

Install Docker

Configure access to the Harbor registry

bash

mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

# On the harbor host, distribute the certificate to all hosts

[root@harbor ~]# for i in 100 10 20

> do

> scp /data/certs/timinglee.org.crt root@192.168.170.$i:/etc/docker/certs.d/reg.timinglee.org/ca.crt

> done

systemctl enable docker

systemctl restart docker

Configure the Docker registry mirror on all hosts

bash

cat >/etc/docker/daemon.json <<EOF

{

"registry-mirrors":["https://reg.timinglee.org"]

}

EOF

systemctl restart docker

docker info

The output should include:

Registry Mirrors:

https://reg.timinglee.org/

Set up name resolution between all hosts

bash

vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.170.100 master

192.168.170.10 node1

192.168.170.20 node2

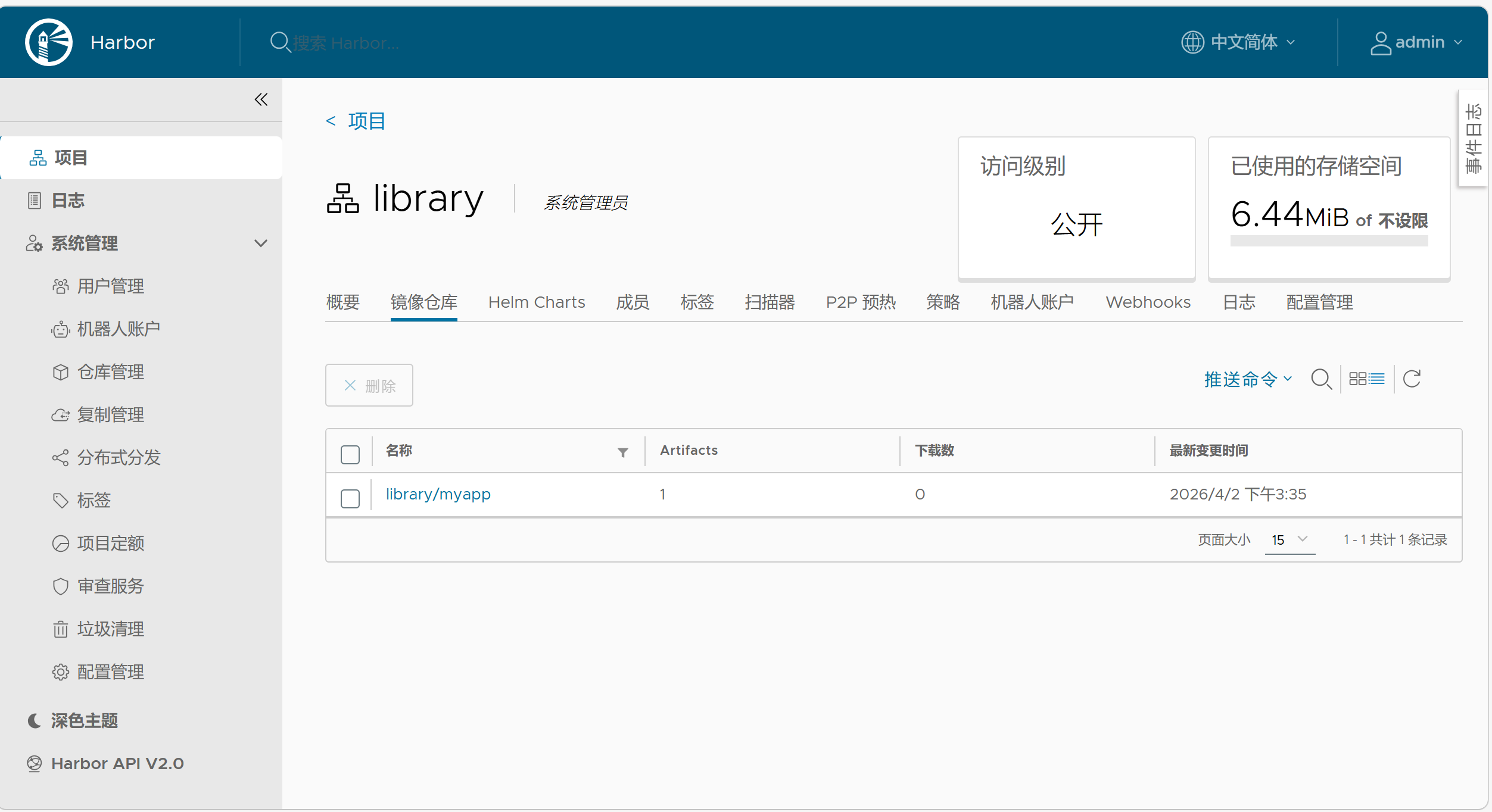

192.168.170.200 reg.timinglee.org上传镜像

bash

[root@harbor ~]# ls

anaconda-ks.cfg busyboxplus.tar harbor-offline-installer-v2.5.4.tgz myapp.tar.gz

[root@harbor ~]# docker load -i busyboxplus.tar

5f70bf18a086: Loading layer 1.024kB/1.024kB

165264a81ac2: Loading layer 4.729MB/4.729MB

430380561a4f: Loading layer 3.072kB/3.072kB

Loaded image: rickiechina/busyboxplus:latest

[root@harbor ~]# docker load -i myapp.tar.gz

d39d92664027: Loading layer 4.232MB/4.232MB

8460a579ab63: Loading layer 11.61MB/11.61MB

c1dc81a64903: Loading layer 3.584kB/3.584kB

68695a6cfd7d: Loading layer 4.608kB/4.608kB

05a9e65e2d53: Loading layer 16.38kB/16.38kB

a0d2c4392b06: Loading layer 7.68kB/7.68kB

Loaded image: timinglee/myapp:v1

Loaded image: timinglee/myapp:v2

[root@harbor ~]# docker tag timinglee/myapp:v1 reg.timinglee.org/library/myapp:v1

[root@harbor ~]# docker push reg.timinglee.org/library/myapp:v1

The push refers to repository [reg.timinglee.org/library/myapp]

a0d2c4392b06: Pushed

05a9e65e2d53: Pushed

68695a6cfd7d: Pushed

c1dc81a64903: Pushed

8460a579ab63: Pushed

d39d92664027: Pushed

v1: digest: sha256:9eeca44ba2d410e54fccc54cbe9c021802aa8b9836a0bcf3d3229354e4c8870e size: 1569

[root@harbor ~]# docker load -i busyboxplus.tar

Loaded image: rickiechina/busyboxplus:latest

[root@harbor ~]# docker tag rickiechina/busyboxplus:latest reg.timinglee.org/library/busyboxplus

[root@harbor ~]# docker push reg.timinglee.org/library/busyboxplus

Using default tag: latest

The push refers to repository [reg.timinglee.org/library/busyboxplus]

5f70bf18a086: Pushed

430380561a4f: Pushed

165264a81ac2: Pushed

latest: digest: sha256:ef538eae80f40015736f1ee308d74b4f38f74e978c65522ce64abdf8c8c5e0d6 size: 1765

# Run on all hosts

[root@master ~]# docker pull myapp:v1

v1: Pulling from library/myapp

550fe1bea624: Pull complete

af3988949040: Pull complete

d6642feac728: Pull complete

c20f0a205eaa: Pull complete

438668b6babd: Pull complete

bf778e8612d0: Pull complete

Digest: sha256:9eeca44ba2d410e54fccc54cbe9c021802aa8b9836a0bcf3d3229354e4c8870e

Status: Downloaded newer image for myapp:v1

docker.io/library/myapp:v1

[root@master ~]# docker pull busyboxplus

Using default tag: latest

latest: Pulling from library/busyboxplus

4f4fb700ef54: Pull complete

72d86f26813c: Pull complete

f45cff1e8e73: Pull complete

Digest: sha256:ef538eae80f40015736f1ee308d74b4f38f74e978c65522ce64abdf8c8c5e0d6

Status: Downloaded newer image for busyboxplus:latest

docker.io/library/busyboxplus:latest

bash

[root@harbor ~]# ls

anaconda-ks.cfg harbor-offline-installer-v2.5.4.tgz nginx-1.23.tar.gz

busyboxplus.tar myapp.tar.gz nginx-1.26.tar

[root@harbor ~]# docker load -i nginx-1.26.tar

ea680fbff095: Loading layer 28.23MB/28.23MB

cf1414070399: Loading layer 43.92MB/43.92MB

c9829892bdc3: Loading layer 628B/628B

67aafe507102: Loading layer 956B/956B

609897c892b4: Loading layer 404B/404B

639bb519faac: Loading layer 1.209kB/1.209kB

76d7a104c298: Loading layer 1.399kB/1.399kB

Loaded image: nginx:1.26

[root@harbor ~]# docker tag nginx:1.26 reg.timinglee.org/library/nginx:latest

[root@harbor ~]# docker push reg.timinglee.org/library/nginx:latest

The push refers to repository [reg.timinglee.org/library/nginx]

76d7a104c298: Pushed

639bb519faac: Pushed

609897c892b4: Pushed

67aafe507102: Pushed

c9829892bdc3: Pushed

cf1414070399: Pushed

ea680fbff095: Pushed

latest: digest: sha256:ee228419e4bec2a78632d216e137e49dfd8f6f65b2f20e666ee4cab14eda781a size: 1778

[root@harbor ~]# docker load -i nginx-1.23.tar.gz

8cbe4b54fa88: Loading layer 84.01MB/84.01MB

5dd6bfd241b4: Loading layer 62.51MB/62.51MB

043198f57be0: Loading layer 3.584kB/3.584kB

2731b5cfb616: Loading layer 4.608kB/4.608kB

6791458b3942: Loading layer 3.584kB/3.584kB

4d33db9fdf22: Loading layer 7.168kB/7.168kB

Loaded image: nginx:1.23

[root@harbor ~]# docker tag nginx:1.23 reg.timinglee.org/library/nginx:1.23

[root@harbor ~]# docker push reg.timinglee.org/library/nginx:1.23

The push refers to repository [reg.timinglee.org/library/nginx]

4d33db9fdf22: Pushed

6791458b3942: Pushed

2731b5cfb616: Pushed

043198f57be0: Pushed

5dd6bfd241b4: Pushed

8cbe4b54fa88: Pushed

1.23: digest: sha256:a97a153152fcd6410bdf4fb64f5622ecf97a753f07dcc89dab14509d059736cf size: 1570

[root@master ~]# docker pull nginx:latest

latest: Pulling from library/nginx

4a679ac3b09f: Pull complete

7a0654aeb922: Pull complete

5e98d206134b: Pull complete

6923759e66ab: Pull complete

d44088bb6ae8: Pull complete

9ebfb40fb06b: Pull complete

4fd410795c0f: Pull complete

Digest: sha256:ee228419e4bec2a78632d216e137e49dfd8f6f65b2f20e666ee4cab14eda781a

Status: Downloaded newer image for nginx:latest

docker.io/library/nginx:latest

[root@master ~]# docker pull nginx:1.23

1.23: Pulling from library/nginx

f03b40093957: Pull complete

0972072e0e8a: Pull complete

a85095acb896: Pull complete

d24b987aa74e: Pull complete

6c1a86118ade: Pull complete

9989f7b33228: Pull complete

Digest: sha256:a97a153152fcd6410bdf4fb64f5622ecf97a753f07dcc89dab14509d059736cf

Status: Downloaded newer image for nginx:1.23

docker.io/library/nginx:1.23
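
The load, tag, and push steps above follow one pattern: drop the original namespace from the image name and prefix it with the Harbor host and a project. As a minimal sketch (the function name `harbor_ref` and the default project `library` are illustrative assumptions, not part of the original steps), the target reference could be computed like this:

```shell
# Hypothetical helper: map a local image reference to its Harbor reference.
# Assumes the registry host reg.timinglee.org and a project that already exists.
harbor_ref() {
    local src="$1"                 # e.g. timinglee/myapp:v1
    local project="${2:-library}"  # Harbor project, defaults to "library"
    local name="${src##*/}"        # drop everything up to the last "/"
    # Docker treats a missing tag as "latest"; make that explicit.
    case "$name" in
        *:*) ;;
        *) name="${name}:latest" ;;
    esac
    printf 'reg.timinglee.org/%s/%s\n' "$project" "$name"
}

harbor_ref timinglee/myapp:v1
harbor_ref rickiechina/busyboxplus
```

It could then be combined with the commands used above, for example `docker tag timinglee/myapp:v1 "$(harbor_ref timinglee/myapp:v1)"`. Digest references (`name@sha256:...`) are not handled by this sketch.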

Configure the Kubernetes package repository on all hosts

bash

vim /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name = kubernetes

baseurl = https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.35/rpm/

gpgcheck = 0

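# Verify

dnf list kubelet

After completing the steps above, reboot the hosts and check the swap partition

bash

swapon -s    # no output means swap is disabled

III. Deploy Kubernetes

1. Install cri-dockerd (on all hosts: master, node1, and node2)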

bash

[root@master ~]# ls # upload the two rpm packages (the second and third entries below)

anaconda-ks.cfg cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm

[root@master ~]# rpm -ivh *.rpm

warning: libcgroup-0.41-19.el8.x86_64.rpm: Header V4 RSA/SHA256 Signature, key ID 6d745a60: NOKEY

Verifying... ################################# [100%]

Preparing... ################################# [100%]

Updating / installing...

1:libcgroup-0.41-19.el8 ################################# [ 50%]

2:cri-dockerd-3:0.3.14-3.el8 ################################# [100%]

[root@master ~]# scp cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm root@192.168.170.10:/root

Warning: Permanently added '192.168.170.10' (ED25519) to the list of known hosts.

cri-dockerd-0.3.14-3.el8.x86_64.rpm 100% 11MB 42.9MB/s 00:00

libcgroup-0.41-19.el8.x86_64.rpm 100% 69KB 19.8MB/s 00:00

[root@master ~]# scp cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm root@192.168.170.20:/root

Warning: Permanently added '192.168.170.20' (ED25519) to the list of known hosts.

cri-dockerd-0.3.14-3.el8.x86_64.rpm 100% 11MB 26.7MB/s 00:00

libcgroup-0.41-19.el8.x86_64.rpm 100% 69KB 5.2MB/s 00:00

[root@node1+2 ~]# rpm -ivh *.rpm

bash

vim /lib/systemd/system/cri-docker.service

systemctl enable --now cri-docker

Created symlink /etc/systemd/system/multi-user.target.wants/cri-docker.service → /usr/lib/systemd/system/cri-docker.service.

Verify:

[root@master ~]# ll /var/run/cri-dockerd.sock

srw-rw---- 1 root docker 0 Apr 2 15:47 /var/run/cri-dockerd.sock

2. Install the software required to build the Kubernetes cluster

On the master node

bash

dnf install kubelet kubeadm kubectl -y

systemctl enable --now kubelet.service

On the worker nodes

bash

dnf install kubelet kubeadm -y

systemctl enable --now kubelet.service

Enable kubectl and kubeadm command completion on the master node

bash

[root@master ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

[root@master ~]# echo "source <(kubeadm completion bash)" >> ~/.bashrc

[root@master ~]# source ~/.bashrc

3. Download the images required by the Kubernetes cluster

Download the images

bash

# Add DNS servers before downloading

[root@master ~]# nmcli connection modify eth0 ipv4.dns "223.5.5.5 119.29.29.29 114.114.114.114"

[root@master ~]# nmcli connection up eth0

Connection successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/4)

# Pull the images required by Kubernetes on the master node

[root@master ~]# kubeadm config images pull \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.35.3 \

--cri-socket=unix:///var/run/cri-dockerd.sock

# Output on success

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.35.3

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:v1.13.1

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.10.1

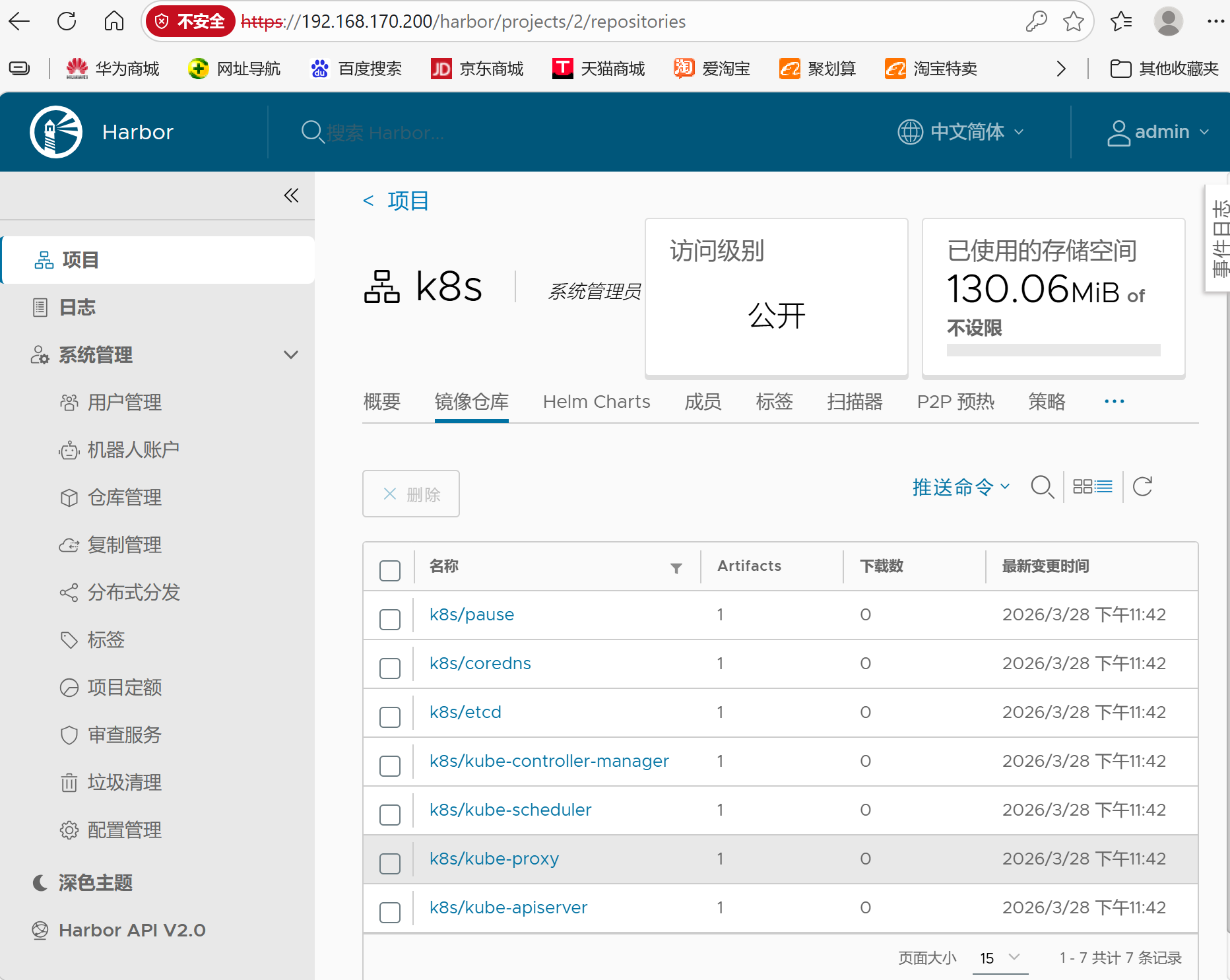

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.6.6-0上传镜像到本地harbor

bash

[root@master ~]# docker login reg.timinglee.org -u admin

Password:

WARNING! Your credentials are stored unencrypted in '/root/.docker/config.json'.

Configure a credential helper to remove this warning. See

https://docs.docker.com/go/credential-store/

Login Succeeded

[root@master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/google/{system("docker tag "$0" reg.timinglee.org/k8s/"$3)}'

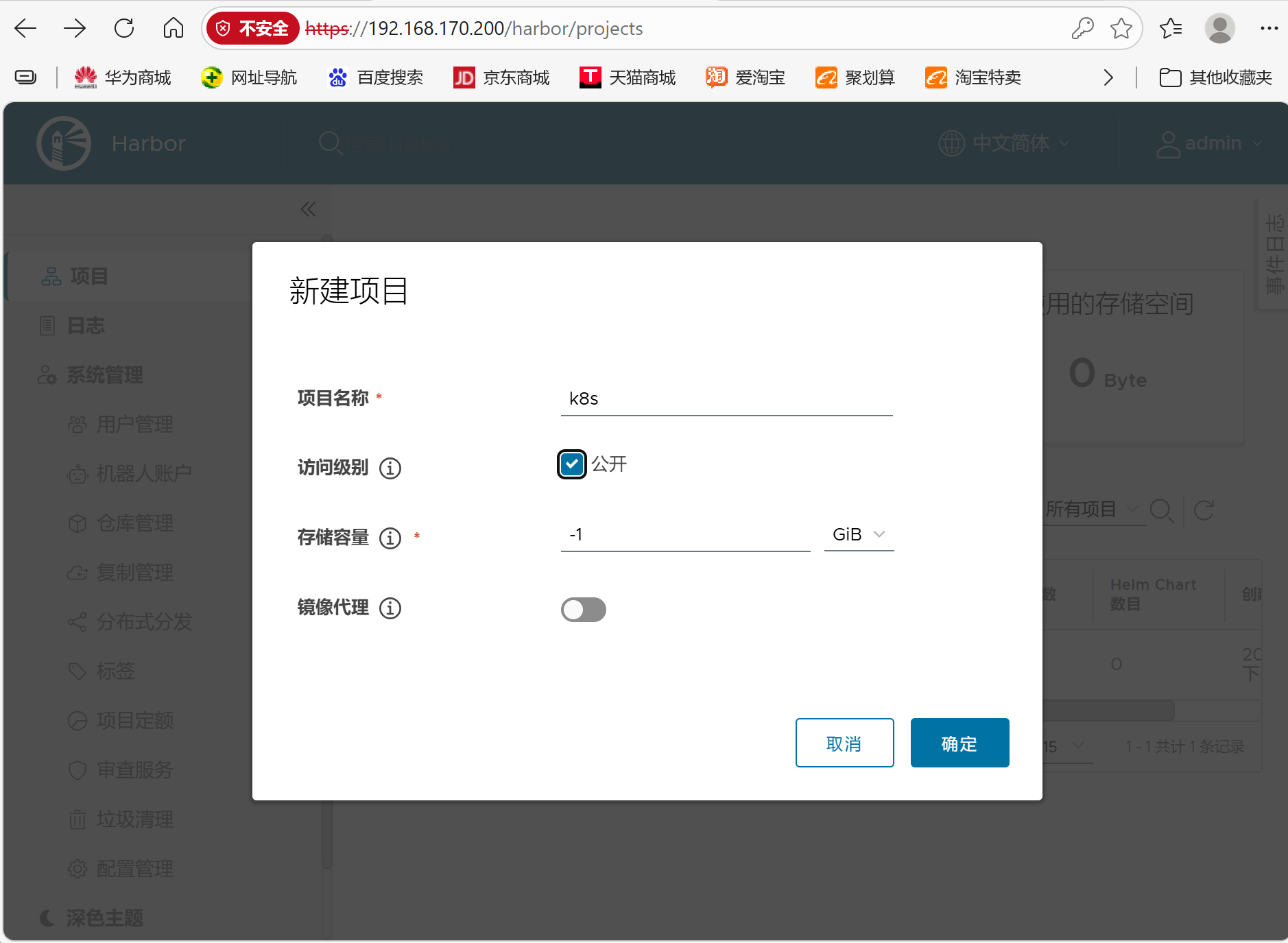

# Run after creating the k8s project in Harbor

[root@master ~]# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/timinglee/{system("docker push "$0)}'

After the push succeeds, the k8s project in Harbor contains the images.
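
Because the awk one-liners above pass every matching line to `system()`, it can help to preview the generated commands first. The following is a sketch only: the function name `preview_retag` is hypothetical, and the sample lines stand in for the real output of `docker images --format "{{.Repository}}:{{.Tag}}"`. It uses the same field separator and pattern as the tag command, but prints instead of executing.

```shell
# Dry run: print the "docker tag" commands instead of running them.
# With "/" as the field separator, $3 is the image name plus tag
# (for example pause:3.10.1), and $0 is the full original reference.
preview_retag() {
    awk -F "/" '/google/ {print "docker tag " $0 " reg.timinglee.org/k8s/" $3}'
}

# Sample input (assumed); the last line does not match /google/ and is skipped.
printf '%s\n' \
    "registry.aliyuncs.com/google_containers/pause:3.10.1" \
    "registry.aliyuncs.com/google_containers/etcd:3.6.6-0" \
    "nginx:1.26" | preview_retag
```

On a real host the input would come from `docker images --format "{{.Repository}}:{{.Tag}}" | preview_retag`; once the printed commands look right, the `system()` variant can be run.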

4. Initialize the Kubernetes cluster on the master

Run the cluster initialization on the master

bash

[root@master ~]# kubeadm init --pod-network-cidr=10.244.0.0/16 \

--image-repository reg.timinglee.org/k8s \

--kubernetes-version v1.35.3 \

--cri-socket=unix:///var/run/cri-dockerd.sock

...

# The credentials other hosts use to join this cluster; the values differ for every installation

kubeadm join 192.168.170.100:6443 --token j00otq.2euwrx91826twqjz \

--discovery-token-ca-cert-hash sha256:c92db652b15dca565e5be7f538f89a84f2d6a08caeb5bbe1c49d0fb30aab37ab

# If you lose the join command

[root@master ~]# kubeadm token create --print-join-command

kubeadm join 192.168.170.100:6443 --token j00otq.2euwrx91826twqjz \

--discovery-token-ca-cert-hash sha256:c92db652b15dca565e5be7f538f89a84f2d6a08caeb5bbe1c49d0fb30aab37ab

# If initialization fails

[root@master ~]# kubeadm reset --cri-socket=unix:///var/run/cri-dockerd.sock # resets the cluster configuration

Add the Kubernetes environment variable on this host

bash

[root@master ~]# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

[root@master ~]# source ~/.bash_profile

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady control-plane 102s v1.35.3

Add the worker nodes to the cluster

bash

[root@node1 ~]# kubeadm join 192.168.170.100:6443 --token j00otq.2euwrx91826twqjz --discovery-token-ca-cert-hash sha256:c92db652b15dca565e5be7f538f89a84f2d6a08caeb5bbe1c49d0fb30aab37ab --cri-socket=unix:///var/run/cri-dockerd.sock

# Test

[root@master ~]# kubectl get nodes # the nodes are listed, but their status is NotReady because no network plugin is installed yet

NAME STATUS ROLES AGE VERSION

master NotReady control-plane 5m16s v1.35.3

node1 NotReady <none> 66s v1.35.3

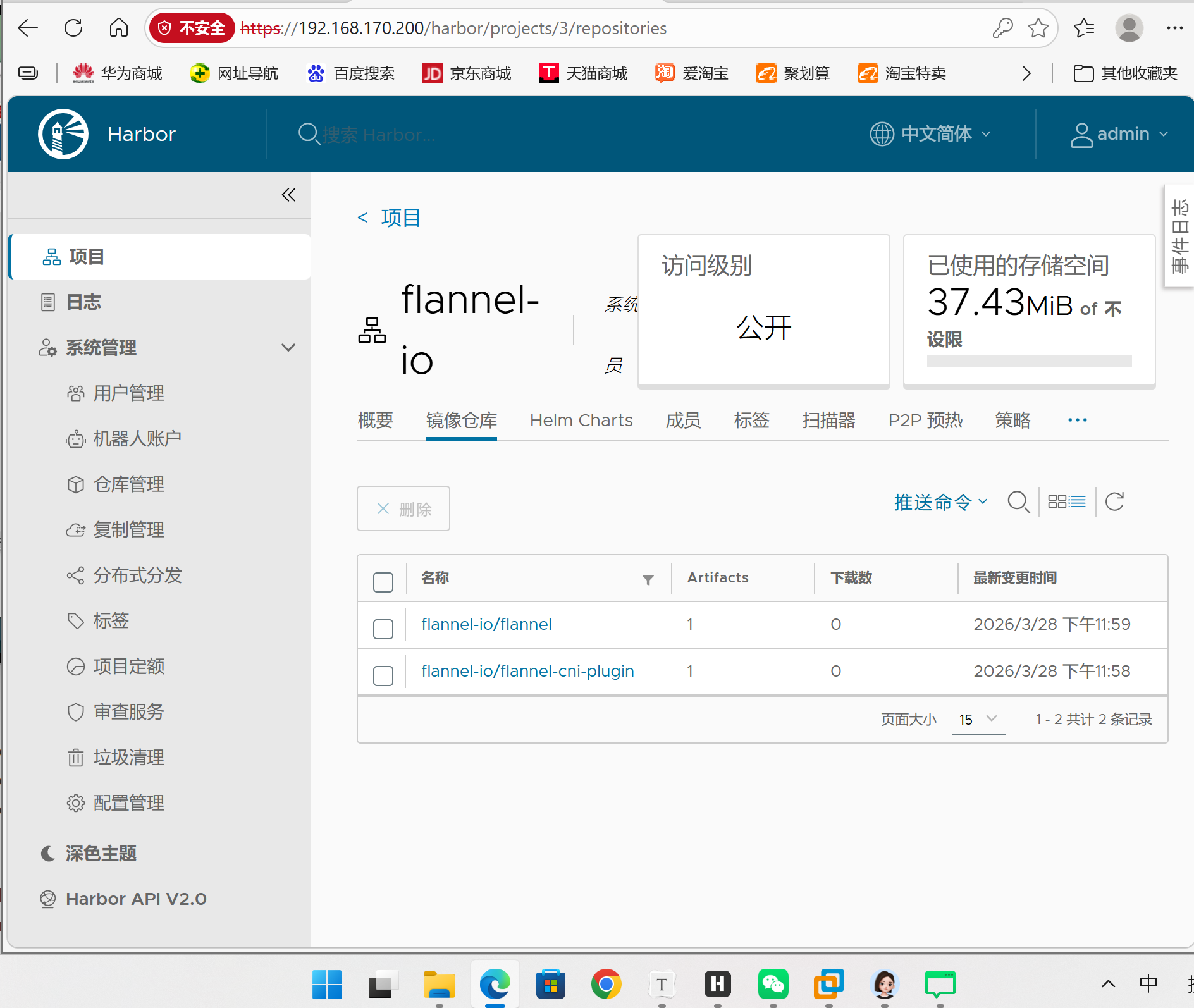

node2 NotReady <none> 58s v1.35.35.安装网络插件

bash

[root@master ~]# ls

anaconda-ks.cfg flannel-0.28.1.tar libcgroup-0.41-19.el8.x86_64.rpm

cri-dockerd-0.3.14-3.el8.x86_64.rpm kube-flannel.yml

[root@master ~]# docker load -i flannel-0.28.1.tar

[root@master ~]# docker tag ghcr.io/flannel-io/flannel-cni-plugin:v1.9.0-flannel1 reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

[root@master ~]# docker push reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

The push refers to repository [reg.timinglee.org/flannel-io/flannel-cni-plugin]

2c8aa52d4746: Pushed

5aa68bbbc67e: Pushed

v1.9.0-flannel1: digest: sha256:b3d30c221113b30fea3e8a7fccb145e929b097d0319b9eeb6b5a591b10b5c671 size: 739

# Create the flannel-io project in Harbor beforehand, as was done for k8s

[root@master ~]# docker tag ghcr.io/flannel-io/flannel:v0.28.1 reg.timinglee.org/flannel-io/flannel:v0.28.1

[root@master ~]# docker push reg.timinglee.org/flannel-io/flannel:v0.28.1

The push refers to repository [reg.timinglee.org/flannel-io/flannel]

5668da16a30b: Pushed

5f70bf18a086: Pushed

00012e17b6cc: Pushed

9738bb9596cf: Pushed

b6233bc105d7: Pushed

03767449b95b: Pushed

9a7d8cf9ff51: Pushed

13a60c10faeb: Pushed

70dc5e033175: Pushed

256f393e029f: Pushed

v0.28.1: digest: sha256:e671adbc267460164555159210066d3304a43e3b5dd85cc0b5b6ad62e83aab52 size: 2414

The flannel-io project now contains the pushed images. Next, edit the manifest:

bash

[root@master ~]# vim kube-flannel.yml

image: flannel-io/flannel:v0.28.1

image: flannel-io/flannel-cni-plugin:v1.9.0-flannel1

image: flannel-io/flannel:v0.28.1

[root@master ~]# kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

serviceaccount/flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

Test:

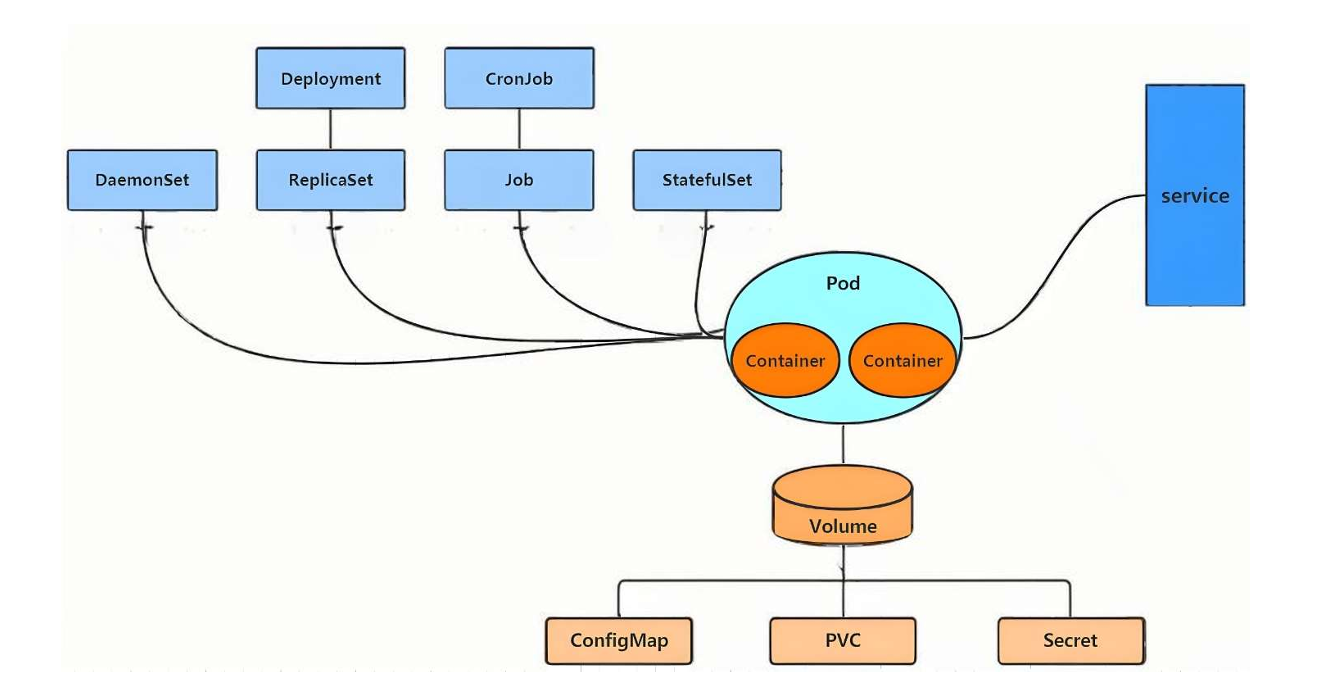
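
The image lines edited by hand in kube-flannel.yml could also be rewritten with a script. The sketch below assumes that the unedited manifest references its images with a `ghcr.io/` prefix (check your copy of the file; this is an assumption based on the image names loaded above), and the function name `strip_ghcr_prefix` is hypothetical. Removing the prefix yields the same short names as the edited lines, which are then pulled through the registry mirror configured in daemon.json.

```shell
# Hypothetical helper: strip the ghcr.io/ prefix from "image:" lines.
# Reads a manifest on stdin and writes the rewritten manifest to stdout;
# lines without an image reference pass through unchanged.
strip_ghcr_prefix() {
    sed 's#\(image:[[:space:]]*\)ghcr\.io/#\1#'
}

# Sample input (assumed form of the original manifest lines).
printf '%s\n' \
    "        image: ghcr.io/flannel-io/flannel:v0.28.1" \
    "        image: ghcr.io/flannel-io/flannel-cni-plugin:v1.9.0-flannel1" | strip_ghcr_prefix
```

For a real file, something like `strip_ghcr_prefix < kube-flannel.yml > kube-flannel.local.yml` keeps the original untouched so the two versions can be compared with `diff` before applying.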

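IV. Ways to manage resources

Introduction to resource management

- In Kubernetes, everything is abstracted as a resource, and users manage Kubernetes by operating on resources.

- Kubernetes is essentially a cluster system in which users can deploy various services.

- Deploying a service means running containers in the Kubernetes cluster, with the specified program running inside them.

- The smallest unit Kubernetes manages is the Pod, not the container; containers can only run inside a Pod.

- Kubernetes usually does not manage Pods directly either; it manages them through Pod controllers.

- Access to the services running in Pods is provided by the Kubernetes Service resource.

- Persistence of the data used by programs in Pods is provided by the storage systems Kubernetes supports.

1. Imperative commands

Create, inspect, and delete Pods (groups of containers) directly with kubectl commands.

- Imperative commands are fast, but only suitable for temporary tests.

- Once a Pod is deleted it is gone for good and is not recreated, because no controller manages it.

# Create a Pod named testpod from the pause image that was pushed to Harbor

[root@master ~]# kubectl run testpod --image=reg.timinglee.org/k8s/pause:3.10.1 --restart=Never # only images you already have available can be used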

pod/testpod created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

testpod 1/1 Running 0 3s

[root@master ~]# kubectl describe pods testpod

Name: testpod

Namespace: default

Priority: 0

Service Account: default

Node: node2/192.168.170.20

Start Time: Sun, 29 Mar 2026 11:28:27 +0800

Labels: run=testpod

Annotations: <none>

Status: Running

IP: 10.244.1.9

IPs:

IP: 10.244.1.9

Containers:

testpod:

Container ID: docker://8f9236aabfca8a567a962fc63b9ad39aa3d33feea85ee3acbc9972622ed8209e

Image: reg.timinglee.org/k8s/pause:3.10.1

Image ID: docker-pullable://reg.timinglee.org/k8s/pause@sha256:d31e7f29ada8b13c6dd047ec4805720fcf14df5fccbb27903e374dc56225ca49

Port: <none>

Host Port: <none>

State: Running

Started: Sun, 29 Mar 2026 11:28:27 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-hqwg2 (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-hqwg2:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

Optional: false

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 18s default-scheduler Successfully assigned default/testpod to node2

Normal Pulled 18s kubelet spec.containers{testpod}: Container image "reg.timinglee.org/k8s/pause:3.10.1" already present on machine and can be accessed by the pod

Normal Created 18s kubelet spec.containers{testpod}: Container created

Normal Started 18s kubelet spec.containers{testpod}: Container started

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

testpod 1/1 Running 0 42s 10.244.1.9 node2 <none> <none>

[root@master ~]# kubectl delete pods testpod

pod "testpod" deleted from default namespace

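2. YAML files

Manage Pods with a configuration file (YAML) through a Deployment controller. This supports both creation and updates, and is more consistent and reusable than imperative commands.

# Simulate creating a Deployment named test (a controller that manages Pods) with the image reg.timinglee.org/k8s/pause:3.10.1 and 1 replica; nothing is actually created, the configuration is only written to test.yml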

[root@master ~]# kubectl create deployment test --image=reg.timinglee.org/k8s/pause:3.10.1 --replicas=1 --dry-run=client -o yaml > test.yml

[root@master ~]# vim test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 1
  selector:
    matchLabels:
      app: test
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      - image: reg.timinglee.org/k8s/pause:3.10.1
        name: nginx

# Imperative creation (kubectl create)

[root@master ~]# kubectl create -f test.yml

deployment.apps/test created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-h2sct 1/1 Running 0 8s

[root@master ~]# kubectl delete -f test.yml

deployment.apps "test" deleted from default namespace

[root@master ~]# kubectl get pods

No resources found in default namespace.

# Declarative (kubectl apply)

[root@master ~]# kubectl apply -f test.yml

deployment.apps/test created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-cxtnp 1/1 Running 0 1s

# Note: create can only create a resource, not update it; apply can do both

[root@master ~]# vim test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 2 # only the number of Pods is changed
...

[root@master ~]# kubectl create -f test.yml

Error from server (AlreadyExists): error when creating "test.yml": deployments.apps "test" already exists

[root@master ~]# kubectl apply -f test.yml

deployment.apps/test configured

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-9sw95 1/1 Running 0 8s

test-56848fd9dc-cxtnp 1/1 Running 0 2m42s

V. Resource types

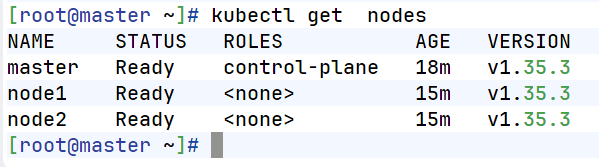

1.node

List the nodes (physical or virtual servers) in the cluster, and generate the command a node uses to join it.

bash

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready control-plane 3d22h v1.35.3

node1 Ready <none> 3d22h v1.35.3

node2 Ready <none> 3d22h v1.35.3

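# Generate the join command (a new node runs this command to join the cluster).

[root@master ~]# kubeadm token create --print-join-command

kubeadm join 192.168.170.100:6443 --token d6eh0b.3muwmbp1zu22quez --discovery-token-ca-cert-hash sha256:c92db652b15dca565e5be7f538f89a84f2d6a08caeb5bbe1c49d0fb30aab37ab

2. namespace

A Namespace is the Kubernetes mechanism for isolating resources: resources in different Namespaces are independent of each other (for example, two Namespaces can each contain a Pod named testpod).

- Without -n, commands use the default namespace.

- Namespaces isolate the resources of different environments (for example development, testing, and production).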

bash

[root@master ~]# kubectl get namespaces

NAME STATUS AGE

default Active 18h

kube-flannel Active 17h

kube-node-lease Active 18h

kube-public Active 18h

kube-system Active 18h

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-9sw95 1/1 Running 0 25m

test-56848fd9dc-cxtnp 1/1 Running 0 27m

[root@master ~]# kubectl -n kube-flannel get pods

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-hc8gt 1/1 Running 1 (17h ago) 17h

kube-flannel-ds-rvzng 1/1 Running 1 (17h ago) 17h

kube-flannel-ds-s29g5 1/1 Running 1 (17h ago) 17h

[root@master ~]# kubectl create namespace timinglee

namespace/timinglee created

[root@master ~]# kubectl get namespaces

NAME STATUS AGE

default Active 18h

kube-flannel Active 17h

kube-node-lease Active 18h

kube-public Active 18h

kube-system Active 18h

timinglee Active 6s

[root@master ~]# kubectl -n timinglee run testpod --image nginx:latest

pod/testpod created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-fc87v 1/1 Running 0 7m4s

test-56848fd9dc-ld6wx 1/1 Running 0 7m44s

[root@master ~]# kubectl -n timinglee get pods

NAME READY STATUS RESTARTS AGE

testpod 1/1 Running 0 18s

[root@master ~]# kubectl -n timinglee run testpod --image nginx:latest

Error from server (AlreadyExists): pods "testpod" already exists

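[root@master ~]# kubectl run testpod --image nginx:latest # uses the default namespace

pod/testpod created

VI. kubectl commands

1. Edit or patch a Deployment

Update a Deployment's configuration (for example the number of Pods) directly from the command line, without modifying the YAML file.

- edit changes the configuration interactively and patch changes it with a single command; both update the Deployment immediately.

- After a Deployment is modified, the number of Pods is adjusted automatically (scaled up or down).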
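
Because patch takes its change as a JSON string, scripts often build that string from a variable. A minimal sketch, assuming a hypothetical helper named `replicas_patch` (not a kubectl feature) that validates the count and prints the patch body:

```shell
# Hypothetical helper: build the JSON body for "kubectl patch" from a replica count.
replicas_patch() {
    # Reject empty input and anything that is not a non-negative integer.
    case "$1" in
        ''|*[!0-9]*)
            echo "replicas must be a non-negative integer" >&2
            return 1
            ;;
    esac
    printf '{"spec":{"replicas":%s}}\n' "$1"
}

replicas_patch 1
```

The result can be passed straight to kubectl, for example `kubectl patch deployments.apps test -p "$(replicas_patch 1)"`. Validating first avoids sending malformed JSON to the API server when the count comes from user input.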

bash

[root@master ~]# kubectl -n timinglee delete pods testpod

pod "testpod" deleted from timinglee namespace

[root@master ~]# kubectl get deployments.apps

NAME READY UP-TO-DATE AVAILABLE AGE

test 2/2 2 2 13m

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-fc87v 1/1 Running 0 12m

test-56848fd9dc-ld6wx 1/1 Running 0 13m

testpod 1/1 Running 0 5m20s

[root@master ~]# kubectl delete pods testpod

pod "testpod" deleted from default namespace

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-fc87v 1/1 Running 0 13m

test-56848fd9dc-ld6wx 1/1 Running 0 14m

# Edit the Deployment's configuration directly (set replicas: 4); Kubernetes applies it on save and the number of Pods becomes 4

[root@master ~]# kubectl edit deployments.apps test

.....

replicas: 4

.....

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-fc87v 1/1 Running 0 15m

test-56848fd9dc-ld6wx 1/1 Running 0 15m

test-56848fd9dc-n278v 1/1 Running 0 17s

test-56848fd9dc-xj2hr 1/1 Running 0 17s

# Use a patch to quickly set the number of Pods to 1 (suited to batch or scripted operations).

[root@master ~]# kubectl patch deployments.apps test -p '{"spec":{"replicas":1}}'

deployment.apps/test patched # non-interactive change

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-fc87v 1/1 Running 0 17m

[root@master ~]# kubectl edit deployments.apps test

deployment.apps/test edited

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-56848fd9dc-dmvmc 1/1 Running 0 3s

test-56848fd9dc-fc87v 1/1 Running 0 20m

test-56848fd9dc-lv6wr 1/1 Running 0 3s

test-56848fd9dc-wl4tx 1/1 Running 0 3s

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

test-56848fd9dc-dmvmc 1/1 Running 0 17s 10.244.2.8 node2 <none> <none>

test-56848fd9dc-fc87v 1/1 Running 0 20m 10.244.1.2 node1 <none> <none>

test-56848fd9dc-lv6wr 1/1 Running 0 17s 10.244.2.7 node2 <none> <none>

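test-56848fd9dc-wl4tx 1/1 Running 0 17s 10.244.1.5 node1 <none> <none>

2. Expose a port (Service)

Pod IPs are ephemeral. A Service gives Pods a stable access address (a virtual IP, VIP) so that clients inside or outside the cluster can reach them reliably.

- The Service VIP is reachable inside the cluster; when a Pod restarts and its IP changes, the Service updates its Endpoints automatically.

- The Service created here is of type ClusterIP, which is reachable only from inside the cluster.

bash

# Create a Service for the test Deployment that exposes port 80 (port is the Service port, targetPort is the Pod port)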

[root@master ~]# kubectl expose deployment test --port 80 --target-port 80

service/test exposed

[root@master ~]# kubectl describe service test

Name: test

Namespace: default

Labels: app=test

Annotations: <none>

Selector: app=test

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.106.26.41

IPs: 10.106.26.41

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.2.8:80,10.244.1.2:80,10.244.2.7:80 + 1 more...

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

[root@master ~]# curl 10.106.26.41

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

html { color-scheme: light dark; }

body { width: 35em; margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif; }

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

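</html>

3. Pod logs, interaction, and file copy

Interact with running Pods (view logs, enter containers, transfer files) to troubleshoot problems.

logs is the core command for viewing logs; when troubleshooting a Pod, check the logs first. exec -it is the standard way to enter a container.

bash

# View a Pod's logs; they include the nginx access log (for example the curl requests)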

[root@master ~]# curl 10.106.26.41/hostname.html

test-68d8574cb-5zjjv

[root@master ~]# curl 10.106.26.41/hostname.html

test-68d8574cb-7bf6n

[root@master ~]# curl 10.106.26.41/hostname.html

test-68d8574cb-5zjjv

[root@master ~]# curl 10.106.26.41/hostname.html

test-68d8574cb-7bf6n

[root@master ~]# kubectl logs pods/test-68d8574cb-7bf6n

10.244.0.0 - - [02/Apr/2026:09:25:08 +0000] "GET /hostname.html HTTP/1.1" 200 21 "-" "curl/7.76.1" "-"

10.244.0.0 - - [02/Apr/2026:09:26:42 +0000] "GET /hostname.html HTTP/1.1" 200 21 "-" "curl/7.76.1" "-"

10.244.0.0 - - [02/Apr/2026:09:26:44 +0000] "GET /hostname.html HTTP/1.1" 200 21 "-" "curl/7.76.1" "-"

# attach: create an interactive Pod (busybox is a lightweight Linux image) and go straight to its command line

[root@master ~]# kubectl delete pods testpod

[root@master ~]# kubectl run testpod -it --image busyboxplus

All commands and output from this session will be recorded in container logs, including credentials and sensitive information passed through the command prompt.

If you don't see a command prompt, try pressing enter.

[ root@testpod:/ ]$

[ root@testpod:/ ]$

[ root@testpod:/ ]$ (press Ctrl+P, then Ctrl+Q, to detach and leave the Pod running in the background)

Session ended, resume using 'kubectl attach testpod -c testpod -i -t' command when the pod is running

# Reattach to the detached Pod

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-68d8574cb-5zjjv 1/1 Running 0 10m

test-68d8574cb-7bf6n 1/1 Running 0 10m

test-68d8574cb-m8qnl 1/1 Running 0 10m

test-68d8574cb-nxm5r 1/1 Running 0 10m

testpod 1/1 Running 1 (90s ago) 114s

[root@master ~]# kubectl attach pods/testpod -it

All commands and output from this session will be recorded in container logs, including credentials and sensitive information passed through the command prompt.

If you don't see a command prompt, try pressing enter.

[ root@testpod:/ ]$

[ root@testpod:/ ]$ exit

Session ended, resume using 'kubectl attach testpod -c testpod -i -t' command when the pod is running

# Enter a running Pod (the most common case): no new Pod is created, you go straight into a container of an existing Pod

[root@master ~]# kubectl exec -it pods/testpod -c testpod -- /bin/sh

/bin/sh: shopt: not found

[ root@testpod:/ ]$

[ root@testpod:/ ]$

4. Scaling and label operations

- Scaling: adjust the number of Pods a Deployment manages.

- Labels: attach key-value labels to Pods for filtering and management.

bash

[root@master ~]# vim testpod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: testpod
  name: testpod
spec:
  containers:
  - image: nginx:latest
    name: web1
    ports:
    - containerPort: 80
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 3000

[root@master ~]# kubectl cp testpod.yml testpod:/ -c testpod

[root@master ~]# kubectl exec -it pods/testpod -c testpod -- /bin/sh

/ #

/ # ls

bin etc lib proc sys tmp var

dev home lib64 root testpod.yml usr

# Scale up

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-68d8574cb-8xjdv 1/1 Running 0 14m

test-68d8574cb-b9p9x 1/1 Running 0 14m

test-68d8574cb-lb9gq 1/1 Running 0 14m

test-68d8574cb-xkd56 1/1 Running 0 14m

# Scale the test Deployment to 6 Pods

[root@master ~]# kubectl scale deployment test --replicas 6

deployment.apps/test scaled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-68d8574cb-5kbgs 1/1 Running 0 2s

test-68d8574cb-7nnmn 1/1 Running 0 2s

test-68d8574cb-8xjdv 1/1 Running 0 15m

test-68d8574cb-b9p9x 1/1 Running 0 15m

test-68d8574cb-lb9gq 1/1 Running 0 15m

test-68d8574cb-xkd56 1/1 Running 0 15m

# Scale down to 1; the surplus Pods are deleted.

[root@master ~]# kubectl scale deployment test --replicas 1

deployment.apps/test scaled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-68d8574cb-5kbgs 0/1 Completed 0 26s

test-68d8574cb-8xjdv 0/1 Completed 0 15m

test-68d8574cb-lb9gq 1/1 Running 0 15m

test-68d8574cb-xkd56 0/1 Completed 0 15m

testpod 1/1 Running 1 (6m54s ago) 8m12s

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

test-68d8574cb-lb9gq 1/1 Running 0 15m

testpod 1/1 Running 1 (6m56s ago) 8m14s

[root@master ~]# kubectl get pods --show-labels # show the Pods' labels

NAME READY STATUS RESTARTS AGE LABELS

test-68d8574cb-lb9gq 1/1 Running 0 16m app=test,pod-template-hash=68d8574cb

testpod 1/1 Running 1 (7m54s ago) 9m12s run=testpod

[root@master ~]# kubectl label pods testpod name=lee

pod/testpod labeled

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

test-68d8574cb-lb9gq 1/1 Running 0 17m app=test,pod-template-hash=68d8574cb

testpod 1/1 Running 1 (8m23s ago) 9m41s name=lee,run=testpod

[root@master ~]# kubectl label pods testpod name-

pod/testpod unlabeled

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

test-68d8574cb-lb9gq 1/1 Running 0 18m app=test,pod-template-hash=68d8574cb

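testpod 1/1 Running 1 (9m10s ago) 10m run=testpod

VIII. Working with Pods

1. Standalone (self-managed) Pods

Create a Pod directly, with no controller managing it, to understand the Pod creation states and failures such as a failed image pull.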

bash

[root@master pod]# kubectl run myappv2 --image myapp:v2 --port 80

pod/myappv2 created

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ContainerCreating 0 8s # being created

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ErrImagePull 0 20s # image pull failed

[root@master ~]# ls

anaconda-ks.cfg kube-flannel.yml pod

cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm testpod.yml

flannel-0.28.1.tar myapp.tar.gz test.yml

[root@master ~]# docker load -i myapp.tar.gz

Loaded image: timinglee/myapp:v1

Loaded image: timinglee/myapp:v2

[root@master ~]# docker tag timinglee/myapp:v2 reg.timinglee.org/library/myapp:v2

[root@master ~]# docker push reg.timinglee.org/library/myapp:v2

The push refers to repository [reg.timinglee.org/library/myapp]

05a9e65e2d53: Layer already exists

68695a6cfd7d: Layer already exists

c1dc81a64903: Layer already exists

8460a579ab63: Layer already exists

d39d92664027: Layer already exists

v2: digest: sha256:5f4afc8302ade316fc47c99ee1d41f8ba94dbe7e3e7747dd87215a15429b9102 size: 1362

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 0/1 ImagePullBackOff 0 3m48s # backing off before retrying the image pull

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

myappv2 1/1 Running 0 4m20s

[root@master pod]# kubectl delete pods myappv2

pod "myappv2" deleted from default namespace

[root@master pod]# kubectl get pods

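No resources found in default namespace.

2. Managing Pods with a controller

Use a Deployment to manage Pods; it supports scaling, version updates, and rollbacks.

- A Deployment maintains the desired number of Pods automatically: if a Pod dies, a replacement is created.

- Version updates and rollbacks are core Deployment features, which makes it a good fit for production.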
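The first point above can be pictured as a reconcile step: compare the number of Pods that exist with the number requested, then create or delete the difference. The sketch below is a toy model only, not the real controller code; the function name `reconcile` is made up for illustration.

```shell
# Toy model of replica reconciliation (illustrative, not Kubernetes source code).
# reconcile CURRENT DESIRED prints the action needed to reach DESIRED Pods.
reconcile() {
  local current="$1" desired="$2"
  if [ "$current" -lt "$desired" ]; then
    echo "create $((desired - current))"
  elif [ "$current" -gt "$desired" ]; then
    echo "delete $((current - desired))"
  else
    echo "nothing to do"
  fi
}

reconcile 1 2   # scaling out with --replicas 2
reconcile 2 1   # scaling back in with --replicas 1
reconcile 0 1   # the only Pod died, so one replacement is needed
```

The same comparison explains both `kubectl scale` and self-healing: in each case only the gap between the current and the desired count is acted on.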

# Create a Deployment that manages myapp:v2 with 1 Pod.

[root@master pod]# kubectl create deployment webcluster --image myapp:v2 --replicas 1

deployment.apps/webcluster created

[root@master pod]# kubectl get deployments.apps -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

webcluster 1/1 1 1 14s myapp myapp:v2 app=webcluster

# Scale up and down freely

[root@master pod]# kubectl scale deployment webcluster --replicas 2 # scale out to 2 Pods

deployment.apps/webcluster scaled

[root@master pod]# kubectl scale deployment webcluster --replicas 1 # scale back to 1

[root@master pod]# kubectl label pods webcluster-6c8b4bb9d7-jsjws app-

pod/webcluster-6c8b4bb9d7-jsjws unlabeled

[root@master pod]# kubectl label pods webcluster-6c8b4bb9d7-jsjws app=webcluster

pod/webcluster-6c8b4bb9d7-jsjws labeled
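The transcript does not show the Pod list after the two label commands above. The expected effect, as a hedged reading, is that a Pod without the `app=webcluster` label no longer matches the Deployment's selector, so the controller stops counting it and creates a replacement. Once the label is restored, the Pod matches again and the surplus replica is removed. The toy function below (the name `matches_selector` is hypothetical) illustrates only the matching rule for a single key=value selector.

```shell
# matches_selector LABELS SELECTOR
# LABELS is a comma-separated list such as "app=webcluster,pod-template-hash=6c8b4bb9d7".
# SELECTOR is one key=value pair. Returns 0 when that exact pair is present.
matches_selector() {
  case ",$1," in
    *",$2,"*) return 0 ;;
    *) return 1 ;;
  esac
}

labels="app=webcluster,pod-template-hash=6c8b4bb9d7"
if matches_selector "$labels" "app=webcluster"; then
  echo "Pod is selected by the Deployment"
fi

labels="pod-template-hash=6c8b4bb9d7"   # state after: kubectl label pods ... app-
if ! matches_selector "$labels" "app=webcluster"; then
  echo "Pod is no longer selected"
fi
```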

# Expose the Deployment (creates a Service whose virtual IP fronts the Pods)

[root@master pod]# kubectl expose deployment webcluster --port 80 --target-port 80

[root@master pod]# kubectl describe svc webcluster | tail -n 10

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.98.36.168

IPs: 10.98.36.168

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.1.12:80

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

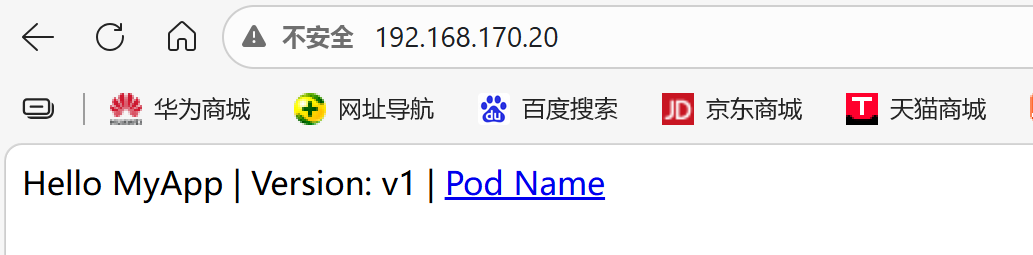

[root@master pod]# curl 10.98.36.168

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

# Update the image version

[root@master pod]# kubectl set image deployments webcluster myapp=myapp:v1

deployment.apps/webcluster image updated

[root@master pod]# curl 10.98.36.168

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master pod]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION CHANGE-CAUSE

1 <none>

2 <none>

# Roll back to an earlier revision

[root@master pod]# kubectl rollout undo deployment webcluster --to-revision 1

deployment.apps/webcluster rolled back

[root@master pod]# curl 10.98.36.168

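Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

3. Deploying applications from YAML files

Define Pods in YAML files, covering single-container and multi-container Pods, and see how the containers in a Pod share one network.

Running a single container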

[root@master ~]# kubectl get deployments.apps -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

webcluster 1/1 1 1 16s myapp myapp:v2 app=webcluster

[root@master ~]# kubectl scale deployment webcluster --replicas 2

deployment.apps/webcluster scaled

[root@master ~]# kubectl scale deployment webcluster --replicas 1

deployment.apps/webcluster scaled

[root@master pod]# kubectl run lee1 --image myapp:v1 --dry-run=client -o yaml > 1test.yml

[root@master pod]# vim 1test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    name: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1

[root@master pod]# kubectl apply -f 1test.yml

pod/lee1 created

[root@master ~]# kubectl describe pods

Name: lee1

Namespace: default

Priority: 0

Service Account: default

Node: node2/192.168.170.20

Start Time: Thu, 02 Apr 2026 21:20:33 +0800

Labels: name=lee1

Annotations: <none>

Status: Running

IP: 10.244.2.17

IPs:

IP: 10.244.2.17

Containers:

myappv1:

Container ID: docker://4b006b20337fc8bf54478b4392578466322237d53edf017c655da02adea39e19

Image: myapp:v1

Image ID: docker-pullable://myapp@sha256:9eeca44ba2d410e54fccc54cbe9c021802aa8b9836a0bcf3d3229354e4c8870e

Port: <none>

Host Port: <none>

State: Running

Started: Thu, 02 Apr 2026 21:20:34 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-svwln (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-svwln:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

Optional: false

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 60s default-scheduler Successfully assigned default/lee1 to node2

Normal Pulled 59s kubelet spec.containers{myappv1}: Container image "myapp:v1" already present on machine and can be accessed by the pod

Normal Created 59s kubelet spec.containers{myappv1}: Container created

Normal Started 59s kubelet spec.containers{myappv1}: Container started

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 1/1 Running 0 78s

[root@master pod]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 82s 10.244.2.23 node2 <none> <none>

[root@master pod]# kubectl delete -f 1test.yml

pod "lee1" deleted from default namespace

Running multiple containers

[root@master pod]# cp 1test.yml 2test.yml

[root@master pod]# vim 2test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

[root@master pod]# kubectl apply -f 2test.yml

pod/lee1 created

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 2/2 Running 0 4s

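[root@master pod]# kubectl delete -f 2test.yml --force # force deletion

Understanding network sharing inside a Pod

- All containers in a multi-container Pod share one network namespace, so they can reach each other over localhost.

- From inside the busybox container, curl localhost reaches the myapp container in the same Pod.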

[root@master pod]# cp 2test.yml 3test.yml

[root@master pod]# vim 3test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
  - image: busyboxplus:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

[root@master ~]# kubectl apply -f 3test.yml

pod/lee1 created

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

lee1 2/2 Running 0 6s

[root@master ~]# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

/bin/sh: shopt: not found

[ root@lee1:/ ]$ curl localhost

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Port mapping

Map a container port to a port on the host node so that the Pod can be reached from outside the cluster.

[root@master pod]# cp 1test.yml 4test.yml

[root@master pod]# vim 4test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

[root@master pod]# kubectl apply -f 4test.yml

pod/lee1 created

[root@master pod]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 7s 10.244.2.31 node2 <none> <none>

[root@master pod]# curl 192.168.170.20

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

Choosing the node to run on

Pin the Pod to a specific node with nodeSelector.

[root@master pod]# cp 4test.yml 5test.yml

[root@master pod]# vim 5test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  nodeSelector:
    kubernetes.io/hostname: node1
  containers:
  - image: myapp:v1
    name: myappv1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

[root@master pod]# kubectl apply -f 5test.yml

pod/lee1 created

[root@master pod]# kubectl get pods -o wide # the Pod is now scheduled on node1

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lee1 1/1 Running 0 5s 10.244.1.14 node1 <none> <none>

Sharing the host network

The Pod uses the host node's network stack directly instead of the Kubernetes Pod network.

[root@master pod]# cp 5test.yml 6test.yml

[root@master pod]# vim 6test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  hostNetwork: true
  nodeSelector:
    kubernetes.io/hostname: node1
  containers:
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 1000

[root@master pod]# kubectl apply -f 6test.yml

pod/lee1 created

[root@master pod]# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

/ #

/ # ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq qlen 1000

link/ether 00:0c:29:67:23:76 brd ff:ff:ff:ff:ff:ff

inet 192.168.170.10/24 brd 192.168.170.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet6 fe80::7a35:2bf3:8ff4:9419/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue

link/ether 3a:0c:33:9f:d9:36 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue

link/ether 06:c7:70:fe:6f:e6 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.0/32 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::4c7:70ff:fefe:6fe6/64 scope link

valid_lft forever preferred_lft forever

5: cni0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue qlen 1000

link/ether 7a:22:fc:84:3f:e2 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.1/24 brd 10.244.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::7822:fcff:fe84:3fe2/64 scope link

valid_lft forever preferred_lft forever

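/ #

IX. Pod lifecycle

1. Init containers

Init containers run before the main containers of a Pod start. Each one must finish successfully before the main containers are started (for example, to wait until a dependency is ready).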
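The waiting logic used by the init container in this section can be tried locally before putting it in a manifest. The sketch below mirrors the `until test -e` loop but adds a retry limit, which the original loop does not have. The function name `wait_for_file` and the temporary marker path are assumptions made for illustration.

```shell
# wait_for_file PATH INTERVAL MAX_TRIES
# Polls until PATH exists, like the init container's `until test -e /testfile` loop,
# but gives up after MAX_TRIES checks instead of waiting forever.
wait_for_file() {
  local path="$1" interval="$2" max_tries="$3" tries=0
  until test -e "$path"; do
    tries=$((tries + 1))
    if [ "$tries" -ge "$max_tries" ]; then
      echo "gave up waiting for $path"
      return 1
    fi
    echo "waiting for $path"
    sleep "$interval"
  done
  echo "$path is present"
}

marker="$(mktemp -u)"            # hypothetical stand-in for /testfile
( sleep 1; touch "$marker" ) &   # plays the role of `touch /testfile` run via kubectl exec
wait_for_file "$marker" 1 10
wait                             # reap the background helper
rm -f "$marker"
```

A zero exit status corresponds to the init container succeeding, which is the point at which the main containers are allowed to start.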

[root@master pod]# cp 1test.yml init.yml

[root@master pod]# vim init.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  # The init container keeps checking whether /testfile exists; while it does not, it prints a waiting message every 2 seconds.
  initContainers:
  - name: init-myservice
    image: busybox
    command: ["sh","-c","until test -e /testfile;do echo waiting for myservice; sleep 2;done"]
  containers:
  - image: myapp:v1
    name: myappv1

[root@master pod]# kubectl apply -f init.yml

pod/lee1 created

[root@master pod]# watch -n 1 kubectl get pods # refresh the Pod status every second

NAME READY STATUS RESTARTS AGE

lee1 0/1 Init:0/1 0 3s

[root@master pod]# kubectl exec -it pods/lee1 -c init-myservice -- /bin/sh

/ #

/ #

[root@master pod]# kubectl exec -it pods/lee1 -c init-myservice -- /bin/sh

/ #

/ # touch /testfile

/ # command terminated with exit code 137

[root@master pod]# kubectl get pods

NAME READY STATUS RESTARTS AGE

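lee1 1/1 Running 0 2m32s

2. livenessProbe (liveness probe)

A liveness probe checks whether a container is still alive. If the probe fails, Kubernetes restarts the container (for example, nginx has stopped, so the container is restarted automatically).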
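The edited contents of liveness.yml are not shown in this section, so the following is only a sketch of what a Deployment with a liveness probe could look like. The `tcpSocket` check on port 80 and the timing values are assumptions chosen to mirror the readiness example later on, and the helper name `liveness_sketch` is hypothetical.

```shell
# Prints a Deployment manifest with a livenessProbe.
# The probe type (tcpSocket), port and timings are illustrative assumptions,
# not the contents of the original liveness.yml.
liveness_sketch() {
  cat <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 1
  selector:
    matchLabels:
      app: webcluster
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        livenessProbe:
          tcpSocket:
            port: 80
          initialDelaySeconds: 3
          periodSeconds: 3
          timeoutSeconds: 1
EOF
}

liveness_sketch                          # print the manifest for inspection
# liveness_sketch | kubectl apply -f -   # apply it (requires access to a cluster)
```

With a probe like this, the kubelet restarts the container once the port stops accepting connections, and the RESTARTS column of `kubectl get pods` increases.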

[root@master pod]# kubectl create deployment webcluster --image myapp:v1 --replicas 1 --dry-run=client -o yaml > liveness.yml

[root@master pod]# kubectl expose deployment webcluster --port 80 --target-port 80 --dry-run=client -o yaml >> liveness.yml

[root@master pod]# kubectl delete -f liveness.yml

deployment.apps "webcluster" deleted from default namespace

Error from server (NotFound): error when deleting "liveness.yml": services "webcluster" not found

[root@master pod]# kubectl apply -f liveness.yml

deployment.apps/webcluster created

service/webcluster created

# Test the liveness probe

[root@master pod]# watch -n 1 "kubectl get pods ;kubectl describe svc webcluster | tail -n 10"

NAME READY STATUS RESTARTS AGE

webcluster-584fddd575-4ttz9 1/1 Running 3 (2s ago) 92s

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.105.47.234

IPs: 10.105.47.234

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.2.36:80

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

[root@master pod]# kubectl exec -it pods/webcluster-7fd94cc55b-pgdx6 -c myapp -- /bin/sh

/ # nginx -s stop

2026/03/29 08:50:02 [notice] 59#59: signal process started

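/ # command terminated with exit code 137

3. readinessProbe (readiness probe)

A readiness probe checks whether a container is ready to serve traffic. If the probe fails, the Pod is removed from the Service's Endpoints, so no requests are sent to it.

- The readiness probe ensures that only Pods able to serve receive requests.

- Difference from the liveness probe: a failed liveness probe restarts the container, whereas a failed readiness probe only takes the Pod out of rotation without restarting it.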
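The difference between the two probes can be summarised as a small decision function. This is a toy model rather than kubelet code: `probe_action` is a made-up name, and the default threshold of 3 corresponds to the Kubernetes default for `failureThreshold`.

```shell
# probe_action KIND FAILURES [THRESHOLD]
# KIND is "liveness" or "readiness"; FAILURES is the number of consecutive failed probes.
# THRESHOLD defaults to 3, the Kubernetes default for failureThreshold.
probe_action() {
  local kind="$1" failures="$2" threshold="${3:-3}"
  if [ "$failures" -lt "$threshold" ]; then
    echo "no action"
  elif [ "$kind" = "liveness" ]; then
    echo "restart container"
  else
    echo "remove Pod from Service endpoints"
  fi
}

probe_action liveness 3    # threshold reached: the container is restarted
probe_action readiness 3   # threshold reached: the Pod only stops receiving traffic
probe_action readiness 1   # below the threshold: nothing happens yet
```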

[root@master pod]# cp liveness.yml ReadinessProbe.yml

[root@master pod]# vim ReadinessProbe.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 1
  selector:
    matchLabels:
      app: webcluster
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        readinessProbe:
          httpGet:
            path: /test.html        # probe whether /test.html can be fetched
            port: 80
          initialDelaySeconds: 1    # start probing 1 second after the container starts
          periodSeconds: 3          # probe every 3 seconds
          timeoutSeconds: 1
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: webcluster

[root@master pod]# kubectl apply -f ReadinessProbe.yml

deployment.apps/webcluster configured

service/webcluster unchanged

# Monitor the Pod and the Service endpoints

[root@master pod]# watch -n 1 "kubectl get pods ;kubectl describe svc webcluster | tail -n 10"

NAME READY STATUS RESTARTS AGE

webcluster-6bc85dfc84-zk4mn 0/1 Running 0 7s

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.107.142.60

IPs: 10.107.142.60

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints:

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>

# Enter the container and create test.html under the nginx document root (/usr/share/nginx/html). Once the probe passes, READY becomes 1/1 and the Service adds the Pod's IP to its Endpoints.

[root@master pod]# kubectl exec -it pods/webcluster-6bc85dfc84-zk4mn -c myapp -- /bin/sh

/ # echo timinglee > /usr/share/nginx/html/test.html

/ # rm -fr /usr/share/nginx/html/test.html

/ # echo timinglee > /usr/share/nginx/html/test.html

/ #