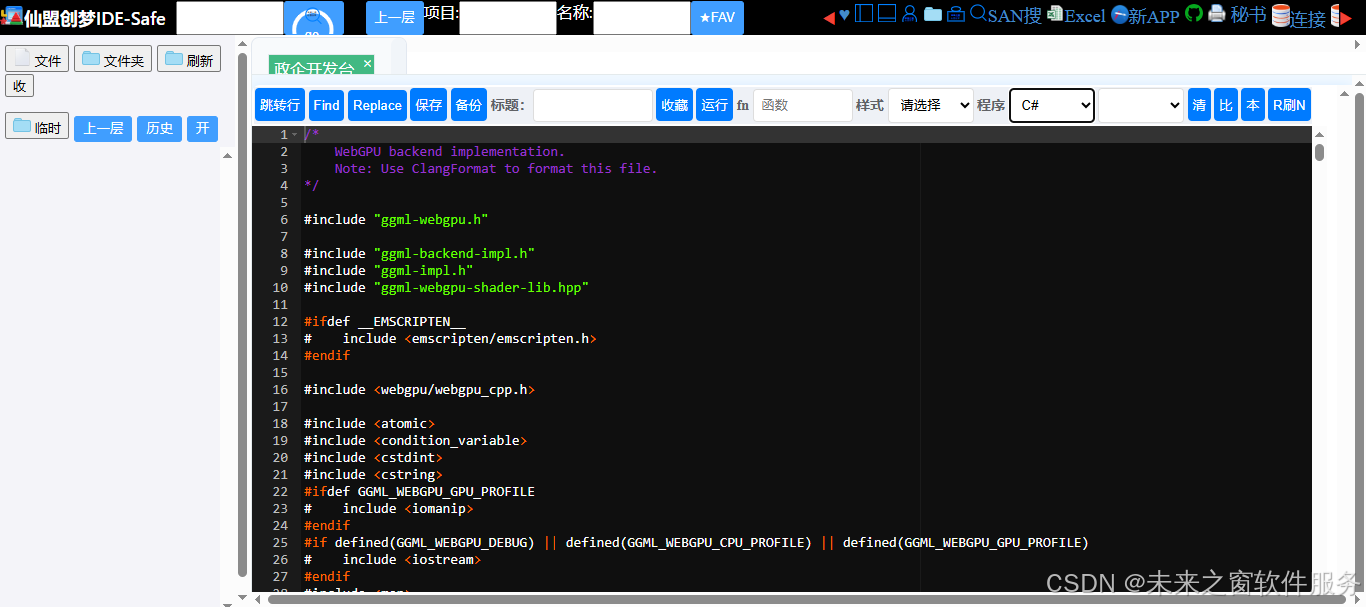

ggml-webgpu

Core code

Full code

/*
    WebGPU backend implementation.
    Note: Use ClangFormat to format this file.
*/

#include "ggml-webgpu.h"
#include "ggml-backend-impl.h"
#include "ggml-impl.h"
#include "ggml-webgpu-shader-lib.hpp"

#ifdef __EMSCRIPTEN__
#    include <emscripten/emscripten.h>
#endif

#include <webgpu/webgpu_cpp.h>

#include <algorithm>  // std::max, std::remove_if
#include <atomic>
#include <chrono>     // used by the CPU profiling macros
#include <condition_variable>
#include <cstdint>
#include <cstring>
#ifdef GGML_WEBGPU_GPU_PROFILE
#    include <iomanip>
#endif
#if defined(GGML_WEBGPU_DEBUG) || defined(GGML_WEBGPU_CPU_PROFILE) || defined(GGML_WEBGPU_GPU_PROFILE)
#    include <iostream>
#endif
#include <map>
#include <memory>
#include <mutex>
#include <optional>
#include <string>
#include <unordered_map>  // profiling maps
#include <utility>
#include <vector>

#define ROUNDUP_POW2(x, pow2) (((x) + ((pow2) - 1)) & ~((pow2) - 1))
#define CEIL_DIV(M, N)        (((M) + (N) - 1) / (N))

// Return a rectangular grid of workgroups with minimal over-provisioned workgroups.
// Assumes that the total number of workgroups does not exceed max_per_dim^2.
static inline void compute_2d_workgroups(uint32_t total_wg, uint32_t max_per_dim, uint32_t & wg_x, uint32_t & wg_y) {
    wg_y = std::max(1u, CEIL_DIV(total_wg, max_per_dim));
    wg_x = CEIL_DIV(total_wg, wg_y);
}
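
// Worked example (illustrative values): with max_per_dim = 65535 (the WebGPU default for
// maxComputeWorkgroupsPerDimension) and total_wg = 100001,
//   wg_y = max(1, CEIL_DIV(100001, 65535)) = 2
//   wg_x = CEIL_DIV(100001, 2)             = 50001
// so 2 x 50001 = 100002 workgroups are dispatched and only one is over-provisioned.
// For total_wg <= max_per_dim the grid degenerates to wg_y = 1, wg_x = total_wg.
// Likewise ROUNDUP_POW2(13, 4) = 16 and CEIL_DIV(13, 4) = 4.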

#ifdef GGML_WEBGPU_DEBUG
#    define WEBGPU_LOG_DEBUG(msg)  std::cout << msg << std::endl
#    define WEBGPU_DEBUG_BUF_ELEMS 512
#else
#    define WEBGPU_LOG_DEBUG(msg) ((void) 0)
#endif  // GGML_WEBGPU_DEBUG

#ifdef GGML_WEBGPU_CPU_PROFILE
// total timing (aggregated)
#    define WEBGPU_CPU_PROFILE_TOTAL_START(id) auto cpu_total_start_##id = std::chrono::high_resolution_clock::now();

#    define WEBGPU_CPU_PROFILE_TOTAL_END(id, ctx)                                                         \
        auto   cpu_total_end_##id = std::chrono::high_resolution_clock::now();                           \
        double cpu_total_time_##id =                                                                      \
            std::chrono::duration<double, std::milli>(cpu_total_end_##id - cpu_total_start_##id).count(); \
        (ctx)->cpu_time_ms[#id] += cpu_total_time_##id;

// fine-grained timing (not included in totals)
#    define WEBGPU_CPU_PROFILE_DETAIL_START(id) auto cpu_detail_start_##id = std::chrono::high_resolution_clock::now();

#    define WEBGPU_CPU_PROFILE_DETAIL_END(id, ctx)                                                          \
        auto   cpu_detail_end_##id = std::chrono::high_resolution_clock::now();                            \
        double cpu_detail_time_##id =                                                                       \
            std::chrono::duration<double, std::milli>(cpu_detail_end_##id - cpu_detail_start_##id).count(); \
        (ctx)->cpu_detail_ms[#id] += cpu_detail_time_##id;
#else
#    define WEBGPU_CPU_PROFILE_TOTAL_START(id)
#    define WEBGPU_CPU_PROFILE_TOTAL_END(id, ctx)
#    define WEBGPU_CPU_PROFILE_DETAIL_START(id)
#    define WEBGPU_CPU_PROFILE_DETAIL_END(id, ctx)
#endif  // GGML_WEBGPU_CPU_PROFILE

#ifdef GGML_WEBGPU_GPU_PROFILE
#    define WEBGPU_NUM_TIMESTAMP_QUERY_BUFS       32
#    define WEBGPU_TIMESTAMP_QUERY_BUF_SIZE_BYTES 16  // i.e. enough for two 8-byte timestamps
#endif

/* Constants */

#define WEBGPU_NUM_PARAM_BUFS            96u
#define WEBGPU_COMMAND_SUBMIT_BATCH_SIZE 32u
#define WEBGPU_WAIT_ANY_TIMEOUT_MS       0
// Maximum number of in-flight submissions per thread, to avoid exhausting the
// parameter buffer pool
#define WEBGPU_MAX_INFLIGHT_SUBS_PER_THREAD  (WEBGPU_NUM_PARAM_BUFS / WEBGPU_COMMAND_SUBMIT_BATCH_SIZE)
#define WEBGPU_PARAMS_BUF_SIZE_BYTES         128  // enough for 32 parameters
#define WEBGPU_SET_ROWS_ERROR_BUF_SIZE_BYTES 4
#define WEBGPU_STORAGE_BUF_BINDING_MULT      4  // a storage buffer binding size must be a multiple of 4

// For operations which process a row in parallel, this seems like a reasonable
// default
#define WEBGPU_ROW_SPLIT_WG_SIZE 64

// Track https://github.com/gpuweb/gpuweb/issues/5315 for fixes to
// implementations so this can be removed, necessary only for get_rows right now
#define WEBGPU_MAX_WG_SIZE 288

/* End Constants */
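
// Worked example of the derived limits above:
//   WEBGPU_MAX_INFLIGHT_SUBS_PER_THREAD = 96 / 32 = 3, so once 3 submissions are pending,
//   ggml_backend_webgpu_wait() blocks on the oldest ones until fewer than 3 remain.
//   WEBGPU_PARAMS_BUF_SIZE_BYTES = 128 bytes = 32 parameters x sizeof(uint32_t).
// This only keeps a 96-buffer param pool from running dry if each submission uses at most
// WEBGPU_COMMAND_SUBMIT_BATCH_SIZE parameter buffers (an assumption about callers not shown here).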

// This is a "fake" base pointer, since WebGPU buffers do not have pointers to
// their locations.
static void * const webgpu_ptr_base = (void *) (uintptr_t) 0x1000;  // NOLINT

// Always returns the base offset of a tensor, regardless of views.
static uint64_t webgpu_tensor_offset(const ggml_tensor * tensor) {
    if (tensor->view_src) {
        return (uint8_t *) tensor->view_src->data - (uint8_t *) webgpu_ptr_base;
    }
    return (uint8_t *) tensor->data - (uint8_t *) webgpu_ptr_base;
}
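
// Worked example (illustrative addresses): a tensor whose data pointer is
// webgpu_ptr_base + 0x200 maps to byte offset 0x200 (512) in its wgpu::Buffer. A view whose
// view_src is that tensor also returns 512 here, because view_src->data is used;
// ggml_webgpu_tensor_offset() further below then adds view_offs (e.g. 512 + 64 = 576).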

/* Struct definitions */

// Forward reference
static void ggml_webgpu_create_buffer(wgpu::Device &    device,
                                      wgpu::Buffer &    buffer,
                                      size_t            size,
                                      wgpu::BufferUsage usage,
                                      const char *      label);

// Holds a pool of parameter buffers for WebGPU operations
struct webgpu_buf_pool {
    std::vector<wgpu::Buffer> free;

    // The pool must be synchronized because
    // 1. The memset pool is shared globally by every ggml buffer,
    //    since allocating a pool per ggml buffer would consume too much memory.
    // 2. For the per-thread buffer pools in webgpu_context,
    //    buffers are allocated and freed in Dawn callbacks,
    //    which can run on a different thread than the calling thread.
    std::mutex              mutex;
    std::condition_variable cv;
    size_t                  cur_pool_size;
    size_t                  max_pool_size;
    wgpu::Device            device;
    wgpu::BufferUsage       dev_buf_usage;
    size_t                  buf_size;
    bool                    should_grow;

    void init(wgpu::Device      device,
              int               num_bufs,
              size_t            buf_size,
              wgpu::BufferUsage dev_buf_usage,
              bool              should_grow   = false,
              size_t            max_pool_size = WEBGPU_NUM_PARAM_BUFS * 2) {
        this->max_pool_size = max_pool_size;
        this->cur_pool_size = num_bufs;
        this->device        = device;
        this->dev_buf_usage = dev_buf_usage;
        this->buf_size      = buf_size;
        this->should_grow   = should_grow;
        for (int i = 0; i < num_bufs; i++) {
            wgpu::Buffer dev_buf;
            ggml_webgpu_create_buffer(device, dev_buf, buf_size, dev_buf_usage, "ggml_webgpu_dev_pool_buf");
            free.push_back(dev_buf);
        }
    }

    wgpu::Buffer alloc_bufs() {
        std::unique_lock<std::mutex> lock(mutex);
        if (!free.empty()) {
            wgpu::Buffer buf = free.back();
            free.pop_back();
            return buf;
        }

        // Try growing the pool if no free buffers
        if (free.empty() && cur_pool_size < max_pool_size && should_grow) {
            cur_pool_size++;
            wgpu::Buffer dev_buf;
            ggml_webgpu_create_buffer(device, dev_buf, buf_size, dev_buf_usage, "ggml_webgpu_dev_pool_buf");

            if (!dev_buf) {
                GGML_ABORT("webgpu_buf_pool: failed to allocate buffers");
            }
            return dev_buf;
        }

        cv.wait(lock, [this] { return !free.empty(); });
        wgpu::Buffer buf = free.back();
        free.pop_back();
        return buf;
    }

    void free_bufs(std::vector<wgpu::Buffer> bufs) {
        std::lock_guard<std::mutex> lock(mutex);
        free.insert(free.end(), bufs.begin(), bufs.end());
        cv.notify_all();
    }

    void cleanup() {
        std::lock_guard<std::mutex> lock(mutex);
        for (auto & buf : free) {
            if (buf) {
                buf.Destroy();
            }
        }
        free.clear();
    }

    ~webgpu_buf_pool() { this->cleanup(); }
};
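
// Typical lifecycle of a pooled parameter buffer, as it appears in this file:
//   1. ggml_backend_webgpu_build_multi() calls alloc_bufs() once per kernel; this blocks on cv
//      when the pool is empty and growing is disabled or max_pool_size has been reached.
//   2. The kernel's uint32_t parameters are uploaded with queue.WriteBuffer(), and the buffer is
//      bound after the kernel's other bind group entries.
//   3. ggml_backend_webgpu_submit() returns a batch's buffers with free_bufs() from the
//      OnSubmittedWorkDone callback, which wakes any thread waiting in alloc_bufs().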

#ifdef GGML_WEBGPU_GPU_PROFILE
struct webgpu_gpu_profile_bufs {
    wgpu::Buffer   host_buf;
    wgpu::Buffer   dev_buf;
    wgpu::QuerySet query_set;
};

// Holds a pool of timestamp query buffers for GPU profiling
struct webgpu_gpu_profile_buf_pool {
    std::vector<webgpu_gpu_profile_bufs> free;

    std::mutex              mutex;
    std::condition_variable cv;

    void init(wgpu::Device      device,
              int               num_bufs,
              size_t            buf_size,
              wgpu::BufferUsage dev_buf_usage,
              wgpu::BufferUsage host_buf_usage) {
        for (int i = 0; i < num_bufs; i++) {
            wgpu::Buffer host_buf;
            wgpu::Buffer dev_buf;
            ggml_webgpu_create_buffer(device, host_buf, buf_size, host_buf_usage, "ggml_webgpu_host_profile_buf");
            ggml_webgpu_create_buffer(device, dev_buf, buf_size, dev_buf_usage, "ggml_webgpu_dev_profile_buf");
            // Create a query set for 2 timestamps
            wgpu::QuerySetDescriptor ts_query_set_desc = {};

            ts_query_set_desc.type      = wgpu::QueryType::Timestamp;
            ts_query_set_desc.count     = 2;
            wgpu::QuerySet ts_query_set = device.CreateQuerySet(&ts_query_set_desc);

            free.push_back({ host_buf, dev_buf, ts_query_set });
        }
    }

    webgpu_gpu_profile_bufs alloc_bufs() {
        std::unique_lock<std::mutex> lock(mutex);
        cv.wait(lock, [this] { return !free.empty(); });
        webgpu_gpu_profile_bufs bufs = free.back();
        free.pop_back();
        return bufs;
    }

    void free_bufs(std::vector<webgpu_gpu_profile_bufs> bufs) {
        std::lock_guard<std::mutex> lock(mutex);
        free.insert(free.end(), bufs.begin(), bufs.end());
        cv.notify_all();
    }

    void cleanup() {
        std::lock_guard<std::mutex> lock(mutex);
        for (auto & bufs : free) {
            bufs.host_buf.Destroy();
            bufs.dev_buf.Destroy();
            bufs.query_set.Destroy();
        }
        free.clear();
    }

    ~webgpu_gpu_profile_buf_pool() { this->cleanup(); }
};
#endif

struct webgpu_command {
    uint32_t                  num_kernels;
    wgpu::CommandBuffer       commands;
    std::vector<wgpu::Buffer> params_bufs;
#ifdef GGML_WEBGPU_GPU_PROFILE
    webgpu_gpu_profile_bufs timestamp_query_bufs;
    std::string             pipeline_name;
#endif
};

struct webgpu_capabilities {
    wgpu::Limits limits;
    bool         supports_subgroup_matrix = false;

    uint32_t sg_mat_m = 0;
    uint32_t sg_mat_n = 0;
    uint32_t sg_mat_k = 0;

    uint32_t subgroup_size     = 0;
    uint32_t max_subgroup_size = 0;
    size_t   memset_bytes_per_thread;
};

// Stores global webgpu members
struct webgpu_global_context_struct {
    wgpu::Instance instance;
    wgpu::Adapter  adapter;
    wgpu::Device   device;
    wgpu::Queue    queue;

    webgpu_capabilities capabilities;
    // Shared buffer to move data from device to host
    wgpu::Buffer        get_tensor_staging_buf;
    // Global mutex for pipeline and staging buffer, will be refactored to exclude pipeline caches.
    std::recursive_mutex mutex;

    webgpu_buf_pool                memset_buf_pool;
    std::map<int, webgpu_pipeline> memset_pipelines;  // variant or type index

#ifdef GGML_WEBGPU_CPU_PROFILE
    // Profiling: labeled CPU time in ms (total)
    std::unordered_map<std::string, double> cpu_time_ms;
    // Profiling: detailed CPU time in ms
    std::unordered_map<std::string, double> cpu_detail_ms;
#endif

#ifdef GGML_WEBGPU_GPU_PROFILE
    // Profiling: per-shader GPU time in ms
    std::unordered_map<std::string, double> shader_gpu_time_ms;
    // Profiling: pool of timestamp query buffers (one per operation)
    webgpu_gpu_profile_buf_pool             timestamp_query_buf_pool;
#endif

#ifdef GGML_WEBGPU_DEBUG
    wgpu::Buffer debug_host_buf;
    wgpu::Buffer debug_dev_buf;
#endif

    ~webgpu_global_context_struct() {
        if (this->get_tensor_staging_buf) {
            this->get_tensor_staging_buf.Destroy();
            this->get_tensor_staging_buf = nullptr;
        }
#ifdef GGML_WEBGPU_DEBUG
        if (this->debug_host_buf) {
            this->debug_host_buf.Destroy();
            this->debug_host_buf = nullptr;
        }
        if (this->debug_dev_buf) {
            this->debug_dev_buf.Destroy();
            this->debug_dev_buf = nullptr;
        }
#endif
    }
};

typedef std::shared_ptr<webgpu_global_context_struct> webgpu_global_context;

struct webgpu_submission {
    wgpu::FutureWaitInfo submit_done;
#ifdef GGML_WEBGPU_GPU_PROFILE
    std::vector<wgpu::FutureWaitInfo> profile_futures;
#endif
};

// All the base objects needed to run operations on a WebGPU device
struct webgpu_context_struct {
    // Points to global instances owned by ggml_backend_webgpu_reg_context
    webgpu_global_context global_ctx;

    std::unique_ptr<ggml_webgpu_shader_lib> shader_lib;

    webgpu_buf_pool param_buf_pool;
    wgpu::Buffer    set_rows_dev_error_buf;
    wgpu::Buffer    set_rows_host_error_buf;

    std::map<int, std::map<int, webgpu_pipeline>>                cpy_pipelines;       // src_type, dst_type
    std::map<int, webgpu_pipeline>                               rms_norm_pipelines;  // inplace
    std::map<int, std::map<int, std::map<int, webgpu_pipeline>>> rope_pipelines;      // type, ff, inplace
    std::map<int, std::map<int, std::map<int, webgpu_pipeline>>> glu_pipelines;       // glu_op, type, split
    std::map<int, std::map<int, std::map<int, webgpu_pipeline>>> soft_max_pipelines;  // mask_type, has_sink, inplace

    size_t memset_bytes_per_thread;
};

typedef std::shared_ptr<webgpu_context_struct> webgpu_context;

// Metadata required for the ggml backend registration/discovery interface
struct ggml_backend_webgpu_reg_context {
    // Since the Instance is a global entrypoint into the WebGPU API, it lives here
    webgpu_global_context webgpu_global_ctx;
    size_t                device_count;
    const char *          name;
};

// Per-device struct for the global logical device interface
struct ggml_backend_webgpu_device_context {
    webgpu_global_context webgpu_global_ctx;
    std::string           device_name;
    std::string           device_desc;
};

// Per-thread data required to actually run WebGPU operations in a backend instance
struct ggml_backend_webgpu_context {
    webgpu_context webgpu_ctx;
    std::string    name;
};

// Per-thread data related to buffers
struct ggml_backend_webgpu_buffer_context {
    wgpu::Buffer          buffer;
    std::string           label;
    webgpu_global_context global_ctx;

    ggml_backend_webgpu_buffer_context(wgpu::Buffer buf, std::string lbl, webgpu_global_context global_ctx_) :
        buffer(std::move(buf)),
        label(std::move(lbl)),
        global_ctx(std::move(global_ctx_)) {}
};

/* WebGPU object initializations */

static webgpu_pipeline ggml_webgpu_create_pipeline(wgpu::Device &                           device,
                                                   const char *                             shader_code,
                                                   const char *                             label,
                                                   const std::vector<wgpu::ConstantEntry> & constants = {}) {
    wgpu::ShaderSourceWGSL shader_source;
    shader_source.code = shader_code;

    wgpu::ShaderModuleDescriptor shader_desc;
    shader_desc.nextInChain = &shader_source;

    wgpu::ShaderModule shader_module = device.CreateShaderModule(&shader_desc);

    wgpu::ComputePipelineDescriptor pipeline_desc;
    pipeline_desc.label              = label;
    pipeline_desc.compute.module     = shader_module;
    pipeline_desc.compute.entryPoint = "main";   // Entry point in the WGSL code
    pipeline_desc.layout             = nullptr;  // nullptr means auto layout
    if (constants.size() > 0) {
        pipeline_desc.compute.constants     = constants.data();
        pipeline_desc.compute.constantCount = constants.size();
    }
    return { device.CreateComputePipeline(&pipeline_desc), label };
}

static void ggml_webgpu_create_buffer(wgpu::Device &    device,
                                      wgpu::Buffer &    buffer,
                                      size_t            size,
                                      wgpu::BufferUsage usage,
                                      const char *      label) {
    wgpu::BufferDescriptor buffer_desc;
    buffer_desc.size             = size;
    buffer_desc.usage            = usage;
    buffer_desc.label            = label;
    buffer_desc.mappedAtCreation = false;

    // TODO: error handling
    buffer = device.CreateBuffer(&buffer_desc);
}

/** End WebGPU object initializations */

/** WebGPU Actions */

static bool ggml_backend_webgpu_handle_wait_status(wgpu::WaitStatus status, bool allow_timeout = false) {
    switch (status) {
        case wgpu::WaitStatus::Success:
            return true;
        case wgpu::WaitStatus::TimedOut:
            if (allow_timeout) {
                return false;
            }
            GGML_LOG_ERROR("ggml_webgpu: WaitAny timed out unexpectedly\n");
            return false;
        case wgpu::WaitStatus::Error:
            GGML_LOG_ERROR("ggml_webgpu: WaitAny returned an error\n");
            return false;
        default:
            GGML_LOG_ERROR("ggml_webgpu: WaitAny returned an unknown status\n");
            return false;
    }
}

#ifdef GGML_WEBGPU_GPU_PROFILE
static void ggml_backend_webgpu_erase_completed_futures(std::vector<wgpu::FutureWaitInfo> & futures) {
    futures.erase(std::remove_if(futures.begin(), futures.end(),
                                 [](const wgpu::FutureWaitInfo & info) { return info.completed; }),
                  futures.end());
}

static void ggml_backend_webgpu_wait_profile_futures(webgpu_global_context &             ctx,
                                                     std::vector<wgpu::FutureWaitInfo> & futures,
                                                     bool                                block) {
    if (futures.empty()) {
        return;
    }

    uint64_t timeout_ms = block ? UINT64_MAX : 0;
    if (block) {
        while (!futures.empty()) {
            auto waitStatus = ctx->instance.WaitAny(futures.size(), futures.data(), timeout_ms);
            if (ggml_backend_webgpu_handle_wait_status(waitStatus)) {
                ggml_backend_webgpu_erase_completed_futures(futures);
            }
        }
    } else {
        auto waitStatus = ctx->instance.WaitAny(futures.size(), futures.data(), timeout_ms);
        if (ggml_backend_webgpu_handle_wait_status(waitStatus, true)) {
            ggml_backend_webgpu_erase_completed_futures(futures);
        }
    }
}
#endif

// Wait on in-flight submissions. The oldest submissions are always waited on until fewer than
// WEBGPU_MAX_INFLIGHT_SUBS_PER_THREAD remain. Then, if block is true, wait for all remaining
// work to finish; otherwise poll each remaining submission once and drop the completed ones.
static void ggml_backend_webgpu_wait(webgpu_global_context &          ctx,
                                     std::vector<webgpu_submission> & subs,
                                     bool                             block = true) {
    if (subs.empty()) {
        return;
    }

    // If we have too many in-flight submissions, wait on the oldest one first.
    while (subs.size() >= WEBGPU_MAX_INFLIGHT_SUBS_PER_THREAD) {
        auto waitStatus = ctx->instance.WaitAny(1, &subs[0].submit_done, UINT64_MAX);
        if (ggml_backend_webgpu_handle_wait_status(waitStatus)) {
#ifdef GGML_WEBGPU_GPU_PROFILE
            ggml_backend_webgpu_wait_profile_futures(ctx, subs[0].profile_futures, true);
#endif
            subs.erase(subs.begin());
        }
    }

    if (subs.empty()) {
        return;
    }

    if (block) {
        for (auto & sub : subs) {
            while (!sub.submit_done.completed) {
                auto waitStatus = ctx->instance.WaitAny(1, &sub.submit_done, UINT64_MAX);
                ggml_backend_webgpu_handle_wait_status(waitStatus);
            }
#ifdef GGML_WEBGPU_GPU_PROFILE
            ggml_backend_webgpu_wait_profile_futures(ctx, sub.profile_futures, true);
#endif
        }
        subs.clear();
    } else {
        // Poll each submit future once and remove completed submissions.
        for (auto sub = subs.begin(); sub != subs.end();) {
            auto waitStatus = ctx->instance.WaitAny(1, &sub->submit_done, 0);
            ggml_backend_webgpu_handle_wait_status(waitStatus, true);
#ifdef GGML_WEBGPU_GPU_PROFILE
            ggml_backend_webgpu_wait_profile_futures(ctx, sub->profile_futures, false);
            if (sub->submit_done.completed && sub->profile_futures.empty()) {
#else
            if (sub->submit_done.completed) {
#endif
                sub = subs.erase(sub);
            } else {
                ++sub;
            }
        }
    }
}

static void ggml_backend_webgpu_map_buffer(webgpu_global_context & ctx,
                                           wgpu::Buffer &          buffer,
                                           wgpu::MapMode           mode,
                                           size_t                  offset,
                                           size_t                  size) {
    ctx->instance.WaitAny(buffer.MapAsync(mode, offset, size, wgpu::CallbackMode::AllowSpontaneous,
                                          [](wgpu::MapAsyncStatus status, wgpu::StringView message) {
                                              if (status != wgpu::MapAsyncStatus::Success) {
                                                  // StringView is not guaranteed to be NUL-terminated
                                                  GGML_LOG_ERROR("ggml_webgpu: Failed to map buffer: %s\n",
                                                                 std::string(message).c_str());
                                              }
                                          }),
                          UINT64_MAX);
}

#ifdef GGML_WEBGPU_DEBUG
// This function adds debugging information to shaders, as WebGPU does not support printing directly.
// To use, add a bind group entry to the setup for the shader you are debugging, add the buffer and
// debug statements in the shader, and then call this function after encoding the commands and submitting them.
static void ggml_backend_webgpu_debug(webgpu_global_context & ctx) {
    wgpu::CommandEncoder encoder = ctx->device.CreateCommandEncoder();
    encoder.CopyBufferToBuffer(ctx->debug_dev_buf, 0, ctx->debug_host_buf, 0, ctx->debug_host_buf.GetSize());
    wgpu::CommandBuffer commands = encoder.Finish();
    ctx->queue.Submit(1, &commands);
    ggml_backend_webgpu_map_buffer(ctx, ctx->debug_host_buf, wgpu::MapMode::Read, 0, ctx->debug_host_buf.GetSize());
    const float * debug_data = (const float *) ctx->debug_host_buf.GetConstMappedRange();
    std::cout << "debug[0]: " << debug_data[0] << "\n";
    ctx->debug_host_buf.Unmap();
}
#endif

static webgpu_submission ggml_backend_webgpu_submit(webgpu_global_context &       ctx,
                                                    std::vector<webgpu_command> & commands,
                                                    webgpu_buf_pool &             param_buf_pool) {
    std::vector<wgpu::CommandBuffer> command_buffers;
    std::vector<wgpu::Buffer>        params_bufs;
    webgpu_submission                submission;
#ifdef GGML_WEBGPU_GPU_PROFILE
    std::vector<std::pair<std::string, webgpu_gpu_profile_bufs>> pipeline_name_and_ts_bufs;
#endif

    for (const auto & command : commands) {
        command_buffers.push_back(command.commands);
        params_bufs.insert(params_bufs.end(), command.params_bufs.begin(), command.params_bufs.end());
    }
    ctx->queue.Submit(command_buffers.size(), command_buffers.data());

    wgpu::Future p_f = ctx->queue.OnSubmittedWorkDone(
        wgpu::CallbackMode::AllowSpontaneous,
        [&param_buf_pool, params_bufs](wgpu::QueueWorkDoneStatus status, wgpu::StringView message) {
            if (status != wgpu::QueueWorkDoneStatus::Success) {
                GGML_LOG_ERROR("ggml_webgpu: Failed to submit commands: %s\n", std::string(message).c_str());
            }
            // Free the staged buffers
            param_buf_pool.free_bufs(params_bufs);
        });
    submission.submit_done = { p_f };

#ifdef GGML_WEBGPU_GPU_PROFILE
    for (const auto & command : commands) {
        auto label   = command.pipeline_name;
        auto ts_bufs = command.timestamp_query_bufs;

        wgpu::Future f = ts_bufs.host_buf.MapAsync(
            wgpu::MapMode::Read, 0, ts_bufs.host_buf.GetSize(), wgpu::CallbackMode::AllowSpontaneous,
            [ctx, ts_bufs, label](wgpu::MapAsyncStatus status, wgpu::StringView message) {
                if (status != wgpu::MapAsyncStatus::Success) {
                    GGML_LOG_ERROR("ggml_webgpu: Failed to map timestamp buffer: %s\n", std::string(message).c_str());
                } else {
                    const uint64_t * ts_data = (const uint64_t *) ts_bufs.host_buf.GetConstMappedRange();
                    // WebGPU timestamps are in ns; convert to ms
                    double elapsed_ms = double(ts_data[1] - ts_data[0]) * 1e-6;
                    ctx->shader_gpu_time_ms[label] += elapsed_ms;
                }
                // We can't unmap in here due to WebGPU reentrancy limitations.
                ctx->timestamp_query_buf_pool.free_bufs({ ts_bufs });
            });
        submission.profile_futures.push_back({ f });
    }
#endif
    return submission;
}

static webgpu_command ggml_backend_webgpu_build_multi(
    webgpu_global_context &                                ctx,
    webgpu_buf_pool &                                      param_buf_pool,
    const std::vector<webgpu_pipeline> &                   pipelines,
    const std::vector<std::vector<uint32_t>> &             params_list,
    const std::vector<std::vector<wgpu::BindGroupEntry>> & bind_group_entries_list,
    const std::vector<std::pair<uint32_t, uint32_t>> &     workgroups_list) {
    GGML_ASSERT(pipelines.size() == params_list.size());
    GGML_ASSERT(pipelines.size() == bind_group_entries_list.size());
    GGML_ASSERT(pipelines.size() == workgroups_list.size());

    std::vector<wgpu::Buffer>    params_bufs_list;
    std::vector<wgpu::BindGroup> bind_groups;

    for (size_t i = 0; i < pipelines.size(); i++) {
        wgpu::Buffer params_bufs = param_buf_pool.alloc_bufs();

        std::vector<wgpu::BindGroupEntry> entries            = bind_group_entries_list[i];
        uint32_t                          params_binding_num = entries.size();
        entries.push_back(
            { .binding = params_binding_num, .buffer = params_bufs, .offset = 0, .size = params_bufs.GetSize() });

        wgpu::BindGroupDescriptor bind_group_desc;
        bind_group_desc.layout     = pipelines[i].pipeline.GetBindGroupLayout(0);
        bind_group_desc.entryCount = entries.size();
        bind_group_desc.entries    = entries.data();
        bind_group_desc.label      = pipelines[i].name.c_str();
        bind_groups.push_back(ctx->device.CreateBindGroup(&bind_group_desc));

        params_bufs_list.push_back(params_bufs);
    }

    wgpu::CommandEncoder encoder = ctx->device.CreateCommandEncoder();
    for (size_t i = 0; i < params_bufs_list.size(); i++) {
        ctx->queue.WriteBuffer(params_bufs_list[i], 0, params_list[i].data(), params_list[i].size() * sizeof(uint32_t));
    }

#ifdef GGML_WEBGPU_GPU_PROFILE
    webgpu_gpu_profile_bufs ts_bufs = ctx->timestamp_query_buf_pool.alloc_bufs();
    if (ts_bufs.host_buf.GetMapState() == wgpu::BufferMapState::Mapped) {
        ts_bufs.host_buf.Unmap();
    }

    wgpu::PassTimestampWrites   ts_writes = { .querySet                  = ts_bufs.query_set,
                                              .beginningOfPassWriteIndex = 0,
                                              .endOfPassWriteIndex       = 1 };
    wgpu::ComputePassDescriptor pass_desc = { .timestampWrites = &ts_writes };
    wgpu::ComputePassEncoder    pass      = encoder.BeginComputePass(&pass_desc);
#else
    wgpu::ComputePassEncoder pass = encoder.BeginComputePass();
#endif
    for (size_t i = 0; i < pipelines.size(); i++) {
        pass.SetPipeline(pipelines[i].pipeline);
        pass.SetBindGroup(0, bind_groups[i]);
        pass.DispatchWorkgroups(workgroups_list[i].first, workgroups_list[i].second, 1);
    }
    pass.End();

#ifdef GGML_WEBGPU_GPU_PROFILE
    encoder.ResolveQuerySet(ts_bufs.query_set, 0, 2, ts_bufs.dev_buf, 0);
    encoder.CopyBufferToBuffer(ts_bufs.dev_buf, 0, ts_bufs.host_buf, 0, ts_bufs.host_buf.GetSize());
#endif

    wgpu::CommandBuffer commands = encoder.Finish();
    webgpu_command      result   = {};
    result.commands              = commands;
    result.params_bufs           = params_bufs_list;
    result.num_kernels           = pipelines.size();
#ifdef GGML_WEBGPU_GPU_PROFILE
    result.timestamp_query_bufs = ts_bufs;
    // TODO: handle multiple pipeline names
    result.pipeline_name        = pipelines.front().name;
#endif
    return result;
}
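
// Worked example: ggml_webgpu_cpy() passes two bind group entries (bindings 0 and 1), so
// build_multi() appends the parameter buffer as binding 2 (params_binding_num = entries.size()).
// The matching WGSL shader is therefore expected to declare its params at @group(0) @binding(2);
// this is inferred from the code above, not checked against the shader sources.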

static webgpu_command ggml_backend_webgpu_build(webgpu_global_context &           ctx,
                                                webgpu_buf_pool &                 param_buf_pool,
                                                webgpu_pipeline &                 pipeline,
                                                std::vector<uint32_t>             params,
                                                std::vector<wgpu::BindGroupEntry> bind_group_entries,
                                                uint32_t                          wg_x,
                                                uint32_t                          wg_y = 1) {
    return ggml_backend_webgpu_build_multi(ctx, param_buf_pool, { pipeline }, { std::move(params) },
                                           { std::move(bind_group_entries) }, { { wg_x, wg_y } });
}

static void ggml_backend_webgpu_buffer_memset(webgpu_global_context & ctx,
                                              wgpu::Buffer &          buf,
                                              uint32_t                value,
                                              size_t                  offset,
                                              size_t                  size) {
    std::vector<uint32_t>             params  = { (uint32_t) offset, (uint32_t) size, value };
    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0, .buffer = buf, .offset = 0, .size = buf.GetSize() }
    };
    size_t   bytes_per_wg = WEBGPU_MAX_WG_SIZE * ctx->capabilities.memset_bytes_per_thread;
    uint32_t wg_x         = CEIL_DIV(size + 3, bytes_per_wg);

    webgpu_command command =
        ggml_backend_webgpu_build(ctx, ctx->memset_buf_pool, ctx->memset_pipelines[0], params, entries, wg_x);
    std::vector<webgpu_command>    commands = { command };
    std::vector<webgpu_submission> sub      = { ggml_backend_webgpu_submit(ctx, commands, ctx->memset_buf_pool) };
    ggml_backend_webgpu_wait(ctx, sub);
}
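
// Worked example (memset_bytes_per_thread = 4 is an assumed value; the real one comes from the
// device capabilities): bytes_per_wg = 288 * 4 = 1152, so clearing size = 10000 bytes dispatches
// wg_x = CEIL_DIV(10000 + 3, 1152) = 9 workgroups, which together cover up to 10368 bytes.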

/** End WebGPU Actions */

/** GGML Backend Interface */

static const char * ggml_backend_webgpu_name(ggml_backend_t backend) {
    ggml_backend_webgpu_context * ctx = (ggml_backend_webgpu_context *) backend->context;
    return ctx->name.c_str();
}

static void ggml_backend_webgpu_free(ggml_backend_t backend) {
    ggml_backend_webgpu_context * ctx = (ggml_backend_webgpu_context *) backend->context;
    WEBGPU_LOG_DEBUG("ggml_backend_webgpu_free(" << ctx->name << ")");

#ifdef GGML_WEBGPU_CPU_PROFILE
    std::cout << "\n[ggml_webgpu cpu profiling summary]\n";
    double total_cpu = 0.0;
    for (const auto & kv : ctx->webgpu_ctx->global_ctx->cpu_time_ms) {
        total_cpu += kv.second;
    }
    std::cout << "ggml_webgpu: total cpu time: " << total_cpu << " ms\n";
    std::cout << "ggml_webgpu: cpu breakdown:\n";
    for (const auto & kv : ctx->webgpu_ctx->global_ctx->cpu_time_ms) {
        double pct = (total_cpu > 0.0) ? (kv.second / total_cpu * 100.0) : 0.0;
        std::cout << "ggml_webgpu: " << kv.first << ": " << kv.second << " ms (" << pct << "%)\n";
    }
    if (ctx->webgpu_ctx->global_ctx->cpu_detail_ms.size() > 0) {
        std::cout << "ggml_webgpu: cpu detailed breakdown:\n";
    }
    for (const auto & kv : ctx->webgpu_ctx->global_ctx->cpu_detail_ms) {
        double pct = (total_cpu > 0.0) ? (kv.second / total_cpu * 100.0) : 0.0;
        std::cout << "ggml_webgpu: " << kv.first << ": " << kv.second << " ms (" << pct << "%)\n";
    }
#endif

#ifdef GGML_WEBGPU_GPU_PROFILE
    std::cout << "\n[ggml_webgpu gpu profiling summary]\n";
    double total_gpu = 0.0;
    for (const auto & kv : ctx->webgpu_ctx->global_ctx->shader_gpu_time_ms) {
        total_gpu += kv.second;
    }
    std::cout << "ggml_webgpu: total gpu time (all shaders): " << total_gpu << " ms\n";
    std::cout << "\nggml_webgpu: gpu breakdown:\n";
    for (const auto & kv : ctx->webgpu_ctx->global_ctx->shader_gpu_time_ms) {
        double pct = (total_gpu > 0.0) ? (kv.second / total_gpu * 100.0) : 0.0;
        std::cout << "ggml_webgpu: " << kv.first << ": " << kv.second << " ms (" << std::fixed << std::setprecision(2)
                  << pct << "%)\n";
    }
#endif

#if defined(GGML_WEBGPU_CPU_PROFILE) && defined(GGML_WEBGPU_GPU_PROFILE)
    std::cout << "ggml_webgpu: gpu/cpu ratio: " << (total_cpu > 0.0 ? total_gpu / total_cpu : 0.0) << "\n";
#endif

    delete ctx;
    delete backend;
}

static size_t ggml_webgpu_tensor_offset(const ggml_tensor * tensor) {
    return webgpu_tensor_offset(tensor) + tensor->view_offs;
}

static wgpu::Buffer ggml_webgpu_tensor_buf(const ggml_tensor * tensor) {
    ggml_backend_webgpu_buffer_context * ctx = (ggml_backend_webgpu_buffer_context *) tensor->buffer->context;
    return ctx->buffer;
}

static size_t ggml_webgpu_tensor_misalignment(webgpu_context & ctx, const ggml_tensor * t) {
    size_t offset = ggml_webgpu_tensor_offset(t);
    return offset & (ctx->global_ctx->capabilities.limits.minStorageBufferOffsetAlignment - 1);
}

static size_t ggml_webgpu_tensor_align_offset(webgpu_context & ctx, const ggml_tensor * t) {
    size_t offset = ggml_webgpu_tensor_offset(t);
    return offset & ~(ctx->global_ctx->capabilities.limits.minStorageBufferOffsetAlignment - 1);
}

static size_t ggml_webgpu_tensor_binding_size(webgpu_context & ctx, ggml_tensor * t) {
    return ROUNDUP_POW2(ggml_nbytes(t) + ggml_webgpu_tensor_misalignment(ctx, t), WEBGPU_STORAGE_BUF_BINDING_MULT);
}
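
// Worked example (illustrative values, using minStorageBufferOffsetAlignment = 256, the WebGPU
// default): an F32 tensor at byte offset 1000 holding 100 elements (400 bytes) gives
//   misalignment = 1000 & 255                 = 232 bytes (58 F32 elements)
//   align_offset = 1000 & ~255                = 768
//   binding_size = ROUNDUP_POW2(400 + 232, 4) = 632
// The buffer is bound at offset 768 (768 + 632 = 1400 = 1000 + 400), and the shader skips the
// first 58 elements, which is why the op builders pass misalignment / ggml_type_size(type).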

// Used to determine if two tensors are the same for in-place operations
static bool ggml_webgpu_tensor_equal(ggml_tensor * a, ggml_tensor * b) {
    return (ggml_webgpu_tensor_buf(a).Get() == ggml_webgpu_tensor_buf(b).Get()) &&
           (ggml_webgpu_tensor_offset(a) == ggml_webgpu_tensor_offset(b));
}

// Used to determine if two tensors share the same buffer and their byte ranges overlap.
static bool ggml_webgpu_tensor_overlap(ggml_tensor * a, ggml_tensor * b) {
    return (ggml_webgpu_tensor_buf(a).Get() == ggml_webgpu_tensor_buf(b).Get()) &&
           ggml_webgpu_tensor_offset(a) < (ggml_webgpu_tensor_offset(b) + ggml_nbytes(b)) &&
           ggml_webgpu_tensor_offset(b) < (ggml_webgpu_tensor_offset(a) + ggml_nbytes(a));
}
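
// Worked example: byte ranges are treated as half-open [offset, offset + nbytes). In the same
// buffer, a = [0, 400) and b = [256, 656) overlap (0 < 656 and 256 < 400), while
// a = [0, 400) and b = [400, 800) do not, because 400 < 400 is false.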

struct binary_overlap_flags {
    bool inplace;      // src0 == dst (same buffer, same offset)
    bool overlap;      // src1 overlaps dst
    bool src_overlap;  // src0 overlaps src1
};

static binary_overlap_flags ggml_webgpu_detect_binary_overlap(ggml_tensor * src0,
                                                              ggml_tensor * src1,
                                                              ggml_tensor * dst) {
    binary_overlap_flags flags = {};
    flags.inplace              = ggml_webgpu_tensor_equal(src0, dst);
    flags.overlap              = ggml_webgpu_tensor_overlap(src1, dst);
    flags.src_overlap          = ggml_webgpu_tensor_overlap(src0, src1);
    return flags;
}

static webgpu_command ggml_webgpu_cpy(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    uint32_t ne = (uint32_t) ggml_nelements(dst);

    std::vector<uint32_t> params = {
        ne, (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        // Convert byte-strides to element-strides
        (uint32_t) (src->nb[0] / ggml_type_size(src->type)), (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[2] / ggml_type_size(src->type)), (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
        (uint32_t) (dst->nb[0] / ggml_type_size(dst->type)), (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)), (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        // Logical shapes
        (uint32_t) src->ne[0], (uint32_t) src->ne[1], (uint32_t) src->ne[2], (uint32_t) dst->ne[0],
        (uint32_t) dst->ne[1], (uint32_t) dst->ne[2]
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    uint32_t wg_x = CEIL_DIV(ne, WEBGPU_MAX_WG_SIZE);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, ctx->cpy_pipelines[src->type][dst->type],
                                     params, entries, wg_x);
}
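
// Worked example (illustrative shape): a contiguous F32 tensor with ne = {4096, 32, 1, 1} has
// nb = {4, 16384, 524288, 524288} bytes, which the params above convert to element strides
// {1, 4096, 131072, 131072}. Copying its ne = 131072 elements dispatches
// wg_x = CEIL_DIV(131072, 288) = 456 workgroups. The 17 uint32_t parameters (68 bytes) fit in
// one WEBGPU_PARAMS_BUF_SIZE_BYTES (128-byte) parameter buffer.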

static webgpu_command ggml_webgpu_pad(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src, .dst = dst, .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_pad_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    const uint32_t ne = (uint32_t) ggml_nelements(dst);

    std::vector<uint32_t> params = {
        ne,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        // Strides (in elements)
        (uint32_t) (src->nb[0] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
        // Shapes
        (uint32_t) src->ne[0],
        (uint32_t) src->ne[1],
        (uint32_t) src->ne[2],
        (uint32_t) src->ne[3],
        (uint32_t) dst->ne[0],
        (uint32_t) dst->ne[1],
        (uint32_t) dst->ne[2],
        (uint32_t) dst->ne[3],
        // Pad sizes
        (uint32_t) ggml_get_op_params_i32(dst, 0),
        (uint32_t) ggml_get_op_params_i32(dst, 1),
        (uint32_t) ggml_get_op_params_i32(dst, 2),
        (uint32_t) ggml_get_op_params_i32(dst, 3),
        (uint32_t) ggml_get_op_params_i32(dst, 4),
        (uint32_t) ggml_get_op_params_i32(dst, 5),
        (uint32_t) ggml_get_op_params_i32(dst, 6),
        (uint32_t) ggml_get_op_params_i32(dst, 7),
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    uint32_t wg_x = CEIL_DIV(ne, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
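The pad launch above runs one thread per destination element and forwards eight pad values from `op_params`. The sketch below is a hypothetical CPU reference for the same zero-padding index math, which can help when checking GPU results. It assumes the pad values come in (left, right) pairs per dimension (`lp0, rp0, lp1, rp1, ...`), as `ggml_pad_ext` stores them, and it only handles a contiguous 2D f32 tensor. The name `pad_reference_2d` is illustrative and not part of ggml.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference for zero padding of a contiguous 2D f32 tensor.
// pads = {lp0, rp0, lp1, rp1}: elements added before/after each dimension
// (assumed to mirror the first four pad values read from op_params).
std::vector<float> pad_reference_2d(const std::vector<float> & src, int64_t ne0, int64_t ne1,
                                    const int32_t pads[4]) {
    assert((int64_t) src.size() == ne0 * ne1);
    const int64_t dne0 = ne0 + pads[0] + pads[1];
    const int64_t dne1 = ne1 + pads[2] + pads[3];
    std::vector<float> dst(dne0 * dne1, 0.0f);
    // One iteration per destination element, like the CEIL_DIV(ne, wg_size) launch.
    for (int64_t idx = 0; idx < dne0 * dne1; ++idx) {
        const int64_t i0 = idx % dne0;  // innermost coordinate
        const int64_t i1 = idx / dne0;
        const int64_t s0 = i0 - pads[0];  // shift back by the left pad
        const int64_t s1 = i1 - pads[2];
        if (s0 >= 0 && s0 < ne0 && s1 >= 0 && s1 < ne1) {
            dst[idx] = src[s1 * ne0 + s0];
        }  // otherwise the element stays 0.0f
    }
    return dst;
}
```

For example, padding a 2x2 tensor with one column on the left and one row at the bottom yields a 3x3 result whose first column and last row are zero.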

static std::optional<webgpu_command> ggml_webgpu_set_rows(webgpu_context & ctx,
                                                          ggml_tensor * src,
                                                          ggml_tensor * idx,
                                                          ggml_tensor * dst) {
    // For set rows specifically, we need to check if src and idx are empty tensors.
    if (ggml_is_empty(src) || ggml_is_empty(idx)) {
        return std::nullopt;
    }

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src,
        .src1 = idx,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_set_rows_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_set_rows_shader_decisions *>(pipeline.context.get());

    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, idx) / ggml_type_size(idx->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        // Convert byte-strides to element-strides
        (uint32_t) (src->nb[1] / ggml_type_size(src->type)), (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[3] / ggml_type_size(src->type)), (uint32_t) (idx->nb[0] / ggml_type_size(idx->type)),
        (uint32_t) (idx->nb[1] / ggml_type_size(idx->type)), (uint32_t) (idx->nb[2] / ggml_type_size(idx->type)),
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)), (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        // Shape of src
        (uint32_t) src->ne[0], (uint32_t) src->ne[1], (uint32_t) src->ne[2], (uint32_t) src->ne[3],
        // Shape of idx
        (uint32_t) (idx->ne[1]), (uint32_t) (idx->ne[2])
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(idx),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, idx),
          .size    = ggml_webgpu_tensor_binding_size(ctx, idx) },
        { .binding = 2,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    if (decisions->i64_idx) {
        entries.push_back({ .binding = 3,
                            .buffer  = ctx->set_rows_dev_error_buf,
                            .offset  = 0,
                            .size    = ctx->set_rows_dev_error_buf.GetSize() });
    }

    uint32_t threads;
    if (decisions->vec4) {
        threads = (src->ne[1] * src->ne[2] * src->ne[3]) * (src->ne[0] / 4);
    } else {
        threads = src->ne[0] * src->ne[1] * src->ne[2] * src->ne[3];
    }

    uint32_t wg_x = CEIL_DIV(threads, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x, 1);
}
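Two things in `ggml_webgpu_set_rows` are easy to check on the CPU: the thread count (the vec4 variant lets each thread copy four consecutive elements of a row) and the row-scatter semantics (`dst` row `idx[i]` receives `src` row `i`). The sketch below assumes the vec4 variant is only chosen when `ne[0]` is a multiple of 4, and it handles only 2D contiguous f32 data with 64-bit indices. `set_rows_threads` and `set_rows_reference` are hypothetical helpers, not ggml functions.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Threads launched by the set_rows dispatch. With the vec4 variant each thread
// copies four consecutive elements of a row (assumes ne0 % 4 == 0 in that case).
uint32_t set_rows_threads(int64_t ne0, int64_t ne1, int64_t ne2, int64_t ne3, bool vec4) {
    const int64_t rows = ne1 * ne2 * ne3;
    if (vec4) {
        assert(ne0 % 4 == 0);
        return (uint32_t) (rows * (ne0 / 4));
    }
    return (uint32_t) (rows * ne0);
}

// Hypothetical CPU reference for SET_ROWS on 2D contiguous f32 data:
// dst row idx[i] <- src row i.
void set_rows_reference(const std::vector<float> & src, const std::vector<int64_t> & idx,
                        std::vector<float> & dst, int64_t ne0) {
    assert((int64_t) src.size() == (int64_t) idx.size() * ne0);
    for (int64_t i = 0; i < (int64_t) idx.size(); ++i) {
        assert(idx[i] >= 0 && (idx[i] + 1) * ne0 <= (int64_t) dst.size());
        for (int64_t j = 0; j < ne0; ++j) {
            dst[idx[i] * ne0 + j] = src[i * ne0 + j];
        }
    }
}
```

For a source of shape 8 x 3 x 2 x 1, the vec4 path launches 12 threads instead of 48, which divides the workgroup count by four for the same data.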

// Workgroup size is a common constant
static std::vector<wgpu::ConstantEntry> ggml_webgpu_wg_size_entry(uint32_t wg_size) {
    std::vector<wgpu::ConstantEntry> constants(1);
    constants[0].key = "wg_size";
    constants[0].value = wg_size;
    return constants;
}

static webgpu_command ggml_webgpu_get_rows(webgpu_context & ctx,
                                           ggml_tensor * src,
                                           ggml_tensor * idx,
                                           ggml_tensor * dst) {
    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src,
        .src1 = nullptr,
        .dst = dst,
        .max_wg_size = WEBGPU_MAX_WG_SIZE,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_get_rows_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    std::vector<uint32_t> params = { (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, idx) / ggml_type_size(idx->type)),
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
                                     (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
                                     (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
                                     (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
                                     (uint32_t) (idx->nb[0] / ggml_type_size(idx->type)),
                                     (uint32_t) (idx->nb[1] / ggml_type_size(idx->type)),
                                     (uint32_t) (idx->nb[2] / ggml_type_size(idx->type)),
                                     (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
                                     (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
                                     (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
                                     (uint32_t) dst->ne[0],
                                     (uint32_t) dst->ne[1],
                                     (uint32_t) dst->ne[2],
                                     (uint32_t) dst->ne[3],
                                     (uint32_t) (idx->ne[1]),
                                     (uint32_t) (idx->ne[2]) };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(idx),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, idx),
          .size    = ggml_webgpu_tensor_binding_size(ctx, idx) },
        { .binding = 2,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    uint32_t wg_x = CEIL_DIV(dst->ne[1] * dst->ne[2] * dst->ne[3], decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
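Unlike most element-wise ops here, `ggml_webgpu_get_rows` sizes its dispatch by output rows: `CEIL_DIV(dst->ne[1] * dst->ne[2] * dst->ne[3], wg_size)` means one thread per destination row. Below is a minimal CPU reference of the gather it performs (`dst` row `i` copies `src` row `idx[i]`), restricted to 2D contiguous f32 data with 32-bit indices. `ceil_div` and `get_rows_reference` are illustrative names. `ceil_div` mirrors the `CEIL_DIV` macro defined at the top of this file.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Same arithmetic as the CEIL_DIV macro at the top of this file.
constexpr uint32_t ceil_div(uint32_t m, uint32_t n) { return (m + n - 1) / n; }

// Hypothetical CPU reference for GET_ROWS on 2D contiguous f32 data:
// dst row r <- src row idx[r]. The outer loop plays the role of one GPU thread per output row.
std::vector<float> get_rows_reference(const std::vector<float> & src, int64_t ne0,
                                      const std::vector<int32_t> & idx) {
    const int64_t src_rows = (int64_t) src.size() / ne0;
    std::vector<float> dst(idx.size() * ne0);
    for (int64_t r = 0; r < (int64_t) idx.size(); ++r) {
        assert(idx[r] >= 0 && idx[r] < src_rows);
        for (int64_t j = 0; j < ne0; ++j) {
            dst[r * ne0 + j] = src[idx[r] * ne0 + j];
        }
    }
    return dst;
}
```

Indices may repeat. Gathering rows `{2, 2, 0}` from a 3-row source duplicates the last row. With a workgroup size of 64, 100 output rows need `ceil_div(100, 64) == 2` workgroups.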

static webgpu_command ggml_webgpu_mul_mat(webgpu_context & ctx,
                                          ggml_tensor * src0,
                                          ggml_tensor * src1,
                                          ggml_tensor * dst) {
    // Determine if this is a mat-vec operation
    bool is_vec = (dst->ne[1] == 1);

    // Determine if we should use fast path
    bool use_fast = false;
    switch (src1->type) {
        case GGML_TYPE_F16:
            use_fast = (src0->type == GGML_TYPE_F16);
            break;
        case GGML_TYPE_F32:
            // TODO: implement better mat-mat for k-quants, mat-vec for all k-quants except q6_K
            switch (src0->type) {
                case GGML_TYPE_F32:
                case GGML_TYPE_F16:
                case GGML_TYPE_Q4_0:
                case GGML_TYPE_Q4_1:
                case GGML_TYPE_Q5_0:
                case GGML_TYPE_Q5_1:
                case GGML_TYPE_Q8_0:
                case GGML_TYPE_Q8_1:
                case GGML_TYPE_Q6_K:
                    use_fast = true;
                    break;
                case GGML_TYPE_Q2_K:
                case GGML_TYPE_Q3_K:
                case GGML_TYPE_Q4_K:
                case GGML_TYPE_Q5_K:
                    // we don't have fast mat-vec for these types, but we do have (semi) fast mat-mat
                    use_fast = !is_vec;
                    break;
                default:
                    break;
            }
            break;
        default:
            break;
    }

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src0,
        .src1 = src1,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
        .supports_subgroup_matrix = ctx->global_ctx->capabilities.supports_subgroup_matrix,
        .sg_mat_m = ctx->global_ctx->capabilities.sg_mat_m,
        .sg_mat_n = ctx->global_ctx->capabilities.sg_mat_n,
        .sg_mat_k = ctx->global_ctx->capabilities.sg_mat_k,
        .max_subgroup_size = ctx->global_ctx->capabilities.max_subgroup_size,
    };

    // Get or create pipeline
    webgpu_pipeline pipeline;
    if (use_fast && is_vec) {
        pipeline = ctx->shader_lib->get_mul_mat_vec_pipeline(shader_lib_ctx);
    } else if (use_fast) {
        pipeline = ctx->shader_lib->get_mul_mat_fast_pipeline(shader_lib_ctx);
    } else {
        pipeline = ctx->shader_lib->get_mul_mat_legacy_pipeline(shader_lib_ctx);
    }

    // Build params
    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) dst->ne[0],
        (uint32_t) dst->ne[1],
        (uint32_t) src0->ne[0],
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src1->nb[1] / ggml_type_size(src1->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src1->nb[2] / ggml_type_size(src1->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        (uint32_t) (src1->nb[3] / ggml_type_size(src1->type)),
        (uint32_t) src0->ne[2],
        (uint32_t) src0->ne[3],
        (uint32_t) (src1->ne[2] / src0->ne[2]),
        (uint32_t) (src1->ne[3] / src0->ne[3])
    };

    // Build bind group entries
    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(src1),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src1),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src1) },
        { .binding = 2,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) },
    };

    // Calculate workgroup dimensions
    uint32_t wg_x = 1;
    uint32_t wg_y = 1;
    const uint32_t max_wg_per_dim = ctx->global_ctx->capabilities.limits.maxComputeWorkgroupsPerDimension;

    if (use_fast && is_vec) {
        auto * decisions = static_cast<ggml_webgpu_mul_mat_vec_shader_decisions *>(pipeline.context.get());

        uint32_t batches = dst->ne[2] * dst->ne[3];
        uint32_t output_groups = CEIL_DIV(dst->ne[0], decisions->outputs_per_wg);
        uint32_t total_wg = output_groups * batches;
        compute_2d_workgroups(total_wg, max_wg_per_dim, wg_x, wg_y);
    } else if (use_fast) {
        auto * decisions = static_cast<ggml_webgpu_mul_mat_shader_decisions *>(pipeline.context.get());

        // Fast-path tiled/subgroup calculations
        uint32_t wg_m;
        uint32_t wg_n;
        if (decisions->use_subgroup_matrix) {
            uint32_t wg_m_sg_tile =
                decisions->subgroup_m * decisions->subgroup_matrix_m * ctx->global_ctx->capabilities.sg_mat_m;
            wg_m = CEIL_DIV(dst->ne[0], wg_m_sg_tile);
            uint32_t wg_n_sg_tile =
                decisions->subgroup_n * decisions->subgroup_matrix_n * ctx->global_ctx->capabilities.sg_mat_n;
            wg_n = CEIL_DIV(dst->ne[1], wg_n_sg_tile);
        } else {
            uint32_t tile_m_s = decisions->tile_m * decisions->wg_size_m;
            uint32_t tile_n_s = decisions->tile_n * decisions->wg_size_n;
            wg_m = CEIL_DIV(dst->ne[0], tile_m_s);
            wg_n = CEIL_DIV(dst->ne[1], tile_n_s);
        }
        uint32_t total_wg = wg_m * wg_n * dst->ne[2] * dst->ne[3];
        compute_2d_workgroups(total_wg, max_wg_per_dim, wg_x, wg_y);
    } else {  // legacy
        auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

        uint32_t wg_size = decisions->wg_size;
        uint32_t total_wg = CEIL_DIV(dst->ne[0] * dst->ne[1] * dst->ne[2] * dst->ne[3], wg_size);
        compute_2d_workgroups(total_wg, max_wg_per_dim, wg_x, wg_y);
    }

    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x, wg_y);
}
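All three mul_mat paths end by folding a flat workgroup count into a 2D grid with `compute_2d_workgroups`. This keeps each dimension within `maxComputeWorkgroupsPerDimension`, which is 65535 in WebGPU's default limits. The sketch below repeats that arithmetic and the tiled fast-path count so the numbers can be checked by hand. The tile values used in the example (`tile_m = 4`, `wg_size_m = 8`, and so on) are hypothetical. The real ones come from the shader library's decisions. `split_2d` and `tiled_total_wg` are illustrative names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

constexpr uint32_t ceil_div(uint32_t m, uint32_t n) { return (m + n - 1) / n; }

// Same arithmetic as compute_2d_workgroups() earlier in this file: spread total_wg
// over (wg_x, wg_y) so neither dimension exceeds max_per_dim.
void split_2d(uint32_t total_wg, uint32_t max_per_dim, uint32_t & wg_x, uint32_t & wg_y) {
    wg_y = std::max(1u, ceil_div(total_wg, max_per_dim));
    wg_x = ceil_div(total_wg, wg_y);
}

// Workgroup count for the tiled (non-subgroup-matrix) fast path: each workgroup
// covers a (tile_m * wg_size_m) x (tile_n * wg_size_n) block of dst per batch.
uint32_t tiled_total_wg(uint32_t M, uint32_t N, uint32_t batches,
                        uint32_t tile_m, uint32_t wg_size_m, uint32_t tile_n, uint32_t wg_size_n) {
    return ceil_div(M, tile_m * wg_size_m) * ceil_div(N, tile_n * wg_size_n) * batches;
}
```

With 32x32 output tiles, a 4096 x 512 result needs 2048 workgroups, which fits in one dimension. A count of 131071 does not fit. It becomes a 43691 x 3 grid, which over-provisions only two workgroups, and the shader is expected to skip those.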

#ifndef __EMSCRIPTEN__
static webgpu_command ggml_webgpu_flash_attn(webgpu_context & ctx,
                                             ggml_tensor * Q,
                                             ggml_tensor * K,
                                             ggml_tensor * V,
                                             ggml_tensor * mask,
                                             ggml_tensor * sinks,
                                             ggml_tensor * dst) {
    float scale = *(float *) dst->op_params;
    float max_bias;
    memcpy(&max_bias, (float *) dst->op_params + 1, sizeof(float));
    float logit_softcap;
    memcpy(&logit_softcap, (float *) dst->op_params + 2, sizeof(float));
    if (logit_softcap != 0.0f) {
        scale /= logit_softcap;
    }
    float n_head_log2 = float(1u << (uint32_t) floor(log2(Q->ne[2])));
    float m0 = powf(2.0f, -(max_bias) / n_head_log2);
    float m1 = powf(2.0f, -(max_bias / 2.0f) / n_head_log2);

    const int has_mask = (mask != nullptr);
    const int has_sinks = (sinks != nullptr);

    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, Q) / ggml_type_size(Q->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, K) / ggml_type_size(K->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, V) / ggml_type_size(V->type)),
        has_mask ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, mask) / ggml_type_size(mask->type)) : 0,
        has_sinks ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, sinks) / ggml_type_size(sinks->type)) : 0,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) Q->ne[2],  // number of heads
        (uint32_t) Q->ne[1],  // sequence length (Q)
        (uint32_t) K->ne[1],  // sequence length (K/V)
        (uint32_t) (Q->nb[1] / ggml_type_size(Q->type)),  // stride (elements/blocks) of Q in dimension 1
        (uint32_t) (Q->nb[2] / ggml_type_size(Q->type)),  // stride (elements/blocks) of Q in dimension 2
        (uint32_t) (Q->nb[3] / ggml_type_size(Q->type)),  // stride (elements/blocks) of Q in dimension 3
        (uint32_t) (K->nb[1] / ggml_type_size(K->type)),  // stride (elements/blocks) of K in dimension 1
        (uint32_t) (K->nb[2] / ggml_type_size(K->type)),  // stride (elements/blocks) of K in dimension 2
        (uint32_t) (K->nb[3] / ggml_type_size(K->type)),  // stride (elements/blocks) of K in dimension 3
        (uint32_t) (V->nb[1] / ggml_type_size(V->type)),  // stride (elements/blocks) of V in dimension 1
        (uint32_t) (V->nb[2] / ggml_type_size(V->type)),  // stride (elements/blocks) of V in dimension 2
        (uint32_t) (V->nb[3] / ggml_type_size(V->type)),  // stride (elements/blocks) of V in dimension 3
        has_mask ? (uint32_t) (mask->nb[3] / ggml_type_size(mask->type)) : 0,  // stride of mask dim 3
        (uint32_t) (Q->ne[2] / K->ne[2]),  // repeat factor for K/V in dim 2 (MHA/MQA/GQA)
        *(uint32_t *) &scale,  // scale (possibly adjusted for logit softcap)
        *(uint32_t *) &max_bias,
        *(uint32_t *) &logit_softcap,
        *(uint32_t *) &n_head_log2,
        *(uint32_t *) &m0,
        *(uint32_t *) &m1
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(Q),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, Q),
          .size    = ggml_webgpu_tensor_binding_size(ctx, Q) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(K),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, K),
          .size    = ggml_webgpu_tensor_binding_size(ctx, K) },
        { .binding = 2,
          .buffer  = ggml_webgpu_tensor_buf(V),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, V),
          .size    = ggml_webgpu_tensor_binding_size(ctx, V) }
    };
    uint32_t binding_index = 3;
    if (has_mask) {
        entries.push_back({ .binding = binding_index++,
                            .buffer  = ggml_webgpu_tensor_buf(mask),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, mask),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, mask) });
    }
    if (has_sinks) {
        entries.push_back({ .binding = binding_index++,
                            .buffer  = ggml_webgpu_tensor_buf(sinks),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, sinks),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, sinks) });
    }
    entries.push_back({ .binding = binding_index++,
                        .buffer  = ggml_webgpu_tensor_buf(dst),
                        .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                        .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = Q,
        .src1 = K,
        .src2 = V,
        .src3 = mask,
        .src4 = sinks,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
        .wg_mem_limit_bytes = ctx->global_ctx->capabilities.limits.maxComputeWorkgroupStorageSize,
        .sg_mat_m = ctx->global_ctx->capabilities.sg_mat_m,
        .sg_mat_n = ctx->global_ctx->capabilities.sg_mat_n,
        .sg_mat_k = ctx->global_ctx->capabilities.sg_mat_k,
        .max_subgroup_size = ctx->global_ctx->capabilities.max_subgroup_size,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_flash_attn_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_flash_attn_shader_decisions *>(pipeline.context.get());

    uint32_t wg_per_head = CEIL_DIV(Q->ne[1], decisions->q_tile);
    uint32_t wg_x = wg_per_head * Q->ne[2] * Q->ne[3];  // wg per head * number of heads * number of batches
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
#endif
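The flash-attention host code passes `n_head_log2`, `m0` and `m1` to the shader instead of per-head ALiBi slopes. The shader is not shown here, so the per-head rule in this sketch is an assumption. It follows the formula in ggml's CPU backend: heads below `n_head_log2` use powers of `m0`, and the remaining heads use odd powers of `m1`. A `max_bias` of zero disables ALiBi, and every slope is then 1. `alibi_slopes` is an illustrative name.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// ALiBi slopes from max_bias, using the m0/m1 construction from ggml_webgpu_flash_attn.
// Per-head rule (assumed to match ggml's CPU backend):
//   h <  n_head_log2 : slope = m0^(h + 1)
//   h >= n_head_log2 : slope = m1^(2 * (h - n_head_log2) + 1)
std::vector<float> alibi_slopes(uint32_t n_head, float max_bias) {
    assert(n_head > 0);
    const uint32_t n_head_log2 = 1u << (uint32_t) std::floor(std::log2((double) n_head));
    const float m0 = std::pow(2.0f, -max_bias / n_head_log2);
    const float m1 = std::pow(2.0f, -(max_bias / 2.0f) / n_head_log2);
    std::vector<float> slopes(n_head, 1.0f);
    if (max_bias <= 0.0f) {
        return slopes;  // no ALiBi: every head uses slope 1
    }
    for (uint32_t h = 0; h < n_head; ++h) {
        slopes[h] = h < n_head_log2 ? std::pow(m0, (float) (h + 1))
                                    : std::pow(m1, (float) (2 * (h - n_head_log2) + 1));
    }
    return slopes;
}
```

With 8 heads and `max_bias = 8`, `m0 = 0.5`, so the slopes run from 1/2 down to 1/256. With 12 heads, `n_head_log2` stays 8, and head 8 gets `m1 = 2^-0.5`.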

static webgpu_command ggml_webgpu_unary_op(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    bool is_unary = dst->op == GGML_OP_UNARY;
    bool inplace = ggml_webgpu_tensor_equal(src, dst) || (dst->op == GGML_OP_FILL);

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src,
        .src1 = nullptr,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
        .inplace = inplace,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_unary_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    uint32_t ne = (uint32_t) ggml_nelements(dst);

    std::vector<uint32_t> params = { ne,
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
                                     (uint32_t) (src->nb[0] / ggml_type_size(src->type)),
                                     (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
                                     (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
                                     (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
                                     (uint32_t) src->ne[0],
                                     (uint32_t) src->ne[1],
                                     (uint32_t) src->ne[2] };

    ggml_tensor * effective_src = src;
    if (is_unary) {
        ggml_unary_op unary_op = ggml_get_unary_op(dst);
        switch (unary_op) {
            case GGML_UNARY_OP_XIELU:
                {
                    // Get float parameters and reinterpret their bit patterns as uint32_t
                    // for passing through the params buffer
                    float alpha_n = ggml_get_op_params_f32(dst, 1);
                    float alpha_p = ggml_get_op_params_f32(dst, 2);
                    float beta = ggml_get_op_params_f32(dst, 3);
                    float eps = ggml_get_op_params_f32(dst, 4);
                    params.push_back(*reinterpret_cast<const uint32_t *>(&alpha_n));
                    params.push_back(*reinterpret_cast<const uint32_t *>(&alpha_p));
                    params.push_back(*reinterpret_cast<const uint32_t *>(&beta));
                    params.push_back(*reinterpret_cast<const uint32_t *>(&eps));
                    break;
                }
            default:
                break;
        }
    } else if (dst->op == GGML_OP_CLAMP) {
        float clamp_min = ggml_get_op_params_f32(dst, 0);
        float clamp_max = ggml_get_op_params_f32(dst, 1);
        params.push_back(*reinterpret_cast<const uint32_t *>(&clamp_min));
        params.push_back(*reinterpret_cast<const uint32_t *>(&clamp_max));
    } else if (dst->op == GGML_OP_FILL) {
        float fill_val = ggml_get_op_params_f32(dst, 0);
        params.push_back(*reinterpret_cast<const uint32_t *>(&fill_val));
        effective_src = dst;  // fill simply fills dst
    }

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(effective_src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, effective_src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, effective_src) },
    };
    if (!inplace) {
        entries.push_back({ .binding = 1,
                            .buffer  = ggml_webgpu_tensor_buf(dst),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });
    }

    uint32_t wg_x = CEIL_DIV(ne, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
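The unary path appends float parameters (XIELU coefficients, CLAMP bounds, the FILL value) to a `std::vector<uint32_t>` by reinterpreting each float's bits. The code above does this through `reinterpret_cast` pointer casts, which read the right bits in practice but technically step outside C++'s strict-aliasing rules. A sketch of the portable alternative is `std::memcpy` (or `std::bit_cast` in C++20), shown here with hypothetical helper names. The shader side presumably turns each word back into an `f32`, for example with WGSL's `bitcast<f32>`.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Store a float's bit pattern in a u32 params word without type-punning through pointers.
inline uint32_t f32_bits(float f) {
    static_assert(sizeof(float) == sizeof(uint32_t), "f32 must be 32 bits");
    uint32_t u;
    std::memcpy(&u, &f, sizeof(u));
    return u;
}

// Inverse, for checking what the shader would read back.
inline float bits_f32(uint32_t u) {
    float f;
    std::memcpy(&f, &u, sizeof(f));
    return f;
}

// Example: append CLAMP bounds to a params vector the way the unary path does.
void push_clamp_params(std::vector<uint32_t> & params, float lo, float hi) {
    params.push_back(f32_bits(lo));
    params.push_back(f32_bits(hi));
}
```

`f32_bits(1.0f)` yields `0x3F800000`, the IEEE-754 single-precision encoding of 1.0. The round trip through `bits_f32` is lossless.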

static webgpu_command ggml_webgpu_binary_op(webgpu_context & ctx,
                                            ggml_tensor * src0,
                                            ggml_tensor * src1,
                                            ggml_tensor * dst) {
    binary_overlap_flags flags = ggml_webgpu_detect_binary_overlap(src0, src1, dst);

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src0,
        .src1 = src1,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
        .inplace = flags.inplace,
        .overlap = flags.overlap,
        .src_overlap = flags.src_overlap,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_binary_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    uint32_t ne = (uint32_t) ggml_nelements(dst);

    size_t src0_webgpu_tensor_align_offset = ggml_webgpu_tensor_align_offset(ctx, src0);
    size_t src1_webgpu_tensor_align_offset = ggml_webgpu_tensor_align_offset(ctx, src1);

    uint32_t offset_merged_src0 = 0;
    uint32_t offset_merged_src1 = 0;
    if (flags.src_overlap) {
        size_t min_off = std::min(src0_webgpu_tensor_align_offset, src1_webgpu_tensor_align_offset);
        offset_merged_src0 = (uint32_t) ((src0_webgpu_tensor_align_offset - min_off) / ggml_type_size(src0->type));
        offset_merged_src1 = (uint32_t) ((src1_webgpu_tensor_align_offset - min_off) / ggml_type_size(src0->type));
    }

    std::vector<uint32_t> params = {
        ne,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        offset_merged_src0,
        offset_merged_src1,
        (uint32_t) (src0->nb[0] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        (uint32_t) (src1->nb[0] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[1] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[2] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[3] / ggml_type_size(src1->type)),
        (uint32_t) src0->ne[0],
        (uint32_t) src0->ne[1],
        (uint32_t) src0->ne[2],
        (uint32_t) src1->ne[0],
        (uint32_t) src1->ne[1],
        (uint32_t) src1->ne[2],
        (uint32_t) src1->ne[3],
    };

    std::vector<wgpu::BindGroupEntry> entries;

    if (flags.src_overlap) {
        size_t merged_offset = std::min(src0_webgpu_tensor_align_offset, src1_webgpu_tensor_align_offset);
        size_t merged_end = std::max(src0_webgpu_tensor_align_offset + ggml_webgpu_tensor_binding_size(ctx, src0),
                                     src1_webgpu_tensor_align_offset + ggml_webgpu_tensor_binding_size(ctx, src1));
        entries.push_back({
            .binding = 0,
            .buffer  = ggml_webgpu_tensor_buf(src0),
            .offset  = merged_offset,
            .size    = merged_end - merged_offset,
        });
        entries.push_back({
            .binding = 1,
            .buffer  = ggml_webgpu_tensor_buf(dst),
            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
            .size    = ggml_webgpu_tensor_binding_size(ctx, dst),
        });
    } else {
        entries.push_back({
            .binding = 0,
            .buffer  = ggml_webgpu_tensor_buf(src0),
            .offset  = src0_webgpu_tensor_align_offset,
            .size    = ggml_webgpu_tensor_binding_size(ctx, src0),
        });
        entries.push_back({
            .binding = 1,
            .buffer  = ggml_webgpu_tensor_buf(src1),
            .offset  = src1_webgpu_tensor_align_offset,
            .size    = ggml_webgpu_tensor_binding_size(ctx, src1),
        });
        if (!flags.inplace && !flags.overlap) {
            entries.push_back({
                .binding = 2,
                .buffer  = ggml_webgpu_tensor_buf(dst),
                .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                .size    = ggml_webgpu_tensor_binding_size(ctx, dst),
            });
        }
    }

    uint32_t wg_x = CEIL_DIV(ne, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
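When `flags.src_overlap` is set, the binary op binds `src0` and `src1` through a single binding. That binding spans from the smaller aligned offset to the larger end, and each source gets an element offset relative to the merged start (`offset_merged_src0/1`). The sketch below isolates that range arithmetic. Like the code above, it divides both byte distances by one element size, which assumes the two sources share an element type. `merged_binding` and `merge_ranges` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>

struct merged_binding {
    size_t   offset;      // byte offset of the single combined binding
    size_t   size;        // byte size covering both source ranges
    uint32_t src0_elems;  // src0 start, in elements, relative to offset
    uint32_t src1_elems;  // src1 start, in elements, relative to offset
};

// Combine two (aligned offset, binding size) ranges in the same buffer, as the
// src_overlap branch of the binary op does; elem_size is the shared element size.
merged_binding merge_ranges(size_t off0, size_t size0, size_t off1, size_t size1, size_t elem_size) {
    assert(elem_size > 0);
    const size_t start = std::min(off0, off1);
    const size_t end   = std::max(off0 + size0, off1 + size1);
    return { start, end - start,
             (uint32_t) ((off0 - start) / elem_size),
             (uint32_t) ((off1 - start) / elem_size) };
}
```

For example, an f32 `src0` bound at byte 256 with 1024 bytes and a `src1` at byte 0 with 512 bytes merge into one 1280-byte binding at offset 0. Inside that binding, `src0` starts at element 64 and `src1` at element 0.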

static webgpu_command ggml_webgpu_concat(webgpu_context & ctx,
                                         ggml_tensor * src0,
                                         ggml_tensor * src1,
                                         ggml_tensor * dst) {
    uint32_t ne = (uint32_t) ggml_nelements(dst);
    uint32_t dim = (uint32_t) dst->op_params[0];

    std::vector<uint32_t> params = {
        ne,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) (src0->nb[0] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        (uint32_t) (src1->nb[0] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[1] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[2] / ggml_type_size(src1->type)),
        (uint32_t) (src1->nb[3] / ggml_type_size(src1->type)),
        (uint32_t) dst->ne[0],
        (uint32_t) dst->ne[1],
        (uint32_t) dst->ne[2],
        (uint32_t) dst->ne[3],
        dim,
        (uint32_t) src0->ne[dim]
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(src1),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src1),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src1) },
        { .binding = 2,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src0,
        .src1 = src1,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_concat_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    uint32_t wg_x = CEIL_DIV(ne, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
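The concat params end with `dim` and `src0->ne[dim]`. Those two values are enough to decide, per destination element, which source to read. If the coordinate along `dim` is below `src0->ne[dim]`, the element comes from `src0`. Otherwise it comes from `src1`, with that coordinate shifted back by `src0->ne[dim]`. Below is a hypothetical CPU reference for the 2D contiguous f32 case. The WGSL shader itself is not shown in this file.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference for CONCAT of two contiguous 2D f32 tensors along dim.
// Elements with coord[dim] < a_ne[dim] come from a; the rest come from b with
// coord[dim] shifted back by a_ne[dim] (the src0->ne[dim] param).
std::vector<float> concat_reference_2d(const std::vector<float> & a, const int64_t a_ne[2],
                                       const std::vector<float> & b, const int64_t b_ne[2], int dim) {
    assert(dim == 0 || dim == 1);
    int64_t d_ne[2] = { a_ne[0], a_ne[1] };
    d_ne[dim] += b_ne[dim];
    std::vector<float> dst(d_ne[0] * d_ne[1]);
    for (int64_t i1 = 0; i1 < d_ne[1]; ++i1) {
        for (int64_t i0 = 0; i0 < d_ne[0]; ++i0) {
            int64_t c[2] = { i0, i1 };
            float v;
            if (c[dim] < a_ne[dim]) {
                v = a[c[1] * a_ne[0] + c[0]];
            } else {
                c[dim] -= a_ne[dim];
                v = b[c[1] * b_ne[0] + c[0]];
            }
            dst[i1 * d_ne[0] + i0] = v;
        }
    }
    return dst;
}
```

Concatenating a 2x2 block with a 1x2 column along dimension 0 appends one element to each row. Concatenating it with a 2x1 row along dimension 1 appends one row.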

static webgpu_command ggml_webgpu_repeat(webgpu_context & ctx, ggml_tensor * src0, ggml_tensor * dst) {
    uint32_t ne = (uint32_t) ggml_nelements(dst);

    std::vector<uint32_t> params = { ne,
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) /
                                                 ggml_type_size(src0->type)),
                                     (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
                                     (uint32_t) (src0->nb[0] / ggml_type_size(src0->type)),
                                     (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
                                     (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
                                     (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
                                     (uint32_t) (src0->ne[0]),
                                     (uint32_t) (src0->ne[1]),
                                     (uint32_t) (src0->ne[2]),
                                     (uint32_t) (src0->ne[3]),
                                     (uint32_t) (dst->ne[0]),
                                     (uint32_t) (dst->ne[1]),
                                     (uint32_t) (dst->ne[2]) };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(dst),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
          .size    = ggml_webgpu_tensor_binding_size(ctx, dst) }
    };

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0 = src0,
        .dst = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
    };

    webgpu_pipeline pipeline = ctx->shader_lib->get_repeat_pipeline(shader_lib_ctx);
    auto * decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    uint32_t wg_x = CEIL_DIV(ne, decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
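`ggml_webgpu_repeat` passes both the source and destination shapes, which suggests the shader maps each destination coordinate back to the source with a modulo per dimension. That mapping is an assumption, since the shader is not shown here. The sketch below is a CPU reference for the 2D contiguous f32 case. It relies on ggml's requirement that every destination extent be a whole multiple of the source extent. `repeat_reference_2d` is an illustrative name.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference for REPEAT on contiguous 2D f32 data: each dst coordinate
// reads src at (i0 % s_ne0, i1 % s_ne1), so src is tiled to fill dst.
std::vector<float> repeat_reference_2d(const std::vector<float> & src, int64_t s_ne0, int64_t s_ne1,
                                       int64_t d_ne0, int64_t d_ne1) {
    assert(d_ne0 % s_ne0 == 0 && d_ne1 % s_ne1 == 0);  // whole repeats only
    std::vector<float> dst(d_ne0 * d_ne1);
    for (int64_t i1 = 0; i1 < d_ne1; ++i1) {
        for (int64_t i0 = 0; i0 < d_ne0; ++i0) {
            dst[i1 * d_ne0 + i0] = src[(i1 % s_ne1) * s_ne0 + (i0 % s_ne0)];
        }
    }
    return dst;
}
```

Repeating the row `[1, 2]` into a 4x2 destination produces `[1, 2, 1, 2]` in both rows.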

static webgpu_command ggml_webgpu_rms_norm(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    int inplace = ggml_webgpu_tensor_equal(src, dst);

    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        (uint32_t) src->ne[0],
        (uint32_t) src->ne[1],
        (uint32_t) src->ne[2],
        (uint32_t) src->ne[3],
        *(uint32_t *) dst->op_params  // epsilon, treated as f32 in the shader
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) }
    };
    if (!inplace) {
        entries.push_back({ .binding = 1,
                            .buffer  = ggml_webgpu_tensor_buf(dst),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });
    }

    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, ctx->rms_norm_pipelines[inplace], params,
                                     entries, ggml_nrows(src));
}
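`ggml_webgpu_rms_norm` dispatches `ggml_nrows(src)` workgroups, one per row, and passes epsilon as the raw bits of `op_params[0]`. A CPU reference of the per-row computation, `y = x / sqrt(mean(x^2) + eps)`, is handy for validating the shader's output. This sketch accumulates in `double` for accuracy. That is a choice made for the reference, not necessarily what the shader does. `rms_norm_reference` is an illustrative name.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference for RMS_NORM over contiguous rows of length ne0:
//   y = x / sqrt(mean(x^2) + eps)
// Each outer iteration corresponds to one workgroup in the WebGPU dispatch.
std::vector<float> rms_norm_reference(const std::vector<float> & x, int64_t ne0, float eps) {
    assert(ne0 > 0 && (int64_t) x.size() % ne0 == 0);
    std::vector<float> y(x.size());
    for (int64_t r = 0; r < (int64_t) x.size() / ne0; ++r) {
        double sum_sq = 0.0;
        for (int64_t i = 0; i < ne0; ++i) {
            sum_sq += (double) x[r * ne0 + i] * x[r * ne0 + i];
        }
        const float scale = 1.0f / std::sqrt((float) (sum_sq / ne0) + eps);
        for (int64_t i = 0; i < ne0; ++i) {
            y[r * ne0 + i] = x[r * ne0 + i] * scale;
        }
    }
    return y;
}
```

For the row `[3, 4]` with `eps = 0`, the mean square is 12.5, so both elements are divided by about 3.5355.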

static webgpu_command ggml_webgpu_rope(webgpu_context & ctx,
                                       ggml_tensor * src0,
                                       ggml_tensor * src1,
                                       ggml_tensor * src2,
                                       ggml_tensor * dst) {
    const int inplace = ggml_webgpu_tensor_equal(src0, dst);
    const int has_freq_factor = (src2 != nullptr);

    const int n_dims = ((int32_t *) dst->op_params)[1];
    const int mode = ((int32_t *) dst->op_params)[2];
    const int n_ctx_orig = ((int32_t *) dst->op_params)[4];

    float freq_base;
    float freq_scale;
    float ext_factor;
    float attn_factor;
    float beta_fast;
    float beta_slow;
    memcpy(&freq_base, (int32_t *) dst->op_params + 5, sizeof(float));
    memcpy(&freq_scale, (int32_t *) dst->op_params + 6, sizeof(float));
    memcpy(&ext_factor, (int32_t *) dst->op_params + 7, sizeof(float));
    memcpy(&attn_factor, (int32_t *) dst->op_params + 8, sizeof(float));
    memcpy(&beta_fast, (int32_t *) dst->op_params + 9, sizeof(float));
    memcpy(&beta_slow, (int32_t *) dst->op_params + 10, sizeof(float));

    int sections[4];
    memcpy(sections, (int32_t *) dst->op_params + 11, 4 * sizeof(int));

    float theta_scale = powf(freq_base, -2.0f / n_dims);

    float corr_dims[2];
    ggml_rope_yarn_corr_dims(n_dims, n_ctx_orig, freq_base, beta_fast, beta_slow, corr_dims);

    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)),
        src2 != nullptr ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src2) / ggml_type_size(src2->type)) : 0,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        (uint32_t) ggml_nelements(src0) / 2,
        (uint32_t) src0->ne[0],
        (uint32_t) src0->ne[1],
        (uint32_t) src0->ne[2],
        (uint32_t) n_dims,
        (uint32_t) mode,
        *(uint32_t *) &theta_scale,
        *(uint32_t *) &attn_factor,
        *(uint32_t *) &freq_scale,
        *(uint32_t *) &ext_factor,
        *(uint32_t *) &corr_dims[0],
        *(uint32_t *) &corr_dims[1],
        (uint32_t) sections[0],
        (uint32_t) sections[1],
        (uint32_t) sections[2],
        (uint32_t) sections[3]
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) },
        { .binding = 1,
          .buffer  = ggml_webgpu_tensor_buf(src1),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src1),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src1) }
    };
    uint32_t dst_binding = 2;
    if (has_freq_factor) {
        dst_binding = 3;
        entries.push_back({ .binding = 2,
                            .buffer  = ggml_webgpu_tensor_buf(src2),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, src2),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, src2) });
    }
    if (!inplace) {
        entries.push_back({ .binding = dst_binding,
                            .buffer  = ggml_webgpu_tensor_buf(dst),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });
    }

    webgpu_pipeline pipeline = ctx->rope_pipelines[dst->type][has_freq_factor][inplace];
    uint32_t wg_x = CEIL_DIV(ggml_nelements(dst), WEBGPU_MAX_WG_SIZE);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
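On the host, RoPE precomputes `theta_scale = freq_base^(-2 / n_dims)`. In ggml's "normal" mode, pair `i` of a row at position `pos` is rotated by `theta_i = pos * theta_scale^i`, and the pairs are adjacent elements `(x[2i], x[2i+1])`. The sketch below is a simplified CPU reference under stated assumptions. It covers only normal mode and assumes `ext_factor = 0`, `freq_scale = 1`, `attn_factor = 1` and no frequency factors (`src2`), so the YaRN correction (`corr_dims`) and the multi-section `sections` layout are not modeled. `rope_normal_reference` is an illustrative name.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Simplified CPU reference for "normal" RoPE on one row at position pos.
// Assumes ext_factor = 0, freq_scale = 1, attn_factor = 1 (no YaRN correction).
// Adjacent pairs (x[2i], x[2i+1]) are rotated by theta_i = pos * theta_scale^i.
std::vector<float> rope_normal_reference(const std::vector<float> & x, int n_dims, int pos, float freq_base) {
    assert(n_dims % 2 == 0 && (int) x.size() >= n_dims);
    const float theta_scale = std::pow(freq_base, -2.0f / n_dims);
    std::vector<float> y = x;  // elements beyond n_dims pass through unchanged
    float theta = (float) pos;
    for (int i = 0; i < n_dims; i += 2) {
        const float c = std::cos(theta);
        const float s = std::sin(theta);
        y[i]     = x[i] * c - x[i + 1] * s;
        y[i + 1] = x[i] * s + x[i + 1] * c;
        theta *= theta_scale;
    }
    return y;
}
```

Position 0 leaves the row unchanged. At any position, each rotated pair keeps its Euclidean norm, which makes a quick sanity check for GPU output.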

static webgpu_command ggml_webgpu_glu(webgpu_context & ctx, ggml_tensor * src0, ggml_tensor * src1, ggml_tensor * dst) {
    // split = 1 when the second GLU operand is a separate tensor (src1).
    const int split = (src1 != nullptr);

    std::vector<uint32_t> params = {
        // offsets (in elements) from each aligned binding offset
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        src1 != nullptr ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)) : 0,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        // strides in elements; without src1, its strides repeat those of src0
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        src1 != nullptr ? (uint32_t) (src1->nb[1] / ggml_type_size(src1->type)) :
                          (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        src1 != nullptr ? (uint32_t) (src1->nb[2] / ggml_type_size(src1->type)) :
                          (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        src1 != nullptr ? (uint32_t) (src1->nb[3] / ggml_type_size(src1->type)) :
                          (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        // element count and shape of dst
        (uint32_t) ggml_nelements(dst),
        (uint32_t) dst->ne[0],
        (uint32_t) dst->ne[1],
        (uint32_t) dst->ne[2],
        (uint32_t) ((int32_t *) dst->op_params)[1],  // swapped
        *(uint32_t *) &dst->op_params[2],            // alpha, for swiglu_oai
        *(uint32_t *) &dst->op_params[3],            // limit, for swiglu_oai
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) },
    };

    // In the split variant src1 takes binding 1 and dst moves to binding 2.
    uint32_t dst_binding = 1;
    if (split) {
        dst_binding = 2;
        entries.push_back({ .binding = 1,
                            .buffer  = ggml_webgpu_tensor_buf(src1),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, src1),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, src1) });
    }

    entries.push_back({ .binding = dst_binding,
                        .buffer  = ggml_webgpu_tensor_buf(dst),
                        .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                        .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });

    // Pipeline variant: GLU op x output type x split.
    webgpu_pipeline pipeline = ctx->glu_pipelines[ggml_get_glu_op(dst)][dst->type][split];
    uint32_t        wg_x     = CEIL_DIV(ggml_nelements(dst), WEBGPU_MAX_WG_SIZE);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
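Several float parameters above (RoPE's `corr_dims`, GLU's `alpha` and `limit`) travel in the same `u32` parameter array, so the code stores their raw IEEE-754 bit patterns through pointer casts such as `*(uint32_t *) &f`. A minimal sketch of the same packing with `std::memcpy`, which sidesteps strict-aliasing concerns (C++20 code could use `std::bit_cast`). The helper names are hypothetical, and the assumption that the shader recovers each value with WGSL's `bitcast<f32>` is not visible in this section.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical helper: the IEEE-754 bit pattern of a float, as a u32.
static uint32_t f32_bits(float f) {
    static_assert(sizeof(float) == sizeof(uint32_t), "expects a 32-bit float");
    uint32_t u;
    std::memcpy(&u, &f, sizeof(u));
    return u;
}

// Hypothetical helper: the inverse of f32_bits (what a shader-side bitcast would do).
static float f32_from_bits(uint32_t u) {
    float f;
    std::memcpy(&f, &u, sizeof(f));
    return f;
}

// Append a float to a u32 parameter vector without changing its bits.
static void push_f32(std::vector<uint32_t> & params, float f) {
    params.push_back(f32_bits(f));
}
```

Because the bits are copied rather than converted, `(uint32_t) 7.0f` (which would give 7) and `f32_bits(7.0f)` (which gives `0x40E00000`) are very different values; only the latter survives a bitcast back to `f32`.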

static webgpu_command ggml_webgpu_scale(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    bool inplace = ggml_webgpu_tensor_equal(src, dst);

    ggml_webgpu_shader_lib_context shader_lib_ctx = {
        .src0        = src,
        .src1        = nullptr,
        .dst         = dst,
        .max_wg_size = ctx->global_ctx->capabilities.limits.maxComputeInvocationsPerWorkgroup,
        .inplace     = inplace,
    };

    webgpu_pipeline pipeline  = ctx->shader_lib->get_scale_pipeline(shader_lib_ctx);
    auto *          decisions = static_cast<ggml_webgpu_generic_shader_decisions *>(pipeline.context.get());

    // Shader parameters: element offsets, element strides, element count, shape,
    // then scale and bias passed as raw float bits.
    std::vector<uint32_t> params = {
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        (uint32_t) (src->nb[1] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[2] / ggml_type_size(src->type)),
        (uint32_t) (src->nb[3] / ggml_type_size(src->type)),
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        (uint32_t) ggml_nelements(dst),
        (uint32_t) src->ne[0],
        (uint32_t) src->ne[1],
        (uint32_t) src->ne[2],
        *(uint32_t *) dst->op_params,     // scale
        *(uint32_t *) &dst->op_params[1]  // bias
    };

    // src is always at binding 0; dst gets binding 1 only when the op is not in place.
    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src) }
    };

    if (!inplace) {
        entries.push_back({ .binding = 1,
                            .buffer  = ggml_webgpu_tensor_buf(dst),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });
    }

    // The workgroup size comes from the shader library's decisions for this pipeline.
    uint32_t wg_x = CEIL_DIV(ggml_nelements(dst), decisions->wg_size);
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool, pipeline, params, entries, wg_x);
}
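The scale op reads `scale` and `bias` from `op_params` and launches `CEIL_DIV(n, wg_size)` workgroups so that every element is covered. A minimal CPU reference sketch for checking shader output on a contiguous tensor; it assumes the per-element formula `x * scale + bias` (the semantics of ggml's `ggml_scale_bias`) and deliberately ignores the strided, misaligned addressing that the real parameters describe. The function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Same rounding-up division as the CEIL_DIV macro in this file.
static uint32_t ceil_div(uint32_t m, uint32_t n) {
    assert(n > 0);
    return (m + n - 1) / n;
}

// Hypothetical CPU reference for a contiguous tensor: dst[i] = src[i] * scale + bias.
static std::vector<float> scale_bias_ref(const std::vector<float> & src, float scale, float bias) {
    std::vector<float> dst(src.size());
    for (size_t i = 0; i < src.size(); ++i) {
        dst[i] = src[i] * scale + bias;
    }
    return dst;
}
```

With a workgroup size of 256, 1000 elements need 4 workgroups; the last one has 24 idle invocations, which the shader is expected to skip with a bounds check against the element count passed in `params`.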

static webgpu_command ggml_webgpu_soft_max(webgpu_context & ctx,
                                           ggml_tensor *    src0,
                                           ggml_tensor *    src1,
                                           ggml_tensor *    src2,
                                           ggml_tensor *    dst) {
    const int inplace   = ggml_webgpu_tensor_equal(src0, dst);
    // mask_type: 0 = F32 mask, 1 = F16 mask (the ggml_type values), 2 = no mask
    const int mask_type = (src1 != nullptr) ? src1->type : 2;
    const int has_sink  = (src2 != nullptr);

    // ALiBi parameters, derived from max_bias and the number of heads (src0->ne[2]).
    float max_bias;
    memcpy(&max_bias, (float *) dst->op_params + 1, sizeof(float));
    float n_head_log2 = float(1u << (uint32_t) floor(log2(src0->ne[2])));
    float m0          = powf(2.0f, -(max_bias) / n_head_log2);
    float m1          = powf(2.0f, -(max_bias / 2.0f) / n_head_log2);

    std::vector<uint32_t> params = {
        // offsets (in elements) from each aligned binding offset; 0 for absent inputs
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src0) / ggml_type_size(src0->type)),
        mask_type < 2 ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src1) / ggml_type_size(src1->type)) : 0,
        has_sink ? (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src2) / ggml_type_size(src2->type)) : 0,
        (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, dst) / ggml_type_size(dst->type)),
        // strides in elements
        (uint32_t) (src0->nb[1] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[2] / ggml_type_size(src0->type)),
        (uint32_t) (src0->nb[3] / ggml_type_size(src0->type)),
        mask_type < 2 ? (uint32_t) (src1->nb[1] / ggml_type_size(src1->type)) : 0,
        mask_type < 2 ? (uint32_t) (src1->nb[2] / ggml_type_size(src1->type)) : 0,
        mask_type < 2 ? (uint32_t) (src1->nb[3] / ggml_type_size(src1->type)) : 0,
        (uint32_t) (dst->nb[1] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[2] / ggml_type_size(dst->type)),
        (uint32_t) (dst->nb[3] / ggml_type_size(dst->type)),
        // element count and shapes
        (uint32_t) ggml_nelements(dst),
        (uint32_t) src0->ne[0],
        (uint32_t) src0->ne[1],
        (uint32_t) src0->ne[2],
        mask_type < 2 ? (uint32_t) src1->ne[2] : 0,
        mask_type < 2 ? (uint32_t) src1->ne[3] : 0,
        // float parameters passed as raw bits
        *(uint32_t *) dst->op_params,  // scale
        *(uint32_t *) &max_bias,
        *(uint32_t *) &n_head_log2,
        *(uint32_t *) &m0,
        *(uint32_t *) &m1
    };

    std::vector<wgpu::BindGroupEntry> entries = {
        { .binding = 0,
          .buffer  = ggml_webgpu_tensor_buf(src0),
          .offset  = ggml_webgpu_tensor_align_offset(ctx, src0),
          .size    = ggml_webgpu_tensor_binding_size(ctx, src0) }
    };

    // Optional inputs (mask, then sink) and dst take consecutive bindings after src0.
    uint32_t binding_num = 1;
    if (mask_type < 2) {
        entries.push_back({ .binding = binding_num,
                            .buffer  = ggml_webgpu_tensor_buf(src1),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, src1),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, src1) });
        binding_num++;
    }
    if (has_sink) {
        entries.push_back({ .binding = binding_num,
                            .buffer  = ggml_webgpu_tensor_buf(src2),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, src2),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, src2) });
        binding_num++;
    }
    if (!inplace) {
        entries.push_back({ .binding = binding_num,
                            .buffer  = ggml_webgpu_tensor_buf(dst),
                            .offset  = ggml_webgpu_tensor_align_offset(ctx, dst),
                            .size    = ggml_webgpu_tensor_binding_size(ctx, dst) });
    }

    // One workgroup per row of dst.
    return ggml_backend_webgpu_build(ctx->global_ctx, ctx->param_buf_pool,
                                     ctx->soft_max_pipelines[mask_type][has_sink][inplace], params, entries,
                                     ggml_nrows(dst));
}
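The soft-max path precomputes `n_head_log2`, `m0` and `m1` on the host. In ggml's CPU soft_max these feed a per-head ALiBi slope: heads below `n_head_log2` use powers of `m0`, the remaining heads use odd powers of `m1`, and the slope is 1 when `max_bias` is 0. The sketch below reproduces that formula with hypothetical helper names; it assumes the WebGPU shader applies the same per-head rule, which is not visible in this section.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Host-side ALiBi parameters, computed the same way as in ggml_webgpu_soft_max.
struct alibi_params {
    float n_head_log2;  // largest power of two <= n_head
    float m0;
    float m1;
};

static alibi_params make_alibi_params(float max_bias, int64_t n_head) {
    assert(n_head > 0);
    alibi_params p;
    p.n_head_log2 = float(1u << (uint32_t) std::floor(std::log2((double) n_head)));
    p.m0          = std::pow(2.0f, -(max_bias) / p.n_head_log2);
    p.m1          = std::pow(2.0f, -(max_bias / 2.0f) / p.n_head_log2);
    return p;
}

// Per-head slope applied to the mask (ggml CPU formula; assumed, not confirmed, to match the shader).
static float alibi_slope(const alibi_params & p, float max_bias, uint32_t h) {
    if (max_bias <= 0.0f) {
        return 1.0f;  // ALiBi disabled
    }
    if (h < p.n_head_log2) {
        return std::pow(p.m0, float(h + 1));
    }
    return std::pow(p.m1, float(2 * (h - (uint32_t) p.n_head_log2) + 1));
}
```

With 8 heads and `max_bias = 8`, `m0 = 0.5` and the slopes run 1/2, 1/4, ..., 1/256; with 12 heads, heads 8 to 11 fall back to odd powers of `m1 = 2^-0.5`.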

static webgpu_command ggml_webgpu_argmax(webgpu_context & ctx, ggml_tensor * src, ggml_tensor * dst) {
    std::vector<uint32_t> params = { (uint32_t) (ggml_webgpu_tensor_misalignment(ctx, src) / ggml_type_size(src->type)),