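This article takes an engineering view of how to combine a data pipeline with AI tools to build an Amazon product-selection analysis system that is hard for competitors to replicate. It covers review semantic analysis, competitive position data collection, AI Agent workflow design, and complete Python code examples.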

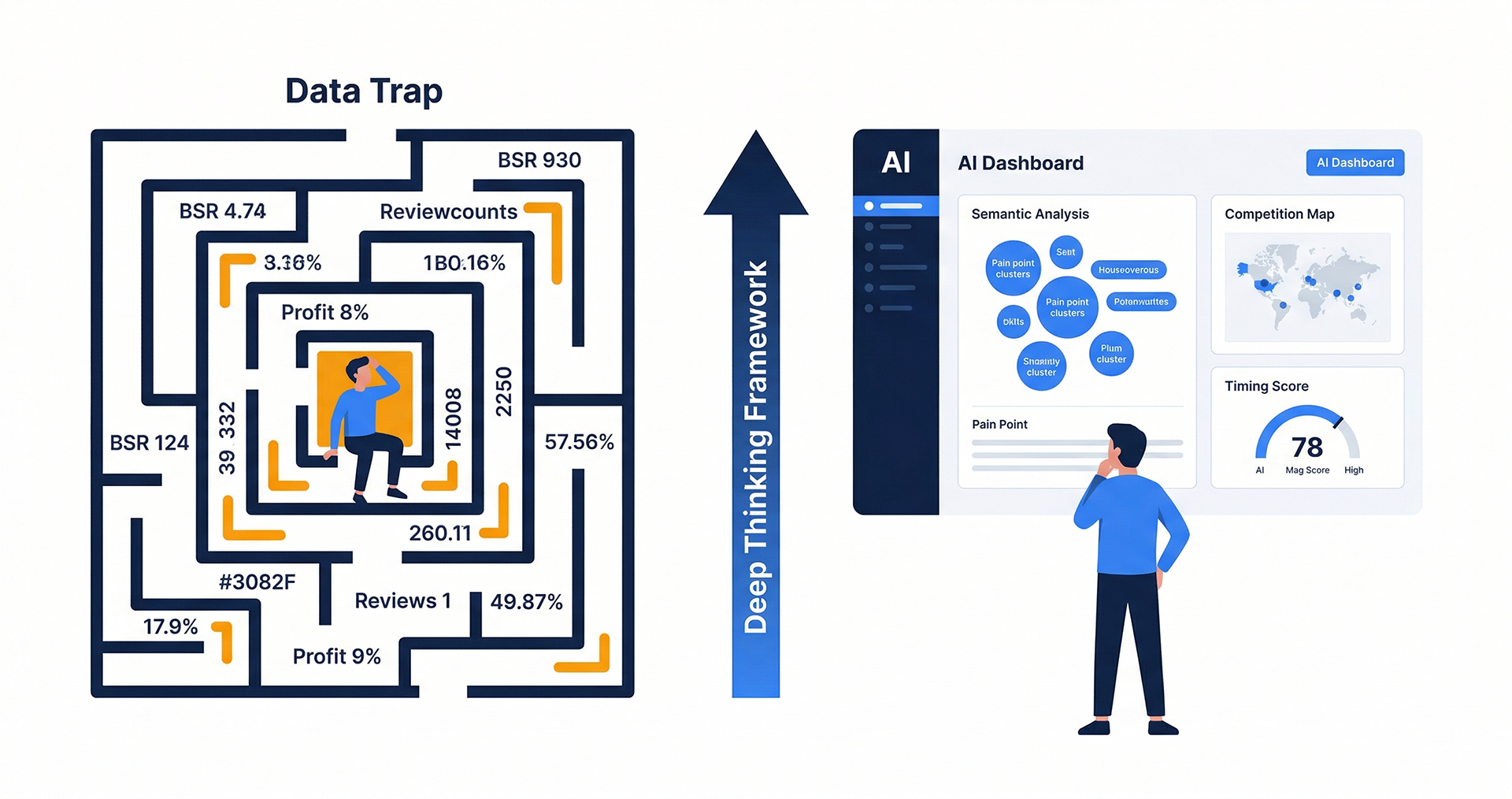

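Why Most AI Product-Selection Tools Miss the Core

AI product-selection tools on the market today stop at two levels: data aggregation (consolidating public data into a dashboard) and statistical analysis (running preset algorithms over the aggregated data). Both levels solve an efficiency problem, not a depth-of-judgment problem.

The real competitive moat comes from a third level: semantic understanding plus a custom analysis framework. Concretely, that means feeding raw review text, search result HTML, and ad placement distribution data into a semantic understanding engine to extract signals that sit closer to market reality than BSR and search volume.

This requires three technical components: a high-quality data collection API (for data acquisition), a semantic analysis engine (for data understanding), and a signal trigger mechanism (for timeliness). The sections below cover each in turn.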

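Component 1: Review Semantic Analysis System

Design approach

Reviews are currently the user feedback data with the highest signal-to-noise ratio in the Amazon ecosystem. They reflect the real state of demand better than search volume does, and they are more representative at scale than expert opinion. Raw review text still has to be processed before it becomes a usable signal.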

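The complete review analysis pipeline looks like this:

Raw URL list

→ Reviews Scraper API (fetch review JSON in bulk)

→ Preprocessing (cleaning, deduplication, date filtering)

→ LLM semantic analysis (pain point classification, value theme extraction)

→ Structured output (consumed by the product-selection decision layer)

Code implementation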
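The preprocessing stage (cleaning, deduplication, date filtering) is the one pipeline step that the implementation in this section does not spell out. A minimal sketch of it follows, assuming each review is a dict with a `body` and an ISO 8601 `date` string. The `date` field name and its format are assumptions, since the parsed review schema is not documented here, and `preprocess_reviews` is a hypothetical helper.

```python
import re
from datetime import datetime, timedelta, timezone


def preprocess_reviews(reviews, max_age_days=365, now=None):
    """Clean, deduplicate, and date-filter raw review dicts.

    Assumes each review may carry `body` and an ISO 8601 `date` string
    (hypothetical field names). Reviews without a parseable date are kept.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    seen = set()
    cleaned = []
    for review in reviews:
        # Cleaning: collapse whitespace and skip reviews with an empty body
        body = re.sub(r"\s+", " ", review.get("body") or "").strip()
        if not body:
            continue
        # Deduplication: identical bodies (case-insensitive) count once
        key = body.lower()
        if key in seen:
            continue
        # Date filter: drop reviews older than the cutoff
        posted = None
        try:
            posted = datetime.fromisoformat(review.get("date"))
        except (TypeError, ValueError):
            pass  # missing or unparseable date: keep the review
        if posted is not None:
            if posted.tzinfo is None:
                posted = posted.replace(tzinfo=timezone.utc)  # assume UTC
            if posted < cutoff:
                continue
        seen.add(key)
        cleaned.append({**review, "body": body})
    return cleaned
```

Running this on the review list before it is split into negative and positive samples keeps duplicate or stale reviews from taking up space in the LLM prompt.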

```python
import asyncio
import json
from collections import Counter
from dataclasses import dataclass
from typing import Optional

import anthropic
import httpx

PANGOLINFO_API_KEY = "your_api_key_here"
CLAUDE_API_KEY = "your_claude_key_here"

# Fields expected in the LLM's JSON response
ANALYSIS_FIELDS = (
    "pain_points",
    "positive_themes",
    "unmet_needs",
    "user_persona_insights",
    "differentiation_opportunities",
)


@dataclass
class ReviewBatch:
    asin: str
    reviews: list[dict]
    total_count: int
    avg_rating: float


@dataclass
class ReviewAnalysis:
    asin: str
    pain_points: list[dict]  # [{theme, frequency_pct, severity, specific_examples}]
    positive_themes: list[dict]  # [{theme, frequency_pct}]
    unmet_needs: list[str]  # core needs that existing products do not meet
    user_persona_insights: list[str]  # user persona insights
    differentiation_opportunities: list[str]  # differentiation opportunities


async def fetch_reviews(asin: str, pages: int = 5) -> ReviewBatch:
    """
    Fetch reviews for one ASIN through the Pangolinfo Reviews Scraper API.
    Pages are collected one at a time, roughly 10 reviews per page.
    """
    all_reviews = []
    async with httpx.AsyncClient(timeout=30) as client:
        for page in range(1, pages + 1):
            payload = {
                "url": f"https://www.amazon.com/product-reviews/{asin}/?pageNumber={page}",
                "output_format": "json",
                "parse_template": "amazon_reviews",
                "geo": {"country": "US"}
            }
            response = await client.post(
                "https://api.pangolinfo.com/v1/scrape",
                json=payload,
                headers={"Authorization": f"Bearer {PANGOLINFO_API_KEY}"}
            )
            if response.status_code == 200:
                data = response.json()
                reviews = data.get("parsed_data", {}).get("reviews", [])
                all_reviews.extend(reviews)
            await asyncio.sleep(0.5)  # polite delay between pages

    if not all_reviews:
        return ReviewBatch(asin=asin, reviews=[], total_count=0, avg_rating=0.0)

    # Average only over reviews that carry a rating, so missing values do not skew it
    ratings = [r["rating"] for r in all_reviews if r.get("rating") is not None]
    avg_rating = sum(ratings) / len(ratings) if ratings else 0.0
    return ReviewBatch(
        asin=asin,
        reviews=all_reviews,
        total_count=len(all_reviews),
        avg_rating=avg_rating
    )


async def analyze_reviews_with_ai(batch: ReviewBatch) -> ReviewAnalysis:
    """
    Run semantic analysis on a review batch with Claude.
    Focus: user pain points, unmet needs, differentiation opportunities.
    """
    # Async client, so the LLM call does not block the event loop
    client = anthropic.AsyncAnthropic(api_key=CLAUDE_API_KEY)

    # Split reviews by rating, with negative reviews given priority.
    # Unrated reviews are treated as neutral (3) and land in neither bucket.
    negative_reviews = [r for r in batch.reviews if (r.get("rating") or 3) <= 2]
    positive_reviews = [r for r in batch.reviews if (r.get("rating") or 3) >= 4]

    negative_text = "\n---\n".join([
        f"Rating: {r.get('rating')}/5\nTitle: {r.get('title', '')}\nBody: {(r.get('body') or '')[:400]}"
        for r in negative_reviews[:30]  # at most 30 negative reviews
    ])
    positive_text = "\n---\n".join([
        f"Rating: {r.get('rating')}/5\nTitle: {r.get('title', '')}\nBody: {(r.get('body') or '')[:300]}"
        for r in positive_reviews[:20]  # at most 20 positive reviews
    ])

    prompt = f"""
Analyze these Amazon product reviews for ASIN {batch.asin}.

NEGATIVE REVIEWS (total: {len(negative_reviews)}):
{negative_text}

POSITIVE REVIEWS (sample of {len(positive_reviews[:20])}):
{positive_text}

Provide a structured analysis in valid JSON with these exact fields:
{{
  "pain_points": [
    {{"theme": "string", "frequency_pct": float, "severity": "high|medium|low", "specific_examples": ["string"]}}
  ],
  "positive_themes": [
    {{"theme": "string", "frequency_pct": float}}
  ],
  "unmet_needs": ["string"],
  "user_persona_insights": ["string"],
  "differentiation_opportunities": ["string"]
}}

Be specific. Use exact language from reviews where possible. Return ONLY the JSON, no other text.
"""

    message = await client.messages.create(
        model="claude-opus-4-5",
        max_tokens=2048,
        messages=[{"role": "user", "content": prompt}]
    )

    # Models sometimes wrap JSON in a Markdown code fence; strip it before parsing
    raw = message.content[0].text.strip().strip("`").strip()
    raw = raw.removeprefix("json").strip()
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        parsed = {}  # fall back to an empty structure if parsing fails
    if not isinstance(parsed, dict):
        parsed = {}

    # Keep only the expected fields so extra or missing keys cannot break the constructor
    analysis_data = {key: parsed.get(key) or [] for key in ANALYSIS_FIELDS}
    return ReviewAnalysis(asin=batch.asin, **analysis_data)


async def batch_analyze_category(asin_list: list[str]) -> list[ReviewAnalysis]:
    """
    Analyze reviews for multiple ASINs in a category concurrently.
    Concurrency is capped to stay under API rate limits.
    """
    semaphore = asyncio.Semaphore(5)  # at most 5 ASINs in flight

    async def analyze_single(asin: str) -> Optional[ReviewAnalysis]:
        async with semaphore:
            try:
                batch = await fetch_reviews(asin, pages=3)
                if batch.total_count < 5:
                    return None  # too few reviews to analyze
                return await analyze_reviews_with_ai(batch)
            except Exception as e:
                print(f"Error analyzing {asin}: {e}")
                return None

    tasks = [analyze_single(asin) for asin in asin_list]
    results = await asyncio.gather(*tasks)
    return [r for r in results if r is not None]


def aggregate_category_insights(analyses: list[ReviewAnalysis]) -> dict:
    """
    Aggregate category-level conclusions.
    Extracts shared pain points and differentiation opportunities across ASINs.
    """
    # Count how many ASINs mention each pain point theme (once per ASIN)
    pain_point_counter = Counter()
    for analysis in analyses:
        themes = {pp.get("theme") for pp in analysis.pain_points if pp.get("theme")}
        pain_point_counter.update(themes)
    common_pain_points = pain_point_counter.most_common(5)

    # Pool all differentiation opportunities and unmet needs
    all_opportunities = []
    all_unmet_needs = []
    for analysis in analyses:
        all_opportunities.extend(analysis.differentiation_opportunities)
        all_unmet_needs.extend(analysis.unmet_needs)

    return {
        "category_pain_points": common_pain_points,
        # dict.fromkeys removes duplicates while keeping first-seen order
        "differentiation_pool": list(dict.fromkeys(all_opportunities)),
        "unmet_needs_pool": list(dict.fromkeys(all_unmet_needs)),
        "asins_analyzed": len(analyses)
    }
```

Component 2: Competitive Position Data Collection

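Sponsored Products (SP) ad placement distribution analysis

SP ad placement coverage is one of the most important inputs for competitive structure analysis, but this data is only meaningful when it is collected in real time, because ad placements are auctioned in real time.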
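Because placements change between requests, a capture can only be compared with later ones if it is stored together with the time it was taken. The sketch below shows one minimal way to do that, assuming a local JSON Lines file is acceptable storage. `append_snapshot` and `load_snapshots` are hypothetical helpers, not part of any API mentioned in this article, and timestamps are assumed to be UTC so that their ISO strings sort chronologically.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def append_snapshot(path, keyword, sponsored_asins, captured_at=None):
    """Append one timestamped capture of sponsored ASINs to a JSON Lines file."""
    captured_at = captured_at or datetime.now(timezone.utc)
    record = {
        "keyword": keyword,
        "captured_at": captured_at.isoformat(),
        "sponsored_asins": list(sponsored_asins),
    }
    with Path(path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record


def load_snapshots(path, keyword):
    """Return all captures for one keyword, oldest first."""
    records = []
    with Path(path).open(encoding="utf-8") as fh:
        for line in fh:
            if not line.strip():
                continue
            record = json.loads(line)
            if record["keyword"] == keyword:
                records.append(record)
    # ISO 8601 strings in the same timezone sort chronologically
    return sorted(records, key=lambda r: r["captured_at"])
```

Keeping every capture as its own line makes it cheap to append from a scheduled job and to replay the history of a keyword later.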

```python
import asyncio
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional
from urllib.parse import quote_plus

import httpx

PANGOLINFO_API_KEY = "your_api_key"


@dataclass
class SearchResultSlot:
    position: int
    asin: str
    is_sponsored: bool
    slot_type: str  # "top_banner" | "inline" | "sidebar" | "organic"
    title: str
    price: Optional[float]
    rating: Optional[float]
    review_count: Optional[int]


@dataclass
class KeywordCompetitionProfile:
    keyword: str
    total_slots: int
    sponsored_slots: list[SearchResultSlot]
    organic_top10: list[SearchResultSlot]
    top_advertisers: list[str]  # ASINs, sorted by number of ad placements
    sp_concentration: float  # combined SP share of the top three advertisers
    organic_difficulty: float  # 0-1, estimated from review counts of top organic ASINs


async def fetch_search_result_page(
    keyword: str,
    page: int = 1,
    zip_code: str = "10001"
) -> dict:
    """
    Collect an Amazon search result page, including SP placements and organic ranks.
    zip_code pins the request to a specific area (Amazon ads are geo-targeted).
    """
    # quote_plus encodes spaces as "+" and escapes other special characters
    url = f"https://www.amazon.com/s?k={quote_plus(keyword)}&page={page}"
    async with httpx.AsyncClient(timeout=30) as client:
        response = await client.post(
            "https://api.pangolinfo.com/v1/scrape",
            json={
                "url": url,
                "render_js": True,  # SP ads only load after JS rendering
                "output_format": "json",
                "parse_template": "amazon_search",
                "geo": {"country": "US", "zip_code": zip_code}
            },
            headers={"Authorization": f"Bearer {PANGOLINFO_API_KEY}"}
        )
        if response.status_code != 200:
            return {}
        return response.json()


def parse_competition_profile(keyword: str, raw_data: dict) -> KeywordCompetitionProfile:
    """Extract a competitive structure profile from the raw collected data."""
    parsed = raw_data.get("parsed_data", {})
    results = parsed.get("results", [])

    sponsored_slots = []
    organic_slots = []
    for i, result in enumerate(results):
        slot = SearchResultSlot(
            position=i + 1,
            asin=result.get("asin", ""),
            is_sponsored=result.get("is_sponsored", False),
            slot_type=result.get("slot_type", "organic"),
            title=result.get("title", ""),
            price=result.get("price"),
            rating=result.get("rating"),
            review_count=result.get("review_count")
        )
        if slot.is_sponsored:
            sponsored_slots.append(slot)
        else:
            organic_slots.append(slot)

    # SP concentration: share of sponsored slots held by the top three advertisers
    advertiser_counts = defaultdict(int)
    for slot in sponsored_slots:
        if slot.asin:
            advertiser_counts[slot.asin] += 1
    top_advertisers = sorted(advertiser_counts.items(), key=lambda x: x[1], reverse=True)
    top_3_count = sum(count for _, count in top_advertisers[:3])
    sp_concentration = top_3_count / max(len(sponsored_slots), 1)

    # Organic ranking difficulty: based on review counts of the top organic slots
    organic_review_counts = [
        slot.review_count for slot in organic_slots[:10] if slot.review_count
    ]
    avg_top_organic_reviews = (
        sum(organic_review_counts) / len(organic_review_counts)
        if organic_review_counts else 0
    )
    organic_difficulty = min(avg_top_organic_reviews / 5000, 1.0)  # normalize to 0-1

    return KeywordCompetitionProfile(
        keyword=keyword,
        total_slots=len(results),
        sponsored_slots=sponsored_slots,
        organic_top10=organic_slots[:10],
        top_advertisers=[asin for asin, _ in top_advertisers[:5]],
        sp_concentration=sp_concentration,
        organic_difficulty=organic_difficulty
    )


async def multi_keyword_competition_scan(
    keywords: list[str],
    days: int = 7
) -> dict[str, list[KeywordCompetitionProfile]]:
    """
    Multi-keyword competition scan.
    Takes one snapshot per keyword per day for `days` consecutive days
    to observe how the competitive landscape changes over time.
    """
    results = defaultdict(list)
    for day in range(days):
        for keyword in keywords:
            raw = await fetch_search_result_page(keyword)
            if raw:
                results[keyword].append(parse_competition_profile(keyword, raw))
        if day < days - 1:
            # Wait one day before the next snapshot
            # (in real use, replace this sleep with a scheduled job)
            await asyncio.sleep(24 * 3600)
    return dict(results)
```

Component 3: Agent Workflow for Automating the Analysis Process

Connecting the Pangolinfo Amazon Scraper Skill through the MCP protocol lets an AI Agent trigger data collection and analysis on its own, without manual intervention at each step.
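The workflow in this section is described as signal detection, then automatically triggered analysis, then a pushed recommendation. The code that follows covers detection and produces alert dicts carrying a `recommended_action` field. The triggering and push steps could be sketched as a small dispatcher like the one below. It is an illustration under stated assumptions: `dispatch_alerts`, the `handlers` mapping, and the `notify` hook are all hypothetical and do not describe how the Amazon Scraper Skill or MCP route actions.

```python
import asyncio


async def dispatch_alerts(alerts, handlers, notify):
    """Route each alert to the handler registered for its recommended_action.

    `handlers` maps action names to async callables that take the alert;
    `notify` is an async callable that delivers (alert, result).
    Both are hypothetical hooks. Returns the alerts that had no handler.
    """
    unhandled = []
    for alert in alerts:
        handler = handlers.get(alert.get("recommended_action"))
        if handler is None:
            unhandled.append(alert)  # nothing registered for this action
            continue
        result = await handler(alert)  # triggered analysis
        await notify(alert, result)  # push the outcome to the team
    return unhandled
```

A handler for `"initiate_deep_analysis"` might, for example, run the review analysis from Component 1 on the alert's `top_advertisers`, while `notify` posts the result to whatever channel the team uses.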

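```python
# Core design of the Agent workflow:
# signal detection → automatically triggered analysis → pushed recommendation
# Reuses fetch_search_result_page and parse_competition_profile from Component 2.

class MarketSignalDetector:
    """
    Market signal detector.
    Triggers deep analysis automatically when preset conditions are met.
    """

    def __init__(self, thresholds: dict):
        # Example thresholds:
        # {"search_volume_growth_weekly": 0.15, "new_listings_monthly": 50}
        self.thresholds = thresholds
        self.triggered_analyses = []

    def check_entry_opportunity(self, keyword: str, metrics: dict) -> bool:
        """Detect whether an entry opportunity signal has appeared."""
        conditions = [
            # Weekly search volume growth exceeds the threshold
            metrics.get("search_volume_growth_weekly", 0) >= self.thresholds["search_volume_growth_weekly"],
            # New listing rate is below the threat threshold
            metrics.get("new_listings_monthly", 999) <= self.thresholds["new_listings_monthly"],
            # Low SP concentration among top advertisers (market not yet monopolized)
            metrics.get("sp_concentration", 1.0) <= 0.6,
        ]
        # Trigger only when at least two conditions hold
        return sum(conditions) >= 2

    def check_competitor_weakness(self, competitor_asin: str, recent_reviews: list) -> bool:
        """
        Detect a burst of negative reviews on a competitor (a potential opening).
        `recent_reviews` is assumed to be sorted from oldest to newest.
        """
        window = 30  # the 30 most recent reviews form the "recent" sample
        if len(recent_reviews) < window + 10:
            return False  # need at least 10 older reviews as a baseline

        def average_rating(reviews: list) -> float:
            return sum(r.get("rating", 3) for r in reviews) / len(reviews)

        # Average rating of the most recent reviews
        recent_avg = average_rating(recent_reviews[-window:])
        # Historical rating baseline
        historical_avg = average_rating(recent_reviews[:-window])
        # A drop of more than 0.5 stars counts as a negative burst
        return (historical_avg - recent_avg) > 0.5


# Usage example
async def run_monitoring_cycle(watchlist: list[str]) -> list[dict]:
    """
    A monitoring cycle meant to run on a schedule.
    Runs the full signal detection for every keyword in the watchlist.
    """
    detector = MarketSignalDetector(thresholds={
        "search_volume_growth_weekly": 0.12,
        "new_listings_monthly": 40,
    })
    alerts = []

    for keyword in watchlist:
        # Fetch the latest search results
        raw_data = await fetch_search_result_page(keyword)
        if not raw_data:
            continue  # a failed fetch would otherwise look like zero concentration
        profile = parse_competition_profile(keyword, raw_data)

        # The metrics source is simplified here; real use needs historical data as a baseline
        mock_metrics = {
            "search_volume_growth_weekly": 0.18,  # mock data
            "new_listings_monthly": 32,  # mock data
            "sp_concentration": profile.sp_concentration
        }

        if detector.check_entry_opportunity(keyword, mock_metrics):
            alerts.append({
                "type": "entry_opportunity",
                "keyword": keyword,
                "sp_concentration": profile.sp_concentration,
                "organic_difficulty": profile.organic_difficulty,
                "top_advertisers": profile.top_advertisers,
                "recommended_action": "initiate_deep_analysis"
            })

    return alerts
```

Example Output of the Three-Layer Analysis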
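One caveat before the brief generator: the brief ranks pain points by how many ASINs mention each theme, and that count depends on exact string matches between theme labels that the LLM produced separately for each ASIN. Labels such as "Lid leaks!" and "lid leaks" would be counted as different themes. The sketch below shows a light normalization step, assuming that case, punctuation, and spacing differences are the main source of mismatch. It does not merge paraphrases ("leaky lid" versus "lid leaks"), which would need embedding-based or LLM-based clustering. `normalize_theme` and `count_themes_across_asins` are hypothetical helpers.

```python
import re
from collections import Counter


def normalize_theme(theme):
    """Lowercase, drop punctuation, and collapse whitespace in a theme label."""
    text = re.sub(r"[^\w\s]", " ", theme.lower())
    return re.sub(r"\s+", " ", text).strip()


def count_themes_across_asins(themes_by_asin):
    """Count how many ASINs mention each normalized theme.

    `themes_by_asin` maps an ASIN to its list of theme labels.
    Each ASIN contributes at most once per theme.
    """
    counter = Counter()
    for themes in themes_by_asin.values():
        counter.update({normalize_theme(t) for t in themes if t})
    return counter
```

Calling `most_common(5)` on the returned counter gives `(theme, asin_count)` pairs in the same shape the brief expects for `category_pain_points`.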

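```python
# Reuses KeywordCompetitionProfile from Component 2.

def generate_sourcing_decision_brief(
    category: str,
    review_insights: dict,
    competition_profiles: list[KeywordCompetitionProfile]
) -> str:
    """
    Generate a structured product-selection decision brief
    that the selection team can use directly.
    """
    if not competition_profiles:
        raise ValueError("competition_profiles must not be empty")

    # Key metrics
    avg_sp_concentration = sum(p.sp_concentration for p in competition_profiles) / len(competition_profiles)
    avg_organic_difficulty = sum(p.organic_difficulty for p in competition_profiles) / len(competition_profiles)
    top_pain_points = review_insights.get("category_pain_points", [])[:3]
    opportunities = review_insights.get("differentiation_pool", [])[:3]

    # Pre-render the list sections to keep the template readable
    sp_level = "high" if avg_sp_concentration > 0.6 else "medium" if avg_sp_concentration > 0.4 else "low"
    organic_level = "high" if avg_organic_difficulty > 0.7 else "medium" if avg_organic_difficulty > 0.4 else "low"
    pain_lines = "\n".join(
        f"  {i + 1}. {theme} (mentioned for {count} ASINs)"
        for i, (theme, count) in enumerate(top_pain_points)
    )
    opportunity_lines = "\n".join(f"  • {opp}" for opp in opportunities)
    can_enter = avg_sp_concentration < 0.65 and avg_organic_difficulty < 0.7

    brief = f"""
====== Product Selection Brief: {category} ======

📊 Competitive structure
- SP ad concentration: {avg_sp_concentration:.1%} ({sp_level})
- Organic ranking difficulty: {avg_organic_difficulty:.1%} ({organic_level})

😤 Core user pain points (by frequency)
{pain_lines}

💡 Differentiation opportunities
{opportunity_lines}

🎯 Entry recommendation
{'Entry recommended' if can_enter else 'Evaluate with caution'}
- Differentiation direction: {opportunities[0] if opportunities else 'needs further analysis'}
- Review target: build up a first batch of high-quality reviews within the first 60 days (suggested target: 50+)
- Ad strategy reference: {'prioritize precise long-tail keywords' if avg_sp_concentration > 0.5 else 'moderate broad match is worth considering'}
"""
    return brief
```

Summary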

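Building an AI product-selection system with a real competitive moat takes engineering work at three levels:

| Layer | Core tools | Output |
|---|---|---|
| Data collection | Pangolinfo Scrape API + Reviews Scraper API | Review JSON, search result pages, ad placement distribution |
| Semantic analysis | LLMs such as Claude or GPT | Pain point classification, differentiation opportunities, user insights |
| Signal automation | Agent workflow + Amazon Scraper Skill | Real-time opportunity alerts, triggered deep analysis |

The moat of this system comes from three sources: your analysis frequency (real time) is far higher than that of competitors who rely on platform subscription tools, your analysis dimensions (custom semantic analysis) go deeper than general-purpose algorithms, and your judgment framework embeds your own distinctive understanding of the market.

Tags: #Python #AmazonProductSelection #AIProductSelection #DataAnalysis #WebScraping #LLM #Agent #API #Ecommerce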