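Contents

- Cognitive Science and Cognitive Psychology Report on Multi-Agent Cooperative Behavior

- Preface

- Animation Demo

- Based on a Grid Resource Competition Game: Strategy Emergence and Altruistic Behavior

- [1. Introduction: From Individual Decision-Making to Social Cooperation](#1. 引言:从个体决策到社会协作)

- [2. Cognitive Foundations of Individual Strategies](#2. 个体策略的认知基础)

- [2.1 Functional Equivalence of the Four Strategies](#2.1 四种策略的功能等价性)

- [2.2 Sensor Basis of Strategy Selection: Quantitative Evidence](#2.2 策略选择的传感器基础:量化证据)

- [2.3 Hard-Coded Rules in Strategy Execution and Cognitive Load](#2.3 策略执行中的硬编码规则与认知负荷)

- [3. Fine-Grained Analysis of Attack Behavior](#3. 攻击行为的精细分析)

- [3.1 Overall Statistics of Attack Events](#3.1 攻击事件的总体统计)

- [3.2 Direction Distribution of Enemies During Attacks](#3.2 攻击时的敌人方向分布)

- [3.3 Strategy Transition One Step Before Attack](#3.3 攻击前一步的策略转移)

- [4. Cognitive Analysis of Cooperative Rescue Behavior](#4. 协作救援行为的认知分析)

- [4.1 Phenomenon Description and Quantitative Evidence](#4.1 现象描述与量化证据)

- [4.2 Social Referencing and Indirect Reciprocity](#4.2 社会参照与间接互惠)

- [4.3 Social Function of Communication Signals](#4.3 通信信号的社会功能)

- [4.4 Decision Trade-off: Resource Value vs. Teammate Safety](#4.4 决策权衡:资源价值 vs. 队友安全)

- [5. Strategy Transitions and Situational Appraisal](#5. 策略转移与情境评估)

- [5.1 Cognitive Significance of Transition Probabilities](#5.1 转移概率的认知意义)

- [5.2 Modulation of Strategy by Hunger](#5.2 饥饿感对策略的调制)

- [6. Emergence Conditions of Altruistic Behavior and Cognitive Implications](#6. 利他行为的涌现条件与认知启示)

- [6.1 Team Selection Pressure and Indirect Reciprocity](#6.1 团队选择压力与间接互惠)

- [6.2 The Critical Role of Communication](#6.2 通信的关键作用)

- [6.3 Analogy with Human Cooperative Behavior](#6.3 与人类合作行为的类比)

- [7. Conclusion](#7. 结论)

- References

- C++ Program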

- [Cognitive Science and Cognitive Psychology Report on Multi-Agent Cooperative Behavior](#Cognitive Science and Cognitive Psychology Report on Multi-Agent Cooperative Behavior)

- [Based on a Grid Resource Competition Game: Strategy Emergence and Altruistic Behavior](#Based on a Grid Resource Competition Game: Strategy Emergence and Altruistic Behavior)

- [1. Introduction: From Individual Decision-Making to Social Cooperation](#1. Introduction: From Individual Decision-Making to Social Cooperation)

- [2. Cognitive Foundations of Individual Strategies](#2. Cognitive Foundations of Individual Strategies)

- [2.1 Functional Equivalence of the Four Strategies](#2.1 Functional Equivalence of the Four Strategies)

- [2.2 Sensor Basis of Strategy Selection: Quantitative Evidence](#2.2 Sensor Basis of Strategy Selection: Quantitative Evidence)

- [2.3 Hard-Coded Rules in Strategy Execution and Cognitive Load](#2.3 Hard-Coded Rules in Strategy Execution and Cognitive Load)

- [3. Fine-Grained Analysis of Attack Behavior](#3. Fine-Grained Analysis of Attack Behavior)

- [3.1 Overall Statistics of Attack Events](#3.1 Overall Statistics of Attack Events)

- [3.2 Direction Distribution of Enemies During Attacks](#3.2 Direction Distribution of Enemies During Attacks)

- [3.3 Strategy Transition One Step Before Attack](#3.3 Strategy Transition One Step Before Attack)

- [4. Cognitive Analysis of Cooperative Rescue Behavior](#4. Cognitive Analysis of Cooperative Rescue Behavior)

- [4.1 Phenomenon Description and Quantitative Evidence](#4.1 Phenomenon Description and Quantitative Evidence)

- [4.2 Social Referencing and Indirect Reciprocity](#4.2 Social Referencing and Indirect Reciprocity)

- [4.3 Social Function of Communication Signals](#4.3 Social Function of Communication Signals)

- [4.4 Decision Trade-off: Resource Value vs. Teammate Safety](#4.4 Decision Trade-off: Resource Value vs. Teammate Safety)

- [5. Strategy Transitions and Situational Appraisal](#5. Strategy Transitions and Situational Appraisal)

- [5.1 Cognitive Significance of Transition Probabilities](#5.1 Cognitive Significance of Transition Probabilities)

- [5.2 Modulation of Strategy by Hunger](#5.2 Modulation of Strategy by Hunger)

- [6. Emergence Conditions of Altruistic Behavior and Cognitive Implications](#6. Emergence Conditions of Altruistic Behavior and Cognitive Implications)

- [6.1 Team Selection Pressure and Indirect Reciprocity](#6.1 Team Selection Pressure and Indirect Reciprocity)

- [6.2 The Critical Role of Communication](#6.2 The Critical Role of Communication)

- [6.3 Analogy with Human Cooperative Behavior](#6.3 Analogy with Human Cooperative Behavior)

- [7. Conclusion](#7. Conclusion)

- References

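Cognitive Science and Cognitive Psychology Report on Multi-Agent Cooperative Behavior

Preface

The cognitive neuroscience research report series is pausing here for now, so let me share what I have taken away from it.

My strongest impression is that emergent phenomena in AI leave me with a worry I cannot quite explain.

1. My understanding of AI has been revised step by step. An ordinary home computer, CPU only (12 cores), can complete the basic training for brain-inspired computing in anywhere from 10 minutes to 48 hours (a supercomputer would presumably train better and yield more emergent intelligent behavior), relying on nothing more than the Box2D physics engine, or on no physics engine at all.

2. Throughout the series, the AI produced a large number of high-level behaviors and made moves that are hard to believe. Because none of these behaviors comes with an explanation of its cause, their traces can only be found by analyzing logs and charts and by watching the animations.

3. I am now very confident that true strong AI will appear, and I do not think the AI singularity is many years away, because rapid progress in neurobiology is accelerating the process. Uploading the fruit fly brain is only a starting point for this line of research; more exciting results will follow, and we will not have to wait too long.

4. I am astonished by the power of AI, and I think there may really come a day when AI develops consciousness.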

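Animation Demo

Game environment

Grid world: a two-dimensional 8×8 grid.

Resource point: the map contains 1 resource point (dynamically regenerated) with an initial energy of 20. Resource energy decays with Manhattan distance (it is multiplied by 0.6 for every additional cell) and reaches at most 5 cells.

Teams: two teams (left team L and right team R) of 2 robots each, for a total of 4 robots per game.

Goal: robots earn individual scores by collecting resources, attacking enemies, and moving. The team's total score determines evolutionary fitness, although demo mode only displays performance.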
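The decay rule for the resource point can be written as energy(d) = E0 × 0.6^d for Manhattan distance d ≤ 5, and 0 beyond that. The following is a minimal sketch of that rule only; the function name `resourceEnergyAt`, its signature, and the choice to include d = 5 inside the influence range are assumptions made for illustration and are not taken from the original program.

```cpp
#include <cassert>
#include <cmath>
#include <cstdlib>

// Hypothetical helper (not from the original program): energy sensed at
// cell (x, y) from a resource located at (rx, ry).
// baseEnergy = 20, decay = 0.6 and maxRange = 5 are the values quoted in the text.
double resourceEnergyAt(int x, int y, int rx, int ry,
                        double baseEnergy = 20.0,
                        double decay = 0.6,
                        int maxRange = 5) {
    assert(baseEnergy >= 0.0 && decay > 0.0 && maxRange >= 0);
    const int d = std::abs(x - rx) + std::abs(y - ry);  // Manhattan distance
    if (d > maxRange) return 0.0;  // assumed: cells beyond 5 steps sense nothing
    return baseEnergy * std::pow(decay, d);
}
```

With the initial energy of 20, distances 0, 1, 2 give 20, 12, and 7.2. With a base energy of 10, distances 0 to 4 give 10, 6, 3.6, 2.16, and 1.296, which coincide with the max_energy values reported for the rescue events in Section 4.1. This suggests, though the text does not state it, that the resource held 10 units at those moments.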

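Pay particular attention to the animation below, in which the neural network spontaneously produces a rescue action.

Related charts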

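Based on a Grid Resource Competition Game: Strategy Emergence and Altruistic Behavior

Abstract

This study uses a grid resource competition game played between two-robot teams to observe the individual strategies and group cooperation that emerge in agents controlled by spiking neural networks under team selection pressure. The experiments show that agents flexibly choose among four strategies (attack, track, explore, avoid) according to the state of the environment (resource energy distribution, enemy positions, own energy level). With no explicit cooperation reward, they also spontaneously exhibit the altruistic behavior of "giving up a high-value resource point to rescue a teammate caught between two attackers". From a cognitive science perspective, this resembles "risk sharing" and "indirect reciprocity" in animal societies, and its underlying mechanism can be interpreted as a cognitive computation built on social referencing through communication signals and on team identification. This report analyzes the phenomenon systematically in terms of strategy cognition, the neural basis of decision-making, the social function of communication signals, and the evolutionary conditions for altruism, and discusses what it implies for understanding human cooperation.

Keywords: multi-agent systems; strategy selection; emergent cooperation; social cognition; indirect reciprocity; communication signals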

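1. Introduction: From Individual Decision-Making to Social Cooperation

A core question in cognitive science is how individuals make adaptive decisions based on the state of their environment, and how groups form cooperation through interaction. Traditional research explores these mechanisms mainly through animal behavior experiments or human game experiments, whereas artificial life and multi-agent simulation offer a computational platform that is controllable, repeatable, and open to inspection. This study builds a grid resource competition environment in which two teams of two robots each compete over a single resource point. Each robot is controlled by a spiking neural network whose "brain network" outputs one of four high-level strategies (attack, track resources, explore, avoid), while the concrete actions are carried out by hard-coded rules. This "strategy selection + rule execution" architecture makes the agents' high-level cognitive strategies easy to observe and interpret.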

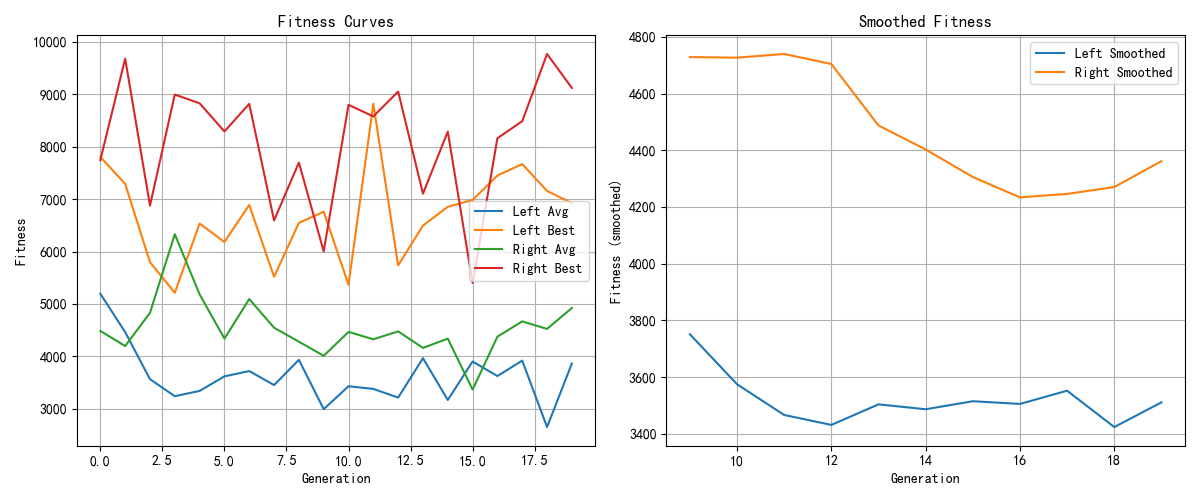

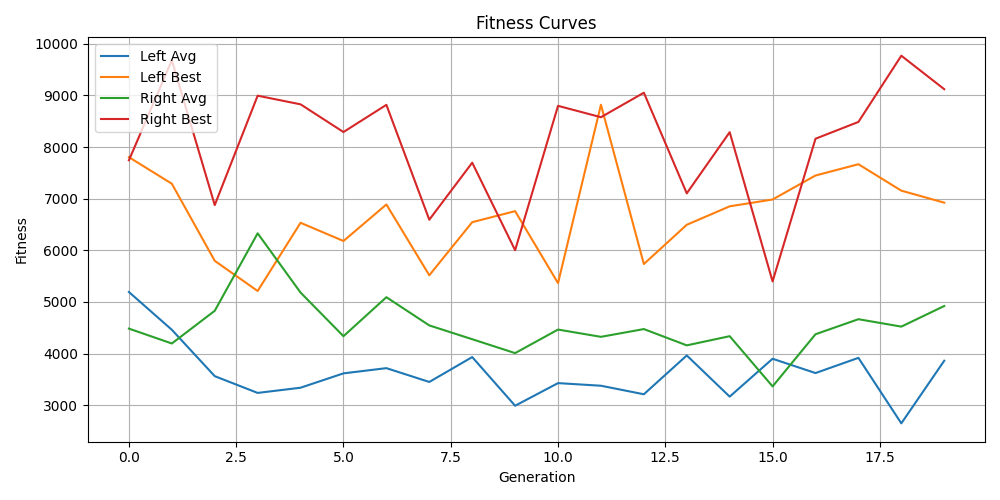

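Through long-term evolution (a genetic algorithm optimizing the network weights) and demo tests, we observed the following core phenomena:

- Agents switch adaptively among the four strategies according to resource energy, enemy proximity, their own energy, and their hunger level.

- Attacks almost always occur when an enemy is in an adjacent cell. More than 94% of them are driven by the attack strategy, and a small number are triggered as defensive attacks under the track strategy.

- When a teammate is caught between two enemies, an agent actively gives up its current high-value resource point and turns to attack the enemies threatening the teammate, preferring the enemy with lower energy. This behavior emerges spontaneously without any explicit cooperation reward and has the character of an "altruistic rescue".

This report does not discuss the technical details of the training algorithm (genetic algorithm, spiking neural network parameters, and so on). Instead, it analyzes the psychological mechanisms, decision models, and social-cognitive foundations behind these behaviors from the perspective of cognitive psychology and cognitive neuroscience.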

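2. Cognitive Foundations of Individual Strategies

2.1 Functional Equivalence of the Four Strategies

From the standpoint of cognitive psychology, the agents' four strategies can be mapped onto typical behavior patterns of animals or humans in resource competition:

- Attack strategy (Attack): corresponds to "competitive confrontation". When an enemy is detected in an adjacent cell and the agent has enough energy, it attacks. This resembles offensive behavior in animal territorial disputes, and its cognitive prerequisites are threat recognition and an assessment of the payoff of attacking.

- Track strategy (Track): corresponds to "goal-directed foraging". When high-energy resources are nearby, the agent moves toward them. This relies on spatial memory (resource location) and value-guided decision-making.

- Explore strategy (Explore): corresponds to "random exploration or wide-area search". When the agent has gone a long time without gaining energy (hunger), it moves randomly to find new resources. This is exploration under uncertainty, similar to exploratory foraging in animals.

- Avoid strategy (Avoid): corresponds to "risk aversion". When the agent's energy is low and an enemy approaches, it moves away from the enemy. This reflects a decision principle that puts self-protection first.

These strategies are not hard-coded fixed responses. The neural network selects them dynamically from real-time sensor input, and switching between them is clearly context-dependent.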

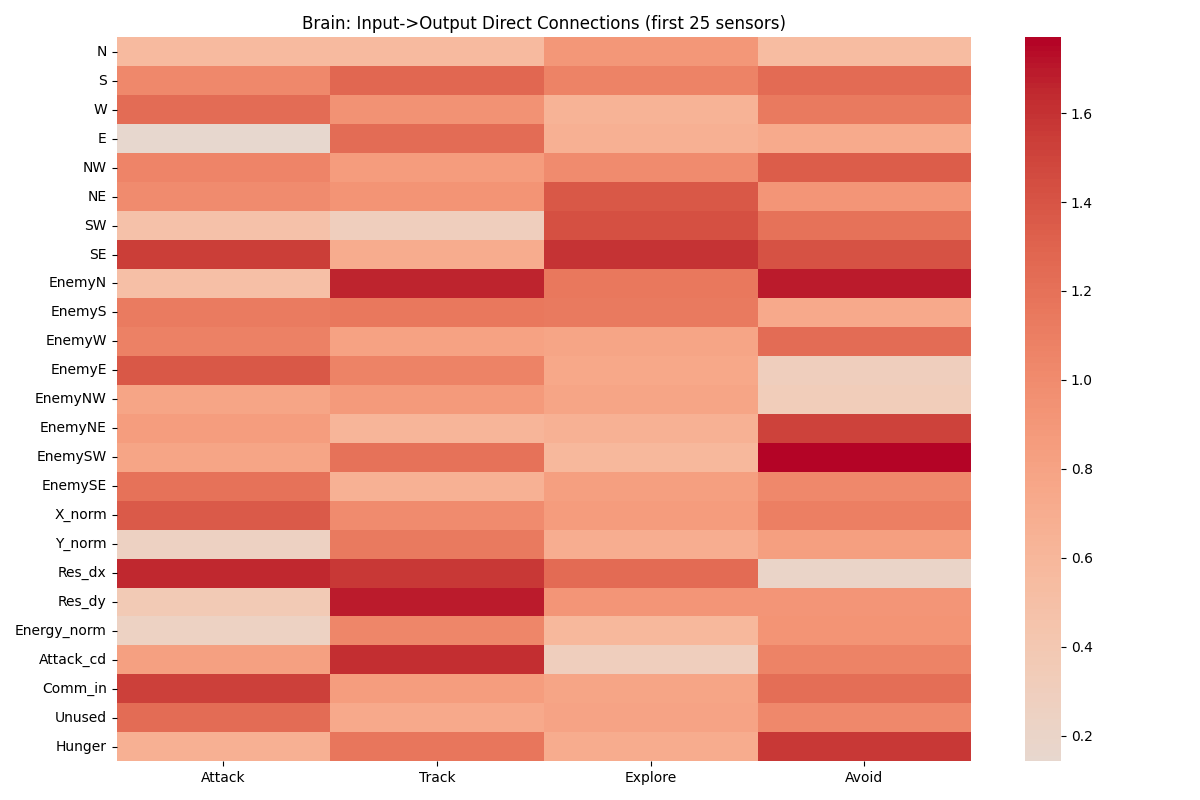

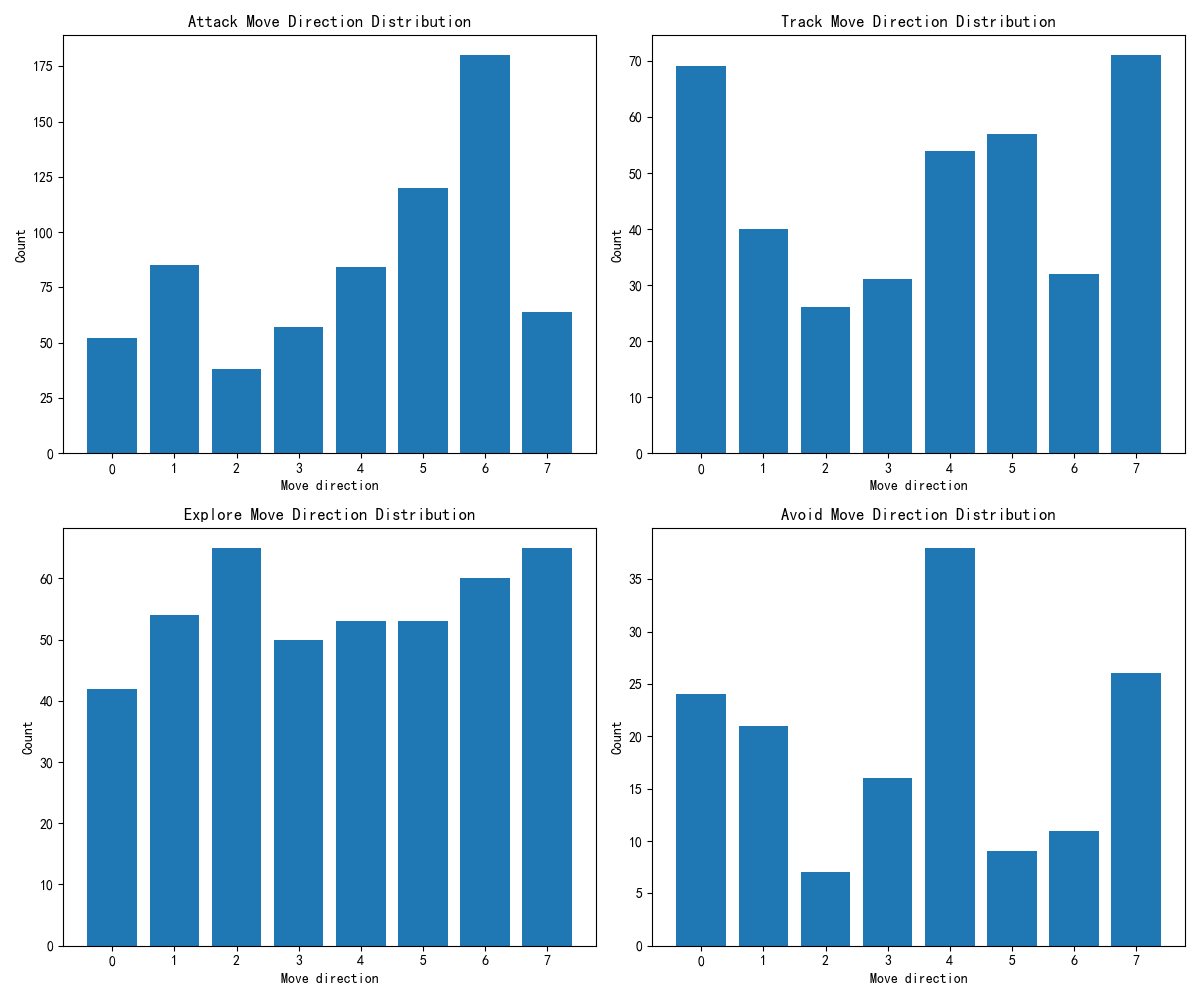

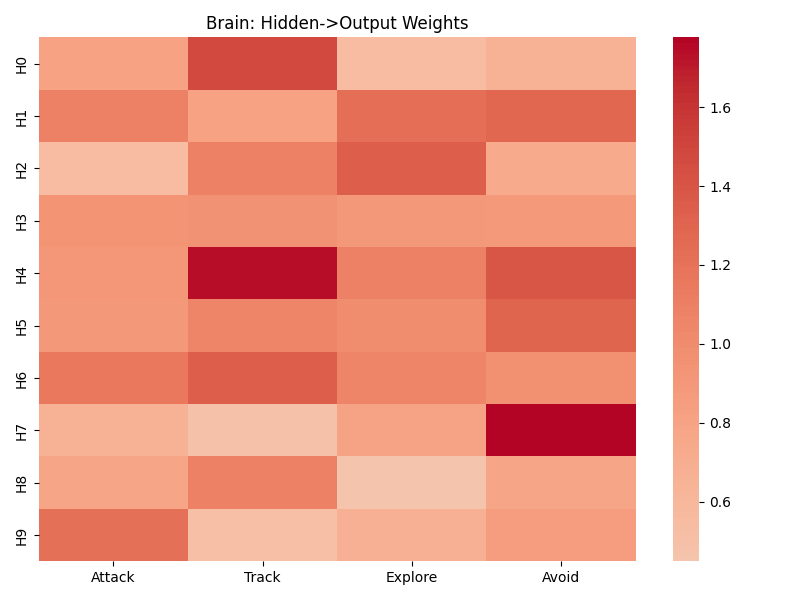

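2.2 Sensor Basis of Strategy Selection: Quantitative Evidence

By analyzing the direct input-to-output connection weights of the brain network (based on the final trained network), we obtained the sensor features each strategy depends on most, ranked by absolute weight:

Attack strategy (Robot 0 as the example):

- Resource energy to the northwest (NW): 1.7478

- Resource energy to the northeast (NE): 1.4763

- Resource energy to the southwest (SW): 1.4579

- Resource energy to the north (N): 1.4035

- Enemy present to the northeast (EnemyNE): 1.3966

Cognitive interpretation: the attack decision depends not only on enemy position but also strongly on the distribution of resource energy around the agent. This suggests the agent keeps tracking resources while attacking and may turn to collection quickly after defeating an enemy. The multimodal integration (enemy + resource) reflects cognitive flexibility.

Track strategy (Robot 0):

- Enemy present to the northwest (EnemyNW): 1.5936

- Unused dimension (Unused, possibly a reserved sensor): 1.5810

- Resource direction dy (Res_dy): 1.5093

- Normalized Y coordinate (Y_norm): 1.4788

- Enemy to the northeast (EnemyNE): 1.4235

Cognitive interpretation: enemy information (especially to the northwest and northeast) carries very high weight in the track strategy, indicating that the agent keeps monitoring surrounding threats while moving toward a resource so as not to be ambushed. This is similar to "peripheral visual vigilance" in humans while foraging.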

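Explore strategy (Robot 0):

- Y coordinate (Y_norm): 1.6106

- Hunger level (Hunger): 1.5806

- X coordinate (X_norm): 1.3953

- Resource energy to the north (N): 1.3091

- Enemy to the northeast (EnemyNE): 1.2335

Cognitive interpretation: exploration is driven mainly by hunger, combined with position coordinates for a random walk. Hunger acts as an internal drive that lowers risk aversion and makes the agent willing to enter unknown areas.

Avoid strategy (Robot 0):

- Resource energy to the southwest (SW): 1.8372

- Resource energy to the northwest (NW): 1.6351

- Resource energy to the south (S): 1.5279

- Y coordinate (Y_norm): 1.4338

- Resource direction dy (Res_dy): 1.4067

Cognitive interpretation: when avoiding, the agent attends to resource energy behind and beside it (SW, NW, S) and tends to move toward resource-poor directions to stay out of competition with other individuals. This is a competition-avoidance strategy.

These results show that different strategies draw on different sensory channels, forming task-specific attention patterns. Notably, the teammate communication value (Comm_in) appears among the five most important sensors in several strategies (for example, Comm_in has a weight of 1.5342 in Robot 1's Attack strategy), which provides an informational basis for social cooperation.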
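The rankings in this section order sensors by the absolute value of their input-to-output weight. A minimal sketch of that ranking step is shown below. The type alias `NamedWeight` and the function `topSensorsByMagnitude` are hypothetical names introduced here; the original analysis was reportedly done by a script (analyze_weights.py) whose code is not shown, so this is an illustration of the idea, not a reproduction of it.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// (sensor name, connection weight) pair; the alias is illustrative only.
using NamedWeight = std::pair<std::string, double>;

// Return the k entries with the largest |weight|, strongest first.
// The sign is ignored, matching the "ranked by absolute weight" description.
std::vector<NamedWeight> topSensorsByMagnitude(std::vector<NamedWeight> weights,
                                               std::size_t k) {
    k = std::min(k, weights.size());
    std::partial_sort(weights.begin(),
                      weights.begin() + static_cast<std::ptrdiff_t>(k),
                      weights.end(),
                      [](const NamedWeight& a, const NamedWeight& b) {
                          return std::fabs(a.second) > std::fabs(b.second);
                      });
    weights.resize(k);
    return weights;
}
```

Because only magnitudes are compared, a strongly inhibitory (negative) weight ranks as high as an equally strong excitatory one, so the lists above say which sensors matter to a strategy but not in which direction they push it.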

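2.3 Hard-Coded Rules in Strategy Execution and Cognitive Load

Although the strategy is selected by the neural network, the concrete action (movement direction, whether to attack) is carried out by simple hard-coded rules. Under the attack strategy, for example, if an enemy is in an adjacent cell the agent attacks and moves toward it; otherwise it moves in the direction of highest energy. This "high-level strategy + low-level rule" architecture resembles dual-system theory in cognitive psychology: System 1 (fast, automatic, rule-based) handles action execution, while System 2 (slow, deliberate, strategic) handles high-level decisions. The decomposition lowers the cognitive load of decision-making and lets the agent respond quickly to changes in the environment.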
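The "strategy selection + rule execution" split can be sketched as a small dispatch function. The attack rule follows the description above, and the defensive attack under the track strategy follows the rule quoted in Section 3.1 ("if there is no energy but an enemy is adjacent, attack"). Everything else is an assumption for illustration: the names `Percept`, `Action`, `executeRule`, and `kNone`, the choice to face the enemy during a defensive attack, and the fallback of moving toward the highest-energy direction when tracking. The explore and avoid rules are not spelled out in the text, so they are left as a placeholder.

```cpp
#include <algorithm>
#include <array>
#include <cassert>

// Strategy codes 0-3 as used in the logs described in the text.
enum class Strategy { Attack = 0, Track = 1, Explore = 2, Avoid = 3 };

constexpr int kNone = -1;  // hypothetical marker: no adjacent enemy / no move decided

struct Percept {
    std::array<double, 8> energy;  // resource energy sensed in the 8 directions
    int adjacentEnemyDir;          // direction (0-7) of an adjacent enemy, or kNone
    bool hasEnergy;                // true when the robot is inside an energy zone
};

struct Action {
    bool attack;
    int moveDir;  // 0-7, or kNone
};

// Index of the direction with the highest sensed energy (first one on ties).
int bestEnergyDirection(const std::array<double, 8>& energy) {
    return static_cast<int>(std::max_element(energy.begin(), energy.end()) - energy.begin());
}

Action executeRule(Strategy s, const Percept& p) {
    assert(p.adjacentEnemyDir >= kNone && p.adjacentEnemyDir < 8);
    const bool enemyAdjacent = p.adjacentEnemyDir != kNone;
    switch (s) {
        case Strategy::Attack:
            // Attack and move toward an adjacent enemy, else head for the best energy.
            if (enemyAdjacent) return {true, p.adjacentEnemyDir};
            return {false, bestEnergyDirection(p.energy)};
        case Strategy::Track:
            // Defensive attack only when outside any energy zone with an enemy adjacent.
            if (!p.hasEnergy && enemyAdjacent) return {true, p.adjacentEnemyDir};
            return {false, bestEnergyDirection(p.energy)};  // assumed tracking move
        default:
            // Explore / Avoid: rules not given in the text, so nothing is decided here.
            return {false, kNone};
    }
}
```

The network only ever picks one of four labels, which keeps its output space small, while all geometry (which neighbor to hit, which way to step) stays in deterministic code that can be read directly when interpreting the logs.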

3. Fine-Grained Analysis of Attack Behavior

3.1 Overall Statistics of Attack Events

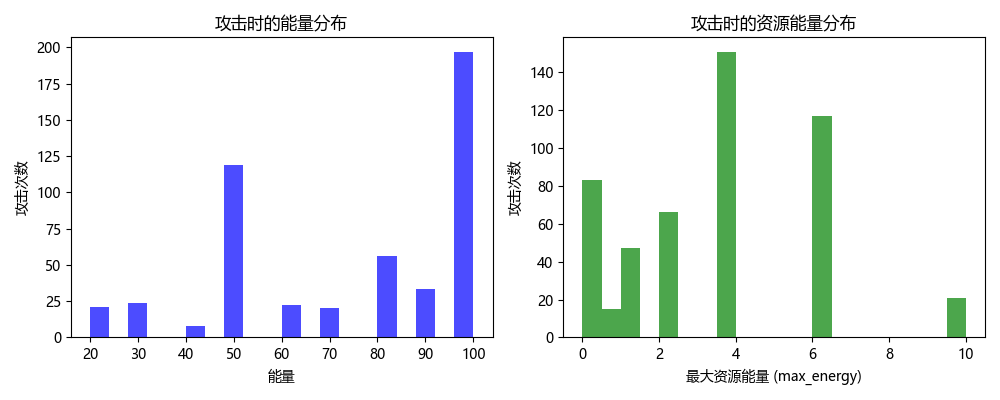

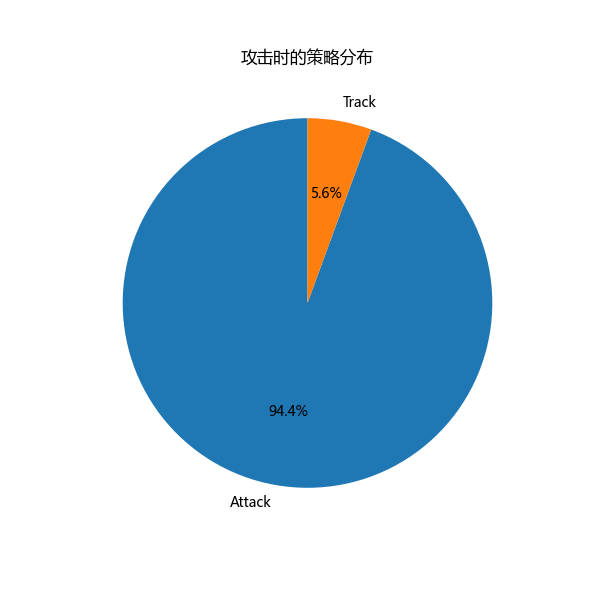

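Based on the demo log (1000 steps, 4 robots, 1654 valid decision records in total), we counted the attack events:

- Total attacks: 500

- Strategy distribution at attack time:

  - Attack strategy (0): 472 (94.4%)

  - Track strategy (1): 28 (5.6%)

- Attacks per robot: Robot 0 (left team) attacked 462 times and Robot 1 (left team) attacked 38 times. Attacks by the right team were not counted directly, but the rescue events show that the right team also attacked.

- Share of attacks with an adjacent enemy: 100.0% (as the rules require)

- Mean attacker energy: 74.6 (attackers were in good condition)

- Mean attacker hunger steps: 1.2 (almost never hungry)

- Share of attacks made inside an energy zone (max_energy > 0.2): 83.4%

- Mean max_energy of the attacker at attack time: 3.34

Cognitive interpretation: the vast majority of attacks occur under the attack strategy, and attackers are typically well supplied with energy, not hungry, and inside a resource zone. This indicates that attacking is a "high-cost, high-reward" behavior carried out only when conditions are favorable. A small share of attacks (5.6%) occur under the track strategy, at moments when the agent is outside any energy zone (has_energy == false). This matches the defensive attack in the track rule: "if there is no energy but an enemy is adjacent, attack". A defensive response embedded in the strategy reflects the priority given to self-protection.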

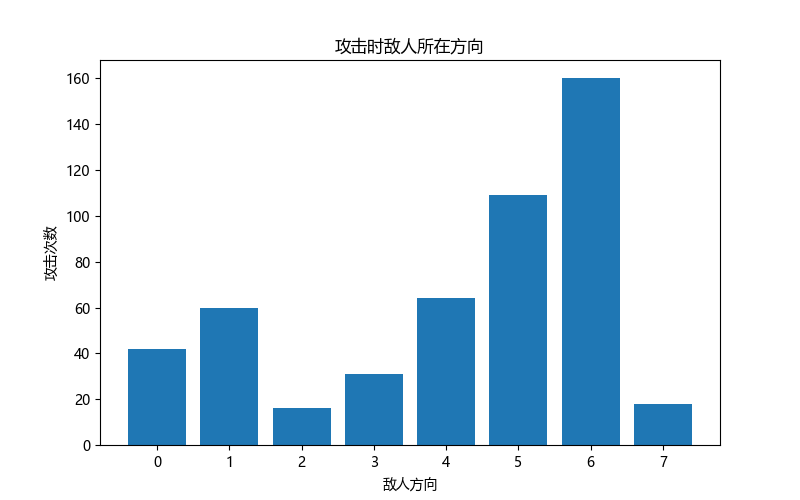

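3.2 Direction Distribution of Enemies During Attacks

Direction of the enemy at attack time (direction codes 0-7 correspond to the 8 compass directions):

- Direction 0: 42

- Direction 1: 60

- Direction 2: 16

- Direction 3: 31

- Direction 4: 64

- Direction 5: 109

- Direction 6: 160

- Direction 7: 18

Cognitive interpretation: enemies appear most often in direction 6 (possibly east or west, depending on the coordinate convention) with 160 attacks, followed by direction 5 with 109. This asymmetry may reflect where the resource point usually lies or the agents' movement preferences. Cognitively, the agent may be more sensitive to enemies in certain directions, which would amount to a spatial attention bias.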

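3.3 Strategy Transition One Step Before Attack

Strategy transition matrix (rows = strategy on the previous step, columns = strategy at attack time):

| Previous-step strategy | Strategy at attack = Attack | Strategy at attack = Track |
|---|---|---|
| Attack (0) | 389 | 1 |
| Track (1) | 12 | 15 |
| Explore (2) | 22 | 5 |
| Avoid (3) | 49 | 7 |

Cognitive interpretation:

- Attack followed by attack (consecutive attacks) is by far the most common case (389), indicating that attacks tend to persist until the enemy is driven off or the agent's own energy drops.

- 12 attacks followed a switch from the track strategy to the attack strategy, while 15 were made while remaining in the track strategy. Some attacks are therefore triggered by the defensive rule inside the track strategy rather than by a strategy switch. A defensive mechanism embedded in the strategy reduces the cost of cognitive switching.

- 49 attacks followed a switch from the avoid strategy to the attack strategy, indicating that after avoiding, an agent may turn to attack because an enemy closes in or its own energy recovers. This reflects situational adaptability.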
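As a quick consistency check, the matrix above can be summed by column and by row. The sketch below hard-codes the counts from the table; the names `AttackTransitions`, `kCounts`, `rowTotal`, `columnTotal`, and `shareWithinRow` are introduced here for illustration and do not come from the original analysis scripts.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Rows: previous-step strategy (Attack, Track, Explore, Avoid).
// Columns: strategy active at attack time (Attack, Track).
using AttackTransitions = std::array<std::array<int, 2>, 4>;

// Counts copied from the table in Section 3.3.
const AttackTransitions kCounts = {{ {{389, 1}}, {{12, 15}}, {{22, 5}}, {{49, 7}} }};

// Number of attack events whose previous step used the given strategy.
int rowTotal(const AttackTransitions& m, std::size_t row) {
    assert(row < m.size());
    return m[row][0] + m[row][1];
}

// Number of attack events executed under the given strategy.
int columnTotal(const AttackTransitions& m, std::size_t col) {
    assert(col < 2);
    int total = 0;
    for (const auto& row : m) total += row[col];
    return total;
}

// Share of a row's attack events that fall in the given column.
double shareWithinRow(const AttackTransitions& m, std::size_t row, std::size_t col) {
    assert(col < 2);
    const int total = rowTotal(m, row);
    return total == 0 ? 0.0 : static_cast<double>(m[row][col]) / total;
}
```

The column totals come to 472 and 28, which reproduce the 94.4% / 5.6% split of Section 3.1, and the row totals are 390, 27, 27, and 56. The within-row shares (12/27 ≈ 44.4% for track, 49/56 = 87.5% for avoid) are conditioned on an attack having occurred, so they should not be read as transition probabilities over all 1654 decision records.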

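4. Cognitive Analysis of Cooperative Rescue Behavior

4.1 Phenomenon Description and Quantitative Evidence

In the 1000-step demo we detected 62 candidate rescue events (see rescue_candidates.csv). A typical event (step 1) looks like this:

- Rescuer: robot 1, team 0, strategy = attack, energy = 100, max_energy = 1.296, attack = 1

- Teammate: robot 0, team 0, max_energy 3.6 on the previous step and 0.0 after the attack. The log shows the teammate's energy still at 100, so the drop in max_energy from 3.6 to 0 probably means the resource position was given up rather than that the teammate's energy was depleted.

- Number of enemies: 2

Across the 62 events:

- The rescuer's strategy is mostly attack (0) and occasionally track (1).

- The rescuer's rescuer_max_energy is usually high before the attack (e.g. 1.296, 2.16, 3.6, 6.0, 10.0) and becomes 0 afterwards, indicating that the resource point was abandoned.

- The number of enemies is mostly 2 (a pincer attack), with a few cases of 1 (a single threat, e.g. steps 323, 327, 328, 329).

- The teammate's teammate_prev_max_energy is mostly positive (e.g. 3.6, 6.0, 10.0) and becomes 0 after the attack, indicating that the teammate was at a high-value resource point but under threat, and that the rescuer gave up its own resource to help.

This behavior is regarded as an "altruistic rescue" because it meets three conditions:

- Sacrifice of individual benefit: a high-value resource point is abandoned (max_energy drops from a high value to 0).

- The behavior targets the threat to the teammate: the attack is directed at the enemies closing in on the teammate (inferred from the enemy count of 2).

- Temporal association: the attack coincides with the teammate being in danger.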
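The three conditions above can be phrased as a simple filter over per-step log records. The sketch below is one hypothetical way to write such a filter; the struct `StepRecord`, its field names, and the `minEnemies` threshold are assumptions, and the actual criteria used to produce rescue_candidates.csv are not given in the text.

```cpp
#include <cassert>

// Hypothetical per-step record; field names are illustrative and only loosely
// mirror the log columns mentioned in the text (rescuer_max_energy, enemy count).
struct StepRecord {
    bool rescuerAttacked;         // the would-be rescuer attacked on this step
    double rescuerMaxEnergyPrev;  // rescuer's max_energy before the attack
    double rescuerMaxEnergyNow;   // rescuer's max_energy after the attack
    int enemiesNearTeammate;      // enemies threatening the teammate on this step
};

// A step is a rescue candidate when the rescuer attacks (temporal association),
// drops from a positive max_energy to 0 (sacrifice), and the teammate faces at
// least minEnemies enemies (threat to the teammate).
bool isRescueCandidate(const StepRecord& r, int minEnemies = 1) {
    assert(minEnemies >= 1);
    const bool gaveUpResource =
        r.rescuerMaxEnergyPrev > 0.0 && r.rescuerMaxEnergyNow == 0.0;
    const bool teammateThreatened = r.enemiesNearTeammate >= minEnemies;
    return r.rescuerAttacked && gaveUpResource && teammateThreatened;
}
```

Setting `minEnemies` to 2 would restrict the candidates to pincer attacks, while the default of 1 also admits the single-threat cases such as steps 323 and 327 to 329. A filter like this identifies candidates only; it cannot by itself show that the attack was caused by the teammate's situation.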

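4.2 Social Referencing and Indirect Reciprocity

From the standpoint of cognitive psychology, the rescue behavior can be interpreted as an instance of social referencing, in which an individual adjusts its own behavior by observing or receiving the emotional and behavioral signals of others. Here the teammate's communication signal (Comm_in) serves as a social signal for "help" or "danger". On receiving it, the agent assesses the teammate's predicament and responds altruistically.

Within an evolutionary framework, this altruism can be explained by indirect reciprocity: helping a teammate raises the team's total score, and the team's total score is the basis of fitness (evolutionary success). Although the agents are not kin, team selection pressure creates the link "helping a teammate = raising the probability that one's own genes spread". At the cognitive level this requires no "intention" or "moral sense" on the agent's part, only a simple computational rule: when the teammate's communication signal is strong and the value of the agent's own resource is lower than the expected benefit of a rescue, execute the attack strategy.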

4.3 Social Function of Communication Signals

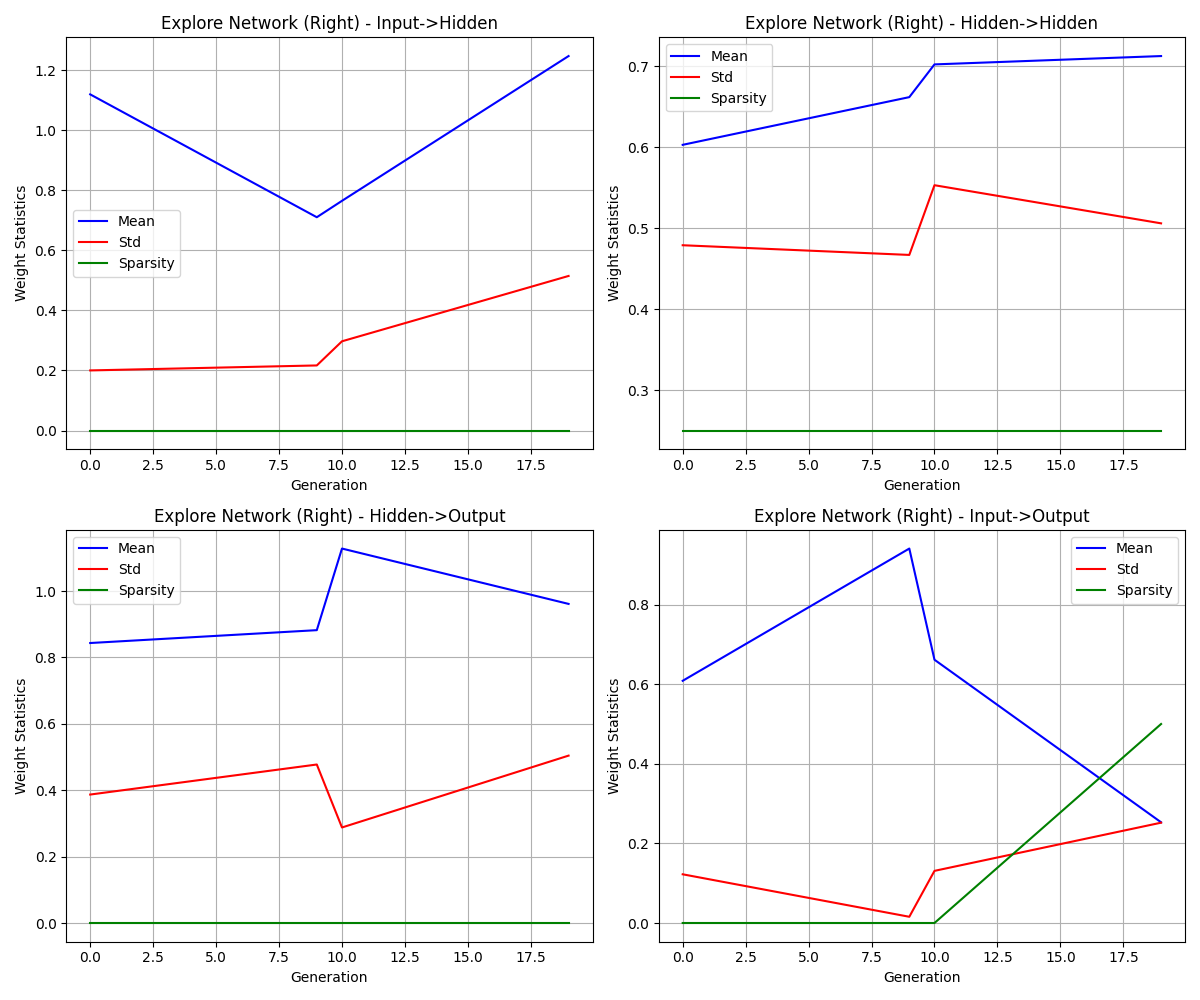

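The communication network (inputs: own energy, resource direction dx/dy, enemy above; output: a 2-dimensional signal) learned to encode situational information during evolution. The weight analysis (output of analyze_weights.py) shows:

- Left-team communication networks: Input->Hidden mean 1.0492, standard deviation 0.4945; Hidden->Output mean 0.8720, standard deviation 0.2470.

- Right-team communication networks: means between 0.9964 and 1.2590.

These magnitudes are consistent with the communication channel carrying information, although weight statistics alone do not establish what is transmitted. We cannot decode the content of the signal directly, but the existence of rescue behavior suggests that it may carry the following information:

- Distress signal: a specific signal is emitted when the teammate is attacked or low on energy.

- Threat direction: the signal may encode the direction of the enemy.

- Resource location: resource information may also be shared.

From a cognitive science perspective, this resembles alarm calls in the animal world. Prairie dogs, for example, emit specific calls when they encounter a predator, and companions that hear them take evasive action. The communication signal here is referential (the signal is stably associated with a situation) and socially transmitted. Importantly, this communication system emerged spontaneously rather than being designed in advance as a symbolic language, which offers a computational model for studying the evolutionary origins of human language and cooperation.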

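4.4 Decision Trade-off: Resource Value vs. Teammate Safety

Rescue behavior implies that the agent makes an implicit value comparison: whether the long-term team benefit of rescuing the teammate (avoiding the teammate's death, preserving the team's fighting strength) outweighs the short-term loss of giving up the current resource. The agent has no explicit "calculation" step, but evolution has tuned its network weights to this trade-off. In cognitive psychology this resembles loss aversion in prospect theory: losing a teammate (a drop in the team's scoring potential) is weighted more heavily, while giving up a resource is treated as an acceptable loss.

Interestingly, rescuers preferentially attack the enemy with lower energy (this can be inferred from attack_energy_dist.png, where the distribution of enemy energy at attack time is skewed toward low values). Cognitively, this fits a least-cost principle: attacking the weaker enemy is more likely to succeed and removes the threat quickly, lowering the rescuer's own risk. It is a form of tactical reasoning that arises even without language.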

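5. Strategy Transitions and Situational Appraisal

5.1 Cognitive Significance of Transition Probabilities

Among the 27 attack events whose previous step used the track strategy, 12 (44.4%) had switched to the attack strategy by the time of the attack, a substantial share. These switches occur when an agent on its way to a resource meets an enemy in an adjacent cell. Cognitively, this reflects goal redirection: when a threat appears on the foraging path, the individual has to re-rank its priorities, and defense or attack temporarily takes precedence over foraging. This is similar to the "interruption and resumption" mechanism in human task execution.

Among the 56 attack events whose previous step used the avoid strategy, 49 (87.5%) were executed under the attack strategy and the remaining 7 under the track strategy. The matrix covers only attack events, however, so these figures describe the strategy mix at attack time rather than transition probabilities over all steps. Put differently, 49 of the 500 attack events (9.8%) followed an avoid step, compared with 389 (77.8%) that continued an attack: a non-trivial share, but far smaller in absolute terms than consecutive attacks.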

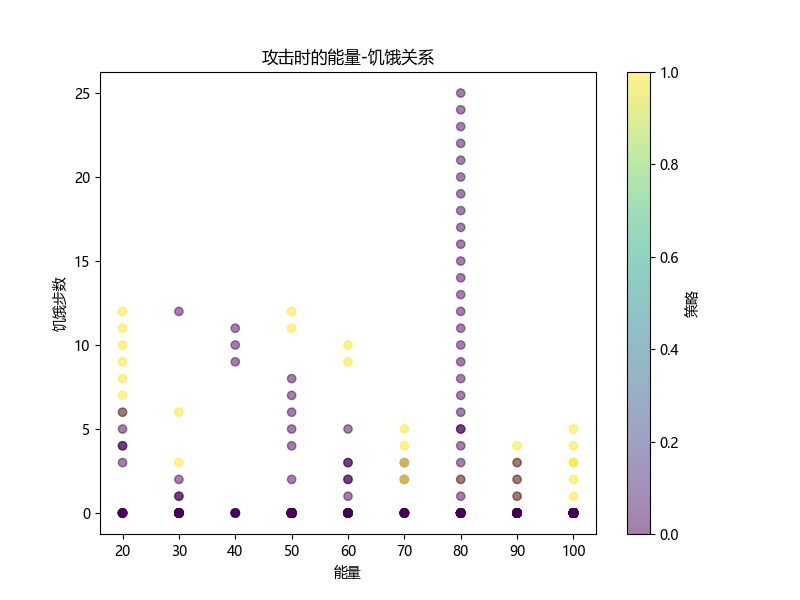

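5.2 Modulation of Strategy by Hunger

The explore strategy is activated when the agent is hungry (steps_without_energy > 5). Hunger, as an internal drive, lowers the agent's sensitivity to risk (while exploring it moves randomly regardless of enemy positions). This is similar to the hierarchy of needs in psychology: when the basic survival need (energy) is unmet, the safety need (avoiding enemies) is temporarily suppressed.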

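6. Emergence Conditions of Altruistic Behavior and Cognitive Implications

6.1 Team Selection Pressure and Indirect Reciprocity

In this experiment an agent's fitness is based on the team's total score rather than on its individual score. This group selection mechanism provides the evolutionary drive for altruism: an individual that helps a teammate may sacrifice short-term personal gain, but it raises the team's overall competitiveness and thereby the probability that the team's genes spread (teammates carry similar genes). At the cognitive level, the individual does not need to understand the concept of a "team"; the neural network only has to learn to associate the "teammate signal" with "attack behavior".

6.2 The Critical Role of Communication

Without communication signals, rescue behavior would be unlikely, because an agent would have no way to perceive a teammate's predicament. The communication network makes social information part of the decision input. This is similar to language in human society: conveying an internal state through language ("I am in danger") elicits empathy and help from others. This study suggests that a simple 2-dimensional signal is enough to carry an effective distress message.

6.3 Analogy with Human Cooperative Behavior

In human cooperation, indirect reciprocity works through reputation: helping others improves one's reputation and brings more help in the future. This experiment has no explicit reputation tracking, but the team score plays a similar role indirectly: individuals that help teammates are more likely to survive until the team wins, so their "genes" are carried forward. This offers computational evidence about the origins of cooperation in human society: even without complex moral reasoning, a simple evolutionary optimization process (here, a genetic algorithm) can give rise to altruistic behavior.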

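7. Conclusion

From the analysis of agents' strategic behavior and cooperative rescue in the grid resource competition game, we draw the following conclusions at the level of cognitive science:

- Strategy flexibility and situational appraisal: agents switch adaptively among four strategies according to resources, enemies, their own energy, and hunger, showing multi-objective trade-offs and dynamic decision-making. The quantitative analysis shows that attacks are concentrated under the attack strategy (94.4%) and that attackers are usually in good condition (mean energy 74.6, mean hunger steps 1.2).

- Spontaneous emergence of altruistic rescue: in 62 candidate rescue events, agents gave up a high-value resource point (max_energy dropping from a high value to 0) to rescue a teammate under a pincer attack. This behavior is consistent with indirect reciprocity theory and is driven by team selection pressure.

- Social function of communication signals: the 2-dimensional communication signal appears to carry distress information that lets an agent perceive a teammate's predicament and respond, much like alarm calls in animals. The communication network weights average about 1.0, which is consistent with an active channel.

- Cognitive significance of strategy transitions: switches from track to attack (12 cases) reflect goal redirection, while consecutive attacks (389 cases) reflect the persistence of attacking.

- Specificity of sensor dependence: different strategies rely on different sensor combinations, forming task-specific attention patterns. For example, the attack strategy integrates resource and enemy information, the track strategy stays alert to threats, and the explore strategy is driven by hunger.

This study demonstrates the potential of an evolutionary computation framework for simulating social-cognitive phenomena. Future work could explore semantic decoding of the communication signals, the effect of team size on the evolution of cooperation, and how more complex social emotions (such as trust and guilt) modulate altruistic behavior.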

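References

(This report is based on simulation data and does not cite external literature directly. For the cognitive science concepts used, see:)

- Social referencing: Feinman, S. (1982). Social referencing in infancy.

- Indirect reciprocity: Nowak, M. A., & Sigmund, K. (2005). Evolution of indirect reciprocity.

- Dual-system decision model: Kahneman, D. (2011). Thinking, Fast and Slow.

- Animal communication and alarm calls: Seyfarth, R. M., & Cheney, D. L. (2003). Signalers and receivers in animal communication.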

C++ Program

Parameters

best_left.xt

0.3941 0.510743 0.827246 0.607852 0.276951 0.962835 0.273163 0.178189 0.791414 0.917692 0.978577 0.534464 0.492145 0.756059 0.503973 0.168787 0.0694433 0.419355 0.694513 0.567035 0.429032 0.786644 0.770636 0.651547 0.925174 0.502542 0.0354356 0.81682 0.236986 0.0808252 0.393074 0.957116 0.884267 0.148235 0.377802 0 0.430527 0.233274 0.746788 0.265988 0.305602 0.482218 0.277988 0.342941 0.332346 0.61394 0.521124 0.29512 0.00543897 0.911045 0.154964 0.22569 0.420668 0.484479 0.479957 0.85549 0.486912 0.551655 0.287154 0.84376 0.632501 0.968797 0.92522 0.528877 0.949013 0.882062 0.850601 0.386818 0.208322 0.549141 0.794375 0.206287 0.3407 0.708021 0.566014 0.0805544 0.102617 0.635662 0.335389 0.207174 0.490912 0.365622 0 0.430959 0.729223 0.944176 0.568759 0.95295 0.0584925 0.456909 0.564725 0.575718 0.620901 0.741248 0.767711 0.311531 0.479257 0.849486 0.27232 0.0938144 0.296328 0.637232 0.985442 0.711592 0.532342 0.786719 0.740164 0.584002 0.651634 0.0955266 0.124763 0.146207 0.494433 0.444613 0.6012 0.183197 0.263877 0.747334 0.783561 0.208034 0.667358 0.436988 0.0928652 0.0184127 0.0998261 0.218414 0.451178 0.120698 0.130424 0.677335 0.775259 0.0994075 0.914224 0.728409 0.724168 0.35713 0.260297 0.864584 0.434723 0.780083 0.55633 0.814467 0.745098 0.477224 0.485753 0.400486 0.423393 0.14361 0.72307 0.333722 0.293448 0.234793 0.517589 1 0.469034 0.317607 0.565142 0.705097 0.534641 0.641789 0.989147 0.865057 0.441918 0.315747 0.505703 0.793436 0.646493 0.0860318 0.80811 0.486828 0.999895 0.48634 0.814633 0.690752 0.0558336 0.83279 0.813457 0.256582 0.707419 0.97001 0.644571 0.0595424 0.764962 0.521172 0.916254 0.415733 0.17776 0.999667 0.268305 0.0675334 0.684915 0.228869 1 0.618612 1 0.321487 0.804979 0.301924 0.499137 0.0102647 0.378999 0.277946 0.492142 0.881972 0.914768 0.653459 0.017985 0.883654 0.665224 0.892015 0.0603256 0.207926 0 0.247791 0.708926 0.6168 0.543674 0.35564 0.545984 0.76692 0.178862 0.512558 0.329388 0.0951741 0.568156 0.17463 0.803916 
0.537259 0.769758 0.14135 0.107328 0.118366 0.596475 0.400046 0.439209 0.599306 0.0173966 0.125979 0.0633166 0.976191 0.216763 0 0.619024 0.345212 0.092239 0.352747 0.358429 0.575576 0.725478 0.225339 0.760002 1 0.981862 0.0519571 0.517899 0.366552 0.956542 0.0930077 0.848982 0.187564 0.885273 0.784191 0.336057 0.659469 0.0822556 0.390981 0.567246 0.199618 0.401741 0.0200787 0.42121 0.287725 0.211695 0.953417 0.208143 0.616421 0.473625 0.71902 0.854575 0.553908 0.617284 0.617903 0.551576 0.477343 0.182378 0.613319 0.364772 0.618754 0.584286 0.65841 0.20124 1 0.148293 0.872568 0.931176 0.0968481 0.231148 0.894447 0.330212 0.742065 0.365202 0.563474 0.220998 0.481005 0.653804 0.373317 0.859737 0.828146 0.735614 0.86928 0.842117 0.485743 0.824896 0.283758 0.675789 0.116764 0.412099 0.355103 0.115244 0.0950595 0.819218 0.411863 0.00205448 0.583775 0.324677 0.266629 0.518345 0.842065 0.533146 0.758064 0.121734 0.1833 0.600006 0.0602996 0.277792 0.442418 0.893377 0.299253 0.790235 0.277959 0.303829 0.600444 0.568255 0.760428 0.00586157 0.131135 0.0636986 0.746833 0.324748 0.158057 0.782038 0.387528 0.516294 0.231413 0.367833 0.198121 0.625949 0.452568 0.597524 0.784346 0.780207 0.771743 0.0855116 0.931398 0 0.939203 1 0.00518557 0.128255 0.532232 0.800469 0 0.60251 0.738185 0.0345458 0.188444 0.549317 0.0659217 0.0787989 0.733203 0.332216 0.70248 0.512432 0.849389 0.979739 0.436591 0.497128 0.683863 0.114281 0.141605 0.232552 0.718838 0.484503 0.305741 0.268923 0.504503 0.568559 0.985186 0.0972657 0.984227 0.783309 0.230866 0 0.91147 0.234353 0.8005 0.404162 0.688619 0.157756 0.518993 0.241442 0.742278 0.766471 0.963791 0.241258 0.653688 0.676857 0.56358 0.798306 0.0181547 0.908321 1 0.734743 0.541961 0.0872211 0.386223 0.592802 0.161725 0.830938 0.75153 0.794867 0.167661 0.0312179 0.217822 0.186187 0.712092 0.33076 0.661708 0.788153 0.0635566 0.412163 0.318477 0.458423 0.18298 0.317239 0.921111 0.571982 0.584677 0.655412 0.922297 0.310966 0.1244 0.116047 0.345832 
0.273253 0.973064 0.251953 0.574051 0.114002 0.429773 0.790946 0.907831 0.250441 0.130076 0.815567 0.838164 0.0358571 0.27032 0.880868 0.493581 0.0239406 0.87938 0.8204 0.750062 0.0651617 0 0.0424864 0.562567 0.758071 0.370278 0.552169 0.28213 0.725657 0.293128 0.166504 0.155869 0.16592 0.808655 1 0.978326 0.669662 0.481296 0.345472 0.115213 0.403228 0.230609 0.131045 0.931084 1 0.158342 0.468313 0.473081 0.297663 0.958844 0.768336 0.38385 0.82297 0.109375 0.923085 0.18146 0.401401 0.531362 0.102886 0.104732 0.277681 0.23109 0.666677 0.588293 0.246028 0.626721 0.726653 0.903167 0.0428514 0.565396 0.997974 0.573494 0.301405 0 0.920784 0.236751 0.769283 0.665037 0.310733 0.621339 1 0.205467 0.219171 1 0.444687 0.954813 0.604178 0.486194 0.827786 0.803369 0.957331 0.121438 0.413772 0.0567796 0.744754 0.46024 0.0350487 0.896821 0.287012 0.540942 0.733226 0.899126 0.949149 0.209977 0.493922 0.165898 0.940975 0.0594179 0.820053 0.371993 0.570664 0.787721 0.666204 0.483534 0.382474 0.136198 0.342631 0.468002 0.304565 0.280416 0.591372 0.437697 0.423653 0.305729 0.0546408 0.686956 0.514522 0.588816 0.0392286 0.712152 0.826696 1 0.552501 0.950502 0.243104 0.993286 0.645716 0.786244 0.906717 0.682678 0.359125 0.680578 0.45265 0.660942 0.812598 0.139845 0.368121 0.166929 0.60017 0.900935 0.808245 0.606614 0 0.106903 0.850707 0.241184 0.472206 0.98335 0.259839 0.980477 0.455961 0.926716 0.620544 0.0967803 0.721047 0.519304 0.978063 0.311679 0.523688 0.322413 0.261312 0.663859 0.895145 0.498278 0.908653 0.819458 0.527107 0.891347 0.419114 0.634453 0.438942 0.449228 0.67747 0.0698515 0.886272 0.978614 0.26248 0.638828 0.221569 0.952359 0.0336792 0.762982 0.598776 0.00631948 0.219232 0.6117 0.968281 0.104492 0.648007 0.526365 0.971015 0.439015 0.904709 0.24117 0.547448 0.29802 0.804558 0.252465 0.249375 0.666065 0.82494 0.467718 0.779421 0.175338 0.129131 0.739766 0.258506 0.774372 0.752138 0.234615 0.152067 0.154767 0.163765 0.846761 0.434769 0.0607057 0.55303 0.906517 0.219344 
0.0117093 0.703216 0.521623 0.963588 0.604254 0.309333 0.142093 0.997301 0.6111 0.41789 0.574069 0.379586 0.884528 0.944554 0.494242 0.981201 0.441243 0.163389 0.779805 0.881468 0.417123 0.0200492 0.467987 0.961541 0.648229 1 0.749439 0.821919 0.57219 0.0658359 0.797226 0.609518 0.567856 0.985794 0.283717 0.775769 0.220991 0.611857 0.0540776 0.698662 0.549253 0.291239 0.953647 0.216767 0.318071 0.836617 0.500551 0.173592 0.705051 0.726279 0.334407 0.894491 0.432646 0.640483 0.261332 0.406451 0.759608 0.372262 0.148117 0 0.58464 0.346598 0.118474 0.233967 0.398643 0.604706 0 0.0874388 0.209266 0.610885 0.136169 0.983129 0.509582 0.475013 0.223805 0.357737 0.234705 0.610232 0.400083 0.260863 0.300782 0.962906 0.87101 0.975541 0.556901 0.612048 0.121426 0.241095 1 0.550872 0.361601 0.140135 0.0792129 0.550858 0.30535 0.741252 0.680811 0.398025 0.26036 0.276632 0.557327 0 0.756917 0.140996 0.871542 0.0179288 0.172985 0.366405 0.20357 0.997467 0.20432 0.613273 0.502847 0.294178 0.290885 0.896954 0.406106 0.798006 0.720575 1 0.411778 0.430791 0.582561 0.848896 0.660299 0.493365 0.172116 0.312247 0.604418 0.239698 0.0526957 0.825891 0.816067 0.428667 0.469283 0.117379 0.568076 0.171593 0.823761 0.426555 0.414324 0.280576 0.487822 0.674178 0.997727 0.361888 1 0.131816 0.449762 0.761706 0.290416 0.66554 0.955687 0.434983 0.76172 0.180829 0.430116 0.626303 0.498202 0.135893 0.518985 0.420885 0.68126 0.55246 0.941315 0 0.191792 0.690938 0.363924 0.318237 0.84966 0.865332 0.550681 0.28694 0 0.355807 0.525608 0.676618 0.727105 0.168159 0.464612 0.905909 0.748139 0.406958 0.937904 0.192955 0.504191 0.403682 0.846316 0.849898 0.132871 0.333393 0.321749 0.738625 0.854796 0 0.652127 0.700604 0.406781 0.273225 0.415837 0.959347 0.594722 0.194611 0.857433 1 0.395764 0.569843 0.380739 0.0299997 0.176388 0.855442 0.878874 0.781818 0.376888 0.829216 0 0.515089 0.858323 0.65481 0.334096 0.0768061 0.0841039 0.530864 0.30342 0.0977342 0.785348 0.0310403 0.512243 0.902124 0.57911 0.586633 
0.239008 0.915172 1 0.903391 0.296437 0.0948387 0.875944 0.377648 0.563212 0.42552 0.316971 0.0917009 0.513536 0.631745 0.211981 0.397207 0.116375 0.815233 0.149268 0.309058 0.323769 0.159058 0.286362 0.898042 0.49207 0.507467 0.176509 0.37268 0.722343 0.569978 0.156577 0.72614 0.814562 0.365127 0.441765 0.126396 0.480129 0.226116 0.722906 0.227971 0.602648 0.37277 0.158537 0.743963 0.225735 0.437687 0.610501 0.747165 0.573214 0.400734 0.010372 0.786185 0.94611 0.284364 0.273492 0 0.295497 0.736958 0.817504 0.478717 0.623949 0.0888485 0.423877 0.569377 0.668735 0.563028 0.801105 0.91821 0.782017 0.284311 0.278769 0.732932 0.604019 0.378734 0.00219024 0.668881 0.216065 0.826392 0.391746 0.97247 0.616596 0.192441 0.0864803 0.711309 0.477028 0.764684 0.638809 0.602547 0.584821 0.401879 0.907201 0 0.29575 0.860504 0.027366 0.507216 0.413348 0.109285 0.404535 0.590849 0.93707 0.728267 0.326751 0.210223 0.503929 0.57591 0.581511 0.223462 0.556976 0.120843 0.382744 0.919844 0.0771364 0.17963 0.804175 0.253409 0.974769 0.84042 0.907419 0.564506 0.692987 0.12736 0.697974 0.645132 0.989979 0.626632 0.849646 0.496365 0.495755 0.309906 0.0421337 0.210293 0.687888 0.52101 0.93693 0.241083 0.825404 0.368718 0.39849 1 0.837246 0.0914442 0.663869 0.18994 0.146707 0.576449 0.142954 0.626679 0.24137 0.335484 0.957479 0.052152 0.785595 0.457231 0.0806296 0.253933 0.173984 0 0.744866 0.27351 0 0.478661 0.479822 0.142891 0.0657057 0.800502 0.576005 0.315613 0.725998 0.250707 0.890489 0.165188 0.730319 0.831841 0.275062 0.403226 0.33825 0.513482 0.0788338 0.296018 0.781659 0.0843473 0.577324 0.673529 0.920078 0.467467 0.295248 0.507382 0.404633 0.60319 0.793455 0.79657 0.626935 0.831019 0.402467 0.142143 0.411321 0.262742 0.27089 0.78384 0.271726 0.30605 0.23605 0.493238 0.256853 0.383625 0.103902 0.248701 0.441359 0.313852 0.191627 0.32837 0 0.531161 0.572002 0.473092 0.0418384 0.49165 0.903616 0.0831675 0.724887 0.647637 0.288237 0.848 0.630818 0.672172 0.938448 0.571337 0.862511 
0.73896 0.319358 0.734621 0.243049 0.108589 0.644119 0.156208 0.259458 0.605984 0.0547533 0.583921 0.925387 0.254937 0.899807 0.747916 0.658772 0.944058 0.326004 0 0.861312 0.269578 0.326378 0.359037 0.911631 0.0796648 0 0.230511 0.909878 0.596074 0.21523 0.113381 0.256497 0.869933 0.159016 0.903137 0.371882 0.0881551 0.671735 0.899973 0.229775 0.246474 0.369482 0.912629 0.668335 0.391088 0.6659 0 0.581748 0.284456 0.842777 0.622927 0.653579 0.692306 0.888249 0.51188 0.172179 0.311282 0.0754732 0.0479486 0.712202 0 0.388036 1 0.0583323 0.645579 0.702478 0.538037 0.487402 0.671183 0.630218 0.08434 0.798677 0.0573745 0.866658 0.276536 0.072633 0.041965 0.145228 0.254586 0.862531 0.651842 0.439207 0.101222 0.565648 0.674498 0.718547 0.650816 0.186083 0.727824 0.0303839 0.936769 0.898019 0.493664 0.66707 0.506279 0.208 0.223203 0.171236 0.718376 0.213641 0.738433 0.94565 0.609653 0.457264 0.139062 0.247956 0.798396 0.634593 0.380885 0.379576 0.00543062 0.0293405 0.953939 0.486393 0.11586 0.588314 0.617771 0.232577 0.636963 0.229993 0.0517572 0.758067 0.886363 0.599955 0.775585 0.81829 0.146311 0.722691 0.548937 0.607738 0.200372 0.365903 0.330924 0.110552 0.741396 0.350148 0.114843 0.685616 0.74937 0.699367 0.43767 0.0823239 0.867424 0.0404768 0.872635 0.550958 0 0.648677 0.859459 0.82558 0.238941 0.436044 0.0965092 0.910697 0.638507 0.57271 0.120895 0.524034 0.827652 0.761607 0.218827 1 0.759148 0.0885961 0.0670585 0.691913 0.0102561 0 0.290778 0.163493 0.394162 0.668375 0.909158 0.619303 0.812418 0.616245 0.360517 0.236003 0.266898 0.985371 0.651658 0.741797 0.777412 0.789428 0.769303 0.714541 0.569994 0.00139704 0.727849 0.0210768 0.989712 0.777713 0.0504648 0.22494 0.880971 0.866149 0.915986 0.675161 0 0.424341 0.965175 0.252298 0.269474 0.881793 0.270473 0 0.427313 0.840991 0.659739 0.470469 0.160138 0.436211 0.156012 0.439143 0.877725 0.305847 0.854074 0.981372 0.153542 0.804729 0.60337 0.974482 0.517156 0.176816 0.0646905 0.505628 0.295401 0.128325 0.469338 
0.511181 0.378637 0.287247 0.294312 0.0633713 0.307234 0.950255 1 0.608424 0.190323 0.156005 0.998255 0.295739 0.294651 0.542346 0.713182 0.114976 0.520125 0.29872 0.600991 0.882621 0.327421 0.146721 0.741674 0.224803 0.308885 0.540077 0.210202 0.264205 0.799528 0.148039 0.583417 0.541653 0.799017 0.159774 0.111952 0.989132 0.640114 0.0213347 0.0312183 0.657693 0.95994 0.114202 0.984531 0.0931107 0.232871 0.00814978 0.134435 0.86188 0.729001 0.0506886 0 0.76841 0.585293 0.406052 0.873716 0.137285 0.853137 0.727657 0.709973 1 0.267739 0.587304 0.13993 0.94606 0.468354 0.203672 0.322949 0.587715 0.865035 0.664551 0.735428 0.0813444 0.0265249 0.859721 0.797303 1 0.889932 0.956388 0.877212 0.987094 0.425892 0.850126 0.899032 0.92923 0.235454 0.316261 0.6821 0.586705 0.5006 0.0364643 0.553272 0.904658 0.794843 0.589154 0.232954 0.81731 0.376122 0.856339 1 0.645036 0.400775 0.849321 0.413208 0.79977 0.195959 0.609432 0.198925 0.854482 0.296937 0.251226 0.961762 0.241795 0.738492 0.0268367 0.571518 0.346888 0.925848 0.73886 0 0.202616 0.324118 0.17481 0.837763 0.400746 0.8172 0.934215 1 0.398341 0.857637 0.600282 0.422307 0.0862065 0.791226 0.968559 0.526177 0.0427097 0.948711 0.725047 0.579799 0.597823 0.892131 0.748656 0.154229 0.42779 0.376825 0.998588 0.709906 0.276036 0.706641 0.256258 0.688815 0.751751 0 0.610468 0.590131 0.366598 0.803413 0.364356 0.226297 0.389678 0.359143 0.166852 0.471223 0.572838 0.839217 0.56946 0.0471315 0.777792 0.299438 0.184816 0.229146 0.760828 0.482736 0.957715 0.0167182 0.406562 0.640056 0.0475687 0.00183125 0.324508 0.799905 0.408062 0.557599 0.632191 0.22273 0.0720132 0.910051 0.415391 0.833088 0.256525 0.954667 0.632297 0.878793 0.911248 0.257801 0.967223 0.94081 0.975791 0.477154 0.19637 0.444436 0.326344 0.252479 0.896481 0.189841 0.814539 0.948918 0.00514712 0.56657 0.157999 0.92561 0.731629 0.776023 0.53737 0.669847 0.489511 0.640377 0.224958 0.111622 0.853352 0.753762 0.631197 0.291993 0.105613 0.749509 0.686399 0.0350172 
0.534295 0.70122 0.373189 1 0.281413 0.0791625 0.739431 0.952297 0.394622 0.062807 0.313726 0.659228 0.749087 0.587226 0.142467 0.488619 0.679641 0.170616 0.403042 0.652634 0.664939 0.167669 0.519313 0.508293 0.929202 0.342954 0.670915 0.968039 0.860257 0.617782 0.828704 0.216851 0 0.739002 1 0.168796 0.290772 0.398884 0.966073 0.688487 0.441061 1 0.478506 0.0335651 0.240692 0.182656 0.389662 0.752435 0.477322 0.814267 0.477765 0.455599 0.231689 0.869753 0.128831 0.518253 0.662904 0.917799 0.01836 0.834596 0.898995 0.19805 0.0252794 0.812178 0.83901 0.497361 0.287447 0.236995 0.445083 0.634419 0.362533 0.344115 0.535221 0.0430642 0.329523 0.948785 0.433089 0.364149 0.698952 0.594473 0.121743 0.626377 0.954248 0.918641 0.493694 0.00747989 0.0898052 0.95054 0.469949 0.530031 0.188234 0.691533 0.326951 0.968009 0.0832345 0.224534 0.0843252 0.512815 0.746119 0.72099 0.357847 0.704036 0.421796 0.522535 0.0294463 0.524778 0.458994 0.423547 0.340483 0.667939 0.827541 0.273676 0.0450387 0.529892 0.264537 0.656787 0.167289 0.224157 0 0.389117 0.441518 0.929516 0.580362 0.271967 0.788 0.89016 0.243347 0.024319 0 0.203791 0.45851 0.990839 0.204914 0.90579 0.803512 0.375907 0.985053 0.560544 0.718864 0.938678 0.854695 0.226606 0.615552 0.173423 0.553986 0.566364 0.195868 0.326222 0.724256 0.813859 0.375437 0.818707 0.227776 0.201845 0.415825 0.361105 0.102261 0.333061 0.48944 0.258756 0.811946 0.143309 0.64286 0.385677 0.708867 0.999634 0.673293 0.729527 0.606221 0.815581 0.31559 0.76209 0.63843 0.70334 0.219634 0.449642 0.695265 0.230679 0.306932 0.943569 0.160751 1 0.0823068 0.41675 0.136231 0.996079 0.446617 0.37543 0.139763 0.478108 0.759443 0.637716 0.724107 0.567211 0.292577 1 0.190751 0.240388 0 0 0.320797 0.304197 0.861363 0.433471 0.448861 0.57247 0.271163 0.569164 0.672589 0.865826 0.93799 0.57718 0.733669 0.185933 0.562117 0.0533693 0.716708 0.715579 0.754203 0.260235 0.784356 0.496944 0.05935 0 0.155741 0.994507 0.399348 0.610821 0.213002 0.965966 0.900431 0.310612 
0.714093 0.931311 0.10189 0.869849 0.702831 0.541532 0.330453 0.0469248 0.517277 0.303269 0.297995 0.543462 0.8249 0.495827 0.258198 0.418721 0.0900385 0.477316 0.4591 0.605923 0.589221 0.935693 0.922491 0.303356 0.226069 0.184548 0.510723 0.972421 0.56412 0.39073 0.147153 0.663075 0.126559 0.0919864 0.316596 0.217459 0.0721397 0.21436 0.223414 0.116979 0 0.466611 0.106298 0.0315728 0.842114 0.519748 0.620214 0.560638 0.858775 0.982261 0.493506 0.827395 0.767793 0.788494 0.586177 0.873227 0.9339 0.151018 1 0.905795 0.896603 0.724366 0.791374 0.723105 0.571217 0.901437 0.233872 0.0324547 0.128727 0.486491 0.151821 0.091492 0.721567 0.0663835 0.253863 0.130686 0.34529 1 0.8548 0.480745 0.212094 0.477198 0.210853 0.517512 0.947432 1 0.678441 0.718086 0.293088 0.593803 0.0483014 0.46594 0.590653 0.739079 0.99806 0 0.044243 0.686621 0.762842 0.518468 0.493545 0.267397 0.340418 0.540815 0.93238 0.695593 0.815637 0.610797 0.11459 0.435064 0.00430222 0.25931 0.898671 0.865894 0.57936 0.332907 0.785209 0.890976 0.339867 0.0846227 0 0.841619 0.157019 0.967603 0.426856 0.401337 0.701152 0.491751 0.67712 0.360645 0.329593 0.43152 0.0815198 0.967353 0.70833 0.719 0.546353 0.133276 0.315513 0.7972 0.467744 0.241088 0.39021 0.912214 0.691256 0.139699 0.909796 0.698476 0.772927 1 0.867283 0.858752 0.84355 0.990667 0.255881 0.903186 0.183249 0.382867 0.261182 0.703984 0.184066 0.750379 0.239594 0.906374 0.524758 0.0313714 0.205195 0.777849 0.508508 0.77678 0.0837286 0.244591 0.18406 0.484663 0.352462 0.203093 0.196245 0.162165 0.893032 0.291196 0.521045 0.322019 0.587046 0.362836 0.107687 0.945234 0.842212 0.493247 0 0.641082 0.417207 0.651967 0.37649 0 0.906886 0.104654 0.226889 0.290446 0.00125487 0.818693 0.747806 0.237715 0.168604 0.130708 0.702976 0.633362 0.984587 0.142912 0.00978676 0.700278 0.0003895 0.704862 0.573295 0.750888 0.996975 0.260984 0.746999 0.964263 0.615939 0.369271 0.752725 0.128891 0.539396 0.104746 0.913962 0.707 0.352723 0.556826 0.844459 0.605656 0.588696 
0.789989 0.565164 0.824869 0.924709 0.0541134 0.544028 0.785941 0.791485 0.460038 0.285689 0.158101 0.641803 0.450705 0.301508 0.678131 0.419058 0.350498 0.642597 0.476407 0.210895 0.236945 0.79917 0.68618 0.505947 0.185717 0.278846 0.370511 0.797927 0.429914 0.876102 0.727962 0.573517 0.100427 0.563496 0.555578 0.768707 0.683397 0.823349 0.213549 0.961931 0.0390487 0.608102 0.454572 0.556162 0.278975 0.847752 0.695701 0.303472 0.794754 0.967533 0.197407 0.62749 0.153161 0.502494 0.158574 0.163065 0.586499 0.823575 0.716 0.716679 0.346773 0.361381 0.801276 0.688799 0.32936 0.803093 0.745937 0.728426 0.0134476 0.84613 0.536959 0.155636 0.516471 0.574377 1 0.2582 0.302005 0.437727 0 0.546378 0.229683 0.714645 0.802125 0.680089 0.523108 0.382544 0.826352 0.858969 0.347826 0.512407 0.466729 0.0267147 0.190238 0.748083 0.840016 0.703091 0.343924 0.721605 0.0162748 6.27626e-05 0.509712 0.05016 0.899757 0.276459 0.920526 0.414331 0.144483 0.208125 0.154885 0.838989 0.820204 0.00618495 0.0523178 0.827971 0.170102 0.545494 0.655425 0.0775235 0.0405577 0.817673 0.865889 0.935564 0.085664 0.67851 0.704403 0.657456 0.603839 0.118135 0.515755 0.605297 0.43486 0.876447 0.218668 0.129886 0.720246 0.413984 0.837678 0.348878 0.219736 0.638907 0.689691 0.996935 0.790209 0.614607 0.600658 0.623708 0.129626 0.75056 0.835909 0.252579 0.665164 0.0697306 0.0399169 0.78863 0.811398 0.398794 0.840986 0.778657 0.30693 0.0454546 0.105597 0.411427 0.0130188 0.818915 0.721222 0.0576433 0.520113 0.0733635 0.633388 1 0.376151 0.843024 0.495214 0.299434 0.583873 0.292243 0.699955 0.700421 0.808675 0.857222 0.289174 0.615676 0.540845 0.117672 0.504714 0.624277 0.735964 0.684717 0.455819 0.229859 0.0363767 0.968135 0.524256 0.452384 0.475385 0.74661 0.445932 0.772716 0.74096 0.105645 0.0194945 0.373611 0.993392 0.6922 0.838975 0.954749 0.34159 0.303391 0.431642 0.700263 0.633877 0.580735 0.93851 0.326877 0.0685188 0.279487 0.873102 0.797739 0.523685 0.954579 0.440676 0.396762 0.571659 0.643654 
0.131164 0.394215 0.475709 0.412828 0.100211 0.548162 0.587568 0.790792 0.272978 0.526081 0.703175 0.351529 0.619388 0.612339 0.850277 0.138868 0.71853 0.289647 0.751533 0.914464 0.629638 0.246669 0.939949 0.432718 0.166728 0.914258 0.165066 0.271084 0.906367 0.732348 0.130177 0.00240978 0.0728549 0.41499 0.11486 0.091888 0.201847 0.18262 0.281518 0.400194 0.266443 0.0340112 0.557175 0.0588534 0.330546 0.445337 0.170937 0.935472 0.290005 0.822787 0 0.814714 0.455749 0.0537973 0.180058 0.169122 0.36561 0.378295 0.903589 0.835918 0.348825 0.0999119 0.667449 0.624351 0.778529 0.231018 0.133546 0.0879869 0.283388 0.910606 0.188867 0.0477961 0.0846276 0.718836 0.819208 0.48014 0.106894 0.59287 0.836407 0.173347 0.736454 0.270441 0.416576 0.156485 0.882583 0.00745076 0.197209 0.811276 0.949837 0.452207 0.161502 0.748039 0.0105772 0.501899 0.479784 0.851572 0.594034 0.823117 0.810704 0.715364 0.611396 0.5855 0.700438 0.0531724 0.437868 0.578132 0.293185 0.886289 0.00701511 0.853293 0.00253165 0.310659 0.627202 0.160847 0.290725 0.318854 0.501025 0.556674 0.898112 0.0149284 0.209744 0.66862 0.0487263 0.759903 0.449934 0.898421 0.223732 0.444416 0.364648 0.448217 0.477937 0.998779 0.367276 0.17631 0.551589 0.106444 1 0.0820614 0.815802 0 0.608321 0.61957 0.493909 0.790189 0.130064 0.427193 0.735489 0.358141 0.858444 0.485795 0.297482 0.432993 0.721937 0.213702 0.510819 0.442416 0.789216 0.105681 0.000277775 0.888328 0.0191225 0.89075 0.91388 0.826964 0.708215 0.382246 0.448096 0.951456 0.347062 0.553479 0.48058 0.585725 0.680167 0.30932 0.647474 0.64603 0.943655 0.213725 0.663503 0.679484 0.772614 0.283488 0.443665 0.522868 0.277173 0.387903 0.487071 0 0.465454 0.335435 0.910503 0.866573 0.784878 0 1 0.0945258 0.434631 0.0142332 0.690962 0.0994329 0.296216 0.914745 0.0245229 0.481655 0.0296998 0.644245 0.726474 0.130561 0.244588 0.317411 0.465497 0.0995175 0.712534 0.190924 0.358483 0.195801 0.808431 0.214762 0.49171 0.78816 0.718583 0.349294 0.681649 0.563882 0.41663 
0.820906 0.777827 0.177804 0.640407 0.00611607 0.326953 0.807907 0.0624953 0.0802459 0.705059 0.533677 0.083436 0.584356 0 0.732931 0.214946 0.843024 0.373107 0.489769 1 0 0.698879 0.647229 0.133254 0.866477 0.0594176 0.874049 0.381272 1 0.324127 0.596004 0.377625 0.0992456 0.176599 0.126712 0.526546 0.901933 0.771087 0.427406 0.59753 0.944848 0.552589 0.159215 0.81202 0.782609 0.748384 0.661271 0.482723 0.0226624 0.740467 0.918641 0.956753 0.193413 0.848828 0.844897 0.273914 0.848709 0.653059 0.502366 0.221801 0.9129 0.164826 0.576603 0.548672 0.541158 0.716705 0.0973726 0.1609 0.615798 0.8763 0.354793 0.63813 0.743122 0.451543 0.62555 0.376397 0.374126 0.0968616 0.206473 0.668251 0.119859 0.336447 0.54851 0.00897785 0.765716 0.173498 0.158327 0.420559 0.43074 0.586139 0.0282846 0.408773 0.254664 0.756508 0.757189 0.610839 0.172106 0.251947 0.938188 0.00989393 0.582166 0.858632 0.911547 0.680278 0.518161 0.310931 0.34426 0.685834 0.15431 0.0924373 0.946413 0.965795 0.391714 0.521407 0.481664 0.243662 0.615077 0.242137 0.857321 0.017655 0.238548 0.726121 0.418483 0.583739 0.12039 0.279457 0.559475 0.7433 0.908386 0.563246 1 0.636318 0.611421 0.172279 0.0272857 0.330979 0.0422824 0.686969 1 0.136075 0.779831 0.694485 0.226348 0.235072 0.0109943 0.838911 0.207928 0.259248 0.330247 0.126031 0.808946 0.481075 0.347001 0.746549 0.871588 0.109848 0.194697 0.204778 0.863239 0.744502 0.789675 0.0210467 0.822836 0.647799 0.126653 0.590857 0.636902 0.67994 0.562241 0.504862 0.234113 0.140098 0.661276 0.54546 0.480488 0.182692 0.491008 0.975037 0.192925 0.415827 0.300727 1 0.570161 0.196506 0.575448 0.244202 0.834116 0.173746 0.929794 0.52332 0.0801093 0.105931 0.272343 0.171984 0.447857 1 0.538159 0.707788 0.900444 0.933144 0.381468 0.189354 0.398282 0.939396 0.887739 0.610138 0.0461196 0.160596 0.566982 0.230729 0.618397 0.0343528 0.312083 0.875182 0.073511 0.573988 0.743889 1 0.96651 0.534899 0.150903 0.30199 0.502199 0.377353 0.0760824 0.811407 0.450294 0.0922108 0.840392 
0.385266 0.409732 0.119272 0.317491 0.473759 0.414568 0.673307 0.261959 0.0358483 0.951329 0.556937 0.291899 0.840201 0.201589 0.67934 0.895897 0.239604 0.578696 0.153124 0.0490032 0.00498848 0.251066 0.118098 0.413486 0.192746 0.499923 0.669342 0.418137 0.464045 0.163027 0.560162 0.0730115 0.640394 0.364546 0.919263 0.635084 0.915677 0.932628 0.327874 0.870642 0.711995 0.244734 0.0491378 0.289657 0.536678 0.103596 0.444793 0.748131 0.651005 0.414582 0.651757 0.79768 0.500665 0.281701 0.601331 0.271985 0.837421 0.359488 0.675535 0.298682 0.562402 0.110423 0.366978 0.860008 0.646601 0.0668919 0.445765 0.327286 0.407297 0.164961 0.134017 0.818256 0.701603 0.814439 0.893743 0.161526 0.204239 0.682233 0.72729 0.505042 0.704585 0.379894 0.217358 0.4886 0.434727 1 0 0.852013 0.468144 0.767617 0.278629 0.543626 0.791236 0.753654 0.194885 0.371795 0.616907 0.173108 0.625034 0.443855 0.706814 0.657194 0.0948187 0.225672 0.147534 0.467023 0 0.176367 0.0599112 0.549802 0.417718 0.178197 0.797769 0.963203 0.108557 0.118699 0.926156 0.561666 0.975883 0.619253 0.969685 0.491639 0.54542 0.00186232 0.576968 0.372571 0.887834 0.87119 0.294832 0 0.855025 0.222109 0.820725 0.432027 0.210105 0.535708 0.211857 0.165369 0.602548 0.109582 0.344786 0.134212 0.401596 0.960944 0.490286 0.258156 0.536257 0.298093 0.816041 0.291332 0.877932 0.617275 0.324224 0.145458 0.501935 0.0932056 0.920742 0.420755 0.169594 0.476383 0.764494 0.868173 0.104633 0.45847 0.853505 0.207887 0.404478 0.738426 0.418817 1 0.802956 0.853891 0.902666 1 0.321221 0.937522 0.418774 0.509709 0.644773 0.548554 0.831453 0.871754 0.328343 0.651454 0.710064 0.238763 0.91482 0.38769 0.55006 0.416535 0.27508 0.366154 0.703572 0.478451 0.40353 0.333733 0.340535 0.281167 0.192707 0.145851 0 0.586027 0.506701 0.393833 0.551982 0 0.276368 0.879961 0.106441 1 0.470707 0 0.312346 0.805967 0.0950172 0.979173 0.578982 0.304703 0.899173 0 0.359878 0.523727 0.936189 0.654126 0.33195 0.371021 0.699178 0.0650665 0.588887 0.107623 
0.581018 0.745343 0.77825 0.974186 0.84701 0.442974 0.739456 0.483002 0.236603 0.433722 0.291528 0.0863163 0.46994 0.902404 0.484977 0.656503 0.872781 0.603203 0.451083 0.13577

best_right.txt

bash

0.916306 0.873191 0.0276138 0.902038 0.350969 0.774623 0.20447 0.894456 0.847921 0.401186 0.739859 0.153806 0.748671 0.136722 0.341239 0.685225 0.160383 0.0348778 0.325621 0.161261 0.681228 0.555104 0.16435 0.177401 0.161364 0.668386 0.443836 0.012553 0.535797 0.968595 0.916411 0.352902 0.0578949 0.789893 0.80648 0.852365 0.905078 0.345864 0.0886015 0.602836 0.931834 0.740036 0.227732 0.476618 0.547557 0.240846 0.841453 0.698014 0 0.972908 0.542277 0.179576 0.0114305 0.578907 0.833037 0.866768 0.0605532 0.753787 0.0994637 0.469366 0.388348 0.226076 0.201368 0.325246 0.757908 0.0555237 0.429682 0.334311 0.891451 0.444166 0.0607759 0.459109 0.242393 0.511354 0.598846 0.590225 0.321381 0.502183 0.215426 0.72621 0.253717 0.901435 0.957462 0.230566 0.110926 0.0682452 0.406016 0.761315 0.570127 0.839884 1 0.718434 1 0.997315 0.47129 0.762899 0.970339 0.521664 0.64674 0.908857 0.0506133 0.158556 0 0.00215961 0.653057 0.696343 0.568081 0.108975 0.162659 0.738249 0.418077 0.578835 0.399347 0.745037 0.455721 0.429955 0.836494 0.74621 0.073996 0.872428 0.832852 0.0771859 0.203666 0.514263 0.67754 0.848382 0.0932117 0.430429 0.930387 0.204481 0 0.69153 0.265273 0.273854 0.524134 0.802119 0.910687 0.14226 0.527884 0.808321 0.678232 0.370673 0.959973 0.158824 0.904921 0.518605 0.361596 0.564325 0.131015 0.580558 0.332855 0.15057 0.949939 0.753481 0.187509 0 0.0673106 0.985154 0.458956 0.444348 0.262756 0.328042 0.100238 0.966145 0.524724 0.0805108 0.478773 0.206862 0.48341 0.764748 0.250764 0.300449 0.733511 0.8433 0.15956 0.820687 0.564436 0.953434 0.810622 0.219848 0.8498 0.855912 0.770771 0.939916 0.663531 0.938993 0.569148 0.207504 0.64035 1 0.287966 0.251335 0.704922 0.697121 0.484285 0.357042 0.971219 0.427791 0.531339 0.588409 0.663802 0.641301 0.164763 0.249544 0.911619 0.529805 0.703455 0.91752 0.655403 0.21043 0.682171 0.25399 0.366219 0.213028 0 0.594871 0.100334 0.137208 0.169678 0.318777 0.547744 0.883107 0.420683 0.784335 0.107025 0.254304 0.852427 0.187673 
0.527539 0.486114 0.878726 0.797876 0.259414 0.736765 0.471524 0.00553327 0.54012 0.304951 0.403159 0.262279 0.515842 0.818423 0.228987 0.774481 0.659092 0.279174 0.30551 0.623011 0.422512 0.460805 0.502076 0.346933 0 0.0965623 0.966531 0.326348 0.825799 0.801201 0.00308955 0.216477 0.482084 0.697285 0.838265 0.509271 0.218044 0.667849 0.958028 0.528341 0.69088 0.731553 0.322705 0.235552 0.785828 0.912077 0.752414 0.607384 0.773782 0.326848 0.149399 0.164113 0.99864 0.86516 0.753983 0.194205 0.352641 0.000321255 0.629206 0.604277 0.74707 0.660956 0.714778 0.944433 0.128331 0.375113 0 0.0943292 0.498191 0.341142 0.331095 0.333604 0.0137387 0.205683 0.190804 0.4096 0.127879 0.56098 0.344596 0.547221 0.987881 0.725439 0.304682 0.361964 0.814017 0.521566 0.632356 0.194695 0.561888 0.433214 0.623001 0.645326 0.531498 0.445317 0.846894 0.257271 0.420337 0.438042 0.0518385 0.0077708 0.567377 0.318202 0.340778 0.183584 0.790252 0.90334 0.843566 0.981256 0.105111 0.727944 0.73877 0.949671 0.842596 0.535685 0.93658 0.0532531 0.713409 0.271169 1.15349e-06 0.402687 0.179167 0.520844 0.279007 0.298904 0.826625 0.278207 1 1 0.245848 0.890784 0.81243 0.731167 0.279312 0.244613 0.568899 0.157384 0.127685 0.741236 0.645035 0.596215 0.70922 0.239985 0.229088 0.0103593 0.999626 0.0888969 0.241113 0.386235 0.748949 0.598841 0.575531 0.316653 0.831594 0.504284 0.796073 0.495179 0.488529 1 0.789801 0.478933 0.425334 0.45803 0.582978 0.0965413 0.863506 0.356871 0.761718 0.604167 0.847348 0.838896 0 0.385305 0.874213 0.363777 0.357405 0.480873 0.680183 0.999293 0.0501021 0.569744 0.545722 0.814405 0.374277 0.666421 0.834818 0.245202 0.434729 0.41244 0.149177 0.962704 0.779369 0.768224 0.0553396 0.559368 0.165672 0.163549 0.460181 0.868985 0.654769 0.527556 0.588537 0.0606413 0.325512 0.637828 0.189753 0.46587 0.778267 0.977616 0.619831 0.857495 0.743027 0.649236 0.302553 0.520036 0.151107 0.601148 0.812021 0.397656 0.378882 0.259555 0.489737 0.0649311 0.10855 0.288787 0.086889 0.428068 
0.44235 0.849242 0.119045 0.970358 0.570424 0.876733 0.927079 0.504029 0.370474 0.0591247 0.561061 0.103793 0.679195 0.459565 0.519171 0.485887 0.142659 0.374245 0.911197 0.203421 0.0723892 0.402443 0.668904 0.900235 0.207359 0.243585 0.880719 0.92022 0.892212 0.182842 0.305699 1 0.499032 0.246947 0.620816 0.283149 0.447862 0.52468 0.405799 0.136538 0.0679243 0.804048 1 0.741222 0.146206 0.391341 0.400332 0.755154 0.487785 0.10393 0.680114 0.780295 0.322389 0.309637 0.289538 0.609618 0.786873 0.437026 0.714317 0.796111 0.799496 0.705482 0.675721 0.63561 0.717209 0.0271122 0.281308 0.285148 1 0.902418 0.0530592 0.205879 0.605902 0.267737 0.316096 0.341869 0.89463 0.357878 0.00948036 0.744798 0.899999 0.817846 0.274702 0.855833 0.377191 0.784522 0.798553 0.43314 0.90266 0.827148 0.422759 0.632717 0.350867 1 0.885453 0.0876703 0.451503 0.983092 0 0.337176 0.767341 0.822578 0.303432 0.713491 0.341314 0.841789 0.318751 0.198099 0.599202 0.652194 0.406686 0.907712 0.687079 0.371518 0.80744 0.383488 0.641843 0.275468 0.407651 1 0.525025 0.257654 0.578803 0.999888 0.431468 0.068141 0.561734 0.177926 1 0.55274 0.637335 0.667139 0.249544 0.154829 0.701078 0.908616 0.442106 0.215628 0.0150956 0.289356 0.00404386 0.888542 0.883967 0.549025 0.187202 0.247108 0.658979 0.661839 0.342586 0.237502 0.369092 0.00930012 0.558748 0 0.369898 0.526952 0.730133 0.158413 0.20346 0.24322 0.690432 0.235968 0.246812 0.716262 0.89168 0.428707 0.734031 0.20848 0.305254 1 0.849638 0.249741 0.334683 0.883519 0.73624 0.262904 0.587826 0.616938 0.965953 0.530879 0.672746 0.157597 0.407926 0.999483 0.654895 0.88972 0.274441 0.0165663 0.774308 0.761135 0.937303 0.749833 0.0851907 0.740271 0.473146 0.444762 0.992824 0.294083 0.841354 0.24838 0.736208 0.73902 0.670038 0.320966 0.920491 0.751143 0.602767 0.911277 0.856707 0.956998 0.554406 0.406718 0.381038 0.00135911 0.376708 0.582971 0.141763 0.16624 0.181289 0.52588 0.444297 0.366092 0.240035 0.828312 0.897852 0.746359 0.948442 0.23527 0.605597 
0.874764 0.165921 0.77597 0.156539 0.482778 0.910062 0.816355 0.837938 0.918624 0.604343 0.783846 0.268315 0.190605 0.427693 0.00377726 0.82824 0.0299622 0.301129 0.837113 0.385905 0.633447 1 1 0.41948 0.732385 0.723204 0.637973 0.526906 0 0.0953296 0.371289 0.709915 0.714498 0.909909 0.411169 0.0379975 0.622909 0.680342 0.00450156 0.0978793 0.386558 0.157667 0.309189 0.931113 0.178773 0.826876 0.658843 0.490455 0.388317 0.053018 0.427566 1 0.522575 0.258057 1 0.925533 0.669426 0.0511857 0.543467 0.861447 0.784422 0.0576807 0.679138 0.154325 0.334374 0.132587 0.447964 0.677424 0.8338 0.96073 0.790164 0.175634 0.427654 0.302339 0.638558 0.877099 0.340701 0.740763 0.526214 0.792045 0.533262 0.863704 0 0.00421524 0.56697 0.198182 0.569231 0.0990852 0.774508 0.373616 0.918784 0.0410205 0.473961 0.691576 0.123678 0.592646 0.178859 0 0.373001 0.37324 0.714411 0.787059 0.337271 0.0215245 0.588588 0.690878 0.315771 0.99793 0.704718 0.857971 0.429744 0.810941 0.455384 0.773384 0 0.787962 0.381908 0.514574 0.220073 1 0.0347921 0.97536 0.824006 0.102347 0.197196 0.378864 0 0.73617 0.596417 0.136114 0.944432 0.443675 0.0435947 0.375406 0.530342 0.0139287 0.0230834 0.0495783 0.923336 0.402737 0.472439 0.635763 0.336596 0.143072 0.755075 0.376674 0.634195 0.478072 0 0.348279 0.784779 0.481428 0.476411 0.332183 0.530692 0.518129 0.486687 0.581884 0.561078 0.780402 0.625715 0.521619 0.174916 0.620907 0.3801 0.912005 0.192327 0.00801465 0.217356 0.505048 0.372913 0 0.495046 0.840119 0.5448 0.744699 0.610325 0.15822 0.994345 0.0917828 0.680514 0.398939 0.0130112 0.11643 0.145707 0.456711 0.130993 0.193048 0.854106 0.139463 0.643868 0.645347 0.952762 0.0188076 1 0.957282 0.295766 0.525971 0.32665 0.737184 0.351013 0.355306 0.90024 0.94575 0.105302 0.580474 0.992952 0.0403145 0.177242 0.00102368 0.405668 1 0.639635 0.809325 0.713687 0.1583 0.829962 0.185804 0.0974455 0.258584 0.0290751 0.678783 0.137034 0.196182 0.95421 0.999961 0.0723852 0.504593 0.63145 0.578195 0.453947 0.394245 
0.500165 0.966742 0.589219 0.442894 0.931933 0.636154 0.93165 0.88347 0.0829427 0.905532 0.101124 0.725128 0.318261 0.389117 0.823579 0.794961 0.789022 0.344216 0.548556 0.821998 1 0 0.34745 0.770156 0.535432 0.950682 0.67422 0.221073 0.716407 0.743898 0.174671 0.355238 0.648478 0.910067 0.795796 0.566248 0.982123 0.554477 0.548479 0.883991 0.419637 0.996462 0.13844 0.918897 0.660583 0.567125 0.362391 0.927542 0.816204 0.873585 0.0353041 0.524143 1 0.907148 0.835521 0.808109 0.818335 0.33121 0.57803 0.116869 0.148951 0.395284 0.625426 0.921563 0.671073 0.7411 0.896917 0.313101 0.534794 0.762992 0.404471 0.748143 0.521033 0.331807 0.269183 0.798818 0.983577 0.183519 0.422396 0.332009 0.438339 0.000947228 0.896835 0.307602 1 0.866309 0.724596 0.458596 0.303714 0.700186 0.505249 0.943438 0.10015 0.698811 0.14822 0.574957 0.459353 0.907861 0.624306 0.523819 0.690772 0.551198 0.936137 0.354279 0.20809 0.100953 0.203323 0.56549 0.697505 0.851949 0.227921 0.326284 0.774112 0.0663896 0.52855 0.900973 0.0883954 0.327496 0.46617 0.448517 0.611191 0.537013 0.432689 0.580501 0.437163 0.860779 0.0274239 0.709241 0.157561 0.244255 0.388498 0 0.579243 0.0210123 0.0372137 0.0861612 0.175129 0.269675 0.0468194 0.170535 0.396007 0.583421 0.953092 0.915315 0.466925 0.406795 0.168436 0 0.300726 0.686152 0.422499 0.712784 1 0.216621 0.615188 0.804422 0.808484 0.330392 0.658523 0.458073 0.938913 0.643602 0.738231 0.337502 0.341673 0.217955 0.861684 0.580088 0.24644 0.0658224 0.276762 0.307064 0.0874832 0.00307963 0.484364 0.826759 0.922179 0.253056 0.456183 0.526538 0.194285 0.871252 0.497388 0.170301 0.436653 0 0.492523 0.187194 0.895138 0.334335 0.669522 0.813454 0.150387 0.779692 0.624342 0.239763 0.65642 0.825986 0.914667 0.961265 0.892085 0.921411 0.628606 0.316538 0.324244 0.875204 0.960047 0.876538 0.767315 0.0880148 0.00146803 0.479811 0 0.455964 0.199968 0.50542 0.0211152 0.526172 0.455992 0.935068 0.542565 0.997471 0.178302 0.668511 0.0871589 0.181069 0.248295 0.362131 
0.215925 0.623042 0.81694 1 0.902542 0.422703 0.644106 0.119604 0.235579 0.450367 0.501481 0.741258 0.586445 0.653465 0.364525 0.752757 0.998466 0.343949 0.67456 0.560031 0.131523 0.504658 0.0577666 0.76763 0.324379 0.205328 0.179335 0.375063 0.458176 0.0375483 0.644992 0.39372 0.567537 0.396383 0.839188 0.657554 0.1096 0.477263 0.350828 0.0680796 0.905798 0.774868 0.511741 0.415356 0.472946 0.124413 0.775977 0.106149 0.0708385 0.586527 0.892276 0.402807 0.588337 0.999832 1 0.273624 0.572779 0.264046 0.584995 0.584623 0.775267 0.189363 0.471379 0.367181 0.779119 0.474023 0.649173 0.226695 0.203263 1 0.310997 0.981584 0.518224 0.978631 0.717913 0.627003 0.775894 0.561961 0.550535 0.489386 0.262202 0.181404 0.793783 0.314449 0.186609 0.5927 0.544462 0.754129 0.627374 0.108343 0.845907 0.792671 0.232497 0.0567189 0.461912 0.742861 0.644504 0.606657 0.321726 0.313746 0.400698 0.0987155 0.565476 0.299213 0.119181 0.380202 0.134847 0.36185 0.806885 0.359288 0.336476 0.185271 0.989737 0.562447 0.272927 0.31268 0.906397 0.554561 0.448371 0.99665 0.11505 0.398327 0.0755071 0.0851148 0.771636 0.109225 0.339405 0.193945 0.590838 0.456606 0.644517 0.500037 0.52174 0.921949 0.456475 0.221141 0.850401 0.883398 0.116809 0.469776 0.398664 1 0.128817 0.34885 0.433369 0.141263 0.844456 0.939543 0.396859 0 0.254434 0.82864 0.645345 0.28639 0.122948 0.0450718 0.29532 0.275461 0.428535 0.415854 0.512615 0 0.316224 0.102384 0.152087 0.715689 0.946041 0.885375 0.186267 0.503845 0.357185 0.0548665 0.0903459 0.748626 0.561815 0.461212 1 0.177882 0.62658 0.71062 0.867151 0.308961 0.0912104 0.309199 0.537601 0.140033 0.459527 0.3227 0.623139 1 0.0470499 0.91023 0.432053 0.728939 0.270243 0.0775508 0 1 0.545466 0.572788 0.781105 0.136962 0.734327 0.74563 0.990047 0 0.144243 0.0736941 0.0996098 0.407947 0.4909 5.37509e-05 0.311416 0.922202 0.940178 0.237978 0.590054 0.258963 0.373515 0.984664 0.211129 0.353625 0.878386 0.106622 0.815523 0.0895007 0.215004 0.432926 0.146633 0.711174 0.108425 
0.55213 0.375387 0.641905 0.580044 0.852025 0.224312 0.419351 0.78013 0.122001 0.314783 0.38967 0.535281 0.593655 0.917549 0.588761 0 0.970765 0.295372 0.557031 0.250627 0.725838 0.248407 0.365452 0.763125 0.265446 0.0827697 0.803911 0.647416 0.723276 0.706339 0.759961 0.86895 0.522517 0.00615902 0.235193 0.751619 0.81828 0.00185315 0.689494 0.188457 0.752628 0.444163 0.955905 0.217511 0.146441 0.272258 0 0.830789 0.665068 0.357991 0.327141 0.608784 0.112602 0.05211 0.204356 0.483715 0.178335 0.280577 0.385113 0.917754 0.455425 0.682021 0.757722 0.608559 0.762293 0.950874 0.233007 0.320733 0.350466 0.204635 0.119887 0.1829 0.696655 0.919824 0.188299 0.413905 0.937069 0.917358 0.129055 0.993093 0.769708 0.761457 0.876043 0.363177 0.723819 0.979503 0.485221 0.38979 0.874423 0.684536 0.181316 0.187579 0.426512 0.683115 0.0535045 0.950469 0.374692 0.0737289 0.0425442 0.976587 0.485389 0.878772 0.668955 0.0525055 0.775842 0.0494976 0.932239 0.375088 0.0390684 0.764057 0.31555 0.711865 0 0.33537 0.616739 0.142967 0.755849 0.967447 0.000973004 0 0.0900369 0.415054 0.45365 0.913318 0.300816 0.536012 0.98219 0.386211 0.224746 0.999982 0.894475 0.343223 0.525976 0.386561 0.242226 0.87936 0.253936 0.495686 0.271107 0.461843 0.57553 0.60163 0.476797 0.147286 0.238552 0.136008 0.349111 0.0982363 0.3761 0.159939 0.796841 0.999706 1 0.91652 0.125479 0.592341 0.890454 0.969997 0.151574 0.0778459 0.132768 0.573227 0.512652 0.809847 0.257185 0.002182 0.489926 0.157493 0.785512 0.00294071 0.882098 0.655824 0.774046 0.530342 0.543214 0.820767 0.757339 0.414169 0.974039 0.838659 0.0743927 0.192544 0.148338 0.480968 0.47106 0.848416 0.86752 0.559792 0.782708 0.789768 0.555565 0.94453 0.551273 0.716118 0.557305 0.575966 0.465055 0.183626 0.775508 0.578979 0.0756914 0 0.128104 0.753645 0.61761 0.102458 0.534111 0.949157 0.990604 0.838529 0.799256 0.280254 0.967689 0.642873 0.975553 0.807759 0.734584 0.0906803 0.844553 0.817023 0.414261 0.553492 0.0241581 0.524251 0.681862 0.854729 
0.477755 0.906322 0.986323 0 0.425396 0.222278 0.176342 0.43505 0.528786 0.467356 0.392617 0.774989 0.610472 0.947778 0.214379 0.3288 0.842125 0.498493 0.47827 0.818933 0.991053 0.983671 0.846171 0.530844 0.421887 0.27999 0.840349 0.756312 0.0997935 0.729647 0.435697 0.120371 0.552839 0.0788781 0.152115 0.994083 0.0294961 0.886781 0.0694525 0.116455 0.235076 0.429882 0.274086 0.87995 0.247503 0.381276 0.202392 0.278081 0.325065 0.381526 0.558745 0.250653 0.242515 0.933834 0.143409 0.238593 0.961047 0.992045 0.416428 0.141762 0.429262 0.193559 0.833363 1 0.384701 0.817181 0.187339 0.307123 0.216749 0.491413 0.778809 0.342316 0.0522092 0.832338 0.451622 1 0.192665 0.606261 0.31496 0.820236 0.0980446 0.0367273 0.148987 0.484619 0.223899 1 0.827483 0.251228 0.742163 0.387264 0.922375 0.889882 0.320415 0.479852 0.870147 0.489667 0.594917 0.835986 0.355738 0.159018 0.343933 0.753995 0.184556 0.858374 0.32606 0.117663 0.0537386 0.927972 0.990552 0.349224 0.0922258 0.85075 0.315362 0.310894 0.396148 0.797164 0.520847 0.426408 0.235292 0.733057 0.988457 0.990988 0.63092 0.836958 0.574099 0.798782 0.999981 0.821541 0.256725 0.668967 0.765024 0.703571 0.117225 0.568265 0.146989 0.0537488 0.859089 0.14392 0.437047 0.90295 0.413133 0.329809 0.637929 0.299131 0.636405 0.931256 0.00214809 0.150235 0.219838 0.497666 0.824113 0.361098 0.398562 0.0215945 0.887782 0.0832426 0.244249 0.744947 0.689143 0.933982 0.431162 0.43894 0.32837 0.12038 0.693625 0.797359 0.000887867 0.242829 0.173155 0.0586053 0.14155 0.0626069 0.113859 0.134167 0.607875 0.383414 0.269209 0.864718 0.872934 0.203165 0.8864 0.140057 0.51876 0.527931 0.306982 0.953454 0.405225 0.935441 0.787481 0.724593 0.94928 1 0.150266 0 0.540961 0.0986596 0.626131 0.270704 0.0745312 0.740686 0.0559245 0.344795 0.406703 0.718546 0 0.266645 0.144415 0.913188 0.488049 0.199171 0.251717 0.665505 0.124956 0.534937 0.926591 1 0.285126 0.452449 0.104034 0.515205 0.0148141 0.211794 0.883123 0.0739122 0.581172 0.810695 0.517506 0.808948 
0.32649 0.0619982 0.652967 0.930089 0.258591 0.606331 0 0.469853 0.400384 0.757283 0.846898 0.871356 0.851265 0.620787 0.439811 0.234212 0.688172 0.299765 0 0.0684319 0.219576 0.0722892 0.999965 0.0192363 0.417497 0.257999 0 0.386411 0.938486 0.348509 0.929867 0.560081 0.359436 0.184109 0.434404 0.182686 0.469802 0.17619 0.740225 0.859874 0.430422 0.826344 0.27118 0.965174 0.570106 0.621977 0.926897 0.565886 0.258893 0.840284 0.787885 0.314291 0.440217 0.878921 0.704368 0.206427 0.771397 0.160303 0.95326 0.190628 0.929274 0.098383 0.269161 0.807912 0.161053 0.505731 0.799351 0.042438 0.812023 1 0.955093 0.414111 0.400077 0.95142 0.702469 0.781103 0.261055 0.0679934 0.34676 0.77607 0.928005 0.60982 0 0.300493 0.770138 0.781044 0.687174 0.978654 0.496622 0.51439 0.663113 0.536782 0.226837 0.00169598 0.538354 0.319498 0.399436 0.47851 0.751173 0.192665 0.595828 0.724131 0.309314 0.729342 0.940842 0.376767 0.567867 0.893055 0.195434 1 0.803602 0.0471579 0.999972 0.935618 0.977306 0.552425 0.321283 0.845099 0.368463 0.0718521 0.351932 0.82737 0.906714 0.552731 0.591875 0.299968 0.260528 0.624181 0.263095 0.0783473 0.860923 0.823209 0.279004 0.999372 0.242625 0.150516 0.162398 0.594177 0.639298 0.718499 0.598515 0.905489 0.134289 0.159123 0.999478 0.110861 0.084287 0.215826 0.593041 0.814877 0.59511 0.832089 0.523892 1 0.32022 0.573464 0.245514 0.757084 0.147445 0.847117 0.52698 0.189698 0.294661 0.486486 0.445628 0.586601 0.910489 0.467752 0.589636 0.600764 0.515464 0.492937 0.919948 0.738782 0.437745 0.293498 0.816827 0.733063 0.738639 0.566777 0.722434 0.125224 0.817729 0.27872 0.320314 0.0840193 0.78081 0.321459 0.355157 0.965097 0.572892 0.575691 0.228383 0.612861 0.217472 0.377317 0.44665 0.376809 0.791775 1 0.0787564 0.818068 0.343494 0.581288 0.261089 0.492209 0.112078 0.351665 1 0.832722 0.723621 0.212771 0.594834 0.679733 0.2123 0.000785825 0.115338 0.102515 0.263568 0.650406 0.497431 0.344479 0.389209 0.189673 0.822929 0.363983 0.137375 0.293392 0.378843 0.9 
0.997929 0.496693 0.717617 0.135069 0.557513 0.856187 0.0252131 0.468182 0.00617703 0.809149 0.857504 0.424283 0.497174 0.688132 0.983401 0.446151 0.390369 0.282205 0.997264 0.559934 0.754037 0.760775 0.817136 0.0681688 0.36596 0.178769 0.544579 0.295894 0.525929 0.459486 0.576542 0.910384 0.92642 0.412652 0.0915696 0.854384 0.685013 0.75288 0.835396 0.20071 0.681055 0.321941 0.157416 0.197825 0.62655 0.707828 0.867114 0.622834 0.633521 0.167178 0.828521 0.552057 1 0.855799 0.880621 0.17329 0.513224 0.425874 0.353876 0.327554 0.318019 0.69681 0.819689 0.565558 0.355422 0.16928 0.341996 0.606944 0.248022 0.130426 0.958531 0.291001 0.0486333 0.036299 0.904443 0.196587 0.898525 0.8509 0.255811 0.310076 0.999985 0.763976 0.845796 0.260607 0.927903 0.993562 0.00100495 0.0567352 0.795425 0.366436 0.334008 0.942172 0.0589966 0.52657 0.547968 0.211603 0.522112 0.725194 0.868658 0.452962 0.0140329 0.289569 0.483715 0.300741 0.129449 0.911945 0.781095 0.243302 0.0554126 0.55792 0.348041 0.619685 0.589132 0.279167 0.327728 0.460946 0.204099 0.00324333 0.94034 0.290211 0.0719315 0.810099 0.55129 0.0955225 0.637753 0.088545 0.181068 0.17526 0.882788 0.378967 0.25471 0 1 0.0142155 0.87468 0.716345 0.613803 0.052793 0.393878 0.256396 0.556737 0.901562 0.0659651 0.736546 0.693194 0.437103 0.00135475 0.95437 1 0.810679 0.438297 0.659341 0.554771 0 0.0236551 0.606216 0.606082 0.384748 0.850596 1 0.961649 0.49988 0.752558 0.370483 0.351106 0.390959 0.514295 0.15392 0.187014 0.406984 0.877176 0 0.264721 0.119217 0.21901 0.840914 0.967675 0 0.697361 0.999981 0.471361 0.330441 0.0660234 0.753584 0.321608 0.799346 0.138463 0.752332 0.343164 0.329967 0.0580598 0.368677 0.351732 0.708639 0.480841 0.331756 9.21947e-05 0.632158 0.161949 0.286767 0.64119 0.52668 0.00570584 0.699617 0.198818 0.486503 0 0.79866 0.402876 0.904256 0.690621 0.918613 0.303524 0.382163 1 0.779379 0.71316 0.520307 0.396573 0.283983 0.244314 0.317934 0.887678 0.728404 0.0875426 0.101743 0.571226 0.227352 0.519278 
0.668371 0.361125 0.150365 0.884387 0.741729 0.994572 0.499268 0.919947 0.455113 0.829238 0.669899 0.189064 0.356377 0.515506 0 0.662821 0.390095 0.48587 0.852222 0.518766 0.0903284 0.209231 0.581605 0.595671 0.129103 0.221816 0.902415 1 0.814691 0.450186 0.985891 1 0.41005 0.850076 0.943889 0.00050463 0.0881142 0.306942 0.89976 0.230123 0.973114 0.855026 0.252543 0.33392 0.764547 0.657246 0.381964 0.315811 0.488075 0.342125 0.909987 0.0430627 0.577246 0.648335 0.942957 0.794445 0.123931 0.879116 0.746141 0.728185 0.328102 0.825088 0.860465 0.301023 0.893543 0.193648 0.98294 0.169885 0.913318 0.798083 0.334878 0.973884 0.988698 0.0692444 0.175231 0.917673 0.0630831 0.916876 0.294565 0.383848 0.965559 0.538346 0.189002 0.687613 0.603164 0.821466 0.7597 0.32636 0.262164 0.23926 0.800798 0.0924236 0.590273 0.267814 0.205642 0.137055 0.739593 0.17221 0.437136 0.35649 0.813473 0.504715 0.68756 0.0823036 0.789779 0.567749 0.384877 0.75905 0.743609 0.50249 0.530683 0.271762 0.694778 0.173044 0.1019 0.433742 0.754103 0.113287 0.478205 0.271493 0.533896 0.884321 0.841006 0.357188 0.480889 0.924827 0.462137 0.589163 0.479217 0.297023 0.450145 0.664423 0.688706 0.851625 0.89604 0.467691 0.179332 0.234071 0.544015 0.934162 0.169858 0.279064 0.776217 0.491535 0.859223 0.744014 0.589309 0.66775 0.627965 0.505915 0.750188 0.236778 0.385658 0.207589 0.696244 0.735408 0.46841 0.33434 0.946674 0.401298 0.841416 0.722184 0.600414 0.311465 1 0.488628 0.963431 0.0816938 0.406624 0.102771 0.584633 0.662556 0.75163 0.261318 0.885598 0.295738 0.240479 0.111414 0.136037 0.491836 0.504049 0.343716 0.288127 0.791579 0.722679 0.260006 0.845063 0.884776 0.450501 0.480032 0.91971 0.364406 0.00415735 0.943527 0.782366 0.237295 0.966143 0.274053 0.492573 0.293258 0.468141 0.852594 0.887498 0.664342 0.263285 0.167034 0.633972 0.580436 0.584849 0.866847 0.351401 0 0.733648 0.104445 0.952549 0.993525 0.806351 0.518082 0.593794 0.124876 0.109357 0.980651 0.451756 0.571735 0.721335 0.520351 0.281014 
0.286586 0.870545 0.673781 0.659855 0.354422 0.127244 0.388961 1 0.899988 1 0.434712 0.0688012 0.0332615 0.628087 0.801134 0.643301 0.187581 0.448115 0.826924 0.0852237 0.733218 0.97827 0.755565 0.898946 0.590586 0.37641 0.29898 0.169972 0.639532 0.770491 0.706654 0.0447136 0.783034 0.4776 0.324644 0.419607 0.496296 0.604742 0.344078 0.133485 0.23527 0.818584 0.0576868 0.499426 0.24693 0.769586 0.658753 0.20767 0.22071 0.229009 0.156375 0.453561 0.687548 0.463681 0.437846 0.991385 0.96675 0 0.0124175 0.871721 0.957325 0.0842747 0.999105 0.761506 0.245834 0.538997 0.804376 0.585104 0.984073 0.457059 0.852184 0.906748 0.557252 0.529182 0.982131 0.747803 0.0780117 0.739949 0.768112 0.403234 0.980561 0.30605 0.282538 0.302903 0.739963 0.565211 0.571207 0.444311 0.0867534 0.000299202 0.932568 0.820312 0.76527 0.207386 0.0738633 0.133687 0.120827 0.937849 0 0.182225 0.157169 0.0595396 0 0.209801 0.923049 0.773461 0.706634 0.811767 0.149237 0.367422 0.697389 0.639742 0.460225 0.316578 0.699116 0.246329 0.448206 0.789642 0.459835 0.315213 0.978849 0.677676 0.888881 0.697407 0.301118 0.159284 0.938315 0.84694 0.145694 0.287106 0.405233 0.632649 0.671656 0.365356 0.524168 0.648081 0.815725 0.525924 0.352405 0.783676 0.562742 0.298995 0.228758 0.433671 0.932441 0.285813 0.536983 0.341819 0.178747 0.753671 0.778367 0.965434 0.999463 0.634547 0.388988 0.000164376 0.903666 0.163647 0.872745 0.173096 0.715069 0.884519 0.558607 0.262879 0.358881 0.367799 0.488378 0.4416 0 0.534978 0.320171 0.000869706 0.201981 0 0.626504 0.498277 0.990521 0.989717 0.0967879 0.0503995 0.760772 0.988813 0.604841 0.519585 0.392237 0.499255 0.311206 0.974777 0.914508 0.436016 0.434041 0.57834 0.240226 0.185156 0.696067 0.999999 0.534379 0.476189 0.36316 0.436911 0.53775 0.113561 0.874417 0.069888 0.220511 0.308719 1 0.280663 0.858537 0.483475 0.0614508 0.0210153 0.913511 0.425323 0.155969 0.43267 0.298395 0.46473 0.377965 0.849446 0.997152 0.296479 0.671692 0.988784 0.746169 0.887678 0.917695 1 
0.16389 0.194406 0.915083 0.18457 0.539494 0.494383 0.461546 0.794008 0.605727 0.828121 0.929805 0.309132 0.846629 0 0.856392 0.864304 0.421887 0.804788 0.552775 0.0605609 0.263594 0.427633 0.422554 0.879277 0.572287 0.456457 0.883546 0 0.0550654 0.209636 0.303897 0.844215 0.803725 0.426292 0.99991 0.720171 0.263736 0.500528 0.296198 0.299394 0.517168 0.166753 1 0.0868448 0.311366 0.841068 0.659658 0.838373 0.217374 0.649033 0.255206 0.973071 0.181689 0.535911 0.886064 0.139752 0.570747 0.727779 0.230896 0.209234 0.0831579 0.84025 0.903223 0.518103 0.401782 0.745271 0.0240175 0.0123743 0.235707 0.908925 0.402152 0.753507 0.749942 0.772602 0.116998 0.323581 0.402051 0.173894 0.0828066 0.371826 0.798816 0.3975 0.663948 0.69928 0.941099 0.429632 0.209592 0.190471 0.0703703 0.186417 0.254919 0.563426 0.688658 0.80412 0.800458 0.492129 0.490768 0.947125 0.299798 0.290648 0.0413179 0.555516 0.359887 0.294126 0.341368 4.79712e-07 0.729521 0.57016 0.483796 0.655533 0.456948 0.869373 0.615044 0.56832 0.890654 0.877886 0.711189 0.772157 0.576216 0.669833 0.108378 0 0.879802 0.808036 0.779811 0.857894 0.360595 0.750336 0.562499 0.310114 0.357137 0.714296 0.960462 0.10597 0.925429 0.205289 0.675758 0 0.42741 0.710903 0.887152 0.209157 0.982517 0.165768 0.533447 0.781029 0.742517 0.038848 0.578684 0.0752179 0.344263 0.830756 0.76398 0.460006 0.206259 0 0.380518 0.60201 0.345582 0.419459 0.0125058 0 0.632445 0.0437613 0.436446 0.0873638 0.999981 0.387311 0.693602 0.195614 0.884028 0.256668 0.725087 0.546654 0.799529 0.955244 0.926682 0.326149 0.327647 0.980557 0.933234 0.0919144 0.918861 0.569519 0.734868 0.963006 0.551375 0.466276 0.660503 0.463348 0.938546 0.82457 0.439982 0.226333 0.943377 0.0330489 0.545294 0.514946 0.608751 0.117012 0.633513 0.36793 0.266089 0.0610145 0.459803 0.495496 0.23882 0.916291 0.296778 0.0677233 0.607305 0.847429 0.279544 0.621763 0.941133 0.682653 0.233076 0.775044 0.00353035 0.660688 0.791307 0.638464 0.859686 0.70421 0.422773 0.803128 0.37649 
0.772712 0.0705127 0.741816 0.603385 0.544958 0.498104 0.252443 0.501897 0.973061 0.404557 0.365292 0.86352 0.663635 0.292174 0.764924 0.839383 0.319707 0.917533 0.719669 0.223674 0.437835 0.873284 0.596038

cpp

// GridResourceWar_DemoOnly.cpp

// Demo-only mode: loads pre-trained weights and runs the grid resource competition game (ASCII display)

// Build: g++ -std=c++17 -fopenmp -O3 -o GridResourceWarDemo GridResourceWar_DemoOnly.cpp

// Run: ./GridResourceWarDemo

#include <iostream>

#include <vector>

#include <cmath>

#include <random>

#include <algorithm>

#include <chrono>

#include <thread>

#include <fstream>

#include <queue>

#include <omp.h>

#include <numeric>

#include <iomanip>

#include <cstring>

#include <climits>

// ======================== Game world parameters ========================

constexpr int GRID_SIZE = 8;

constexpr int NUM_RESOURCES = 1;

constexpr int RESOURCE_ENERGY = 20;

constexpr int RESOURCE_DECAY_RADIUS = 5;

constexpr double ENERGY_DECAY_FACTOR = 0.6;

constexpr double EXPLORE_ENERGY_THRESHOLD = 0.1;

constexpr int DEMO_STEPS = 1000;

constexpr int ATTACK_COOLDOWN_MAX = 3;

constexpr double ATTACK_DAMAGE = 10.0;

constexpr double MOVE_REWARD = 0.00;

constexpr double COLLECT_REWARD = 50.0;

constexpr double ATTACK_REWARD = 2.0;

constexpr double DEATH_PENALTY = -5.0;

constexpr double INIT_ENERGY = 100.0;

// Exploration network parameters

constexpr int EXPLORE_IN = 2;

constexpr int EXPLORE_HIDDEN = 4;

constexpr int EXPLORE_OUT = 1;

constexpr double EXPLORE_ACTIVE_THRESHOLD = 5;

// Direction arrays: indices 0-3 are the four orthogonal neighbours, 4-7 are the diagonals

const int DX[8] = {0, 0, -1, 1, -1, 1, -1, 1};

const int DY[8] = {1, -1, 0, 0, 1, 1, -1, -1};

// ======================== SNN parameters ========================

const double DT = 0.1;

const double TAU_M = 10.0;

const double V_REST = 0.0;

const double V_RESET = 0.0;

const double V_TH = 0.5;

const double V_MAX = 1.0;

const double REFRACTORY = 0.5;

const double A_PLUS = 0.01;

const double A_MINUS = 0.012;

const double TAU_STDP = 20.0;

const double R_STDP_BETA = 0.1;

const int NUM_SYNAPSES_PER_PAIR = 2; // must match the training version

const double PULSE_AMP = 0.8;

// ======================== Network structure ========================

const int BRAIN_HIDDEN = 10;

const int BRAIN_IN = 25 + 2 + BRAIN_HIDDEN; // 25 sensors + 2 communication slots + previous hidden state = 37

const int BRAIN_OUT = 4;

const int COMM_IN = 4;

const int COMM_HIDDEN = 6;

const int COMM_OUT = 2;

const int NUM_ROBOTS_PER_TEAM = 2;

// Thread-local random number generator

inline std::mt19937& get_local_gen() {

thread_local std::mt19937 gen(std::random_device{}() +

std::hash<std::thread::id>{}(std::this_thread::get_id()));

return gen;

}

inline double rand_uniform() {

std::uniform_real_distribution<> dis(0.0, 1.0);

return dis(get_local_gen());

}

inline int rand_int(int low, int high) {

std::uniform_int_distribution<> dis(low, high);

return dis(get_local_gen());

}

// ======================== Neurons and MSF synapses ========================

struct Neuron {

double v, last_spike;

bool refractory;

Neuron() : v(V_REST), last_spike(-100.0), refractory(false) {}

};

struct MSFSynapse {

int pre, post;

double weight;

int delay;

double threshold;

double trace_pre, trace_post, eligibility;

std::queue<double> spike_buffer;

MSFSynapse(int p, int q, double w, int d, double th)

: pre(p), post(q), weight(w), delay(d), threshold(th),

trace_pre(0), trace_post(0), eligibility(0) {}

double update_buffer() {

if (spike_buffer.empty()) return 0.0;

double cur = spike_buffer.front();

spike_buffer.pop();

return cur;

}

void add_spike(double amp) {

while ((int)spike_buffer.size() < delay - 1) spike_buffer.push(0.0);

spike_buffer.push(amp);

}

};

class SNN {

public:

std::vector<Neuron> neurons;

std::vector<MSFSynapse> synapses;

std::vector<std::vector<int>> post_synapses;

double current_time;

int n_input, n_hidden, n_output;

std::vector<double> hidden_spikes;

SNN(int input_dim, int hidden_dim, int output_dim)

: n_input(input_dim), n_hidden(hidden_dim), n_output(output_dim) {

int total = input_dim + hidden_dim + output_dim;

neurons.resize(total);

post_synapses.resize(total);

current_time = 0.0;

hidden_spikes.assign(hidden_dim, 0.0);

init_connections();

}

void add_msf_connection(int pre_start, int pre_end, int post_start, int post_end, double) {

for (int pre = pre_start; pre < pre_end; ++pre) {

for (int post = post_start; post < post_end; ++post) {

if (pre == post) continue;

for (int k = 0; k < NUM_SYNAPSES_PER_PAIR; ++k) {

double w = std::normal_distribution<>(0.6, 0.2)(get_local_gen());

w = std::max(0.0, std::min(1.0, w));

int d = rand_int(1, 3);

double th = 0.3 + rand_uniform() * 0.4;

synapses.emplace_back(pre, post, w, d, th);

post_synapses[pre].push_back(static_cast<int>(synapses.size() - 1));

}

}

}

}

void init_connections() {

add_msf_connection(0, n_input, n_input, n_input + n_hidden, 0.1);

add_msf_connection(n_input, n_input + n_hidden, n_input, n_input + n_hidden, 0.05);

add_msf_connection(n_input, n_input + n_hidden, n_input + n_hidden, n_input + n_hidden + n_output, 0.1);

add_msf_connection(0, n_input, n_input + n_hidden, n_input + n_hidden + n_output, 0.05);

}

void reset() {

for (auto& n : neurons) {

n.v = V_REST + rand_uniform() * 0.8;

n.last_spike = -100.0;

n.refractory = false;

}

for (auto& syn : synapses) {

syn.trace_pre = syn.trace_post = syn.eligibility = 0;

while (!syn.spike_buffer.empty()) syn.spike_buffer.pop();

}

current_time = 0.0;

std::fill(hidden_spikes.begin(), hidden_spikes.end(), 0.0);

}

std::vector<double> update(const std::vector<double>& input) {

std::vector<double> ext_current(neurons.size(), 0.0);

for (int i = 0; i < n_input && i < (int)input.size(); ++i)

ext_current[i] = input[i];

std::vector<double> injected_current(neurons.size(), 0.0);

for (auto& syn : synapses) {

double cur = syn.update_buffer();

if (cur != 0.0) injected_current[syn.post] += cur;

}

std::vector<int> fired;

for (int i = 0; i < (int)neurons.size(); ++i) {

Neuron& n = neurons[i];

if (n.refractory && (current_time - n.last_spike) < REFRACTORY) continue;

n.refractory = false;

if (n.v >= V_TH) {

n.v = V_RESET;

n.last_spike = current_time;

n.refractory = true;

fired.push_back(i);

}

}

for (int pre : fired) {

for (int syn_idx : post_synapses[pre]) {

MSFSynapse& syn = synapses[syn_idx];

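// Transmission gate: compares the constant V_TH with the per-synapse threshold,

// so a synapse only relays spikes when its threshold is at most V_TH.

if (V_TH >= syn.threshold) {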

syn.add_spike(syn.weight * PULSE_AMP);

syn.trace_pre = 1.0;

syn.eligibility += A_PLUS * syn.trace_pre - A_MINUS * syn.trace_post;

}

}

}

std::vector<double> new_hidden_spikes(n_hidden, 0.0);

for (int i = 0; i < (int)neurons.size(); ++i) {

Neuron& n = neurons[i];

if (n.refractory && (current_time - n.last_spike) < REFRACTORY) continue;

if (n.refractory && (current_time - n.last_spike) >= REFRACTORY)

n.refractory = false;

// Leaky integrate-and-fire update (forward Euler): dv = (-(v - V_REST) + I) / TAU_M * DT

double I = ext_current[i] + injected_current[i];

double dv = (-(n.v - V_REST) + I) / TAU_M * DT;

n.v += dv;

if (n.v > V_MAX) n.v = V_MAX;

if (n.v < V_REST) n.v = V_REST;

if (i >= n_input && i < n_input + n_hidden) {

new_hidden_spikes[i - n_input] = (n.v >= V_TH) ? 1.0 : 0.0;

}

}

hidden_spikes = new_hidden_spikes;

for (auto& syn : synapses) {

syn.trace_pre *= std::exp(-DT / TAU_STDP);

syn.trace_post *= std::exp(-DT / TAU_STDP);

syn.eligibility *= std::exp(-DT / TAU_STDP);

}

// Set the postsynaptic trace of every synapse that targets a neuron which just fired.

// Iterating over the synapses directly visits the same set as walking post_synapses per neuron.

for (int post : fired) {

for (auto& syn : synapses) {

if (syn.post == post) syn.trace_post = 1.0;

}

}

current_time += DT;

std::vector<double> out(n_output);

for (int i = 0; i < n_output; ++i)

out[i] = std::max(0.0, neurons[n_input + n_hidden + i].v / V_MAX);

return out;

}

std::vector<double> get_hidden_spikes() const { return hidden_spikes; }

void apply_rstdp(double /*reward*/) {} // no-op in the demo build: no learning is applied here

std::vector<double> get_weights() const {

std::vector<double> w;

for (auto& s : synapses) w.push_back(s.weight);

return w;

}

void set_weights(const std::vector<double>& w) {

for (size_t i = 0; i < synapses.size() && i < w.size(); ++i)

synapses[i].weight = w[i];

}

int get_num_weights() const { return static_cast<int>(synapses.size()); }

};

// ======================== Robot structure ========================

class Robot {

public:

SNN brain_net, comm_net;

SNN explore_net;

int x, y;

int team;

int id;

double energy;

int attack_cooldown;

double score;

bool alive;

int steps_without_energy;

double last_energy;

int last_x, last_y;

int explore_weight_update_counter;

std::vector<double> last_comm_output;

double last_strategy;

mutable double last_max_energy;

mutable double last_sensor0;

mutable std::vector<double> last_sensors;

std::vector<double> prev_brain_hidden;

Robot(int robot_id, int team_id)

: brain_net(BRAIN_IN, BRAIN_HIDDEN, BRAIN_OUT),

comm_net(COMM_IN, COMM_HIDDEN, COMM_OUT),

explore_net(EXPLORE_IN, EXPLORE_HIDDEN, EXPLORE_OUT),

team(team_id), id(robot_id), // listed in declaration order to avoid -Wreorder warnings

steps_without_energy(0),

explore_weight_update_counter(0),

last_comm_output(2, 0.5),

last_strategy(0),

last_max_energy(0.0),

last_sensor0(0.0),

last_sensors(25, 0.0),

prev_brain_hidden(BRAIN_HIDDEN, 0.0) {

reset();

}

void reset() {

energy = INIT_ENERGY;

attack_cooldown = 0;

score = 0.0;

alive = true;

last_x = -1; last_y = -1;

last_energy = 0.0;

steps_without_energy = 0;

last_comm_output = {0.5, 0.5};

last_max_energy = 0.0;

last_sensor0 = 0.0;

std::fill(last_sensors.begin(), last_sensors.end(), 0.0);

std::fill(prev_brain_hidden.begin(), prev_brain_hidden.end(), 0.0);

last_strategy = 0;

brain_net.reset();

comm_net.reset();

explore_net.reset();

}

std::vector<double> get_sensor_input(const std::vector<Robot>& teammates,

const std::vector<Robot>& enemies,

const std::vector<std::pair<int,int>>& resource_positions,

const std::vector<double>& resource_energy_map,

double teammate_comm) const {

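// Sensor layout (25 values):

// 0-7 resource energy in the 8 neighbouring cells (raw / 2)

// 8-15 enemy present in the 8 neighbouring cells (0 or 1)

// 16-17 own x, y position normalised to [0, 1]

// 18-19 offset to the nearest resource, clamped to [-1, 1]

// 20 own energy, 21 attack ready, 22 teammate signal, 23 unused (0)

// 24 saturating counter of steps without nearby resource energy

std::vector<double> sensors(25, 0.0);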

double max_energy = 0.0;

for (int dir = 0; dir < 8; ++dir) {

int nx = x + DX[dir];

int ny = y + DY[dir];

if (nx >= 0 && nx < GRID_SIZE && ny >= 0 && ny < GRID_SIZE) {

double raw = resource_energy_map[ny * GRID_SIZE + nx];

sensors[dir] = raw / 2.0;

if (sensors[dir] > max_energy) max_energy = sensors[dir];

} else {

sensors[dir] = 0.0;

}

}

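// Track how long no nearby resource energy has been sensed (feeds sensors[24]).

// Note: this const method mutates the robot through const_cast, so it must only be called on non-const Robot objects.

if (max_energy < EXPLORE_ENERGY_THRESHOLD) {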

const_cast<Robot*>(this)->steps_without_energy++;

} else {

const_cast<Robot*>(this)->steps_without_energy = 0;

}

for (int dir = 0; dir < 8; ++dir) {

int nx = x + DX[dir];

int ny = y + DY[dir];

sensors[8+dir] = 0.0;

for (const auto& enemy : enemies) {

if (enemy.alive && enemy.x == nx && enemy.y == ny) {

sensors[8+dir] = 1.0;

break;

}

}

}

sensors[16] = x / (double)(GRID_SIZE - 1);

sensors[17] = y / (double)(GRID_SIZE - 1);

double min_dist = 1e9;

int target_dx = 0, target_dy = 0;

for (const auto& res : resource_positions) {

int dx = res.first - x;

int dy = res.second - y;

double dist = std::abs(dx) + std::abs(dy);

if (dist < min_dist) {

min_dist = dist;

target_dx = dx;

target_dy = dy;

}

}

if (min_dist < 1e8) {

sensors[18] = target_dx / (double)GRID_SIZE * 2.0;

sensors[19] = target_dy / (double)GRID_SIZE * 2.0;

if (sensors[18] > 1.0) sensors[18] = 1.0;

if (sensors[18] < -1.0) sensors[18] = -1.0;

if (sensors[19] > 1.0) sensors[19] = 1.0;

if (sensors[19] < -1.0) sensors[19] = -1.0;

}

sensors[20] = std::min(2.0, (energy / INIT_ENERGY) * 2.0);

sensors[21] = (attack_cooldown > 0) ? 0.0 : 1.0;

sensors[22] = teammate_comm;

sensors[23] = 0.0;

sensors[24] = 1.0 - std::exp(-steps_without_energy / 20.0);

// Add small uniform noise to sensors 0..23, then clamp each group to its valid range.

// sensors[24] receives no noise and is clamped separately below.

for (int i = 0; i < 24; ++i) {

sensors[i] += (rand_uniform() - 0.5) * 0.02;

if ((i >= 8 && i < 16) || (i >= 21 && i <= 23)) {

sensors[i] = std::max(0.0, std::min(1.0, sensors[i]));

} else if (i == 18 || i == 19) {

sensors[i] = std::max(-1.0, std::min(1.0, sensors[i]));

} else {

sensors[i] = std::max(0.0, sensors[i]);

}

}

if (sensors[24] < 0.0) sensors[24] = 0.0;

if (sensors[24] > 1.0) sensors[24] = 1.0;

last_max_energy = max_energy;

last_sensor0 = sensors[0];

last_sensors = sensors;

return sensors;

}

void update_networks(const std::vector<double>& sensors,

double teammate_comm,

const std::vector<Robot>& enemies,

std::vector<double>& brain_out,

std::vector<double>& comm_out) {

std::vector<double> brain_input = sensors;

brain_input.push_back(teammate_comm);

brain_input.push_back(0.0);

brain_input.insert(brain_input.end(), prev_brain_hidden.begin(), prev_brain_hidden.end());

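// Run the brain SNN for 80 simulation steps on the same input; brain_out keeps the output of the last step.

for (int step = 0; step < 80; ++step)

brain_out = brain_net.update(brain_input);

// Winner-take-all: the chosen strategy is the index of the largest output.

int strategy = 0;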

double max_val = brain_out[0];

for (int i = 1; i < BRAIN_OUT; ++i) {

if (brain_out[i] > max_val) {

max_val = brain_out[i];

strategy = i;

}

}

last_strategy = strategy;

prev_brain_hidden = brain_net.get_hidden_spikes();