🔥 Personal homepage: Milestone-里程碑

❄️ Personal columns: <<LeetCode Hot 100>> <<C++>> <<Linux>>

🌟 What the heart truly yearns for, the feet will surely reach.

1. Getting to Know epoll

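epoll does not suffer from poll's problem of slowing down as the number of users (connections) grows. poll copies the entire fd array into the kernel and scans all of it on every call, so its cost grows with the number of watched fds. epoll keeps the watched set inside the kernel and hands back only the fds that are ready.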

According to the man page, it is an improved version of poll designed to handle large numbers of file descriptors.

It was introduced in Linux kernel 2.5.44 ("epoll(4) is a new API introduced in Linux kernel 2.5.44").

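It has nearly all of the advantages discussed earlier. It is widely regarded as the best-performing I/O multiplexing readiness-notification mechanism on Linux 2.6.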

2. The epoll Interfaces

2.1 epoll_create

```text
EPOLL_CREATE(2)          Linux Programmer's Manual          EPOLL_CREATE(2)

NAME
       epoll_create, epoll_create1 - open an epoll file descriptor

SYNOPSIS
       #include <sys/epoll.h>

       int epoll_create(int size);
```

epoll_create creates an epoll handle, that is, an epoll model (instance) inside the kernel.

• Since Linux 2.6.8 the size argument is ignored, but it must still be greater than zero.

• The call returns a file descriptor, which you must release with close() when you are done with it.
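The sketch below applies both rules above and assumes C++14 on Linux. A hypothetical `EpollFd` class owns the descriptor returned by `epoll_create` and calls `close()` in its destructor, so the cleanup cannot be forgotten. The checks also show that `size`, although ignored, must be positive: `epoll_create(0)` fails with `EINVAL`.

```cpp
#include <sys/epoll.h>  // epoll_create
#include <unistd.h>     // close
#include <cerrno>       // errno, EINVAL
#include <cassert>

// Hypothetical RAII wrapper (not part of any library): owns one epoll fd
// and closes it automatically when the object goes out of scope.
class EpollFd {
public:
    // The size hint is ignored since Linux 2.6.8 but must still be > 0.
    explicit EpollFd(int sizeHint = 256) : fd_(epoll_create(sizeHint)) {}
    ~EpollFd() {
        if (fd_ >= 0) close(fd_);  // fd 0 would be valid too, hence >= 0
    }
    EpollFd(const EpollFd&) = delete;             // exactly one owner per fd
    EpollFd& operator=(const EpollFd&) = delete;

    bool Valid() const { return fd_ >= 0; }
    int Get() const { return fd_; }

private:
    int fd_;  // -1 if epoll_create failed (errno says why)
};
```

A default-constructed `EpollFd` should be valid. `EpollFd bad(0)` should be invalid, with `errno` set to `EINVAL`.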

2.2 epoll_ctl

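```text
EPOLL_CTL(2)             Linux Programmer's Manual             EPOLL_CTL(2)

NAME
       epoll_ctl - control interface for an epoll file descriptor

SYNOPSIS
       #include <sys/epoll.h>

       int epoll_ctl(int epfd, int op, int fd, struct epoll_event *event);
```

epfd: the return value of epoll_create.

op: the operation to perform. It is one of three macros:
- EPOLL_CTL_ADD registers fd.
- EPOLL_CTL_MOD changes the events watched on an already registered fd.
- EPOLL_CTL_DEL removes fd.

fd: the socket (file descriptor) that the user wants the kernel to monitor.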

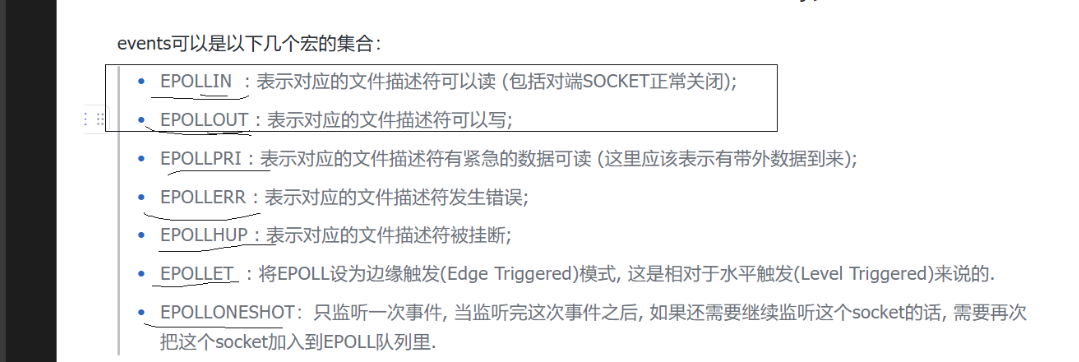

event: describes what to watch for on fd. In the definition below, the data member holds data supplied by the user, most commonly the fd itself. The kernel saves it and hands it back unchanged. Because data is a union, set only one of its members, for example fd or ptr, never both.

```text
The event argument describes the object linked to the file descriptor fd.
The struct epoll_event is defined as:

    typedef union epoll_data {
        void        *ptr;
        int          fd;
        uint32_t     u32;
        uint64_t     u64;
    } epoll_data_t;

    struct epoll_event {
        uint32_t     events;      /* Epoll events */
        epoll_data_t data;        /* User data variable */
    };

The data member of the epoll_event structure specifies data that the kernel
should save and then return (via epoll_wait(2)) when this file descriptor
becomes ready.

The events member of the epoll_event structure is a bit mask composed by
ORing together zero or more of the following available event types:

EPOLLIN
       The associated file is available for read(2) operations.

EPOLLOUT
       The associated file is available for write(2) operations.

EPOLLRDHUP (since Linux 2.6.17)
       Stream socket peer closed connection, or shut down writing half of
       connection. (This flag is especially useful for writing simple code
       to detect peer shutdown when using edge-triggered monitoring.)

EPOLLPRI
       There is an exceptional condition on the file descriptor. See the
       discussion of POLLPRI in poll(2).

EPOLLERR
       Error condition happened on the associated file descriptor. This
       event is also reported for the write end of a pipe when the read end
       has been closed.
```
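This is a small sketch of the three op values. It uses a pipe in place of a socket, since epoll treats both simply as file descriptors. `AddFd`, `ModFd` and `DelFd` are hypothetical helper names. Each helper stores the fd in `data.fd`, the common choice described above.

```cpp
#include <sys/epoll.h>  // epoll_ctl, struct epoll_event, EPOLL_CTL_*
#include <unistd.h>     // pipe, close
#include <cerrno>       // errno, EEXIST, ENOENT
#include <cstdint>      // uint32_t
#include <cassert>

// Registers fd for the given event mask. data.fd is what epoll_wait will
// hand back later; the kernel stores it but never modifies it.
int AddFd(int epfd, int fd, uint32_t events) {
    struct epoll_event ev {};
    ev.events = events;
    ev.data.fd = fd;
    return epoll_ctl(epfd, EPOLL_CTL_ADD, fd, &ev);  // 0 on success, -1 + errno
}

// Changes the event mask of an fd that is already registered.
int ModFd(int epfd, int fd, uint32_t events) {
    struct epoll_event ev {};
    ev.events = events;
    ev.data.fd = fd;
    return epoll_ctl(epfd, EPOLL_CTL_MOD, fd, &ev);
}

// Removes fd. Since Linux 2.6.9 the event pointer may be NULL for DEL.
int DelFd(int epfd, int fd) {
    return epoll_ctl(epfd, EPOLL_CTL_DEL, fd, nullptr);
}
```

Two error cases are worth noting. Adding the same fd twice fails with `EEXIST`. Deleting an fd that is not registered fails with `ENOENT`. Both cases come up again in the red-black tree discussion in section 4.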

2.3 epoll_wait

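```text
NAME
       epoll_wait, epoll_pwait - wait for an I/O event on an epoll file descriptor

SYNOPSIS
       #include <sys/epoll.h>

       int epoll_wait(int epfd, struct epoll_event *events,
                      int maxevents, int timeout);
```

events: an output array. Through it, the kernel tells the user which events are ready on the fds it was asked to watch. Each entry's data field is exactly what was passed to epoll_ctl.

maxevents: the length of the events array. It must be greater than zero.

timeout: the wait time in milliseconds, with the same meaning as in poll. -1 blocks indefinitely, and 0 returns immediately.

Return value: the number of ready entries written to events, 0 if the timeout expired, or -1 on error (with errno set).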
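To make events, maxevents and timeout concrete, here is a sketch that watches the read end of a pipe, again standing in for a socket. `ProbeLevelTriggered` and `ProbeResult` are hypothetical names.

The sketch also demonstrates level-triggered (LT) mode, which is epoll's default and the mode the server in section 3 relies on. As long as data remains unread, every `epoll_wait` call reports the fd again.

```cpp
#include <sys/epoll.h>  // epoll_create, epoll_ctl, epoll_wait
#include <unistd.h>     // pipe, read, write, close
#include <array>
#include <cassert>

// Results of probing epoll_wait on a pipe watched for EPOLLIN.
struct ProbeResult {
    std::array<int, 4> counts{{-1, -1, -1, -1}};  // epoll_wait return values; -1 = step not reached
    bool dataFdMatches = false;                   // kernel handed back our data.fd unchanged
};

ProbeResult ProbeLevelTriggered() {
    ProbeResult r;
    int p[2];
    if (pipe(p) != 0) return r;
    int epfd = epoll_create(1);
    if (epfd >= 0) {
        struct epoll_event ev {};
        ev.events = EPOLLIN;  // no EPOLLET flag, so level-triggered (the default)
        ev.data.fd = p[0];
        if (epoll_ctl(epfd, EPOLL_CTL_ADD, p[0], &ev) == 0) {
            struct epoll_event ready[8];                  // maxevents = 8, the array length
            r.counts[0] = epoll_wait(epfd, ready, 8, 0);  // nothing written yet; timeout 0 returns at once
            char c = 'x';
            if (write(p[1], &c, 1) == 1) {
                r.counts[1] = epoll_wait(epfd, ready, 8, 1000);  // one byte pending
                r.dataFdMatches = r.counts[1] == 1 && ready[0].data.fd == p[0] &&
                                  (ready[0].events & EPOLLIN);
                r.counts[2] = epoll_wait(epfd, ready, 8, 0);     // byte still unread: LT reports it again
                if (read(p[0], &c, 1) == 1)
                    r.counts[3] = epoll_wait(epfd, ready, 8, 0); // drained: nothing ready
            }
        }
        close(epfd);
    }
    close(p[0]);
    close(p[1]);
    return r;
}
```

On Linux the four counts come out as 0, 1, 1, 0. The third value is the level-triggered behavior: the byte was not read, so epoll keeps reporting the fd.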

3. Using epoll

```cpp
#pragma once

#include <iostream>
#include <memory>
#include <cerrno>        // errno, EINTR
#include <cstdint>       // uint32_t
#include <cstdlib>       // exit
#include <unistd.h>      // close
#include <sys/socket.h>  // recv
#include <sys/epoll.h>
#include "Socket.hpp"    // author's own module: TcpSocket, InetAddr
#include "Log.hpp"       // author's own module: LOG, LogLevel

using namespace SocketModule;
using namespace LogModule;

class EpollServer
{
    const static int size = 4096;      // capacity of the ready-event array
    const static int defaultfd = -1;

public:
    EpollServer(int port) : _listensock(std::make_unique<TcpSocket>()), _isrunning(false), _epfd(defaultfd)
    {
        // 1. Create the listening socket
        _listensock->BuildTcpSocketMethod(port);
        // 2. Create the epoll model (size is ignored but must be > 0)
        _epfd = epoll_create(256);
        if (_epfd == -1)
        {
            LOG(LogLevel::ERROR) << "epoll_create fail";
            exit(EPOLL_CREATE_ERROR); // exit code defined in the author's headers
        }
        LOG(LogLevel::INFO) << "epoll_create success , _epfd :" << _epfd;
        // 3. Register the listening socket with the kernel.
        // Filling in ev only touches a user-space struct: no node is added to the
        // kernel's red-black tree until epoll_ctl is called.
        struct epoll_event ev;
        ev.events = EPOLLIN;
        ev.data.fd = _listensock->Fd(); // user data, usually the fd; the kernel does not modify it
        int n = epoll_ctl(_epfd, EPOLL_CTL_ADD, _listensock->Fd(), &ev);
        if (n < 0)
        {
            LOG(LogLevel::FATAL) << "add listensockfd failed";
            exit(EPOLL_CTL_ERR);
        }
    }

    void Start()
    {
        _isrunning = true;
        while (_isrunning)
        {
            int timeout = -1; // -1 blocks until an event arrives; 1000 would mean 1 second
            int n = epoll_wait(_epfd, _revs, size, timeout);
            switch (n)
            {
            case -1:
                if (errno == EINTR) // interrupted by a signal: simply wait again
                    continue;
                LOG(LogLevel::ERROR) << "epoll error";
                break;
            case 0:
                LOG(LogLevel::INFO) << "time out...";
                break;
            default:
                // Ready events are not only new connections: ordinary sockets can be readable too
                LOG(LogLevel::DEBUG) << "events ready, n : " << n;
                Dispatcher(n); // handle the ready events
                break;
            }
        }
        _isrunning = false;
    }

    // Event dispatcher. LT (level-triggered) is epoll's default mode.
    void Dispatcher(int rnum)
    {
        LOG(LogLevel::DEBUG) << "event ready ...";
        // Readable && listening socket -> a new connection has arrived
        // Readable && ordinary socket  -> data can be read
        for (int i = 0; i < rnum; ++i)
        {
            int sockfd = _revs[i].data.fd;
            uint32_t revent = _revs[i].events; // events is 32 bits; uint16_t would truncate flags
            // EPOLLERR/EPOLLHUP are always reported; route them to the read path so that
            // recv() sees the error or EOF and the fd gets cleaned up (otherwise LT spins)
            if (revent & (EPOLLIN | EPOLLERR | EPOLLHUP))
            {
                if (sockfd == _listensock->Fd())
                    Accepter();
                else
                    Recver(sockfd);
            }
        }
    }

    // Connection manager
    void Accepter()
    {
        InetAddr client;
        // Will accept block? Normally no: epoll reported the listening socket readable,
        // so a connection is already waiting.
        int sockfd = _listensock->Accept(&client);
        if (sockfd >= 0)
        {
            // Got a new connection. Can we read/recv() on it right away? No: we do not
            // know whether it has data yet. The one who knows is epoll, so hand the new
            // fd over to epoll by adding it to the kernel with epoll_ctl.
            LOG(LogLevel::INFO) << "get a new link, sockfd: "
                                << sockfd << ", client is: " << client.StringAddr();
            struct epoll_event ev;
            ev.events = EPOLLIN;
            ev.data.fd = sockfd;
            int n = epoll_ctl(_epfd, EPOLL_CTL_ADD, sockfd, &ev);
            if (n < 0)
            {
                LOG(LogLevel::WARNING) << "add sockfd failed: " << sockfd;
                close(sockfd); // epoll will never report this fd, so do not leak it
            }
            else
            {
                LOG(LogLevel::INFO) << "epoll_ctl add sockfd success: " << sockfd;
            }
        }
    }

    // I/O handler
    void Recver(int sockfd)
    {
        char buffer[1024];
        // Will recv block here? No: epoll reported the fd readable, so one recv returns at once.
        // Known limitation: one recv may return only part of a message; a real server needs
        // a per-connection buffer and protocol framing.
        ssize_t n = recv(sockfd, buffer, sizeof(buffer) - 1, 0);
        if (n > 0)
        {
            buffer[n] = 0;
            std::cout << "client say@ " << buffer << std::endl;
        }
        else
        {
            if (n == 0)
                LOG(LogLevel::INFO) << "client quit...";
            else
                LOG(LogLevel::ERROR) << "recv error";
            // Remove from epoll first, then close: epoll_ctl needs a valid, open fd.
            // Closing first would make EPOLL_CTL_DEL fail with EBADF.
            int m = epoll_ctl(_epfd, EPOLL_CTL_DEL, sockfd, nullptr);
            if (m == 0) // epoll_ctl returns 0 on success
                LOG(LogLevel::INFO) << "epoll_ctl remove sockfd success: " << sockfd;
            close(sockfd);
        }
    }

    void Stop()
    {
        _isrunning = false;
    }

    ~EpollServer()
    {
        _listensock->Close();
        if (_epfd >= 0) // 0 is a valid fd as well
            close(_epfd);
    }

private:
    std::unique_ptr<Socket> _listensock;
    bool _isrunning;
    int _epfd;
    struct epoll_event _revs[size]; // filled by epoll_wait with the ready events
};
```

4. How epoll Works

• When a process calls epoll_create, the Linux kernel creates an eventpoll struct. Two of its members are closely tied to how epoll is used:

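```c
struct eventpoll {
    ....
    /* Root of the red-black tree that stores every event added to epoll for monitoring */
    struct rb_root rbr;
    /* Doubly linked list holding the ready events that epoll_wait will return to the user */
    struct list_head rdlist;
    ....
};
```

Each epoll object has its own independent eventpoll struct, which stores the events added to that epoll object through epoll_ctl.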

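• All of these events hang off the red-black tree, so an attempt to add the same fd twice is detected efficiently (epoll_ctl then fails with EEXIST). Insertion into a red-black tree takes O(log n) time, where n is the number of nodes in the tree.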

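• Every event added to epoll also sets up a callback relationship with the device (e.g. NIC) driver. Concretely, a wait-queue entry is registered on the monitored file, and when the corresponding event occurs, this callback is invoked.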

• In the kernel this callback is called ep_poll_callback. It adds the ready event to the rdlist doubly linked list.

• epoll creates one epitem struct for each event, that is, for each monitored fd.
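The structure described above can be imitated in user space. The sketch below is a toy model, not kernel code, and rests on several assumptions:
- `ToyEventPoll`, `ToyEpitem`, `Callback` and `Wait` are hypothetical names.
- `std::map` stands in for the kernel's red-black tree; common standard library implementations use one.
- `Callback` is called by hand where the kernel would run `ep_poll_callback`.
- It skips locking and skips the re-queueing that real level-triggered mode performs.

```cpp
#include <sys/epoll.h>  // only for the EPOLLIN / EPOLLOUT bit values
#include <cstdint>
#include <list>
#include <map>
#include <vector>
#include <cassert>

// Toy stand-in for the kernel's epitem: one per watched fd.
struct ToyEpitem {
    int fd;
    uint32_t events;           // mask the user asked to watch
    bool onReadyList = false;  // avoids queuing the same item twice
};

// Toy stand-in for struct eventpoll: a tree of all items plus a ready list.
class ToyEventPoll {
public:
    // Like EPOLL_CTL_ADD: a duplicate fd is rejected (the real call fails with EEXIST).
    bool Add(int fd, uint32_t events) {
        return rbr_.emplace(fd, ToyEpitem{fd, events}).second;
    }
    // Like EPOLL_CTL_DEL: drop the item from the tree and, if queued, from the ready list.
    bool Del(int fd) {
        auto it = rbr_.find(fd);
        if (it == rbr_.end()) return false;
        if (it->second.onReadyList) rdlist_.remove(fd);
        rbr_.erase(it);
        return true;
    }
    // Plays the role of ep_poll_callback: `happened` occurred on fd; if it matches
    // the watched mask, append the item to the ready list once.
    void Callback(int fd, uint32_t happened) {
        auto it = rbr_.find(fd);
        if (it == rbr_.end()) return;
        ToyEpitem& item = it->second;
        if ((item.events & happened) && !item.onReadyList) {
            item.onReadyList = true;
            rdlist_.push_back(fd);
        }
    }
    // Like epoll_wait: take up to maxevents fds off the ready list. The work done
    // depends on how many fds are ready, not on how many are registered.
    std::vector<int> Wait(int maxevents) {
        assert(maxevents > 0);  // epoll_wait also rejects maxevents <= 0
        std::vector<int> out;
        while (!rdlist_.empty() && static_cast<int>(out.size()) < maxevents) {
            int fd = rdlist_.front();
            rdlist_.pop_front();
            rbr_.at(fd).onReadyList = false;
            out.push_back(fd);
        }
        return out;
    }

private:
    std::map<int, ToyEpitem> rbr_;  // "red-black tree": every watched item, keyed by fd
    std::list<int> rdlist_;         // "ready list": fds with pending events
};
```

The main point of the model is in `Wait`: it only walks the ready list. Its cost therefore follows the number of ready fds rather than the number of registered ones. That is the reason, in kernel terms, why epoll does not slow down the way poll does as connections grow.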