Contents

- I. Prepare the environment
- II. Project directory structure (create it first)
- III. Start all infrastructure components with Docker
- IV. Create the database tables (runs automatically)
- V. Register the Debezium connector (the key step)
- VI. Confirm the Kafka topics were created
- VII. Spring Boot cache-invalidation service (full code)
- VIII. Verify the whole chain

PostgreSQL row update → event in Kafka → consumed by Spring Boot → Redis key deleted

I. Prepare the environment

Local requirements:

- Docker ≥ 20
- Docker Compose ≥ 2
- JDK 17
- Maven
- A terminal

II. Project directory structure (create it first)

```text
uav-cdc/
├── docker-compose.yml
├── init-db.sql
├── connector-uav.json
└── cache-invalidator/
    ├── pom.xml
    └── src/main/
        ├── java/com/uav/invalidator/
        │   ├── CacheInvalidatorApplication.java
        │   ├── CacheInvalidatorListener.java
        │   ├── DebeziumParser.java
        │   └── RedisKeyService.java
        └── resources/application.yml
```

III. Start all infrastructure components with Docker

1️⃣ docker-compose.yml

```yaml
services:
  postgres:
    image: postgres:16
    container_name: pg
    environment:
      POSTGRES_USER: debezium
      POSTGRES_PASSWORD: dbz
      POSTGRES_DB: uav
    ports:
      - "5432:5432"
    # Logical decoding must be enabled for Debezium's pgoutput plugin
    command: >
      postgres
      -c wal_level=logical
      -c max_replication_slots=10
      -c max_wal_senders=10
    volumes:
      # Scripts in this directory run once, when the data directory is first initialized
      - ./init-db.sql:/docker-entrypoint-initdb.d/init-db.sql:ro

  redis:
    image: redis:7
    container_name: redis
    ports:
      - "6379:6379"

  kafka:
    image: confluentinc/cp-kafka:7.6.0
    container_name: kafka
    ports:
      - "9092:9092"
    environment:
      # Single-node KRaft: one process acts as both broker and controller
      KAFKA_NODE_ID: 1
      KAFKA_PROCESS_ROLES: "broker,controller"
      # cp-kafka will not start in KRaft mode without a cluster ID (any base64-encoded UUID)
      CLUSTER_ID: "MkU3OEVBNTcwNTJENDM2Qk"
      # PLAINTEXT serves the other containers (connect, kafka-ui); EXTERNAL serves clients outside Docker
      KAFKA_LISTENERS: "PLAINTEXT://0.0.0.0:29092,EXTERNAL://0.0.0.0:9092,CONTROLLER://0.0.0.0:9093"
      # Advertised addresses must be reachable by each client; replace 192.168.121.140 with your Docker host's IP
      KAFKA_ADVERTISED_LISTENERS: "PLAINTEXT://kafka:29092,EXTERNAL://192.168.121.140:9092"
      KAFKA_INTER_BROKER_LISTENER_NAME: "PLAINTEXT"
      KAFKA_CONTROLLER_LISTENER_NAMES: "CONTROLLER"
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: "CONTROLLER:PLAINTEXT,PLAINTEXT:PLAINTEXT,EXTERNAL:PLAINTEXT"
      KAFKA_CONTROLLER_QUORUM_VOTERS: "1@kafka:9093"
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1

  connect:
    image: quay.io/debezium/connect:latest
    container_name: connect
    depends_on:
      - kafka
      - postgres
    ports:
      - "8083:8083"
    environment:
      # Containers reach the broker through its internal listener
      BOOTSTRAP_SERVERS: "kafka:29092"
      GROUP_ID: "connect-uav"
      CONFIG_STORAGE_TOPIC: "connect_configs"
      OFFSET_STORAGE_TOPIC: "connect_offsets"
      STATUS_STORAGE_TOPIC: "connect_statuses"
      KEY_CONVERTER: "org.apache.kafka.connect.json.JsonConverter"
      VALUE_CONVERTER: "org.apache.kafka.connect.json.JsonConverter"
      # Other worker settings are only picked up by this image when prefixed with CONNECT_
      CONNECT_KEY_CONVERTER_SCHEMAS_ENABLE: "false"
      CONNECT_VALUE_CONVERTER_SCHEMAS_ENABLE: "false"

  kafka-ui:
    image: provectuslabs/kafka-ui:latest
    container_name: kafka-ui
    depends_on:
      - kafka
    ports:
      - "8080:8080"
    environment:
      KAFKA_CLUSTERS_0_NAME: local
      KAFKA_CLUSTERS_0_BOOTSTRAPSERVERS: kafka:29092
      KAFKA_CLUSTERS_0_KAFKACONNECT_0_NAME: connect
      KAFKA_CLUSTERS_0_KAFKACONNECT_0_ADDRESS: http://connect:8083
```

2️⃣ Start

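```bash
docker compose up -d
```

Check that all five containers (pg, redis, kafka, connect, kafka-ui) are running:

```bash
docker ps
```

IV. Create the database tables (runs automatically)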

init-db.sql

```sql
CREATE TABLE uav_device (
    id         BIGINT PRIMARY KEY,
    sn         TEXT,
    status     TEXT,
    updated_at TIMESTAMPTZ DEFAULT now()
);

CREATE TABLE mission (
    id         BIGINT PRIMARY KEY,
    device_id  BIGINT,
    state      TEXT,
    updated_at TIMESTAMPTZ DEFAULT now()
);

CREATE TABLE user_info (
    id         BIGINT PRIMARY KEY,
    nickname   TEXT,
    updated_at TIMESTAMPTZ DEFAULT now()
);

INSERT INTO uav_device VALUES (1, 'SN-001', 'IDLE', now());
INSERT INTO mission VALUES (101, 1, 'CREATED', now());
INSERT INTO user_info VALUES (1001, 'OLD', now());
```

V. Register the Debezium connector (the key step)

1️⃣ connector-uav.json

json

{

"name": "uav-pg-connector",

"config": {

"connector.class": "io.debezium.connector.postgresql.PostgresConnector",

"database.hostname": "postgres",

"database.port": "5432",

"database.user": "debezium",

"database.password": "dbz",

"database.dbname": "uav",

"topic.prefix": "uavcdc",

"schema.include.list": "public",

"table.include.list": "public.uav_device,public.mission,public.user_info",

"plugin.name": "pgoutput",

"publication.autocreate.mode": "filtered",

"slot.name": "uavcdc_slot",

"snapshot.mode": "initial",

"tombstones.on.delete": "false",

/* ===== 核心增强开始 ===== */

"transforms": "unwrap",

"transforms.unwrap.type": "io.debezium.transforms.ExtractNewRecordState",

"transforms.unwrap.drop.tombstones": true,

"transforms.unwrap.delete.handling.mode": "rewrite",

"heartbeat.interval.ms": "30000",

"heartbeat.topics.prefix": "uavcdc-heartbeat"

}

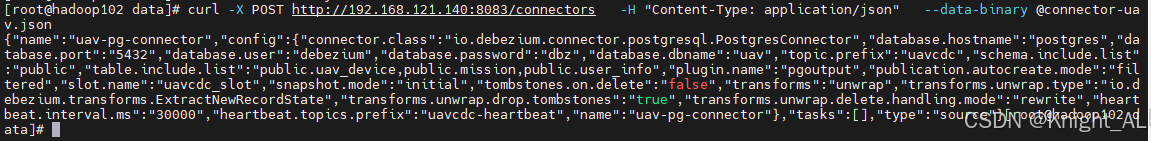

}2️⃣ 注册

```bash
curl -X POST http://192.168.121.140:8083/connectors \
  -H "Content-Type: application/json" \
  --data-binary @connector-uav.json
```
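If you would rather script the registration from Java (for example in a setup task), the same POST can be sent with the JDK's built-in `java.net.http.HttpClient`. This is a minimal sketch: the class name `ConnectorRegistrar` is hypothetical, and it assumes it runs from the `uav-cdc/` directory with Connect at the same address as in the curl command.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical helper (not part of the original project): the curl registration done with the JDK HTTP client.
final class ConnectorRegistrar {

    /** Builds POST {connectBaseUrl}/connectors with the connector JSON as the request body. */
    static HttpRequest buildRequest(String connectBaseUrl, String connectorJson) {
        return HttpRequest.newBuilder(URI.create(connectBaseUrl + "/connectors"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(connectorJson))
                .build();
    }

    public static void main(String[] args) throws Exception {
        // Assumption: working directory is uav-cdc/ and Connect listens at the address used with curl
        String json = Files.readString(Path.of("connector-uav.json"));
        HttpRequest request = buildRequest("http://192.168.121.140:8083", json);
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        // 201 Created on success; 409 Conflict typically means a connector with this name already exists
        System.out.println(response.statusCode() + " " + response.body());
    }
}
```

For repeated runs, Kafka Connect also accepts `PUT /connectors/uav-pg-connector/config` with only the inner `config` object as the body, which creates the connector or updates it in place.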

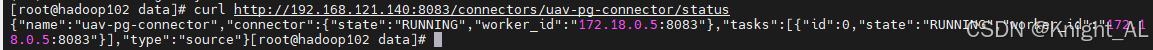

3️⃣ Check the status

```bash
curl http://192.168.121.140:8083/connectors/uav-pg-connector/status | jq
```

Both the connector and its task should report `"state": "RUNNING"`.

VI. Confirm the Kafka topics were created

Open Kafka UI in a browser:

http://192.168.121.140:8080

You should see these topics:

```text
uavcdc.public.uav_device
uavcdc.public.mission
uavcdc.public.user_info
```

VII. Spring Boot cache-invalidation service (full code)

1️⃣ pom.xml

```xml
<!-- No versions are listed: they are managed by spring-boot-starter-parent, declared as the <parent>.
     Also declare spring-boot-maven-plugin under <build><plugins> so that mvn spring-boot:run works. -->
<dependencies>
  <dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter</artifactId>
  </dependency>
  <dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
  </dependency>
  <dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-data-redis</artifactId>
  </dependency>
  <dependency>
    <groupId>com.fasterxml.jackson.core</groupId>
    <artifactId>jackson-databind</artifactId>
  </dependency>
</dependencies>
```

2️⃣ application.yml

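```yaml
spring:
  kafka:
    bootstrap-servers: 192.168.121.140:9092
    consumer:
      group-id: uav-cache-invalidator
      # With no committed offset yet, start from the beginning of each topic
      auto-offset-reset: earliest
  data:
    redis:
      host: 192.168.121.140
      port: 6379

# Custom properties referenced by @KafkaListener in CacheInvalidatorListener
cdc:
  topics:
    device: uavcdc.public.uav_device
    mission: uavcdc.public.mission
    user: uavcdc.public.user_info
```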
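The three `cdc.topics` values are not arbitrary: by default Debezium's PostgreSQL connector names each topic `<topic.prefix>.<schema>.<table>`, and `topic.prefix` is `uavcdc` in connector-uav.json. The hypothetical helper below makes that rule explicit and also shows the reverse mapping (topic to table name), which is useful whenever the message value itself does not say which table it came from.

```java
// Hypothetical helper illustrating Debezium's default topic naming for PostgreSQL:
// <topic.prefix>.<schema>.<table>, e.g. uavcdc.public.uav_device.
final class CdcTopics {

    static String topicFor(String topicPrefix, String schema, String table) {
        return topicPrefix + "." + schema + "." + table;
    }

    /** Recovers the table name as the last dot-separated segment (assumes table names contain no dots). */
    static String tableOf(String topic) {
        return topic.substring(topic.lastIndexOf('.') + 1);
    }

    public static void main(String[] args) {
        for (String table : new String[] {"uav_device", "mission", "user_info"}) {
            String topic = topicFor("uavcdc", "public", table);
            System.out.println(topic + " -> " + tableOf(topic));
        }
        // prints:
        // uavcdc.public.uav_device -> uav_device
        // uavcdc.public.mission -> mission
        // uavcdc.public.user_info -> user_info
    }
}
```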

```java
// CacheInvalidatorApplication.java
package com.uav.invalidator;

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication
public class CacheInvalidatorApplication {

    public static void main(String[] args) {
        SpringApplication.run(CacheInvalidatorApplication.class, args);
    }
}
```

```java
// RedisKeyService.java
package com.uav.invalidator;

import org.springframework.data.redis.core.StringRedisTemplate;
import org.springframework.stereotype.Service;

@Service
public class RedisKeyService {

    private final StringRedisTemplate redisTemplate;

    public RedisKeyService(StringRedisTemplate redisTemplate) {
        this.redisTemplate = redisTemplate;
    }

    /** Deletes the cache entry; in Redis, deleting a key that does not exist is a no-op. */
    public void delete(String key) {
        redisTemplate.delete(key);
        System.out.println("[REDIS] DEL " + key);
    }
}
```
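Before the parser, it helps to know what the listener actually receives. Because the connector applies the `unwrap` transform (`ExtractNewRecordState`), a message value is no longer the Debezium envelope with `before`, `after`, `source` and `op`; it is the bare row, and with `delete.handling.mode=rewrite` a `__deleted` field is appended ("true" for deletes, "false" otherwise). The Java model below approximates that mapping for illustration only; it is not Debezium's code, and `UnwrapModel` and `flatten` are hypothetical names.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative model only, not Debezium code: approximately how the ExtractNewRecordState ("unwrap")
// transform turns an event envelope into a flat value when delete.handling.mode=rewrite.
final class UnwrapModel {

    /**
     * @param op     Debezium operation: "r" (snapshot read), "c" (insert), "u" (update), "d" (delete)
     * @param before row image before the change (used for deletes)
     * @param after  row image after the change (used for every other operation)
     */
    static Map<String, Object> flatten(String op, Map<String, Object> before, Map<String, Object> after) {
        boolean deleted = "d".equals(op);
        // The value becomes the bare row; op, source and the before/after wrapper are dropped
        Map<String, Object> row = new HashMap<>(deleted ? before : after);
        // Rewrite mode marks every record so consumers can tell deletes apart
        row.put("__deleted", String.valueOf(deleted));
        return row;
    }

    public static void main(String[] args) {
        Map<String, Object> updated = flatten("u", null, Map.of("id", 1L, "sn", "SN-001", "status", "FLYING"));
        // With the default REPLICA IDENTITY, only the key columns of a deleted row are guaranteed to be present
        Map<String, Object> deleted = flatten("d", Map.of("id", 1L), null);
        System.out.println(updated); // HashMap iteration order is unspecified
        System.out.println(deleted);
    }
}
```

One consequence: the flattened value no longer carries `source.table`, so a consumer that needs the table name has to take it from the Kafka topic, or ask the transform to add it with `transforms.unwrap.add.fields=table` (which appends a `__table` field).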

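```java
// DebeziumParser.java
package com.uav.invalidator;

import com.fasterxml.jackson.databind.JsonNode;
import com.fasterxml.jackson.databind.ObjectMapper;

public class DebeziumParser {

    private static final ObjectMapper MAPPER = new ObjectMapper();

    /**
     * Extracts (table, primary key) from a change event. Accepts both value shapes:
     * - the full Debezium envelope {before, after, source, op, ...}
     * - the flat row produced by the ExtractNewRecordState ("unwrap") transform, which has
     *   no "source" field, so the table name is taken from the topic instead.
     */
    public static ParsedRow parse(String json, String topic) throws Exception {
        // Null or empty values are tombstones: nothing to parse
        if (json == null || json.isBlank()) return null;

        JsonNode root = MAPPER.readTree(json);
        // With schemas enabled, JsonConverter wraps the value as {"schema": ..., "payload": ...}
        JsonNode payload = (root.has("schema") && root.has("payload")) ? root.get("payload") : root;
        if (payload == null || payload.isNull()) return null;

        String table;
        JsonNode row;
        if (payload.has("source")) {
            // Envelope: table from source; prefer after, fall back to before on delete
            table = payload.path("source").path("table").asText(null);
            row = payload.path("after");
            if (row.isMissingNode() || row.isNull()) {
                row = payload.path("before");
            }
        } else {
            // Flattened by unwrap: the payload is the row; topic = <prefix>.<schema>.<table>
            table = (topic == null) ? null : topic.substring(topic.lastIndexOf('.') + 1);
            row = payload;
        }
        if (table == null || row.isMissingNode() || row.isNull()) return null;

        // Primary key (all example tables use a column named id)
        JsonNode idNode = row.get("id");
        if (idNode == null || idNode.isNull()) return null;

        return new ParsedRow(table, idNode.asText(), row);
    }

    public record ParsedRow(String table, String id, JsonNode row) {}
}
```

```java
// CacheInvalidatorListener.java
package com.uav.invalidator;

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Component;

@Component
public class CacheInvalidatorListener {

    private final RedisKeyService redisKeyService;

    public CacheInvalidatorListener(RedisKeyService redisKeyService) {
        this.redisKeyService = redisKeyService;
    }

    @KafkaListener(topics = {
            "${cdc.topics.device}",
            "${cdc.topics.mission}",
            "${cdc.topics.user}"
    })
    public void onMessage(ConsumerRecord<String, String> record) {
        try {
            // The topic is passed along because flattened events do not name their table
            DebeziumParser.ParsedRow row = DebeziumParser.parse(record.value(), record.topic());
            if (row == null) return;

            // Any change to a row (snapshot read, insert, update, delete) invalidates its key
            switch (row.table()) {
                case "uav_device" -> redisKeyService.delete("device:info:" + row.id());
                case "mission" -> redisKeyService.delete("mission:detail:" + row.id());
                case "user_info" -> redisKeyService.delete("user:info:" + row.id());
                default -> {
                    // Ignore other tables
                }
            }
        } catch (Exception e) {
            System.err.println("[ERROR] " + e.getMessage());
        }
    }
}
```

3️⃣ Start the service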

```bash
cd cache-invalidator
mvn spring-boot:run
```

VIII. Verify the whole chain

1️⃣ Write cache entries (simulating the business service)

```bash
redis-cli set device:info:1 test
redis-cli set mission:detail:101 test
redis-cli set user:info:1001 test
```

2️⃣ Update PostgreSQL

```bash
docker exec -it pg psql -U debezium -d uav -c \
  "UPDATE uav_device SET status='FLYING' WHERE id=1;"
```

3️⃣ What you should see:

In the Spring Boot console:

```text
[REDIS] DEL device:info:1
```

In Redis:

```bash
redis-cli get device:info:1
# (nil)
```
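In a script or integration test, checking Redis once right after the UPDATE can race the pipeline, because invalidation is asynchronous (WAL, Debezium, Kafka, then the consumer). A small polling helper avoids that. This is a sketch; `Await.until` is a hypothetical name, and the demo condition is a stand-in for the real Redis check.

```java
import java.time.Duration;
import java.util.function.BooleanSupplier;

// Hypothetical helper for scripted verification: poll for the expected state instead of checking once.
final class Await {

    /** Polls until the condition holds; returns false on timeout or if the thread is interrupted. */
    static boolean until(BooleanSupplier condition, Duration timeout, Duration interval) {
        long deadline = System.nanoTime() + timeout.toNanos();
        while (!condition.getAsBoolean()) {
            if (System.nanoTime() - deadline >= 0) {
                return false;
            }
            try {
                Thread.sleep(interval.toMillis());
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt(); // preserve the interrupt for the caller
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        // Stand-in condition that becomes true after about 200 ms
        long start = System.currentTimeMillis();
        boolean ok = until(() -> System.currentTimeMillis() - start > 200,
                Duration.ofSeconds(5), Duration.ofMillis(50));
        System.out.println("condition met: " + ok);
    }
}
```

Inside a Spring test, the condition could be `() -> !Boolean.TRUE.equals(redisTemplate.hasKey("device:info:1"))` using a `StringRedisTemplate`.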

The complete path of one change:

```text
PostgreSQL UPDATE
  → WAL record
  → parsed by Debezium
  → Kafka topic
  → consumed by Spring Boot
  → Redis cache invalidated
```
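The last two hops of this flow can also be exercised without any infrastructure, which is handy as a unit test for the key mapping. The sketch below is an in-memory model under stated assumptions: a `Map` stands in for Redis, `ChangeEvent` stands in for a parsed Debezium event, and the class and method names are hypothetical; the key patterns are the ones `CacheInvalidatorListener` uses.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// In-memory model (hypothetical names; no Kafka or Redis involved): each change event is mapped
// to a cache key with the listener's key patterns, and that key is removed from a Map standing in for Redis.
final class InvalidationChainModel {

    record ChangeEvent(String table, String id) {}

    // Table -> key prefix, mirroring the switch in CacheInvalidatorListener
    static final Map<String, String> KEY_PREFIX = Map.of(
            "uav_device", "device:info:",
            "mission", "mission:detail:",
            "user_info", "user:info:");

    /** Applies each event to the cache; events for unmapped tables are ignored. */
    static void apply(List<ChangeEvent> events, Map<String, String> cache) {
        for (ChangeEvent e : events) {
            String prefix = KEY_PREFIX.get(e.table());
            if (prefix != null) {
                cache.remove(prefix + e.id());
            }
        }
    }

    public static void main(String[] args) {
        Map<String, String> cache = new HashMap<>(Map.of(
                "device:info:1", "test", "mission:detail:101", "test", "user:info:1001", "test"));
        // Models: UPDATE uav_device SET status='FLYING' WHERE id=1;
        apply(List.of(new ChangeEvent("uav_device", "1")), cache);
        System.out.println(cache.containsKey("device:info:1"));      // false
        System.out.println(cache.containsKey("mission:detail:101")); // true
    }
}
```

Keeping the table-to-prefix mapping in a `Map` makes adding a table a one-line change; the `switch` in the listener is equally valid for three fixed tables.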