QEMU Learning Path (12) --- Testing the IOMMU with qemu-system-riscv64

I. Preface

For QEMU installation, see: QEMU Learning Path (3) --- Installing qemu-system-riscv64

For the Linux setup, see: QEMU Learning Path (6) --- Booting Linux on RISC-V 64

II. Installing QEMU

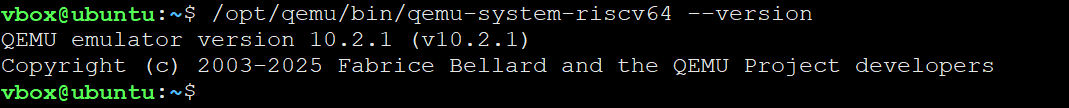

QEMU has officially supported the RISC-V IOMMU since version 10.0.0, so QEMU 10.0.0 or later is required. The version installed here is 10.2.1.
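To confirm that an installed binary meets this minimum, a version comparison along the following lines can be used. This is a minimal sketch and not part of the original setup: `version_ge` and `qemu_version` are hypothetical helper names, `sort -V` assumes GNU coreutils (or a busybox build with version sort), and `/opt/qemu/bin` is the install prefix used later in this article.

```bash
# version_ge A B: succeed when dotted version A is >= B (relies on sort -V).
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# qemu_version BIN: print the X.Y.Z part of the first line of `BIN --version`.
qemu_version() {
    "$1" --version | sed -n '1s/.*version \([0-9][0-9.]*\).*/\1/p'
}

QEMU_BIN=/opt/qemu/bin/qemu-system-riscv64   # assumed install path
if [ -x "$QEMU_BIN" ]; then
    ver=$(qemu_version "$QEMU_BIN")
    if version_ge "$ver" 10.0.0; then
        echo "QEMU $ver meets the 10.0.0 minimum"
    else
        echo "QEMU $ver is older than 10.0.0"
    fi
fi
```

The check is skipped silently when the binary is not at the assumed path, so adjust `QEMU_BIN` to your own installation.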

III. Building Linux

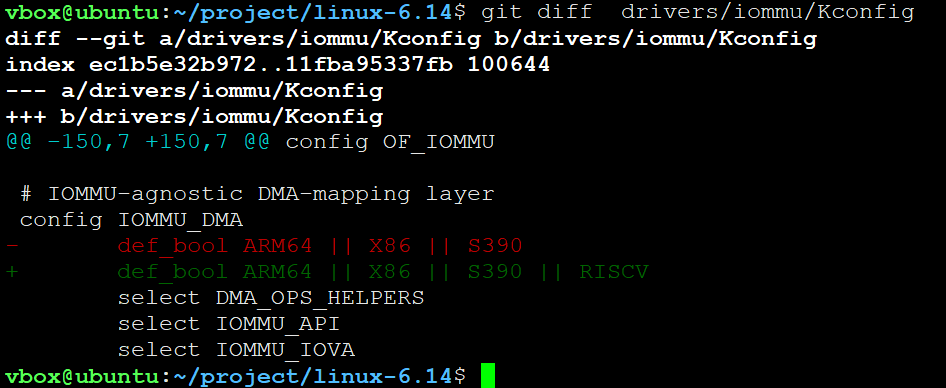

The Linux kernel supports the RISC-V IOMMU from version 6.13 onward; linux-6.14 is used as the example here.

bash

git clone https://mirrors.tuna.tsinghua.edu.cn/git/linux.git -b v6.14 linux-6.14

After fetching the source, one change is needed: open the drivers/iommu/Kconfig file, find the config IOMMU_DMA option, and add RISCV support to it.
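The same edit can be sketched on the command line. This rests on assumptions that should be checked against your tree: that a `def_bool` line directly follows `config IOMMU_DMA` and lists the architectures that enable the option, and that adding RISCV support means appending `|| RISCV` to that list. `add_riscv_to_iommu_dma` is a hypothetical helper name, and `sed -i` assumes GNU sed. Editing the file by hand works equally well.

```bash
# add_riscv_to_iommu_dma FILE: append "|| RISCV" to the def_bool line that
# directly follows "config IOMMU_DMA". Does nothing if RISCV is already listed.
add_riscv_to_iommu_dma() {
    kconfig="$1"
    if ! grep -q '^config IOMMU_DMA$' "$kconfig"; then
        echo "config IOMMU_DMA not found in $kconfig" >&2
        return 1
    fi
    sed -i '/^config IOMMU_DMA$/{n;/def_bool/{/RISCV/!s/$/ || RISCV/;};}' "$kconfig"
    # Print the option header and its def_bool line so the result can be reviewed.
    grep -A1 '^config IOMMU_DMA$' "$kconfig"
}

# Usage (path assumes the clone directory from the command above):
#   add_riscv_to_iommu_dma linux-6.14/drivers/iommu/Kconfig
```

Running the helper a second time leaves the file unchanged, since the line already contains RISCV.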

Then configure the kernel:

bash

make ARCH=riscv CROSS_COMPILE=riscv64-linux-gnu- defconfig

Then build the kernel:

bash

make ARCH=riscv CROSS_COMPILE=riscv64-linux-gnu- -j $(nproc)

IV. Building the rootfs

1. Get the busybox source

bash

git clone https://gitee.com/mirrors/busyboxsource -b 1_35_0 busybox-1.35.0

2. Set the environment variable

bash

export CROSS_COMPILE=riscv64-linux-gnu-

3. Use the default configuration

bash

make defconfig

4. Configure a static build

Open the configuration menu with the following command:

bash

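make menuconfig

Enable the option below to build busybox statically. This links every library the program depends on into the binary itself, so the Linux system needs no shared libraries at runtime.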

bash

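Settings --->
    [*] Build static binary (no shared libs)

In addition, if you use a newer riscv64-linux-gnu-gcc toolchain, the tc applet must be disabled, because it relies on functionality that newer versions have dropped: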

bash

Networking Utilities --->
    [ ] tc (8.3 kb)

5. Build busybox

bash

make -j $(nproc)

6. Install

Create a rootfs directory and install busybox into it:

bash

mkdir ../rootfs; make install CONFIG_PREFIX=../rootfs

Create the following directories inside the rootfs folder:

bash

mkdir etc proc sys dev lib lib64 root

Then create an init file in the rootfs folder with the following content:

bash

#!/bin/sh

mount -t proc proc /proc
mount -t sysfs sysfs /sys
mount -t devtmpfs devtmpfs /dev

echo "Kernel: $(uname -r)"
echo "Machine: $(uname -m)"

exec /sbin/init

Then make the init file executable:

bash

chmod +x init

Pack the rootfs with the following command, run from inside the rootfs folder:

bash

find . | cpio -H newc -o | gzip > ../rootfs.cpio.gz

Boot the system with the following command, run from the directory that contains rootfs.cpio.gz:

bash

/opt/qemu/bin/qemu-system-riscv64 \
    -machine virt,aia=aplic-imsic,iommu-sys=on -cpu rv64 \
    -m 2G -smp 2 -nographic \
    -kernel ../../linux-6.14/arch/riscv/boot/Image \
    -initrd ./rootfs.cpio.gz \
    -append "console=ttyS0 earlycon=sbi root=/dev/ram0 rw" \
    -device edu,dma_mask=0xffffffff \
    -device virtio-net-pci,netdev=net0 -netdev user,id=net0

V. Testing
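Before writing the driver, it can help to confirm that the guest actually enumerated the device added with `-device edu`. The following is a minimal sketch, not part of the original procedure: `find_pci_by_id` is a hypothetical helper that scans sysfs for a vendor/device ID pair, here 0x1234/0x11e8, the same IDs that hello.c matches on. The optional third argument exists only so the function can be pointed at a different directory.

```bash
# find_pci_by_id VENDOR DEVICE [SYSFS_DIR]
# Print the bus address (e.g. 0000:00:01.0) of every PCI device whose
# vendor and device IDs match. SYSFS_DIR defaults to /sys/bus/pci/devices.
find_pci_by_id() {
    root="${3:-/sys/bus/pci/devices}"
    for dev in "$root"/*; do
        [ -r "$dev/vendor" ] && [ -r "$dev/device" ] || continue
        if [ "$(cat "$dev/vendor")" = "$1" ] && [ "$(cat "$dev/device")" = "$2" ]; then
            basename "$dev"
        fi
    done
}

# In the guest: list the edu device(s), if sysfs is mounted.
if [ -d /sys/bus/pci/devices ]; then
    find_pci_by_id 0x1234 0x11e8
fi
```

If the device is attached to the IOMMU, its sysfs directory should also contain an `iommu_group` link, which is what the final check in this article reads.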

Create a hello.c file with the following content:

c

#include <linux/kernel.h>
#include <linux/module.h>
#include <linux/pci.h>
#include <linux/init.h>
#include <linux/errno.h>
#include <linux/cdev.h>
#include <linux/device.h>
#include <linux/dma-mapping.h>
#include <linux/io.h>
#include <linux/fs.h>          /* struct file_operations */
#include <linux/interrupt.h>   /* request_irq(), irqreturn_t */
#include <linux/uaccess.h>     /* copy_from_user(), copy_to_user() */
#include <linux/wait.h>        /* wait queues */

/* PCI IDs of the QEMU edu device */
#define HELLO_PCI_DEVICE_ID 0x11e8
#define HELLO_PCI_VENDOR_ID 0x1234
#define HELLO_PCI_REVISION_ID 0x10

/* Offset of the edu on-device DMA buffer, and the transfer buffer size */
#define ONCHIP_MEM_BASE 0x40000
#define DMA_BUF_SIZE 4096

static const struct pci_device_id ids[] = {
    { PCI_DEVICE(HELLO_PCI_VENDOR_ID, HELLO_PCI_DEVICE_ID), },
    { 0, }
};

struct hello_pci_info_t {
    dev_t dev_id;
    struct cdev char_dev;
    struct class *class;
    struct device *device;
    struct pci_dev *pdev;
    void __iomem *address_bar0;
    atomic_t dma_running;
    spinlock_t lock;
    wait_queue_head_t r_wait;
};

static struct hello_pci_info_t hello_pci_info;

MODULE_DEVICE_TABLE(pci, ids);
MODULE_LICENSE("GPL");
MODULE_AUTHOR("Fixed Driver");
MODULE_DESCRIPTION("PCIe DMA Character Driver");

static irqreturn_t hello_pci_irq_handler(int irq, void *dev_info)
{
    struct hello_pci_info_t *info = dev_info;
    u32 irq_status;

    /* Read the interrupt status register (0x24) */
    irq_status = ioread32(info->address_bar0 + 0x24);
    pr_info("hello_pcie: get irq status: 0x%x\n", irq_status);

    /* Acknowledge the interrupt by writing the status to 0x64 */
    iowrite32(irq_status, info->address_bar0 + 0x64);

    /* Read the status again to confirm the interrupt was cleared */
    irq_status = ioread32(info->address_bar0 + 0x24);
    if (irq_status == 0x00) {
        pr_info("hello_pcie: receive irq and clean success.\n");
    } else {
        pr_err("hello_pcie: receive irq but clean failed!\n");
        return IRQ_NONE;
    }

    /* Mark the DMA transfer as finished and wake the waiting reader/writer */
    atomic_set(&info->dma_running, 0);
    smp_wmb();
    wake_up_interruptible(&info->r_wait);

    return IRQ_HANDLED;
}

static int hello_pcie_open(struct inode *inode, struct file *file)
{
    file->private_data = &hello_pci_info;
    dev_dbg(&hello_pci_info.pdev->dev, "Device opened\n");
    return 0;
}

static int hello_pcie_close(struct inode *inode, struct file *file)
{
    dev_dbg(&hello_pci_info.pdev->dev, "Device closed\n");
    return 0;
}

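/* Start a DMA transfer from host memory (dma_addr) to the device buffer. */
static void dma_write_block(dma_addr_t dma_addr, int count, struct hello_pci_info_t *info)
{
    unsigned long flags;

    spin_lock_irqsave(&info->lock, flags);
    /* 0x80/0x84: DMA source address (low/high 32 bits) */
    iowrite32(lower_32_bits(dma_addr), info->address_bar0 + 0x80);
    iowrite32(upper_32_bits(dma_addr), info->address_bar0 + 0x84);
    /* 0x88/0x8c: DMA destination address (low/high 32 bits) */
    iowrite32(ONCHIP_MEM_BASE, info->address_bar0 + 0x88);
    iowrite32(0, info->address_bar0 + 0x8c);
    /* 0x90: transfer length in bytes */
    iowrite32(count, info->address_bar0 + 0x90);
    /* 0x98: command = 0x01 (start) | 0x04 (raise interrupt when done) */
    iowrite32(0x05, info->address_bar0 + 0x98);
    spin_unlock_irqrestore(&info->lock, flags);
}

/* Start a DMA transfer from the device buffer to host memory (dma_addr). */
static void dma_read_block(dma_addr_t dma_addr, int count, struct hello_pci_info_t *info)
{
    unsigned long flags;

    spin_lock_irqsave(&info->lock, flags);
    /* Source is the device buffer, destination is host memory */
    iowrite32(ONCHIP_MEM_BASE, info->address_bar0 + 0x80);
    iowrite32(0, info->address_bar0 + 0x84);
    iowrite32(lower_32_bits(dma_addr), info->address_bar0 + 0x88);
    iowrite32(upper_32_bits(dma_addr), info->address_bar0 + 0x8c);
    iowrite32(count, info->address_bar0 + 0x90);
    /* command = 0x01 (start) | 0x02 (device to memory) | 0x04 (interrupt) */
    iowrite32(0x07, info->address_bar0 + 0x98);
    spin_unlock_irqrestore(&info->lock, flags);
}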

static ssize_t hello_pcie_write(struct file *file, const char __user *buf, size_t cnt, loff_t *offt)
{
    struct hello_pci_info_t *info = file->private_data;
    int ret;
    u8 *databuf;
    dma_addr_t dma_addr;

    if (cnt > DMA_BUF_SIZE)
        return -EINVAL;

    databuf = dma_alloc_coherent(&info->pdev->dev, DMA_BUF_SIZE, &dma_addr, GFP_KERNEL);
    if (!databuf)
        return -ENOMEM;

    /* dma_addr is the address the device uses; behind a translating IOMMU it is an IOVA */
    dev_info(&info->pdev->dev, "get dma_handle_addr success: 0x%llx\n", (unsigned long long)dma_addr);

    if (copy_from_user(databuf, buf, cnt)) {
        ret = -EFAULT;
        goto out_free;
    }

    /* Mark the transfer as running, start it, then sleep until the IRQ handler clears the flag */
    atomic_set(&info->dma_running, 1);
    smp_wmb();
    dma_write_block(dma_addr, cnt, info);

    ret = wait_event_interruptible(info->r_wait, !atomic_read(&info->dma_running));
    if (ret)
        goto out_free;

    ret = cnt;

out_free:
    dma_free_coherent(&info->pdev->dev, DMA_BUF_SIZE, databuf, dma_addr);
    return ret;
}

static ssize_t hello_pcie_read(struct file *file, char __user *buf, size_t cnt, loff_t *offt)
{
    struct hello_pci_info_t *info = file->private_data;
    int ret;
    u8 *databuf;
    dma_addr_t dma_addr;

    if (cnt > DMA_BUF_SIZE)
        return -EINVAL;

    databuf = dma_alloc_coherent(&info->pdev->dev, DMA_BUF_SIZE, &dma_addr, GFP_KERNEL);
    if (!databuf)
        return -ENOMEM;

    dev_info(&info->pdev->dev, "get dma_handle_addr success: 0x%llx\n", (unsigned long long)dma_addr);

    /* Mark the transfer as running, start it, then sleep until the IRQ handler clears the flag */
    atomic_set(&info->dma_running, 1);
    smp_wmb();
    dma_read_block(dma_addr, cnt, info);

    ret = wait_event_interruptible(info->r_wait, !atomic_read(&info->dma_running));
    if (ret)
        goto out_free;

    if (copy_to_user(buf, databuf, cnt)) {
        ret = -EFAULT;
        goto out_free;
    }

    ret = cnt;

out_free:
    dma_free_coherent(&info->pdev->dev, DMA_BUF_SIZE, databuf, dma_addr);
    return ret;
}

static const struct file_operations hello_pcie_fops = {
    .owner = THIS_MODULE,
    .open = hello_pcie_open,
    .release = hello_pcie_close,
    .read = hello_pcie_read,
    .write = hello_pcie_write,
};

static int hello_pcie_probe(struct pci_dev *pdev, const struct pci_device_id *id)
{
    int ret;
    int bar = 0;
    resource_size_t len;

    ret = pci_enable_device(pdev);
    if (ret)
        return ret;

    /* The edu device is started with dma_mask=0xffffffff, so declare a 32-bit DMA mask */
    ret = dma_set_mask_and_coherent(&pdev->dev, DMA_BIT_MASK(32));
    if (ret) {
        dev_err(&pdev->dev, "DMA mask failed\n");
        goto err_disable;
    }

    pci_set_master(pdev);

    ret = pci_request_region(pdev, bar, "hello_pcie_bar0");
    if (ret)
        goto err_disable;

    len = pci_resource_len(pdev, bar);
    hello_pci_info.address_bar0 = pci_iomap(pdev, bar, len);
    if (!hello_pci_info.address_bar0) {
        ret = -ENOMEM;
        goto err_release;
    }

    hello_pci_info.pdev = pdev;

    /* Initialize everything the IRQ handler touches before requesting the IRQ */
    spin_lock_init(&hello_pci_info.lock);
    init_waitqueue_head(&hello_pci_info.r_wait);
    atomic_set(&hello_pci_info.dma_running, 0);

    ret = request_irq(pdev->irq, hello_pci_irq_handler, 0, "hello_pci", &hello_pci_info);
    if (ret) {
        dev_err(&pdev->dev, "IRQ request failed\n");
        goto err_iounmap;
    }

    /* edu status register (0x20): 0x80 requests an interrupt after a factorial computation */
    iowrite32(0x80, hello_pci_info.address_bar0 + 0x20);

    ret = alloc_chrdev_region(&hello_pci_info.dev_id, 0, 1, "hello_pcie");
    if (ret)
        goto err_irq;

    cdev_init(&hello_pci_info.char_dev, &hello_pcie_fops);
    hello_pci_info.char_dev.owner = THIS_MODULE;
    ret = cdev_add(&hello_pci_info.char_dev, hello_pci_info.dev_id, 1);
    if (ret)
        goto err_unreg;

    hello_pci_info.class = class_create("hello_pcie");
    if (IS_ERR(hello_pci_info.class)) {
        ret = PTR_ERR(hello_pci_info.class);
        goto err_cdev;
    }

    hello_pci_info.device = device_create(hello_pci_info.class, &pdev->dev,
                                          hello_pci_info.dev_id, NULL, "hello_pcie");
    if (IS_ERR(hello_pci_info.device)) {
        ret = PTR_ERR(hello_pci_info.device);
        goto err_class;
    }

    dev_info(&pdev->dev, "PCIe probe success\n");
    return 0;

    /* Undo the steps above in reverse order */
err_class:
    class_destroy(hello_pci_info.class);
err_cdev:
    cdev_del(&hello_pci_info.char_dev);
err_unreg:
    unregister_chrdev_region(hello_pci_info.dev_id, 1);
err_irq:
    free_irq(pdev->irq, &hello_pci_info);
err_iounmap:
    pci_iounmap(pdev, hello_pci_info.address_bar0);
err_release:
    pci_release_region(pdev, bar);
err_disable:
    pci_disable_device(pdev);
    return ret;
}

static void hello_pcie_remove(struct pci_dev *pdev)
{
    iowrite32(0x00, hello_pci_info.address_bar0 + 0x20);
    free_irq(pdev->irq, &hello_pci_info);
    device_destroy(hello_pci_info.class, hello_pci_info.dev_id);
    class_destroy(hello_pci_info.class);
    cdev_del(&hello_pci_info.char_dev);
    unregister_chrdev_region(hello_pci_info.dev_id, 1);
    pci_iounmap(pdev, hello_pci_info.address_bar0);
    pci_release_region(pdev, 0);
    pci_disable_device(pdev);
    dev_info(&pdev->dev, "PCIe remove success\n");
}

static struct pci_driver hello_pci_driver = {
    .name = "hello_pcie",
    .id_table = ids,
    .probe = hello_pcie_probe,
    .remove = hello_pcie_remove,
};

static int __init hello_pci_init(void)
{
    return pci_register_driver(&hello_pci_driver);
}

static void __exit hello_pci_exit(void)
{
    pci_unregister_driver(&hello_pci_driver);
}

module_init(hello_pci_init);
module_exit(hello_pci_exit);

Write the Makefile as follows and build with the make command. KERNELDIR must point to the kernel tree that the guest actually boots (linux-6.14 in this article), so adjust the path to your own layout.

bash

KERNELDIR=/home/william/project/linux-5.18

export ARCH=riscv
export CROSS_COMPILE=riscv64-linux-gnu-

obj-m := hello.o

all:
	$(MAKE) -C ${KERNELDIR} M=${PWD} modules

clean:
	$(MAKE) -C ${KERNELDIR} M=${PWD} clean

.PHONY: all clean

Write the testapp.c file as follows:

c

#include <stdio.h>
#include <stdint.h>
#include <unistd.h>
#include <sys/types.h>
#include <sys/stat.h>
#include <fcntl.h>
#include <stdlib.h>
#include <string.h>

#define BUFFER_LENGTH 128

int main(int argc, char *argv[])
{
    int fd, retvalue;
    const char *filename = "/dev/hello_pcie";
    unsigned char *write_buf = malloc(BUFFER_LENGTH);
    unsigned char *read_buf = malloc(BUFFER_LENGTH);

    if (!write_buf || !read_buf) {
        printf("buffer allocation failed!\n");
        return -1;
    }

    /* Open the driver's device file */
    fd = open(filename, O_RDWR);
    if (fd < 0) {
        printf("file %s open failed!\n", filename);
        return -1;
    }

    /* Fill the write buffer with a known pattern: 0, 1, 2, ... */
    for (int i = 0; i < BUFFER_LENGTH; i++) {
        write_buf[i] = i;
    }

    /* Write the data to /dev/hello_pcie (memory to device DMA) */
    retvalue = write(fd, write_buf, BUFFER_LENGTH);
    if (retvalue < 0) {
        printf("Write %s Failed!\n", filename);
        close(fd);
        return -1;
    }
    printf("write success, data: 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x\n", write_buf[0], write_buf[1], write_buf[2], write_buf[3], write_buf[4], write_buf[5], write_buf[6], write_buf[7]);

    /* Read the data back from /dev/hello_pcie (device to memory DMA) */
    retvalue = read(fd, read_buf, BUFFER_LENGTH);
    if (retvalue < 0) {
        printf("Read %s Failed!\n", filename);
        close(fd);
        return -1;
    }
    printf("read success, data: 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x, 0x%x\n", read_buf[0], read_buf[1], read_buf[2], read_buf[3], read_buf[4], read_buf[5], read_buf[6], read_buf[7]);

    /* Close the device file */
    retvalue = close(fd);
    if (retvalue < 0) {
        printf("file %s close failed!\n", filename);
        return -1;
    }

    return 0;
}

Compile it into an executable with the following command:

bash

riscv64-linux-gnu-gcc -static testapp.c -o test.x

Then copy hello.ko and test.x into the rootfs/root/ folder, regenerate rootfs.cpio.gz, and boot the system again:

bash

rm -rf rootfs.cpio.gz

rm -rf rootfs/root/hello.ko

cp hello/hello.ko rootfs/root/hello.ko

cp hello/test.x rootfs/root/test.x

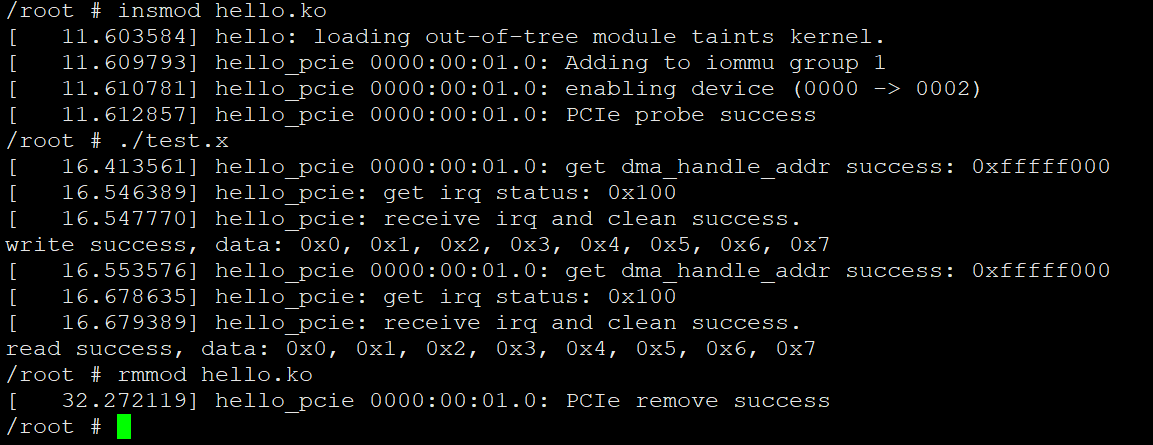

cd rootfs; find . | cpio -H newc -o | gzip > ../rootfs.cpio.gz重新进入系统后到root目录下,加载驱动,执行测试如下所示:

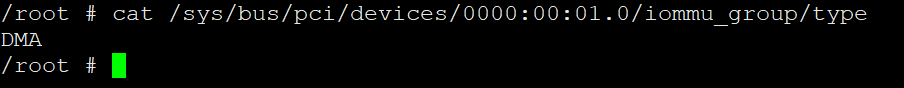
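The two lines printed by test.x can also be compared automatically instead of by eye. The following is a minimal sketch under stated assumptions: `check_roundtrip` is a hypothetical helper, it relies on the exact "write success, data:" and "read success, data:" prefixes printed by testapp.c, and it only covers the first 8 bytes, since that is all the test program prints.

```bash
# check_roundtrip: read test.x output on stdin and succeed only when the byte
# list after "write success, data:" equals the one after "read success, data:".
check_roundtrip() {
    awk -F'data: ' '
        /^write success/ { w = $2 }
        /^read success/  { r = $2 }
        END { exit !(w != "" && (w "") == (r "")) }
    '
}

# Usage in the guest (assumes hello.ko and test.x are in the current directory):
#   insmod hello.ko
#   ./test.x | check_roundtrip && echo "DMA round trip OK"
```

A failed write or read produces no matching pair of lines, so the helper reports failure in that case as well.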

Then use the following command to check the IOMMU's operating type for the device:

bash

cat /sys/bus/pci/devices/0000:00:01.0/iommu_group/type
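The value printed is the default domain type of the device's IOMMU group. As a rough guide to reading it, the sketch below maps the type strings the kernel reports to a one-line meaning. `describe_iommu_group_type` is a hypothetical helper, the wording of the descriptions is a paraphrase rather than kernel output, and the path assumes the edu device sits at 0000:00:01.0 as in the command above.

```bash
# describe_iommu_group_type TYPE: print a short explanation of a value read
# from /sys/bus/pci/devices/<bdf>/iommu_group/type.
describe_iommu_group_type() {
    case "$1" in
        DMA)       echo "translated: DMA goes through IOMMU page tables (strict invalidation)" ;;
        DMA-FQ)    echo "translated: like DMA, with invalidations batched in a flush queue" ;;
        identity)  echo "passthrough: device addresses map 1:1 to physical addresses" ;;
        blocked)   echo "blocked: all DMA from the group is rejected" ;;
        unmanaged) echo "unmanaged: the domain is controlled by another user such as VFIO" ;;
        *)         echo "unrecognized type: $1" ;;
    esac
}

TYPE_FILE=/sys/bus/pci/devices/0000:00:01.0/iommu_group/type   # assumed device address
if [ -r "$TYPE_FILE" ]; then
    describe_iommu_group_type "$(cat "$TYPE_FILE")"
fi
```

For this test, a translated type (DMA or DMA-FQ) means the addresses logged by the driver are I/O virtual addresses handled by the IOMMU, while identity means the device is bypassing translation.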