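This installment continues the previous article, **Practical Guide to Intelligent Agents: From Beginner to Expert (Tools)**, and looks at how to embed a memory component in an agent. Requirement: "What will the weather be like in Beijing tomorrow? What about Shanghai?", answered over two separate conversational turns. The code examples below use Xi'an for the first question.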

1. Traditional implementation

1.1 ReAct style

```python
from langchain_classic.memory import ConversationBufferMemory
from langchain_classic.agents import AgentType, initialize_agent
from langchain_classic.tools import Tool
from langchain_community.tools import TavilySearchResults
from langchain_openai import ChatOpenAI
import os
import dotenv

# Load the configuration file (.env)
dotenv.load_dotenv()
os.environ["TAVILY_API_KEY"] = os.getenv("TAVILY_API_KEY")

# Create the Tavily search tool instance and wrap it as a Tool
search = TavilySearchResults(max_results=3)
search_tool = Tool(
    func=search.run,
    name="search",
    description="Searches the internet for information",
)

# Create the LLM
os.environ["OPENAI_API_KEY"] = os.getenv("OPENAI_API_KEY")
os.environ["OPENAI_BASE_URL"] = os.getenv("OPENAI_BASE_URL")
llm = ChatOpenAI(
    model="gpt-4o-mini",
    temperature=0,
)

# Create the memory: ConversationBufferMemory
# memory_key must be "chat_history" because the CONVERSATIONAL_REACT_DESCRIPTION
# prompt template uses a {chat_history} variable; the two names must match.
# That template is plain text, so the history is kept as a formatted string
# (return_messages=False, the default) instead of a list of message objects.
memory = ConversationBufferMemory(
    memory_key="chat_history",
)

# Choose the agent type
agent = AgentType.CONVERSATIONAL_REACT_DESCRIPTION

# Create the AgentExecutor
agent_execute = initialize_agent(
    llm=llm,
    agent=agent,
    tools=[search_tool],
    verbose=True,
    memory=memory,
)

# Call invoke() on the AgentExecutor to get the response
result = agent_execute.invoke("Check today's weather in Xi'an")

# Handle the response
print(result)
```

Now ask about Shanghai:

python

result = agent_execute.invoke("上海呢?")

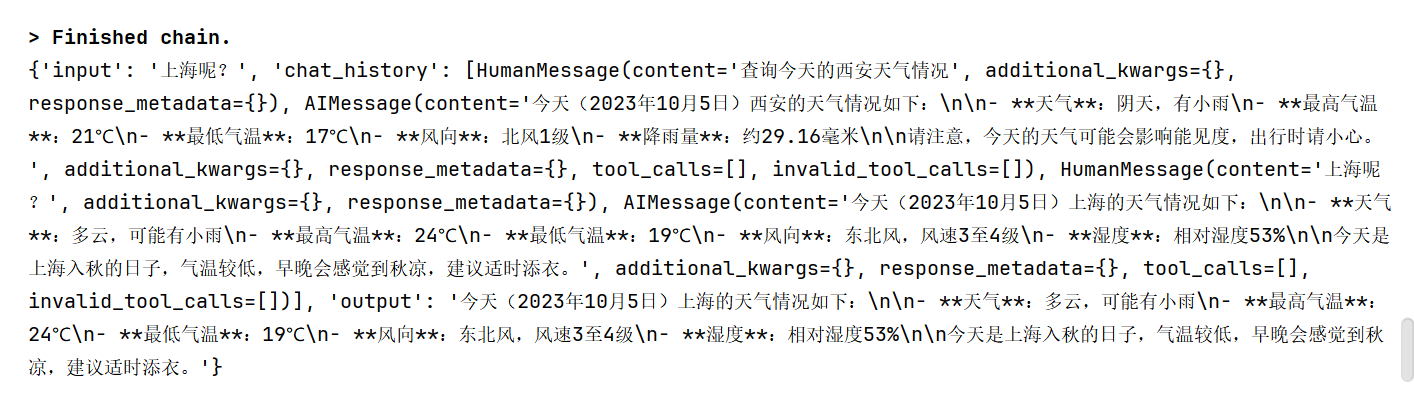

print(result)这里我们的问题只是"上海呢?"具体指代的就是上海的天气,通过嵌入的记忆获取。
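To see why the bare follow-up works, here is a toy, stdlib-only model of what a buffer memory does. `ToyBufferMemory`, `TEMPLATE`, and `render_prompt` are hypothetical names for illustration, not LangChain classes: the memory stores each finished turn, and before the next call its variables are merged with the new input and formatted into the prompt. The second half shows, under the same assumptions, why `memory_key` has to match the template variable: a mismatched key leaves `{chat_history}` unfilled.

```python
from dataclasses import dataclass, field


@dataclass
class ToyBufferMemory:
    """Hypothetical stand-in for a buffer memory: keeps every turn verbatim."""

    memory_key: str = "chat_history"
    turns: list = field(default_factory=list)

    def save_context(self, user_input: str, ai_output: str) -> None:
        # Store one finished turn after the agent has answered it.
        self.turns.append(("Human", user_input))
        self.turns.append(("AI", ai_output))

    def load_memory_variables(self) -> dict:
        # Render the whole history as one string under memory_key.
        buffer = "\n".join(f"{role}: {text}" for role, text in self.turns)
        return {self.memory_key: buffer}


# Hypothetical prompt with a {chat_history} slot, like a conversational agent prompt.
TEMPLATE = "Previous conversation:\n{chat_history}\n\nNew question: {input}"


def render_prompt(memory: ToyBufferMemory, user_input: str) -> str:
    # Merge the memory variables with the new input, roughly as an executor would.
    variables = {**memory.load_memory_variables(), "input": user_input}
    # str.format raises KeyError if a template variable was never supplied.
    return TEMPLATE.format(**variables)


memory = ToyBufferMemory()
memory.save_context("What's the weather in Xi'an today?", "(weather summary for Xi'an)")
prompt = render_prompt(memory, "What about Shanghai?")
print(prompt)

# A memory whose key does not match the template variable leaves {chat_history} unfilled.
mismatched = ToyBufferMemory(memory_key="history")
try:
    render_prompt(mismatched, "What about Shanghai?")
except KeyError as missing:
    print("template variable not supplied:", missing)
```

In this sketch the model has no separate memory channel: the earlier turns simply travel inside the prompt text, which is what lets "What about Shanghai?" be read as a weather question.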

1.2 function_call style

```python
from langchain_classic.memory import ConversationBufferMemory
from langchain_classic.agents import AgentType, initialize_agent
from langchain_classic.tools import Tool
from langchain_community.tools import TavilySearchResults
from langchain_core.prompts import MessagesPlaceholder
from langchain_openai import ChatOpenAI
import os
import dotenv

# Load the configuration file (.env)
dotenv.load_dotenv()
os.environ["TAVILY_API_KEY"] = os.getenv("TAVILY_API_KEY")

# Create the Tavily search tool instance and wrap it as a Tool
search = TavilySearchResults(max_results=3)
search_tool = Tool(
    func=search.run,
    name="search",
    description="Searches the internet for information",
)

# Create the LLM
os.environ["OPENAI_API_KEY"] = os.getenv("OPENAI_API_KEY")
os.environ["OPENAI_BASE_URL"] = os.getenv("OPENAI_BASE_URL")
llm = ChatOpenAI(
    model="gpt-4o-mini",
    temperature=0,
)

# Create the memory: ConversationBufferMemory, returned as a list of messages
memory = ConversationBufferMemory(
    return_messages=True,
    memory_key="chat_history",
)

# The default OPENAI_FUNCTIONS prompt has no chat_history slot, so add one explicitly.
# Its variable_name must match memory_key, otherwise the history never reaches the model.
agent_kwargs = {
    "extra_prompt_messages": [MessagesPlaceholder(variable_name="chat_history")],
}

# Choose the agent type
agent = AgentType.OPENAI_FUNCTIONS

# Create the AgentExecutor
agent_execute = initialize_agent(
    llm=llm,
    agent=agent,
    tools=[search_tool],
    verbose=True,
    memory=memory,
    agent_kwargs=agent_kwargs,
)

# Call invoke() on the AgentExecutor to get the response
response = agent_execute.invoke({"input": "What day of the week is it today?"})

# Handle the response
print(response)
```

2. General-purpose implementation

2.1 function_call style

python

from langchain_classic.memory import ConversationBufferMemory

from langchain_core.prompts import ChatPromptTemplate

from langchain_classic.agents import AgentType, initialize_agent, create_tool_calling_agent, AgentExecutor

from langchain_classic.tools import Tool

from langchain_community.tools import TavilySearchResults

from langchain_openai import ChatOpenAI

import os

import dotenv

dotenv.load_dotenv()

# 读取配置文件

os.environ["TAVILY_API_KEY"] = os.getenv("TAVILY_API_KEY")

# 获取Tavily搜索工具实例

search = TavilySearchResults(max_results = 3)

# 2、获取对应工具实例

search_tool = Tool(

func = search.run,

name = "search",

description = "用于检索互联网上的信息",

)

# 3、获取大模型

os.environ['OPENAI_API_KEY'] = os.getenv("OPENAI_API_KEY")

os.environ['OPENAI_BASE_URL'] = os.getenv("OPENAI_BASE_URL")

llm = ChatOpenAI(

model="gpt-4o-mini",

temperature=0,

)

memory = ConversationBufferMemory(

return_messages=True,

memory_key="chat_history",

)

# 4、提示词模版(ChatPromptTemplate为例)

prompt_template = ChatPromptTemplate.from_messages([

("system","你是一个乐于助人的ai助手,根据用户的提问,必要时调用search工具,使用互联网检索数据"),

("system","{chat_history}"), # 添加一个chat_history的变量,用于记录上下文记忆

("human","{input}"),

("system","{agent_scratchpad}"), # agent_scratchpad必须声明 agent_scratchpad 放在 input 之前确实可能导致输出重复或混乱

])

# 5、获取Agent实例:create_tool_calling_agent()

agent = create_tool_calling_agent(

llm = llm,

prompt = prompt_template,

tools = [search_tool],

)

# 6、获取AgentExecutor实例

agent_executor = AgentExecutor(

agent = agent,

tools = [search_tool],

verbose = True,

memory = memory,

)

# 7、通过AgentExecutor实例调用invoke方法,得到响应

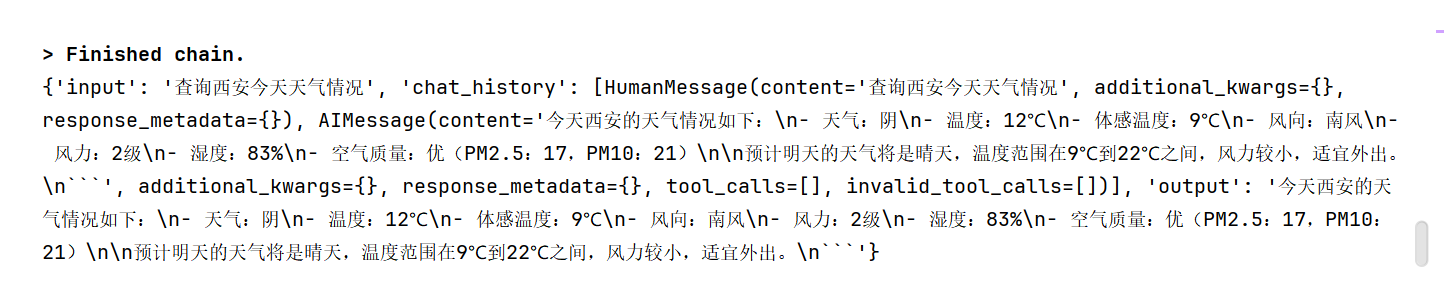

response = agent_executor.invoke({"input":"查询今天的西安天气情况"})

# 8、处理响应

print(response)

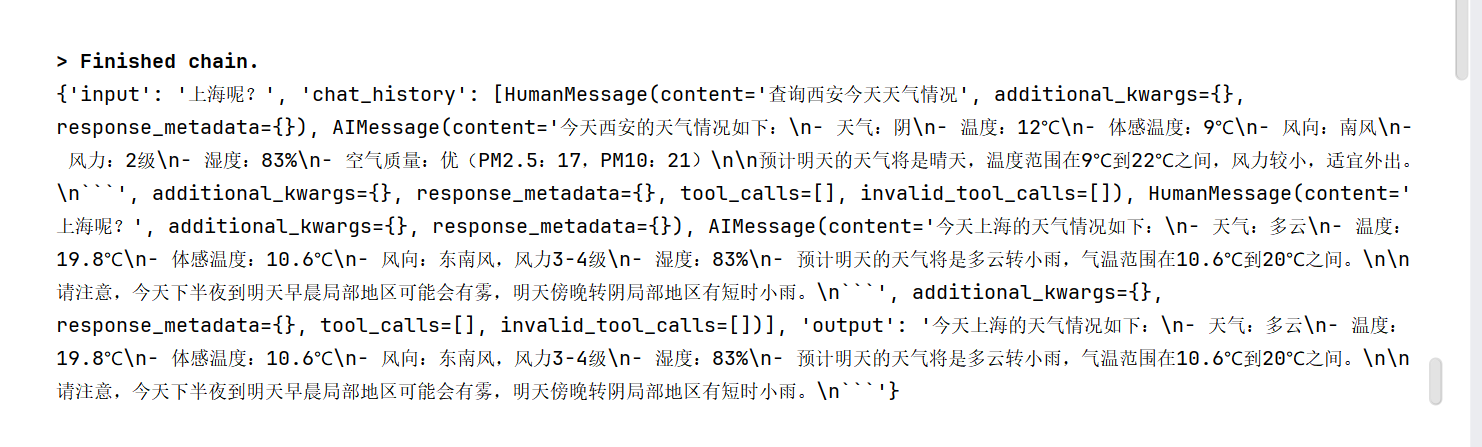
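Why message lists such as the chat history and the agent scratchpad should be spliced into a chat prompt as messages, rather than typed into a `("system", "{...}")` string slot, can be seen with a toy formatter. This is a sketch under simplifying assumptions: messages are plain `(role, content)` tuples, and `with_string_slot` / `with_placeholder` are hypothetical helpers, not LangChain APIs. A string slot collapses the list into one system message containing its Python repr, while a placeholder inserts each prior message with its own role.

```python
Message = tuple[str, str]  # (role, content); requires Python 3.9+


def with_string_slot(history: list[Message], user_input: str) -> list[Message]:
    # Like ("system", "{chat_history}"): the whole list is stringified into one message.
    return [
        ("system", "You are a helpful assistant."),
        ("system", str(history)),
        ("human", user_input),
    ]


def with_placeholder(history: list[Message], user_input: str) -> list[Message]:
    # Like a message placeholder: every stored message keeps its own role.
    return [("system", "You are a helpful assistant."), *history, ("human", user_input)]


history = [
    ("human", "What's the weather in Xi'an today?"),
    ("ai", "(weather summary for Xi'an)"),
]

flat = with_string_slot(history, "What about Shanghai?")
spliced = with_placeholder(history, "What about Shanghai?")
print([role for role, _ in flat])
print([role for role, _ in spliced])
```

The distinction matters most for the scratchpad: a tool-calling model needs its earlier tool calls and tool results as real messages, not as a repr string inside a system prompt.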

Then continue by asking about Shanghai:

```python
response = agent_executor.invoke({"input": "What about Shanghai?"})
print(response)
```

2.2 ReAct style

Use a ready-made prompt template directly:

python

from langchain_classic import hub

from langchain_core.prompts import ChatPromptTemplate, PromptTemplate

from langchain_classic.agents import AgentType, initialize_agent, create_tool_calling_agent, AgentExecutor, create_react_agent

from langchain_core.tools import StructuredTool

from langchain_classic.tools import Tool

from langchain_community.tools import TavilySearchResults

from langchain_openai import ChatOpenAI

import os

import dotenv

dotenv.load_dotenv()

# 读取配置文件

os.environ["TAVILY_API_KEY"] = os.getenv("TAVILY_API_KEY")

# 获取Tavily搜索工具实例

search = TavilySearchResults(max_results = 3)

# 2、获取对应工具实例

search_tool = Tool(

func = search.run,

name = "search",

description = "用于检索互联网上的信息",

)

# 3、获取大模型

os.environ['OPENAI_API_KEY'] = os.getenv("OPENAI_API_KEY")

os.environ['OPENAI_BASE_URL'] = os.getenv("OPENAI_BASE_URL")

llm = ChatOpenAI(

model="gpt-4o-mini",

temperature=0,

)

# 4、使用LangChain Hub中的官方ReAct提示模板

prompt_template = hub.pull("hwchase17/react-chat")

memory = ConversationBufferMemory(

return_messages= True,

memory_key="chat_history",

)

# 5、获取Agent实例:create_react_agent()

agent = create_react_agent(

llm = llm,

prompt = prompt_template,

tools = [search_tool],

)

# 6、获取AgentExecutor实例

agent_executor = AgentExecutor(

agent = agent,

tools = [search_tool],

verbose = True,

memory = memory,

)

# 7、通过AgentExecutor实例调用invoke方法,得到响应

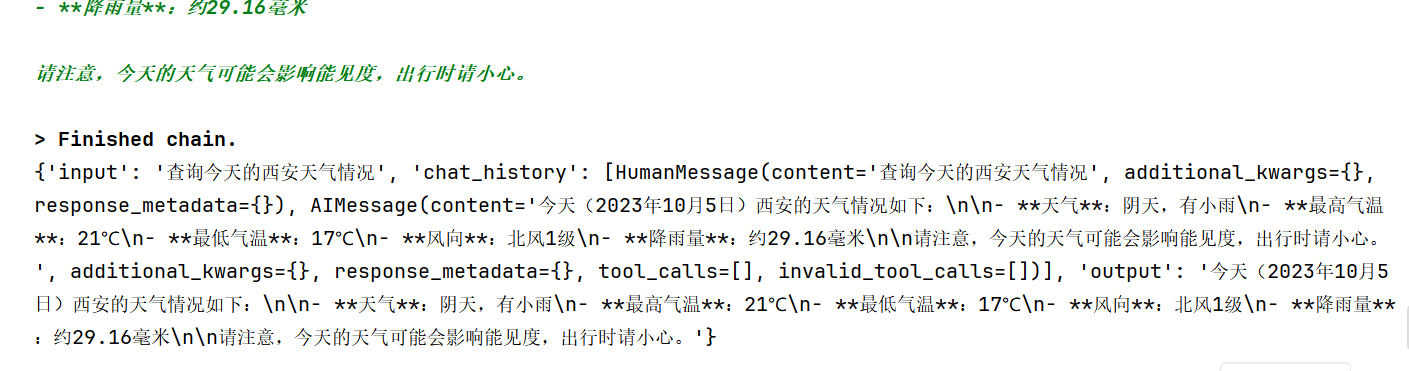

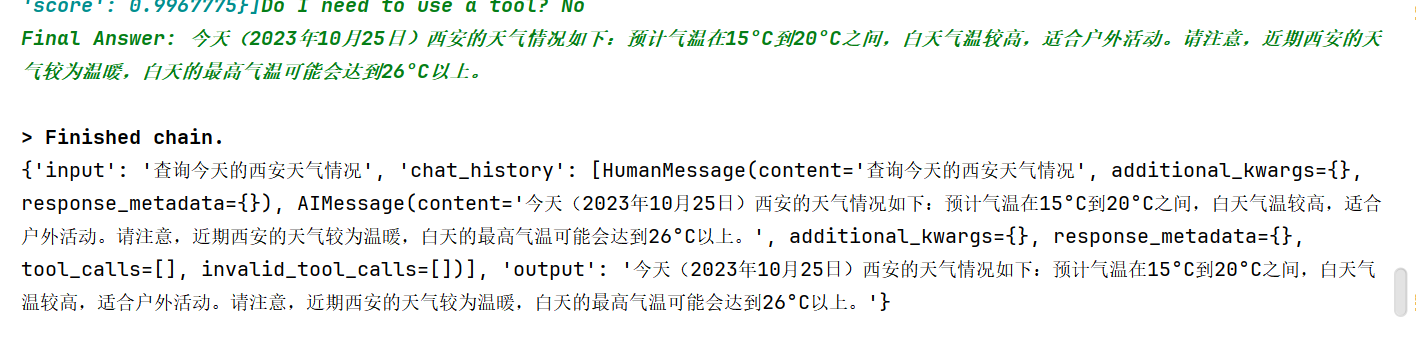

response = agent_executor.invoke({"input":"查询今天的西安天气情况"})

# 8、处理响应

print(response)
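Unlike the function_call style, where the model returns structured tool calls, ReAct-style prompts such as this one ask the model to write plain text (Thought / Action / Action Input, ending with a Final Answer), and the agent parses that text to decide whether to run a tool or stop. The sketch below illustrates that parsing step only; `parse_react_step` is a hypothetical helper built on simple regular expressions, not LangChain's actual output parser, which handles more formats and error cases.

```python
import re
from typing import Union

# "Action: <tool>" followed on the next line by "Action Input: <input>"
ACTION_RE = re.compile(r"Action:\s*(.+?)\s*\nAction Input:\s*(.+)")
# "Final Answer: <text>", possibly spanning several lines
FINAL_RE = re.compile(r"Final Answer:\s*(.+)", re.DOTALL)


def parse_react_step(text: str) -> Union[tuple[str, str], str]:
    """Return (tool_name, tool_input) for a tool step, or the final answer string."""
    # In this sketch a final answer takes precedence over any action text.
    final = FINAL_RE.search(text)
    if final:
        return final.group(1).strip()
    action = ACTION_RE.search(text)
    if action:
        return action.group(1).strip(), action.group(2).strip()
    raise ValueError(f"Could not parse model output: {text!r}")


tool_step = parse_react_step(
    "Thought: Do I need to use a tool? Yes\nAction: search\nAction Input: Xi'an weather today"
)
print(tool_step)
print(parse_react_step("Thought: Do I need to use a tool? No\nFinal Answer: (weather summary)"))
```

Because everything hinges on the model following this text format, ReAct agents are more prone to parsing failures than the function_call style, which is one reason the two approaches are shown side by side.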

Ask about a specific date:

python

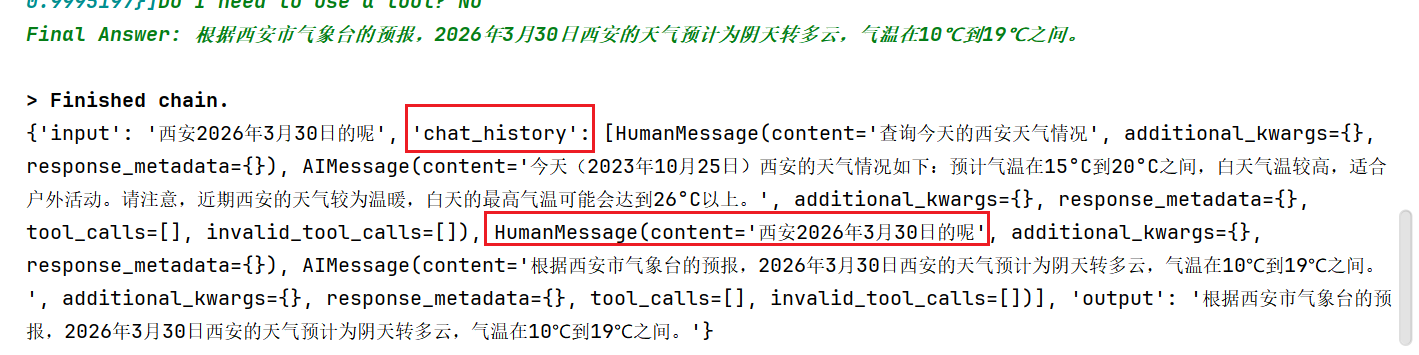

response = agent_executor.invoke({"input":"西安2026年3月30日的呢"})

print(response)