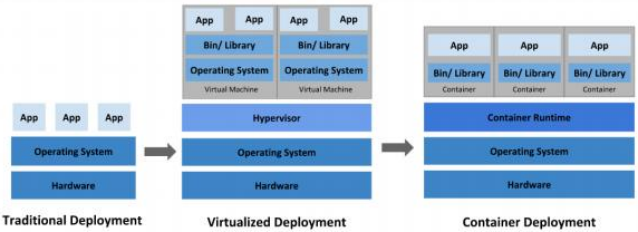

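Evolution of application deployment

Application deployment has gone through three main stages:

Traditional deployment: in the early days of the Internet, applications were deployed directly on physical machines.

Advantages: simple; no other technology is required.

Disadvantages: resource boundaries cannot be defined for an application, computing resources are hard to allocate sensibly, and applications easily interfere with one another.

Virtualized deployment: multiple virtual machines run on one physical machine, and each virtual machine is an isolated environment.

Advantages: application environments do not affect each other, which provides a degree of security.

Disadvantages: every virtual machine adds its own operating system, which wastes some resources.

Containerized deployment: similar to virtualization, but the containers share the host operating system.

Containerized deployment brings many conveniences, but it also raises new problems, for example:

When a container fails and stops, how can another container be started immediately to replace it?

When concurrent traffic grows, how can the number of containers be scaled out horizontally?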

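Introduction to Kubernetes

While Docker was growing rapidly as a high-level container engine, Google had already been using container technology internally for many years.

Its Borg system runs and manages tens of thousands of containerized applications.

The Kubernetes project grew out of Borg: it distills the essence of Borg's design and absorbs the experience and lessons learned from that system.

Kubernetes abstracts computing resources at a higher level and, by composing containers in fine-grained ways, delivers the final application service to users.

In essence, Kubernetes is a cluster of servers. It runs specific programs on every node of the cluster to manage the containers on that node. Its goal is to automate resource management, and it mainly provides the following features: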

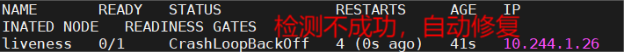

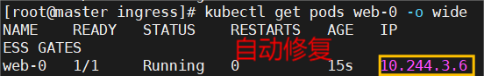

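Self-healing: when a container crashes, a replacement container is started quickly (about one second in the best case)

Elastic scaling: the number of running containers in the cluster can be adjusted automatically as needed

Service discovery: a service can automatically find the services it depends on

Load balancing: if a service runs multiple containers, requests are load-balanced across them automatically

Version rollback: if a newly released version has problems, it can be rolled back to the previous version immediately

Storage orchestration: storage volumes can be created automatically according to the needs of the containers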

k8s cluster deployment

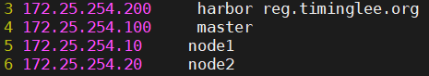

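k8s environment overview

| Host IP | Hostname | Role |
|----------------|---------|------------------|
| 172.25.254.100 | master | master, k8s cluster control node |
| 172.25.254.10 | node1 | k8s cluster worker node 1 |
| 172.25.254.20 | node2 | k8s cluster worker node 2 |
| 172.25.254.200 | harbor | Harbor registry |

Disable SELinux and the firewall on all nodes

Synchronize time and set up name resolution on all nodes

Install docker-ce on all nodes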

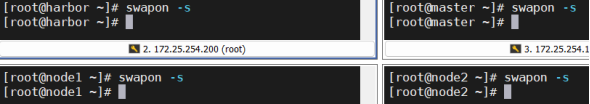

Disable swap on all nodes, and remember to comment out its entry in /etc/fstab

Building the Harbor image registry

all hosts ~]# cat > /etc/yum.repos.d/docker.repo <<EOF
[docker]
name = docker
baseurl = https://mirrors.aliyun.com/docker-ce/linux/rhel/9.6/x86_64/stable/
gpgcheck = 0
EOF

# dnf install docker-ce-3:28.5.2-1.el9 -y

# echo br_netfilter > /etc/modules-load.d/docker_mod.conf

# modprobe -a br_netfilter

# vim /etc/sysctl.d/docker.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

# sysctl --system

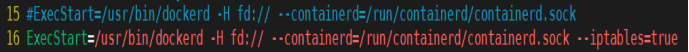

# vim /lib/systemd/system/docker.service

# systemctl daemon-reload

# systemctl enable --now docker

# mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

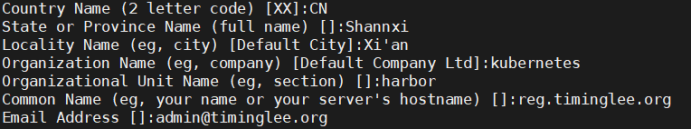

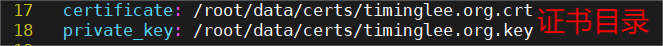

Generate the certificate and key

harbor ~]# mkdir /data/certs -p

# openssl req -newkey rsa:4096 \

-nodes -sha256 -keyout /data/certs/timinglee.org.key \

-addext "subjectAltName = DNS:reg.timinglee.org" \

-x509 -days 365 -out /data/certs/timinglee.org.crt

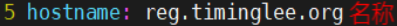

Edit the Harbor configuration file

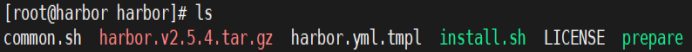

# tar zxf harbor-offline-installer-v2.5.4.tgz -C /opt/

# cd /opt/harbor/

# ls

# cp harbor.yml.tmpl harbor.yml

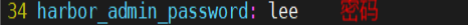

# vim harbor.yml

# ./install.sh --with-chartmuseum

Start and verify

# mkdir /etc/docker/certs.d/reg.timinglee.org/ -p

# cp /data/certs/timinglee.org.crt /etc/docker/certs.d/reg.timinglee.org/ca.crt

# vim /etc/hosts

# systemctl restart docker

# docker compose up -d

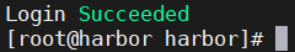

# docker login reg.timinglee.org -u admin

Preparing the hosts for the Kubernetes deployment

all hosts ~]# systemctl disable --now swap.target

# systemctl mask swap.target

# sed '/swap/s/^/#/g' -i /etc/fstab
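Before editing the real /etc/fstab, it can help to see what the sed expression does. The following is a minimal sketch that applies the same substitution to a throwaway file; the two fstab entries are hypothetical sample data, and GNU sed is assumed.

```shell
# Throwaway file with hypothetical fstab contents, used only for illustration.
tmp_fstab=$(mktemp)
cat > "$tmp_fstab" <<'EOF'
/dev/mapper/rhel-root /     xfs   defaults 0 0
/dev/mapper/rhel-swap none  swap  defaults 0 0
EOF

# Same expression as above: prefix every line that mentions "swap" with '#'.
sed -i '/swap/s/^/#/g' "$tmp_fstab"

# Count the lines that are now commented out, then show the result.
commented=$(grep -c '^#' "$tmp_fstab")
cat "$tmp_fstab"
```

Running the expression a second time would add another `#` to the same line, which is harmless in fstab but worth knowing when the step is scripted.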

Configure the hosts to use the Harbor registry

On the harbor host, distribute the certificate to all hosts:

harbor ~]# for i in 100 10 20 ; do scp /data/certs/timinglee.org.crt root@172.25.254.$i:/etc/docker/certs.d/reg.timinglee.org/ca.crt ; done

# for i in 10 20 100; do ssh -l root 172.25.254.$i systemctl enable --now docker ;done

# for i in 10 20 100; do ssh -l root 172.25.254.$i systemctl restart docker ;done

Set up name resolution on all hosts:

# for i in 100 10 20 ; do scp /etc/hosts root@172.25.254.$i:/etc/hosts ; done

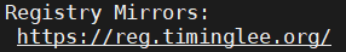

Configure the Docker registry mirror

all hosts ~]# cat >/etc/docker/daemon.json <<EOF
{
  "registry-mirrors": ["https://reg.timinglee.org"]
}
EOF

# systemctl restart docker

# docker info

Configure the Kubernetes package repository

# cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name = kubernetes
baseurl = https://mirrors.aliyun.com/kubernetes-new/core/stable/v1.35/rpm/
gpgcheck = 0
EOF

# dnf list kubelet   # verify that the repository works

# reboot

# swapon -s   # expect no output

Deploying Kubernetes

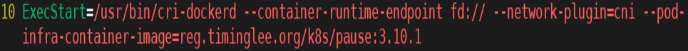

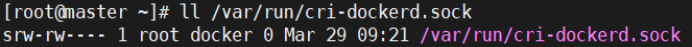

Install cri-dockerd

all hosts ~]# rpm -ivh cri-dockerd-0.3.14-3.el8.x86_64.rpm libcgroup-0.41-19.el8.x86_64.rpm

# vim /lib/systemd/system/cri-docker.service

# systemctl enable --now cri-docker.service

Install the packages needed to build the Kubernetes cluster

master ~]# dnf install kubectl -y   # master node only

master and node1-2 ~]# dnf install kubelet kubeadm -y

# systemctl enable --now kubelet.service

Enable kubectl and kubeadm command completion on the master node

master ~]# echo "source <(kubectl completion bash)" >> ~/.bashrc

# echo "source <(kubeadm completion bash)" >> ~/.bashrc

# source ~/.bashrc

Download the images required by the Kubernetes cluster

master ~]# kubeadm config images pull \

--image-repository registry.aliyuncs.com/google_containers \

--kubernetes-version v1.35.3 \

--cri-socket=unix:///var/run/cri-dockerd.sock

Upload the images to the local Harbor registry

# docker login reg.timinglee.org -u admin

# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/google/{system("docker tag "$0" reg.timinglee.org/k8s/"$3)}'

# docker images --format "{{.Repository}}:{{.Tag}}" | awk -F "/" '/timinglee/{system("docker push "$0)}'

Initialize the Kubernetes cluster on the master
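The retagging pipeline relies on awk splitting each image reference on `/`, so that the third field is the bare `name:tag`. A minimal dry-run sketch of that mapping follows; it prints the `docker tag` commands instead of running them. The function name `retag_preview` and the sample image list are hypothetical, chosen only for illustration.

```shell
# Print the docker tag commands that would be executed (dry run, nothing is tagged).
retag_preview() {
  awk -F "/" '/google/{print "docker tag " $0 " reg.timinglee.org/k8s/" $3}'
}

# Hypothetical sample of what "docker images --format ..." might list.
printf '%s\n' \
  'registry.aliyuncs.com/google_containers/kube-apiserver:v1.35.3' \
  'registry.aliyuncs.com/google_containers/kube-proxy:v1.35.3' \
  | retag_preview
```

Replacing `print` with `system(...)` turns the preview back into the real command, so the output can be checked before anything is changed.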

# kubeadm init --pod-network-cidr=10.244.0.0/16 --image-repository reg.timinglee.org/k8s \

--kubernetes-version v1.35.3 --cri-socket=unix:///var/run/cri-dockerd.sock

# kubeadm token create --print-join-command   # prints the join command again if you have lost it

Note: if initialization goes wrong, the cluster settings can be reset with:

# kubeadm reset --cri-socket=unix:///var/run/cri-dockerd.sock

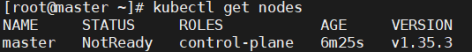

Add the Kubernetes environment variable on this host

# echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

# source ~/.bash_profile

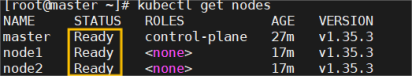

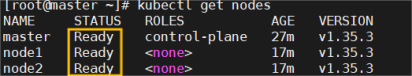

# kubectl get nodes

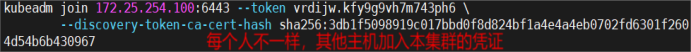

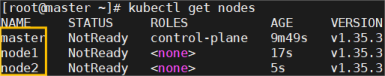

Join the worker nodes to the cluster

node1-2 ~]# kubeadm join 172.25.254.100:6443 --token 2t4r7h.lyjbe040nxhr0aob --discovery-token-ca-cert-hash sha256:3db1f5098919c017bbd0f8d824bf1a4e4a4eb0702fd6301f2604d54b6b430967 --cri-socket=unix:///var/run/cri-dockerd.sock

Install the network plugin

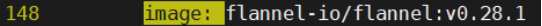

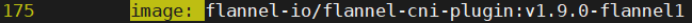

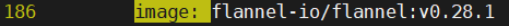

# docker load -i flannel-0.28.1.tar

# docker tag ghcr.io/flannel-io/flannel-cni-plugin:v1.9.0-flannel1 reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

# docker tag ghcr.io/flannel-io/flannel:v0.28.1 reg.timinglee.org/flannel-io/flannel:v0.28.1

# docker push reg.timinglee.org/flannel-io/flannel-cni-plugin:v1.9.0-flannel1

# docker push reg.timinglee.org/flannel-io/flannel:v0.28.1

# vim kube-flannel.yml

# kubectl apply -f kube-flannel.yml

# kubectl get nodes

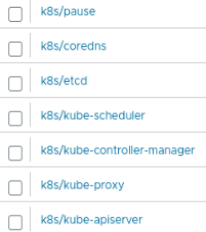

Check the registry

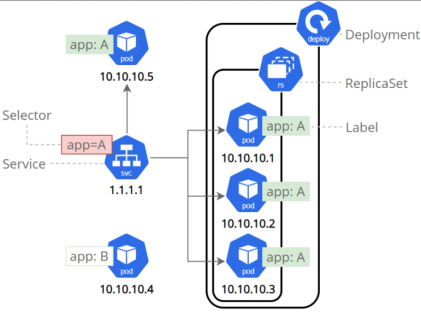

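Resources in Kubernetes

Introduction to resource management

In Kubernetes everything is abstracted as a resource, and users manage Kubernetes by operating on resources.

Kubernetes is essentially a cluster system in which users can deploy all kinds of services.

Deploying a service simply means running containers in the Kubernetes cluster and running the specified program inside them.

The smallest unit Kubernetes manages is the Pod, not the container, so containers must be placed in a `Pod`.

Kubernetes usually does not manage Pods directly either; it manages them through a `Pod controller`.

Access to the services in Pods is provided by the Kubernetes `Service` resource.

Persistence for the data of programs in Pods is provided by the storage systems Kubernetes supports.

How to use resources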

harbor ~]# cd /opt/harbor/

# docker compose up -d

harbor and master ~]# docker login reg.timinglee.org -u admin

master ~]# docker load -i nginx-1.23.tar.gz

# docker tag reg.timinglee.org/library/nginx:1.23 reg.timinglee.org/library/nginx:latest

# docker push reg.timinglee.org/library/nginx:latest   # push the image

# kubectl run webpod --image nginx:latest --port 80

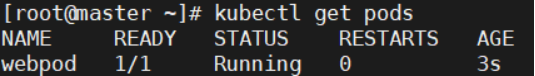

# kubectl get pods

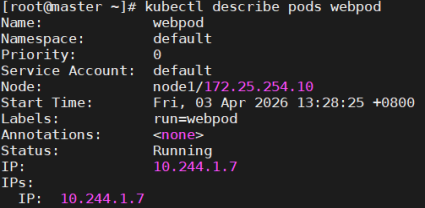

# kubectl describe pods webpod

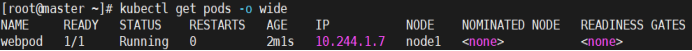

# kubectl get pods -o wide

# kubectl delete pods webpod   # delete the Pod

Using a YAML file

# kubectl create deployment test --image nginx --replicas 1 --dry-run=client -o yaml > test.yml

# vim test.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: test
  name: test
spec:
  replicas: 1
  selector:
    matchLabels:
      app: test
  template:
    metadata:
      labels:
        app: test
    spec:
      containers:
      - image: nginx
        name: nginx

# kubectl create -f test.yml

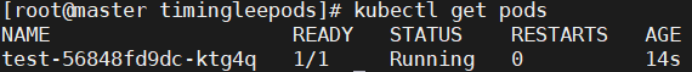

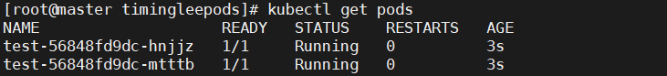

# kubectl get pods

# kubectl apply -f test.yml

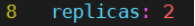

# vim test.yml

# kubectl apply -f test.yml

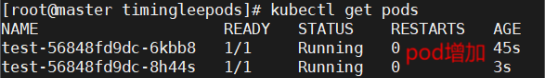

# kubectl get pods

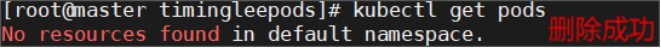

# kubectl delete -f test.yml

# kubectl get pods

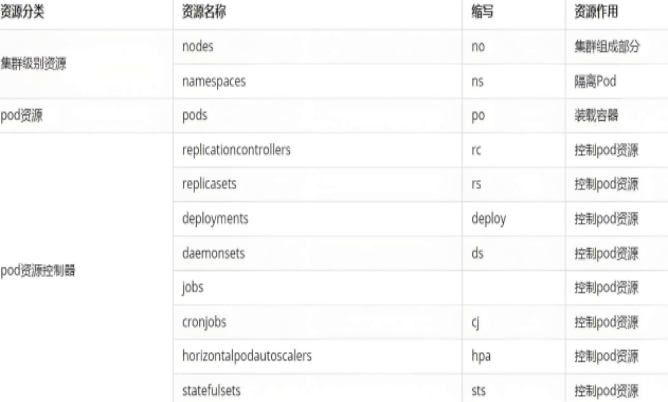

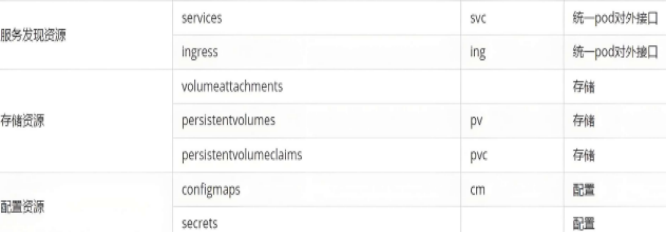

Resource types

node

# kubectl get nodes

namespace

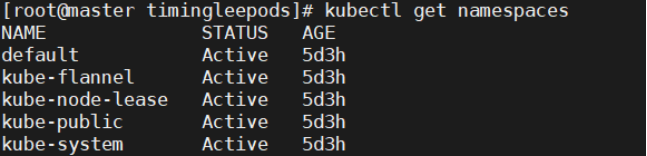

# kubectl get namespaces 列出集群中所有的命名空间

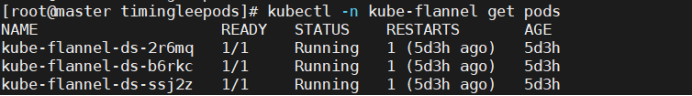

# kubectl -n kube-flannel get pods 查看 kube-flannel 命名空间中的 Pod

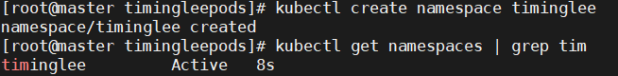

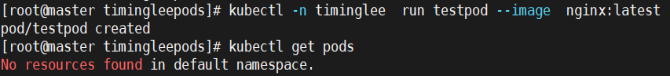

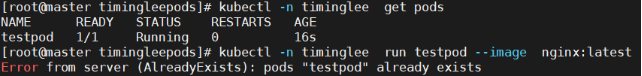

# kubectl create namespace timinglee   # create a new namespace named timinglee

# kubectl -n timinglee run testpod --image nginx:latest   # create a Pod in that namespace

# kubectl -n timinglee get pods

# kubectl run testpod --image nginx:latest

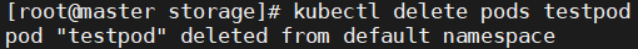

# kubectl delete pod testpod

# kubectl delete -n timinglee pod testpod

# kubectl delete namespaces timinglee

# kubectl get namespaces | grep tim

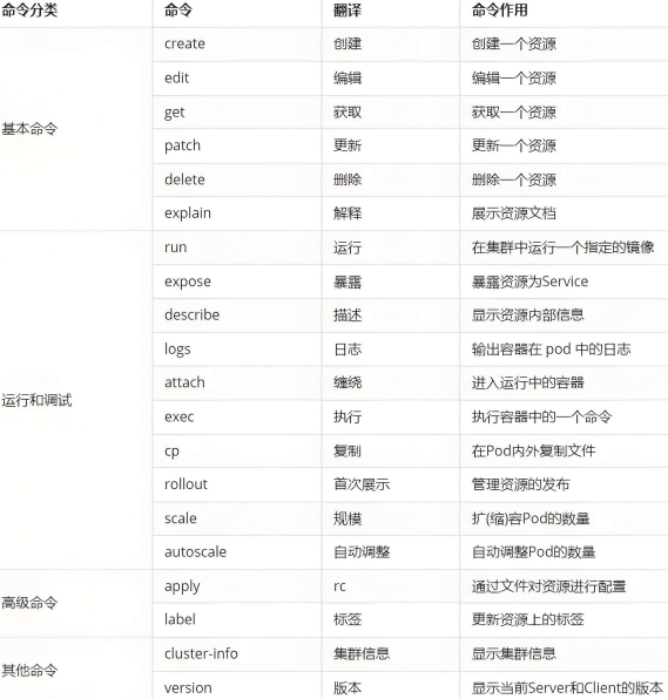

kubectl commands

Everything in Kubernetes is abstracted as a resource.

# kubectl api-resources   # list the available resource types

Common resource types

Common kubectl operations

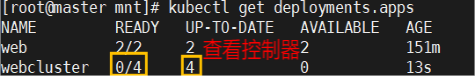

Changing the number of Pods through a controller with kubectl

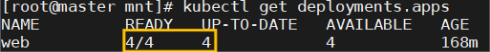

# kubectl create deployment webcluster --image myapp:v1 --replicas 4   # create a controller with 4 Pods

# kubectl get deployments.apps   # check the result:

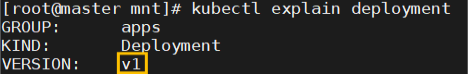

# kubectl explain deployment   # show help for the resource

# kubectl explain deployment.spec   # show help for the controller's parameters

# kubectl edit deployments.apps webcluster   # edit the controller interactively

# kubectl get deployments.apps   # check the change:

# kubectl patch deployments.apps webcluster -p '{"spec":{"replicas":4}}'

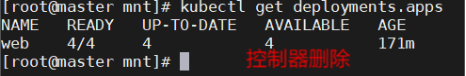

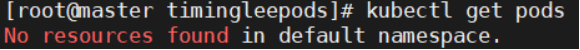

# kubectl delete deployments.apps webcluster   # delete the controller

# kubectl get deployments.apps   # check the change:

# kubectl patch deployments.apps test -p '{"spec":{"replicas":1}}'   # non-interactive change

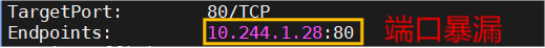

Exposing ports

# docker load -i /root/busyboxplus.tar.gz

# docker tag busyboxplus:latest reg.timinglee.org/library/busyboxplus:latest

# docker push reg.timinglee.org/library/busyboxplus:latest

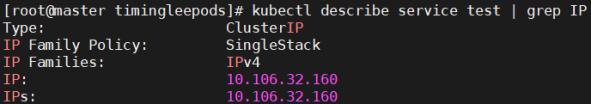

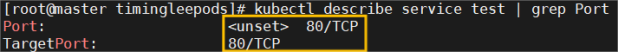

# kubectl expose deployment test --port 80 --target-port 80

# kubectl describe service test | grep IP

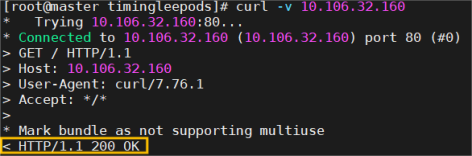

# curl -v 10.106.32.160

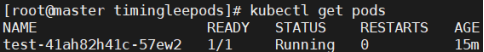

# kubectl get pods

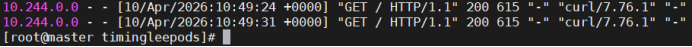

# kubectl logs pods/test-56848fd9dc-chsl7

attach

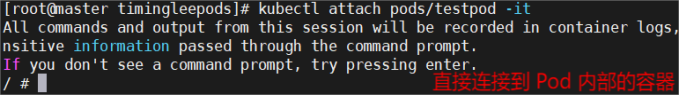

# kubectl run testpod -it --image busybox   # once you are inside, detach with Ctrl+P then Ctrl+Q

# kubectl attach pods/testpod -it   # attach to the running container again

# kubectl cp test.yml testpod:/ -c testpod

# kubectl exec -it pods/testpod -c testpod -- /bin/sh

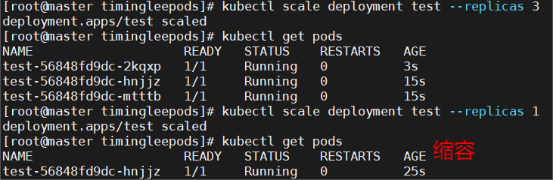

Scaling out

# kubectl get pods   # list the current Pods

# kubectl scale deployment test --replicas 3   # scale the Deployment to 3 replicas

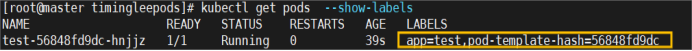

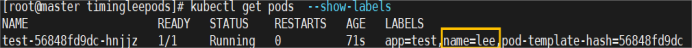

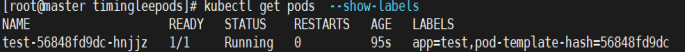

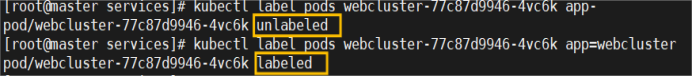

# kubectl get pods --show-labels   # show the labels of the Pods

# kubectl label pods test-56848fd9dc-hnjjz name=lee   # add the custom label name=lee to the Pod

# kubectl label pods test-56848fd9dc-hnjjz name-   # remove the label from the Pod

Working with Pods

Self-managed Pods

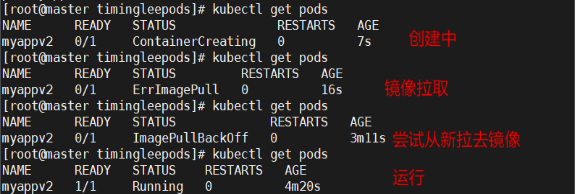

# kubectl run myappv2 --image myapp:v2 --port 80

# kubectl delete pods myappv2

# kubectl get pods

Managing Pods with a controller

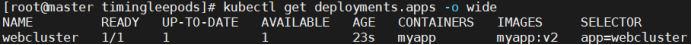

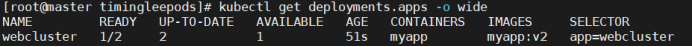

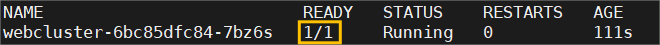

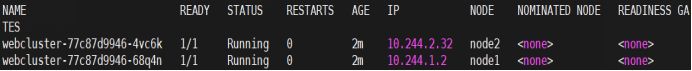

# kubectl create deployment webcluster --image myapp:v2 --replicas 1

# kubectl get deployments.apps -o wide

# kubectl scale deployment webcluster --replicas 2

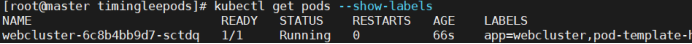

# kubectl label pods webcluster-6c8b4bb9d7-sctdq app=webcluster

# kubectl get pods --show-labels

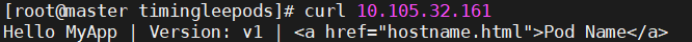

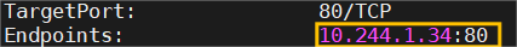

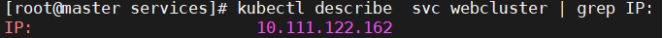

Set up a VIP for accessing the Pods

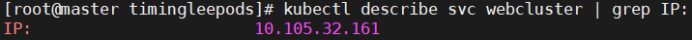

# kubectl expose deployment webcluster --port 80 --target-port 80

# kubectl describe svc webcluster | tail -n 10

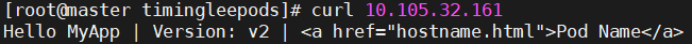

# curl 10.105.32.161

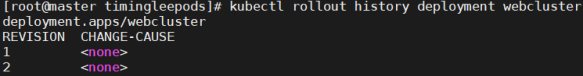

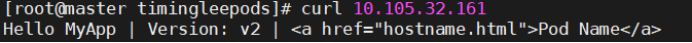

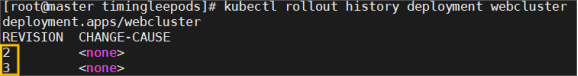

Updating the version

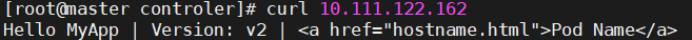

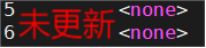

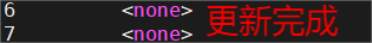

# kubectl set image deployments webcluster myapp=myapp:v1

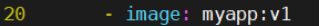

# kubectl rollout history deployment webcluster

# kubectl rollout undo deployment webcluster --to-revision 1

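Deploying applications with YAML files

Resource manifest fields

| Field | Type | Description |
|---|---|---|
| apiVersion | String | Kubernetes API version of the resource, for example v1 for a Pod; list the available versions with kubectl api-versions |
| kind | String | Resource type defined by the YAML file, for example Pod |
| metadata | Object | Metadata object; the key is always metadata |
| metadata.name | String | Name of the object, chosen by the author, for example the name of the Pod |
| metadata.namespace | String | Namespace of the object, chosen by the author |
| spec | Object | Detailed definition of the object; the key is always spec |
| spec.containers\[\] | List | List of containers in the spec |
| spec.containers\[\].name | String | Name of the container |
| spec.containers\[\].image | String | Name of the image to use |
| spec.containers\[\].imagePullPolicy | String | Image pull policy, one of: (1) Always: try to pull the image every time (2) IfNotPresent: use the local image if one exists (3) Never: only ever use the local image |
| spec.containers\[\].command\[\] | List | Command to run when the container starts; if omitted, the command built into the image is used |
| spec.containers\[\].args\[\] | List | Arguments passed to the container command; several can be given |
| spec.containers\[\].workingDir | String | Working directory of the container |
| spec.containers\[\].volumeMounts\[\] | List | Volume mounts inside the container |
| spec.containers\[\].volumeMounts\[\].name | String | Name of the volume to mount |
| spec.containers\[\].volumeMounts\[\].mountPath | String | Path inside the container where the volume is mounted |
| spec.containers\[\].volumeMounts\[\].readOnly | Boolean | Whether the mount is read-only, true or false; the default is read-write |
| spec.containers\[\].ports\[\] | List | List of ports the container uses |
| spec.containers\[\].ports\[\].name | String | Name of the port |
| spec.containers\[\].ports\[\].containerPort | Integer | Port the container listens on |
| spec.containers\[\].ports\[\].hostPort | Integer | Port to open on the host running the container; optional. Two replicas that use the same hostPort cannot run on the same host, because the host port would conflict |
| spec.containers\[\].ports\[\].protocol | String | Port protocol, TCP, UDP or SCTP; the default is TCP |
| spec.containers\[\].env\[\] | List | Environment variables to set before the container runs |
| spec.containers\[\].env\[\].name | String | Name of the environment variable |
| spec.containers\[\].env\[\].value | String | Value of the environment variable |
| spec.containers\[\].resources | Object | Resource limits and resource requests of the container |
| spec.containers\[\].resources.limits | Object | Upper bound on the resources the container may use at runtime |
| spec.containers\[\].resources.limits.cpu | String | CPU limit in cores, 1 = 1000m |
| spec.containers\[\].resources.limits.memory | String | Memory limit, in units such as MiB or GiB |
| spec.containers\[\].resources.requests | Object | Resources requested for the container, used when the Pod is scheduled and started |
| spec.containers\[\].resources.requests.cpu | String | CPU request in cores; the amount available to the container when it starts |
| spec.containers\[\].resources.requests.memory | String | Memory request, in units such as MiB or GiB; the amount available to the container when it starts |
| spec.restartPolicy | String | Restart policy of the Pod, Always by default. (1) Always: once a container terminates, the kubelet restarts it no matter how it terminated (2) OnFailure: the kubelet restarts the container only if it exits with a non-zero code; a container that ends normally (exit code 0) is not restarted (3) Never: after termination the kubelet reports the exit code to the master and does not restart the container |
| spec.nodeSelector | Object | Node label filter, given as key: value pairs |
| spec.imagePullSecrets | List | Secrets used when pulling images, given as a list of name: secret-name entries |
| spec.hostNetwork | Boolean | Whether to use the host network, false by default. true means the Pod uses the host's network instead of the container bridge, and a second replica that binds the same ports cannot start on the same host |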
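The CPU fields in the table accept either whole or fractional cores or millicores, with 1 core = 1000m. As a small illustration of that relationship, the helper below converts a value to millicores; `to_millicores` is a hypothetical name introduced here and is not part of kubectl.

```shell
# Convert a CPU quantity ("1", "0.5", "250m") to millicores.
to_millicores() {
  case "$1" in
    *m) echo "${1%m}" ;;                                        # already in millicores
    *)  awk -v c="$1" 'BEGIN { printf "%.0f\n", c * 1000 }' ;;  # cores -> millicores
  esac
}

to_millicores 1
to_millicores 0.5
to_millicores 250m
```

So `cpu: 500m` and `cpu: 0.5` describe the same amount of CPU.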

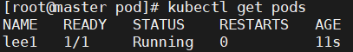

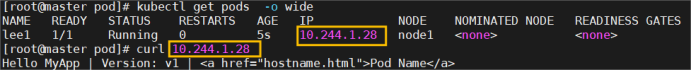

Running a single container

master ~]# mkdir -p pod

# cd pod/

# kubectl run lee1 --image myapp:v1 --dry-run=client -o yaml > 1test.yml

# vim 1test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    name: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1

# kubectl apply -f 1test.yml

# kubectl get pods

# kubectl describe pods   # show detailed information

# kubectl get pods -o wide

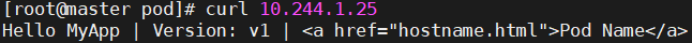

# curl 10.244.1.25

# kubectl delete -f 1test.yml

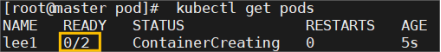

Running multiple containers

# cp 1test.yml 2test.yml

# vim 2test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

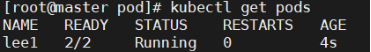

# kubectl apply -f 2test.yml

# kubectl get pods

# kubectl delete -f 2test.yml --force

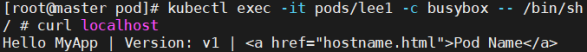

Shared networking between the containers of a Pod

# vim 3test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
  - image: busyboxplus:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 20000

# kubectl apply -f 3test.yml

# kubectl get pods

# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

$ curl localhost

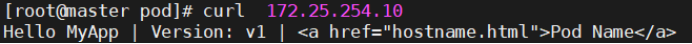

Port mapping

# vim 4test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  containers:
  - image: myapp:v1
    name: myappv1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

# kubectl apply -f 4test.yml

# kubectl get pods -o wide

# kubectl describe pods | grep Port

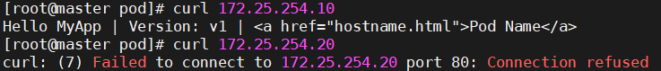

# curl 172.25.254.10

# kubectl delete -f 4test.yml

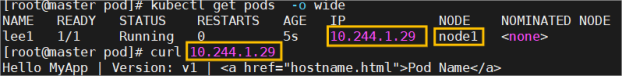

Choosing the node to run on

# vim 5test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  nodeSelector:
    kubernetes.io/hostname: node1    # run on node1
  containers:
  - image: myapp:v1
    name: myappv1
    ports:
    - name: webport
      containerPort: 80
      hostPort: 80
      protocol: TCP

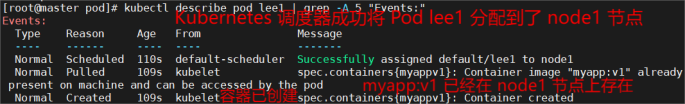

# kubectl apply -f 5test.yml

# kubectl get pods -o wide

# kubectl describe pod lee1 | grep -A 5 "Events:"

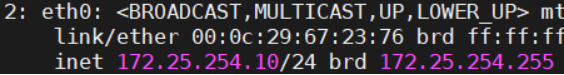

Sharing the host network

# vim 6test.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  hostNetwork: true
  nodeSelector:
    kubernetes.io/hostname: node1
  containers:
  - image: busybox:latest
    name: busybox
    command:
    - /bin/sh
    - -c
    - sleep 1000

# kubectl apply -f 6test.yml

# kubectl exec -it pods/lee1 -c busybox -- /bin/sh

/ # ip a

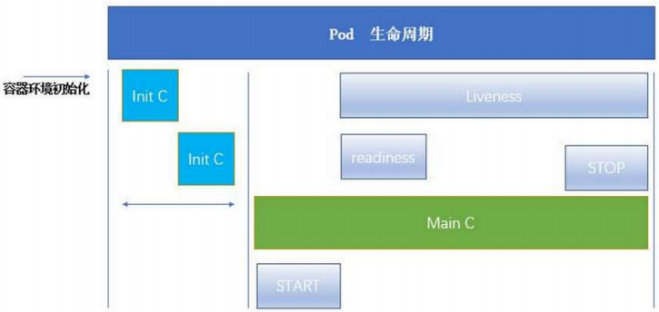

Pod lifecycle

Init containers

# vim init.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: lee1
  name: lee1
spec:
  initContainers:
  - name: init-myservice
    image: busybox
    command: ["sh","-c","until test -e /testfile;do echo waiting for myservice; sleep 2;done"]
  containers:
  - image: myapp:v1
    name: myappv1

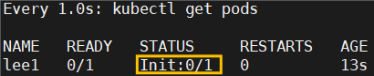

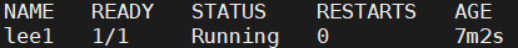

# kubectl apply -f init.yml

# watch -n 1 kubectl get pods   # monitoring command

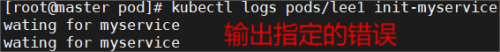

# kubectl logs pods/lee1 init-myservice

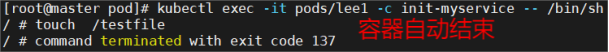

# kubectl exec -it pods/lee1 -c init-myservice -- /bin/sh

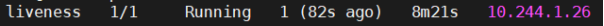

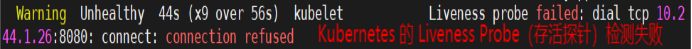

livenessProbe (liveness probe) example:


# kubectl create deployment webcluster --image myapp:v1 --replicas 1 --dry-run=client -o yaml > liveness.yml

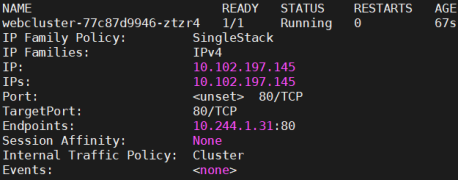

# kubectl expose deployment webcluster --port 80 --target-port 80 --dry-run=client -o yaml >> liveness.yml

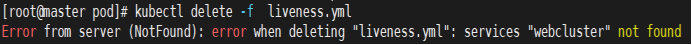

# kubectl delete -f liveness.yml

# kubectl apply -f liveness.yml

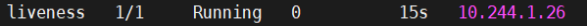

# watch -n 1 "kubectl get pods ;kubectl describe svc webcluster | tail -n 10"

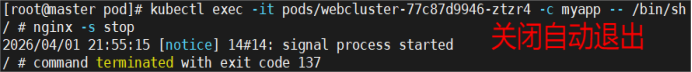

# kubectl exec -it pods/webcluster-77c87d9946-ztzr4 -c myapp -- /bin/sh   # test the liveness probe

/ # nginx -s stop

Liveness probe in a YAML file

# vim web.yml

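apiVersion: v1
kind: Pod
metadata:
  labels:
    name: liveness
  name: liveness
spec:
  containers:
  - name: myapp    # note: the leading dash is required (list item)
    image: myapp:v1
    livenessProbe:
      tcpSocket:    # check that the port is open
        port: 8080    # probe port 8080
      initialDelaySeconds: 3    # seconds to wait after the container starts before probing begins; default 0
      periodSeconds: 1    # interval between probes; default 10s
      timeoutSeconds: 1    # how long to wait for a response after a probe is sent; default 1s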

# kubectl delete pod initpod

# kubectl apply -f web.yml

# kubectl exec -it liveness -- /bin/sh

/ # nginx -s stop

# kubectl describe pods

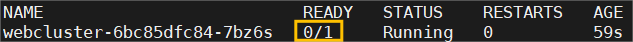

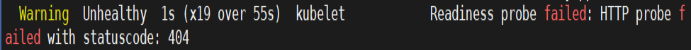

readinessProbe (readiness probe) example:

# vim ReadinessProbe.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 1
  selector:
    matchLabels:
      app: webcluster
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        readinessProbe:
          httpGet:
            path: /test.html
            port: 80
          initialDelaySeconds: 1
          periodSeconds: 3
          timeoutSeconds: 1
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: webcluster

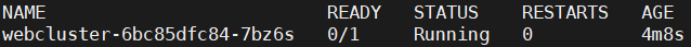

# kubectl apply -f ReadinessProbe.yml

# watch -n 1 "kubectl get pods;kubectl describe svc webcluster | tail -n 10"

# kubectl exec -it pods/webcluster-6bc85dfc84-7bz6s -c myapp -- /bin/sh

/ # echo timinglee > /usr/share/nginx/html/test.html

/ # rm -fr /usr/share/nginx/html/test.html

Simplified readiness probe in a YAML file

# vim web.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    name: readiness
  name: readiness
spec:
  containers:
  - name: myapp    # must start with a dash (list item)
    image: myapp:v1
    ports:
    - containerPort: 80    # declare the port explicitly (optional but recommended)
    readinessProbe:
      httpGet:
        path: /test.html    # make sure this path exists in the application
        port: 80
      initialDelaySeconds: 5    # a longer initial delay is recommended
      periodSeconds: 3
      timeoutSeconds: 1

# kubectl delete pod liveness

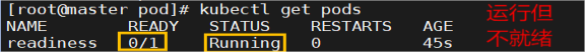

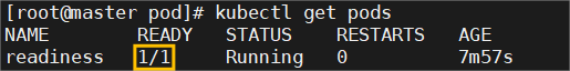

# kubectl apply -f web.yml

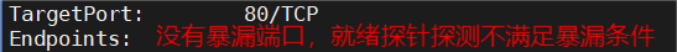

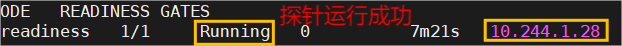

# kubectl get pods   # check that it is running:

# kubectl describe pods readiness

# kubectl describe services readiness

# kubectl exec pods/readiness -c myapp -- /bin/sh -c "echo test > /usr/share/nginx/html/test.html"   # create the file the probe requests

# kubectl describe services readiness   # check the result:

Controllers

Common controller types

Creating a ReplicaSet controller

# mkdir -p controler

# cd controler/

# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > repset.yml

# vim repset.yml

apiVersion: apps/v1
kind: ReplicaSet
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp

# kubectl apply -f repset.yml

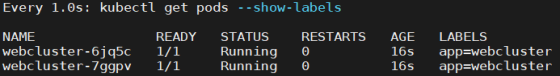

# watch -n 1 kubectl get pods --show-labels

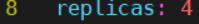

# vim repset.yml

# kubectl apply -f repset.yml

# kubectl delete -f repset.yml

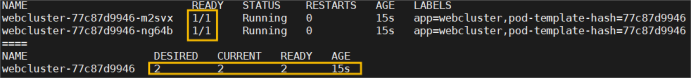

Creating a Deployment controller

Monitoring command:

# watch -n 1 "kubectl get pods --show-labels;echo ====;kubectl get replicasets.apps"

# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > dep.yml

# vim dep.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  minReadySeconds: 5
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        resources: {}

# kubectl apply -f dep.yml

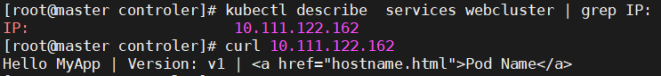

# kubectl expose deployment webcluster --port 80 --target-port 80   # publish the service

# kubectl describe services webcluster | grep IP:

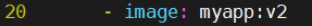

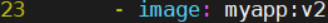

# vim dep.yml   # upgrade and rollback

# vim dep.yml   # rollback

Managing and tuning version updates

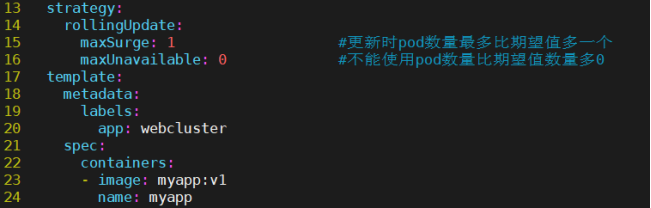

# vim dep.yml

# kubectl apply -f dep.yml

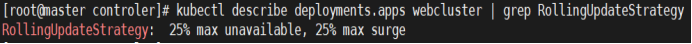

# kubectl describe deployments.apps webcluster | grep RollingUpdateStrategy   # show the update settings

# vim dep.yml   # set the update strategy

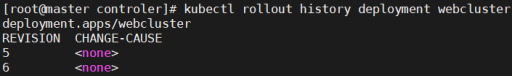

# kubectl rollout history deployment webcluster

# vim dep.yml

# kubectl apply -f dep.yml   # succeeds, but no update shows up in the monitoring output

# kubectl rollout resume deployment webcluster   # resume the update

DaemonSet

# kubectl create deployment daemonset --image myapp:v1 --dry-run=client -o yaml > daemonset.yml

# vim daemonset.yml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: daemonset-example
spec:
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      tolerations:    # tolerate tainted nodes
      - effect: NoSchedule
        operator: Exists
      containers:
      - name: nginx
        image: nginx

# kubectl delete -f *.yml

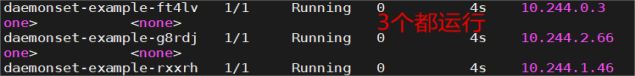

# kubectl apply -f daemonset.yml

# kubectl get pods -o wide

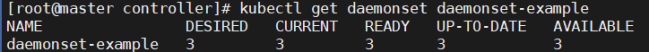

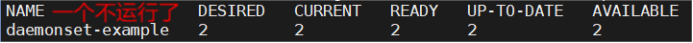

# kubectl get daemonset daemonset-example   # check the DaemonSet status

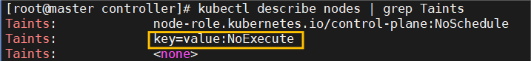

# kubectl taint nodes work1.timinglee.org key=value:NoExecute   # add a strong (NoExecute) taint

# kubectl describe nodes | grep Taints   # show the taints

Job controller

master ~]# docker load -i perl-5.34.tar.gz

# docker login reg.timinglee.org -u admin

# docker tag perl:5.34.0 reg.timinglee.org/library/perl:5.34.0

# docker push reg.timinglee.org/library/perl:5.34.0

# cd controler/

# kubectl create job job --image perl:5.34.0 --dry-run=client -o yaml > job.yml

# vim job.yml

apiVersion: batch/v1
kind: Job
metadata:
  name: job
spec:
  completions: 6
  parallelism: 2
  template:
    spec:
      containers:
      - image: perl:5.34.0
        name: job
        command: ["perl", "-Mbignum=bpi", "-wle", "print bpi(2000)"]
      restartPolicy: Never
  backoffLimit: 4
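With completions: 6 and parallelism: 2, the Job needs six successful Pods and runs at most two at a time. A rough sketch of how many waves of Pods that implies follows, assuming every Pod succeeds on its first attempt and all Pods take about the same time; `job_waves` is a hypothetical helper name used only for this illustration.

```shell
# Number of waves needed: ceil(completions / parallelism), using integer arithmetic.
job_waves() {
  completions=$1
  parallelism=$2
  echo $(( (completions + parallelism - 1) / parallelism ))
}

job_waves 6 2
```

For the manifest above this gives three waves of two Pods each; failed Pods, retried up to backoffLimit, would add to that.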

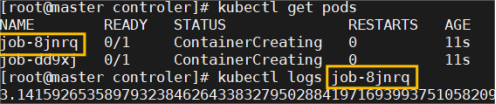

# kubectl apply -f job.yml

# kubectl logs job-8jnrq

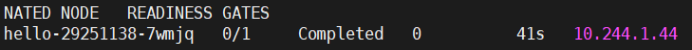

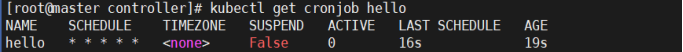

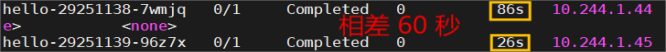

CronJob controller

# kubectl create cronjob cronjob --image busybox --schedule "* * * * *" --dry-run=client -o yaml > cronjob.yml

# vim cronjob.yml

apiVersion: batch/v1
kind: CronJob
metadata:
  name: hello
spec:
  schedule: "* * * * *"
  jobTemplate:
    spec:
      template:
        spec:
          containers:
          - name: hello
            image: busybox
            imagePullPolicy: IfNotPresent
            command: ["/bin/sh", "-c", "date; echo Hello from the Kubernetes cluster"]
          restartPolicy: OnFailure
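The schedule string uses the standard five-field cron format: minute, hour, day of month, month, day of week. The sketch below only labels the fields of a schedule so the positions are easy to read; `describe_schedule` is a hypothetical helper, not a kubectl feature.

```shell
# Label the five fields of a cron schedule string.
describe_schedule() {
  set -f        # disable globbing so '*' is not expanded to file names
  set -- $1     # split the schedule on whitespace into $1..$5
  set +f
  printf 'minute=%s hour=%s day-of-month=%s month=%s day-of-week=%s\n' \
    "$1" "$2" "$3" "$4" "$5"
}

describe_schedule "* * * * *"
describe_schedule "*/5 0 * * 1"
```

The schedule `"* * * * *"` used above therefore fires every minute, which is convenient for a demo but rarely what a production job wants.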

# kubectl delete -f *.yml

# kubectl apply -f cronjob.yml

# kubectl get cronjob hello

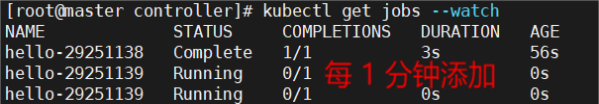

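# kubectl get jobs --watch   # watch the jobs run:

What is a Service (microservice)

Controllers run the cluster's workloads, but how is an application exposed? It has to be exposed through a Service (called a microservice in these notes) before it can be accessed.

A Service is the interface that a group of Pods providing the same service opens to the outside.

With a Service, applications get service discovery and load balancing.

By default a Service only provides layer-4 load balancing, not layer-7 features (those can be added with Ingress).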

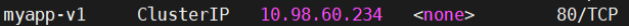

Creating a ClusterIP Service

master ~]# mkdir -p services

# cd services/

# kubectl create deployment webcluster --image myapp:v1 --replicas 2 --dry-run=client -o yaml > clusterIP.yml

# kubectl apply -f clusterIP.yml

# kubectl expose deployment webcluster --port 80 --target-port 80 --dry-run=client -o yaml >> clusterIP.yml

# vim clusterIP.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
---
apiVersion: v1
kind: Service
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: webcluster
  type: ClusterIP

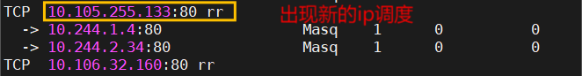

# kubectl apply -f clusterIP.yml

# watch -n 1 " kubectl get pods -o wide ; kubectl describe svc webcluster"   # monitor the result

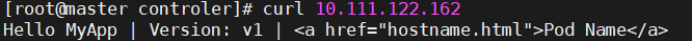

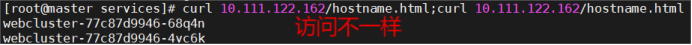

# curl 10.111.122.162/hostname.html;curl 10.111.122.162/hostname.html

Testing the Service

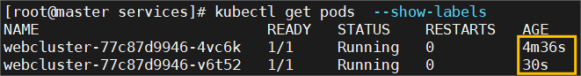

# kubectl delete pods webcluster-77c87d9946-68q4n

# kubectl get pods --show-labels

Optimizing the Service working mode

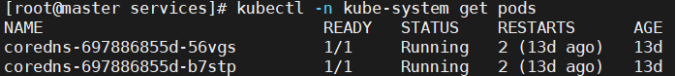

# dnf install ipvsadm -y

# kubectl -n kube-system edit cm kube-proxy

# kubectl -n kube-system get pods

# kubectl -n kube-system delete pods kube-proxy-2q6j9;kubectl -n kube-system delete pods kube-proxy-74lss;kubectl -n kube-system delete pods kube-proxy-dh762

# watch -n 1 ipvsadm -Ln

# kubectl apply -f clusterIP.yml

Service name resolution

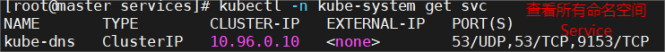

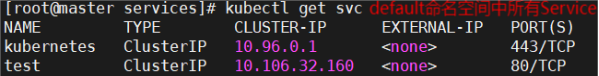

# kubectl -n kube-system get svc

# kubectl get svc

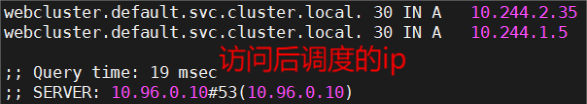

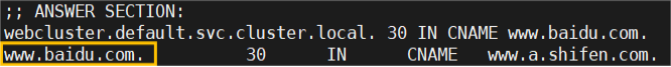

# dig webcluster.default.svc.cluster.local @10.96.0.10   # resolve the name with the cluster's internal DNS service
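The name queried with dig follows the pattern service.namespace.svc.cluster-domain. A minimal sketch that builds such a name follows; `svc_fqdn` is a hypothetical helper, and the defaults (namespace default, domain cluster.local) are assumptions that match this cluster.

```shell
# Build the in-cluster DNS name of a Service.
# $1 = Service name, $2 = namespace (default: default), $3 = cluster domain (default: cluster.local)
svc_fqdn() {
  printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}

svc_fqdn webcluster
svc_fqdn kube-dns kube-system
```

The first call prints the same name that the dig command above resolves.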

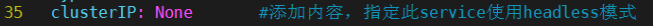

Headless Services in ClusterIP mode

# kubectl delete -f clusterIP.yml

# vim clusterIP.yml

# kubectl apply -f clusterIP.yml

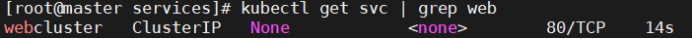

# kubectl get svc | grep web

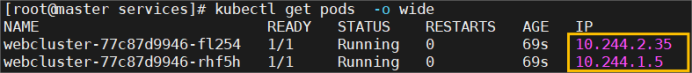

# kubectl get pods -o wide

# dig webcluster.default.svc.cluster.local @10.96.0.10

nodeport

Creating a NodePort Service

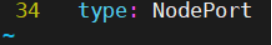

# kubectl delete -f clusterIP.yml

# cp clusterIP.yml nodeport.yml

# vim nodeport.yml

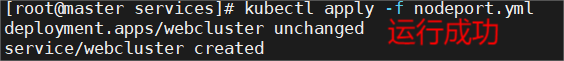

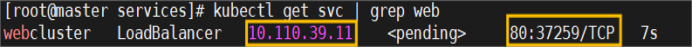

# kubectl apply -f nodeport.yml

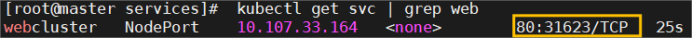

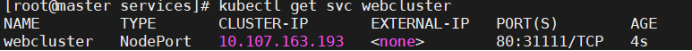

# kubectl get svc

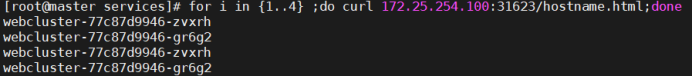

# for i in {1..4} ;do curl 172.25.254.100:31623/hostname.html;done

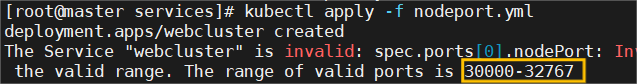

NodePort port management (default range 30000~32767)

# vim nodeport.yml

# kubectl apply -f nodeport.yml

# kubectl get svc webcluster

# kubectl delete -f nodeport.yml

# vim nodeport.yml

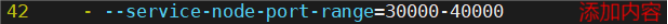

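# vim /etc/kubernetes/manifests/kube-apiserver.yaml   # lift the default port range restriction

Changing the file above restarts the cluster's control plane; wait about 120 seconds and check the status with # kubectl get nodes

# kubectl apply -f nodeport.yml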

# kubectl get svc | grep web
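Before picking a fixed nodePort, it is easy to check whether the value falls inside the default range of 30000 to 32767 stated above. The helper below is a hypothetical sketch for that check only; it knows nothing about a range that has been changed on the API server.

```shell
# Succeed (exit status 0) if the port lies in the default NodePort range.
nodeport_in_default_range() {
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

for port in 31623 33333; do
  if nodeport_in_default_range "$port"; then
    echo "$port: inside the default range"
  else
    echo "$port: outside the default range"
  fi
done
```

A port outside this range is rejected unless the allowed range has been widened in the kube-apiserver manifest, as done in the step above.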

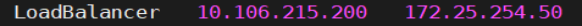

loadbalancer

Creating a LoadBalancer Service

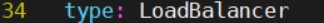

# kubectl delete -f nodeport.yml

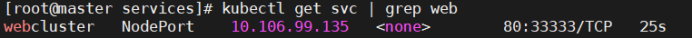

# cp nodeport.yml loadbalance.yml

# vim loadbalance.yml

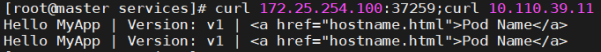

# curl 172.25.254.100:37259 ; curl 10.110.39.11

Deploying MetalLB

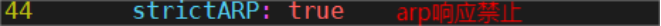

# kubectl edit configmap -n kube-system kube-proxy

# kubectl -n kube-system get pods

# kubectl -n kube-system delete pods kube-proxy-7sf67;kubectl -n kube-system delete pods kube-proxy-cbqjp;kubectl -n kube-system delete pods kube-proxy-qznm6

Download the MetalLB YAML file

services]# wget https://raw.githubusercontent.com/metallb/metallb/v0.15.3/config/manifests/metallb-native.yaml

# docker pull quay.io/metallb/controller:v0.15.3

# docker pull quay.io/metallb/speaker:v0.15.3

# docker tag quay.io/metallb/controller:v0.15.3 reg.timinglee.org/metallb/controller:v0.15.3

# docker push reg.timinglee.org/metallb/controller:v0.15.3

# docker tag quay.io/metallb/speaker:v0.15.3 reg.timinglee.org/metallb/speaker:v0.15.3

# docker push reg.timinglee.org/metallb/speaker:v0.15.3

# cd services/

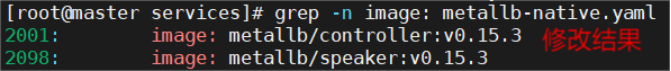

# vim metallb-native.yaml   # edit the contents (image references)

# grep -n image: metallb-native.yaml

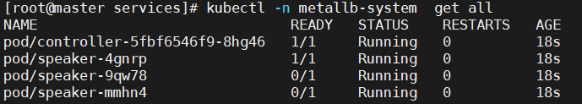

# kubectl apply -f metallb-native.yaml

# kubectl -n metallb-system get all

Configuring MetalLB

# vim configmap.yml

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: first-pool
  namespace: metallb-system
spec:
  addresses:
  - 172.25.254.50-172.25.254.80
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: example
  namespace: metallb-system
spec:
  ipAddressPools:
  - first-pool
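The address pool bounds how many LoadBalancer Services can receive an external IP. As a quick sanity check, the sketch below counts the addresses in an inclusive IPv4 range; `ip_to_int` and `pool_size` are hypothetical helpers written for this illustration.

```shell
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address on dots into $1..$4
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Number of addresses in the inclusive range $1..$2.
pool_size() {
  echo $(( $(ip_to_int "$2") - $(ip_to_int "$1") + 1 ))
}

pool_size 172.25.254.50 172.25.254.80
```

For the pool above this gives 31 addresses, so at most 31 Services of type LoadBalancer can hold an IP from it at the same time.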

# kubectl apply -f configmap.yml

# kubectl get svc

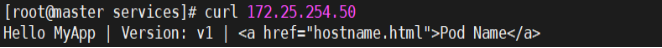

# curl 172.25.254.50

externalname

# vim externalname.yml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  selector:
    app: webcluster
  type: ExternalName
  externalName: www.baidu.com

# kubectl apply -f externalname.yml

# dig webcluster.default.svc.cluster.local @10.96.0.10

ingress-nginx

Installation

services]# wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.15.1/deploy/static/provider/baremetal/deploy.yaml

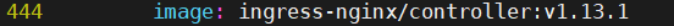

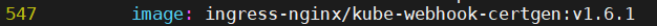

# vim deploy.yaml

# docker load -i ingress-nginx-1.13.1.tar

# docker tag registry.k8s.io/ingress-nginx/kube-webhook-certgen:v1.6.1 reg.timinglee.org/ingress-nginx/kube-webhook-certgen:v1.6.1

# docker push reg.timinglee.org/ingress-nginx/kube-webhook-certgen:v1.6.1

# docker tag registry.k8s.io/ingress-nginx/controller:v1.13.1 reg.timinglee.org/ingress-nginx/controller:v1.13.1

# docker push reg.timinglee.org/ingress-nginx/controller:v1.13.1

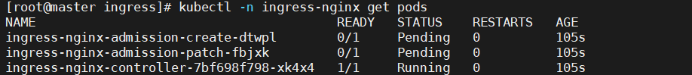

# kubectl apply -f deploy.yaml

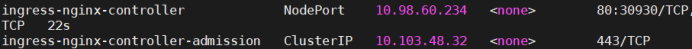

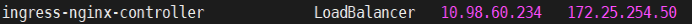

# kubectl -n ingress-nginx get svc

# kubectl -n ingress-nginx get pods

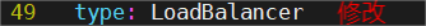

# kubectl -n ingress-nginx edit svc ingress-nginx-controller   # change the Service type to LoadBalancer

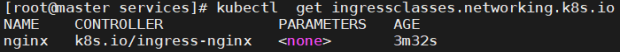

# kubectl get ingressclasses.networking.k8s.io

# kubectl -n ingress-nginx get svc

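The external IP shown for ingress-nginx-controller is the IP on which ingress is finally exposed.

Configure the memberlist secret so that IP allocation starts

# KEY=$(openssl rand -base64 32)   # generate the key

# kubectl -n metallb-system create secret generic memberlist --from-literal=secretkey="$KEY"

# kubectl -n metallb-system delete pods --all   # restart the MetalLB Pods

Testing ingress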

# kubectl create ingress webcluster --rule '*/=timinglee-svc:80' --dry-run=client -o yaml > timinglee-ingress.yml

# vim timinglee-ingress.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: test-ingress
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: timinglee-svc
            port:
              number: 80
        path: /
        pathType: Prefix
        # pathType values: Exact (exact match), ImplementationSpecific (decided by the ingress controller), Prefix (prefix match); regular-expression matching is a controller feature, not a pathType value

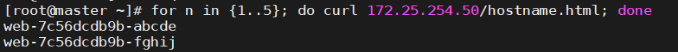

# kubectl apply -f timinglee-ingress.yml

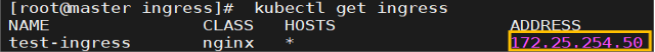

# kubectl get ingress

# for n in {1..5}; do curl 172.25.254.50/hostname.html; done

Advanced ingress usage

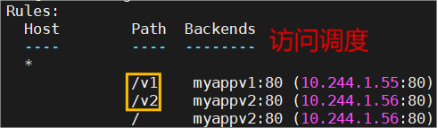

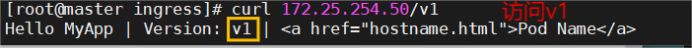

Path-based access

Create the myapp controllers used for testing

# vim myapp-v1.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-test
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /v1
        pathType: Prefix
      - backend:
          service:
            name: myappv2
            port:
              number: 80
        path: /v2
        pathType: Prefix

# kubectl apply -f myapp-v1.yaml

# kubectl get services

# kubectl get ingress ingress-test

# kubectl -n ingress-nginx get svc

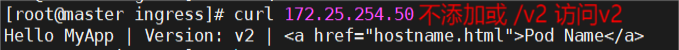

# curl 172.25.254.50 访问结果:

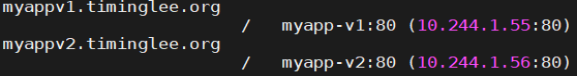

基于域名的访问

# vim /etc/hosts 【在测试主机中设定解析】

172.25.254.50 myappv1.timinglee.org myappv2.timinglee.org

# vim ingressmy.yml 【建立基于域名的yml文件】

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingressmy
spec:
  ingressClassName: nginx
  rules:
  - host: myappv1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix
  - host: myappv2.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp-v2
            port:
              number: 80
        path: /
        pathType: Prefix

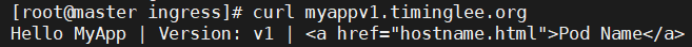

# kubectl apply -f ingressmy.yml

# kubectl describe ingress ingressmy

# curl myappv2.timinglee.org

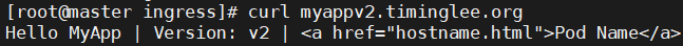

Setting up TLS encryption

Create the certificate

# openssl req -newkey rsa:2048 -nodes -keyout tls.key -x509 -days 365 -subj "/CN=nginxsvc/O=nginxsvc" -out tls.crt

# ls tls*  View the result:

# kubectl create secret tls web-tls-secret --key tls.key --cert tls.crt [add the certificate to the cluster as a resource]

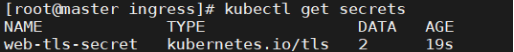

# kubectl get secrets

# kubectl describe secrets web-tls-secret

A Secret is normally used to hold sensitive data in Kubernetes; it is not an encryption mechanism.

Create the yml file for TLS-protected access

# vim my.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: ingress3
spec:
  tls:
  - hosts:
    - myappv1.timinglee.org
    secretName: web-tls-secret
  ingressClassName: nginx
  rules:
  - host: myappv1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp-v1
            port:
              number: 80
        path: /
        pathType: Prefix

# kubectl apply -f my.yml

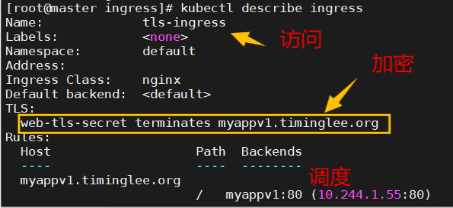

# kubectl describe ingress  View the result:

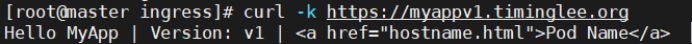

# curl -k https://myappv1.timinglee.org

Setting up basic authentication

Create the credentials file

# dnf install httpd-tools -y

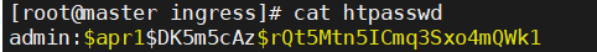

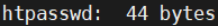

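# htpasswd -cm htpasswd admin [create the credentials file]

# cat htpasswd  View the result:

Create the Secret that holds the credentials

# kubectl create secret generic auth-web --from-file auth=htpasswd [store the file in a Secret; ingress-nginx reads basic-auth credentials from the key named auth]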

# kubectl describe secrets auth-web

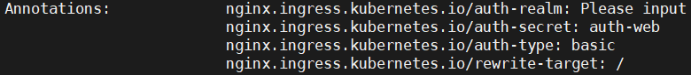

Create the yaml file for user authentication

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: host-ingress
  annotations:
    nginx.ingress.kubernetes.io/auth-type: basic
    nginx.ingress.kubernetes.io/auth-secret: auth-web
    nginx.ingress.kubernetes.io/auth-realm: "Please input username and password"
    nginx.ingress.kubernetes.io/rewrite-target: /
spec:
  ingressClassName: nginx
  rules:
  - host: myappv1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix

Create the authenticated Ingress

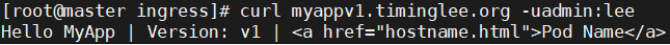

# kubectl describe ingress host-ingress

# curl myappv1.timinglee.org [access is refused without credentials]

# curl myappv1.timinglee.org -uadmin:lee [access with username and password]

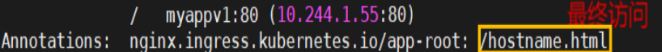
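What curl -u sends can be reproduced by hand. This is a minimal sketch, assuming the admin/lee credentials used above; basic_auth_header is a hypothetical helper name, not a curl or ingress-nginx feature. It shows that HTTP basic authentication is only a base64 encoding of user:password, which is why it is normally combined with TLS.

```shell
# Hypothetical helper: builds the header that `curl -u user:password` would send.
basic_auth_header() {
    # $1 = user name, $2 = password
    printf 'Authorization: Basic %s\n' "$(printf '%s:%s' "$1" "$2" | base64)"
}

basic_auth_header admin lee
# prints: Authorization: Basic YWRtaW46bGVl
```

Passing the same header explicitly, for example curl -H "$(basic_auth_header admin lee)" myappv1.timinglee.org, should behave like the -u form.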

Rewrite and redirection

Redirecting to a default file

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: rew-ingress
  annotations:
    nginx.ingress.kubernetes.io/app-root: /hostname.html   # requests for / are redirected to hostname.html
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /
        pathType: Prefix

# kubectl describe ingress rew-ingress  View the result:

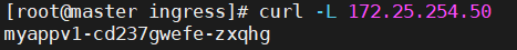

# curl -L 172.25.254.50

Rewriting with regular expressions

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: rew-ingress
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /$2   # use the second capture group
    nginx.ingress.kubernetes.io/use-regex: "true"
spec:
  ingressClassName: nginx
  rules:
  - http:
      paths:
      - backend:
          service:
            name: myappv1
            port:
              number: 80
        path: /haha(/|)(.*)   # whatever follows /haha/ is captured as $2
        pathType: ImplementationSpecific   # required for regular-expression paths

# kubectl describe ingress rew-ingress  View the result:

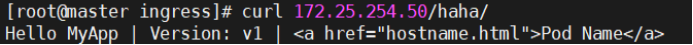

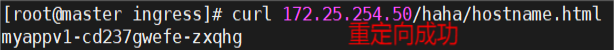

# curl 172.25.254.50/haha/

# curl 172.25.254.50/haha/hostname.html

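With a pattern of the form /(.*)/(.*), a request such as curl 172.25.254.50/<first>/<second> captures the first path element as $1 and the second as $2.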
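The effect of the rewrite rule can be previewed without a cluster. This is a rough sketch using sed, not the controller's own matching code; rewrite_path is a hypothetical helper, and the optional-slash group is written as (/?) here, which matches the same strings as (/|) in the Ingress path.

```shell
# Hypothetical helper: emulates path /haha(/|)(.*) with rewrite-target /$2.
rewrite_path() {
    # Prints the rewritten path, or nothing when the request does not match.
    printf '%s\n' "$1" | sed -nE 's#^/haha(/?)(.*)$#/\2#p'
}

rewrite_path /haha/hostname.html   # prints /hostname.html
rewrite_path /haha/                # prints /
rewrite_path /other                # prints nothing: the rule does not apply
```

This is why curl 172.25.254.50/haha/hostname.html returns the same page as a direct request for /hostname.html on the backend.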

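Canary release

A canary release, also called a grayscale release, is a software release strategy.

Its main purpose is to test and validate a new version on a small share of users or servers before rolling it out to the whole production environment, which limits the damage a serious defect in the new version can do to the system.

It is a way of rolling out Pods. A canary release adds new Pods first and removes old ones afterwards, so the total number of Pods never drops below the desired count. After part of the Pods have been updated the rollout is paused, and the remaining Pods are updated only once the new version is confirmed to run correctly.

Canary release methods

Of these methods, header-based and weight-based are the most commonly used.

Header-based canary release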

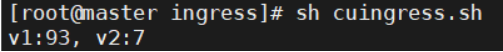

# vim cuingress.sh

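#!/bin/bash
# Send 10 requests and count how many were answered by v1 and by v2
v1=0
v2=0
for (( i=0; i<10; i++))
do
    # 1 if the response mentions v1, otherwise 0
    response=`curl -s 172.25.254.51 |grep -c v1`
    v1=`expr $v1 + $response`
    v2=`expr $v2 + 1 - $response`
done
echo "v1:$v1, v2:$v2"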

# chmod +x cuingress.sh

# sh cuingress.sh
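The counting logic of the script can be checked without a running cluster by feeding it canned responses. This is a minimal sketch; tally_versions is a hypothetical helper, and it assumes, as the script does, that every v1 response contains the string v1 and anything else comes from v2.

```shell
# Hypothetical helper: reads one response per line on stdin and tallies versions.
tally_versions() {
    v1=0
    v2=0
    while IFS= read -r line; do
        case "$line" in
            *v1*) v1=$((v1 + 1)) ;;   # response came from the old version
            *)    v2=$((v2 + 1)) ;;   # everything else is counted as v2
        esac
    done
    echo "v1:$v1, v2:$v2"
}

printf 'Hello v1\nHello v2\nHello v1\n' | tally_versions
# prints: v1:2, v2:1
```

Against a live Ingress the same function could be fed from a loop of curl -s calls instead of printf.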

Create the old version

# vim ingressold.yml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: webcluster
spec:
  ingressClassName: nginx
  rules:
  - host: myapp1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp1
            port:
              number: 80
        path: /
        pathType: Prefix

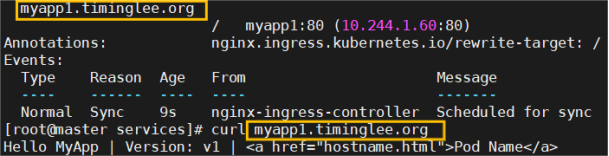

# kubectl describe ingress webcluster

# curl myapp1.timinglee.org

Create the new Ingress

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-by-header: "version"
    nginx.ingress.kubernetes.io/canary-by-header-value: "2"
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: webcluster-new
spec:
  ingressClassName: nginx
  rules:
  - host: myapp1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp2
            port:
              number: 80
        path: /
        pathType: Prefix

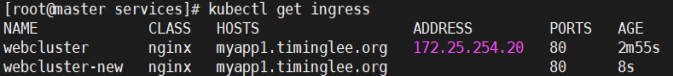

# kubectl get ingress

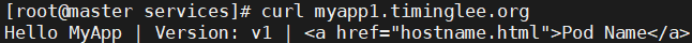

# curl myapp1.timinglee.org

# curl -H "version:2" myapp1.timinglee.org

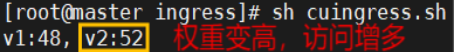

Weight-based canary release

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/canary: "true"
    nginx.ingress.kubernetes.io/canary-weight: "10"
    nginx.ingress.kubernetes.io/canary-weight-total: "100"
    nginx.ingress.kubernetes.io/rewrite-target: /
  name: webcluster-new
spec:
  ingressClassName: nginx
  rules:
  - host: myapp1.timinglee.org
    http:
      paths:
      - backend:
          service:
            name: myapp2
            port:
              number: 80
        path: /
        pathType: Prefix

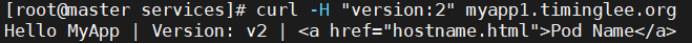

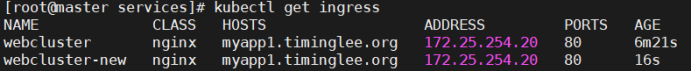

# kubectl get ingress

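# sh cuingress.sh  View the test result:

# After changing the weight, run the test again to observe the difference

# vim ingressnew.yml

nginx.ingress.kubernetes.io/canary-weight: "10" # change the weight value

# sh cuingress.sh  View the test result: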

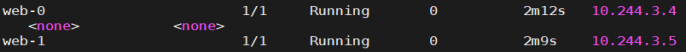

Headless Service for StatefulSet maintenance

# kubectl create deployment web --image myapp:v1 --replicas 2 --dry-run=client -o yaml > web.yml

# kubectl create service clusterip web --clusterip="None" --dry-run=client -o yaml > service.yml

# vim web.yml [StatefulSet controller]

apiVersion: apps/v1
kind: StatefulSet
metadata:
  creationTimestamp: null
  labels:
    app: web
  name: web
spec:
  serviceName: web
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - image: myapp:v1
        name: myapp

# vim service.yml [headless Service]

apiVersion: v1
kind: Service
metadata:
  labels:
    app: web
  name: web
spec:
  clusterIP: None
  selector:
    app: web
  type: ClusterIP

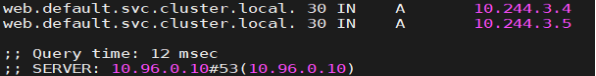

# kubectl get pods -o wide

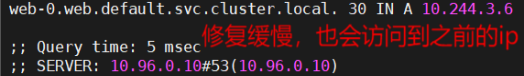

# dig web.default.svc.cluster.local @10.96.0.10  View the result:

# kubectl delete pods web-0 [test by deleting the Pod]

# dig web-0.web.default.svc.cluster.local @10.96.0.10

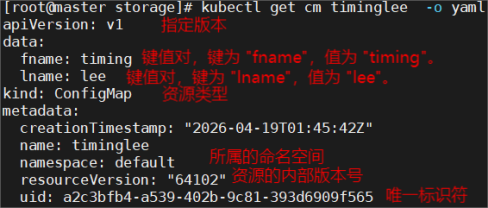
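The names queried with dig follow a fixed pattern, pod.service.namespace.svc.cluster-domain, which is what keeps a StatefulSet Pod reachable under the same name after it is deleted and recreated. The sketch below only prints the expected names; statefulset_fqdns is a hypothetical helper and cluster.local is assumed as the cluster domain, as in the dig commands above.

```shell
# Hypothetical helper: lists the stable DNS names of a StatefulSet's Pods.
statefulset_fqdns() {
    # $1 = StatefulSet name, $2 = headless Service, $3 = namespace, $4 = replicas
    name=$1; service=$2; namespace=$3; replicas=$4
    i=0
    while [ "$i" -lt "$replicas" ]; do
        echo "$name-$i.$service.$namespace.svc.cluster.local"
        i=$((i + 1))
    done
}

statefulset_fqdns web web default 2
# prints:
# web-0.web.default.svc.cluster.local
# web-1.web.default.svc.cluster.local
```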

Ways to create a ConfigMap

From literal values

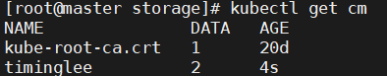

# kubectl create cm timinglee --from-literal fname=timing --from-literal lname=lee

# kubectl get cm

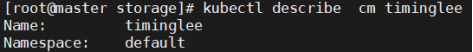

# kubectl describe cm timinglee

# kubectl get cm timinglee -o yaml

From a file

# echo hello timinglee > timinglee

# kubectl create cm timinglee2 --from-file timinglee

# kubectl describe cm timinglee2

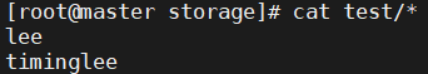

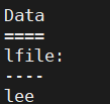

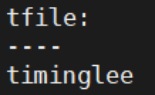

From a directory

# mkdir test

# echo timinglee > test/tfile

# echo lee > test/lfile

# ls test/

# cat test/*

# kubectl create cm timinglee3 --from-file test/

# kubectl describe cm timinglee3
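When a directory is passed to --from-file, each regular file becomes one key whose name is the file name and whose value is the file content. The sketch below previews that mapping locally; preview_cm_keys is a hypothetical helper, not a kubectl command, and it works in a temporary directory so it does not touch the test/ directory created above.

```shell
# Hypothetical helper: prints key=value for every regular file in directory $1.
preview_cm_keys() {
    for f in "$1"/*; do
        [ -f "$f" ] || continue      # skip subdirectories and other non-regular files
        # Note: $(cat ...) drops the trailing newline that the real value would keep.
        printf '%s=%s\n' "$(basename "$f")" "$(cat "$f")"
    done
}

workdir=$(mktemp -d)
mkdir "$workdir/test"
echo timinglee > "$workdir/test/tfile"
echo lee > "$workdir/test/lfile"
preview_cm_keys "$workdir/test"
rm -rf "$workdir"
```

The output should list the same two keys, lfile and tfile, that kubectl describe cm timinglee3 reports.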

From a yaml file

# kubectl create cm timinglee4 --from-literal timinglee=abc --dry-run=client -o yaml > timinglee4.yaml

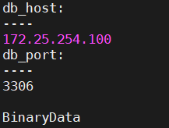

# vim timinglee4.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: lee-config
data:
  db_host: "172.25.254.100"
  db_port: "3306"

# kubectl apply -f timinglee4.yaml

# kubectl describe cm lee-config  View the result:

Using a ConfigMap

Populating custom environment variables

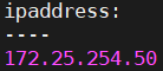

# vim timinglee4.yaml

apiVersion: v1

kind: ConfigMap

metadata:

name: timinglee

data:

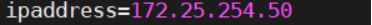

ipaddress: "172.25.254.50"

port: "3306"

# kubectl delete cm timinglee ; kubectl delete cm timinglee 2; kubectl delete cm timinglee 3

# kubectl apply -f timinglee4.yaml

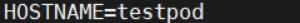

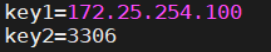

# kubectl run testpod --image busybox --dry-run=client -o yaml > testpod.yml

# vim testpod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: testpod
  name: testpod
spec:
  containers:
  - image: busybox
    name: testpod
    command:
    - /bin/sh
    - -c
    - env
    env:
    - name: key1            # key1 takes the value of ipaddress
      valueFrom:
        configMapKeyRef:
          name: timinglee
          key: ipaddress
    - name: key2            # key2 takes the value of port
      valueFrom:
        configMapKeyRef:
          name: timinglee
          key: port
  restartPolicy: Never

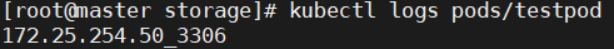

# kubectl apply -f testpod.yml

# kubectl logs pods/testpod

# kubectl delete pods testpod

Load the ConfigMap keys directly as environment variables

# vim testpod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: testpod
  name: testpod
spec:
  containers:
  - image: busybox
    name: testpod
    command:
    - /bin/sh
    - -c
    - env
    envFrom:
    - configMapRef:
        name: timinglee
  restartPolicy: Never

# kubectl apply -f testpod.yml

# kubectl logs pods/testpod

Referencing the variables

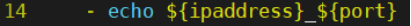

# vim testpod.yml

# kubectl logs pods/testpod

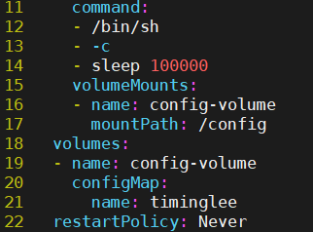

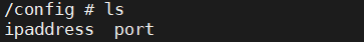

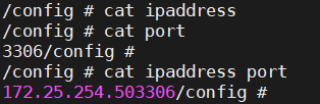

Using a ConfigMap through a volume

# vim testpod.yml

# kubectl exec -it pods/testpod -- /bin/sh

/ # cd config/

/config # cat ipaddress

/config # cat port

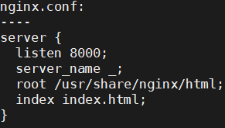

Configuring a Pod through a ConfigMap

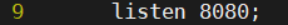

# vim nginx.conf

server {
    listen 8000;
    server_name _;
    root /usr/share/nginx/html;
    index index.html;
}

# kubectl create cm nginx --from-file nginx.conf

# kubectl describe cm nginx

# vim testpod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: nginx
  name: nginx
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - name: config-volume
      mountPath: /etc/nginx/conf.d
  volumes:
  - name: config-volume
    configMap:
      name: nginx
  restartPolicy: Never

# kubectl apply -f testpod.yml

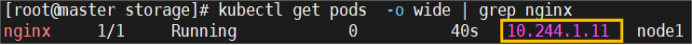

# kubectl get pods -o wide

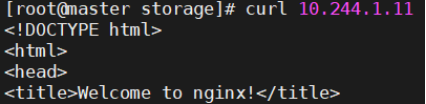

# curl 10.244.1.11

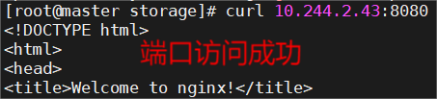

Updating the configuration by editing the ConfigMap

# kubectl edit cm nginx

# kubectl delete -f testpod.yml

# kubectl apply -f testpod.yml

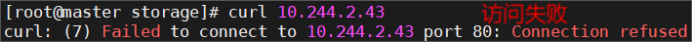

# curl 10.244.2.43:8080

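Secrets configuration management

What Secrets do

A Secret object stores sensitive information such as passwords, OAuth tokens and SSH keys.

Keeping sensitive information in a Secret is safer and more flexible than putting it in a Pod definition or a container image.

A Pod can use a Secret in two ways:

As files in a volume mounted into one or more of the Pod's containers.

By the kubelet when it pulls images for the Pod.

Secret types:

Service Account: Kubernetes automatically creates a Secret containing API access credentials and automatically modifies Pods to use it.

Opaque: the values are stored base64-encoded and can be recovered with base64 --decode, so this type offers little protection by itself.

kubernetes.io/dockerconfigjson: stores the authentication information for a Docker registry.

Creating Secrets

Creating a Secret from the command line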

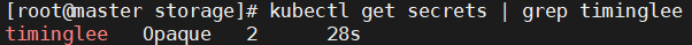

# kubectl create secret generic timinglee --from-literal userlist=timinglee --from-literal password=lee

# kubectl get secrets

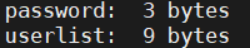

# kubectl describe secrets timinglee

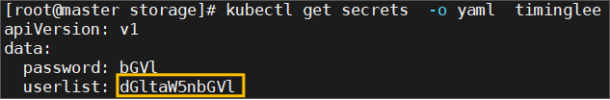

# kubectl get secrets -o yaml timinglee

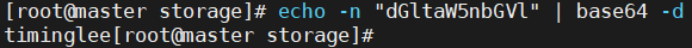

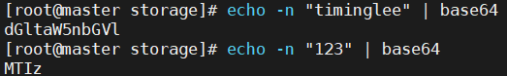

# echo -n "dGltaW5nbGVl" | base64 -d
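The values seen in kubectl get secrets -o yaml are plain base64, which can be confirmed locally. This is a small sketch using the strings from this section; it also shows why printf (or echo -n) is used when preparing values by hand: a plain echo appends a newline, which ends up inside the stored value.

```shell
# Encode the values used in this section.
printf '%s' timinglee | base64        # dGltaW5nbGVl
printf '%s' lee | base64              # bGVl

# Decode a value copied from the Secret; the trailing echo only adds a newline.
printf '%s' dGltaW5nbGVl | base64 -d; echo    # timinglee

# Pitfall: echo without -n encodes "lee\n", giving a different string.
echo lee | base64                     # bGVlCg==
```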

# kubectl delete secrets timinglee

Creating a Secret from files

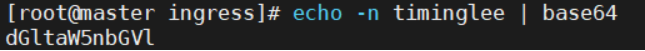

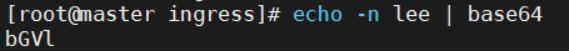

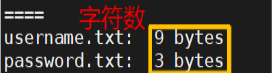

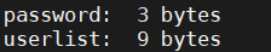

# echo -n timinglee > username.txt

# echo -n lee > password.txt

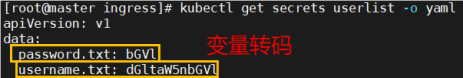

# kubectl create secret generic userlist --from-file username.txt --from-file password.txt

# kubectl get secrets userlist -o yaml

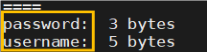

Writing the yaml file

# kubectl create secret generic userlist --dry-run=client -o yaml > userlist.yml

# vim userlist.yml

apiVersion: v1
kind: Secret
metadata:
  creationTimestamp: null
  name: userlist
type: Opaque
data:
  username: dGltaW5nbGVl
  password: bGVl

# kubectl apply -f userlist.yml

# kubectl describe secrets userlist

Creating a Secret from a yaml file

# kubectl create secret generic timinglee --from-literal userlist=timinglee --dry-run=client -o yaml > timinglee_secrets.yml

# vim timinglee_secrets.yml

apiVersion: v1
kind: Secret
metadata:
  name: timinglee
data:
  userlist: dGltaW5nbGVl
  password: MTIz

# kubectl apply -f timinglee_secrets.yml

# kubectl describe secrets timinglee

secrets用法

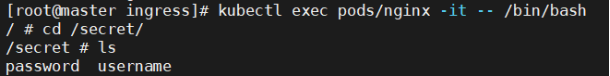

注入pod文件中

# vim testpod.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: nginx
  name: nginx
spec:
  containers:
  - image: nginx
    name: nginx
    volumeMounts:
    - name: secrets
      mountPath: /secret
      readOnly: true
  volumes:
  - name: secrets
    secret:
      secretName: userlist

# kubectl describe secrets userlist

# kubectl exec pods/nginx -it -- /bin/bash

Mapping a Secret key to a specific path

# vim pod.yaml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: nginx1
  name: nginx1
spec:
  containers:
  - image: nginx
    name: nginx1
    volumeMounts:
    - name: secrets
      mountPath: /secret
      readOnly: true
  volumes:
  - name: secrets
    secret:
      secretName: userlist
      items:
      - key: username
        path: my-users/username

# kubectl exec pods/nginx1 -it -- /bin/bash

/# cd secret/my-users

/secret/my-users# ls

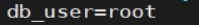

Exposing a Secret as environment variables

# kubectl run pod-sc --image busybox --dry-run=client -o yaml > pod-sc.yml

# vim pod-sc.yml

apiVersion: v1
kind: Pod
metadata:
  labels:
    run: pod-sc
  name: pod-sc
spec:
  containers:
  - image: busybox
    name: pod-sc
    command:
    - /bin/sh
    - -c
    - env
    env:
    - name: db_user
      valueFrom:
        secretKeyRef:
          name: testsc
          key: db_user
    - name: db
      valueFrom:
        secretKeyRef:
          name: testsc
          key: db_pass
  restartPolicy: Never

# kubectl logs pods/pod-sc