Topic: An interesting bug caused by getattr

Problem background:

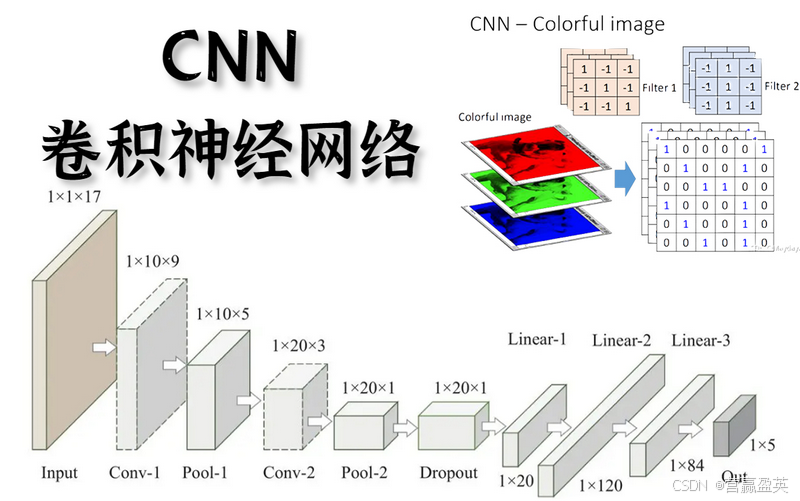

I am trying to train 8 CNN models with the same structure simultaneously. After training one model on a batch, I need to synchronize the weights of the feature extraction layers in the other 7 models.

This is the model:

```python
import torch.nn as nn
from torchvision import models


class GNet(nn.Module):
    def __init__(self, dim_output, dropout=0.5):
        super(GNet, self).__init__()
        self.out_dim = dim_output

        # Load the pretrained AlexNet model
        alexnet = models.alexnet(pretrained=True)
        self.pre_filtering = nn.Sequential(
            alexnet.features[:4]
        )

        # Set requires_grad to False for all parameters in the pre_filtering network
        for param in self.pre_filtering.parameters():
            param.requires_grad = False

        # construct the feature extractor
        # every intermediate feature will be fed to the feature extractor

        # res: 25 x 25
        # note: unlike feat_ex2..feat_ex7, this is a bare Conv2d, not a Sequential
        self.feat_ex1 = nn.Conv2d(192, 128, kernel_size=3, stride=1)

        # res: 25 x 25
        self.feat_ex2 = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
        )

        # res: 25 x 25
        self.feat_ex3 = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
        )

        # res: 13 x 13
        self.feat_ex4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
        )

        # res: 13 x 13
        self.feat_ex5 = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
        )

        # res: 13 x 13
        self.feat_ex6 = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1)
        )

        # res: 13 x 13
        self.feat_ex7 = nn.Sequential(
            nn.BatchNorm2d(128),
            nn.Dropout(p=dropout),
            nn.Conv2d(128, 64, kernel_size=3, stride=1, padding=1)
        )

        # define the flexible pooling field of each layer
        # use a full convolution layer here to perform flexible pooling
        self.fpf13 = nn.Conv2d(in_channels=448, out_channels=448, kernel_size=13, groups=448)
        self.fpf25 = nn.Conv2d(in_channels=384, out_channels=384, kernel_size=25, groups=384)

        self.linears = {}
        for i in range(self.out_dim):
            self.linears[f'linear_{i+1}'] = nn.Linear(832, 1)

        # LogTanh is a custom module of the asker's; its definition is not shown
        self.LogTanh = LogTanh()
        self.flatten = nn.Flatten()
```

And this is the function to synchronize the weights:

```python
def sync_weights(models, current_sub, sync_seqs):
    for sub in range(1, 9):
        if sub != current_sub:
            # Synchronize the specified layers
            with torch.no_grad():
                for seq_name in sync_seqs:
                    reference_layer = getattr(models[current_sub], seq_name)[2]
                    layer = getattr(models[sub], seq_name)[2]
                    layer.weight.data = reference_layer.weight.data.clone()
                    if layer.bias is not None:
                        layer.bias.data = reference_layer.bias.data.clone()
```

Then an error is raised:

```python
'Conv2d' object is not iterable
```

which means getattr() returns a Conv2d object. But if I remove the [2]:

```python
def sync_weights(models, current_sub, sync_seqs):
    for sub in range(1, 9):
        if sub != current_sub:
            # Synchronize the specified layers
            with torch.no_grad():
                for seq_name in sync_seqs:
                    reference_layer = getattr(models[current_sub], seq_name)
                    layer = getattr(models[sub], seq_name)
                    layer.weight.data = reference_layer.weight.data.clone()
                    if layer.bias is not None:
                        layer.bias.data = reference_layer.bias.data.clone()
```

I get another error:

```python
'Sequential' object has no attribute 'weight'
```

which means getattr() returns a Sequential. But previously it returned a Conv2d object. Does anyone know what is going on here? For your information, the sync_seqs parameter passed to sync_weights is:

```python
sync_seqs = [
    'feat_ex1',
    'feat_ex2',
    'feat_ex3',
    'feat_ex4',
    'feat_ex5',
    'feat_ex6',
    'feat_ex7'
]
```

Solution:

In both versions, getattr simply returns whatever object is stored under that attribute name. For the attributes defined as nn.Sequential (feat_ex2 through feat_ex7), that object is a Sequential, which in turn contains several modules. In the second version, you assign that Sequential directly to a variable, so reference_layer ends up holding a Sequential.

In the first version, however, there is no direct assignment. You take the Sequential object and then index it with [2], so reference_layer holds the third item in the Sequential, which is a Conv2d object.
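The two-step behavior can be checked on a small module. The following is a minimal sketch, assuming PyTorch is installed; `Tiny` is a hypothetical stand-in for GNet with one bare Conv2d attribute (as feat_ex1 is defined in the question) and one Sequential attribute (as feat_ex2 is defined), and the channel sizes are arbitrary.

```python
import torch.nn as nn


class Tiny(nn.Module):
    """Hypothetical stand-in for GNet: one bare conv, one Sequential."""

    def __init__(self):
        super().__init__()
        self.feat_ex1 = nn.Conv2d(3, 8, kernel_size=3)
        self.feat_ex2 = nn.Sequential(
            nn.BatchNorm2d(8),
            nn.Dropout(p=0.5),
            nn.Conv2d(8, 8, kernel_size=3, padding=1),
        )


model = Tiny()

whole = getattr(model, "feat_ex2")     # the Sequential itself
third = getattr(model, "feat_ex2")[2]  # index applied after getattr returns
bare = getattr(model, "feat_ex1")      # a Conv2d with no Sequential wrapper

print(type(whole).__name__)  # Sequential
print(type(third).__name__)  # Conv2d
print(type(bare).__name__)   # Conv2d
```

Because sync_seqs in the question lists feat_ex1 alongside the Sequential attributes, it seems likely that the version with [2] fails on feat_ex1 and the version without [2] fails on feat_ex2, which would account for the two different error messages.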

Take a simpler example. Suppose I had a ListContainer class that did nothing except hold a list. I could then recreate your example as follows, with test1 corresponding to your first version (with the index) and test2 to your second (without it):

```python
class ListContainer:
    def __init__(self, list_items):
        self.list_items = list_items


letters = ["a", "b", "c"]
container = ListContainer(letters)

test1 = getattr(container, "list_items")[0]
test2 = getattr(container, "list_items")

print(type(test1))  # <class 'str'>
print(type(test2))  # <class 'list'>
```

In both tests, getattr itself returns a list. In the first, however, we index that list after we get it, so test1 ends up being a string, while test2 remains the list.
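Since the sync_seqs list in the question mixes a bare nn.Conv2d (feat_ex1) with Sequential containers (feat_ex2 through feat_ex7), one possible way to handle both is to look up the Conv2d inside whatever getattr returns instead of hard-coding [2]. The sketch below is not from the original answer. It assumes PyTorch is installed, that `models` is a dict keyed by model number, and that each named attribute contains exactly one Conv2d; `conv_of` and `ToyNet` are hypothetical names introduced only for illustration.

```python
import torch
import torch.nn as nn


def conv_of(module):
    """Return the first Conv2d found in `module` (modules() includes `module` itself)."""
    for child in module.modules():
        if isinstance(child, nn.Conv2d):
            return child
    raise TypeError(f"no Conv2d found in {type(module).__name__}")


def sync_weights(models, current_sub, sync_seqs):
    """Copy conv weights of the named attributes from models[current_sub] to the others."""
    with torch.no_grad():
        for sub, model in models.items():
            if sub == current_sub:
                continue
            for seq_name in sync_seqs:
                src = conv_of(getattr(models[current_sub], seq_name))
                dst = conv_of(getattr(model, seq_name))
                dst.weight.copy_(src.weight)
                if dst.bias is not None:
                    dst.bias.copy_(src.bias)


class ToyNet(nn.Module):
    """Hypothetical small model mixing a bare conv and a Sequential, like GNet."""

    def __init__(self):
        super().__init__()
        self.feat_ex1 = nn.Conv2d(3, 8, kernel_size=3)
        self.feat_ex2 = nn.Sequential(
            nn.BatchNorm2d(8),
            nn.Dropout(p=0.5),
            nn.Conv2d(8, 8, kernel_size=3, padding=1),
        )


# Assumed layout: a dict of models keyed 1..3 (the question uses 1..8)
toy_models = {sub: ToyNet() for sub in range(1, 4)}
sync_weights(toy_models, 1, ["feat_ex1", "feat_ex2"])

same = torch.equal(toy_models[1].feat_ex2[2].weight, toy_models[3].feat_ex2[2].weight)
print(same)  # True
```

Like the original function, this copies only the convolution weights and biases; the BatchNorm parameters and running statistics inside each Sequential are left untouched.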