1. The high-level APIs of a deep learning framework make softmax regression easier to implement

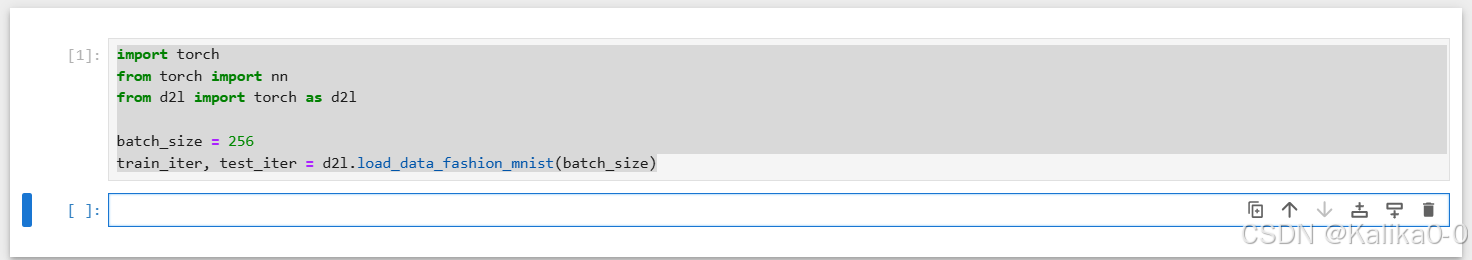

import torch

from torch import nn

from d2l import torch as d2l

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

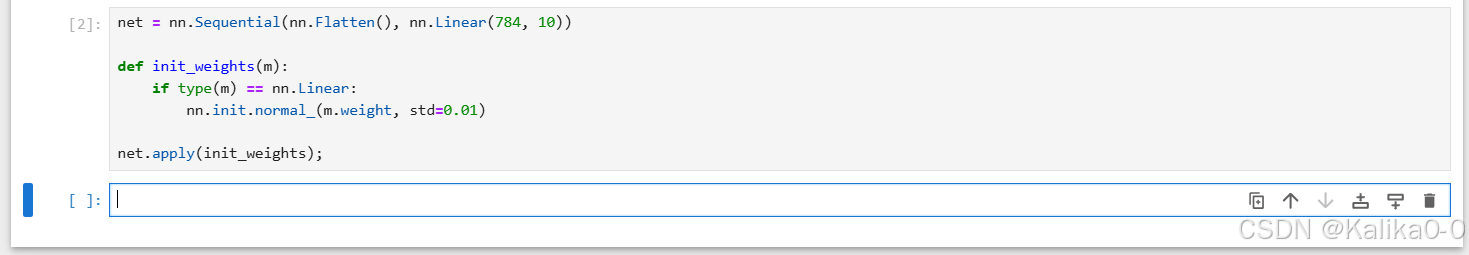

2. The output layer of softmax regression is a fully connected layer

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weights(m):
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights);
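To make the role of each layer concrete, here is a minimal sketch that pushes a dummy batch through the same network. The random batch `X` and the seed are assumptions for illustration only; they stand in for real Fashion-MNIST images of shape (batch, 1, 28, 28).

```python
import torch
from torch import nn

torch.manual_seed(0)  # assumption: fixed seed so the run is repeatable

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))

def init_weights(m):
    # Only the Linear layer has a weight to initialize
    if type(m) == nn.Linear:
        nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)

# Dummy batch shaped like Fashion-MNIST: (batch, channel, height, width)
X = torch.randn(4, 1, 28, 28)

flat = net[0](X)   # nn.Flatten keeps dim 0 and flattens the rest: 1*28*28 = 784
logits = net(X)    # one unnormalized score per class

flat_shape = tuple(flat.shape)
logits_shape = tuple(logits.shape)
weight_std = net[1].weight.std().item()
print(flat_shape, logits_shape, weight_std)
```

`nn.Flatten()` is what lets the 4-D image batch feed a layer that expects 784 input features, and the 10 outputs correspond to the 10 classes.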

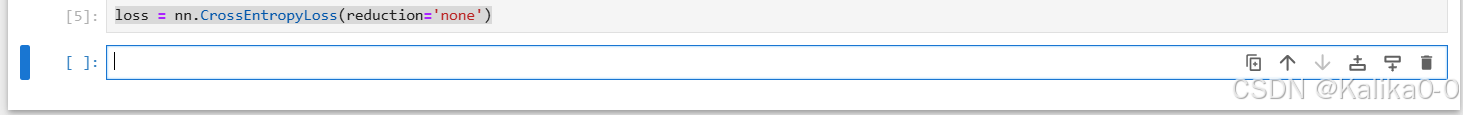

3. Pass the unnormalized predictions (logits) to the cross-entropy loss, which computes the softmax and its logarithm together

loss = nn.CrossEntropyLoss(reduction='none')
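The sketch below illustrates why the logits are passed in directly. It checks that `nn.CrossEntropyLoss` matches a manual log-softmax (written with `torch.logsumexp`) followed by picking the true-class entry, and then shows a case where a naive softmax-then-log overflows. The logits, labels, and seed are made-up values for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)
logits = torch.randn(3, 10)         # unnormalized scores for 3 examples
labels = torch.tensor([0, 4, 9])    # hypothetical class indices

loss = nn.CrossEntropyLoss(reduction='none')
per_example = loss(logits, labels)  # shape (3,): one loss value per example

# Manual equivalent: log-softmax via logsumexp, then select the true class
log_probs = logits - torch.logsumexp(logits, dim=1, keepdim=True)
manual = -log_probs[torch.arange(3), labels]

# With a very large logit, exp() overflows in the naive computation,
# while the fused loss stays finite.
big = torch.tensor([[1000.0, 0.0]])
naive_probs = torch.exp(big) / torch.exp(big).sum(dim=1, keepdim=True)
naive = -torch.log(naive_probs)[0, 1]
stable = loss(big, torch.tensor([1]))[0]

print(per_example, manual, naive, stable)
```

With `reduction='none'` the loss returns one value per example rather than a scalar, so it has to be reduced (for instance with `.mean()`) before calling `backward()`.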

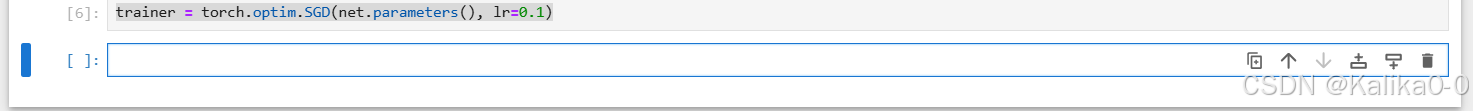

4. Use minibatch stochastic gradient descent with a learning rate of 0.1 as the optimization algorithm

trainer = torch.optim.SGD(net.parameters(), lr=0.1)
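As a rough sketch of how the network, loss, and optimizer fit together, the loop below runs a few SGD steps on a single synthetic minibatch. The random `X` and `y`, the seed, and the step count are assumptions that stand in for iterating over `train_iter`; this is not the training routine from the original notes.

```python
import torch
from torch import nn

torch.manual_seed(0)

net = nn.Sequential(nn.Flatten(), nn.Linear(784, 10))
loss = nn.CrossEntropyLoss(reduction='none')
trainer = torch.optim.SGD(net.parameters(), lr=0.1)

# Synthetic stand-in for one minibatch (assumption: not real Fashion-MNIST data)
X = torch.randn(256, 1, 28, 28)
y = torch.randint(0, 10, (256,))

losses = []
for step in range(20):
    l = loss(net(X), y)      # shape (256,) because reduction='none'
    trainer.zero_grad()      # clear gradients left over from the previous step
    l.mean().backward()      # reduce to a scalar before backpropagating
    trainer.step()           # SGD update with lr=0.1
    losses.append(l.mean().item())

print(losses[0], losses[-1])
```

On real data the same three calls (`zero_grad`, `backward`, `step`) would run once per minibatch drawn from `train_iter`.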