
Introduction: Rebuilding the Full Higher-Education Workflow, with AI Moving from "Assistant" to "Core Enabler"

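The core pain point of higher education has never been a shortage of resources; it is precise matching. Computer science students need real-time programming guidance, liberal arts students lack a partner for critical dialogue, science and engineering students cannot easily repeat physical experiments, and teachers spend much of their time grading large volumes of assignments and answering individual questions. Traditional educational AI stops at shallow assistance such as courseware distribution and automatic grading, and does not span the full teaching workflow.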

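In 2025, the core direction for higher-education AI agents is "full-workflow closed-loop enablement": organized around the sequence "guide, accompany, diagnose, practice", adapted in depth to the characteristics of each discipline, and acting as an intelligent hub between teaching and learning. This issue steps away from the usual "stack of modules" structure. It takes discipline scenarios as the core, closed-loop logic as the skeleton, and deployable tools as the support, and uses them to break down how a higher-education AI agent is built. It includes hands-on code per discipline, a rollout methodology for universities, and a pitfalls guide, so that the technology serves what teaching actually needs.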

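I. Core logic: the underlying design of the "guide, diagnose, practice" closed loop (shared across disciplines)

1. What the closed loop is: from one-way output to two-way interaction

The closed loop of a higher-education AI agent is not a simple chain of stages. It is a dynamic, iterative system driven by student learning data:

- Data-driven: centered on a knowledge-point mastery graph that integrates data from preview, class, homework, and practice;
- Dynamic adaptation: content difficulty, tutoring style, and practice tasks are adjusted according to the student's real-time performance;
- Teaching collaboration: teachers can step in and adjust the agent's strategy, forming an "AI + teacher" collaborative teaching model.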
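To make the "dynamic adaptation" and "teaching collaboration" points concrete, here is a minimal sketch of one possible adjustment rule. The function name `adapt_difficulty`, the five difficulty levels, and the 0.8 / 0.4 thresholds are assumptions chosen for illustration; the article does not prescribe them.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical thresholds and level range, chosen only for illustration.
STEP_UP_AT = 0.8     # average mastery at or above this: move to harder material
STEP_DOWN_AT = 0.4   # average mastery below this: move to easier material
MIN_LEVEL, MAX_LEVEL = 1, 5


@dataclass
class AdaptationDecision:
    next_level: int
    needs_teacher_review: bool


def adapt_difficulty(current_level: int,
                     recent_scores: List[float],
                     teacher_override: Optional[int] = None) -> AdaptationDecision:
    """Pick the next difficulty level from recent mastery scores in [0, 1]."""
    if teacher_override is not None:
        # Teaching collaboration: an explicit teacher setting always wins.
        level = max(MIN_LEVEL, min(MAX_LEVEL, teacher_override))
        return AdaptationDecision(level, False)
    if not recent_scores:
        # No data yet: keep the current level.
        return AdaptationDecision(current_level, False)

    mastery = sum(recent_scores) / len(recent_scores)
    if mastery >= STEP_UP_AT:
        level = min(MAX_LEVEL, current_level + 1)
    elif mastery < STEP_DOWN_AT:
        level = max(MIN_LEVEL, current_level - 1)
    else:
        level = current_level

    # A student who is still weak at the easiest level is flagged for the teacher.
    stuck = mastery < STEP_DOWN_AT and current_level == MIN_LEVEL
    return AdaptationDecision(level, stuck)


if __name__ == "__main__":
    print(adapt_difficulty(2, [0.9, 0.85]))
    print(adapt_difficulty(1, [0.1, 0.2]))
    print(adapt_difficulty(3, [0.1], teacher_override=5))
```

The teacher override is checked first so that the agent never silently undoes a teacher's decision; the review flag is one simple way to hand a struggling student back to a human.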

2. Technical foundation: three core capabilities (code shared across disciplines)

(1) Building the knowledge-point mastery graph

python

import networkx as nx
import pandas as pd


class KnowledgeMasteryGraph:
    """Course knowledge-point graph plus per-student mastery records."""

    # Assumption: raw scores are on a 0-100 scale, as in the demo data below.
    MAX_RAW_SCORE = 100

    def __init__(self, course_id):
        self.course_id = course_id
        self.graph = self._build_knowledge_graph()  # knowledge-point relation graph
        self.mastery_data = pd.DataFrame(
            columns=["student_id", "knowledge_point", "mastery_score", "data_source"]
        )

    def _build_knowledge_graph(self):
        """Build the knowledge-point graph (imported from the course syllabus)."""
        # CSV columns: kp_id, kp_name, difficulty, exam_weight, prerequisite_kp
        kp_df = pd.read_csv(f"./data/{self.course_id}_knowledge_points.csv")
        graph = nx.DiGraph()
        for _, row in kp_df.iterrows():
            graph.add_node(
                row["kp_id"],
                name=row["kp_name"],
                difficulty=row["difficulty"],
                weight=row["exam_weight"],  # exam weight
            )
            # Add prerequisite edges (comma-separated IDs in one cell)
            if pd.notna(row["prerequisite_kp"]):
                for prereq in str(row["prerequisite_kp"]).split(","):
                    graph.add_edge(prereq.strip(), row["kp_id"], relation="prerequisite")
        return graph

    def update_mastery_score(self, student_id, kp_data):
        """Update mastery scores (sources: preview, homework, exam, practice)."""
        # kp_data format: [{"kp_id": "K101", "score": 85, "data_source": "homework"}, ...]
        new_rows = []
        for data in kp_data:
            # Normalize to 0-1 by dividing by the fixed scale and clipping.
            # (A MinMaxScaler fitted on a single value would always yield 0.)
            normalized_score = min(max(data["score"] / self.MAX_RAW_SCORE, 0.0), 1.0)
            new_rows.append({
                "student_id": student_id,
                "knowledge_point": data["kp_id"],
                "mastery_score": round(normalized_score, 2),
                "data_source": data["data_source"],
            })
        if not new_rows:
            return
        # Merge, keeping only the latest record per student and knowledge point
        new_df = pd.DataFrame(new_rows)
        self.mastery_data = pd.concat(
            [self.mastery_data, new_df], ignore_index=True
        ).drop_duplicates(subset=["student_id", "knowledge_point"], keep="last")

    def get_student_mastery(self, student_id):
        """Return the student's knowledge-point mastery graph."""
        student_data = self.mastery_data[self.mastery_data["student_id"] == student_id]
        if student_data.empty:
            return {"status": "error", "message": "No learning data for this student"}

        # Build the student-specific mastery graph
        mastery_graph = self.graph.copy()
        for _, row in student_data.iterrows():
            kp_id = row["knowledge_point"]
            if kp_id not in mastery_graph.nodes:
                continue
            score = row["mastery_score"]
            mastery_graph.nodes[kp_id]["mastery_score"] = score
            # Status bands: weak (<0.4), pass (0.4-0.6), good (0.6-0.8), excellent (>=0.8)
            if score < 0.4:
                status = "weak"
            elif score < 0.6:
                status = "pass"
            elif score < 0.8:
                status = "good"
            else:
                status = "excellent"
            mastery_graph.nodes[kp_id]["status"] = status

        # Weak knowledge points, together with their prerequisites
        weak_kps = [
            {
                "kp_id": kp,
                "name": mastery_graph.nodes[kp]["name"],
                "prerequisites": list(self.graph.predecessors(kp)),
            }
            for kp in mastery_graph.nodes
            if mastery_graph.nodes[kp].get("status") == "weak"
        ]
        return {
            "mastery_graph": nx.node_link_data(mastery_graph),
            "weak_knowledge_points": weak_kps,
            "overall_mastery": round(float(student_data["mastery_score"].astype(float).mean()), 2),
        }


# Test example
if __name__ == "__main__":
    # Mastery graph for the computer science course "Data Structures"
    kp_graph = KnowledgeMasteryGraph(course_id="CS202")

    # Update mastery data for student S2025001 (homework + exam)
    kp_graph.update_mastery_score(
        student_id="S2025001",
        kp_data=[
            {"kp_id": "K101", "score": 75, "data_source": "homework"},  # array basics
            {"kp_id": "K102", "score": 30, "data_source": "exam"},      # linked-list operations
            {"kp_id": "K103", "score": 90, "data_source": "homework"},  # stacks and queues
        ],
    )

    # Query the student's mastery
    student_mastery = kp_graph.get_student_mastery(student_id="S2025001")
    print("Overall mastery:", student_mastery["overall_mastery"])
    print("Weak knowledge points:")
    for kp in student_mastery["weak_knowledge_points"]:
        prereq_names = [kp_graph.graph.nodes[p]["name"] for p in kp["prerequisites"]]
        print(f"- {kp['name']} (prerequisites: {prereq_names})")

(2) Cross-scenario data fusion engine

python

import datetime
import json
from typing import Dict, List


class DataFusionEngine:
    def __init__(self, course_id):
        self.course_id = course_id
        self.data_sources = ["preview", "class", "homework", "exam", "practice"]  # five data sources
        self.fusion_rules = self._load_fusion_rules()  # per-source weights

    def _load_fusion_rules(self):
        """Load the fusion rules (teachers can customize them)."""
        # Expected shape, inferred from how the rules are read below:
        # {"homework": {"weight": 0.3}, "exam": {"weight": 0.4}, ...}
        with open(f"./config/{self.course_id}_fusion_rules.json", "r", encoding="utf-8") as f:
            return json.load(f)

    def fuse_student_data(self, student_id: str, raw_data: Dict[str, List]):
        """Fuse multi-scenario student data into one learning report."""
        # 1. Validate the data sources
        for source in raw_data:
            if source not in self.data_sources:
                raise ValueError(f"Unsupported data source: {source}; supported: {self.data_sources}")

        # 2. Weighted score per knowledge point
        kp_scores = {}
        for source, data_list in raw_data.items():
            source_weight = self.fusion_rules[source]["weight"]
            for data in data_list:
                if "score" not in data:
                    continue  # e.g. preview records carry behavior data only
                entry = kp_scores.setdefault(
                    data["kp_id"], {"total_score": 0.0, "weight_sum": 0.0, "sources": []}
                )
                entry["total_score"] += data["score"] * source_weight
                entry["weight_sum"] += source_weight
                if source not in entry["sources"]:
                    entry["sources"].append(source)

        # 3. Final score = weighted total / total weight (same scale as the inputs)
        mastery_scores = [
            {
                "kp_id": kp_id,
                "score": round(info["total_score"] / info["weight_sum"], 2),
                "data_source": info["sources"],  # sources that contributed to this point
            }
            for kp_id, info in kp_scores.items()
            if info["weight_sum"] > 0
        ]

        # 4. Build the report, including learning-behavior analysis
        return {
            "student_id": student_id,
            "course_id": self.course_id,
            "mastery_scores": mastery_scores,
            "learning_behavior": self._analyze_learning_behavior(raw_data),
            "update_time": datetime.datetime.now().strftime("%Y-%m-%d %H:%M:%S"),
        }

    def _analyze_learning_behavior(self, raw_data: Dict[str, List]):
        """Analyze learning behavior (preview time, submission timeliness, practice frequency)."""
        behavior = {}

        # Preview behavior
        preview = raw_data.get("preview") or []
        if preview:
            preview_times = [d["spend_time"] for d in preview]
            behavior["preview_avg_time"] = round(sum(preview_times) / len(preview_times), 1)
            behavior["preview_completion_rate"] = sum(1 for d in preview if d["completed"]) / len(preview)

        # Homework behavior
        homework = raw_data.get("homework") or []
        if homework:
            # Submission delta in minutes; negative means submitted early
            submit_deltas = [d["submit_delta"] for d in homework]
            behavior["homework_avg_submit_delta"] = round(sum(submit_deltas) / len(submit_deltas), 1)
            behavior["homework_excellent_rate"] = sum(1 for d in homework if d["score"] >= 90) / len(homework)

        # Practice behavior
        practice = raw_data.get("practice") or []
        if practice:
            behavior["practice_count"] = len(practice)
            behavior["practice_avg_score"] = round(sum(d["score"] for d in practice) / len(practice), 1)

        return behavior


# Test example
if __name__ == "__main__":
    fusion_engine = DataFusionEngine(course_id="CS202")

    # Simulated raw data from several scenarios
    student_raw_data = {
        "preview": [
            {"kp_id": "K101", "spend_time": 25, "completed": True},
            {"kp_id": "K102", "spend_time": 10, "completed": False},
        ],
        "homework": [
            {"kp_id": "K101", "score": 80, "submit_delta": -10},  # submitted 10 minutes early
            {"kp_id": "K102", "score": 40, "submit_delta": 5},    # submitted 5 minutes late
        ],
        "practice": [
            {"kp_id": "K101", "score": 85, "practice_type": "coding"},
            {"kp_id": "K103", "score": 92, "practice_type": "coding"},
        ],
    }

    # Fuse the data into a learning report
    student_report = fusion_engine.fuse_student_data(student_id="S2025001", raw_data=student_raw_data)
    print("Student learning report:")
    print(f"Mastery by knowledge point: {[{'kp_id': item['kp_id'], 'score': item['score']} for item in student_report['mastery_scores']]}")
    print(f"Learning behavior: {student_report['learning_behavior']}")

(3) Personalized strategy generation model

python

import json
from typing import Dict, List

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer


class PersonalizedStrategyGenerator:
    # Model ID as given in this article; substitute any causal LM checkpoint you can access.
    MODEL_ID = "edu-agi/college-strategy-7b"

    def __init__(self, course_id):
        self.course_id = course_id
        self.tokenizer = AutoTokenizer.from_pretrained(self.MODEL_ID)
        self.model = AutoModelForCausalLM.from_pretrained(
            self.MODEL_ID,
            device_map="auto",
            torch_dtype=torch.float16,
        )
        # Load the course resource library (preview material, exercises, practice tasks)
        with open(f"./data/{self.course_id}_resources.json", "r", encoding="utf-8") as f:
            self.resources = json.load(f)

    def generate_strategy(self, student_report: Dict):
        """Generate a personalized learning strategy from a learning report."""
        # Extract the key fields. Scores are expected on a 0-1 scale here,
        # so 0-100 scores from the fusion engine must be normalized first.
        student_id = student_report["student_id"]
        mastery_scores = student_report["mastery_scores"]
        weak_kps = [item for item in mastery_scores if item["score"] < 0.4]
        learning_behavior = student_report["learning_behavior"]
        overall = (
            round(sum(item["score"] for item in mastery_scores) / len(mastery_scores), 2)
            if mastery_scores else 0.0
        )

        # Build the prompt from the learning data and course resources
        prompt = f"""
As the AI teaching advisor for course {self.course_id}, generate a personalized learning strategy from the learning report below.

I. Student information
Student ID: {student_id}
Overall mastery: {overall}
Weak knowledge points: {[item['kp_id'] for item in weak_kps]}
Learning behavior: {learning_behavior}

II. Course resource library (available for recommendation)
Preview material: {[r['name'] for r in self.resources['preview']][:3]}
Exercises: {[r['name'] for r in self.resources['exercises']][:3]}
Practice tasks: {[r['name'] for r in self.resources['practice']][:3]}

III. Requirements
1. Targeted: address weak knowledge points first, and account for behavioral gaps (e.g. too little preview, late homework);
2. Actionable: plan in stages within one week, with at most 60 minutes of tasks per day;
3. Resource matching: recommend specific items from the resource library, with usage advice;
4. Discipline fit: suit how computer science is learned, with emphasis on coding practice and logical understanding;
5. Feedback: state how learning outcomes will be checked (exercises, quizzes, practice projects).
"""

        # Generate with the model
        inputs = self.tokenizer(
            prompt, return_tensors="pt", truncation=True, max_length=1024
        ).to(self.model.device)
        outputs = self.model.generate(
            **inputs,
            max_new_tokens=1024,
            do_sample=True,  # temperature and top_p only take effect when sampling
            temperature=0.4,
            top_p=0.9,
            eos_token_id=self.tokenizer.eos_token_id,
        )
        # Decode only the newly generated tokens, not the echoed prompt
        new_tokens = outputs[0][inputs["input_ids"].shape[1]:]
        strategy = self.tokenizer.decode(new_tokens, skip_special_tokens=True).strip()

        return {
            "student_id": student_id,
            "personalized_strategy": strategy,
            "recommended_resources": self._match_resources(weak_kps),
        }

    def _match_resources(self, weak_kps: List):
        """Match resources to each weak knowledge point."""
        matched = []
        for kp in weak_kps:
            kp_id = kp["kp_id"]
            preview_res = [r for r in self.resources["preview"] if kp_id in r["related_kps"]]
            exercise_res = [r for r in self.resources["exercises"] if kp_id in r["related_kps"]]
            practice_res = [r for r in self.resources["practice"] if kp_id in r["related_kps"]]
            matched.append({
                "kp_id": kp_id,
                "preview_resources": preview_res[:1],
                "exercise_resources": exercise_res[:2],
                "practice_resources": practice_res[:1],
            })
        return matched


# Test example
if __name__ == "__main__":
    strategy_generator = PersonalizedStrategyGenerator(course_id="CS202")

    # Simulated learning report (following on from the fusion result, with 0-1 scores)
    student_report = {
        "student_id": "S2025001",
        "course_id": "CS202",
        "mastery_scores": [
            {"kp_id": "K101", "score": 0.75, "data_source": ["preview", "homework"]},
            {"kp_id": "K102", "score": 0.35, "data_source": ["preview", "homework"]},
            {"kp_id": "K103", "score": 0.88, "data_source": ["practice"]},
        ],
        "learning_behavior": {
            "preview_avg_time": 17.5,
            "preview_completion_rate": 0.5,
            "homework_avg_submit_delta": -2.5,
            "homework_excellent_rate": 0.0,
            "practice_count": 2,
            "practice_avg_score": 88.5,
        },
        "update_time": "2025-01-15 14:30:00",
    }

    # Generate the personalized strategy
    personalized_strategy = strategy_generator.generate_strategy(student_report)
    print("Personalized learning strategy:")
    print(personalized_strategy["personalized_strategy"])
    print("\nRecommended resources:")
    for item in personalized_strategy["recommended_resources"]:
        print(f"- Knowledge point {item['kp_id']}:")
        print(f"  Preview material: {item['preview_resources'][0]['name'] if item['preview_resources'] else 'none'}")
        print(f"  Exercises: {[e['name'] for e in item['exercise_resources']]}")

II. Discipline-specific rollout: scenario-based solutions (with hands-on code)

1. 计算机编程专业:代码级智能辅导闭环

核心场景:编程学习全流程(预习 - 编码 - 调试 - 实战)

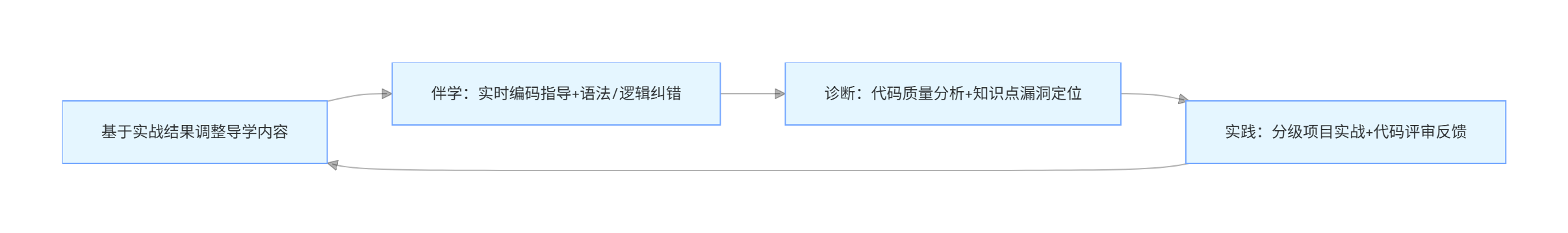

闭环设计:

关键代码:实时编码辅导模块

python

import ast
import json
import os
import re
import subprocess
import sys
import tempfile
from typing import Optional, Tuple


class CodeTutoringModule:
    def __init__(self, course_id):
        self.course_id = course_id
        # Load the programming knowledge base and the common-error library
        with open(f"./data/{self.course_id}_code_knowledge.json", "r", encoding="utf-8") as f:
            self.code_knowledge = json.load(f)
        self.common_errors = self.code_knowledge["common_errors"]

    def parse_code(self, code: str, language: str = "python") -> Tuple[bool, Optional[str], Optional[dict]]:
        """Parse the code and detect syntax and runtime errors."""
        if language != "python":
            return False, "Only Python is supported for now", None

        # 1. Syntax check
        try:
            ast.parse(code)
        except SyntaxError as e:
            error_msg = f"Syntax error: {e.msg} (line {e.lineno})"
            fix_suggestion = self._get_error_fix(e.msg)
            return False, error_msg, {"fix_suggestion": fix_suggestion, "error_type": "syntax"}

        # 2. Runtime check: run the code in a subprocess and capture failures.
        # This executes student code directly; in production, run it in a sandbox or container.
        with tempfile.NamedTemporaryFile(mode="w", suffix=".py", delete=False, encoding="utf-8") as f:
            f.write(code)
            file_path = f.name
        try:
            result = subprocess.run(
                [sys.executable, file_path],  # same interpreter as the tutor itself
                capture_output=True,
                text=True,
                timeout=10,
            )
        except subprocess.TimeoutExpired:
            return False, "Runtime error: execution exceeded 10 seconds", {
                "fix_suggestion": "Check for infinite loops or calls that wait for input",
                "error_type": "timeout",
            }
        finally:
            os.unlink(file_path)

        if result.returncode != 0:
            stderr = result.stderr.strip()
            return False, f"Runtime error: {stderr}", {
                "fix_suggestion": self._get_error_fix(stderr),
                "error_type": "runtime",
            }

        # 3. Code-quality analysis (style, efficiency)
        quality_analysis = self._analyze_code_quality(code)
        return True, "Code ran successfully", {"quality_analysis": quality_analysis, "output": result.stdout.strip()}

    def _get_error_fix(self, error_msg: str) -> str:
        """Match a fix suggestion to the error message by keyword."""
        for error in self.common_errors:
            if any(keyword in error_msg.lower() for keyword in error["keywords"]):
                return error["fix_suggestion"]
        return "No matching fix suggestion found; please check the code's logic or syntax"

    def _analyze_code_quality(self, code: str) -> dict:
        """Analyze code quality (style, efficiency, readability)."""
        # Simplified implementation: keyword matching plus light structural checks
        lines = code.strip().split("\n")
        quality = {
            "line_count": len(lines),
            "comment_rate": round(sum(1 for line in lines if line.strip().startswith("#")) / len(lines), 2),
            "naming_standard": "compliant" if self._check_naming_standard(code) else "non-compliant",
            "efficiency_suggestions": [],
        }

        # Crude heuristic: a for loop followed by append, with no bracket in between
        if "for" in code and "append" in code and "[" not in code[code.index("for"):code.index("append") + 6]:
            quality["efficiency_suggestions"].append(
                "Consider a list comprehension instead of a for loop with append"
            )

        # Redundancy check: more than three if statements, some of them identical
        if_lines = [line.strip() for line in lines if line.strip().startswith("if ")]
        if len(if_lines) > 3 and len(set(if_lines)) < len(if_lines):
            quality["efficiency_suggestions"].append(
                "Possible repeated conditions detected; consider simplifying the conditional logic"
            )
        return quality

    def _check_naming_standard(self, code: str) -> bool:
        """Check PEP 8 naming: snake_case variables and functions, UPPER_CASE constants."""
        allowed = re.compile(r"^_*[a-z][a-z0-9_]*$|^_*[A-Z][A-Z0-9_]*$|^_+$")
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
                name = node.id  # a variable being assigned
            elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                name = node.name
            else:
                continue
            if not allowed.match(name):
                return False  # e.g. camelCase or MixedCase
        return True


# Test example
if __name__ == "__main__":
    code_tutor = CodeTutoringModule(course_id="CS202")

    # Code with a syntax error (missing colon)
    error_code = """
def calculate_sum(a, b)
    return a + b
"""
    success, msg, details = code_tutor.parse_code(error_code)
    print(f"Syntax-error test: {success}, {msg}")
    print(f"Fix suggestion: {details['fix_suggestion']}")

    # Code that runs but leaves room for improvement
    normal_code = """
# Compute the sum of 1 to 10
sum = 0
for i in range(11):
    sum = sum + i
print(sum)
"""
    success, msg, details = code_tutor.parse_code(normal_code)
    print(f"\nNormal-code test: {success}, {msg}")
    print(f"Code-quality analysis: {details['quality_analysis']}")

2. Liberal arts majors centered on critical thinking (philosophy / Chinese / law): a deep-dialogue loop

核心场景:思辨能力培养(话题讨论 - 论点构建 - 论证强化 - 反馈迭代)

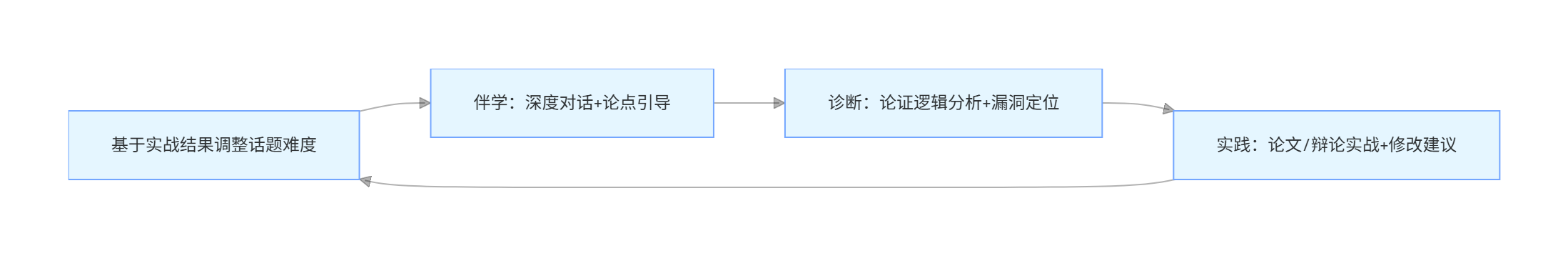

闭环设计:

关键代码:思辨对话引导模块

python

import json
import random

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer


class CriticalThinkingDialogueModule:
    # Model ID as given in this article; substitute any causal LM checkpoint you can access.
    MODEL_ID = "edu-agi/college-critical-7b"

    def __init__(self, course_id):
        self.course_id = course_id
        self.tokenizer = AutoTokenizer.from_pretrained(self.MODEL_ID)
        self.model = AutoModelForCausalLM.from_pretrained(
            self.MODEL_ID,
            device_map="auto",
            torch_dtype=torch.float16,
        )
        # Load the topic bank and argument-logic framework
        with open(f"./data/{self.course_id}_topics.json", "r", encoding="utf-8") as f:
            self.topics = json.load(f)

    def _generate(self, prompt: str, max_new_tokens: int, temperature: float) -> str:
        """Run the model and return only the newly generated text."""
        inputs = self.tokenizer(
            prompt, return_tensors="pt", truncation=True, max_length=1024
        ).to(self.model.device)
        outputs = self.model.generate(
            **inputs,
            max_new_tokens=max_new_tokens,
            do_sample=True,  # temperature only takes effect when sampling
            temperature=temperature,
        )
        new_tokens = outputs[0][inputs["input_ids"].shape[1]:]  # drop the echoed prompt
        return self.tokenizer.decode(new_tokens, skip_special_tokens=True).strip()

    def generate_topic(self, student_level: str = "undergraduate") -> dict:
        """Generate a discussion topic suited to the student's level."""
        # Filter topics by level; fall back to the whole bank if none match
        matched_topics = [t for t in self.topics if t["level"] == student_level] or self.topics

        # Pick a topic at random and generate guiding questions
        topic = random.choice(matched_topics)
        prompt = f"""
Generate guiding questions for a critical-thinking topic in course {self.course_id}. Topic: {topic['title']}
Requirements:
1. Three levels: basic understanding, deeper analysis, extended thinking;
2. One question per level, guiding the student toward a complete argument;
3. Language suited to {student_level} students; avoid being overly abstract.
"""
        guide_text = self._generate(prompt, max_new_tokens=512, temperature=0.5)
        guide_questions = [line.strip() for line in guide_text.splitlines() if line.strip()]
        return {
            "topic_title": topic["title"],
            "topic_description": topic["description"],
            "guide_questions": guide_questions[:3],
            "related_literature": topic["related_literature"][:3],
        }

    def dialogue_guide(self, topic: str, student_opinion: str) -> dict:
        """Guide the dialogue from the student's view and strengthen the argument."""
        prompt = f"""
As the critical-thinking dialogue tutor for course {self.course_id}, respond to the topic and student view below.
Topic: {topic}
Student view: {student_opinion}
Guidance requirements:
1. First acknowledge what is reasonable (if anything);
2. Ask targeted follow-up questions that expose gaps in the reasoning (e.g. insufficient evidence, shifting definitions);
3. Suggest how to improve the argument (e.g. add cases, cite theory);
4. Keep a friendly tone, encourage further thinking, and do not reject the view outright.
"""
        guide_response = self._generate(prompt, max_new_tokens=512, temperature=0.4)
        return {
            "guide_response": guide_response,
            "argument_logic_analysis": self._analyze_argument_logic(student_opinion),
        }

    def _analyze_argument_logic(self, opinion: str) -> dict:
        """Analyze the student's argument (claim, evidence, reasoning)."""
        prompt = f"""
Analyze the argument in the following student view: {opinion}
Output format:
Claim: xxx (clear/vague)
Evidence: xxx (sufficient/insufficient/none)
Reasoning: xxx (complete/has gaps/no reasoning)
Suggestions: xxx
"""
        analysis = self._generate(prompt, max_new_tokens=512, temperature=0.3)

        # Parse "key: value" lines into a dict (accept full-width colons too)
        analysis_dict = {}
        for line in analysis.splitlines():
            line = line.replace("：", ":")
            if ":" in line:
                key, value = line.split(":", 1)
                analysis_dict[key.strip()] = value.strip()
        return analysis_dict


# Test example
if __name__ == "__main__":
    dialogue_module = CriticalThinkingDialogueModule(course_id="PH101")  # a philosophy course

    # Generate a discussion topic
    topic = dialogue_module.generate_topic(student_level="undergraduate")
    print("Topic:", topic["topic_title"])
    print("Guiding questions:")
    for i, q in enumerate(topic["guide_questions"], 1):
        print(f"{i}. {q}")

    # Simulate a student view and guide the dialogue
    student_opinion = (
        "I think artificial intelligence will not replace humans, because humans have "
        "emotions and creativity, while AI only executes programs."
    )
    guide_result = dialogue_module.dialogue_guide(topic=topic["topic_title"], student_opinion=student_opinion)
    print(f"\nStudent view: {student_opinion}")
    print(f"Guiding response: {guide_result['guide_response']}")
    print(f"Argument analysis: {guide_result['argument_logic_analysis']}")

3. Science and engineering lab majors (physics / chemistry / engineering): a virtual-simulation loop

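Core scenario: building experimental skills (preview, virtual operation, data processing, fault diagnosis)

Closed-loop design:

Key code: virtual-experiment data processing and diagnosis module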

python

import json
from typing import List

import numpy as np
import pandas as pd
from scipy import stats


class VirtualExperimentDiagnosisModule:
    def __init__(self, experiment_id):
        self.experiment_id = experiment_id
        # Load the experiment's reference data and allowed error ranges
        with open(f"./data/experiment_{experiment_id}_standard.json", "r", encoding="utf-8") as f:
            self.standard_data = json.load(f)
        self.allowed_error = self.standard_data["allowed_error"]  # allowed error per metric (percent)

    def analyze_experiment_data(self, student_data: pd.DataFrame) -> dict:
        """Analyze the student's experiment data and diagnose errors."""
        # 1. Preprocess (remove outliers)
        cleaned_data = self._remove_outliers(student_data)

        # 2. Compute core metrics for comparison with the reference values
        standard_metrics = self.standard_data["metrics"]
        student_metrics = self._calculate_metrics(cleaned_data)

        # 3. Error analysis
        error_analysis = {}
        abnormal_metrics = []
        for metric, student_value in student_metrics.items():
            standard_value = standard_metrics[metric]
            error_rate = abs((student_value - standard_value) / standard_value) * 100
            is_abnormal = bool(error_rate > self.allowed_error[metric])
            error_analysis[metric] = {
                "student_value": round(float(student_value), 4),
                "standard_value": round(float(standard_value), 4),
                "error_rate": round(float(error_rate), 2),
                "is_abnormal": is_abnormal,
            }
            if is_abnormal:
                abnormal_metrics.append(metric)

        # 4. Diagnose the problem from the abnormal metrics
        problem_diagnosis = self._diagnose_problem(abnormal_metrics)

        # 5. Visualization (simplified to a text description)
        visualization = self._generate_visualization(cleaned_data, self.standard_data["standard_curve"])

        removed = len(student_data) - len(cleaned_data)
        return {
            "data_cleaning": f"{len(student_data)} raw rows, {len(cleaned_data)} after cleaning ({removed} outliers removed)",
            "metric_analysis": error_analysis,
            "abnormal_metrics": abnormal_metrics,
            "problem_diagnosis": problem_diagnosis,
            "visualization_description": visualization,
            "improvement_suggestions": self._generate_improvement_suggestions(problem_diagnosis),
        }

    def _remove_outliers(self, data: pd.DataFrame) -> pd.DataFrame:
        """Remove outliers with the 3-sigma rule."""
        # With scipy's default population standard deviation, |z| cannot exceed
        # sqrt(n - 1), so for small samples (n <= 9) this filter removes nothing.
        z_scores = np.abs(stats.zscore(data.select_dtypes(include=[np.number])))
        return data[(z_scores < 3).all(axis=1)]

    def _calculate_metrics(self, data: pd.DataFrame) -> dict:
        """Compute core experiment metrics (mean, peak, slope, and so on)."""
        metrics = {}
        # Example: resistance in a voltage-current experiment (Ohm's law)
        if self.experiment_id == "PHY201":
            metrics["resistance"] = np.mean(data["voltage"] / data["current"])
            metrics["voltage_peak"] = data["voltage"].max()
            metrics["current_stability"] = data["current"].std()
        return metrics

    def _diagnose_problem(self, abnormal_metrics: List) -> str:
        """Diagnose the experiment problem from the abnormal metrics."""
        problem_mapping = self.standard_data["problem_mapping"]
        for metric in abnormal_metrics:
            if metric in problem_mapping:
                return problem_mapping[metric]  # first matching metric wins
        return "No common problem matched; the cause may be operating error or device parameter settings"

    def _generate_visualization(self, student_data: pd.DataFrame, standard_curve: List) -> str:
        """Describe the data comparison in text (a real project could render a chart)."""
        # Example: voltage-current curve comparison. standard_curve is assumed to be
        # a list of [current, voltage] pairs; slopes come from a least-squares line fit.
        standard = np.asarray(standard_curve, dtype=float)
        standard_slope = np.polyfit(standard[:, 0], standard[:, 1], 1)[0]
        student_slope = np.polyfit(student_data["current"], student_data["voltage"], 1)[0]
        return (
            "Student curve vs. reference curve: "
            f"reference slope {round(float(standard_slope), 2)}, student slope {round(float(student_slope), 2)}"
        )

    def _generate_improvement_suggestions(self, problem: str) -> List:
        """Generate improvement suggestions from the diagnosis."""
        suggestion_mapping = self.standard_data["suggestion_mapping"]
        return suggestion_mapping.get(
            problem,
            ["Review the experiment steps", "Recalibrate device parameters", "Collect more data points"],
        )


# Test example
if __name__ == "__main__":
    # Diagnosis module for the physics experiment "voltage-current measurement"
    exp_diagnosis = VirtualExperimentDiagnosisModule(experiment_id="PHY201")

    # Simulated student data; the 2.0 A reading is an outlier, but with only
    # eight rows its z-score stays below 3, so the 3-sigma filter keeps it
    student_data = pd.DataFrame({
        "current": [0.1, 0.2, 0.3, 0.4, 0.5, 2.0, 0.6, 0.7],
        "voltage": [0.2, 0.4, 0.6, 0.8, 1.0, 3.5, 1.2, 1.4],
    })

    # Analyze the experiment data
    analysis_result = exp_diagnosis.analyze_experiment_data(student_data)
    print("Experiment diagnosis result:")
    print(f"Data cleaning: {analysis_result['data_cleaning']}")
    print(f"Abnormal metrics: {analysis_result['abnormal_metrics']}")
    print(f"Diagnosis: {analysis_result['problem_diagnosis']}")
    print(f"Improvement suggestions: {analysis_result['improvement_suggestions']}")

III. University rollout methodology: from pilot to campus-wide adoption

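1. A three-step rollout (validated in practice at Northeastern University, 东北大学)

Step 1: small-scale pilot (1-2 core courses)

- Selection criteria: courses with a broad audience and strong practical demand (e.g. "Programming Practice" for computer science, "Basic Experiments" for science and engineering);
- Data preparation: organize the syllabus, the knowledge-point system, and exercise / experiment data (reuse existing teaching resources first);
- Technical deployment: use a "cloud server + lightweight on-device client" model to lower the hardware barrier;
- Feedback collection: gather feedback from teachers and students through surveys and interviews, and iterate on the core pain points.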
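For the data-preparation item, one check worth running before a pilot is whether the knowledge-point file is internally consistent. The sketch below assumes the CSV columns used by `KnowledgeMasteryGraph` earlier in this article (`kp_id`, `kp_name`, `difficulty`, `exam_weight`, `prerequisite_kp`); the function name `validate_knowledge_points` and the sample rows are hypothetical.

```python
import csv
import io
from collections import deque
from typing import List


def validate_knowledge_points(csv_text: str) -> List[str]:
    """Return human-readable problems found in a knowledge-point CSV."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    problems = []

    # Duplicate IDs would silently overwrite each other in the graph.
    seen = set()
    for row in rows:
        kp_id = row["kp_id"].strip()
        if kp_id in seen:
            problems.append(f"duplicate kp_id: {kp_id}")
        seen.add(kp_id)

    # Build prerequisite edges, reporting references to unknown IDs.
    successors = {kp_id: [] for kp_id in seen}
    indegree = {kp_id: 0 for kp_id in seen}
    for row in rows:
        kp_id = row["kp_id"].strip()
        cell = row.get("prerequisite_kp") or ""
        for prereq in (p.strip() for p in cell.split(",")):
            if not prereq:
                continue
            if prereq not in seen:
                problems.append(f"{kp_id}: unknown prerequisite {prereq}")
                continue
            successors[prereq].append(kp_id)
            indegree[kp_id] += 1

    # Kahn's algorithm: if not every node can be visited, there is a cycle.
    queue = deque(k for k, d in indegree.items() if d == 0)
    visited = 0
    while queue:
        node = queue.popleft()
        visited += 1
        for nxt in successors[node]:
            indegree[nxt] -= 1
            if indegree[nxt] == 0:
                queue.append(nxt)
    if visited < len(seen):
        blocked = sorted(k for k, d in indegree.items() if d > 0)
        problems.append(f"prerequisite cycle affects: {', '.join(blocked)}")
    return problems


# Hypothetical sample rows, for illustration only.
SAMPLE = '''kp_id,kp_name,difficulty,exam_weight,prerequisite_kp
K101,Array basics,1,0.2,
K102,Linked list operations,2,0.3,K101
K103,Stacks and queues,2,0.3,"K101,K102"
K104,Trees,3,0.2,K105
'''

if __name__ == "__main__":
    for problem in validate_knowledge_points(SAMPLE):
        print(problem)
```

A cycle in the prerequisites matters because any "review prerequisites first" recommendation would otherwise loop; catching it at import time is cheaper than debugging it in front of students.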

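Step 2: cross-discipline adaptation (3-5 disciplines of different types)

- Discipline selection: cover programming, liberal-arts critical thinking, and science and engineering labs, to test cross-discipline generality;
- Per-discipline adaptation: adjust the core modules to each discipline (e.g. stronger dialogue guidance for liberal arts, stronger simulation and data processing for science and engineering);
- Teacher training: train teachers to use the AI agent, and show them how to define knowledge points and adjust tutoring strategies;
- Effect evaluation: set up a control group (classes taught traditionally) and evaluate across grades, learning efficiency, and teacher and student satisfaction.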
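The control-group comparison in the last item can be sketched with the standard library alone. The example below computes the mean difference, Welch's t statistic with its degrees of freedom, and Cohen's d for two sets of scores. The function name `compare_groups` and the scores are made up for illustration; this is a starting point rather than a full evaluation design, and it deliberately reports no p-value.

```python
import math
from statistics import mean, variance
from typing import Dict, List


def compare_groups(treatment: List[float], control: List[float]) -> Dict[str, float]:
    """Compare an AI-assisted class (treatment) with a traditional class (control)."""
    n1, n2 = len(treatment), len(control)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two scores")

    m1, m2 = mean(treatment), mean(control)
    v1, v2 = variance(treatment), variance(control)  # sample variances (n - 1)

    se_sq = v1 / n1 + v2 / n2
    if se_sq == 0:
        raise ValueError("both groups have zero variance")

    # Welch's t statistic does not assume equal variances in the two groups.
    welch_t = (m1 - m2) / math.sqrt(se_sq)
    # Welch-Satterthwaite approximation of the degrees of freedom.
    welch_df = se_sq ** 2 / ((v1 / n1) ** 2 / (n1 - 1) + (v2 / n2) ** 2 / (n2 - 1))

    # Cohen's d with a pooled standard deviation as the effect size.
    pooled_sd = math.sqrt(((n1 - 1) * v1 + (n2 - 1) * v2) / (n1 + n2 - 2))
    cohens_d = (m1 - m2) / pooled_sd

    return {
        "mean_diff": m1 - m2,
        "welch_t": welch_t,
        "welch_df": welch_df,
        "cohens_d": cohens_d,
    }


if __name__ == "__main__":
    # Made-up final-exam scores, for illustration only.
    ai_class = [78, 85, 90, 72, 88]
    traditional_class = [70, 75, 80, 68, 77]
    for name, value in compare_groups(ai_class, traditional_class).items():
        print(f"{name}: {value:.3f}")
```

Reporting an effect size alongside the test statistic helps when class sizes differ between pilot courses, since the t statistic alone grows with sample size.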

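Step 3: scaling up (campus-wide coverage)

- Platform integration: connect to the university's existing teaching platforms (e.g. Chaoxing 超星, Zhihuishu 智慧树) so that data flows between them;
- Shared resource building: set up a joint "teacher + technical team" mechanism to keep enriching the knowledge-point, exercise, and practice libraries;
- Operations: build a dedicated operations team to handle technical failures, data security, and similar issues;
- Continuous iteration: use campus-wide teaching data to improve model performance and teaching strategies.

2. Pitfalls guide (common problems in university rollouts)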

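| Common problem | Solution |
|---|---|
| Teachers resist AI in teaching | Stress "AI assists, it does not replace", and show how AI actually reduces repetitive work (grading, Q&A); provide configurable permissions so teachers stay in control of the core teaching steps |
| Students over-rely on AI | Design an "AI guidance + independent thinking" mechanism (coding tutoring gives hints rather than complete code; critical-thinking dialogue asks follow-up questions rather than giving answers); set limits on AI usage time |
| Data security and privacy risks | Store student data locally and upload only de-identified statistics; comply with GDPR, China's Personal Information Protection Law, and similar requirements; run regular security audits |
| Poor cross-discipline fit | Build discipline-specific configuration templates that let teachers customize knowledge-point relations, tutoring tone, and practice task types; fine-tune the model for key disciplines |
| Insufficient hardware | Offer tiered deployment: core functions (Q&A, diagnosis) run in the cloud, while lightweight functions (preview, simple exercises) work offline on the device |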
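As an illustration of the "upload only de-identified statistics" row, the sketch below keeps per-student records local and builds an upload payload that contains only per-knowledge-point aggregates. The names `pseudonymize` and `build_upload_payload`, the record fields, and the minimum group size of 5 are assumptions; this is a sketch of the idea, not a compliance guarantee.

```python
import hashlib
import hmac
from statistics import mean
from typing import Dict, List


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed hash; the key never leaves the campus."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def build_upload_payload(records: List[Dict], secret_key: bytes,
                         min_group_size: int = 5) -> List[Dict]:
    """Aggregate local records into per-knowledge-point statistics.

    records: [{"student_id": ..., "kp_id": ..., "mastery_score": ...}, ...]
    """
    by_kp: Dict[str, Dict[str, float]] = {}
    for record in records:
        # Key by pseudonym so each student counts once (latest score wins).
        pseudonym = pseudonymize(record["student_id"], secret_key)
        by_kp.setdefault(record["kp_id"], {})[pseudonym] = record["mastery_score"]

    payload = []
    for kp_id, scores in sorted(by_kp.items()):
        if len(scores) < min_group_size:
            continue  # suppress small groups, where an average could identify someone
        payload.append({
            "kp_id": kp_id,
            "student_count": len(scores),
            "avg_mastery": round(mean(list(scores.values())), 2),
        })
    return payload


if __name__ == "__main__":
    key = b"replace-with-a-secret-kept-on-campus"  # hypothetical key
    local_records = [
        {"student_id": f"S{i}", "kp_id": "K101", "mastery_score": 0.5} for i in range(5)
    ] + [{"student_id": "S0", "kp_id": "K102", "mastery_score": 0.9}]
    print(build_upload_payload(local_records, key))
```

The keyed hash (HMAC) rather than a plain hash is a deliberate choice: student IDs follow predictable patterns, so an unkeyed hash could be reversed by enumerating candidates.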

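IV. Summary and next-issue preview

In issue 37, with discipline scenarios as the core, closed-loop logic as the skeleton, and deployable tools as the support, we restructured how the higher-education AI agent is presented. We broke down the underlying design of the cross-discipline "guide, diagnose, practice" closed loop, provided scenario-based solutions and hands-on code for three discipline types (programming, liberal-arts critical thinking, and science and engineering labs), and shared a university rollout methodology from pilot to scale. The core value lies in moving beyond a "stack of modules", so that the AI agent fits what teaching in each discipline actually requires and becomes a personalized teaching partner for teachers and students.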