ReplicaSet controller

Create the controller

# Generate a Deployment manifest as a starting point; kind is changed to ReplicaSet while editing
[root@K8S-master ~]# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > repset.yml

[root@K8S-master ~]# vim repset.yml

apiVersion: apps/v1
kind: ReplicaSet
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp

[root@K8S-master ~]# kubectl apply -f repset.yml

# In a new shell:

[root@K8S-master ~]# watch -n 1 kubectl get pods --show-labels

Every 1.0s: kubectl get pods --show-labels          master: Sat Apr 11 10:08:32 2026

NAME                          READY   STATUS    RESTARTS   AGE   LABELS
webcluster-77c87d9946-296p5   1/1     Running   0          23m   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-lpsf9   1/1     Running   0          21m   app=webcluster,pod-template-hash=77c87d9946

Test the behavior

[root@K8S-master ~]# vim repset.yml

....
spec:
  replicas: 4
....

[root@K8S-master ~]# kubectl apply -f repset.yml

[root@K8S-master ~]# watch -n 1 kubectl get pods --show-labels

Every 1.0s: kubectl get pods --show-labels          master: Sat Apr 11 10:11:06 2026

NAME                          READY   STATUS    RESTARTS   AGE   LABELS
webcluster-77c87d9946-296p5   1/1     Running   0          24m   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-ckwz2   1/1     Running   0          3s    app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-lpsf9   1/1     Running   0          22m   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-xtdbd   1/1     Running   0          3s    app=webcluster,pod-template-hash=77c87d9946
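Raising `replicas` from 2 to 4 made the ReplicaSet create exactly two new pods (`ckwz2`, `xtdbd`) and leave the two existing ones alone. A minimal Python sketch of that comparison, assuming the controller only compares the desired count with the number of pods it already owns (the real controller also deals with ownership, readiness and batched creation); `reconcile` is a hypothetical helper, not a Kubernetes API:

```python
from __future__ import annotations


def reconcile(desired: int, owned_pods: list[str]) -> dict[str, int]:
    """Toy ReplicaSet step: how many pods to create or delete so that the
    number of owned pods equals spec.replicas."""
    diff = desired - len(owned_pods)
    return {"create": max(diff, 0), "delete": max(-diff, 0)}


# State from the transcript: two pods running, replicas raised from 2 to 4.
running = ["webcluster-77c87d9946-296p5", "webcluster-77c87d9946-lpsf9"]
print(reconcile(4, running))  # {'create': 2, 'delete': 0}
print(reconcile(2, running))  # {'create': 0, 'delete': 0}
```

Lowering `replicas` works the same way in reverse: a negative difference becomes a number of pods to delete.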

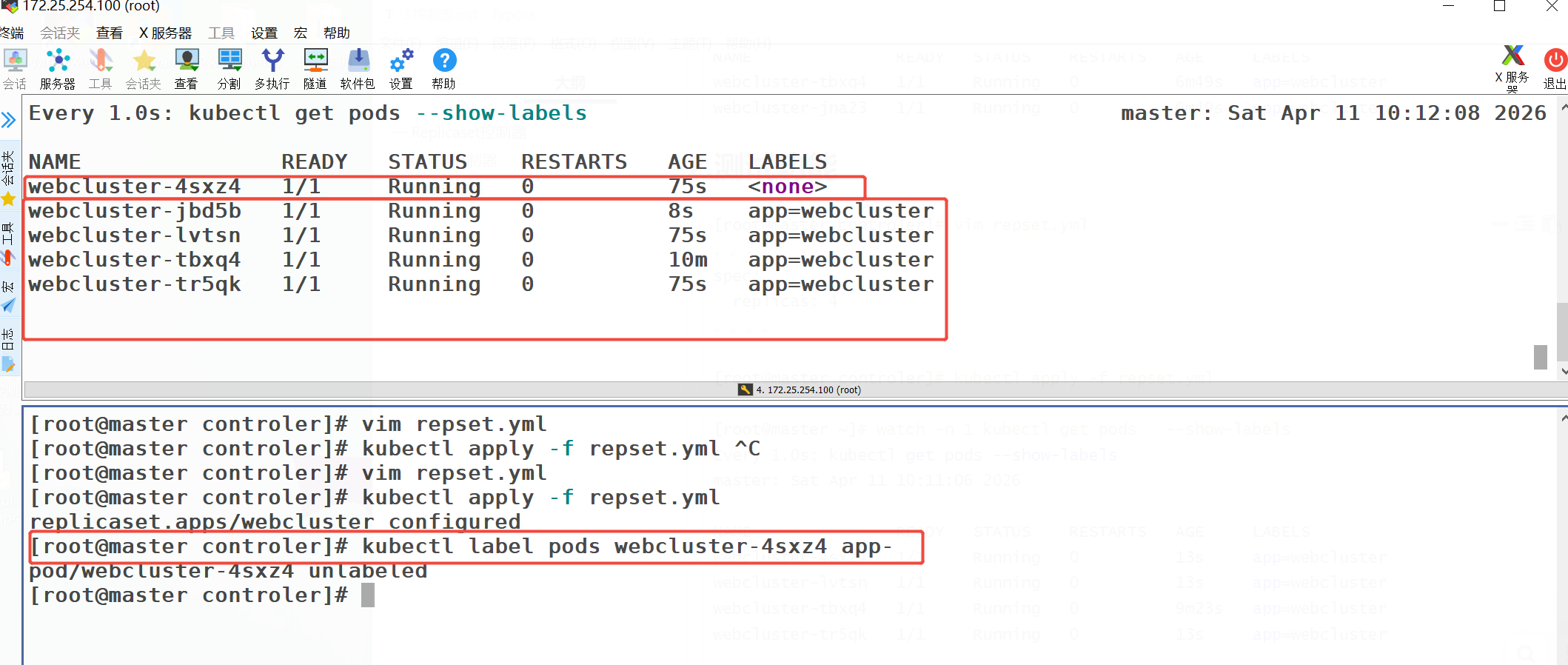

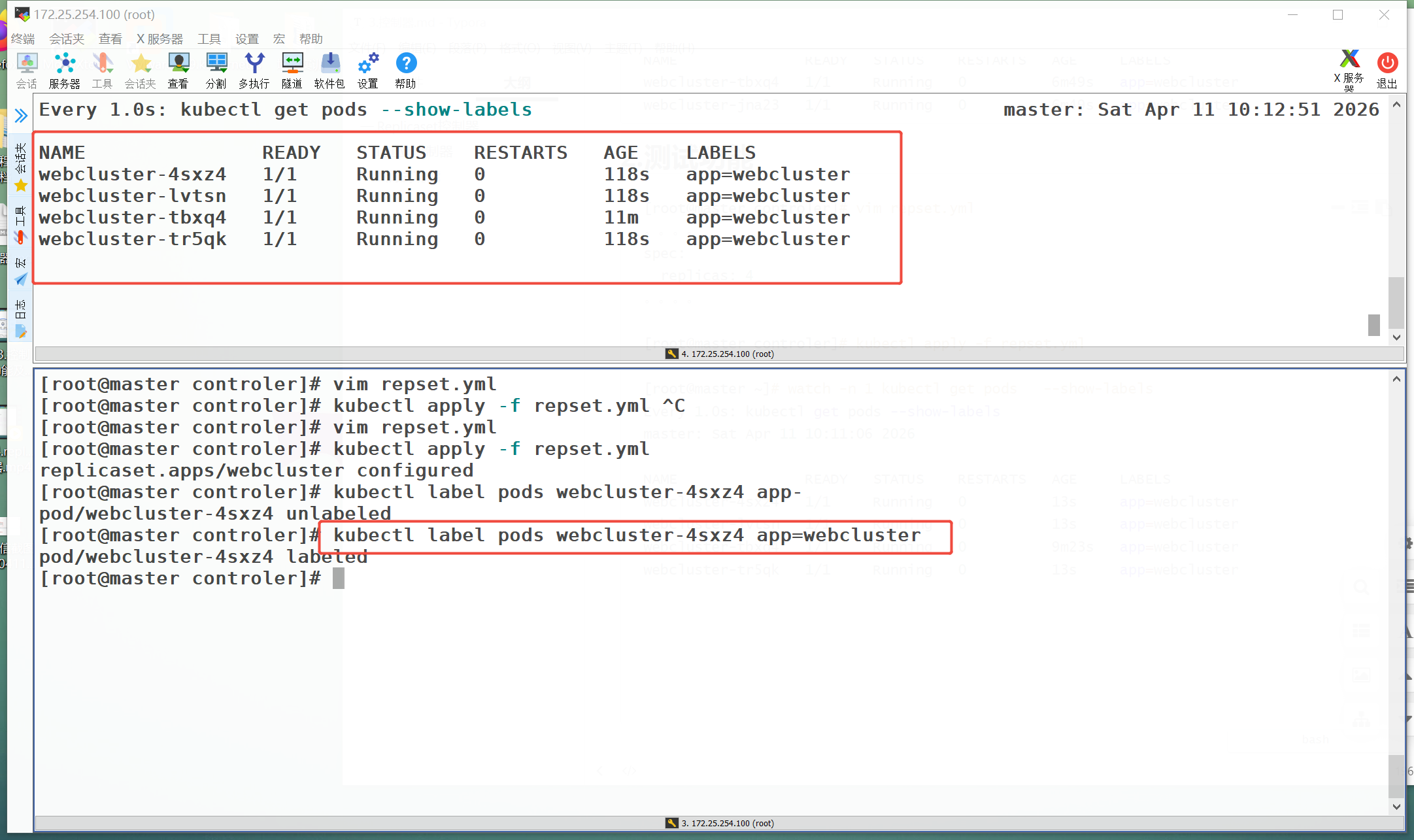

[root@K8S-master ~]# kubectl label pods webcluster-77c87d9946-296p5 app-

pod/webcluster-77c87d9946-296p5 unlabeled

[root@K8S-master ~]# kubectl get pods --show-labels

NAME                          READY   STATUS    RESTARTS   AGE   LABELS
webcluster-77c87d9946-296p5   1/1     Running   0          26m   pod-template-hash=77c87d9946
webcluster-77c87d9946-chlgv   1/1     Running   0          37s   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-lpsf9   1/1     Running   0          24m   app=webcluster,pod-template-hash=77c87d9946

[root@K8S-master ~]# kubectl label pods webcluster-77c87d9946-296p5 app=webcluster

pod/webcluster-77c87d9946-296p5 labeled

[root@K8S-master ~]# kubectl get pods --show-labels

NAME                          READY   STATUS    RESTARTS   AGE   LABELS
webcluster-77c87d9946-296p5   1/1     Running   0          26m   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-lpsf9   1/1     Running   0          24m   app=webcluster,pod-template-hash=77c87d9946
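Removing the `app` label took pod `296p5` out of the ReplicaSet's selector, so the controller counted only one matching pod and started `chlgv`; putting the label back produced three matching pods for two replicas, and one of them (here the newest, `chlgv`) was deleted. A toy Python sketch of the `matchLabels` check, assuming plain key/value equality; `matches` and `owned` are hypothetical helpers, and the real controller additionally uses ownerReferences when it releases or adopts pods:

```python
from __future__ import annotations


def matches(selector: dict[str, str], labels: dict[str, str]) -> bool:
    """matchLabels semantics: every selector key/value must be present on the pod."""
    return all(labels.get(key) == value for key, value in selector.items())


def owned(selector: dict[str, str], pods: dict[str, dict[str, str]]) -> list[str]:
    """Names of the pods the selector counts."""
    return [name for name, labels in pods.items() if matches(selector, labels)]


selector = {"app": "webcluster"}
hash_label = {"pod-template-hash": "77c87d9946"}
# State after the replacement pod was created.
pods = {
    "webcluster-77c87d9946-296p5": dict(hash_label),  # app label removed
    "webcluster-77c87d9946-chlgv": {"app": "webcluster", **hash_label},
    "webcluster-77c87d9946-lpsf9": {"app": "webcluster", **hash_label},
}
print(owned(selector, pods))  # ['webcluster-77c87d9946-chlgv', 'webcluster-77c87d9946-lpsf9']

# Relabel 296p5: three pods now match while replicas is 2, so one is surplus.
pods["webcluster-77c87d9946-296p5"]["app"] = "webcluster"
print(len(owned(selector, pods)) - 2)  # 1
```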

Deployment

Monitoring

[root@K8S-master ~]# watch -n 1 "kubectl get pods --show-labels;echo ====;kubectl get replicasets.apps"

Create the Deployment controller

[root@K8S-master ~]# kubectl create deployment webcluster --image myapp:v1 --dry-run=client -o yaml > deployment.yml

[root@K8S-master ~]# vim deployment.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  minReadySeconds: 5
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        # resources: {}

[root@K8S-master ~]# kubectl apply -f deployment.yml

# Output in the monitoring window:

Every 1.0s: kubectl get pods --show-labels;echo ====;kubectl get replicase...   master: Sat Apr 11 10:43:24 2026

NAME                          READY   STATUS    RESTARTS   AGE   LABELS
webcluster-77c87d9946-5xktb   1/1     Running   0          49s   app=webcluster,pod-template-hash=77c87d9946
webcluster-77c87d9946-ll6bf   1/1     Running   0          55s   app=webcluster,pod-template-hash=77c87d9946
====
NAME                    DESIRED   CURRENT   READY   AGE
webcluster-6c8b4bb9d7   0         0         0       3m39s
webcluster-77c87d9946   2         2         2       12m
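The second table shows two ReplicaSets owned by the same Deployment. The Deployment names each ReplicaSet after a hash of its pod template (the `pod-template-hash` label), so every distinct template gets its own ReplicaSet, and returning to an earlier template reuses the ReplicaSet that already exists; this is why the rollback later runs `77c87d9946` pods again. A toy Python sketch of that naming, assuming a truncated SHA-256 of a JSON dump as a stand-in: Kubernetes uses its own hash function, so these strings will not match the real names, only the behavior does.

```python
import copy
import hashlib
import json


def template_hash(template: dict) -> str:
    """Stand-in for pod-template-hash: a short digest of the canonical template."""
    canonical = json.dumps(template, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:10]


def replicaset_name(deployment: str, template: dict) -> str:
    return f"{deployment}-{template_hash(template)}"


v1 = {
    "metadata": {"labels": {"app": "webcluster"}},
    "spec": {"containers": [{"name": "myapp", "image": "myapp:v1"}]},
}
v2 = copy.deepcopy(v1)
v2["spec"]["containers"][0]["image"] = "myapp:v2"          # the upgrade
back_to_v1 = copy.deepcopy(v2)
back_to_v1["spec"]["containers"][0]["image"] = "myapp:v1"  # the rollback

print(replicaset_name("webcluster", v1) == replicaset_name("webcluster", v2))          # False
print(replicaset_name("webcluster", v1) == replicaset_name("webcluster", back_to_v1))  # True
```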

# Expose the Deployment as a Service

[root@K8S-master ~]# kubectl expose deployment webcluster --port 80 --target-port 80

[root@K8S-master ~]# kubectl describe services webcluster

Name: webcluster

Namespace: default

Labels: app=webcluster

Annotations: <none>

Selector: app=webcluster

Type: ClusterIP

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.96.117.18

IPs: 10.96.117.18

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 10.244.1.7:80,10.244.2.28:80

Session Affinity: None

Internal Traffic Policy: Cluster

Events: <none>
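The `Endpoints` line lists the pod IPs the Service forwards to: the pods selected by `app=webcluster` (the selector `kubectl expose` copied from the Deployment), restricted to pods that are Ready. A hedged Python sketch of that selection; the `Pod` class, the pod names and the third and fourth pods are hypothetical, and only the two ready IPs come from the output above:

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict[str, str]
    ready: bool


def endpoints(selector: dict[str, str], pods: list[Pod], target_port: int) -> list[str]:
    """ip:port for every Ready pod whose labels satisfy the Service selector."""
    return sorted(
        f"{pod.ip}:{target_port}"
        for pod in pods
        if pod.ready and all(pod.labels.get(k) == v for k, v in selector.items())
    )


pods = [
    Pod("pod-a", "10.244.1.7", {"app": "webcluster"}, ready=True),
    Pod("pod-b", "10.244.2.28", {"app": "webcluster"}, ready=True),
    Pod("pod-c", "10.244.1.9", {"app": "webcluster"}, ready=False),  # not Ready yet
    Pod("pod-d", "10.244.2.30", {"app": "other"}, ready=True),       # label does not match
]
print(endpoints({"app": "webcluster"}, pods, 80))  # ['10.244.1.7:80', '10.244.2.28:80']
```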

# Access the Service through its ClusterIP:

[root@K8S-master ~]# curl 10.96.117.18

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@K8S-master ~]# kubectl exec -it webcluster-77c87d9946-pjp9d -- wget -O- -T 3 http://localhost:80

Connecting to localhost:80 (127.0.0.1:80)

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

- 100% |*******************************************************| 65 0:00:00 ETA

Upgrade and rollback

# Upgrade

[root@K8S-master ~]# vim deployment.yml

....
spec:
  minReadySeconds: 5
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v2   # upgrade to version 2
        name: myapp
....

[root@K8S-master ~]# kubectl apply -f deployment.yml

deployment.apps/webcluster configured

[root@K8S-master ~]# kubectl exec -it webcluster-6c8b4bb9d7-knzqj -- wget -O- -T 3 http://localhost:80

Connecting to localhost:80 (127.0.0.1:80)

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

- 100% |*******************************| 65 0:00:00 ETA

# Rollback

[root@K8S-master ~]# vim deployment.yml

....
spec:
  minReadySeconds: 5
  replicas: 2
  selector:
    matchLabels:
      app: webcluster
  # strategy: {}
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1   # roll back to version 1
        name: myapp
....

[root@K8S-master ~]# kubectl apply -f deployment.yml

[root@K8S-master ~]# kubectl exec -it webcluster-77c87d9946-5xktb -- wget -O- -T 3 http://localhost:80

Connecting to localhost:80 (127.0.0.1:80)

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

- 100% |*******************************| 65 0:00:00 ETA

Managing and tuning version updates

[root@K8S-master ~]# vim deployment.yml

spec:
  minReadySeconds: 5
  replicas: 6        # set the pod count to 6 so the rollout is easier to observe
  selector:

[root@K8S-master ~]# kubectl apply -f deployment.yml

deployment.apps/webcluster configured

View the update strategy

[root@K8S-master ~]# kubectl describe deployments.apps webcluster

RollingUpdateStrategy: 25% max unavailable, 25% max surge   # default values

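# Full output, captured after the custom strategy from the next step (maxSurge: 1, maxUnavailable: 0) had been applied:
[root@K8S-master ~]# kubectl describe deployments.apps webcluster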

Name: webcluster

Namespace: default

CreationTimestamp: Sat, 11 Apr 2026 10:54:43 +0800

Labels: app=webcluster

Annotations: deployment.kubernetes.io/revision: 5

Selector: app=webcluster

Replicas: 6 desired | 6 updated | 6 total | 6 available | 0 unavailable

StrategyType: RollingUpdate

MinReadySeconds: 5

RollingUpdateStrategy: 0 max unavailable, 1 max surge

Pod Template:

Labels: app=webcluster

Containers:

myapp:

Image: myapp:v1

Port: <none>

Host Port: <none>

Environment: <none>

Mounts: <none>

Volumes: <none>

Node-Selectors: <none>

Tolerations: <none>

Conditions:

Type Status Reason

---- ------ ------

Available True MinimumReplicasAvailable

Progressing True NewReplicaSetAvailable

OldReplicaSets: webcluster-6c8b4bb9d7 (0/0 replicas created)

NewReplicaSet: webcluster-77c87d9946 (6/6 replicas created)

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal ScalingReplicaSet 44m deployment-controller Scaled up replica set webcluster-77c87d9946 from 0 to 2

Normal ScalingReplicaSet 35m deployment-controller Scaled down replica set webcluster-77c87d9946 from 2 to 1

Normal ScalingReplicaSet 35m deployment-controller Scaled down replica set webcluster-77c87d9946 from 1 to 0

Normal ScalingReplicaSet 33m deployment-controller Scaled up replica set webcluster-77c87d9946 from 0 to 1

Normal ScalingReplicaSet 33m deployment-controller Scaled down replica set webcluster-6c8b4bb9d7 from 2 to 1

Normal ScalingReplicaSet 33m deployment-controller Scaled up replica set webcluster-77c87d9946 from 1 to 2

Normal ScalingReplicaSet 32m deployment-controller Scaled down replica set webcluster-6c8b4bb9d7 from 1 to 0

Normal ScalingReplicaSet 2m32s deployment-controller Scaled up replica set webcluster-77c87d9946 from 2 to 6

Normal ScalingReplicaSet 2m6s (x2 over 35m) deployment-controller Scaled up replica set webcluster-6c8b4bb9d7 from 0 to 1

Normal ScalingReplicaSet 2m1s (x2 over 35m) deployment-controller Scaled up replica set webcluster-6c8b4bb9d7 from 1 to 2

Normal ScalingReplicaSet 2m1s deployment-controller Scaled down replica set webcluster-77c87d9946 from 6 to 5

Normal ScalingReplicaSet 116s deployment-controller Scaled down replica set webcluster-77c87d9946 from 5 to 4

Normal ScalingReplicaSet 116s deployment-controller Scaled up replica set webcluster-6c8b4bb9d7 from 2 to 3

Normal ScalingReplicaSet 110s deployment-controller Scaled down replica set webcluster-77c87d9946 from 4 to 3

Normal ScalingReplicaSet 110s deployment-controller Scaled up replica set webcluster-6c8b4bb9d7 from 3 to 4

Normal ScalingReplicaSet 104s deployment-controller Scaled down replica set webcluster-77c87d9946 from 3 to 2

Normal ScalingReplicaSet 42s (x15 over 104s) deployment-controller (combined from similar events): Scaled up replica set webcluster-77c87d9946 from 5 to 6
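The events show a rollout as a series of small steps: the new ReplicaSet is scaled up and the old one scaled down in alternation, so that the total pod count and the number of available pods stay inside the limits set by `maxSurge` and `maxUnavailable`. A simplified Python simulation, assuming every new pod becomes available immediately (the real controller also waits for readiness and `minReadySeconds`, which is why the real events advance one pod at a time); `rolling_update` is a hypothetical helper:

```python
from __future__ import annotations


def rolling_update(replicas: int, max_surge: int, max_unavailable: int) -> list[tuple[int, int]]:
    """Return the (old, new) pod counts after each step of a toy rolling update."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("maxSurge and maxUnavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]
    min_available = replicas - max_unavailable
    while old > 0 or new < replicas:
        # Scale the new ReplicaSet up, never exceeding replicas + maxSurge pods in total.
        room = replicas + max_surge - (old + new)
        new += max(0, min(room, replicas - new))
        # Scale the old ReplicaSet down, keeping at least replicas - maxUnavailable available.
        old -= max(0, min(old, old + new - min_available))
        steps.append((old, new))
    return steps


print(rolling_update(6, 1, 0))
# [(6, 0), (5, 1), (4, 2), (3, 3), (2, 4), (1, 5), (0, 6)]
print(rolling_update(6, 2, 1))  # 25%/25% of 6 pods: maxSurge rounds up to 2, maxUnavailable down to 1
# [(6, 0), (3, 2), (0, 5), (0, 6)]
```

With `maxSurge: 1, maxUnavailable: 0` the sketch never has more than 7 pods and never fewer than 6 available, which is the trade-off the next step configures.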

Set the update strategy

[root@K8S-master ~]# vim deployment.yml

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webcluster
  name: webcluster
spec:
  minReadySeconds: 5      # a new pod must stay Ready for 5 s before it counts as available
  replicas: 6
  selector:
    matchLabels:
      app: webcluster
  strategy:               # update strategy
    rollingUpdate:
      maxSurge: 1         # how many pods may exist above spec.replicas during the update
      maxUnavailable: 0   # how many pods below spec.replicas may be unavailable during the update
  template:
    metadata:
      labels:
        app: webcluster
    spec:
      containers:
      - image: myapp:v1
        name: myapp
        #resources: {}

[root@K8S-master ~]# kubectl apply -f deployment.yml

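maxSurge: 1
- Meaning: the maximum number of pods that may be created above the desired pod count during a rolling update
- A value of 1 means there can be at most 1 pod more than the number defined by replicas
- Can be an absolute number (e.g. 1) or a percentage (e.g. 25%)

maxUnavailable: 0
- Meaning: the maximum number of pods that may be unavailable during a rolling update
- A value of 0 means no pod may be unavailable during the update
- Can be an absolute number or a percentage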
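Percentages are converted into pod counts against `replicas`: `maxSurge` rounds up and `maxUnavailable` rounds down. With the default 25%/25% and 6 replicas that allows at most 8 pods in total and requires at least 5 available, while `maxSurge: 1` / `maxUnavailable: 0` keeps between 6 available and 7 total. A small Python sketch of the conversion; `resolve` and `bounds` are hypothetical names, and the fallback of `maxUnavailable` to 1 when both values round down to 0 follows the Deployment controller's behavior:

```python
from __future__ import annotations


def resolve(value: int | str, replicas: int, round_up: bool) -> int:
    """Turn an absolute value or an 'NN%' string into a number of pods."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1])  # percent * replicas, still scaled by 100
        return -(-scaled // 100) if round_up else scaled // 100
    return int(value)


def bounds(replicas: int, max_surge: int | str, max_unavailable: int | str) -> tuple[int, int]:
    """Return (minimum available pods, maximum total pods) during a rollout."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        unavailable = 1  # both rounded to 0: allow one unavailable pod so the rollout can progress
    return replicas - unavailable, replicas + surge


print(bounds(6, "25%", "25%"))  # (5, 8)
print(bounds(6, 1, 0))          # (6, 7)
```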

Pausing and resuming updates

[root@K8S-master ~]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION   CHANGE-CAUSE
7          <none>
8          <none>

[root@K8S-master ~]# kubectl rollout pause deployment webcluster   # pause the rollout

deployment.apps/webcluster paused

[root@K8S-master ~]# vim deployment.yml

...
      containers:
      - image: myapp:v2
        name: myapp
...

[root@K8S-master ~]# kubectl apply -f deployment.yml   # applied successfully, but no rollout shows up in the monitoring window

deployment.apps/webcluster configured

[root@K8S-master ~]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION   CHANGE-CAUSE
5          <none>
6          <none>

[root@K8S-master ~]# kubectl rollout resume deployment webcluster   # resume the rollout

deployment.apps/webcluster resumed
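While the Deployment is paused, `kubectl apply` still updates the stored spec, but the controller does not start a rollout; the pending change is rolled out as a new revision only after `kubectl rollout resume`. A toy Python model of that behavior, assuming the image is the only field that changes and that revisions simply count up from 1 (real revision numbers are whatever the cluster has reached); the `ToyDeployment` class is hypothetical:

```python
class ToyDeployment:
    """Hypothetical model of pause/resume: while paused, spec edits are stored
    but not rolled out; resume rolls out whatever is pending."""

    def __init__(self, image: str) -> None:
        self.paused = False
        self.spec_image = image  # what `kubectl apply` last stored
        self.live_image = image  # what the running pods actually use
        self.revision = 1

    def apply(self, image: str) -> None:
        self.spec_image = image
        self._sync()

    def pause(self) -> None:
        self.paused = True

    def resume(self) -> None:
        self.paused = False
        self._sync()

    def _sync(self) -> None:
        # A rollout starts only when not paused and the template actually changed.
        if not self.paused and self.spec_image != self.live_image:
            self.live_image = self.spec_image
            self.revision += 1


d = ToyDeployment("myapp:v1")
d.pause()
d.apply("myapp:v2")
print(d.live_image, d.revision)  # myapp:v1 1
d.resume()
print(d.live_image, d.revision)  # myapp:v2 2
```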

[root@K8S-master ~]# kubectl rollout history deployment webcluster

deployment.apps/webcluster

REVISION   CHANGE-CAUSE
6          <none>
7          <none>

DaemonSet

[root@K8S-master ~]# kubectl create deployment daemonset --image myapp:v1 --dry-run=client -o yaml > daemonset.yml

[root@K8S-master ~]# vim daemonset.yml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  labels:
    app: daemonset
  name: daemonset
spec:
  selector:
    matchLabels:
      app: daemonset
  template:
    metadata:
      labels:
        app: daemonset
    spec:
      containers:
      - image: myapp:v1
        name: myapp

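[root@K8S-master ~]# kubectl apply -f daemonset.yml

# Bring up an additional host, node3, apply every setting that was used when the cluster was initialized, and make sure all required services are running

# On the master, generate a new token for registering hosts with the cluster (--print-join-command prints the complete join command)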

[root@K8S-master ~]# kubeadm token create --print-join-command

kubeadm join 172.25.254.100:6443 --token lqcz14.6f4krq91w75h58bt --discovery-token-ca-cert-hash sha256:6b5950ef2cdba85d6dfdb564ee90d4187fa3d341767dc9852cbdd5c9dee4f927

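# Run the printed join command on node3 (not on the master); --cri-socket points kubeadm at the cri-dockerd socket
[root@node3 ~]# kubeadm join 172.25.254.100:6443 --token lqcz14.6f4krq91w75h58bt --discovery-token-ca-cert-hash sha256:6b5950ef2cdba85d6dfdb564ee90d4187fa3d341767dc9852cbdd5c9dee4f927 --cri-socket unix:///var/run/cri-dockerd.sock

# As soon as node3 joins the cluster, the specified pod starts running on node3 immediately; this happens because of the DaemonSet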
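A DaemonSet has no `replicas` field: its desired pod count is the number of eligible nodes, one pod each, so adding node3 raises it by one. A toy Python sketch, assuming eligibility depends only on `NoSchedule` taints. kubeadm taints the control-plane node with `node-role.kubernetes.io/control-plane`, so the master gets no DaemonSet pod unless the template tolerates that taint. The worker names `node1` and `node2` are hypothetical:

```python
from __future__ import annotations


def daemonset_nodes(node_taints: dict[str, set[str]], tolerated: set[str]) -> list[str]:
    """Nodes that should run exactly one DaemonSet pod: those whose
    NoSchedule taint keys are all tolerated by the pod template."""
    return sorted(node for node, taints in node_taints.items() if taints <= tolerated)


cluster = {
    "master": {"node-role.kubernetes.io/control-plane"},
    "node1": set(),  # hypothetical worker
    "node2": set(),  # hypothetical worker
}
print(daemonset_nodes(cluster, tolerated=set()))  # ['node1', 'node2']

cluster["node3"] = set()  # node3 joins the cluster
print(daemonset_nodes(cluster, tolerated=set()))  # ['node1', 'node2', 'node3']

# Tolerating the control-plane taint would add the master as well.
print(daemonset_nodes(cluster, {"node-role.kubernetes.io/control-plane"}))
# ['master', 'node1', 'node2', 'node3']
```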

# Watch the pod status from the master

Job controller

[root@K8S-master ~]# docker load -i perl-5.34.tar.gz

[root@K8S-master ~]# docker tag perl:5.34.0 reg.hjw.org/library/perl:5.34.0

[root@K8S-master ~]# docker login reg.hjw.org -u admin

Password:

Login Succeeded

[root@K8S-master ~]# docker push reg.hjw.org/library/perl:5.34.0

[root@K8S-master ~]# kubectl create job job --image perl:5.34.0 --dry-run=client -o yaml > job.yml

[root@K8S-master ~]# vim job.yml

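apiVersion: batch/v1
kind: Job
metadata:
  name: job
spec:
  completions: 6    # the Job needs 6 successfully completed pods
  parallelism: 2    # at most 2 pods run at the same time
  template:
    spec:
      containers:
      - image: perl:5.34.0
        name: job
        command: ["perl", "-Mbignum=bpi", "-wle", "print bpi(2000)"]   # print pi to 2000 digits
      restartPolicy: Never
  backoffLimit: 4   # number of retries before the Job is marked as failed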

[root@K8S-master ~]# kubectl apply -f job.yml

job.batch/job created

[root@K8S-master ~]# kubectl get pods

NAME        READY   STATUS      RESTARTS   AGE
job-2gmqh   0/1     Completed   0          78s
job-82zzk   0/1     Completed   0          98s
job-bdkq5   0/1     Completed   0          80s
job-cl4rk   0/1     Completed   0          74s
job-cwkvt   0/1     Completed   0          75s
job-x5m94   0/1     Completed   0          98s
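`completions: 6` asks for six successful pods in total and `parallelism: 2` lets at most two run at once, so when every pod succeeds the Job finishes in three rounds of two, which matches the pod ages above (98s/98s, then 80s/78s, then 75s/74s). A toy Python sketch of that batching, assuming every pod succeeds on its first attempt and a round finishes before the next one starts (the real controller starts a new pod as soon as a slot frees up); `backoffLimit` only matters when pods fail and is not modeled. `job_rounds` is a hypothetical helper:

```python
from __future__ import annotations


def job_rounds(completions: int, parallelism: int) -> list[int]:
    """Pods started per round when each round runs up to `parallelism` pods
    and all of them succeed before the next round starts."""
    if parallelism < 1:
        raise ValueError("this sketch needs parallelism >= 1")
    rounds = []
    succeeded = 0
    while succeeded < completions:
        batch = min(parallelism, completions - succeeded)
        rounds.append(batch)
        succeeded += batch
    return rounds


print(job_rounds(6, 2))  # [2, 2, 2]
print(job_rounds(5, 2))  # [2, 2, 1]
```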

[root@K8S-master ~]# kubectl logs job-2gmqh

3.141592653589793238462643383279502884197169399375105820974944592307816406286208998628034825342117067982148086513282306647093844609550582231725359408128481117450284102701938521105559644622948954930381964428810975665933446128475648233786783165271201909145648566923460348610454326648213393607260249141273724587006606315588174881520920962829254091715364367892590360011330530548820466521384146951941511609433057270365759591953092186117381932611793105118548074462379962749567351885752724891227938183011949129833673362440656643086021394946395224737190702179860943702770539217176293176752384674818467669405132000568127145263560827785771342757789609173637178721468440901224953430146549585371050792279689258923542019956112129021960864034418159813629774771309960518707211349999998372978049951059731732816096318595024459455346908302642522308253344685035261931188171010003137838752886587533208381420617177669147303598253490428755468731159562863882353787593751957781857780532171226806613001927876611195909216420198938095257201065485863278865936153381827968230301952035301852968995773622599413891249721775283479131515574857242454150695950829533116861727855889075098381754637464939319255060400927701671139009848824012858361603563707660104710181942955596198946767837449448255379774726847104047534646208046684259069491293313677028989152104752162056966024058038150193511253382430035587640247496473263914199272604269922796782354781636009341721641219924586315030286182974555706749838505494588586926995690927210797509302955321165344987202755960236480665499119881834797753566369807426542527862551818417574672890977772793800081647060016145249192173217214772350141441973568548161361157352552133475741849468438523323907394143334547762416862518983569485562099219222184272550254256887671790494601653466804988627232791786085784383827967976681454100953883786360950680064225125205117392984896084128488626945604241965285022210661186306744278622039194945047123713786960956364371917287467764657573962413890865832645995813390478027590
1

CronJob controller

[root@K8S-master ~]# kubectl create cronjob cronjob --image busybox --schedule "* * * * *" --dry-run=client -o yaml > cronjob.yml

[root@K8S-master ~]# vim cronjob.yml

apiVersion: batch/v1
kind: CronJob
metadata:
  name: cronjob
spec:
  jobTemplate:
    metadata:
      name: cronjob
    spec:
      template:
        spec:
          containers:
          - image: busybox
            name: cronjob
            command:
            - /bin/sh
            - -c
            - echo "hello hjw"
          restartPolicy: OnFailure
  schedule: '* * * * *'

[root@K8S-master ~]# kubectl apply -f cronjob.yml   # the job runs at the start of every minute

[root@K8S-master ~]# kubectl get cronjobs.batch

NAME      SCHEDULE    TIMEZONE   SUSPEND   ACTIVE   LAST SCHEDULE   AGE
cronjob   * * * * *   <none>     False     0        17s             70s

[root@K8S-master ~]# kubectl get pods

NAME                     READY   STATUS      RESTARTS   AGE
cronjob-29598257-5sfmh   0/1     Completed   0          27s

[root@K8S-master ~]# kubectl logs cronjob-29598257-5sfmh

hello hjw
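The number in `cronjob-29598257-5sfmh` is not random: the CronJob controller names each Job `<cronjob name>-<scheduled time in minutes since the Unix epoch>`, and the pod adds its own random suffix. Decoding it gives the minute the run was scheduled, always on a whole minute as `* * * * *` implies. A small Python sketch; `scheduled_time` is a hypothetical helper, and the +08:00 offset is taken from the `CreationTimestamp` shown earlier:

```python
from datetime import datetime, timedelta, timezone


def scheduled_time(pod_name: str) -> datetime:
    """Decode the scheduled time from a CronJob pod name such as
    'cronjob-29598257-5sfmh' (<job name>-<random pod suffix>)."""
    job_name = pod_name.rsplit("-", 1)[0]       # strip the random pod suffix
    minutes = int(job_name.rsplit("-", 1)[1])   # minutes since 1970-01-01 UTC
    return datetime.fromtimestamp(minutes * 60, tz=timezone.utc)


t = scheduled_time("cronjob-29598257-5sfmh")
print(t.isoformat())                                           # 2026-04-11T08:17:00+00:00
print(t.astimezone(timezone(timedelta(hours=8))).isoformat())  # 2026-04-11T16:17:00+08:00
```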
