Title

PneumoLLM: Harnessing the power of large language model for pneumoconiosis diagnosis

Introduction

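In computer-aided diagnosis, the ability to process and analyze medical data is crucial. It supports the diagnosis of underlying diseases and the prediction of future clinical outcomes. With the rapid development of deep learning theory, researchers have designed sophisticated network architectures (He et al., 2016; Dosovitskiy et al., 2020) and curated large, high-quality datasets (Deng et al., 2009; Wang et al., 2017) to pretrain these powerful networks. Pretraining endows a network with valuable knowledge by optimizing its weight distribution, so that researchers can then refine the model further using labeled data for a specific disease. When data are plentiful and accurately labeled, this classic paradigm usually achieves commendable results, particularly for common diseases. For example, EchoNet-Dynamic (Ouyang et al., 2020) has surpassed medical experts in assessing cardiac function.

The situation changes, however, for occupational diseases such as pneumoconiosis (Li et al., 2023b; Dong et al., 2022). People exposed to dusty environments for long periods without personal protective equipment are prone to pulmonary fibrosis, which is often a precursor of pneumoconiosis (Qi et al., 2021; Devnath et al., 2022). Regions with a high incidence of pneumoconiosis typically lack economic development, medical resources, infrastructure, and specialized medical practitioners. In addition, there is marked resistance to disease screening and diagnosis, which leaves clinical data for these diseases severely insufficient (Sun et al., 2023; Huang et al., 2023b). This scarcity of data makes it difficult for the conventional pretraining-and-finetuning strategy to work.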

Abstract

The conventional pretraining-and-finetuning paradigm, while effective for common diseases with ample data, faces challenges in diagnosing data-scarce occupational diseases like pneumoconiosis. Recently, large language models (LLMs) have exhibited unprecedented ability when conducting multiple tasks in dialogue, bringing opportunities to diagnosis. A common strategy might involve using adapter layers for vision-language alignment and diagnosis in a dialogic manner. Yet, this approach often requires optimization of extensive learnable parameters in the text branch and the dialogue head, potentially diminishing the LLMs' efficacy, especially with limited training data. In our work, we innovate by eliminating the text branch and substituting the dialogue head with a classification head. This approach presents a more effective method for harnessing LLMs in diagnosis with fewer learnable parameters. Furthermore, to balance the retention of detailed image information with progression towards accurate diagnosis, we introduce the contextual multi-token engine. This engine is specialized in adaptively generating diagnostic tokens. Additionally, we propose the information emitter module, which unidirectionally emits information from image tokens to diagnosis tokens. Comprehensive experiments validate the superiority of our methods.
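As a rough illustration of what substituting a dialogue head with a classification head can look like, the sketch below mean-pools a few diagnosis-token feature vectors, applies a single linear layer, and returns class probabilities through a softmax. The function name `classify`, the mean pooling, the two-class setup, and the toy weights are all assumptions made for this example; the source does not specify how the head is built.

```python
import math

def classify(diagnosis_tokens, weights, bias):
    """Mean-pool diagnosis tokens, apply a linear layer, return class probabilities.

    diagnosis_tokens: list of feature vectors (one per diagnosis token).
    weights: one weight row per class; bias: one bias value per class.
    Pooling by mean is an assumption, not the paper's stated design.
    """
    dim = len(diagnosis_tokens[0])
    count = len(diagnosis_tokens)
    # Average each feature dimension across all diagnosis tokens.
    pooled = [sum(tok[d] for tok in diagnosis_tokens) / count for d in range(dim)]
    # Linear layer: one logit per class.
    logits = [sum(w * x for w, x in zip(row, pooled)) + b
              for row, b in zip(weights, bias)]
    # Numerically stable softmax.
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Toy usage: two diagnosis tokens, two classes (e.g. Normal vs. Pneumoconiosis).
tokens = [[1.0, 0.0], [3.0, 0.0]]
probs = classify(tokens, weights=[[1.0, 0.0], [-1.0, 0.0]], bias=[0.0, 0.0])
print(probs)
```

With a head of this kind, only classification labels are needed for training, whereas a dialogue head would be supervised with text.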

Method

The efficacy of computer-aided diagnosis systems is crucial in processing and analyzing medical data. However, these systems often face a significant shortfall in clinical data availability. Leveraging the rich knowledge reservoirs of foundational models is a promising strategy to address this data scarcity. Yet, the conventional pretraining-and-finetuning approach may compromise the representation capabilities of LLMs, due to substantial changes in their parameter spaces, leading to increased training time and memory overhead (Touvron et al., 2023a,b; OpenAI, 2023b).

Conclusion

In this paper, we introduce PneumoLLM, a pioneering approach utilizing large language models for streamlined diagnostic processes in medical imaging. By discarding the text branch and transforming the dialogue head into a classification head, PneumoLLM simplifies the workflow for eliciting knowledge from LLMs. This innovation proves particularly effective when only classification labels are available for training, rather than extensive descriptive sentences. The streamlined process also significantly reduces the optimization space, facilitating learning with limited training data. Ablation studies further underscore the necessity and effectiveness of the proposed modules, especially in maintaining the integrity of source image details while advancing towards accurate diagnostic outcomes.

Figure

Fig. 1. Representative pipelines to elicit knowledge from large models. (a) Traditional works conduct vision-language contrastive learning to align multimodal representations. (b) To utilize large language models, existing works transform images into visual tokens, and send visual tokens to LLM to generate text descriptions. (c) Our work harnesses LLM to diagnose medical images by proper designs, forming a simple and effective pipeline.

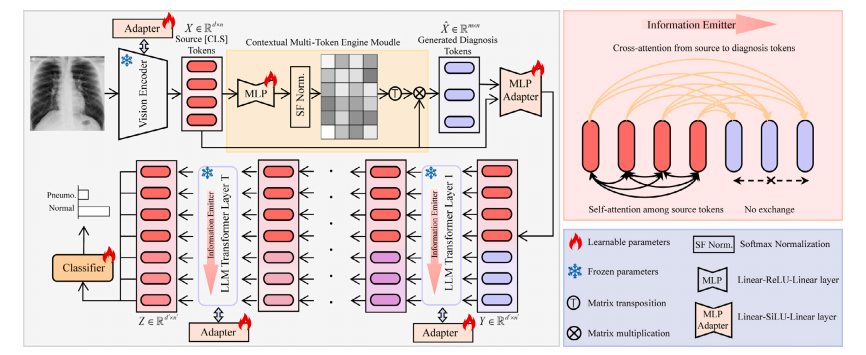

Fig. 2. Diagram of the proposed PneumoLLM. The vision encoder processes chest radiography and extracts source tokens. The contextual multi-token engine generates multiple diagnosis tokens conditioned on source tokens. To elicit in-depth knowledge from the LLM, we design the information emitter module within the LLM Transformer layers, enabling unidirectional information flow from source tokens to diagnosis tokens, preserving complete radiographic source details and aggregating critical diagnostic information.

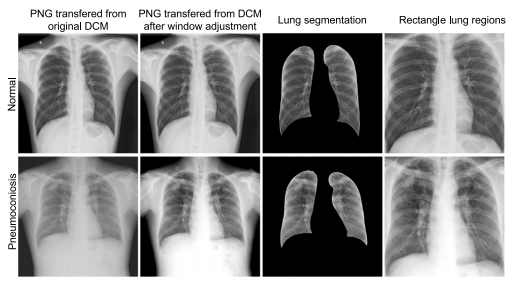
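One common way to obtain a unidirectional flow of this kind is an attention mask, and the sketch below builds one under stated assumptions. Tokens are ordered as source tokens followed by diagnosis tokens; every token may read source tokens, while source tokens never read diagnosis tokens. The name `emitter_mask`, and the choice that each diagnosis token also attends to itself but not to other diagnosis tokens, are assumptions for illustration; the source does not describe the module at this level of detail.

```python
def emitter_mask(num_source, num_diag):
    """Build allowed[q][k]: True if query token q may attend to key token k.

    Token order is [source tokens..., diagnosis tokens...].
    Information flows from source to diagnosis tokens only.
    """
    total = num_source + num_diag
    allowed = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(total):
            if k < num_source:
                # Every query (source or diagnosis) may read source tokens.
                allowed[q][k] = True
            elif q == k:
                # Assumption: a diagnosis token may also attend to itself.
                allowed[q][k] = True
            # Otherwise the key is a different diagnosis token: blocked,
            # so source tokens are never altered by diagnosis tokens.
    return allowed

# Toy usage: 3 source tokens, 2 diagnosis tokens.
mask = emitter_mask(num_source=3, num_diag=2)
for row in mask:
    print("".join("x" if ok else "." for ok in row))
```

In the printed grid, the first three rows (source queries) show `xxx..`, meaning source tokens only see each other, which is what keeps the radiographic source details intact. The last two rows show `xxxx.` and `xxx.x`, meaning each diagnosis token sees all source tokens and itself.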

Fig. 3. Illustrative examples of dataset preprocessing: two examples labeled as "Normal" and "Pneumoconiosis". The window adjustment operation uses the default window level and width (stored in the DICOM tags) to pre-process the original DICOM files. The segmentation results are obtained using the CheXmask pipeline, as proposed in the paper by Gaggion et al. (2023). The selection of the rectangular lung regions is based on the largest external rectangle of the segmentation results.

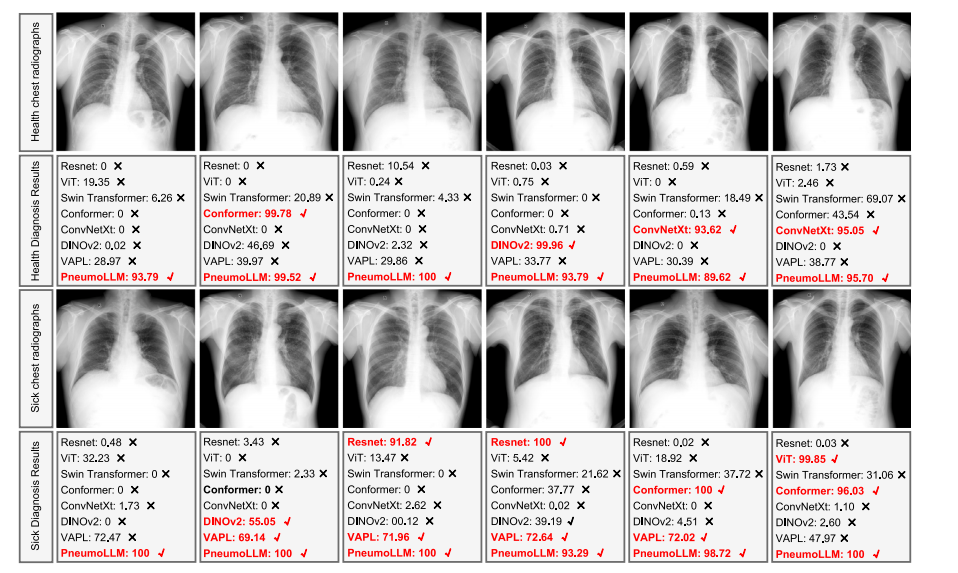
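The two geometric steps in this preprocessing, intensity windowing and cropping to the rectangle enclosing the lung mask, can be sketched as follows. This is a simplified illustration on nested lists, not the authors' pipeline. It assumes a plain linear window (clip to `level ± width/2`, then scale to 0-255) and reads "largest external rectangle" as the axis-aligned bounding box of the mask. The function names are hypothetical, and reading the DICOM tags and running CheXmask segmentation are left out.

```python
def apply_window(pixels, level, width):
    """Clip intensities to [level - width/2, level + width/2] and scale to 0..255.

    Simplified linear windowing; width must be positive.
    """
    low = level - width / 2.0
    high = level + width / 2.0
    return [[round(255 * (min(max(v, low), high) - low) / (high - low))
             for v in row] for row in pixels]

def bounding_box(mask):
    """Return (top, left, bottom, right), inclusive, of nonzero mask pixels.

    Returns None when the mask is empty.
    """
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        return None
    cols = [c for c in range(len(mask[0])) if any(row[c] for row in mask)]
    return rows[0], cols[0], rows[-1], cols[-1]

def crop(pixels, box):
    """Crop a 2D image to an inclusive (top, left, bottom, right) box."""
    top, left, bottom, right = box
    return [row[left:right + 1] for row in pixels[top:bottom + 1]]

# Toy usage with a 4x4 "image" and a lung mask covering the centre.
image = [[0, 10, 20, 30],
         [40, 50, 60, 70],
         [80, 90, 100, 110],
         [120, 130, 140, 150]]
lung_mask = [[0, 0, 0, 0],
             [0, 1, 1, 0],
             [0, 0, 1, 0],
             [0, 0, 0, 0]]
windowed = apply_window(image, level=50, width=100)
box = bounding_box(lung_mask)   # (1, 1, 2, 2)
print(crop(windowed, box))
```

Windowing before cropping matches the order given in the caption; whether the crop is padded or resized afterwards is not stated in the source.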

Fig. 4. Heat maps generated by MMGPL on different subjects in the ADNI dataset.

Fig. 5. Visualization of the concept-similarity graph on the ADNI dataset. The horizontal and vertical axes represent concepts and tokens. Different colors represent concepts belonging to different categories. The red texts represent concepts related to NC, the green texts represent concepts related to LMCI, and the blue texts represent concepts related to AD.

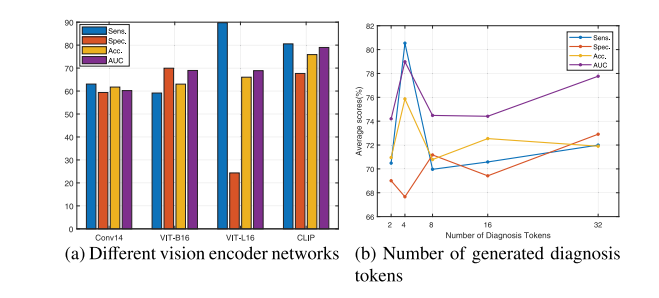

Fig. 6. Illustration of various vision encoder networks and the number of generated diagnosis tokens. Please zoom in for the best view.

Table

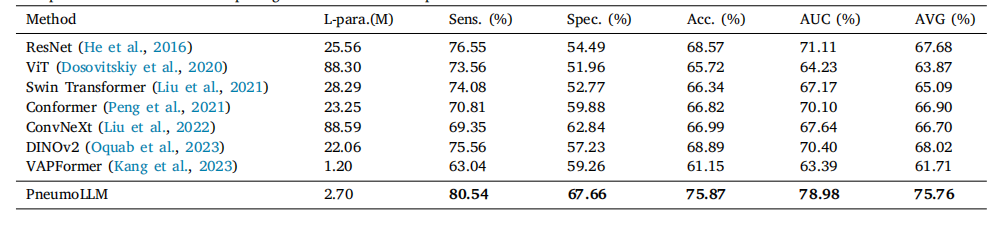

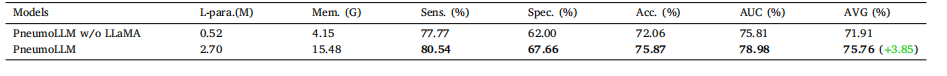

Table 1. Existing diagnosis methods for pneumoconiosis.

Table 2. Comparison results with recent prestigious methods on the pneumoconiosis dataset.

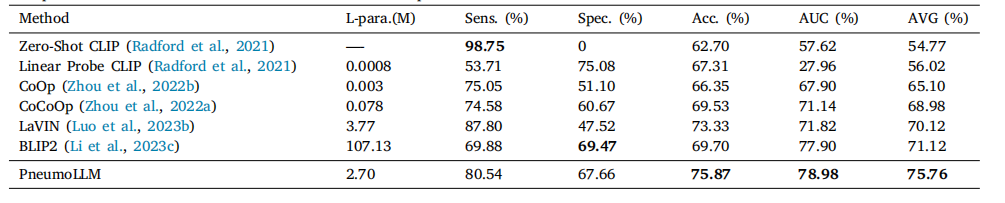

Table 3. Comparison results with recent LLM-based methods on the pneumoconiosis dataset.

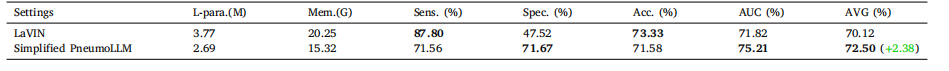

Table 4. Analysis of the LLaMA-7B foundational model in pneumoconiosis diagnosis.

Table 5. Ablation study on eliminating the textual processing branch in the LLM.

Table 6. Ablation study on various PneumoLLM components.