Table of Contents

- Kubernetes core concept: Service
  - What a Service does
  - The three kube-proxy proxy modes
    - userspace mode
    - iptables mode
    - ipvs mode
    - iptables vs. ipvs
  - Service types
  - Service parameters
  - Creating a Service
    - 1: ClusterIP
      - Creating a Service from the command line
      - Verifying load balancing
      - Creating a Service from a YAML file
      - headless service
      - DNS
    - 2: NodePort
    - 3: LoadBalancer
    - 4: ExternalName
    - sessionAffinity

Kubernetes core concept: Service

What a Service does

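When workloads run on a Kubernetes cluster, Pods are ephemeral and are recreated often. Their IP addresses therefore change frequently, and clients cannot reliably reach the services the Pods provide by addressing them directly. Kubernetes solves this with the Service, which sits in front of a set of Pods and proxies them. However the Pods change, the Service can still find them as long as they carry the matching labels. It records their Pod IPs in its endpoint list (Endpoints) to keep track of them, and clients reach the Pods through the Service.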

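- A Service gives clients a way to reach Pods, i.e. it is the entry point through which clients access Pods
- It tracks Pod IP changes dynamically through labels
- It keeps Pods from becoming unreachable
- It defines the access policy for Pods
- It is associated with Pods through a label selector
- It load-balances across Pods at layer 4 (TCP/UDP)
- Under the hood, kube-proxy implements it in one of three proxy modes: userspace, iptables, or ipvs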
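The following minimal Python sketch makes the label-selector idea concrete by showing how a Service's endpoint list can be derived from Pod labels. It is a simplified model, not the code Kubernetes actually runs (the real work is split between the endpoints controller and kube-proxy). The Pod names, labels, and IPs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str
    labels: dict = field(default_factory=dict)
    ready: bool = True


def matches(selector: dict, labels: dict) -> bool:
    """A Pod matches when every key/value pair of the selector appears in its labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def endpoints(selector: dict, pods: list, target_port: int) -> list:
    """Return sorted 'ip:port' strings for the ready Pods the selector picks."""
    return sorted(
        f"{pod.ip}:{target_port}"
        for pod in pods
        if pod.ready and matches(selector, pod.labels)
    )


pods = [
    Pod("nginx-a", "10.244.104.44", {"app": "nginx"}),
    Pod("nginx-b", "10.244.166.130", {"app": "nginx"}),
    Pod("redis-a", "10.244.166.131", {"app": "redis"}),
]
print(endpoints({"app": "nginx"}, pods, 80))

# The first Pod is recreated with a new IP; the same selector finds it again.
pods[0] = Pod("nginx-c", "10.244.104.50", {"app": "nginx"})
print(endpoints({"app": "nginx"}, pods, 80))
```

When a Pod is replaced its IP changes. The Service matches on labels rather than addresses, so recomputing the list picks up the new IP without any change to the Service itself.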

The three kube-proxy proxy modes

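- A Kubernetes cluster has three networks:
  - Two are real, the Node Network and the Pod Network, and they provide real IP addresses.
  - The third is virtual, the Cluster Network or Service Network. It provides virtual IP addresses that never appear on any network interface and exist only in Service definitions.
- kube-proxy continuously watches kube-apiserver for changes to Service-related resources. Whenever it receives an update, it translates it into rules on the local node that route traffic addressed to a Service to specific Pods. Accessing the Service therefore reaches the service the Pods provide.
- kube-proxy has three proxy modes: userspace, iptables, and ipvs.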

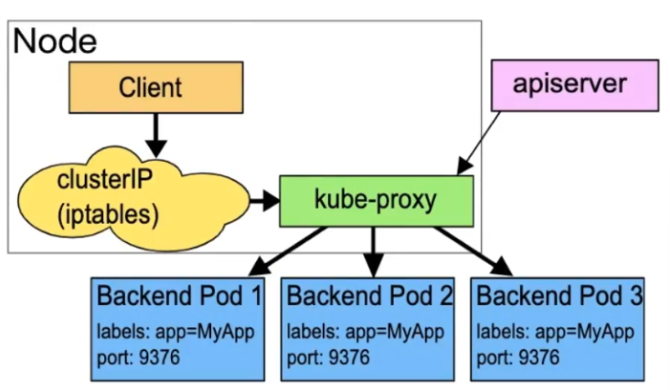

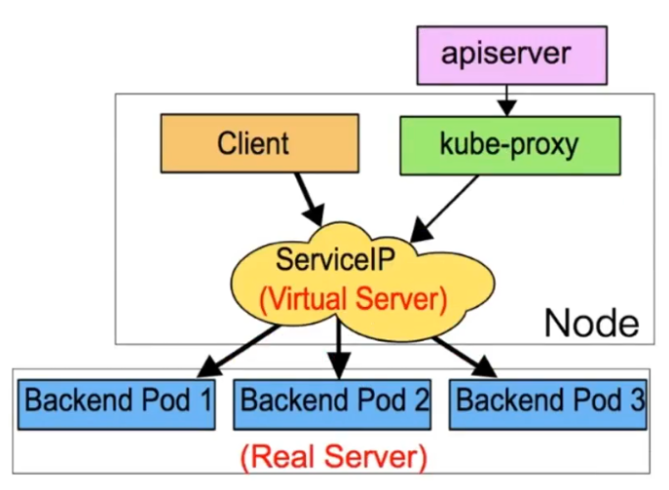

userspace mode

userspace mode is the first-generation mode used by kube-proxy and has been supported since Kubernetes v1.0. How userspace mode works is illustrated below:

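kube-proxy listens on a random port (the proxy port) for each Service and adds an iptables rule, so packets sent to ClusterIP:Port are redirected to that proxy port. When kube-proxy receives packets on the proxy port, it distributes them to the corresponding Pods using one of two policies:

- round robin (the default)
- session affinity, where requests from the same client IP always go to the same Pod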

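In userspace mode every packet passes through user space. A Service request first goes from user space into kernel iptables, then back up to user space, where kube-proxy selects a backend Endpoint and proxies the traffic. Each packet must therefore cross between kernel space and user space, and this round trip costs performance. The mode is inefficient and not recommended.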

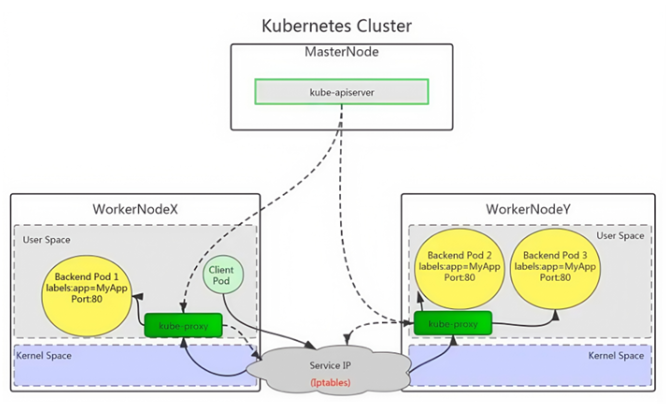

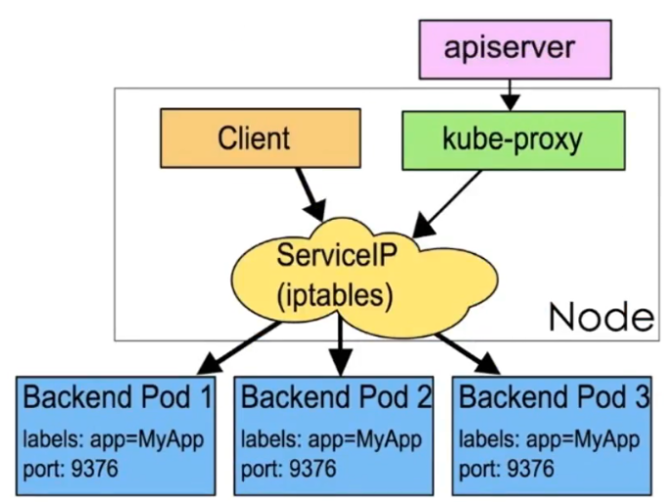

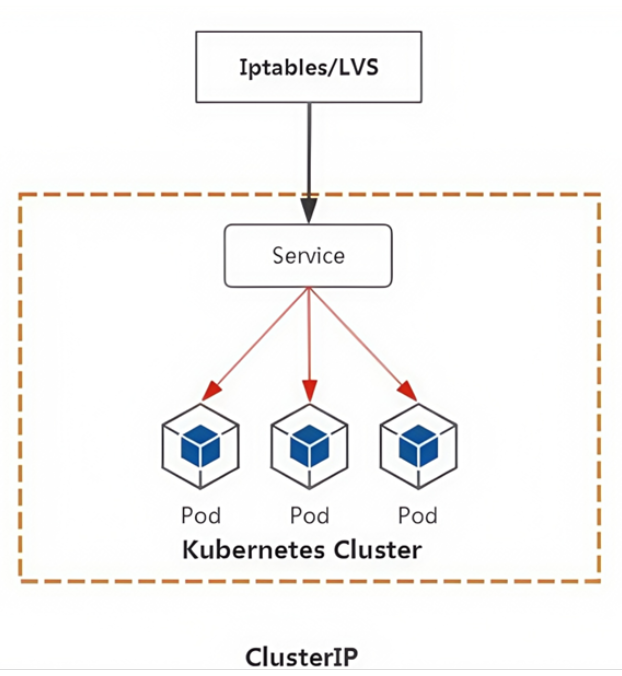

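iptables mode

iptables mode is kube-proxy's second-generation mode. It has been supported since Kubernetes v1.1 and became kube-proxy's default mode in v1.2.

In iptables mode, load balancing is implemented with the underlying netfilter/iptables rules. kube-proxy uses the informer mechanism's Watch interface to track Service and Endpoint change events in real time, and each event triggers a resynchronization of the iptables rules.

How iptables mode works is illustrated below:

As the diagrams show, in iptables mode kube-proxy acts only as a controller, not as a server. The traffic is actually handled by the kernel's netfilter, which appears in user space as iptables. Overall efficiency is therefore higher than in userspace mode.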

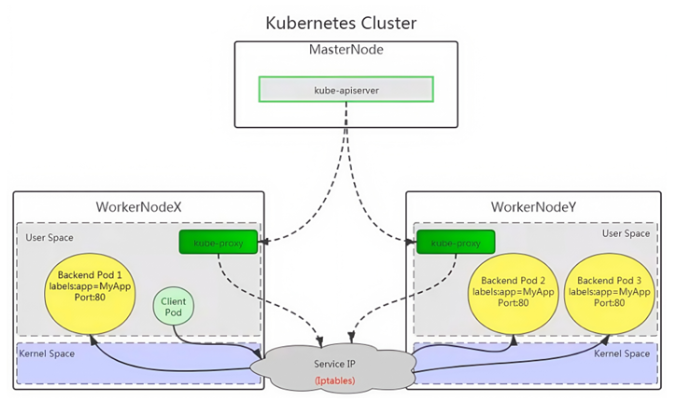

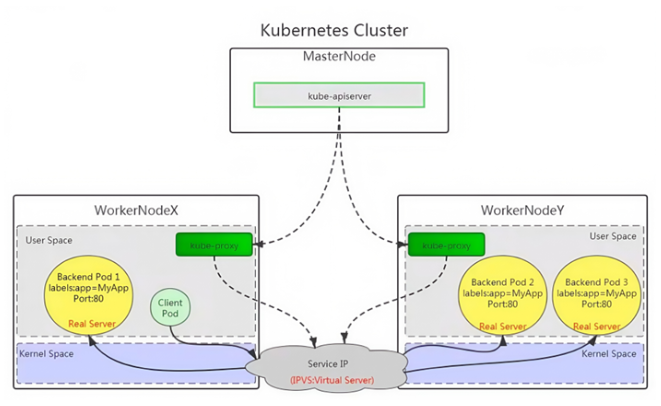
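In iptables mode, kube-proxy fills each Service's chain with a cascade of `statistic --mode random` rules. For n endpoints, rule i (counting from 0) matches with probability 1/(n-i), and the last rule always matches, so every endpoint gets the same 1/n share. The Python sketch below simulates that cascade to show why the shares come out equal. It models only the rule logic and does not touch real iptables; the endpoint addresses are placeholders.

```python
import random
from collections import Counter


def rule_probabilities(n: int) -> list:
    """Per-rule match probabilities, in the order the rules are evaluated."""
    return [1 / (n - i) for i in range(n)]  # the last entry is 1/1: always matches


def pick_endpoint(endpoints: list, rng: random.Random) -> str:
    """Walk the rules top to bottom, as netfilter would, and return the first match."""
    for ep, p in zip(endpoints, rule_probabilities(len(endpoints))):
        if rng.random() < p:
            return ep
    raise RuntimeError("unreachable: the last rule always matches")


def overall_share(n: int) -> list:
    """Probability that each rule is the one finally selected (1/n for every rule)."""
    shares, remaining = [], 1.0
    for p in rule_probabilities(n):
        shares.append(remaining * p)
        remaining *= 1 - p
    return shares


eps = ["10.244.104.44:80", "10.244.166.130:80", "10.244.166.131:80"]
rng = random.Random(42)
counts = Counter(pick_endpoint(eps, rng) for _ in range(30_000))
print(overall_share(3))
print(counts)
```

Because the choice is random, consecutive requests are not guaranteed to alternate strictly between Pods, even though the long-run split is even.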

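ipvs mode

ipvs was adopted as kube-proxy's third-generation mode. It was introduced in Kubernetes v1.8, reached beta in v1.9, and became generally available in v1.11.

ipvs (IP Virtual Server) is part of the Linux kernel and implements transport-layer load balancing, i.e. layer-4 switching. ipvs runs on a host and acts as a load balancer in front of the real servers. It forwards TCP- and UDP-based service requests to the real servers and makes their services appear as a virtual service on a single IP address.

How ipvs mode works is illustrated below:

Both ipvs and iptables are built on netfilter. What gives ipvs mode its better performance?

- ipvs offers better scalability and performance for large clusters
- ipvs supports more sophisticated load-balancing algorithms than iptables, such as least load, least connections, and weighted scheduling
- ipvs supports server health checks, connection retries, and similar features
- ipset sets can be modified dynamically, even while iptables rules are using them

ipvs still depends on iptables for packet filtering, hairpin-masquerade tricks (address masquerading), SNAT, and similar functions. It does this through the iptables extension ipset rather than by generating rule chains directly with iptables. ipset stores the source or destination addresses of traffic that should be DROPped or masqueraded. This keeps the number of iptables rules constant, so we no longer need to care how many Services or Pods there are.

What does ipset gain over plain iptables? iptables rules form a linear data structure, whereas ipset introduces an indexed data structure, so lookups and matches stay efficient even when there are many entries. You can think of an ipset simply as a set of IPs (or ranges) whose contents can be IP addresses, IP networks, ports, and so on. iptables rules can operate directly on this "mutable set". This greatly reduces the number of iptables rules and therefore the performance cost.

For example, suppose we want to block thousands of IPs from reaching our server. With plain iptables we would add the rules one by one, producing a huge number of iptables rules. With ipset we only add the relevant IP addresses (or networks) to an ipset set, and a few iptables rules are enough to achieve the same goal.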
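The ipset argument has a rough Python analogy. A chain of per-IP iptables rules behaves like a list scanned from top to bottom, while a hash-type ipset behaves like a hash set. This sketch only compares the two lookup strategies and does not measure real netfilter performance. The blocked addresses are made up.

```python
import ipaddress
import timeit

# Hypothetical block list: 20,000 consecutive addresses starting at 10.1.0.0.
base = int(ipaddress.IPv4Address("10.1.0.0"))
blocked = [str(ipaddress.IPv4Address(base + i)) for i in range(20_000)]


def linear_match(rules: list, src: str) -> bool:
    """One rule per IP: evaluate the rules in order until one matches (like an iptables chain)."""
    for rule_ip in rules:
        if rule_ip == src:
            return True
    return False


blocked_set = set(blocked)  # plays the role of one ipset referenced by a single rule


def set_match(ipset: set, src: str) -> bool:
    """One rule asking 'is src in the set?' (like `-m set --match-set <name> src`)."""
    return src in ipset


probe = blocked[-1]  # worst case for the linear chain: only the last rule matches
print("linear:", timeit.timeit(lambda: linear_match(blocked, probe), number=50))
print("set:   ", timeit.timeit(lambda: set_match(blocked_set, probe), number=50))
```

Both functions give the same answer. The difference is that the linear version's cost grows with the number of rules, while the set lookup stays roughly constant.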

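The following table lists the ipset sets maintained in ipvs mode:

iptables vs. ipvs

- iptables
  - Works in kernel space
  - Pros
    - Flexible and powerful (packets can be manipulated at different stages)
  - Cons
    - With too many rules in the tables, responses slow down: rule traversal, matching, and updates all incur linear delay
- ipvs
  - Works in kernel space
  - Pros
    - High forwarding efficiency
    - Rich scheduling algorithms: rr, wrr, lc, wlc, ip hash, and so on
  - Cons
    - Kernel support is incomplete: older kernels cannot use it, and an upgrade to kernel 4.0 or 5.0 or later is required.
- When to use iptables or ipvs
  - Before v1.10, iptables was used (before v1.1, userspace forwarding was used)
  - From v1.11, both iptables and ipvs are supported. iptables remains the default; ipvs has to be selected explicitly (`mode: "ipvs"` in the kube-proxy configuration). If the ipvs kernel modules are not loaded, kube-proxy automatically falls back to iptables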
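The following Python sketch is a simplified model of three of the scheduler names above: rr, wrr, and lc. The kernel's ipvs scheduler modules differ in their details, so this explains the selection rule of each scheduler rather than reimplementing it. The backend names, weights, and connection counts are hypothetical.

```python
from itertools import cycle


def round_robin(backends: list):
    """rr: hand out backends in a fixed rotation."""
    return cycle(backends)


def weighted_round_robin(weights: dict):
    """wrr (simplified): a backend with weight w appears w times per cycle."""
    order = [name for name, w in weights.items() for _ in range(w)]
    return cycle(order)


def least_connection(active: dict) -> str:
    """lc: choose the backend with the fewest active connections (ties go to the first listed)."""
    return min(active, key=active.get)


rr = round_robin(["pod-a", "pod-b"])
print([next(rr) for _ in range(4)])

wrr = weighted_round_robin({"pod-a": 3, "pod-b": 1})
print([next(wrr) for _ in range(8)])

conns = {"pod-a": 0, "pod-b": 0, "pod-c": 0}
for _ in range(5):
    chosen = least_connection(conns)
    conns[chosen] += 1  # the new connection stays open
print(conns)
```

rr and wrr ignore how busy a backend is. lc looks at the current connection count, which suits workloads whose connections vary a lot in length.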

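Service types

- ClusterIP
  - The default type; allocates a virtual IP reachable only from inside the cluster
- NodePort
  - Opens a port on every Node as an entry point for external access
  - The default nodePort range is 30000-32767
- LoadBalancer
  - Works on specific cloud providers, such as Google Cloud, AWS, and OpenStack
- ExternalName
  - Brings a service outside the cluster into the cluster, so that Pods inside the cluster can communicate with the external service

Service parameters

- port: the port on which the Service is accessed
- targetPort: the port on the Pod that traffic is forwarded to
- nodePort: the port on each Node through which users outside the cluster reach the Service (30000-32767 by default)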
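The three port fields fit together as follows. Clients outside the cluster connect to `<NodeIP>:nodePort`, in-cluster clients connect to `<ClusterIP>:port`, and both end up at `<PodIP>:targetPort`. The Python sketch below assumes the default 30000-32767 range (a cluster can change it with the kube-apiserver flag `--service-node-port-range`). A missing nodePort is treated here as a plain ClusterIP entry, whereas a real NodePort Service would have one allocated automatically. The node IP is a made-up placeholder.

```python
NODE_PORT_RANGE = range(30000, 32768)  # default 30000-32767 (Python's upper bound is exclusive)


def entry_points(node_ip: str, cluster_ip: str, pod_ip: str, svc_port: dict) -> list:
    """Map each way into the Service to the Pod address the traffic ends up at."""
    port = svc_port["port"]
    target = svc_port.get("targetPort", port)  # targetPort defaults to port when omitted
    node_port = svc_port.get("nodePort")
    routes = [f"{cluster_ip}:{port} -> {pod_ip}:{target}"]  # in-cluster clients
    if node_port is not None:
        if node_port not in NODE_PORT_RANGE:
            raise ValueError(f"nodePort {node_port} is outside 30000-32767")
        routes.insert(0, f"{node_ip}:{node_port} -> {pod_ip}:{target}")  # external clients
    return routes


# port/targetPort/nodePort follow the NodePort example later in this article;
# 192.168.18.129 is a hypothetical node IP.
spec_port = {"port": 8060, "targetPort": 80, "nodePort": 31111}
for route in entry_points("192.168.18.129", "10.99.135.77", "10.244.166.188", spec_port):
    print(route)
```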

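Creating a Service

In practice there are two ways to create a Service: from the command line, or from a resource manifest (a YAML file).

1: ClusterIP

Depending on whether a ClusterIP is allocated, ClusterIP Services are further divided into regular Services and headless Services.

The two kinds of Service:

- Regular Service:

  Allocates a fixed virtual IP (the Cluster IP) reachable inside the cluster, enabling in-cluster access.

- Headless Service:

  No Cluster IP is allocated, and kube-proxy does no reverse proxying or load balancing for it. Clients instead reach the Pods through stable network identities provided by DNS, which resolves the headless Service name directly to the list of backend Pod IPs.

ClusterIP Service

Creating a Service from the command line

Create a Deployment application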

```bash
[root@master new_k8s 11:26:22]# kubectl apply -f nginx-deploy.yaml
# Contents of nginx-deploy.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: web
```

Verify

```bash
[root@master new_k8s 11:26:37]# kubectl get pod -o wide
NAME                           READY   STATUS    RESTARTS   AGE   IP               NODE    NOMINATED NODE   READINESS GATES
nginx-deploy-d4c954465-khgkk   1/1     Running   0          18s   10.244.104.44    node2   <none>           <none>
nginx-deploy-d4c954465-w4h24   1/1     Running   0          18s   10.244.166.130   node1   <none>           <none>
[root@master new_k8s 11:26:55]# kubectl get deployment
NAME           READY   UP-TO-DATE   AVAILABLE   AGE
nginx-deploy   2/2     2            2           31s
```

Create a ClusterIP Service associated with the Deployment

```bash
# Expose the port to create the Service
[root@master new_k8s 11:27:08]# kubectl expose deployment nginx-deploy --type=ClusterIP --target-port=80 --port=80
[root@master new_k8s 11:28:07]# kubectl get svc
NAME           TYPE        CLUSTER-IP    EXTERNAL-IP   PORT(S)   AGE
kubernetes     ClusterIP   10.96.0.1     <none>        443/TCP   8d
nginx-deploy   ClusterIP   10.106.5.12   <none>        80/TCP    8s
```

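Notes:

- `expose`: creates a Service
- `deployment`: the controller type (deployment.apps)
- `nginx-deploy`: the application name, also used as the Service name
- `--type=ClusterIP`: the Service type
- `--target-port=80`: the container port in the Pod
- `--port=80`: the Service port

View the detailed description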

```bash
[root@master new_k8s 11:28:15]# kubectl describe svc nginx-deploy
Name:              nginx-deploy
Namespace:         default
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx
Type:              ClusterIP
IP Family Policy:  SingleStack
IP Families:       IPv4
IP:                10.106.5.12
IPs:               10.106.5.12
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.104.44:80,10.244.166.130:80
Session Affinity:  None
Events:            <none>

# The set of backend Pod IPs
[root@master new_k8s 11:28:37]# kubectl get endpoints
NAME                                          ENDPOINTS                            AGE
k8s-sigs.io-nfs-subdir-external-provisioner   <none>                               20h
kubernetes                                    192.168.18.128:6443                  8d
nginx-deploy                                  10.244.104.44:80,10.244.166.130:80   58s
```

Accessing the ClusterIP returns the web page

```bash
# Default home page
[root@master new_k8s 11:29:05]# curl http://10.106.5.12
<!DOCTYPE html>
<html>
....
```

Verifying load balancing

List the Pods

```bash
# Change each home page to verify load balancing
[root@master new_k8s 11:29:23]# kubectl get pod
NAME                           READY   STATUS    RESTARTS   AGE
nginx-deploy-d4c954465-khgkk   1/1     Running   0          2m59s
nginx-deploy-d4c954465-w4h24   1/1     Running   0          2m59s
```

Modify each Pod's home page

```bash
[root@master new_k8s 11:29:36]# kubectl exec -it nginx-deploy-d4c954465-khgkk -- /bin/sh
/ # cd /usr/share/nginx/html
/usr/share/nginx/html # echo web1 > index.html
[root@master new_k8s 11:31:08]# kubectl exec -it nginx-deploy-d4c954465-w4h24 -- /bin/sh
/ # cd /usr/share/nginx/html/
/usr/share/nginx/html # echo web2 > index.html
```

Verify load balancing

```bash
# Verify
[root@master new_k8s 11:31:46]# curl http://10.106.5.12
web1
[root@master new_k8s 11:32:18]# curl http://10.106.5.12
web2
[root@master new_k8s 11:32:19]# curl http://10.106.5.12
web1
[root@master new_k8s 11:32:22]# curl http://10.106.5.12
web2
```

Creating a Service from a YAML file

Write the application YAML file

```bash
[root@master new_k8s 13:39:47]# kubectl apply -f nginx-deploy.yaml
deployment.apps/nginx-deploy created
service/nginx-svc created
# Contents of nginx-deploy.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: web
---   # add the Service
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc
spec:
  type: ClusterIP
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
  selector:
    app: nginx
```

Verify

```bash
[root@master new_k8s 13:40:00]# kubectl get deployment
NAME           READY   UP-TO-DATE   AVAILABLE   AGE
nginx-deploy   2/2     2            2           16s
[root@master new_k8s 13:40:16]# kubectl get pod
NAME                           READY   STATUS    RESTARTS   AGE
nginx-deploy-d4c954465-4l9z2   1/1     Running   0          22s
nginx-deploy-d4c954465-d7v9c   1/1     Running   0          22s
[root@master new_k8s 13:40:22]# kubectl get svc
NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP   8d
nginx-svc    ClusterIP   10.105.29.238   <none>        80/TCP    29s
```

headless service

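A headless Service has no ClusterIP value and can only be reached by DNS name.

- For a regular ClusterIP Service, the Service name resolves to the Cluster IP, which in turn maps to the backend Pod IPs.
- For a headless Service, the Service name resolves directly to the backend Pod IPs.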
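The difference between the two answers can be shown with a toy model of cluster DNS that builds A records straight from Service objects. The names and IPs below come from the examples in this article, although those two Services did not exist at the same time. The dictionary layout is an assumption for illustration, not how CoreDNS stores its data.

```python
# Hypothetical in-memory view of what cluster DNS knows about two Services.
services = {
    "nginx-deploy.default": {"clusterIP": "10.106.5.12",
                             "endpoints": ["10.244.104.44", "10.244.166.130"]},
    "nginx-svc.default": {"clusterIP": None,  # clusterIP: None -> headless
                          "endpoints": ["10.244.104.41", "10.244.166.189"]},
}


def resolve_a(fqdn: str) -> list:
    """Return the A records for <service>.<namespace>.svc.cluster.local."""
    name = fqdn.rstrip(".")
    suffix = ".svc.cluster.local"
    if not name.endswith(suffix):
        return []
    svc = services.get(name[: -len(suffix)])
    if svc is None:
        return []
    if svc["clusterIP"]:            # regular Service: the single virtual IP
        return [svc["clusterIP"]]
    return list(svc["endpoints"])   # headless Service: every backend Pod IP


print(resolve_a("nginx-deploy.default.svc.cluster.local."))
print(resolve_a("nginx-svc.default.svc.cluster.local."))
```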

Create the YAML file for the Deployment application

```bash
[root@master new_k8s 13:53:36]# kubectl apply -f nginx-deploy.yaml
deployment.apps/nginx-deploy created
service/nginx-svc created
# Contents of nginx-deploy.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
          name: web
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc
spec:
  type: ClusterIP
  clusterIP: None   # makes this a headless Service
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
  selector:
    app: nginx
```

Check

```bash
[root@master new_k8s 13:54:17]# kubectl get svc
NAME         TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP   8d
nginx-svc    ClusterIP   None         <none>        80/TCP    11s
```

```bash
[root@master new_k8s 13:54:28]# kubectl get pod -o wide
NAME                           READY   STATUS    RESTARTS   AGE   IP               NODE    NOMINATED NODE   READINESS GATES
nginx-deploy-d4c954465-966md   1/1     Running   0          22s   10.244.104.41    node2   <none>           <none>
nginx-deploy-d4c954465-tqzm9   1/1     Running   0          22s   10.244.166.189   node1   <none>           <none>
```

```bash
[root@master new_k8s 13:54:39]# kubectl get endpoints
NAME                                          ENDPOINTS                            AGE
k8s-sigs.io-nfs-subdir-external-provisioner   <none>                               22h
kubernetes                                    192.168.18.128:6443                  8d
nginx-svc                                     10.244.104.41:80,10.244.166.189:80   37s
```

DNS

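The DNS service watches the Kubernetes API and creates a DNS record for every Service so that its name can be resolved.

A headless Service relies on DNS for access.

The DNS record format is `<pod name>.<service name>.<namespace>.<type>.cluster.local.`, where the type for Services is `svc`. The Pod name part may be omitted.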
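The naming rule can be written as a small helper. This Python sketch assumes the default cluster domain `cluster.local` (each cluster can configure its own) and treats the Pod part as optional, as the text describes. The function name and the Pod hostname `web-0` are hypothetical.

```python
from typing import Optional


def dns_name(service: str, namespace: str = "default",
             pod: Optional[str] = None,
             cluster_domain: str = "cluster.local") -> str:
    """Build <pod>.<service>.<namespace>.svc.<cluster-domain>. with the pod part optional."""
    labels = [pod] if pod else []
    labels += [service, namespace, "svc", cluster_domain]
    # The trailing dot marks the name as fully qualified, so no search domains are appended.
    return ".".join(labels) + "."


print(dns_name("nginx-svc"))
print(dns_name("svc1", "ns1"))
```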

Look up the IP of the kube-dns Service

```bash
[root@master new_k8s 13:56:16]# kubectl get svc -n kube-system
NAME             TYPE        CLUSTER-IP       EXTERNAL-IP   PORT(S)                  AGE
kube-dns         ClusterIP   10.96.0.10       <none>        53/UDP,53/TCP,9153/TCP   8d
metrics-server   ClusterIP   10.107.166.207   <none>        443/TCP                  5d21h
```

From a cluster host, query the DNS service address to see how the headless Service resolves

```bash
[root@master new_k8s 13:58:24]# dig -t a nginx-svc.default.svc.cluster.local. @10.96.0.10

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.16 <<>> -t a nginx-svc.default.svc.cluster.local. @10.96.0.10
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 8668
;; flags: qr aa rd; QUERY: 1, ANSWER: 2, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;nginx-svc.default.svc.cluster.local. IN A

;; ANSWER SECTION:
nginx-svc.default.svc.cluster.local. 30 IN A 10.244.104.41
nginx-svc.default.svc.cluster.local. 30 IN A 10.244.166.189

;; Query time: 0 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Thu Jan 22 13:58:52 CST 2026
;; MSG SIZE rcvd: 166
```

Create a Pod inside the cluster and resolve the name from there

bash

[root@master new_k8s 13:58:52]# kubectl run -it centos --image centos:7 --image-pull-policy IfNotPresent

If you don't see a command prompt, try pressing enter.

[root@centos /]# curl http://nginx-svc.default.svc.cluster.local.

<!DOCTYPE html>

<html>

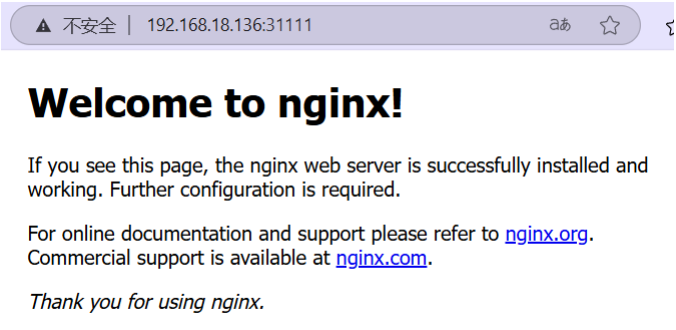

。。。。2:NodePort类型

Create the application YAML file

```bash
[root@master new_k8s 14:11:54]# cat nginx-nodeport.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-nodeport
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80   # container port
          name: web
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-nodeport
spec:
  type: NodePort
  ports:
  - port: 8060        # port for reaching the Service inside the cluster
    targetPort: 80    # Pod port
    nodePort: 31111   # port for access from outside the cluster; default range 30000-32767
    protocol: TCP
  selector:
    app: nginx

# Apply
[root@master new_k8s 14:12:06]# kubectl apply -f nginx-nodeport.yaml
deployment.apps/nginx-nodeport created
service/nginx-nodeport created
```

Check

bash

[root@master new_k8s 14:12:24]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 8d

nginx-nodeport NodePort 10.99.135.77 <none> 8060:31111/TCP 11s

bash

[root@master new_k8s 14:12:35]# kubectl get pod

NAME READY STATUS RESTARTS AGE

centos 1/1 Running 1 (10m ago) 13m

nginx-nodeport-d4c954465-ldzlf 1/1 Running 0 32s

nginx-nodeport-d4c954465-wq8gx 1/1 Running 0 32s

bash

[root@master new_k8s 14:12:56]# kubectl get deployment

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-nodeport 2/2 2 2 43s

bash

#查看分布节点

[root@master new_k8s 14:13:07]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

centos 1/1 Running 1 (11m ago) 14m 10.244.104.43 node2 <none> <none>

nginx-nodeport-d4c954465-ldzlf 1/1 Running 0 73s 10.244.166.188 node1 <none> <none>

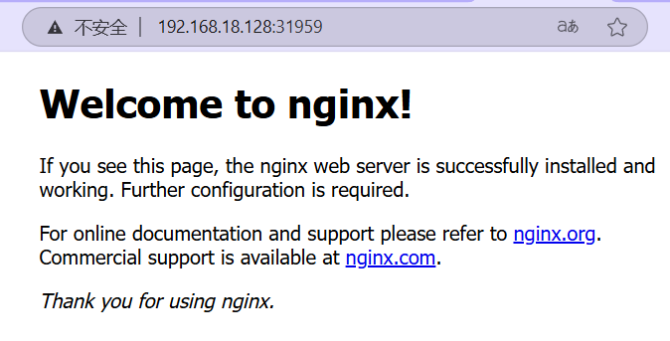

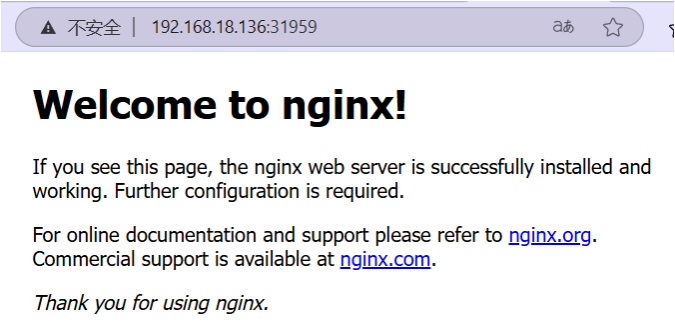

nginx-nodeport-d4c954465-wq8gx 1/1 Running 0 73s 10.244.104.42 node2 <none> <none>直接使用浏览器访问

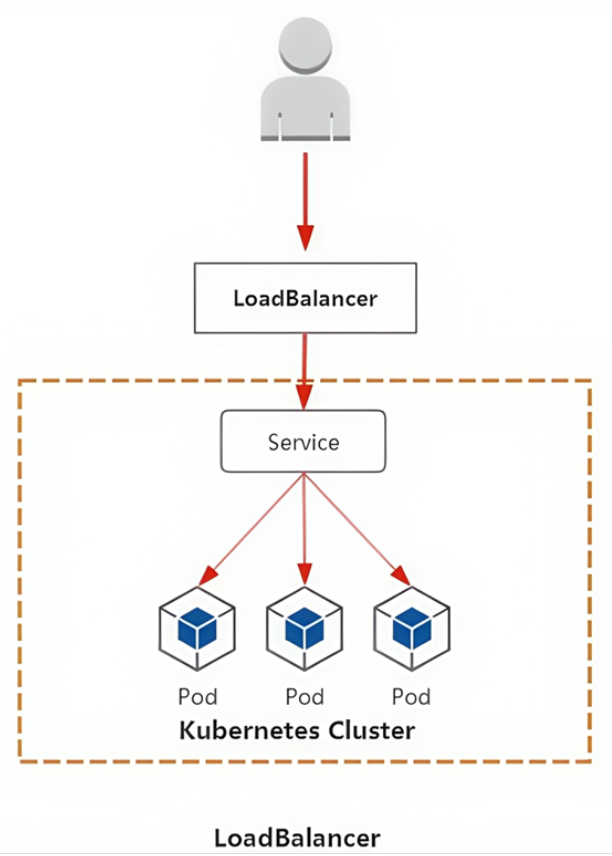

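3: LoadBalancer

The access path from outside the cluster:

- User
- Domain name
- Load balancer provided by the cloud provider
- NodeIP:Port (service IP)
- Pod IP:port

MetalLB

A LoadBalancer Service solution for self-built Kubernetes clusters

MetalLB provides network load balancing for Services in a Kubernetes cluster.

MetalLB has two main functions:

- Address allocation, similar to DHCP
- External announcement: once MetalLB has assigned an external IP address to a Service, it must make the network outside the cluster aware that the IP "lives" in the cluster. MetalLB does this with standard routing protocols: ARP, NDP, or BGP.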

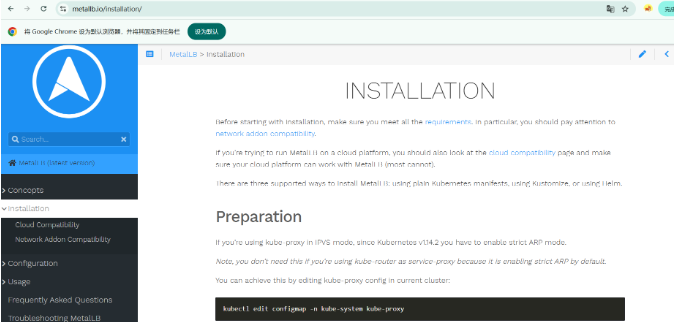
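MetalLB's address-allocation role can be pictured as a very small DHCP-like allocator over the configured range. The Python sketch below expands the `192.168.18.200-192.168.18.210` range used later in this article and hands out the lowest free address. MetalLB's real allocator handles more cases than this, and it also accepts CIDR notation, which is not parsed here. The `Pool` class and the service key are hypothetical.

```python
import ipaddress


def expand_range(spec: str) -> list:
    """Turn 'first-last' into an inclusive list of IPv4Address objects."""
    first, last = (ipaddress.IPv4Address(p.strip()) for p in spec.split("-"))
    if last < first:
        raise ValueError("range end is before range start")
    return [ipaddress.IPv4Address(i) for i in range(int(first), int(last) + 1)]


class Pool:
    """Hand out the lowest unused address, like a very small DHCP server."""

    def __init__(self, spec: str):
        self.free = expand_range(spec)
        self.assigned = {}

    def allocate(self, service: str) -> str:
        if service in self.assigned:  # the same Service keeps its address
            return self.assigned[service]
        if not self.free:
            raise RuntimeError("address pool exhausted")
        ip = str(self.free.pop(0))
        self.assigned[service] = ip
        return ip

    def release(self, service: str) -> None:
        self.free.append(ipaddress.IPv4Address(self.assigned.pop(service)))
        self.free.sort()


pool = Pool("192.168.18.200-192.168.18.210")
print(len(pool.free))  # 11 addresses in the range
print(pool.allocate("metallb-system/nginx-metallb"))
```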

Reference: https://metallb.universe.tf/installation/

Download the manifest files

v0.12

```bash
[root@master LB 15:01:39]# wget https://raw.githubusercontent.com/metallb/metallb/v0.12.1/manifests/namespace.yaml
[root@master LB 15:01:38]# wget https://raw.githubusercontent.com/metallb/metallb/v0.12.1/manifests/metallb.yaml
```

Apply the files

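```bash
# Create the namespace first
[root@master LB 15:02:20]# kubectl apply -f namespace.yaml
[root@master LB 15:03:57]# kubectl apply -f metallb.yaml
```

Troubleshooting: if any Pod ends up in a failed state because its image cannot be downloaded, import the image archives (metallb_controller.tar and metallb_speaker.tar) directly on the nodes.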

Configure MetalLB

```bash
[root@master LB 15:14:13]# kubectl apply -f metallb-conf.yaml
# Contents of metallb-conf.yaml:
apiVersion: v1
kind: ConfigMap
metadata:
  name: config
  namespace: metallb-system
data:
  config: |
    address-pools:
    - name: default
      protocol: layer2
      addresses:
      - 192.168.18.200-192.168.18.210   # same subnet as the cluster nodes
```

Create the nginx application

```bash
[root@master LB 15:27:32]# kubectl apply -f nginx-metallb.yaml
# Contents of nginx-metallb.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: metallb-system
  name: nginx-metallb
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx-mmetallb1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
```

Create the Service

```bash
[root@master LB 15:32:28]# kubectl apply -f lb-service.yaml
# Contents of lb-service.yaml:
apiVersion: v1
kind: Service
metadata:
  name: nginx-metallb
  namespace: metallb-system   # same namespace as the Pods; a Service cannot select Pods in another namespace
spec:
  type: LoadBalancer
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx
```

Check

bash

[root@master LB 15:32:50]# kubectl get pod -n metallb-system

NAME READY STATUS RESTARTS AGE

controller-8d6664589-dxfvg 1/1 Running 0 61m

nginx-metallb-5dd57db67f-42l5n 1/1 Running 0 36m

nginx-metallb-5dd57db67f-kdsn4 1/1 Running 0 36m

speaker-hddh5 1/1 Running 0 61m

speaker-qfncm 1/1 Running 0 61m

[root@master LB 16:05:29]# kubectl get configmap -n metallb-system

NAME DATA AGE

config 1 50m

kube-root-ca.crt 1 62m

[root@master LB 16:19:36]# kubectl describe cm config -n metallb-system

Name: config

Namespace: metallb-system

Labels: <none>

Annotations: <none>

Data

====

config:

----

address-pools:

- name: default

protocol: layer2

addresses:

- 192.168.18.200-192.168.18.210

BinaryData

====

Events: <none>

#根据提DHCP分配的地址去浏览器查看

[root@master LB 16:19:54]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 8d

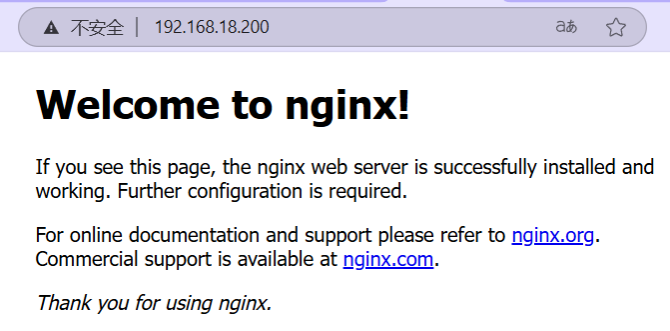

nginx-metallb LoadBalancer 10.110.225.199 192.168.18.200 80:32032/TCP 47m1.直接查看DHCP地址

2.节点结合node暴露端口查看

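Newer MetalLB versions

v0.15

1: Modify the kube-proxy configuration

```bash
# Keep namespace.yaml from the previous experiment and delete everything else
[root@master LB 16:51:07]# kubectl edit configmap -n kube-system kube-proxy
# Relevant part of the configuration opened in the editor:
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: "ipvs"        # check the mode
ipvs:
  strictARP: true   # set to true; MetalLB requires this when kube-proxy runs in ipvs mode
```

2: Create the resources from the YAML manifest (you may need a proxy to reach raw.githubusercontent.com)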

bash

[root@master LB 16:52:34]# kubectl apply -f https://raw.githubusercontent.com/metallb/metallb/v0.15.2/config/manifests/metallb-native.yaml

#同时也可以把YAML下载

[root@master LB 16:53:44]# wget https://raw.githubusercontent.com/metallb/metallb/v0.15.2/config/manifests/metallb-native.yaml

[root@master LB 17:10:37]# kubectl get all -n metallb-system

NAME READY STATUS RESTARTS AGE

pod/controller-8666ddd68b-khl6s 1/1 Running 0 101m

pod/speaker-b688x 1/1 Running 0 101m

pod/speaker-bcdh8 1/1 Running 0 101m

pod/speaker-wg6mz 1/1 Running 0 101m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/metallb-webhook-service ClusterIP 10.100.203.237 <none> 443/TCP 101m

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

daemonset.apps/speaker 3 3 3 3 3 kubernetes.io/os=linux 101m

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/controller 1/1 1 1 101m

NAME DESIRED CURRENT READY AGE

replicaset.apps/controller-8666ddd68b 1 1 1 101m

这里就和之前版本有区别

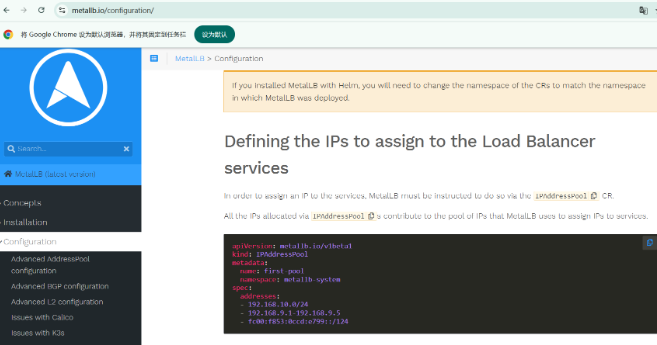

3:不需要创建configmap资源对象,而是直接使用IPAddressPool资源

```bash
[root@master LB 17:02:42]# vim ipaddresspool.yaml
[root@master LB 17:09:21]# cat ipaddresspool.yaml
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: first-pool
  namespace: metallb-system
spec:
  addresses:
  - 192.168.18.200-192.168.18.210
[root@master LB 17:09:24]# kubectl apply -f ipaddresspool.yaml
```

4: Check the created resources

```bash
[root@master LB 18:35:42]# kubectl get ipaddresspool -n metallb-system
NAME         AUTO ASSIGN   AVOID BUGGY IPS   ADDRESSES
first-pool   true          false             ["192.168.18.200-192.168.18.210"]
```

5: Create the nginx application

```bash
[root@master LB 18:38:01]# kubectl apply -f nginx-metallb.yaml
deployment.apps/nginx-metallb created
# Contents of nginx-metallb.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: metallb-system
  name: nginx-metallb
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx-mmetallb1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
```

Check

```bash
[root@master LB 18:40:13]# kubectl get deployment -n metallb-system
NAME            READY   UP-TO-DATE   AVAILABLE   AGE
controller      1/1     1            1           106m
nginx-metallb   2/2     2            2           35s
[root@master LB 18:40:21]# kubectl get pod -n metallb-system
NAME                             READY   STATUS    RESTARTS   AGE
controller-8666ddd68b-khl6s      1/1     Running   0          107m
nginx-metallb-5dd57db67f-896t5   1/1     Running   0          82s
nginx-metallb-5dd57db67f-kxwvf   1/1     Running   0          82s
speaker-b688x                    1/1     Running   0          107m
speaker-bcdh8                    1/1     Running   0          107m
speaker-wg6mz                    1/1     Running   0          107m
```

6: Create the Service

```bash
[root@master LB 18:41:08]# kubectl apply -f lb-service.yaml
# Contents of lb-service.yaml:
apiVersion: v1
kind: Service
metadata:
  name: nginx-metallb
  namespace: metallb-system   # must match the Deployment's namespace, or the selector finds no Pods
spec:
  type: LoadBalancer
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx
[root@master LB 18:42:50]# kubectl get svc -n metallb-system
NAME                      TYPE           CLUSTER-IP       EXTERNAL-IP      PORT(S)        AGE
metallb-webhook-service   ClusterIP      10.100.203.237   <none>           443/TCP        109m
nginx-metallb             LoadBalancer   10.97.21.176     192.168.18.200   80:32589/TCP   61s
```

Verify by browsing to the site

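4: ExternalName

Purpose:

- Brings a service outside the cluster into the cluster, so that Pods inside the cluster can communicate with the external service
- An ExternalName Service suits external services addressed by domain name; its drawback is that you cannot specify a port
- Note also that Pods in the cluster inherit the Node's DNS resolution rules, so any service the Node can reach is also reachable from a Pod. This is how services inside the cluster reach services outside it.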
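An ExternalName Service is just a CNAME record. Resolving it means following a chain of aliases until an A record is reached, as the `dig` output for `my-externalname` later in this section shows. This Python sketch uses a hard-coded record table copied from that output (real answers change over time). It caps the chain length to guard against alias loops.

```python
# Records copied from the dig output in this section; real values change over time.
CNAME = {
    "my-externalname.default.svc.cluster.local": "www.baidu.com",
    "www.baidu.com": "www.a.shifen.com",
}
A = {"www.a.shifen.com": ["180.101.49.44", "180.101.51.73"]}


def resolve(name: str, max_hops: int = 8) -> tuple:
    """Follow CNAMEs to the final A records and return (alias chain, addresses)."""
    chain = [name.rstrip(".")]
    for _ in range(max_hops):
        current = chain[-1]
        if current in A:
            return chain, A[current]
        if current not in CNAME:
            return chain, []  # treated as NXDOMAIN in this toy table
        chain.append(CNAME[current])
    raise RuntimeError("CNAME chain too long (possible loop)")


chain, addrs = resolve("my-externalname.default.svc.cluster.local.")
print(" -> ".join(chain))
print(addrs)
```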

Bringing in a public domain name

Check how the public domain name resolves

```bash
# Resolve Baidu's address
# dig -t a and dig -t A are equivalent
[root@master ~ 09:05:51]# dig -t a www.baidu.com @10.96.0.10

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.16 <<>> -t a www.baidu.com @10.96.0.10
;; global options: +cmd
;; Got answer:
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 7203
;; flags: qr rd ra; QUERY: 1, ANSWER: 3, AUTHORITY: 0, ADDITIONAL: 1

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;www.baidu.com. IN A

;; ANSWER SECTION:
www.baidu.com. 5 IN CNAME www.a.shifen.com.
www.a.shifen.com. 5 IN A 180.101.49.44
www.a.shifen.com. 5 IN A 180.101.51.73

;; Query time: 115 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Fri Jan 23 09:09:15 CST 2026
;; MSG SIZE rcvd: 149
```

Create the application YAML

```bash
[root@master ~ 09:09:15]# mkdir server_dir
[root@master ~ 09:16:43]# cd server_dir
[root@master server_dir 09:16:55]# kubectl apply -f externalname.yaml
service/my-externalname created
# Contents of externalname.yaml:
apiVersion: v1
kind: Service
metadata:
  name: my-externalname
  namespace: default
spec:
  type: ExternalName
  externalName: www.baidu.com
```

Check the created resources

```bash
[root@master server_dir 09:17:27]# kubectl get svc
NAME              TYPE           CLUSTER-IP   EXTERNAL-IP     PORT(S)   AGE
kubernetes        ClusterIP      10.96.0.1    <none>          443/TCP   9d
my-externalname   ExternalName   <none>       www.baidu.com   <none>    10s
```

Check the in-cluster DNS resolution

```bash
[root@master server_dir 09:17:37]# dig -t A my-externalname.default.svc.cluster.local. @10.96.0.10

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.16 <<>> -t A my-externalname.default.svc.cluster.local. @10.96.0.10
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 37074
;; flags: qr aa rd; QUERY: 1, ANSWER: 4, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;my-externalname.default.svc.cluster.local. IN A

;; ANSWER SECTION:
my-externalname.default.svc.cluster.local. 5 IN CNAME www.baidu.com.
www.baidu.com. 5 IN CNAME www.a.shifen.com.
www.a.shifen.com. 5 IN A 180.101.49.44
www.a.shifen.com. 5 IN A 180.101.51.73

;; Query time: 102 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Fri Jan 23 09:19:07 CST 2026
;; MSG SIZE rcvd: 245
```

Start a test Pod to resolve the names

```bash
[root@master server_dir 09:22:44]# kubectl run -it expod --image=busybox:1.28
/ # nslookup www.baidu.com
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name:      www.baidu.com
Address 1: 240e:e9:6002:1fd:0:ff:b0e1:fe69
Address 2: 240e:e9:6002:1ac:0:ff:b07e:36c5
Address 3: 180.101.51.73
Address 4: 180.101.49.44
/ # nslookup my-externalname.default.svc.cluster.local.
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name:      my-externalname.default.svc.cluster.local.
Address 1: 240e:e9:6002:1ac:0:ff:b07e:36c5
Address 2: 240e:e9:6002:1fd:0:ff:b0e1:fe69
Address 3: 180.101.49.44
Address 4: 180.101.51.73
# On Windows, nslookup can resolve these addresses as well
```

Access across namespaces

**Example:** enable access between Services in two namespaces, ns1 and ns2

1: Create namespace ns1 and its Deployment, Pod, and Services

```bash
[root@master server_dir 09:59:40]# kubectl apply -f pod-ns1.yaml
namespace/ns1 created
deployment.apps/pod-ns1 created
service/svc1 created
service/external-svc1 created
# Contents of pod-ns1.yaml:
apiVersion: v1
kind: Namespace
metadata:
  name: ns1
---
# Application
apiVersion: apps/v1
kind: Deployment
metadata:
  name: pod-ns1
  namespace: ns1
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 80
---
# Expose the port
apiVersion: v1
kind: Service
metadata:
  name: svc1
  namespace: ns1
spec:
  type: ClusterIP
  clusterIP: None   # headless Service
  selector:
    app: nginx
  ports:
  - port: 80
    targetPort: 80
---
# ExternalName Service referring to another namespace
apiVersion: v1
kind: Service
metadata:
  name: external-svc1
  namespace: ns1
spec:
  type: ExternalName
  externalName: svc2.ns2.svc.cluster.local   # bring Service svc2 from namespace ns2 into ns1
```

2: Check the resources in namespace ns1

```bash
[root@master server_dir 10:00:34]# kubectl get pod,svc -n ns1
NAME                          READY   STATUS    RESTARTS   AGE
pod/pod-ns1-f9fcffc5f-97nnr   1/1     Running   0          36s

NAME                    TYPE           CLUSTER-IP   EXTERNAL-IP                  PORT(S)   AGE
service/external-svc1   ExternalName   <none>       svc2.ns2.svc.cluster.local   <none>    36s
service/svc1            ClusterIP      None         <none>                       80/TCP    36s
```

3: Resolve the name with the cluster DNS server 10.96.0.10; the Pod IP is returned directly

```bash
# Look up the DNS address
[root@master server_dir 10:01:05]# kubectl get svc -n kube-system
NAME       TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
kube-dns   ClusterIP   10.96.0.10   <none>        53/UDP,53/TCP,9153/TCP   9d

# Resolve the local Service name
[root@master server_dir 10:01:34]# dig -t a svc1.ns1.svc.cluster.local. @10.96.0.10

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.16 <<>> -t a svc1.ns1.svc.cluster.local. @10.96.0.10
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 61629
;; flags: qr aa rd; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;svc1.ns1.svc.cluster.local. IN A

;; ANSWER SECTION:
svc1.ns1.svc.cluster.local. 30 IN A 10.244.166.134

;; Query time: 1 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Fri Jan 23 10:02:17 CST 2026
;; MSG SIZE rcvd: 97

# Verify
[root@master server_dir 10:02:17]# kubectl get pod -n ns1 -o wide
NAME                      READY   STATUS    RESTARTS   AGE     IP               NODE    NOMINATED NODE   READINESS GATES
pod-ns1-f9fcffc5f-97nnr   1/1     Running   0          2m15s   10.244.166.134   node1   <none>           <none>
```

4: Create namespace ns2 and its Deployment, Pod, and Services

```bash
[root@master server_dir 10:00:29]# kubectl apply -f pod-ns2.yaml
namespace/ns2 created
deployment.apps/pod-ns2 created
service/svc2 created
service/external-svc2 created
# Contents of pod-ns2.yaml:
apiVersion: v1
kind: Namespace
metadata:
  name: ns2
---
# Application
apiVersion: apps/v1
kind: Deployment
metadata:
  name: pod-ns2
  namespace: ns2
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 80
---
# Expose the port
apiVersion: v1
kind: Service
metadata:
  name: svc2
  namespace: ns2
spec:
  type: ClusterIP
  clusterIP: None   # headless Service
  selector:
    app: nginx
  ports:
  - port: 80
    targetPort: 80
---
# ExternalName Service referring to another namespace
apiVersion: v1
kind: Service
metadata:
  name: external-svc2
  namespace: ns2
spec:
  type: ExternalName
  externalName: svc1.ns1.svc.cluster.local   # bring Service svc1 from namespace ns1 into ns2
```

5: Check the resources in namespace ns2

```bash
[root@master server_dir 10:02:44]# kubectl get pod,svc -n ns2
NAME                          READY   STATUS    RESTARTS   AGE
pod/pod-ns2-f9fcffc5f-fvgjh   1/1     Running   0          2m33s

NAME                    TYPE           CLUSTER-IP   EXTERNAL-IP                  PORT(S)   AGE
service/external-svc2   ExternalName   <none>       svc1.ns1.svc.cluster.local   <none>    2m33s
service/svc2            ClusterIP      None         <none>                       80/TCP    2m33s
```

6: Resolve the Pod IP via DNS

```bash
[root@master server_dir 10:03:07]# dig -t a svc2.ns2.svc.cluster.local. @10.96.0.10

; <<>> DiG 9.11.4-P2-RedHat-9.11.4-26.P2.el7_9.16 <<>> -t a svc2.ns2.svc.cluster.local. @10.96.0.10
;; global options: +cmd
;; Got answer:
;; WARNING: .local is reserved for Multicast DNS
;; You are currently testing what happens when an mDNS query is leaked to DNS
;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 32768
;; flags: qr aa rd; QUERY: 1, ANSWER: 1, AUTHORITY: 0, ADDITIONAL: 1
;; WARNING: recursion requested but not available

;; OPT PSEUDOSECTION:
; EDNS: version: 0, flags:; udp: 4096
;; QUESTION SECTION:
;svc2.ns2.svc.cluster.local. IN A

;; ANSWER SECTION:
svc2.ns2.svc.cluster.local. 30 IN A 10.244.166.143

;; Query time: 1 msec
;; SERVER: 10.96.0.10#53(10.96.0.10)
;; WHEN: Fri Jan 23 10:03:55 CST 2026
;; MSG SIZE rcvd: 97

# Verify
[root@master server_dir 10:04:05]# kubectl get pod -n ns2 -o wide
NAME                      READY   STATUS    RESTARTS   AGE     IP               NODE    NOMINATED NODE   READINESS GATES
pod-ns2-f9fcffc5f-fvgjh   1/1     Running   0          3m39s   10.244.166.143   node1   <none>           <none>
```

Access by domain name from inside a Pod

Exec into the Pod in ns2 and use nslookup to resolve Service names, including the Service in ns1

```bash
[root@master server_dir 10:04:13]# kubectl exec -it pod-ns2-f9fcffc5f-fvgjh -n ns2 -- /bin/sh
/ # nslookup svc2
Server:         10.96.0.10
Address:        10.96.0.10:53

** server can't find svc2.cluster.local: NXDOMAIN

** server can't find svc2.cluster.local: NXDOMAIN

Name:   svc2.ns2.svc.cluster.local
Address: 10.244.166.143   # resolved

** server can't find svc2.svc.cluster.local: NXDOMAIN

** server can't find svc2.svc.cluster.local: NXDOMAIN

/ # nslookup svc1.ns1.svc.cluster.local.
Server:         10.96.0.10
Address:        10.96.0.10:53

Name:   svc1.ns1.svc.cluster.local
Address: 10.244.166.134
```

`svc2` is not a fully qualified domain name, so the resolver completes it by appending domain suffixes. When you run `nslookup svc2`, the name `svc2` is not resolved as-is. Instead, the suffixes from the search list are appended in turn, which produces three lookup attempts.

```bash
# You can also resolve the fully qualified name directly
/ # nslookup svc2.ns2.svc.cluster.local.
Server:         10.96.0.10
Address:        10.96.0.10:53

Name:   svc2.ns2.svc.cluster.local
Address: 10.244.166.143
```
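The retry behaviour comes from the search list and the `ndots` option in the Pod's `/etc/resolv.conf`. The Python sketch below assumes the values kubelet typically writes for a Pod in namespace `ns2` under the default `ClusterFirst` DNS policy: `search ns2.svc.cluster.local svc.cluster.local cluster.local` and `options ndots:5`. Node-level search domains may also be appended, so check the actual file in your Pod. The candidate order follows glibc-style rules. A glibc resolver stops at the first name that resolves; the busybox `nslookup` above reported the result for each candidate it tried.

```python
def candidates(name: str, search: list, ndots: int = 5) -> list:
    """Return the names a glibc-style stub resolver would try, in order."""
    if name.endswith("."):
        return [name]  # fully qualified: no search-list expansion
    expanded = [f"{name}.{domain}." for domain in search]
    absolute = name + "."
    if name.count(".") >= ndots:
        return [absolute] + expanded  # enough dots: try the name as-is first
    return expanded + [absolute]      # otherwise walk the search list first


SEARCH_NS2 = ["ns2.svc.cluster.local", "svc.cluster.local", "cluster.local"]

for n in candidates("svc2", SEARCH_NS2):
    print(n)
```

With `ndots:5`, even a public name such as `www.baidu.com` (two dots) is first tried with the cluster suffixes. A trailing dot skips all of that.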

sessionAffinity

Session stickiness

Set sessionAffinity to ClientIP (similar to nginx's ip_hash algorithm or LVS's sh algorithm)

Create an nginx application and access it through its ClusterIP

```bash
[root@master server_dir 10:52:30]# kubectl apply -f nginx-session.yaml
deployment.apps/nginx-server created
service/nginx-svc created
# Contents of nginx-session.yaml:
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-server
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: c1
        image: nginx:1.26-alpine
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-svc
spec:
  type: ClusterIP
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx
```

Check the resources

```bash
[root@master server_dir 10:52:46]# kubectl get pod
NAME                            READY   STATUS    RESTARTS   AGE
nginx-server-5b6d5cd699-j25q4   1/1     Running   0          3m23s
nginx-server-5b6d5cd699-mm59f   1/1     Running   0          3m23s
[root@master server_dir 10:56:09]# kubectl get svc
NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)   AGE
kubernetes   ClusterIP   10.96.0.1       <none>        443/TCP   9d
nginx-svc    ClusterIP   10.96.252.227   <none>        80/TCP    3m33s
```

Change the home page in each of the two Pods so they can be told apart

Verify load balancing

```bash
[root@master server_dir 10:56:19]# kubectl exec -it nginx-server-5b6d5cd699-j25q4 -- /bin/sh
/ # cd /usr/share/nginx/html/
/usr/share/nginx/html # echo web1 > index.html
/usr/share/nginx/html # exit
[root@master server_dir 10:57:16]# kubectl exec -it nginx-server-5b6d5cd699-mm59f -- /bin/sh
/ # cd /usr/share/nginx/html/
/usr/share/nginx/html # echo web2 > index.html
/usr/share/nginx/html # exit
[root@master server_dir 10:58:46]# curl http://10.96.252.227
web2
[root@master server_dir 10:59:08]# curl http://10.96.252.227
web1
```

Stickiness is controlled by the Session Affinity setting

```bash
[root@master server_dir 10:59:09]# kubectl describe svc nginx-svc
Name:              nginx-svc
Namespace:         default
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx
Type:              ClusterIP
IP Family Policy:  SingleStack
IP Families:       IPv4
IP:                10.96.252.227
IPs:               10.96.252.227
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.104.52:80,10.244.166.136:80
Session Affinity:  None
Events:            <none>
```

Change it

```bash
[root@master server_dir 11:01:16]# kubectl patch svc nginx-svc -p '{"spec":{"sessionAffinity":"ClientIP"}}'
service/nginx-svc patched
```

Check the result again

```bash
[root@master server_dir 11:01:25]# kubectl describe svc nginx-svc
Name:              nginx-svc
Namespace:         default
Labels:            <none>
Annotations:       <none>
Selector:          app=nginx
Type:              ClusterIP
IP Family Policy:  SingleStack
IP Families:       IPv4
IP:                10.96.252.227
IPs:               10.96.252.227
Port:              <unset>  80/TCP
TargetPort:        80/TCP
Endpoints:         10.244.104.52:80,10.244.166.136:80
Session Affinity:  ClientIP
Events:            <none>
```

Verify

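```bash
# Whichever Pod serves the first request keeps serving later requests until the affinity times out
[root@master server_dir 11:01:37]# curl http://10.96.252.227
web2
[root@master server_dir 11:01:57]# curl http://10.96.252.227
web2
```

The default sessionAffinity timeout is 10800 seconds (3 hours). It can be changed through `spec.sessionAffinityConfig.clientIP.timeoutSeconds`.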
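The following small Python model shows how `sessionAffinity: ClientIP` behaves. The first request from a client IP picks a backend, and later requests from the same IP reuse it until `timeoutSeconds` (10800 by default) have passed since that client was last seen. kube-proxy does this in the kernel through its iptables or ipvs rules, not in Python. The class name, the client IP, the clock values, and the random first pick are all illustrative assumptions.

```python
import random


class ClientIPAffinity:
    """Remember which backend each client IP used and reuse it until the entry expires."""

    def __init__(self, backends: list, timeout_seconds: int = 10800, seed: int = 0):
        self.backends = backends
        self.timeout = timeout_seconds
        self.table = {}  # client IP -> (backend, last-seen time)
        self.rng = random.Random(seed)

    def route(self, client_ip: str, now: float) -> str:
        entry = self.table.get(client_ip)
        if entry and now - entry[1] <= self.timeout:
            backend = entry[0]  # sticky: same Pod as before
        else:
            backend = self.rng.choice(self.backends)  # no entry or expired: pick again
        self.table[client_ip] = (backend, now)  # refresh the last-seen time
        return backend


aff = ClientIPAffinity(["web1", "web2"])
first = aff.route("192.168.18.128", now=0)
later = [aff.route("192.168.18.128", now=t) for t in (60, 120, 180)]
print(first, later)
```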
