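This article covers the core concepts of multi-agent reinforcement learning (MARL), explains how to model multi-agent environments, and implements several MARL algorithms. It discusses how MARL differs from single-agent RL, the challenges it faces (such as environment non-stationarity and credit assignment), and the main types of MARL problems (cooperative, competitive, and mixed). It then builds example multi-agent environments and implements independent learning and QMIX. Finally, it outlines approaches to the main MARL challenges and gives a guide to choosing an algorithm.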

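Course Objectives

- Understand the basic concepts of multi-agent reinforcement learning

- Learn how to model multi-agent environments

- Implement multi-agent reinforcement learning algorithms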

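Overview of Multi-Agent Reinforcement Learning

Differences from Single-Agent RL

Compared with single-agent reinforcement learning, multi-agent reinforcement learning (MARL) faces the following additional challenges: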

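- Non-stationarity: because the other agents are also learning, the environment dynamics seen by any single agent keep changing

- Credit assignment: it is hard to tell which agent contributed most to a shared team reward

- State-space explosion: the joint state space, and likewise the joint action space, grows exponentially with the number of agents

- Communication constraints: communication between agents may be limited or unavailable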
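The exponential growth is easy to quantify. If each of n agents chooses from |A| actions, a centralised learner faces |A|^n joint actions. A small illustrative sketch follows; the helper names are hypothetical and do not come from any library:

```python
from itertools import product
from typing import List, Tuple


def joint_action_space_size(n_agents: int, n_actions: int) -> int:
    """Number of joint actions when every agent picks one of n_actions actions."""
    return n_actions ** n_agents


def enumerate_joint_actions(n_agents: int, n_actions: int) -> List[Tuple[int, ...]]:
    """Explicitly list every joint action (only feasible for tiny problems)."""
    return list(product(range(n_actions), repeat=n_agents))


if __name__ == "__main__":
    # 5 actions per agent, as in the grid environments below (stay/up/down/left/right)
    for n in (1, 2, 4, 8):
        print(n, joint_action_space_size(n, 5))
    # 1 5
    # 2 25
    # 4 625
    # 8 390625
```

With the five grid actions used later in this article, eight agents already produce 390,625 joint actions. This is one reason value-decomposition methods such as QMIX factorise the joint value instead of enumerating joint actions.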

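Types of MARL Problems

- Cooperative: all agents share a common goal

- Competitive: agents have conflicting goals

- Mixed: the task contains both cooperative and competitive elements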
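The three categories can often be read directly off the reward structure. The rough sketch below classifies a game given as a payoff table that maps each joint action to a tuple of per-agent rewards; the helper and its labels are hypothetical. The game counts as cooperative if every agent always receives the same reward and as competitive if the rewards always sum to zero (a strict zero-sum check). Anything else is labelled mixed (general-sum).

```python
from typing import Dict, Sequence, Tuple


def classify_game(payoffs: Dict[Tuple[int, ...], Sequence[float]], tol: float = 1e-9) -> str:
    """Classify a game from its payoff table (joint action -> rewards, one per agent)."""
    rewards = list(payoffs.values())
    if all(max(r) - min(r) <= tol for r in rewards):
        return "cooperative"  # every agent always receives the same reward
    if all(abs(sum(r)) <= tol for r in rewards):
        return "competitive"  # zero-sum: one agent's gain is another's loss
    return "mixed"            # general-sum: neither of the above


# Two-player examples: matching pennies, a coordination game, a prisoner's dilemma
pennies = {(0, 0): (1, -1), (0, 1): (-1, 1), (1, 0): (-1, 1), (1, 1): (1, -1)}
coordination = {(0, 0): (1, 1), (0, 1): (0, 0), (1, 0): (0, 0), (1, 1): (1, 1)}
prisoners = {(0, 0): (-1, -1), (0, 1): (-3, 0), (1, 0): (0, -3), (1, 1): (-2, -2)}

print(classify_game(pennies))       # competitive
print(classify_game(coordination))  # cooperative
print(classify_game(prisoners))     # mixed
```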

Multi-Agent Environment Modelling

Partially Observable Markov Decision Processes (POMDPs)
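In the cooperative multi-agent setting, the POMDP is usually generalised to a decentralised POMDP (Dec-POMDP). For reference, here it is in the common textbook notation. These symbols are standard ones introduced here; they are not defined elsewhere in this article.

$$
\mathcal{M} = \langle \mathcal{N}, \mathcal{S}, \{\mathcal{A}_i\}_{i \in \mathcal{N}}, P, R, \{\Omega_i\}_{i \in \mathcal{N}}, O, \gamma \rangle
$$

- $\mathcal{N} = \{1, \dots, n\}$ is the set of agents.
- $P(s' \mid s, \mathbf{a})$ is the transition probability under the joint action $\mathbf{a} = (a_1, \dots, a_n)$.
- $R(s, \mathbf{a})$ is the shared team reward.
- $O(\mathbf{o} \mid s', \mathbf{a})$ is the probability of the joint observation $\mathbf{o} = (o_1, \dots, o_n)$, with $o_i \in \Omega_i$.
- $\gamma$ is the discount factor.

Each agent chooses $a_i$ from its own action-observation history only. If every agent instead receives its own reward $R_i$, the model becomes a partially observable stochastic game. The `MultiAgentEnvironment` class that follows returns one reward per agent, so it fits that more general form.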

```python
import random
from typing import Dict, List, Tuple

import numpy as np
from gym import spaces  # with gymnasium installed instead: from gymnasium import spaces


class MultiAgentEnvironment:
    """Base class for multi-agent environments."""

    def __init__(self, n_agents: int, state_dim: int, action_dim: int,
                 observation_dim: int, max_steps: int = 100):
        self.n_agents = n_agents
        self.state_dim = state_dim
        self.action_dim = action_dim
        self.observation_dim = observation_dim
        self.max_steps = max_steps
        self.step_count = 0

        # Global state space, plus one observation space and one action space per agent
        self.state_space = spaces.Box(low=-np.inf, high=np.inf, shape=(state_dim,))
        self.observation_spaces = [spaces.Box(low=-np.inf, high=np.inf, shape=(observation_dim,))
                                   for _ in range(n_agents)]
        self.action_spaces = [spaces.Discrete(action_dim) for _ in range(n_agents)]

        # Current episode data
        self.state = None
        self.observations = [None] * n_agents
        self.rewards = [0.0] * n_agents
        self.dones = [False] * n_agents

    def reset(self) -> List[np.ndarray]:
        """Reset the environment and return every agent's initial observation."""
        self.state = self.sample_initial_state()
        self.step_count = 0
        self.observations = [self.get_observation(agent_id) for agent_id in range(self.n_agents)]
        self.rewards = [0.0] * self.n_agents
        self.dones = [False] * self.n_agents
        return self.observations

    def step(self, actions: List[int]) -> Tuple[List[np.ndarray], List[float], List[bool], Dict]:
        """Apply one joint action (one action per agent)."""
        if len(actions) != self.n_agents:
            raise ValueError(f"Expected {self.n_agents} actions, got {len(actions)}")

        # State transition, then rewards on the resulting state
        next_state = self.transition(self.state, actions)
        rewards = self.compute_rewards(next_state, actions)

        # Commit the new state before building observations: get_observation()
        # reads self.state, so building them first would return stale observations
        self.state = next_state
        self.step_count += 1

        # Termination check uses the updated step counter
        dones = [self.is_done() for _ in range(self.n_agents)]
        observations = [self.get_observation(agent_id) for agent_id in range(self.n_agents)]

        self.observations = observations
        self.rewards = rewards
        self.dones = dones
        info = {'step': self.step_count}
        return observations, rewards, dones, info

    def sample_initial_state(self) -> np.ndarray:
        """Sample an initial state."""
        return np.random.rand(self.state_dim)

    def transition(self, state: np.ndarray, actions: List[int]) -> np.ndarray:
        """State transition function (implemented by subclasses)."""
        raise NotImplementedError

    def compute_rewards(self, state: np.ndarray, actions: List[int]) -> List[float]:
        """Reward function (implemented by subclasses)."""
        raise NotImplementedError

    def get_observation(self, agent_id: int) -> np.ndarray:
        """Observation of one agent (implemented by subclasses)."""
        raise NotImplementedError

    def is_done(self) -> bool:
        """The episode ends after max_steps steps."""
        return self.step_count >= self.max_steps


class SimpleCooperativeEnv(MultiAgentEnvironment):
    """Simple cooperative environment: agents must work together to reach a target."""

    def __init__(self, n_agents: int = 2, grid_size: int = 5):
        self.grid_size = grid_size
        action_dim = 5  # 0: stay, 1: up, 2: down, 3: left, 4: right
        # State: [agent1_x, agent1_y, agent2_x, agent2_y, ..., target_x, target_y]
        state_dim = 2 * n_agents + 2
        observation_dim = 2 * n_agents + 2  # every agent observes all positions
        super().__init__(n_agents, state_dim, action_dim, observation_dim)
        # Fixed target in the corner (grid_size - 1, grid_size - 1)
        self.target_pos = np.array([grid_size - 1, grid_size - 1])

    def sample_initial_state(self) -> np.ndarray:
        """Agents start at random positions; the target is fixed."""
        state = np.zeros(self.state_dim)
        for i in range(self.n_agents):
            # Sampling from [0, grid_size - 2] keeps agents off the target cell
            state[2 * i] = random.randint(0, self.grid_size - 2)
            state[2 * i + 1] = random.randint(0, self.grid_size - 2)
        state[-2] = self.target_pos[0]
        state[-1] = self.target_pos[1]
        return state

    def transition(self, state: np.ndarray, actions: List[int]) -> np.ndarray:
        """Move every agent one cell according to its action, clipped to the grid."""
        new_state = state.copy()
        for i, action in enumerate(actions):
            x, y = int(new_state[2 * i]), int(new_state[2 * i + 1])
            if action == 1:    # up
                y = max(0, y - 1)
            elif action == 2:  # down
                y = min(self.grid_size - 1, y + 1)
            elif action == 3:  # left
                x = max(0, x - 1)
            elif action == 4:  # right
                x = min(self.grid_size - 1, x + 1)
            # action == 0: stay in place
            new_state[2 * i] = x
            new_state[2 * i + 1] = y
        return new_state

    def compute_rewards(self, state: np.ndarray, actions: List[int]) -> List[float]:
        """Individual shaping reward plus a shared bonus for agents on the target."""
        agent_positions = [np.array([state[2 * i], state[2 * i + 1]]) for i in range(self.n_agents)]
        target_pos = np.array([state[-2], state[-1]])
        distances = [np.linalg.norm(pos - target_pos) for pos in agent_positions]

        # Team bonus: +1 for every agent currently on the target, shared by all agents
        team_reward = sum(1.0 for pos in agent_positions if np.array_equal(pos, target_pos))

        # Individual reward: higher the closer the agent is to the target (clipped at 0)
        individual_rewards = [max(0.0, 1 - dist / self.grid_size) for dist in distances]

        return [individual_rewards[i] + 0.5 * team_reward for i in range(self.n_agents)]

    def get_observation(self, agent_id: int) -> np.ndarray:
        """Positions of all agents and the target, normalised by grid_size."""
        # The observation layout matches the state layout, so every agent sees the same view
        return self.state / self.grid_size

    def render(self):
        """Print the grid (rows index x, columns index y)."""
        grid = np.zeros((self.grid_size, self.grid_size), dtype=int)
        target_x, target_y = int(self.state[-2]), int(self.state[-1])
        grid[target_x, target_y] = 2  # target
        for i in range(self.n_agents):
            agent_x, agent_y = int(self.state[2 * i]), int(self.state[2 * i + 1])
            # 1 = agent on an empty cell, 3 = agent sharing a cell with something else
            grid[agent_x, agent_y] = 1 if grid[agent_x, agent_y] == 0 else 3
        print("Environment state (0=empty, 1=agent, 2=target, 3=overlap):")
        print(grid)


# Quick test of the cooperative environment
def test_simple_cooperative_env():
    print("=== Testing the simple cooperative environment ===")
    env = SimpleCooperativeEnv(n_agents=2, grid_size=5)
    obs = env.reset()
    print(f"Initial observations: {obs}")
    env.render()

    # Take a few random steps
    for step in range(5):
        actions = [random.randint(0, 4) for _ in range(env.n_agents)]
        next_obs, rewards, dones, info = env.step(actions)
        print(f"Step {step + 1}: actions={actions}, rewards={rewards}")
        env.render()
        if any(dones):
            print("Episode finished")
            break
```

Multi-Agent Reinforcement Learning Algorithms

Independent Learning
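In independent learning, each agent runs ordinary tabular Q-learning on its own discretised observation $o_i$. It simply treats the other agents as part of the environment. The update performed by `QTableAgent.update` is the standard one-step rule, where $\alpha$ is `learning_rate` and $\gamma$ is `discount_factor`:

$$
Q_i(o_i, a_i) \leftarrow Q_i(o_i, a_i) + \alpha \Big[ r_i + \gamma \max_{a'} Q_i(o_i', a') - Q_i(o_i, a_i) \Big]
$$

On terminal transitions, the target is just $r_i$. Because the other agents' policies keep changing, the distribution behind this target drifts over time. This is exactly the non-stationarity problem, and it is why independent Q-learning has no general convergence guarantee.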

```python
class QTableAgent:
    """Tabular Q-learning agent (used for independent learning)."""

    def __init__(self, agent_id: int, action_space: int, observation_dim: int,
                 learning_rate: float = 0.1, discount_factor: float = 0.9,
                 epsilon: float = 0.1):
        self.agent_id = agent_id
        self.action_space = action_space
        self.observation_dim = observation_dim
        self.learning_rate = learning_rate
        self.discount_factor = discount_factor
        self.epsilon = epsilon
        # Q-table keyed by the discretised observation
        self.q_table: Dict[str, List[float]] = {}

    def discretize_observation(self, obs: np.ndarray) -> str:
        """Discretise a continuous observation into a hashable key."""
        # Simple scheme: 10 bins per dimension, assuming values in [0, 1]
        discrete_values = [int(val * 10) for val in obs]
        return str(discrete_values)

    def get_action(self, obs: np.ndarray, training: bool = True) -> int:
        """Epsilon-greedy action selection."""
        obs_key = self.discretize_observation(obs)
        # Unseen observations start with all-zero Q-values
        if obs_key not in self.q_table:
            self.q_table[obs_key] = [0.0] * self.action_space
        # Explore with probability epsilon during training, otherwise act greedily
        if training and random.random() < self.epsilon:
            return random.randint(0, self.action_space - 1)
        return int(np.argmax(self.q_table[obs_key]))

    def update(self, obs: np.ndarray, action: int, reward: float,
               next_obs: np.ndarray, done: bool):
        """One-step Q-learning update."""
        obs_key = self.discretize_observation(obs)
        next_obs_key = self.discretize_observation(next_obs)
        for key in (obs_key, next_obs_key):
            if key not in self.q_table:
                self.q_table[key] = [0.0] * self.action_space

        current_q = self.q_table[obs_key][action]
        # No bootstrapping from terminal transitions
        if done:
            target_q = reward
        else:
            target_q = reward + self.discount_factor * max(self.q_table[next_obs_key])
        self.q_table[obs_key][action] = current_q + self.learning_rate * (target_q - current_q)


class IndependentLearningTrainer:
    """Independent learning: every agent learns its own Q-table and ignores the others."""

    def __init__(self, env: MultiAgentEnvironment):
        self.env = env
        # One tabular Q-learning agent per environment agent
        self.agents = [
            QTableAgent(
                agent_id=i,
                action_space=env.action_spaces[i].n,
                observation_dim=env.observation_dim,
                learning_rate=0.1,
                discount_factor=0.9,
                epsilon=0.1,
            )
            for i in range(env.n_agents)
        ]

    def train_episode(self) -> List[float]:
        """Run and learn from one episode; return each agent's total reward."""
        obs_list = self.env.reset()
        total_rewards = [0.0] * self.env.n_agents
        step_count = 0

        while step_count < self.env.max_steps:
            # Every agent chooses its action independently
            actions = [agent.get_action(obs_list[i], training=True)
                       for i, agent in enumerate(self.agents)]
            next_obs_list, rewards, dones, info = self.env.step(actions)

            # Every agent updates its own Q-table from its own reward
            for i, agent in enumerate(self.agents):
                agent.update(obs_list[i], actions[i], rewards[i], next_obs_list[i], dones[i])
                total_rewards[i] += rewards[i]

            obs_list = next_obs_list
            step_count += 1
            if any(dones):
                break
        return total_rewards


def test_independent_learning():
    print("\n=== Independent learning test ===")
    env = SimpleCooperativeEnv(n_agents=2, grid_size=5)
    trainer = IndependentLearningTrainer(env)
    # Train for a few episodes
    for episode in range(10):
        rewards = trainer.train_episode()
        print(f"Episode {episode + 1}, Rewards: {rewards}")
```

Value Decomposition (QMIX)
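The key idea of QMIX is that the joint value Q_tot is monotonic in every agent's Q_i (∂Q_tot/∂Q_i ≥ 0). As a result, each agent greedily maximising its own Q_i also maximises Q_tot, so decentralised execution is consistent with the centralised value. The original QMIX paper enforces the non-negative mixing weights by taking the absolute value of the hypernetwork outputs.

The NumPy sketch below checks this property by brute force. It assumes a two-layer mixer with ELU in between, and random weights stand in for the state-conditioned hypernetwork outputs. It illustrates the property only; it is not the trained network.

```python
import itertools

import numpy as np


def elu(x):
    """ELU activation, used between the two mixing layers."""
    return np.where(x > 0, x, np.exp(x) - 1.0)


def monotonic_mix(agent_qs, w1, b1, w2, b2):
    """Two-layer mixer; abs() keeps all mixing weights non-negative,
    so the output is non-decreasing in every agent's Q-value."""
    hidden = elu(agent_qs @ np.abs(w1) + b1)   # (embed,)
    return float(hidden @ np.abs(w2) + b2)     # scalar Q_tot


rng = np.random.default_rng(0)
n_agents, n_actions, embed = 3, 4, 8
q_tables = rng.normal(size=(n_agents, n_actions))  # Q_i(a_i) for each agent
# Random weights stand in for the state-conditioned hypernetwork outputs
w1, b1 = rng.normal(size=(n_agents, embed)), rng.normal(size=embed)
w2, b2 = rng.normal(size=embed), rng.normal()


def q_tot(joint_action):
    """Mix the Q-values of the chosen actions into Q_tot."""
    qs = np.array([q_tables[i, a] for i, a in enumerate(joint_action)])
    return monotonic_mix(qs, w1, b1, w2, b2)


# Decentralised: every agent independently takes the argmax of its own Q_i
greedy = tuple(int(a) for a in q_tables.argmax(axis=1))
# Centralised: brute-force search over all 4**3 = 64 joint actions
best_joint = max(itertools.product(range(n_actions), repeat=n_agents), key=q_tot)
print(greedy == best_joint)  # True
```

Without the `np.abs` calls, the mixer could assign negative weight to some agent. The decentralised argmax and the joint argmax would then be free to disagree.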

```python
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim


class IndividualRNN(nn.Module):
    """Per-agent recurrent Q-network: FC -> GRUCell -> FC."""

    def __init__(self, obs_dim: int, action_dim: int, rnn_hidden_dim: int = 64):
        super().__init__()
        self.fc1 = nn.Linear(obs_dim, rnn_hidden_dim)
        self.rnn = nn.GRUCell(rnn_hidden_dim, rnn_hidden_dim)
        self.fc2 = nn.Linear(rnn_hidden_dim, action_dim)

    def forward(self, obs, hidden_state):
        x = F.relu(self.fc1(obs))
        h = self.rnn(x, hidden_state)
        q = self.fc2(h)
        return q, h  # Q-values for every action, new hidden state


class MixingNetwork(nn.Module):
    """Mixing network (the core of QMIX)."""

    def __init__(self, n_agents: int, state_dim: int, embed_dim: int = 32):
        super().__init__()
        self.n_agents = n_agents
        self.embed_dim = embed_dim

        # State encoder that feeds the hypernetworks
        self.state_encoder = nn.Sequential(
            nn.Linear(state_dim, embed_dim),
            nn.ReLU(),
            nn.Linear(embed_dim, embed_dim),
            nn.ReLU(),
        )
        # Hypernetworks for the first mixing layer: weights (n_agents x embed_dim) and bias
        self.hyper_w1 = nn.Sequential(
            nn.Linear(embed_dim, embed_dim),
            nn.ReLU(),
            nn.Linear(embed_dim, n_agents * embed_dim),
        )
        self.hyper_b1 = nn.Sequential(
            nn.Linear(embed_dim, embed_dim),
            nn.ReLU(),
            nn.Linear(embed_dim, embed_dim),
        )
        # Hypernetworks for the second mixing layer: weights (embed_dim x 1) and scalar bias
        self.hyper_w2 = nn.Sequential(
            nn.Linear(embed_dim, embed_dim),
            nn.ReLU(),
            nn.Linear(embed_dim, embed_dim),
        )
        self.hyper_b2 = nn.Sequential(
            nn.Linear(embed_dim, embed_dim),
            nn.ReLU(),
            nn.Linear(embed_dim, 1),
        )

    def forward(self, agent_qs, states):
        """
        Args:
            agent_qs: (batch_size, n_agents) - Q-value of each agent's chosen action
            states:   (batch_size, state_dim) - global state
        Returns:
            q_tot:    (batch_size,) - joint Q-value
        """
        bs = agent_qs.size(0)
        state_encoded = self.state_encoder(states)  # (batch_size, embed_dim)

        # First layer. abs() keeps the weights non-negative, which makes Q_tot
        # monotonic in every agent's Q-value (dQ_tot/dQ_i >= 0)
        w1 = torch.abs(self.hyper_w1(state_encoded)).view(bs, self.n_agents, self.embed_dim)
        b1 = self.hyper_b1(state_encoded).view(bs, 1, self.embed_dim)
        # (batch_size, 1, n_agents) @ (batch_size, n_agents, embed_dim) -> (batch_size, 1, embed_dim)
        hidden = F.elu(torch.bmm(agent_qs.view(bs, 1, self.n_agents), w1) + b1)

        # Second layer, also with non-negative weights
        w2 = torch.abs(self.hyper_w2(state_encoded)).view(bs, self.embed_dim, 1)
        b2 = self.hyper_b2(state_encoded).view(bs, 1, 1)
        # (batch_size, 1, embed_dim) @ (batch_size, embed_dim, 1) -> (batch_size, 1, 1)
        q_tot = torch.bmm(hidden, w2) + b2
        return q_tot.view(bs)


class QMixAgent:
    """QMIX learner: one recurrent Q-network per agent plus a monotonic mixer."""

    def __init__(self, n_agents: int, obs_dim: int, action_dim: int, state_dim: int):
        self.n_agents = n_agents
        self.obs_dim = obs_dim
        self.action_dim = action_dim
        self.state_dim = state_dim
        self.rnn_hidden_dim = 64
        self.gamma = 0.9

        # One RNN per agent (no parameter sharing in this version) and the mixer
        self.individual_rnns = [IndividualRNN(obs_dim, action_dim, self.rnn_hidden_dim)
                                for _ in range(n_agents)]
        self.mixer = MixingNetwork(n_agents, state_dim)

        # Target networks
        self.target_individual_rnns = [IndividualRNN(obs_dim, action_dim, self.rnn_hidden_dim)
                                       for _ in range(n_agents)]
        self.target_mixer = MixingNetwork(n_agents, state_dim)

        # A single optimiser over all agent networks and the mixer
        self.params = []
        for rnn in self.individual_rnns:
            self.params.extend(rnn.parameters())
        self.params.extend(self.mixer.parameters())
        self.optimizer = optim.Adam(self.params, lr=0.001)

        # Start with target networks equal to the online networks
        self.update_target_networks()

    def update_target_networks(self):
        """Copy the online weights into the target networks."""
        for i in range(self.n_agents):
            self.target_individual_rnns[i].load_state_dict(self.individual_rnns[i].state_dict())
        self.target_mixer.load_state_dict(self.mixer.state_dict())

    def get_actions(self, obs_list: List[np.ndarray], hidden_states: List[torch.Tensor],
                    training: bool = True, epsilon: float = 0.1) -> Tuple[List[int], List[torch.Tensor]]:
        """Epsilon-greedy decentralised action selection (each agent uses only its own RNN)."""
        actions = []
        new_hidden_states = []
        with torch.no_grad():
            for i in range(self.n_agents):
                obs_tensor = torch.FloatTensor(obs_list[i]).unsqueeze(0)  # (1, obs_dim)
                q_values, new_hidden = self.individual_rnns[i](obs_tensor, hidden_states[i])
                if training and random.random() < epsilon:
                    action = random.randint(0, self.action_dim - 1)
                else:
                    action = q_values.argmax().item()
                actions.append(action)
                new_hidden_states.append(new_hidden)
        return actions, new_hidden_states

    def train(self, batch_data: Dict[str, torch.Tensor]) -> float:
        """One gradient step on a batch of episodes."""
        obs_batch = batch_data['obs']          # (batch_size, T, n_agents, obs_dim)
        actions_batch = batch_data['actions']  # (batch_size, T, n_agents)
        rewards_batch = batch_data['rewards']  # (batch_size, T, 1)
        states_batch = batch_data['states']    # (batch_size, T, state_dim)
        dones_batch = batch_data['dones']      # (batch_size, T, 1)
        batch_size, max_len = obs_batch.shape[0], obs_batch.shape[1]

        # Unroll online and target RNNs over the whole episode from zero hidden states,
        # so that at step t both networks have seen observations 0..t
        hidden = [torch.zeros(batch_size, self.rnn_hidden_dim) for _ in range(self.n_agents)]
        target_hidden = [torch.zeros(batch_size, self.rnn_hidden_dim) for _ in range(self.n_agents)]
        chosen_qs, target_max_qs = [], []
        for t in range(max_len):
            qs_t, target_qs_t = [], []
            for i in range(self.n_agents):
                q, hidden[i] = self.individual_rnns[i](obs_batch[:, t, i], hidden[i])
                # Q-value of the action actually taken
                qs_t.append(q.gather(1, actions_batch[:, t, i].unsqueeze(1)).squeeze(1))
                with torch.no_grad():
                    target_q, target_hidden[i] = self.target_individual_rnns[i](
                        obs_batch[:, t, i], target_hidden[i])
                target_qs_t.append(target_q.max(dim=1)[0])  # greedy value of each agent
            chosen_qs.append(torch.stack(qs_t, dim=1))             # (batch_size, n_agents)
            target_max_qs.append(torch.stack(target_qs_t, dim=1))  # (batch_size, n_agents)

        loss = 0.0
        for t in range(max_len):
            q_tot = self.mixer(chosen_qs[t], states_batch[:, t])  # (batch_size,)
            with torch.no_grad():
                if t + 1 < max_len:
                    next_q_tot = self.target_mixer(target_max_qs[t + 1], states_batch[:, t + 1])
                else:
                    # No successor is stored after the last step (it is terminal here)
                    next_q_tot = torch.zeros_like(q_tot)
                target_q = rewards_batch[:, t, 0] + \
                    self.gamma * (1 - dones_batch[:, t, 0]) * next_q_tot
            # Squared TD error of the joint value
            loss = loss + F.mse_loss(q_tot, target_q)

        self.optimizer.zero_grad()
        loss.backward()
        self.optimizer.step()
        return loss.item()


class QMixTrainer:
    """QMIX training loop (simplified: one gradient step per collected episode)."""

    def __init__(self, env: MultiAgentEnvironment, max_episodes: int = 1000):
        self.env = env
        self.max_episodes = max_episodes
        self.agent = QMixAgent(
            n_agents=env.n_agents,
            obs_dim=env.observation_dim,
            action_dim=env.action_spaces[0].n,
            state_dim=env.state_dim,
        )

    def train(self) -> List[float]:
        episode_rewards = []
        for episode in range(self.max_episodes):
            obs_list = self.env.reset()
            total_reward = 0.0
            step_count = 0
            # Trajectory of the current episode
            trajectory = {'obs': [], 'actions': [], 'rewards': [], 'states': [], 'dones': []}
            hidden_states = [torch.zeros(1, self.agent.rnn_hidden_dim) for _ in range(self.env.n_agents)]
            # Decay epsilon linearly from 1.0 to 0.05 over the first 200 episodes
            epsilon = max(0.05, 1.0 - episode / 200)

            while step_count < self.env.max_steps:
                actions, hidden_states = self.agent.get_actions(
                    obs_list, hidden_states, training=True, epsilon=epsilon)
                # Global state in which the joint action is taken (recorded before stepping)
                state = self.env.state.copy()
                next_obs_list, rewards, dones, info = self.env.step(actions)

                trajectory['obs'].append([obs.copy() for obs in obs_list])
                trajectory['actions'].append(list(actions))
                trajectory['rewards'].append([sum(rewards)])  # team reward = sum of agent rewards
                trajectory['states'].append(state)
                trajectory['dones'].append([float(any(dones))])

                obs_list = next_obs_list
                total_reward += sum(rewards)
                step_count += 1
                if any(dones):
                    break

            episode_rewards.append(total_reward)

            # Train on this single episode (simplified; normally a replay buffer of many episodes is used)
            if len(trajectory['obs']) > 1:
                # Add a leading batch dimension of 1 to match QMixAgent.train's expected shapes
                batch_data = {
                    'obs': torch.FloatTensor(np.array(trajectory['obs'])).unsqueeze(0),
                    'actions': torch.LongTensor(np.array(trajectory['actions'])).unsqueeze(0),
                    'rewards': torch.FloatTensor(np.array(trajectory['rewards'])).unsqueeze(0),
                    'states': torch.FloatTensor(np.array(trajectory['states'])).unsqueeze(0),
                    'dones': torch.FloatTensor(np.array(trajectory['dones'])).unsqueeze(0),
                }
                self.agent.train(batch_data)

            # Periodically sync the target networks and report progress
            if episode % 100 == 0:
                self.agent.update_target_networks()
                avg_reward = np.mean(episode_rewards[-100:])
                print(f"Episode {episode}, Avg Reward: {avg_reward:.2f}")
        return episode_rewards


def test_qmix():
    print("\n=== QMIX (conceptual overview) ===")
    # Full QMIX training is slow, so this test only summarises the idea;
    # QMixTrainer(SimpleCooperativeEnv(), max_episodes=...).train() runs the training loop
    print("QMIX in brief:")
    print("- Each agent has its own recurrent network that outputs individual Q-values")
    print("- A monotonic mixing network combines the individual Q-values into a joint Q_tot")
    print("- Monotonicity (dQ_tot/dQ_i >= 0) keeps each agent's greedy action consistent with the greedy joint action")
    print("- Suited to cooperative multi-agent tasks")
```

Cooperative and Competitive Scenarios

Competitive Environment: Predator-Prey
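The capture test in the predator-prey rewards is a pairwise Euclidean-distance check between every predator and every prey. As a sketch, the same check can be vectorised with NumPy broadcasting. It assumes positions are given as (n, 2) arrays and uses the same capture radius of 1.0; the function name is hypothetical.

```python
import numpy as np


def captured_prey(predators: np.ndarray, prey: np.ndarray, capture_radius: float = 1.0) -> np.ndarray:
    """Boolean mask: True for each prey within capture_radius of any predator.

    predators: (n_predators, 2) positions; prey: (n_prey, 2) positions.
    """
    # (n_prey, 1, 2) - (1, n_predators, 2) -> (n_prey, n_predators, 2)
    diffs = prey[:, None, :] - predators[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)  # (n_prey, n_predators)
    return (dists <= capture_radius).any(axis=1)


predators = np.array([[0.0, 0.0], [5.0, 5.0]])
prey = np.array([[1.0, 0.0], [3.0, 3.0]])
print(captured_prey(predators, prey).tolist())  # [True, False]
```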

```python
class PredatorPreyEnv(MultiAgentEnvironment):
    """Predator-prey environment: agents 0..n_predators-1 are predators, the rest are prey."""

    def __init__(self, n_predators: int = 2, n_prey: int = 1, grid_size: int = 10):
        self.n_predators = n_predators
        self.n_prey = n_prey
        self.grid_size = grid_size
        n_agents = n_predators + n_prey
        action_dim = 5  # stay, up, down, left, right
        # State: positions of all agents [pred1_x, pred1_y, ..., prey1_x, prey1_y]
        state_dim = 2 * n_agents
        observation_dim = 2 * n_agents  # every agent sees all positions
        super().__init__(n_agents, state_dim, action_dim, observation_dim)
        # A prey counts as captured when a predator is within this Euclidean distance
        self.capture_radius = 1.0

    def sample_initial_state(self) -> np.ndarray:
        """Random predator positions; each prey starts more than 3 cells from every predator."""
        state = np.zeros(self.state_dim)
        # Place predators at random
        for i in range(self.n_predators):
            state[2 * i] = random.randint(0, self.grid_size - 1)
            state[2 * i + 1] = random.randint(0, self.grid_size - 1)
        # Place prey away from the predators by rejection sampling
        # (on very small grids no valid cell may exist and this loop would not terminate)
        for i in range(self.n_prey):
            prey_idx = self.n_predators + i
            while True:
                x = random.randint(0, self.grid_size - 1)
                y = random.randint(0, self.grid_size - 1)
                min_dist = min((np.hypot(x - state[2 * j], y - state[2 * j + 1])
                                for j in range(self.n_predators)), default=float('inf'))
                if min_dist > 3:
                    state[2 * prey_idx] = x
                    state[2 * prey_idx + 1] = y
                    break
        return state

    def transition(self, state: np.ndarray, actions: List[int]) -> np.ndarray:
        """Move every agent one cell according to its action, clipped to the grid."""
        new_state = state.copy()
        for i, action in enumerate(actions):
            x, y = int(new_state[2 * i]), int(new_state[2 * i + 1])
            if action == 1:    # up
                y = max(0, y - 1)
            elif action == 2:  # down
                y = min(self.grid_size - 1, y + 1)
            elif action == 3:  # left
                x = max(0, x - 1)
            elif action == 4:  # right
                x = min(self.grid_size - 1, x + 1)
            new_state[2 * i] = x
            new_state[2 * i + 1] = y
        return new_state

    def compute_rewards(self, state: np.ndarray, actions: List[int]) -> List[float]:
        """Predators are rewarded for capturing and approaching prey; prey for staying away."""
        rewards = [0.0] * self.n_agents
        prey_positions = [np.array([state[2 * (self.n_predators + i)], state[2 * (self.n_predators + i) + 1]])
                          for i in range(self.n_prey)]
        predator_positions = [np.array([state[2 * i], state[2 * i + 1]]) for i in range(self.n_predators)]

        # Capture check: a prey is captured if any predator is within capture_radius
        captured = [any(np.linalg.norm(pred_pos - prey_pos) <= self.capture_radius
                        for pred_pos in predator_positions)
                    for prey_pos in prey_positions]

        # Capture rewards
        for prey_idx in range(self.n_prey):
            if captured[prey_idx]:
                # The captured prey is penalised
                rewards[self.n_predators + prey_idx] = -10.0
                # All predators share the capture reward equally
                shared_reward = 5.0
                for pred_idx in range(self.n_predators):
                    rewards[pred_idx] += shared_reward / self.n_predators

        # Shaping reward for predators: the closer to the nearest prey, the higher
        # (10 is a hand-picked normalising constant)
        for pred_idx, pred_pos in enumerate(predator_positions):
            min_dist_to_prey = min(np.linalg.norm(pred_pos - prey_pos) for prey_pos in prey_positions)
            rewards[pred_idx] += max(0.0, (10 - min_dist_to_prey) / 10)

        # Prey that are still free are rewarded for their distance to the nearest predator
        for prey_idx, prey_pos in enumerate(prey_positions):
            if not captured[prey_idx]:
                min_dist_to_pred = min(np.linalg.norm(prey_pos - pred_pos) for pred_pos in predator_positions)
                rewards[self.n_predators + prey_idx] += min_dist_to_pred / 10
        return rewards

    def get_observation(self, agent_id: int) -> np.ndarray:
        """Positions of all agents, normalised by grid_size."""
        return self.state / self.grid_size

    def render(self):
        """Print the grid (rows index x, columns index y)."""
        grid = np.zeros((self.grid_size, self.grid_size), dtype=int)
        # Prey are marked with 2
        for i in range(self.n_prey):
            idx = self.n_predators + i
            grid[int(self.state[2 * idx]), int(self.state[2 * idx + 1])] = 2
        # Predators are marked with 1, or 3 when sharing a cell
        for i in range(self.n_predators):
            x, y = int(self.state[2 * i]), int(self.state[2 * i + 1])
            grid[x, y] = 1 if grid[x, y] == 0 else 3
        print("Predator-prey environment (1=predator, 2=prey, 3=overlap):")
        print(grid)


def test_predator_prey():
    print("\n=== Predator-prey environment test ===")
    env = PredatorPreyEnv(n_predators=2, n_prey=1, grid_size=8)
    obs = env.reset()
    print(f"Initial observation shapes: {[o.shape for o in obs]}")
    env.render()

    # Take a few random steps
    for step in range(3):
        actions = [random.randint(0, 4) for _ in range(env.n_agents)]
        next_obs, rewards, dones, info = env.step(actions)
        print(f"Step {step + 1}: actions={actions}, rewards={rewards}")
        env.render()
        if any(dones):
            print("Episode finished")
            break
```

Challenges of Multi-Agent Learning and Their Solutions

Credit Assignment
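A standard way to estimate an individual agent's contribution is the difference reward. Re-evaluate the team reward G with agent i's action replaced by a fixed default action c_i, and credit agent i with the change: D_i = G(a) - G(a_{-i}, c_i).

The sketch below assumes that the team reward function can be evaluated for arbitrary joint actions, for example with a simulator. The toy reward function and the choice of default action 0 are illustrative assumptions.

```python
from typing import Callable, List, Sequence


def difference_rewards(team_reward_fn: Callable[[Sequence[int]], float],
                       joint_action: Sequence[int],
                       default_action: int = 0) -> List[float]:
    """D_i = G(a) - G(a with agent i's action replaced by default_action)."""
    actual = team_reward_fn(joint_action)
    credits = []
    for i in range(len(joint_action)):
        counterfactual = list(joint_action)
        counterfactual[i] = default_action
        credits.append(actual - team_reward_fn(counterfactual))
    return credits


# Hypothetical toy team reward: +1 for every agent that picks action 1 ("push"),
# plus a bonus of 2 if at least two agents push together
def toy_team_reward(joint_action: Sequence[int]) -> float:
    pushes = sum(1 for a in joint_action if a == 1)
    return pushes + (2.0 if pushes >= 2 else 0.0)


print(difference_rewards(toy_team_reward, [1, 1, 0]))  # [3.0, 3.0, 0.0]
```

The agent that did not push gets zero credit. Each pushing agent is credited with its own +1 plus the cooperation bonus it made possible, which a plain shared reward (4.0 for everyone) would not reveal.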

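```python
class CreditAssignmentModule:
    """Credit assignment module (illustrative skeleton)."""

    def __init__(self, n_agents: int):
        self.n_agents = n_agents
        self.contribution_history = {}  # per-agent contribution records (unused in this sketch)

    def compute_contributions(self, joint_action: List[int],
                              joint_observation: List[np.ndarray],
                              team_reward: float,
                              individual_rewards: List[float]) -> List[float]:
        """Estimate each agent's contribution to the team reward."""
        contributions = []
        # Method 1: counterfactual contribution based on changing one agent's action
        for i in range(self.n_agents):
            # Estimate how the team would have done had agent i acted differently
            hypothetical_team_reward = self.estimate_hypothetical_reward(joint_action, i)
            # Contribution of agent i = actual reward - counterfactual reward
            contributions.append(team_reward - hypothetical_team_reward)
        # Method 2 (not implemented): contribution based on the influence of observations
        return contributions

    def estimate_hypothetical_reward(self, joint_action: List[int], agent_idx: int) -> float:
        """Estimate the team reward with agent agent_idx's action replaced."""
        # Replace the agent's action with a random one (assumes an action space of size 5)
        hypothetical_actions = list(joint_action)
        hypothetical_actions[agent_idx] = random.randint(0, 4)
        # Placeholder: a real implementation would evaluate hypothetical_actions with a
        # simulator or a learned centralised critic; a random value stands in for that here
        return random.uniform(0, 1)
```

Handling Non-Stationarity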
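A classic answer to a changing opponent is fictitious play: keep empirical counts of the opponent's past actions and play a best response to that empirical distribution. The per-opponent action counts kept by an opponent-modelling agent are exactly the statistics this needs.

Below is a self-contained sketch of the best-response step for rock-paper-scissors. The encoding 0 = rock, 1 = paper, 2 = scissors is our assumption for illustration.

```python
import numpy as np

# Row player's payoff PAYOFF[my_action, opponent_action]; 0=rock, 1=paper, 2=scissors
PAYOFF = np.array([[0, -1, 1],
                   [1, 0, -1],
                   [-1, 1, 0]])


def best_response(opponent_counts: np.ndarray) -> int:
    """Best response to the opponent's empirical action distribution."""
    total = opponent_counts.sum()
    if total == 0:
        # No data yet: assume a uniform opponent
        probs = np.full(len(opponent_counts), 1.0 / len(opponent_counts))
    else:
        probs = opponent_counts / total
    expected = PAYOFF @ probs  # expected payoff of each of my actions
    return int(np.argmax(expected))


counts = np.zeros(3)
for opp_action in [0, 0, 0, 2]:  # the opponent mostly plays rock
    counts[opp_action] += 1
print(best_response(counts))     # 1 (paper)
```

Because the counts are updated every round, the best response tracks the opponent as its behaviour drifts. Playing only the best response to the single most frequent action is a cruder version of the same idea.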

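```python
class OpponentModelingAgent:
    """Agent that models its opponents from their observed actions."""

    def __init__(self, agent_id: int, own_action_space: int, opponent_action_space: int):
        self.agent_id = agent_id
        self.own_action_space = own_action_space
        self.opponent_action_space = opponent_action_space
        # Opponent models: records of each opponent's behaviour
        self.opponent_models = {}   # opponent_id -> model (unused in this sketch)
        self.action_frequency = {}  # opponent_id -> action counts

    def update_opponent_model(self, opponent_id: str, opponent_action: int):
        """Record one observed opponent action."""
        if opponent_id not in self.action_frequency:
            self.action_frequency[opponent_id] = [0] * self.opponent_action_space
        self.action_frequency[opponent_id][opponent_action] += 1

    def predict_opponent_action(self, opponent_id: str) -> int:
        """Predict the opponent's next action as its most frequent action so far."""
        if opponent_id not in self.action_frequency:
            return random.randint(0, self.opponent_action_space - 1)
        freq = self.action_frequency[opponent_id]
        return freq.index(max(freq))

    def adapt_strategy(self, opponent_predictions: List[int]) -> int:
        """Choose an action given the predicted opponent actions (placeholder)."""
        # A real implementation would play a best response to the predictions,
        # e.g. in rock-paper-scissors, play paper if the opponent is predicted to play rock.
        # Placeholder: fall back to a uniformly random action.
        return random.randint(0, self.own_action_space - 1)


def demonstrate_challenges():
    print("\n=== Challenges of multi-agent learning ===")
    print("1. Credit assignment:")
    print("   - Problem: how to split a team reward among the individual agents")
    print("   - Remedies: contribution (counterfactual) analysis, attention mechanisms, etc.")
    print("\n2. Non-stationarity:")
    print("   - Problem: other agents' learning keeps changing the environment dynamics")
    print("   - Remedies: opponent modelling, policy-stabilisation techniques")
    print("\n3. Communication constraints:")
    print("   - Problem: communication between agents may be limited")
    print("   - Remedies: learned communication, attention mechanisms")
    print("\n4. Exploration dilemma:")
    print("   - Problem: one agent's exploration can hurt the team")
    print("   - Remedies: centralised training with decentralised execution (CTDE), intrinsic motivation")


if __name__ == "__main__":
    test_simple_cooperative_env()
    test_independent_learning()
    test_qmix()
    test_predator_prey()
    demonstrate_challenges()
```

A Guide to Choosing MARL Algorithms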

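When to Use Each Algorithm

| Algorithm | Suitable scenarios | Strengths | Weaknesses |
| --- | --- | --- | --- |
| Independent Q-learning | Simple cooperative tasks | Simple to implement, computationally cheap | May converge to suboptimal solutions; no convergence guarantee because the environment is non-stationary |
| QMIX | Cooperative tasks | Each agent's greedy action is consistent with the greedy joint action (monotonic value decomposition) | Complex to implement; needs the global state during training; cannot represent non-monotonic joint value functions |
| MADDPG | Continuous action spaces | Handles continuous actions | Low sample efficiency |
| COMA | Partially observable environments | Addresses credit assignment | High variance |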
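The COMA row refers to COMA's counterfactual baseline. For agent a, the centralised critic's value of the joint action actually taken is compared with the expected value obtained when only agent a's action is re-sampled from its own policy:

A^a(s, u) = Q(s, u) - Σ_{u'} π^a(u' | τ^a) Q(s, (u^{-a}, u'))

A minimal NumPy sketch follows. It assumes the critic's Q-values for every alternative action of agent a, with the other agents' actions held fixed, are already available as a vector. The function name is hypothetical.

```python
import numpy as np


def coma_advantage(q_alternatives: np.ndarray, policy: np.ndarray, taken_action: int) -> float:
    """Counterfactual advantage for one agent.

    q_alternatives[u'] = Q(s, (u^{-a}, u')) with the other agents' actions held fixed.
    policy[u']         = pi^a(u' | tau^a), the agent's current action probabilities.
    """
    # Expected Q if agent a re-sampled its own action from its policy
    baseline = float(np.dot(policy, q_alternatives))
    return float(q_alternatives[taken_action]) - baseline


q_alt = np.array([1.0, 3.0, 2.0])
pi = np.array([0.2, 0.5, 0.3])
print(coma_advantage(q_alt, pi, taken_action=1))  # approximately 0.7
```

Since the baseline does not depend on the agent's own action, it reduces variance without biasing the policy gradient. It also isolates agent a's effect on the shared reward, which is how COMA tackles credit assignment.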

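Selection Principles

- Task type: cooperative vs. competitive vs. mixed

- Observability: fully observable vs. partially observable

- Action space: discrete vs. continuous

- Communication: whether explicit communication between agents is needed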

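Exercises

- Implement the MADDPG algorithm and apply it to a multi-agent environment with a continuous action space.

- Extend the predator-prey environment with obstacles and more complex terrain.

- Build a framework for comparing MARL algorithms and evaluate how different algorithms perform across different environments.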

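Summary

This lesson introduced the basic concepts of multi-agent reinforcement learning, methods for modelling multi-agent environments, and the main algorithms. MARL is an active research area with many distinctive challenges, but it also provides powerful tools for solving complex cooperative and competitive multi-agent problems.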

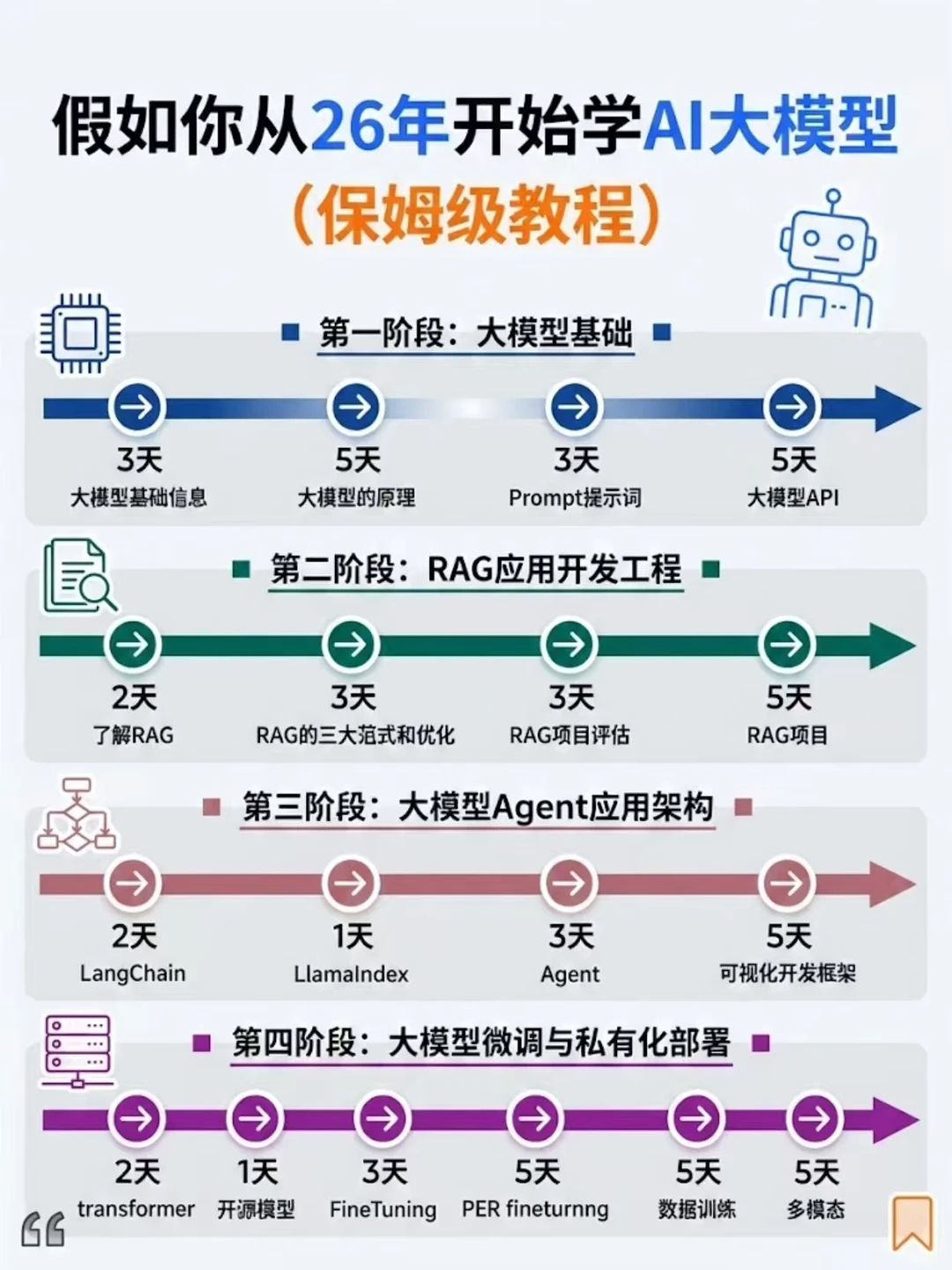

假如你从2026年开始学大模型,按这个步骤走准能稳步进阶。

接下来告诉你一条最快的邪修路线,

3个月即可成为模型大师,薪资直接起飞。

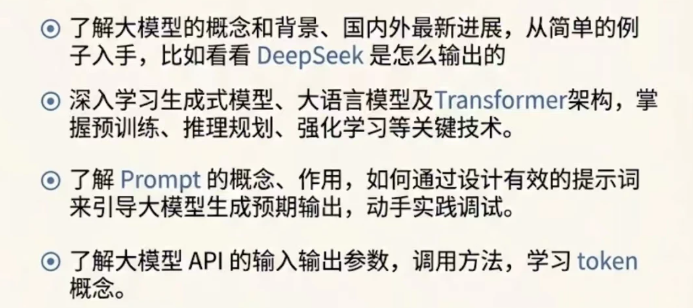

阶段1:大模型基础

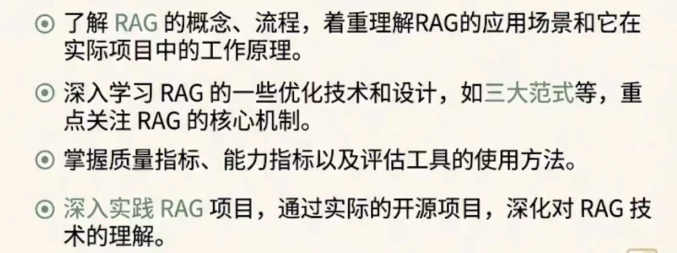

阶段2:RAG应用开发工程

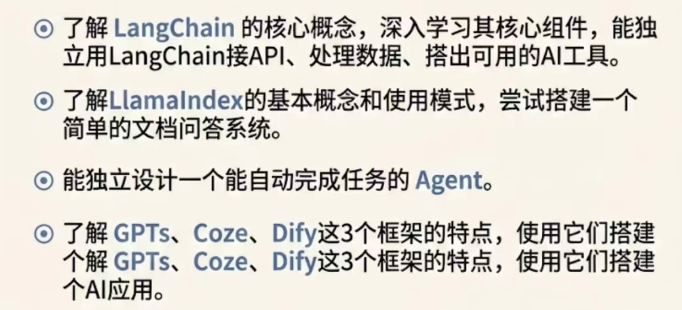

阶段3:大模型Agent应用架构

阶段4:大模型微调与私有化部署

配套文档资源+全套AI 大模型 学习资料,朋友们如果需要可以微信扫描下方二维码免费领取【保证100%免费】👇👇

配套文档资源+全套AI 大模型 学习资料,朋友们如果需要可以微信扫描下方二维码免费领取【保证100%免费】👇👇