Deploying Prometheus and Grafana on a k8s Cluster

I. Deploy node-exporter

(1) Create the namespace

bash

kubectl create ns monitor-sa

(2) Deploy node-exporter

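- Pull the image

Images are pulled and pushed through a private registry here. If you have not set up a Harbor registry, see the companion article "k8s部署EFK日志管理系统" (Deploying the EFK Log Management System on k8s).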

bash

docker pull swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/node-exporter:v0.16.0

docker tag swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/node-exporter:v0.16.0 192.168.224.130/library/node-exporter:v0.16.0

docker login -u admin -p Harbor12345 192.168.224.130   # Harbor username, password, and IP address

docker push 192.168.224.130/library/node-exporter:v0.16.0

- Define the YAML file

The manifest below uses a DaemonSet controller to run node-exporter on every node.

bash

vim node-export.yaml

bash

---
apiVersion: apps/v1
kind: DaemonSet # guarantees that every node in the cluster runs an identical pod
metadata:
  name: node-exporter
  namespace: monitor-sa
  labels:
    name: node-exporter
spec:
  selector:
    matchLabels:
      name: node-exporter
  template:
    metadata:
      labels:
        name: node-exporter
    spec:
      hostPID: true # let the pod use the host's PID namespace
      hostIPC: true # let the pod use the host's IPC namespace
      hostNetwork: true # let the pod use the host's network namespace
      containers:
      - name: node-exporter
        image: 192.168.224.130/library/node-exporter:v0.16.0 # change this to your own registry address
        ports:
        - containerPort: 9100 # port exposed by the container
        resources:
          requests:
            cpu: 0.15 # the container requests at least 0.15 CPU cores
        securityContext:
          privileged: true # run in privileged mode
        args: # command-line arguments passed to the container
        - --path.procfs
        - /host/proc
        - --path.sysfs
        - /host/sys
        - --collector.filesystem.ignored-mount-points
        - '^/(sys|proc|dev|host|etc)($|/)'
        volumeMounts: # where each volume is mounted inside the container
        - name: dev
          mountPath: /host/dev
        - name: proc
          mountPath: /host/proc
        - name: sys
          mountPath: /host/sys
        - name: rootfs
          mountPath: /rootfs
      tolerations:
      - key: "node-role.kubernetes.io/control-plane"
        operator: "Exists"
        effect: "NoSchedule"
      volumes: # volumes made available to the pod
      - name: proc
        hostPath:
          path: /proc
      - name: dev
        hostPath:
          path: /dev
      - name: sys
        hostPath:
          path: /sys
      - name: rootfs
        hostPath:
          path: /

The image reference must be changed to the address of your own private registry.

- Create the DaemonSet

bash

kubectl apply -f node-export.yaml

bash

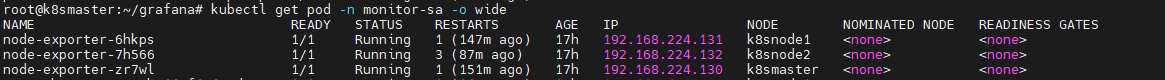

kubectl get pod -n monitor-sa -o wide

每个节点都运行一个node-exporter
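Because the DaemonSet uses hostNetwork, each exporter should answer on port 9100 of its node. The sketch below is one way to spot-check that; it is not part of the original setup. The helper name `metric_value` and the node IP in the usage comment are assumptions for illustration.

```shell
# metric_value NAME: read Prometheus text-format metrics on stdin and print
# the value of the first un-labelled sample whose metric name equals NAME.
metric_value() {
  awk -v name="$1" '$1 == name { print $2; exit }'
}

# Usage against a real node (the IP is a placeholder, use one of your nodes):
#   curl -s http://192.168.224.131:9100/metrics | metric_value node_load1
```

A numeric reply for `node_load1` indicates the exporter is running and reading the host's /proc through the mounted path.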

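II. Deploy Prometheus

(1) Create a ServiceAccount and grant permissions

Create a ServiceAccount and bind it to the cluster-admin ClusterRole, which grants full access to every resource in the cluster.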

bash

kubectl create serviceaccount monitor -n monitor-sa

kubectl create clusterrolebinding monitor-clusterrolebinding -n monitor-sa --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

(2) Create the ConfigMap

bash

vim prometheus-cfg.yaml

bash

apiVersion: v1
kind: ConfigMap
metadata:
  labels:
    app: prometheus
  name: prometheus-config
  namespace: monitor-sa
data:
  prometheus.yml: |
    global: # global settings such as the scrape interval and scrape timeout
      scrape_interval: 15s # how often targets are scraped (default 1m)
      scrape_timeout: 10s # scrape timeout (default 10s)
      evaluation_interval: 1m # how often alerting rules are evaluated (default 1m)
    scrape_configs: # scrape jobs; each group of targets is named by job_name
    - job_name: 'kubernetes-node'
      kubernetes_sd_configs: # use Kubernetes service discovery
      - role: node # node role: one target per node, addressed at the kubelet's HTTP port
      relabel_configs: # relabeling, used here to rewrite the target address
      - source_labels: [__address__] # source label to match against
        regex: '(.*):10250' # match addresses that end in port 10250
        replacement: '${1}:9100' # keep the captured IP, similar to a sed back-reference
        target_label: __address__ # the new address is the captured IP plus :9100
        action: replace
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
    - job_name: 'kubernetes-node-cadvisor'
      kubernetes_sd_configs:
      - role: node
      scheme: https # cAdvisor metrics are fetched over HTTPS
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt # path to the CA certificate
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap # copy the matching labels onto the target
        regex: __meta_kubernetes_node_label_(.+) # every label that starts with __meta_kubernetes_node_label_
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+) # capture the value of __meta_kubernetes_node_name
        target_label: __metrics_path__ # write the result to __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
    - job_name: 'kubernetes-apiserver'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name

Create the ConfigMap:

bash

kubectl apply -f prometheus-cfg.yaml

bash

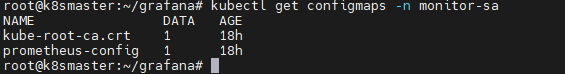

kubectl get configmaps -n monitor-sa
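The two address-rewriting relabel rules in the ConfigMap are easier to follow when tried on sample values. The sketch below imitates them with sed; it is an approximation for illustration, not how Prometheus evaluates rules internally. The function names and the sample addresses are assumptions.

```shell
# Imitates the kubernetes-node rule: regex '(.*):10250', replacement '${1}:9100'.
# Prometheus anchors relabel regexes at both ends, hence the explicit ^ and $.
rewrite_node_address() {
  printf '%s\n' "$1" | sed -E 's/^(.*):10250$/\1:9100/'
}

# Imitates the kubernetes-service-endpoints rule that combines __address__ with
# the prometheus.io/port annotation. Prometheus joins source_labels with ';'.
# sed -E has no \d or (?:...), so [0-9] and an ordinary group stand in for them.
rewrite_service_address() {
  printf '%s;%s\n' "$1" "$2" | sed -E 's/^([^:]+)(:[0-9]+)?;([0-9]+)$/\1:\3/'
}

rewrite_node_address 192.168.224.131:10250      # prints 192.168.224.131:9100
rewrite_service_address 10.244.1.5:8443 8080    # prints 10.244.1.5:8080
```

As with the replace action in Prometheus, an input that does not match the pattern passes through unchanged.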

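(3) Deploy Prometheus

Pull the image

Images are pulled and pushed through a private registry here. If you have not set up a Harbor registry, see the companion article "k8s部署EFK日志管理系统" (Deploying the EFK Log Management System on k8s).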
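The pull, tag, and push sequence is the same for every image in this article, so it can be wrapped in a small helper. This is only a convenience sketch: `private_ref` and `mirror_image` are hypothetical names, and the helper assumes docker login against the registry has already succeeded.

```shell
# private_ref REGISTRY_PROJECT SOURCE_IMAGE
# Print the name SOURCE_IMAGE gets in the private registry: the registry/project
# prefix followed by the last path component (name:tag) of the source image.
private_ref() {
  printf '%s/%s\n' "$1" "${2##*/}"
}

# mirror_image REGISTRY_PROJECT SOURCE_IMAGE
# Pull the source image, retag it for the private registry, and push it.
mirror_image() {
  target=$(private_ref "$1" "$2")
  docker pull "$2" && docker tag "$2" "$target" && docker push "$target"
}

# Usage (not executed here):
#   mirror_image 192.168.224.130/library \
#     swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/prometheus:v2.52.0
```

The individual commands that follow do the same thing step by step.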

bash

docker pull swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/prometheus:v2.52.0

docker tag swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/prom/prometheus:v2.52.0 192.168.224.130/library/prometheus:v2.52.0

docker login -u admin -p Harbor12345 192.168.224.130   # Harbor username, password, and IP address

docker push 192.168.224.130/library/prometheus:v2.52.0

Define the YAML file

bash

vim prometheus-deploy.yaml

bash

apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-server # name of the Deployment
  namespace: monitor-sa # namespace
  labels:
    app: prometheus
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
      component: server
  template:
    metadata:
      labels:
        app: prometheus
        component: server
      annotations:
        prometheus.io/scrape: 'false' # pod annotation: Prometheus should not scrape this endpoint
    spec:
      nodeName: node01 # node the pod is scheduled to; must match a name shown by kubectl get nodes
      serviceAccountName: monitor # ServiceAccount used by the pod
      containers:
      - name: prometheus
        image: 192.168.224.130/library/prometheus:v2.52.0 # container image and tag
        imagePullPolicy: IfNotPresent
        command:
        - prometheus # start command
        - --config.file=/etc/prometheus/prometheus.yml # configuration file to load
        - --storage.tsdb.path=/prometheus # data directory
        - --storage.tsdb.retention.time=720h # how long data is kept
        - --web.enable-lifecycle # enable reloading over HTTP
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: /etc/prometheus/prometheus.yml
          name: prometheus-config
          subPath: prometheus.yml
        - mountPath: /prometheus/
          name: prometheus-storage-volume
      volumes:
      - name: prometheus-config
        configMap: # the ConfigMap to mount
          name: prometheus-config
          items:
          - key: prometheus.yml
            path: prometheus.yml
            mode: 0644
      - name: prometheus-storage-volume
        hostPath:
          path: /data
          type: Directory

The image reference must be changed to the address of your own private registry.

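Create the mount directory on the node1 node. The hostPath volume uses type: Directory, so /data must exist before the pod starts.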

bash

[root@node1 ~]# mkdir /data && chmod 777 /data

Create the Deployment:

bash

kubectl apply -f prometheus-deploy.yaml

bash

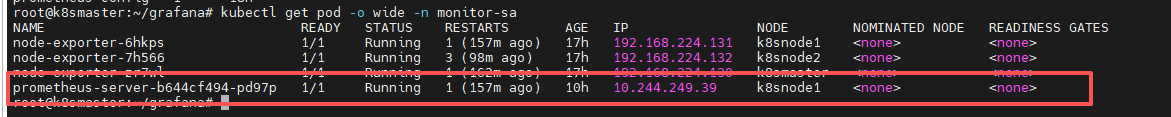

kubectl get pod -o wide -n monitor-sa

(四)创建svc

bash

vim prometheus-svc.yaml

bash

apiVersion: v1
kind: Service
metadata:
  name: prometheus
  namespace: monitor-sa
  labels:
    app: prometheus
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    protocol: TCP
    nodePort: 31000
  selector:
    app: prometheus
    component: server

bash

kubectl apply -f prometheus-svc.yaml

bash

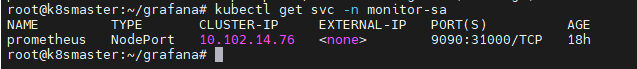

kubectl get svc -n monitor-sa

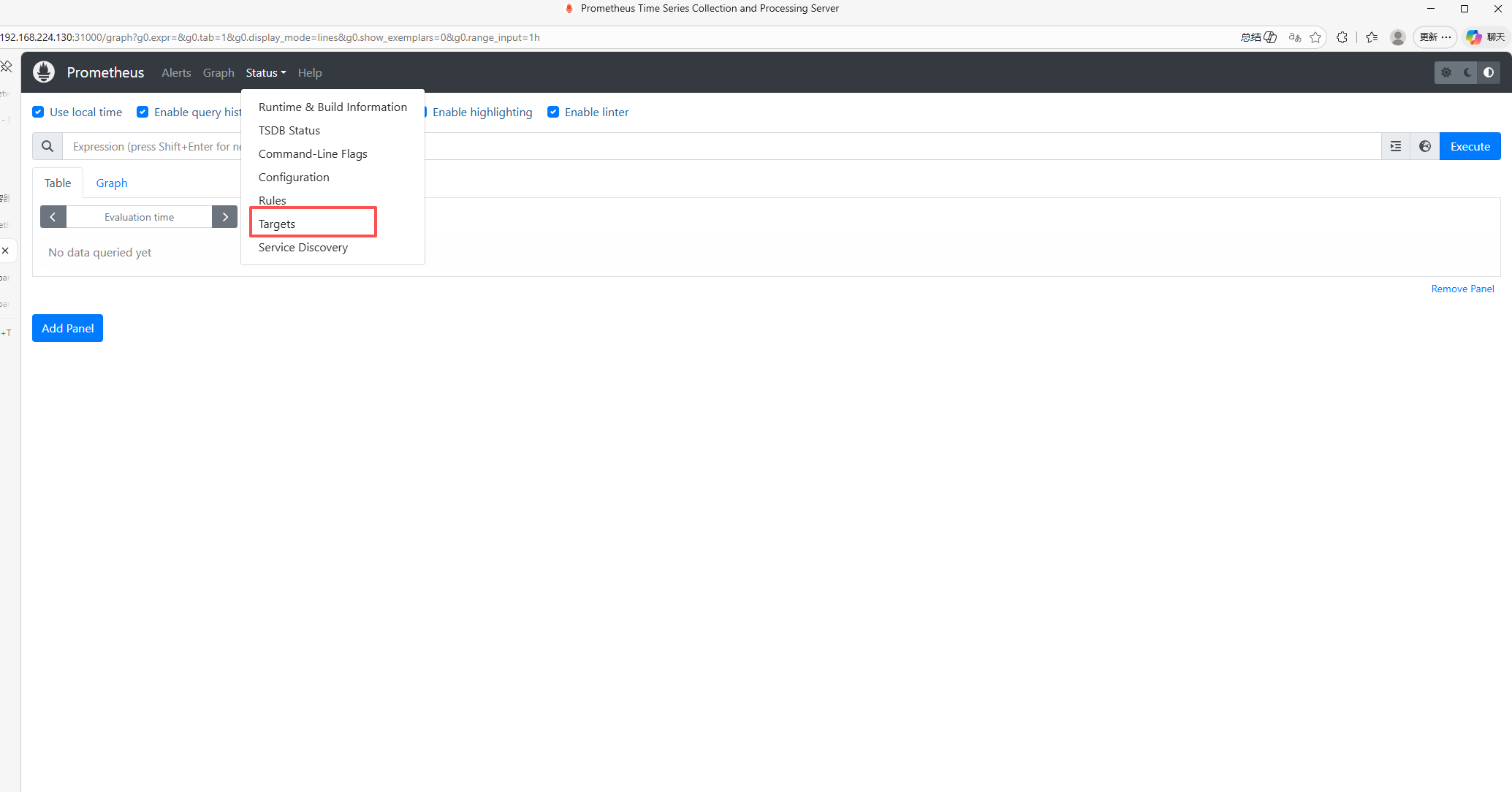

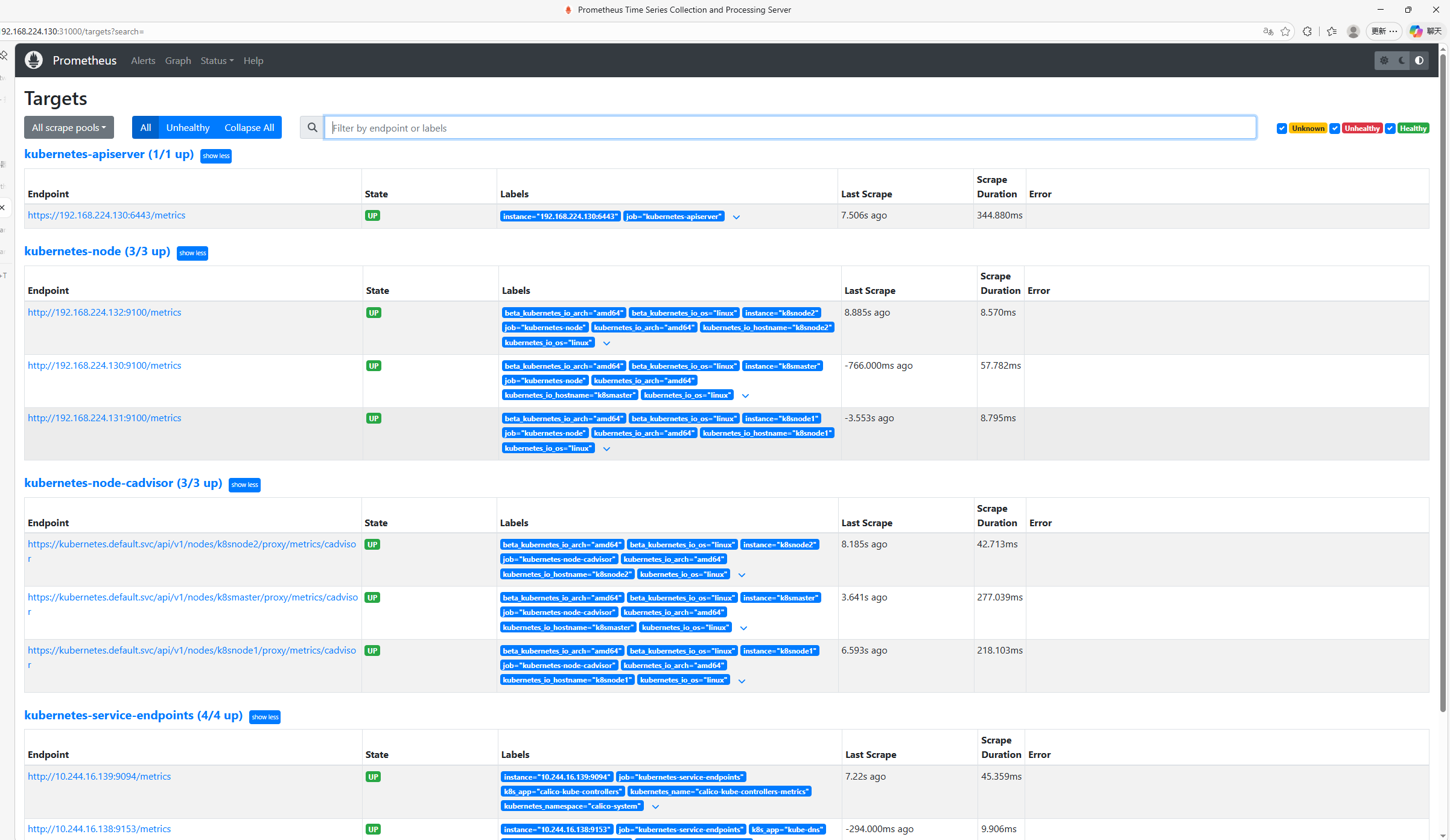

使用web浏览器访问节点的31000端口

点击Targets查看加入监控的服务。
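The Targets page can also be checked from a terminal through the Prometheus HTTP API. The sketch below counts targets by health; `count_targets` is a hypothetical helper, and `<node-ip>` in the usage comments stands for any node address in your cluster.

```shell
# count_targets HEALTH: read the JSON body of /api/v1/targets on stdin and
# print how many targets report the given health value ("up" or "down").
count_targets() {
  grep -o "\"health\":\"$1\"" | wc -l | tr -d ' '
}

# Usage against the NodePort Service (replace <node-ip> with a real address):
#   curl -s http://<node-ip>:31000/api/v1/targets | count_targets up
#   curl -s http://<node-ip>:31000/api/v1/targets | count_targets down
#
# Because the Deployment passes --web.enable-lifecycle, a configuration reload
# can be requested over HTTP as well:
#   curl -X POST http://<node-ip>:31000/-/reload
```

One caveat about reloading: the ConfigMap is mounted with subPath, and Kubernetes does not refresh subPath mounts when a ConfigMap changes. After editing prometheus-config, the pod has to be recreated before Prometheus sees the new file.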

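III. Deploy Grafana

(1) Prepare the image

Images are pulled and pushed through a private registry here. If you have not set up a Harbor registry, see the companion article "k8s部署EFK日志管理系统" (Deploying the EFK Log Management System on k8s).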

bash

docker pull swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/grafana/grafana:7.3.7

docker tag swr.cn-north-4.myhuaweicloud.com/ddn-k8s/docker.io/grafana/grafana:7.3.7 192.168.224.130/library/grafana:7.3.7

docker login -u admin -p Harbor12345 192.168.224.130

docker push 192.168.224.130/library/grafana:7.3.7

(2) Create the Grafana Deployment

bash

vim grafana.yaml

bash

apiVersion: apps/v1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      task: monitoring
      k8s-app: grafana
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: grafana
    spec:
      containers:
      - name: grafana
        image: 192.168.224.130/library/grafana:7.3.7 # the image pushed in the previous step; change to your own registry address
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts: # how the volumes are mounted into the container
        - mountPath: /etc/ssl/certs
          name: ca-certificates
          readOnly: true
        - mountPath: /var/lib/grafana/
          name: grafana-storage
        env: # environment variables inside the container
        - name: INFLUXDB_HOST # host name or IP of the InfluxDB instance Grafana connects to
          value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT # port the Grafana HTTP server listens on
          value: "3000"
        - name: GF_AUTH_BASIC_ENABLED # enables or disables basic authentication; "false" disables it
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED # enables or disables anonymous access; "true" enables it
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE # organization role given to anonymous users; Admin means administrator rights
          value: Admin
        - name: GF_SERVER_ROOT_URL # root URL of the Grafana server
          value: /
      volumes: # volumes made available to the pod
      - name: ca-certificates
        hostPath:
          path: /etc/ssl/certs
      - name: grafana-storage
        emptyDir: {}

Create the Deployment:

bash

kubectl apply -f grafana.yaml

(3) Create the Grafana Service

bash

vim grafana-service.yaml

bash

apiVersion: v1
kind: Service
metadata:
  labels:
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  name: monitoring-grafana
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 3000
  selector:
    k8s-app: grafana
  type: NodePort


bash

kubectl apply -f grafana-service.yaml

bash

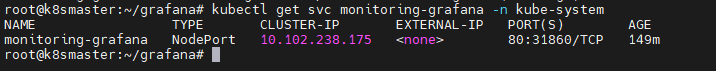

kubectl get svc monitoring-grafana -n kube-system
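The Grafana Service does not pin a nodePort, so Kubernetes assigns one and it will differ between clusters. The sketch below pulls the port out of the PORT(S) column of the output above; `node_port` is a hypothetical helper, and the sample value is only an example.

```shell
# node_port PORTS: extract the NodePort from a PORT(S) value printed by
# kubectl get svc, for example "80:31860/TCP".
node_port() {
  printf '%s\n' "$1" | sed -E 's/^[0-9]+:([0-9]+)\/[A-Z]+$/\1/'
}

node_port "80:31860/TCP"    # prints 31860

# The same value can be read straight from the API:
#   kubectl get svc monitoring-grafana -n kube-system \
#     -o jsonpath='{.spec.ports[0].nodePort}'
```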

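(4) Access Grafana

- Log in to Grafana

Open http://192.168.224.130:31860 in a browser and log in to Grafana. Substitute the node IP and the NodePort that kubectl get svc reports for your cluster.

The default account is admin and the default password is admin.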

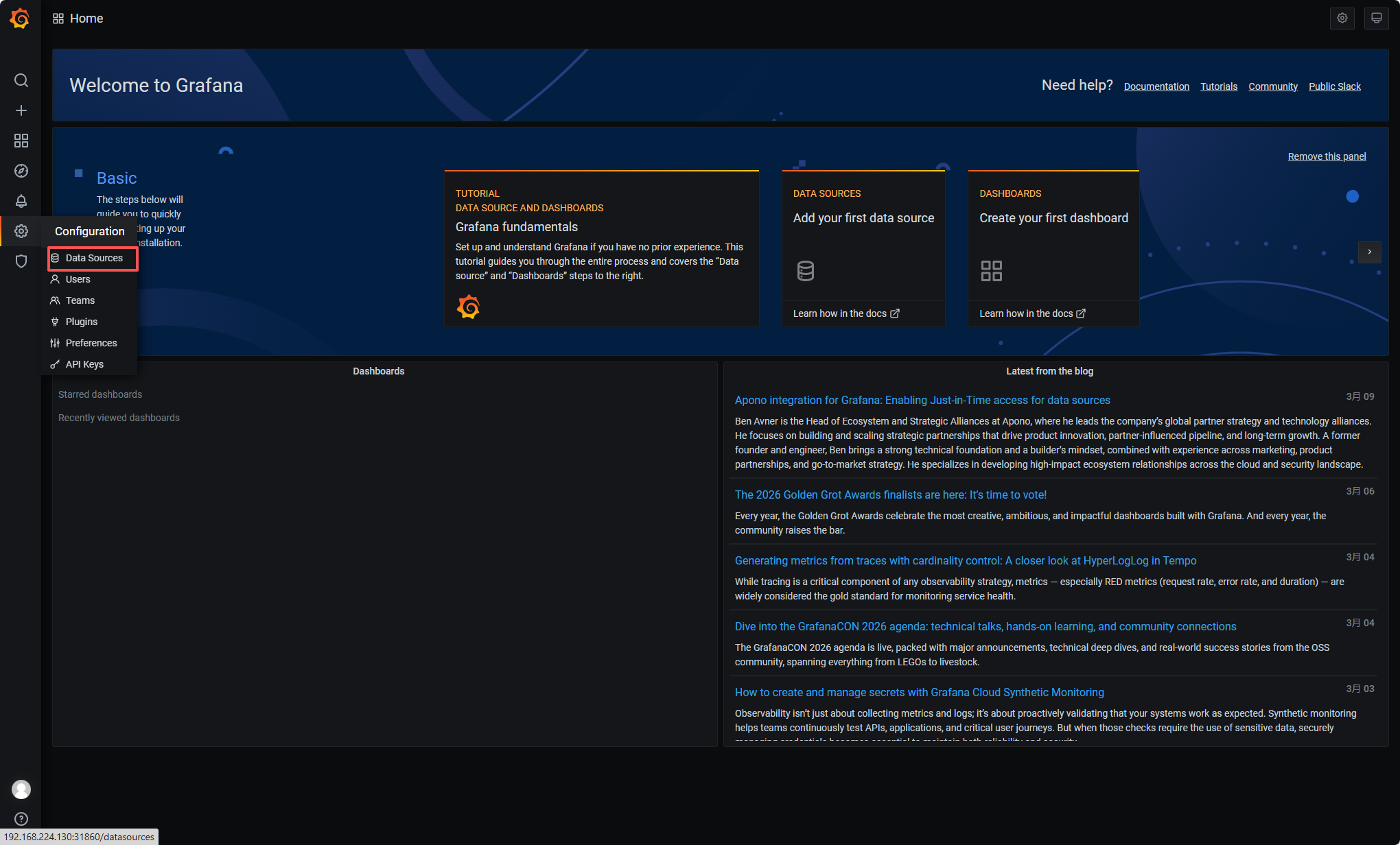

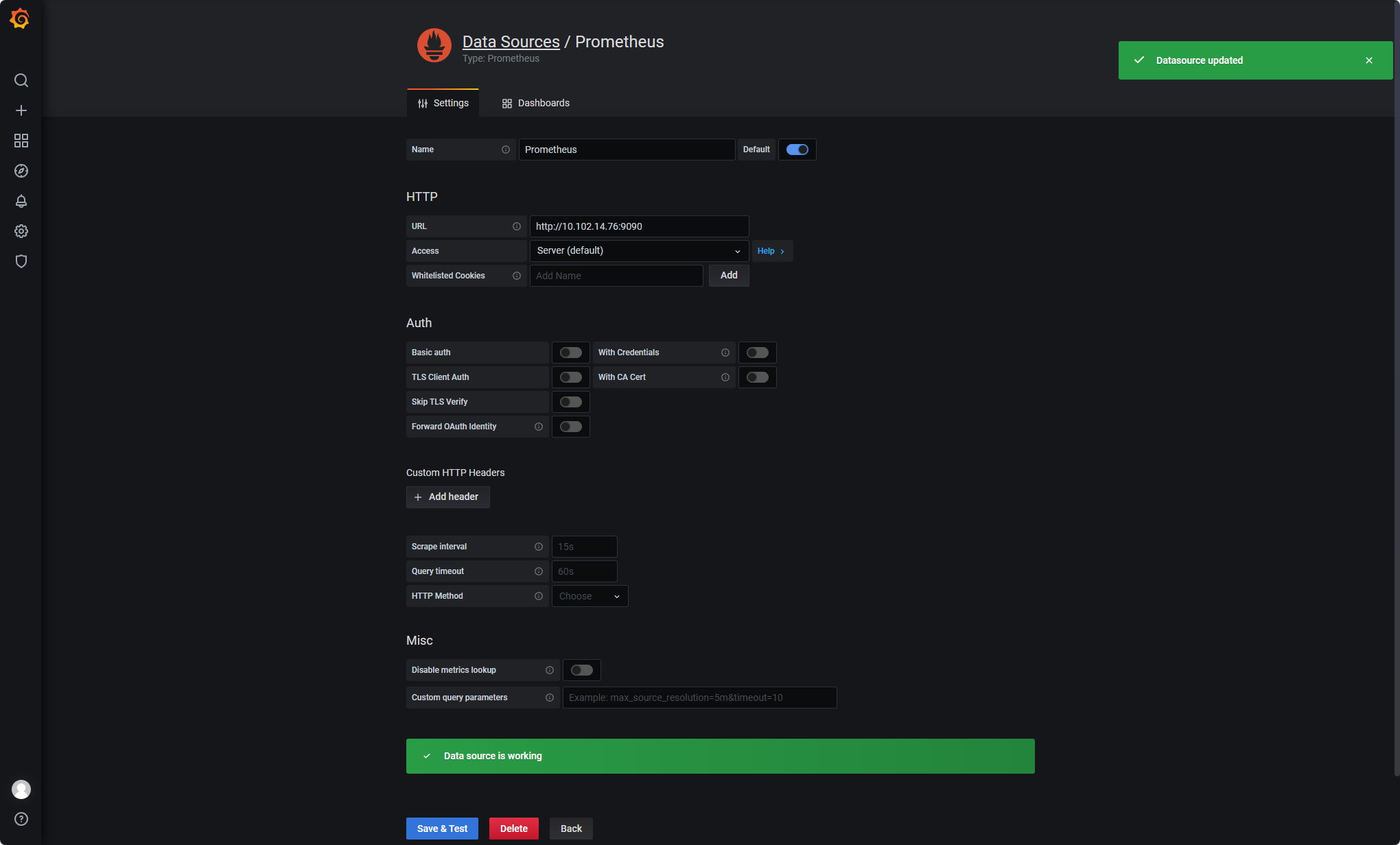

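- Configure Grafana

Add Prometheus as a data source.

[Name]: set to Prometheus

[Type]: choose Prometheus

[URL]: set to http://10.102.14.76:9090

This is the cluster-internal address (ClusterIP and port) of the prometheus Service; look it up with kubectl get svc -n monitor-sa.

Click [Save & Test].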
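The ClusterIP in the URL above is specific to one cluster and changes if the Service is recreated. A Service DNS name is usually a more stable choice. The sketch below builds that name, assuming cluster DNS is running and the cluster domain is the default cluster.local; `svc_url` is a hypothetical helper.

```shell
# svc_url SERVICE NAMESPACE PORT: print the in-cluster URL of a Service,
# assuming the default cluster domain cluster.local.
svc_url() {
  printf 'http://%s.%s.svc.cluster.local:%s\n' "$1" "$2" "$3"
}

svc_url prometheus monitor-sa 9090
# prints http://prometheus.monitor-sa.svc.cluster.local:9090
```

The fully qualified form matters here because Grafana runs in kube-system while Prometheus runs in monitor-sa, so the short name prometheus alone would not resolve from the Grafana pod.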

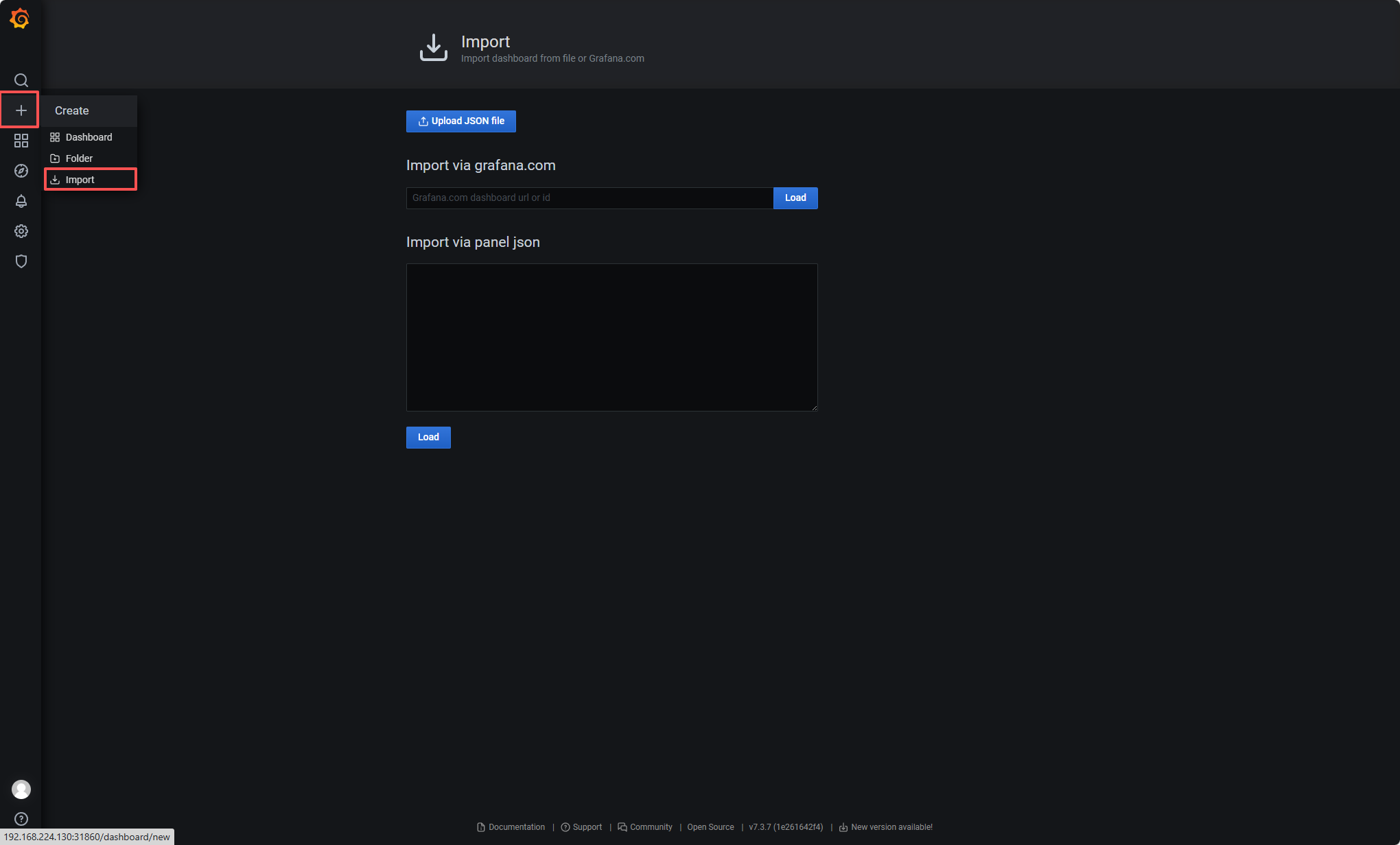

- 导入监控模板

官方链接:https://grafana.com/dashboards?dataSource=prometheus\&search=kubernetes

选择适合的面板,点击 Copy ID 或者 Download JSON

3.1 导入监控模板

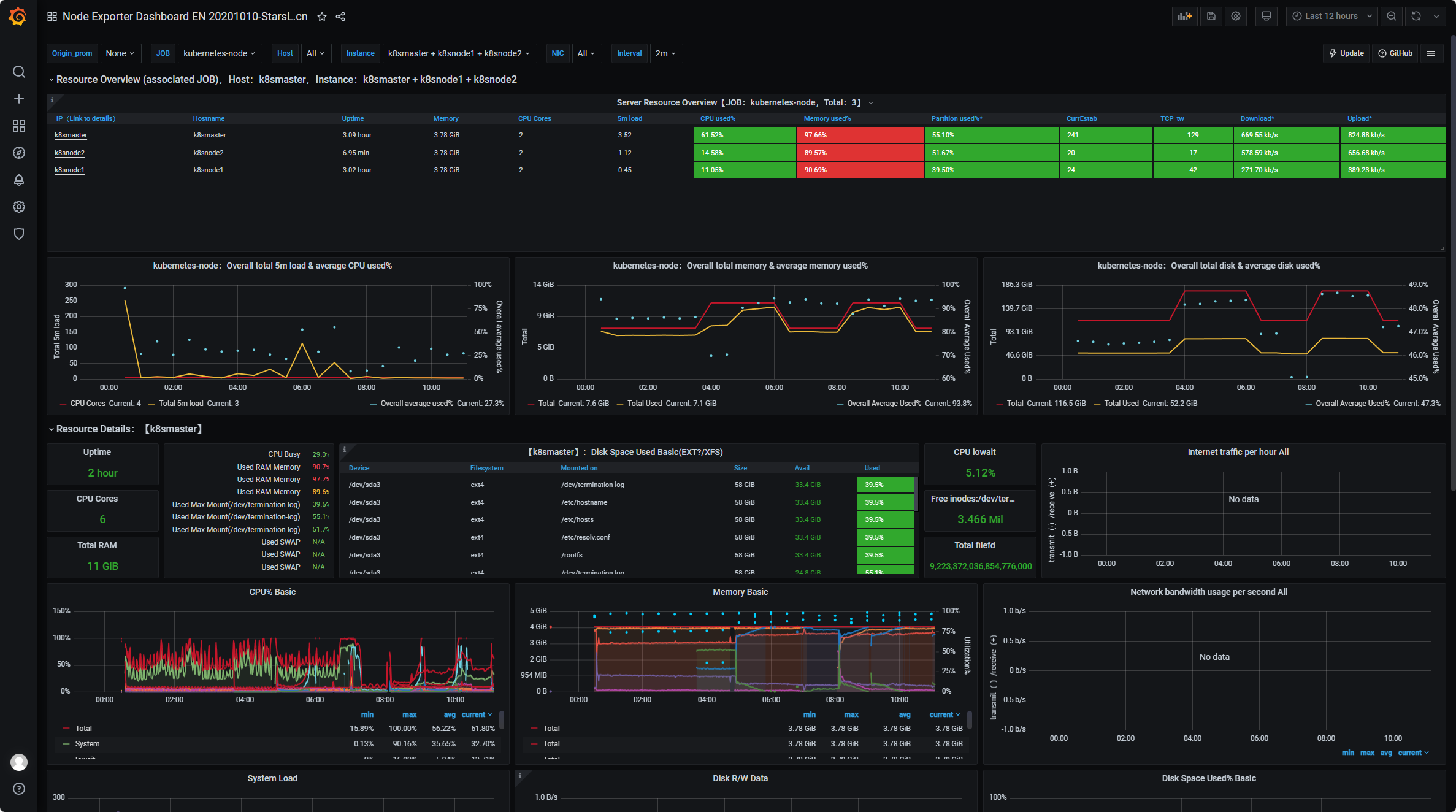

监控状态

点击左侧+号选择【Import】

点击【Upload .json File】导入下载的模板文件

【Prometheus】选择 Prometheus

点击【Import】

可以在监控模板中挑选适合的进行导入。

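IV. Deploy kube-state-metrics

kube-state-metrics collects the state of Kubernetes resources from the API server and exposes it as metrics for monitoring and analysis.

(1) Deploy kube-state-metrics

- Create the ServiceAccount and grant permissions

Create a ServiceAccount and grant it list and watch access to the resource types it reports on, so that it can collect their state.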

bash

vim kube-state-metrics-rbac.yaml

bash

apiVersion: v1

kind: ServiceAccount

metadata:

name: kube-state-metrics

namespace: kube-system

---

#创建集群角色,并指定角色权限

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: kube-state-metrics

rules:

- apiGroups: [""]

resources: ["nodes", "pods", "services", "resourcequotas", "replicationcontrollers", "limitranges", "persistentvolumeclaims", "persistentvolumes", "namespaces", "endpoints"]

verbs: ["list", "watch"]

- apiGroups: ["extensions"]

resources: ["daemonsets", "deployments", "replicasets"]

verbs: ["list", "watch"]

- apiGroups: ["apps"]

resources: ["statefulsets"]

verbs: ["list", "watch"]

- apiGroups: ["batch"]

resources: ["cronjobs", "jobs"]

verbs: ["list", "watch"]

- apiGroups: ["autoscaling"]

resources: ["horizontalpodautoscalers"]

verbs: ["list", "watch"]

---

#对用户与集群角色进行绑定,使创建的用户有一定的权限去管理,并获取资源信息

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: kube-state-metrics

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: kube-state-metrics

subjects:

- kind: ServiceAccount

name: kube-state-metrics

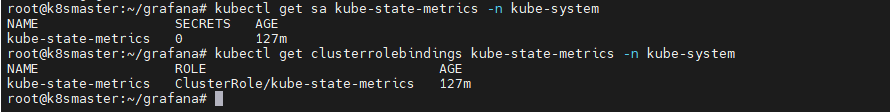

namespace: kube-system创建并查看信息

bash

kubectl apply -f kube-state-metrics-rbac.yaml

bash

kubectl get sa kube-state-metrics -n kube-system

bash

kubectl get clusterrolebindings kube-state-metrics -n kube-system
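Listing the objects shows that they exist, but not what the ServiceAccount is actually allowed to do. One way to check is kubectl auth can-i with impersonation, sketched below. `sa_subject` and `can_i` are hypothetical helper names, and the check assumes your own account is allowed to impersonate ServiceAccounts.

```shell
# sa_subject NAMESPACE NAME: print the user name Kubernetes uses for a
# ServiceAccount when it is impersonated with --as.
sa_subject() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# can_i NAMESPACE NAME VERB RESOURCE: ask the API server whether the
# ServiceAccount may perform VERB on RESOURCE.
can_i() {
  kubectl auth can-i "$3" "$4" --as="$(sa_subject "$1" "$2")"
}

# Usage (not executed here):
#   can_i kube-system kube-state-metrics list pods      # should answer yes
#   can_i kube-system kube-state-metrics delete pods    # should answer no
```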

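- Install the kube-state-metrics component

Pull the image

Images are pulled and pushed through a private registry here. If you have not set up a Harbor registry, see the companion article "k8s部署EFK日志管理系统" (Deploying the EFK Log Management System on k8s).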

bash

docker pull swr.cn-north-4.myhuaweicloud.com/ddn-k8s/quay.io/coreos/kube-state-metrics:v1.9.7

docker tag swr.cn-north-4.myhuaweicloud.com/ddn-k8s/quay.io/coreos/kube-state-metrics:v1.9.7 192.168.224.130/library/kube-state-metrics:v1.9.7

docker login -u admin -p Harbor12345 192.168.224.130

docker push 192.168.224.130/library/kube-state-metrics:v1.9.7

Create the Deployment

bash

vim kube-state-metrics-deploy.yaml

bash

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kube-state-metrics
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kube-state-metrics
  template:
    metadata:
      labels:
        app: kube-state-metrics
    spec:
      serviceAccountName: kube-state-metrics
      containers:
      - name: kube-state-metrics
        image: 192.168.224.130/library/kube-state-metrics:v1.9.7 # the image pushed in the previous step; change to your own registry address
        ports:
        - containerPort: 8080

bash

kubectl apply -f kube-state-metrics-deploy.yaml

- Create the Service

bash

vim kube-state-metrics-svc.yaml

bash

apiVersion: v1
kind: Service
metadata:
  annotations:
    prometheus.io/scrape: 'true' # annotation: Prometheus should scrape this endpoint
  name: kube-state-metrics
  namespace: kube-system
  labels:
    app: kube-state-metrics
spec:
  ports:
  - name: kube-state-metrics
    port: 8080
    protocol: TCP
  selector:
    app: kube-state-metrics

bash

kubectl apply -f kube-state-metrics-svc.yaml

bash

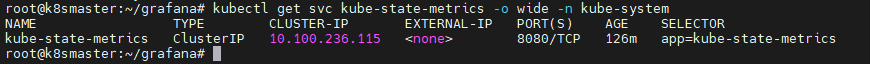

kubectl get svc kube-state-metrics -o wide -n kube-system
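Once the Service exists, the prometheus.io/scrape annotation lets the kubernetes-service-endpoints job pick it up. To look at the metrics directly, a port-forward plus a small filter is enough. The sketch below sums kube_pod_status_phase samples for one phase; `pods_in_phase` is a hypothetical helper and the port-forward in the usage comment is an assumption about how you reach the Service.

```shell
# pods_in_phase PHASE: read kube-state-metrics output on stdin and print the
# sum of kube_pod_status_phase samples whose phase label equals PHASE.
pods_in_phase() {
  awk -v phase="$1" '
    index($0, "kube_pod_status_phase{") == 1 && index($0, "phase=\"" phase "\"") {
      total += $NF
    }
    END { print total + 0 }
  '
}

# Usage (not executed here):
#   kubectl -n kube-system port-forward svc/kube-state-metrics 8080:8080 &
#   curl -s http://127.0.0.1:8080/metrics | pods_in_phase Running
```

Each pod contributes one sample per phase with value 1 for its current phase and 0 otherwise, so the sum is the number of pods in that phase.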