前情提要:本篇博客详细介绍了Docker的几个自定义网络模式,以及对应的使用场景下的详细配 置流程。

系统:RHEL9.3

一、Docker自定义网络介绍

自定义网络模式,docker提供了三种自定义网络驱动:

-

bridge

-

overlay

-

macvlan

bridge驱动类似默认的bridge网络模式,但增加了一些新的功能,

overlay和macvlan是用于创建跨主机网络

建议使用自定义的网络来控制哪些容器可以相互通信,还可以自动DNS解析容器名称到IP地址。

二、自定义桥接网络

2.1 自定义桥接网络介绍与配置

在建立自定以网络时,默认使用桥接模式

cpp

[root@docker ~]# docker network create my_net1

f2aae5ce8ce43e8d1ca80c2324d38483c2512d9fb17b6ba60d05561d6093f4c4

[root@docker ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

2a93d6859680 bridge bridge local

4d81ddd9ed10 host host local

f2aae5ce8ce4 my_net1 bridge local

8c8c95f16b68 none null local桥接默认是单调递增,每自定义一个桥接网络,就多一个br开头的网卡

cpp

[root@docker ~]# ifconfig

br-f2aae5ce8ce4: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.18.0.1 netmask 255.255.0.0 broadcast 172.18.255.255

ether 02:42:70:57:f2:82 txqueuelen 0 (Ethernet)

RX packets 21264 bytes 1497364 (1.4 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 27359 bytes 215202367 (205.2 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

inet6 fe80::42:5fff:fee2:346c prefixlen 64 scopeid 0x20<link>

ether 02:42:5f:e2:34:6c txqueuelen 0 (Ethernet)

RX packets 21264 bytes 1497364 (1.4 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 27359 bytes 215202367 (205.2 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0桥接也支持自定义子网和网关

cpp

[root@docker ~]# docker network create my_net2 --subnet 192.168.0.0/24 --gateway 192.168.0.100

7e77cd2e44c64ff3121a1f1e0395849453f8d524d24b915672da265615e0e4f9

[root@docker ~]# docker network inspect my_net2

[

{

"Name": "my_net2",

"Id": "7e77cd2e44c64ff3121a1f1e0395849453f8d524d24b915672da265615e0e4f9",

"Created": "2024-08-17T17:05:19.167808342+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "192.168.0.0/24",

"Gateway": "192.168.0.100"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]2.2 为什么要自定义桥接网络

多容器之间虽然可以通过ip互访,但是存在问题

cpp

[root@docker ~]# docker run -d --name web1 nginx

d5da7eaa913fa6cdd2aa9a50561042084eca078c114424cb118c57eeac473424

[root@docker ~]# docker run -d --name web2 nginx

0457a156b02256915d4b42f6cc52ea71b18cf9074ce550c886f206fef60dfae5

[root@docker ~]# docker inspect web1

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:03",

"DriverOpts": null,

"NetworkID": "2a93d6859680b45eae97e5f6232c3b8e070b1ec3d01852b147d2e1385034bce5",

"EndpointID": "4d54b12aeb2d857a6e025ee220741cbb3ef1022848d58057b2aab544bd3a4685",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.2", #注意ip信息

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DNSNames": null

[root@docker ~]# docker inspect web1

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"MacAddress": "02:42:ac:11:00:03",

"DriverOpts": null,

"NetworkID": "2a93d6859680b45eae97e5f6232c3b8e070b1ec3d01852b147d2e1385034bce5",

"EndpointID": "4d54b12aeb2d857a6e025ee220741cbb3ef1022848d58057b2aab544bd3a4685",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.3", #注意ip信息

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"DNSNames": null

#关闭容器后重启容器,启动顺序调换

[root@docker ~]# docker stop web1 web2

web1

web2

[root@docker ~]# docker start web2

web2

[root@docker ~]# docker start web1

web1

#我们会发现容器ip颠倒docker引擎在分配ip时时根据容器启动顺序分配到,谁先启动谁用,是动态变更的

多容器互访用ip很显然不是很靠谱,那么多容器访问一般使用容器的名字访问更加稳定

docker原生网络是不支持dns解析的,自定义网络中内嵌了dns

cpp

[root@docker ~]# docker run -d --network my_net1 --name web nginx

d9ed01850f7aae35eb1ca3e2c73ff2f83d13c255d4f68416a39949ebb8ec699f

[root@docker ~]# docker run -it --network my_net1 --name test busybox

/ # ping web

PING web (172.18.0.2): 56 data bytes

64 bytes from 172.18.0.2: seq=0 ttl=64 time=0.197 ms

64 bytes from 172.18.0.2: seq=1 ttl=64 time=0.096 ms

64 bytes from 172.18.0.2: seq=2 ttl=64 time=0.087 ms注意:不同的自定义网络是不能通讯的

cpp

#在rhel7中使用的是iptables进行网络隔离,在rhel9中使用nftpables

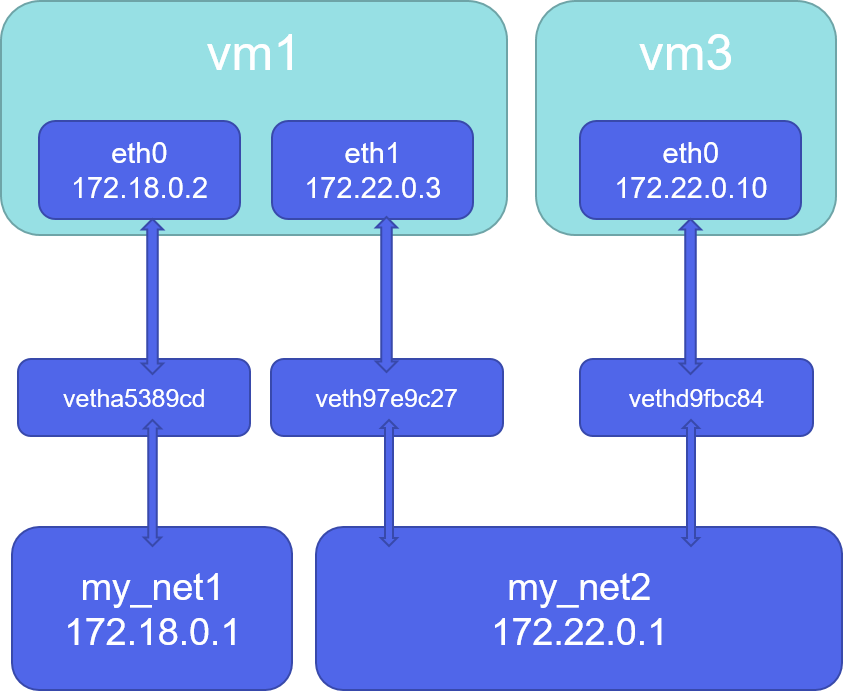

[root@docker ~]# nft list ruleset可以看到网络隔离策略三、自定义网络互通

操作如下

cpp

[root@docker ~]# docker run -d --name web1 --network my_net1 nginx

[root@docker ~]# docker run -it --name test --network my_net2 busybox

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:C0:A8:00:01

inet addr:192.168.0.1 Bcast:192.168.0.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:36 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:5244 (5.1 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:0 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:0 (0.0 B) TX bytes:0 (0.0 B)

/ # ping 172.18.0.2

PING 172.18.0.2 (172.18.0.2): 56 data bytes

[root@docker ~]# docker network connect my_net1 test

#在上面test容器中加入网络eth1

/ # ifconfig

eth0 Link encap:Ethernet HWaddr 02:42:C0:A8:00:01

inet addr:192.168.0.1 Bcast:192.168.0.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:45 errors:0 dropped:0 overruns:0 frame:0

TX packets:8 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:5879 (5.7 KiB) TX bytes:602 (602.0 B)

eth1 Link encap:Ethernet HWaddr 02:42:AC:12:00:03

inet addr:172.18.0.3 Bcast:172.18.255.255 Mask:255.255.0.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:15 errors:0 dropped:0 overruns:0 frame:0

TX packets:0 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:2016 (1.9 KiB) TX bytes:0 (0.0 B)

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:65536 Metric:1

RX packets:4 errors:0 dropped:0 overruns:0 frame:0

TX packets:4 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:212 (212.0 B) TX bytes:212 (212.0 B)四、Docker容器内外网的访问

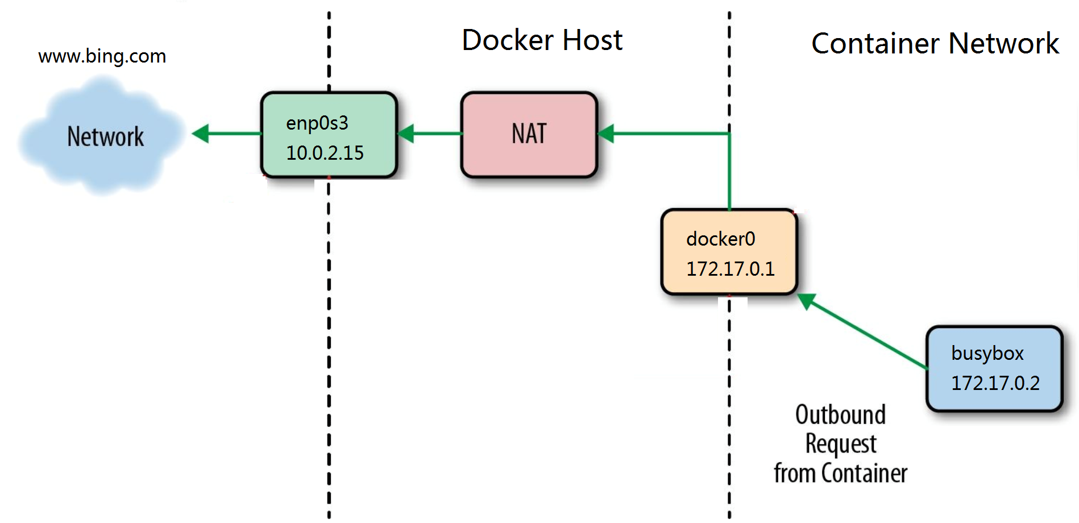

4.1 容器访问外网

-

在rhel7中,docker访问外网是通过iptables添加地址伪装策略来完成容器网文外网

-

在rhel7之后的版本中通过nftables添加地址伪装来访问外网

通信步骤:

1、容器发起访问外网请求,数据包传递到docker0网桥

2、SNAT地址转换,将容器的IP地址转换为主机公网IP(数据包从docker0到eth0,主机需要开启内核路由功能)

3、请求发送到外网

cpp

[root@docker ~]# iptables -t nat -nL

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain INPUT (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE 6 -- 172.17.0.2 172.17.0.2 tcp dpt:80 #内网访问外网策略

Chain DOCKER (0 references)

target prot opt source destination

DNAT 6 -- 0.0.0.0/0 0.0.0.0/0 tcp dpt:80 to:172.17.0.2:80

# 将内网访问外网的策略删除后docker就无法再访问外网了

# 开启一个容器可见可以访问外网

[root@docker-node1 ~]# docker run -it --name busybox rickiechina/busyboxplus:latest

/bin/sh: shopt: not found

[ root@e99ce1949814:/ ]$ ping www.baidu.com

PING www.baidu.com (103.235.46.115): 56 data bytes

64 bytes from 103.235.46.115: seq=0 ttl=127 time=1.758 ms

64 bytes from 103.235.46.115: seq=1 ttl=127 time=1.625 ms

^C

--- www.baidu.com ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 1.625/1.691/1.758 ms

# 将nat中的策略删除

[root@docker-node1 ~]# iptables -t nat -D POSTROUTING 1

[root@docker ~]# iptables -t nat -nL

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain INPUT (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

# 内网访问外网的策略没了

Chain DOCKER (0 references)

target prot opt source destination

DNAT 6 -- 0.0.0.0/0 0.0.0.0/0 tcp dpt:80 to:172.17.0.2:80

# 重新回到容器测试

[root@docker-node1 ~]# docker attach busybox

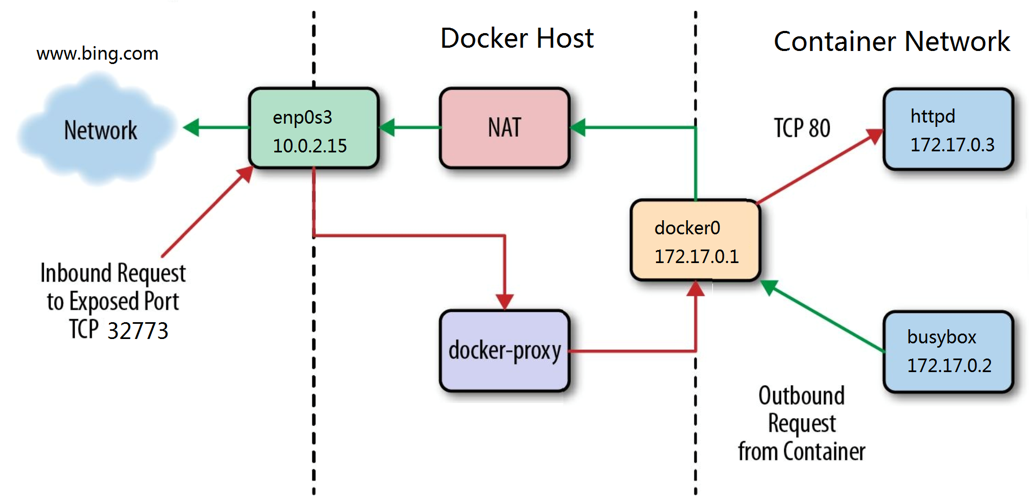

[ root@e99ce1949814:/ ]$ ping www.baidu.com # 无法连接外网了4.2 外网访问容器

端口映射 -p 本机端口:容器端口来暴漏端口从而达到访问效果

通信步骤

1、外网将数据包发送到eth0

2、DNAT地址转换,将数据包的目标地址从主机IP转换为容器IP

3、数据包发送到docker0然后回到容器

cpp

#通过docker-proxy对数据包进行内转

[root@docker ~]# docker run -d --name webserver -p 80:80 nginx

[root@docker ~]# ps ax

133986 ? Sl 0:00 /usr/bin/docker-proxy -proto tcp -host-ip 0.0.0.0 -host-port 80 -container-ip 172.17.0.2 -container-port 80

133995 ? Sl 0:00 /usr/bin/docker-proxy -proto tcp -host-ip :: -host-port 80 -container-ip 172.17.0.2 -container-port 80

134031 ? Sl 0:00 /usr/bin/containerd-shim-runc-v2 -namespace moby -id cae79497a01c0b8c488c7597b43de4a43f361f21a398ff423b4504c0905db143 -address /run/containerd/containerd.sock

134059 ? Ss 0:00 nginx: master process nginx -g daemon off;

134099 ? S 0:00 nginx: worker process

134100 ? S 0:00 nginx: worker process

#通过dnat策略来完成浏览内转

[root@docker ~]# iptables -t nat -nL

Chain PREROUTING (policy ACCEPT)

target prot opt source destination

Chain INPUT (policy ACCEPT)

target prot opt source destination

Chain OUTPUT (policy ACCEPT)

target prot opt source destination

Chain POSTROUTING (policy ACCEPT)

target prot opt source destination

MASQUERADE 6 -- 172.17.0.2 172.17.0.2 tcp dpt:80

Chain DOCKER (0 references)

target prot opt source destination

DNAT 6 -- 0.0.0.0/0 0.0.0.0/0 tcp dpt:80 to:172.17.0.2:80注意:docker-proxy和dnat在容器建立端口映射后都会开启,哪个传输速录高走哪个

五、Docker容器间跨主机访问

在生产环境中,我们的容器不可能都在同一个系统中,所以需要容器具备跨主机通信的能力

-

跨主机网络解决方案

-

docker原生的overlay和macvlan

-

第三方的flannel、weave、calico

-

-

众多网络方案是如何与docker集成在一起的

-

libnetwork docker容器网络库

-

CNM (Container Network Model)这个模型对容器网络进行了抽象

-

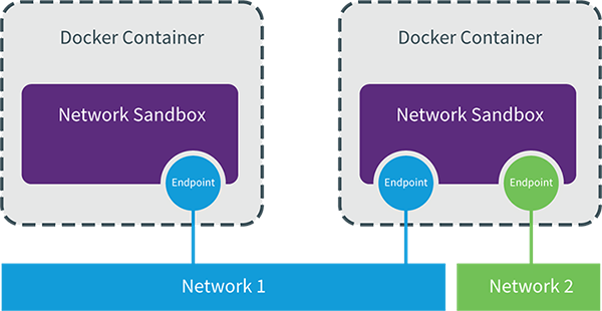

5.1 CNM (Container Network Model)

CNM分三类组件

Sandbox:容器网络栈,包含容器接口、dns、路由表。(namespace) Endpoint:作用是将sandbox接入network (veth pair) Network:包含一组endpoint,同一network的endpoint可以通信

5.2 macvlan网络方式实现跨主机通信

macvlan网络方式

-

Linux kernel提供的一种网卡虚拟化技术。

-

无需Linux bridge,直接使用物理接口,性能极好

-

容器的接口直接与主机网卡连接,无需NAT或端口映射。

-

macvlan会独占主机网卡,但可以使用vlan子接口实现多macvlan网络

-

vlan可以将物理二层网络划分为4094个逻辑网络,彼此隔离,vlan id取值为1~4094

macvlan网络间的隔离和连通

-

macvlan网络在二层上是隔离的,所以不同macvlan网络的容器是不能通信的

-

可以在三层上通过网关将macvlan网络连通起来

-

docker本身不做任何限制,像传统vlan网络那样管理即可

实现方法如下:

1、在两台docker主机上各添加一块网卡(仅主机模式),均打开网卡混杂模式:

cpp

[root@docker-node1 ~]# ip link set eth1 promisc on

[root@docker-node1 ~]# ip link set up eth1

[root@docker-node1 ~]# ip a s eth1

11: eth1: <BROADCAST,MULTICAST,PROMISC,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:dd:45:a8 brd ff:ff:ff:ff:ff:ff

altname enp11s0

altname ens1922、添加macvlan网络

cpp

[root@docker ~]# docker network create \

-d macvlan \

--subnet 1.1.1.0/24 \

--gateway 1.1.1.1 \

-o parent=eth1 macvlan1

[root@docker-node1 ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

6040deaf7317 bridge bridge local

7fc981c8c297 host host local

83903417114a macvlan1 macvlan local

8cff8a861f8b my_net1 bridge local

1d42d28522d9 my_net2 bridge local

76f674c83d42 none null local3、通信测试

cpp

# node1开启容器

[root@docker-node2 ~]# docker run -it --name busybox --network macvlan1 --ip 1.1.1.100 --rm rickiechina/busyboxplus:latest

# 在node2开启容器

[root@docker-node1 ~]# docker run -it --name busybox --network macvlan1 --ip 1.1.1.101 --rm rickiechina/busyboxplus:latest

# ping

[ root@8157d7f54341:/ ]$ ping 1.1.1.101

PING 1.1.1.101 (1.1.1.101): 56 data bytes

64 bytes from 1.1.1.101: seq=0 ttl=64 time=0.104 ms

64 bytes from 1.1.1.101: seq=1 ttl=64 time=0.101 ms

^C

--- 1.1.1.101 ping statistics ---

2 packets transmitted, 2 packets received, 0% packet loss

round-trip min/avg/max = 0.101/0.102/0.104 ms综上,docker自定义网络介绍完毕