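[YOLOv8 Improvement Series]:

YOLOv8 Improvement Series (1): Replacing the Backbone with EfficientViT (CVPR 2023)

YOLOv8 Improvement Series (2): Replacing the Backbone with FasterNet

YOLOv8 Improvement Series (3): Replacing the Backbone with ConvNeXt V2

YOLOv8 Improvement Series (4): Replacing the Bottleneck in C2f with FasterNet's FasterBlock

YOLOv8 Improvement Series (5): Replacing the Backbone with EfficientFormerV2

YOLOv8 Improvement Series (6): Replacing the Backbone with VanillaNet

YOLOv8 Improvement Series (7): Replacing the Backbone with LSKNet

YOLOv8 Improvement Series (8): Replacing the Backbone with Swin Transformer

YOLOv8 Improvement Series (9): Replacing the Backbone with RepViT

YOLOv8 Improvement Series (10): Replacing the Backbone with UniRepLKNet

YOLOv8 Improvement Series (11): Replacing the Backbone with MobileNetV4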

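Contents

[1. Introduction](#1. Introduction)

[2. StarNet Architecture Design](#2. StarNet Architecture Design)

[3. Experiments and Results](#3. Experiments and Results)

[4. Key Conclusions](#4. Key Conclusions)

[5. Research Contributions](#5. Research Contributions)

[6. Limitations and Future Work](#6. Limitations and Future Work)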

💯一、StarNet介绍

- 论文题目:《Rewrite the Stars》

- 论文地址:https://arxiv.org/pdf/2403.19967

1. 简介

论文背景与研究动机

-

背景:近年来,深度学习领域中"星操作"(即逐元素乘法)在神经网络设计中的潜力逐渐受到关注。尽管已有许多直观的解释,但其背后的基础原理尚未得到充分探索。

-

研究动机:作者试图揭示星操作能够将输入映射到高维非线性特征空间的能力,类似于核技巧,但无需增加网络宽度。此外,作者还希望通过引入一个简单而强大的原型网络StarNet,展示其在紧凑网络结构和高效预算下的出色性能和低延迟。

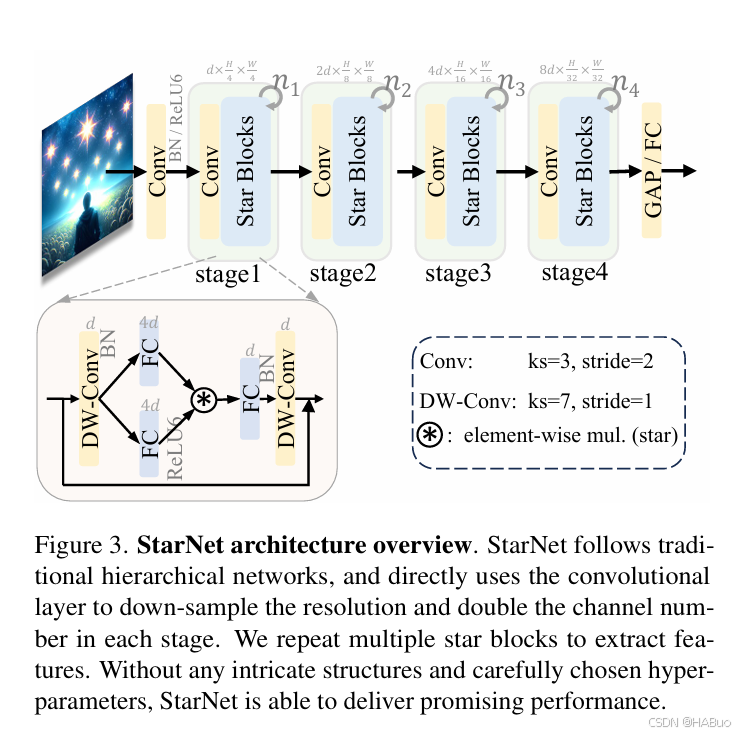

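2. StarNet Architecture Design

Research method

Theoretical analysis of the star operation

- Single-layer star operation: By rewriting a single star operation algebraically, the authors show that it produces roughly d²/2 linearly independent implicit dimensions, where d is the number of input channels. Unlike the conventional approach of widening the network, this reaches a high-dimensional feature space in a way that resembles a polynomial kernel function.

- Multi-layer star operation: Stacking star operations increases the implicit dimensionality exponentially with depth, so even a few layers reach a nearly infinite number of dimensions while the explicit feature space stays compact.

- Special cases: The authors also discuss variants of the star operation, such as networks in which one branch applies no transformation or has no learnable transformation, and analyze how each variant affects the implicit dimensionality.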
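The single-layer claim can be made concrete with a short expansion. The sketch below follows the paper's argument as I understand it, under simplifying assumptions: a single output channel, a single input element, and the bias absorbed into the weights, so that $w_1, w_2, x \in \mathbb{R}^{d+1}$. Superscripts are channel indices, not powers.

```latex
\begin{aligned}
w_1^{\top}x \ast w_2^{\top}x
  &= \Big(\sum_{i=1}^{d+1} w_1^{i}x^{i}\Big) \ast \Big(\sum_{j=1}^{d+1} w_2^{j}x^{j}\Big) \\
  &= \sum_{i=1}^{d+1}\sum_{j=1}^{d+1} w_1^{i}w_2^{j}\,x^{i}x^{j} \\
  &= \sum_{1 \le i \le j \le d+1} \alpha_{(i,j)}\,x^{i}x^{j},
  \qquad
  \alpha_{(i,j)} =
  \begin{cases}
    w_1^{i}w_2^{j}, & i = j,\\
    w_1^{i}w_2^{j} + w_1^{j}w_2^{i}, & i \neq j.
  \end{cases}
\end{aligned}
```

The last sum has $\binom{d+2}{2} = \frac{(d+2)(d+1)}{2} \approx \left(\frac{d}{\sqrt{2}}\right)^{2}$ distinct terms $x^{i}x^{j}$ when $d \gg 2$, which is where the "roughly d²/2 dimensions" figure comes from. Applying the same argument to $l$ stacked layers gives an implicit dimensionality on the order of $\left(\frac{d}{\sqrt{2}}\right)^{2^{l}}$.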

实验验证

-

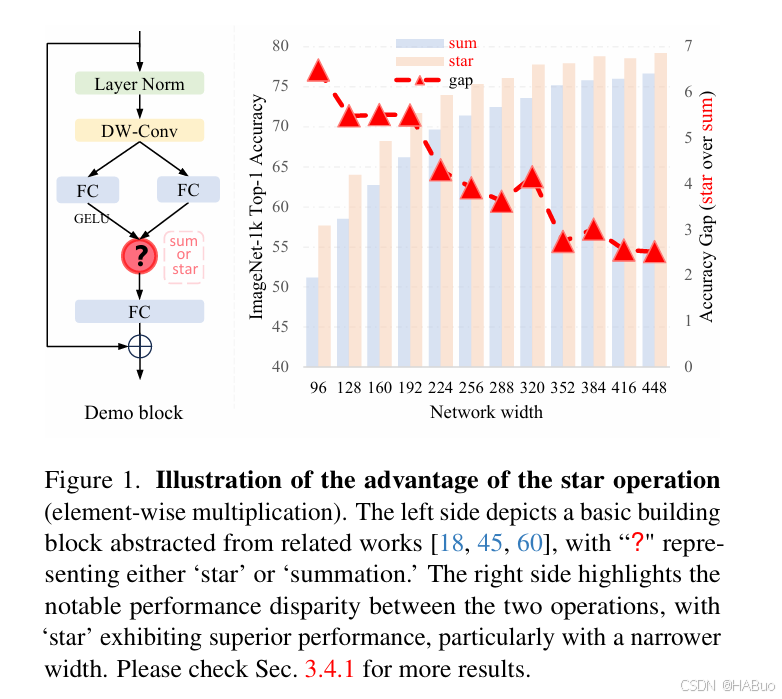

DemoNet实验:作者构建了一个名为DemoNet的简单网络,通过改变网络的宽度和深度,比较了星操作和求和操作的性能。实验结果表明,星操作在不同网络宽度和深度下均优于求和操作。

-

决策边界比较:在2D月牙形数据集上,作者可视化了星操作和求和操作的决策边界。结果显示,星操作的决策边界更精确,与多项式核函数的决策边界相似,而求和操作则与高斯核函数的决策边界差异较大。

-

无激活函数网络实验:作者尝试从DemoNet中移除所有激活函数,结果表明,星操作在没有激活函数的情况下仍能保持较好的性能,而求和操作的性能则大幅下降。

-
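A toy illustration may help explain the activation-free result. Without activations, a sum of linear branches is still a linear map, so stacking such layers adds no expressive power, while the product of two linear branches is a quadratic function of the input. The sketch below is not the paper's DemoNet; the helper names (`linear`, `sum_branch`, `star_branch`) and the weights are made up for illustration.

```python
def linear(w, x):
    """Dot product of a weight vector and an input vector (one linear branch)."""
    return sum(wi * xi for wi, xi in zip(w, x))


def sum_branch(w1, w2, x):
    """Two linear branches fused by summation, no activation."""
    return linear(w1, x) + linear(w2, x)


def star_branch(w1, w2, x):
    """Two linear branches fused by element-wise multiplication (star), no activation."""
    return linear(w1, x) * linear(w2, x)


def add(x, y):
    """Element-wise vector addition."""
    return [a + b for a, b in zip(x, y)]


# Hypothetical integer weights and inputs, chosen so the arithmetic is exact.
w1, w2 = [1, 2], [3, -1]
x, y = [1, 0], [0, 1]

# Summation is additive: f(x + y) == f(x) + f(y), i.e. it is still linear.
sum_is_additive = sum_branch(w1, w2, add(x, y)) == sum_branch(w1, w2, x) + sum_branch(w1, w2, y)

# The star operation is not additive; it behaves like a degree-2 polynomial.
star_is_additive = star_branch(w1, w2, add(x, y)) == star_branch(w1, w2, x) + star_branch(w1, w2, y)

# Doubling the input multiplies the star output by 4, as expected of a quadratic form.
star_is_quadratic = star_branch(w1, w2, [2 * v for v in x]) == 4 * star_branch(w1, w2, x)
```

Here `sum_is_additive` is true and `star_is_additive` is false, which matches the intuition that the star operation supplies its own nonlinearity.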

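3. Experiments and Results

- DemoNet comparison: Across the width and depth settings tested, the star-operation DemoNet reaches higher top-1 accuracy on ImageNet-1k than the summation DemoNet. For example, at width 128 and depth 12 the star operation reaches 64.0%, compared with 58.5% for summation.

- Decision boundary visualization: The decision boundary of the star operation resembles that of a polynomial kernel rather than that of a Gaussian kernel.

- Activation-free networks: With all activation functions removed, the star-operation DemoNet loses only 1.2% accuracy, whereas the summation DemoNet loses 33.8%.

- StarNet performance: StarNet performs well across all of its size variants. For example, StarNet-S4 runs inference in 1.0 ms on an iPhone 13 at 78.4% accuracy, ahead of models such as MobileOne-S2 (77.4%) and EdgeViT-XS (77.5%).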

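4. Key Conclusions

- The star operation maps inputs into a high-dimensional, nonlinear feature space, much like a polynomial kernel function, without widening the network.

- Because it obtains a high-dimensional representation within a compact feature space, the star operation is especially well suited to efficient network design.

- StarNet, a simple prototype network, demonstrates the practical potential of the star operation: even without sophisticated design or carefully tuned hyperparameters, it surpasses many carefully engineered efficient models.

- The star operation may open new research directions, such as activation-free networks and optimizing the coefficient distribution in the implicit high-dimensional space.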
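To get a feel for how large the implicit space becomes at a fixed width, the counts can be computed directly. The sketch below assumes the bias is absorbed into the input (d + 1 entries) and uses the approximation (d/√2)^(2^l) for l stacked layers, which is my reading of the paper's estimate rather than something stated in this post. The function names are hypothetical.

```python
from itertools import combinations_with_replacement
from math import log10, sqrt


def count_distinct_monomials(d):
    """Count distinct products x_i * x_j over d + 1 entries by brute-force enumeration."""
    return sum(1 for _ in combinations_with_replacement(range(d + 1), 2))


def closed_form_terms(d):
    """Closed-form count (d + 2)(d + 1) / 2 for one star operation."""
    return (d + 2) * (d + 1) // 2


def log10_implicit_dim(d, layers):
    """log10 of the approximate implicit dimensionality (d / sqrt(2)) ** (2 ** layers)."""
    return (2 ** layers) * log10(d / sqrt(2))


# Enumeration and closed form agree for a small width.
small_width_terms = count_distinct_monomials(4)  # 15 distinct terms for d = 4

# One star operation at width 128 already spans thousands of implicit dimensions.
width_128_terms = closed_form_terms(128)  # 8385, close to 128**2 / 2 = 8192

# Ten stacked layers at width 128: report the exponent instead of the full number.
ten_layer_exponent = log10_implicit_dim(128, 10)  # about 2003.7, i.e. roughly 10**2003 dimensions
```

The explicit width never changes in this calculation; only the number of stacked star operations does, which is the sense in which the feature space stays compact.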

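5. Research Contributions

- Theory: For the first time, the authors explain theoretically how the star operation implicitly maps inputs into a high-dimensional, nonlinear feature space, and they support the claim with both derivations and experiments.

- Method: They propose StarNet, an efficient architecture built on the star operation, to show its potential in compact network design.

- Experiments: Extensive experiments, including the DemoNet comparison, decision boundary visualization, and activation-free networks, validate the effectiveness of the star operation.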

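6. Limitations and Future Work

- Limitations: Although the analysis of the star operation is thorough, it rests on specific network structures and experimental settings and may not cover every case. StarNet's design is also deliberately simple, so further optimization may be needed to improve its performance.

- Future work: The authors suggest several directions, including further study of activation-free networks, optimizing the coefficient distribution of the star operation in the implicit high-dimensional space, and combining the star operation with other efficient network design techniques.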

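💯 II. How to Add StarNet

Step ①: Create starnet.py

Create the file as ultralytics/nn/backbone/starnet.py; the lowercase name and the location must match the import used in Step ②. Then paste in the following code: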

import torch
import torch.nn as nn
# DropPath and trunc_normal_ come from timm; recent timm releases also expose them under timm.layers.
from timm.models.layers import DropPath, trunc_normal_

__all__ = ['starnet_s050', 'starnet_s100', 'starnet_s150', 'starnet_s1', 'starnet_s2', 'starnet_s3', 'starnet_s4']

# Pretrained checkpoints published in the paper's repository
model_urls = {
    "starnet_s1": "https://github.com/ma-xu/Rewrite-the-Stars/releases/download/checkpoints_v1/starnet_s1.pth.tar",
    "starnet_s2": "https://github.com/ma-xu/Rewrite-the-Stars/releases/download/checkpoints_v1/starnet_s2.pth.tar",
    "starnet_s3": "https://github.com/ma-xu/Rewrite-the-Stars/releases/download/checkpoints_v1/starnet_s3.pth.tar",
    "starnet_s4": "https://github.com/ma-xu/Rewrite-the-Stars/releases/download/checkpoints_v1/starnet_s4.pth.tar",
}


class ConvBN(torch.nn.Sequential):
    """Conv2d optionally followed by BatchNorm2d."""

    def __init__(self, in_planes, out_planes, kernel_size=1, stride=1, padding=0, dilation=1, groups=1, with_bn=True):
        super().__init__()
        self.add_module('conv', torch.nn.Conv2d(in_planes, out_planes, kernel_size, stride, padding, dilation, groups))
        if with_bn:
            self.add_module('bn', torch.nn.BatchNorm2d(out_planes))
            torch.nn.init.constant_(self.bn.weight, 1)
            torch.nn.init.constant_(self.bn.bias, 0)


class Block(nn.Module):
    """StarNet block: depthwise conv, two 1x1 branches fused by the star operation, then projection."""

    def __init__(self, dim, mlp_ratio=3, drop_path=0.):
        super().__init__()
        self.dwconv = ConvBN(dim, dim, 7, 1, (7 - 1) // 2, groups=dim, with_bn=True)
        self.f1 = ConvBN(dim, mlp_ratio * dim, 1, with_bn=False)
        self.f2 = ConvBN(dim, mlp_ratio * dim, 1, with_bn=False)
        self.g = ConvBN(mlp_ratio * dim, dim, 1, with_bn=True)
        self.dwconv2 = ConvBN(dim, dim, 7, 1, (7 - 1) // 2, groups=dim, with_bn=False)
        self.act = nn.ReLU6()
        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

    def forward(self, x):
        input = x
        x = self.dwconv(x)
        x1, x2 = self.f1(x), self.f2(x)
        x = self.act(x1) * x2  # star operation: element-wise multiplication of the two branches
        x = self.dwconv2(self.g(x))
        x = input + self.drop_path(x)  # residual connection
        return x


class StarNet(nn.Module):
    """StarNet backbone that returns the stem output plus one feature map per stage."""

    def __init__(self, base_dim=32, depths=[3, 3, 12, 5], mlp_ratio=4, drop_path_rate=0.0, num_classes=1000, **kwargs):
        super().__init__()
        self.num_classes = num_classes
        self.in_channel = 32
        # stem layer
        self.stem = nn.Sequential(ConvBN(3, self.in_channel, kernel_size=3, stride=2, padding=1), nn.ReLU6())
        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth
        # build stages
        self.stages = nn.ModuleList()
        cur = 0
        for i_layer in range(len(depths)):
            embed_dim = base_dim * 2 ** i_layer
            down_sampler = ConvBN(self.in_channel, embed_dim, 3, 2, 1)
            self.in_channel = embed_dim
            blocks = [Block(self.in_channel, mlp_ratio, dpr[cur + i]) for i in range(depths[i_layer])]
            cur += depths[i_layer]
            self.stages.append(nn.Sequential(down_sampler, *blocks))

        # Run a dummy forward pass to record the channel count of every returned feature map;
        # parse_model reads this list as the backbone's output channels.
        self.channel = [i.size(1) for i in self.forward(torch.randn(1, 3, 640, 640))]
        self.apply(self._init_weights)

    def _init_weights(self, m):
        # The original code writes `isinstance(m, nn.Linear or nn.Conv2d)`, but `A or B` evaluates to A,
        # so only nn.Linear (and, below, only nn.LayerNorm) was ever matched. The checks are written
        # explicitly here to keep that behavior; switch to tuples such as (nn.Linear, nn.Conv2d)
        # if you also want convolution and BatchNorm layers re-initialized.
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if m.bias is not None:
                nn.init.constant_(m.bias, 0)
        elif isinstance(m, nn.LayerNorm):
            nn.init.constant_(m.bias, 0)
            nn.init.constant_(m.weight, 1.0)

    def forward(self, x):
        features = []
        x = self.stem(x)
        features.append(x)
        for stage in self.stages:
            x = stage(x)
            features.append(x)
        return features


def starnet_s1(pretrained=False, **kwargs):
    model = StarNet(24, [2, 2, 8, 3], **kwargs)
    if pretrained:
        url = model_urls['starnet_s1']
        checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu")
        model.load_state_dict(checkpoint["state_dict"], strict=False)
    return model


def starnet_s2(pretrained=False, **kwargs):
    model = StarNet(32, [1, 2, 6, 2], **kwargs)
    if pretrained:
        url = model_urls['starnet_s2']
        checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu")
        model.load_state_dict(checkpoint["state_dict"], strict=False)
    return model


def starnet_s3(pretrained=False, **kwargs):
    model = StarNet(32, [2, 2, 8, 4], **kwargs)
    if pretrained:
        url = model_urls['starnet_s3']
        checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu")
        model.load_state_dict(checkpoint["state_dict"], strict=False)
    return model


def starnet_s4(pretrained=False, **kwargs):
    model = StarNet(32, [3, 3, 12, 5], **kwargs)
    if pretrained:
        url = model_urls['starnet_s4']
        checkpoint = torch.hub.load_state_dict_from_url(url=url, map_location="cpu")
        model.load_state_dict(checkpoint["state_dict"], strict=False)
    return model


# very small networks (no pretrained checkpoints are listed for these, so `pretrained` is ignored)
def starnet_s050(pretrained=False, **kwargs):
    return StarNet(16, [1, 1, 3, 1], 3, **kwargs)


def starnet_s100(pretrained=False, **kwargs):
    return StarNet(20, [1, 2, 4, 1], 4, **kwargs)


def starnet_s150(pretrained=False, **kwargs):
    return StarNet(24, [1, 2, 4, 2], 3, **kwargs)

Step ②: Modify tasks.py (ultralytics/nn/tasks.py)

(1) Import the new StarNet file

from ultralytics.nn.backbone.starnet import *

(2) Modify the _predict_once function

Replace the existing method with the code below. It is a method of BaseModel, so it is shown at method indentation:

    def _predict_once(self, x, profile=False, visualize=False, embed=None):
        """
        Perform a forward pass through the network.

        Args:
            x (torch.Tensor): The input tensor to the model.
            profile (bool): Print the computation time of each layer if True, defaults to False.
            visualize (bool): Save the feature maps of the model if True, defaults to False.
            embed (list, optional): A list of feature vectors/embeddings to return.

        Returns:
            (torch.Tensor): The last output of the model.
        """
        y, dt, embeddings = [], [], []  # outputs
        for idx, m in enumerate(self.model):
            if m.f != -1:  # if not from previous layer
                x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
            if profile:
                self._profile_one_layer(m, x, dt)
            if hasattr(m, 'backbone'):
                # A multi-output backbone returns a list of feature maps.
                # Pad it at the front to 5 entries so the maps occupy layer indices 0-4.
                x = m(x)
                for _ in range(5 - len(x)):
                    x.insert(0, None)
                for i_idx, i in enumerate(x):
                    if i_idx in self.save:
                        y.append(i)
                    else:
                        y.append(None)
                # print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x if x_ is not None])}')
                x = x[-1]  # the deepest feature map feeds the next layer
            else:
                x = m(x)  # run
                y.append(x if m.i in self.save else None)  # save output

            # if type(x) in {list, tuple}:
            #     if idx == (len(self.model) - 1):
            #         if type(x[1]) is dict:
            #             print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x[1]["one2one"]])}')
            #         else:
            #             print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x[1]])}')
            #     else:
            #         print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x if x_ is not None])}')
            # elif type(x) is dict:
            #     print(f'layer id:{idx:>2} {m.type:>50} output shape:{", ".join([str(x_.size()) for x_ in x["one2one"]])}')
            # else:
            #     if not hasattr(m, 'backbone'):
            #         print(f'layer id:{idx:>2} {m.type:>50} output shape:{x.size()}')

            if visualize:
                feature_visualization(x, m.type, m.i, save_dir=visualize)
            if embed and m.i in embed:
                embeddings.append(nn.functional.adaptive_avg_pool2d(x, (1, 1)).squeeze(-1).squeeze(-1))  # flatten
                if m.i == max(embed):
                    return torch.unbind(torch.cat(embeddings, 1), dim=0)
        return x

(3) Modify the parse_model function

可以直接把下面的代码粘贴到对应的位置中

def parse_model(d, ch, verbose=True): # model_dict, input_channels(3)

"""

Parse a YOLO model.yaml dictionary into a PyTorch model.

Args:

d (dict): Model dictionary.

ch (int): Input channels.

verbose (bool): Whether to print model details.

Returns:

(tuple): Tuple containing the PyTorch model and sorted list of output layers.

"""

import ast

# Args

max_channels = float("inf")

nc, act, scales = (d.get(x) for x in ("nc", "activation", "scales"))

depth, width, kpt_shape = (d.get(x, 1.0) for x in ("depth_multiple", "width_multiple", "kpt_shape"))

if scales:

scale = d.get("scale")

if not scale:

scale = tuple(scales.keys())[0]

LOGGER.warning(f"WARNING ⚠️ no model scale passed. Assuming scale='{scale}'.")

if len(scales[scale]) == 3:

depth, width, max_channels = scales[scale]

elif len(scales[scale]) == 4:

depth, width, max_channels, threshold = scales[scale]

if act:

Conv.default_act = eval(act) # redefine default activation, i.e. Conv.default_act = nn.SiLU()

if verbose:

LOGGER.info(f"{colorstr('activation:')} {act}") # print

if verbose:

LOGGER.info(f"\n{'':>3}{'from':>20}{'n':>3}{'params':>10} {'module':<60}{'arguments':<50}")

ch = [ch]

layers, save, c2 = [], [], ch[-1] # layers, savelist, ch out

is_backbone = False

for i, (f, n, m, args) in enumerate(d["backbone"] + d["head"]): # from, number, module, args

try:

if m == 'node_mode':

m = d[m]

if len(args) > 0:

if args[0] == 'head_channel':

args[0] = int(d[args[0]])

t = m

m = getattr(torch.nn, m[3:]) if 'nn.' in m else globals()[m] # get module

except:

pass

for j, a in enumerate(args):

if isinstance(a, str):

with contextlib.suppress(ValueError):

try:

args[j] = locals()[a] if a in locals() else ast.literal_eval(a)

except:

args[j] = a

n = n_ = max(round(n * depth), 1) if n > 1 else n # depth gain

if m in {

Classify, Conv, ConvTranspose, GhostConv, Bottleneck, GhostBottleneck, SPP, SPPF, DWConv, Focus,

BottleneckCSP, C1, C2, C2f, ELAN1, AConv, SPPELAN, C2fAttn, C3, C3TR,

C3Ghost, nn.Conv2d, nn.ConvTranspose2d, DWConvTranspose2d, C3x, RepC3, PSA, SCDown, C2fCIB

}:

if args[0] == 'head_channel':

args[0] = d[args[0]]

c1, c2 = ch[f], args[0]

if c2 != nc: # if c2 not equal to number of classes (i.e. for Classify() output)

c2 = make_divisible(min(c2, max_channels) * width, 8)

if m is C2fAttn:

args[1] = make_divisible(min(args[1], max_channels // 2) * width, 8) # embed channels

args[2] = int(

max(round(min(args[2], max_channels // 2 // 32)) * width, 1) if args[2] > 1 else args[2]

) # num heads

args = [c1, c2, *args[1:]]

elif m in {AIFI}:

args = [ch[f], *args]

c2 = args[0]

elif m in (HGStem, HGBlock):

c1, cm, c2 = ch[f], args[0], args[1]

if c2 != nc: # if c2 not equal to number of classes (i.e. for Classify() output)

c2 = make_divisible(min(c2, max_channels) * width, 8)

cm = make_divisible(min(cm, max_channels) * width, 8)

args = [c1, cm, c2, *args[2:]]

if m in (HGBlock):

args.insert(4, n) # number of repeats

n = 1

elif m is ResNetLayer:

c2 = args[1] if args[3] else args[1] * 4

elif m is nn.BatchNorm2d:

args = [ch[f]]

elif m is Concat:

c2 = sum(ch[x] for x in f)

elif m in frozenset({Detect, WorldDetect, Segment, Pose, OBB, ImagePoolingAttn, v10Detect}):

args.append([ch[x] for x in f])

elif m is RTDETRDecoder: # special case, channels arg must be passed in index 1

args.insert(1, [ch[x] for x in f])

elif m is CBLinear:

c2 = make_divisible(min(args[0][-1], max_channels) * width, 8)

c1 = ch[f]

args = [c1, [make_divisible(min(c2_, max_channels) * width, 8) for c2_ in args[0]], *args[1:]]

elif m is CBFuse:

c2 = ch[f[-1]]

elif isinstance(m, str):

t = m

if len(args) == 2:

m = timm.create_model(m, pretrained=args[0], pretrained_cfg_overlay={'file': args[1]},

features_only=True)

elif len(args) == 1:

m = timm.create_model(m, pretrained=args[0], features_only=True)

c2 = m.feature_info.channels()

elif m in {starnet_s050, starnet_s100, starnet_s150, starnet_s1, starnet_s2, starnet_s3, starnet_s4

}:

m = m(*args)

c2 = m.channel

else:

c2 = ch[f]

if isinstance(c2, list):

is_backbone = True

m_ = m

m_.backbone = True

else:

m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args) # module

t = str(m)[8:-2].replace('__main__.', '') # module type

m.np = sum(x.numel() for x in m_.parameters()) # number params

m_.i, m_.f, m_.type = i + 4 if is_backbone else i, f, t # attach index, 'from' index, type

if verbose:

LOGGER.info(f"{i:>3}{str(f):>20}{n_:>3}{m.np:10.0f} {t:<60}{str(args):<50}") # print

save.extend(x % (i + 4 if is_backbone else i) for x in ([f] if isinstance(f, int) else f) if

x != -1) # append to savelist

layers.append(m_)

if i == 0:

ch = []

if isinstance(c2, list):

ch.extend(c2)

for _ in range(5 - len(ch)):

ch.insert(0, 0)

else:

ch.append(c2)

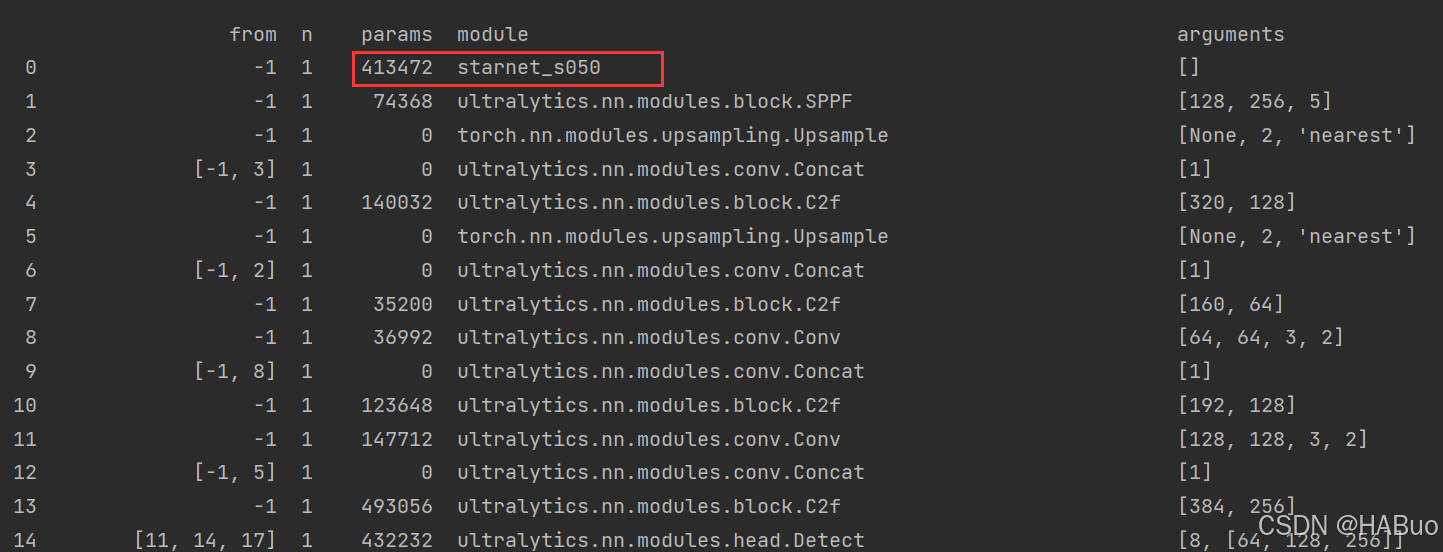

return nn.Sequential(*layers), sorted(save)具体改进差别如下图所示:

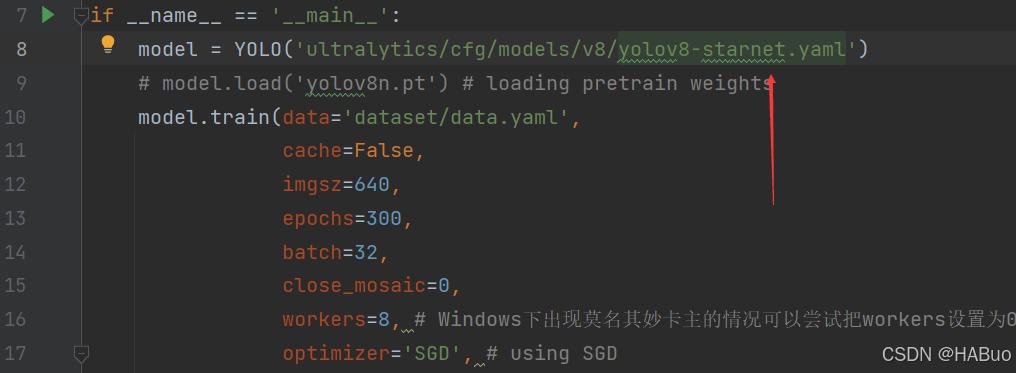
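The index bookkeeping in the two modified functions is easy to misread, so here is a small standalone sketch of it. It mirrors the logic in the code above (stem width 32, stage width `base_dim * 2 ** i`, front-padding to five entries, and the `i + 4` offset) but does not import Ultralytics or PyTorch. The helper names are hypothetical and exist only for this illustration.

```python
def starnet_channels(base_dim, stem_channels=32, num_stages=4):
    """Channels of the stem output followed by each stage output, as StarNet.forward returns them."""
    return [stem_channels] + [base_dim * 2 ** i for i in range(num_stages)]


def pad_to_five(channels):
    """Front-pad a channel list with zeros to 5 entries, like the `ch.insert(0, 0)` loop in parse_model."""
    ch = list(channels)
    for _ in range(5 - len(ch)):
        ch.insert(0, 0)
    return ch


def layer_index(yaml_position, is_backbone=True):
    """Index attached to a layer: shifted by 4 once a multi-output backbone has been parsed."""
    return yaml_position + 4 if is_backbone else yaml_position


# starnet_s050 is built as StarNet(16, [1, 1, 3, 1], 3), so base_dim = 16.
s050_channels = starnet_channels(16)  # [32, 16, 32, 64, 128] for P1..P5

# Each output halves the resolution again: strides 2, 4, 8, 16, 32.
strides = [2 ** (k + 1) for k in range(5)]
sizes_at_640 = [640 // s for s in strides]  # [320, 160, 80, 40, 20]

# The backbone is entry 0 in the YAML and owns indices 0-4;
# the next YAML entry (position 1) therefore becomes layer 5.
first_layer_after_backbone = layer_index(1)

# A backbone that returned only three maps would be padded at the front.
padded = pad_to_five([64, 128, 256])  # [0, 0, 64, 128, 256]
```

This is why a model YAML that uses such a backbone can refer to index 3 for P4 and index 2 for P3, and why the first layer after the backbone is numbered 5.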

Step ③: Add the model YAML

Create yolov8-starnet.yaml in your model configuration folder (for example, next to yolov8.yaml) with the following content:

# Parameters
nc: 80  # number of classes
scales:  # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.33, 0.25, 1024]  # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs
  s: [0.33, 0.50, 1024]  # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs
  m: [0.67, 0.75, 768]  # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs
  l: [1.00, 1.00, 512]  # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs
  x: [1.00, 1.25, 512]  # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs

# Backbone output index and stride
# 0-P1/2
# 1-P2/4
# 2-P3/8
# 3-P4/16
# 4-P5/32

# YOLOv8.0n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, starnet_s050, []]  # 4 (outputs occupy indices 0-4)
  - [-1, 1, SPPF, [1024, 5]]  # 5

# YOLOv8.0n head
head:
  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]  # 6
  - [[-1, 3], 1, Concat, [1]]  # 7 cat backbone P4
  - [-1, 3, C2f, [512]]  # 8

  - [-1, 1, nn.Upsample, [None, 2, 'nearest']]  # 9
  - [[-1, 2], 1, Concat, [1]]  # 10 cat backbone P3
  - [-1, 3, C2f, [256]]  # 11 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]  # 12
  - [[-1, 8], 1, Concat, [1]]  # 13 cat head P4
  - [-1, 3, C2f, [512]]  # 14 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]  # 15
  - [[-1, 5], 1, Concat, [1]]  # 16 cat head P5
  - [-1, 3, C2f, [1024]]  # 17 (P5/32-large)

  - [[11, 14, 17], 1, Detect, [nc]]  # Detect(P3, P4, P5)

Step ④: Verify the integration

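Point the model configuration in train.py at yolov8-starnet.yaml and run it. If the model builds and training starts, the backbone has been added successfully.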
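The post does not show train.py itself, so the following is only a minimal sketch of what such a script might look like. `YOLO(...)` and `model.train(...)` are the standard Ultralytics entry points; the YAML path, the `coco128.yaml` dataset, the hyperparameter values, and the helper names (`TRAIN_DEFAULTS`, `build_train_args`, `train`) are assumptions to adapt to your setup.

```python
# Assumed defaults for a quick smoke test; replace the dataset and values with your own.
TRAIN_DEFAULTS = {
    "data": "coco128.yaml",  # assumption: small demo dataset config shipped with Ultralytics
    "epochs": 1,             # one epoch is enough to confirm that the model builds and trains
    "imgsz": 640,
    "batch": 8,
}


def build_train_args(**overrides):
    """Merge caller overrides into the default training arguments."""
    args = dict(TRAIN_DEFAULTS)
    args.update(overrides)
    return args


def train(model_yaml="yolov8-starnet.yaml", **overrides):
    """Build the model from the YAML file and start training.

    Ultralytics is imported lazily so this file can be imported without it installed.
    """
    from ultralytics import YOLO

    model = YOLO(model_yaml)  # parse_model runs here and prints the layer table
    return model.train(**build_train_args(**overrides))
```

Calling `train()` runs the smoke test, and `train(epochs=100, data="your_dataset.yaml")` would start a real run. If no scale is resolved for the model, parse_model falls back to the first entry in `scales` and logs the "no model scale passed" warning seen in its code.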
