嘿,各位!今天咱们要来一场超级酷炫的多模态 Transformer 冒险之旅!想象一下,让一个模型既能看懂图片,又能理解文字,然后还能生成有趣的回答。听起来是不是很像超级英雄的超能力?别急,咱们这就来实现它!

🧠 向所有学习者致敬!

"学习不是装满一桶水,而是点燃一把火。" ------ 叶芝

我的博客主页: https://lizheng.blog.csdn.net

🌐 欢迎点击加入AI人工智能社区!

🚀 让我们一起努力,共创AI未来! 🚀

好的!让我来完成这个任务,我会用幽默风趣的笔触把这篇文档翻译成符合 CSDN 博文要求的 Markdown 格式。现在就让我们开始吧!

Step 0: 准备工作 ------ 导入库、加载模型、定义数据、设置视觉模型

Step 0.1: 导入所需的库

在这一部分,咱们要准备好所有需要的工具,就像准备一场冒险的装备一样。我们需要 torch 和它的子模块(nn、F、optim),还有 torchvision 来获取预训练的 ResNet 模型,PIL(Pillow)用来加载图片,math 用来做些数学计算,os 用来处理文件路径,还有 numpy 来创建一些虚拟的图片数据。这些工具就像是咱们的瑞士军刀,有了它们,咱们就能搞定一切!

python

import torch

import torch.nn as nn

from torch.nn import functional as F

import torch.optim as optim

import torchvision

import torchvision.transforms as transforms

from PIL import Image

import math

import os

import numpy as np # 用来创建虚拟图片为了保证代码的可重复性,咱们还设置了随机种子,这样每次运行代码的时候都能得到一样的结果。这就好像是给代码加了一个"魔法咒语",让每次运行都像复制粘贴一样稳定。

python

torch.manual_seed(42) # 使用不同的种子会有不同的结果

np.random.seed(42)接下来,咱们检查一下 PyTorch 和 Torchvision 的版本,确保一切正常。这就好像是在出发前检查一下装备是否完好。

python

print(f"PyTorch version: {torch.__version__}")

print(f"Torchvision version: {torchvision.__version__}")

print("Libraries imported.")最后,咱们设置一下设备(如果有 GPU 就用 GPU,没有就用 CPU)。这就好像是给代码选择了一个超级加速器,让运行速度飞起来!

python

device = 'cuda' if torch.cuda.is_available() else 'cpu'

print(f"Using device: {device}")Step 0.2: 加载预训练的文本模型

咱们之前训练了一个字符级的 Transformer 模型,现在要把它的权重和配置加载过来,这样咱们的模型就有了处理文本的基础。这就像是给咱们的多模态模型注入了一颗强大的文本处理"心脏"。

python

model_load_path = 'saved_models/transformer_model.pt'

if not os.path.exists(model_load_path):

raise FileNotFoundError(f"Error: Model file not found at {model_load_path}. Please ensure 'transformer2.ipynb' was run and saved the model.")

loaded_state_dict = torch.load(model_load_path, map_location=device)

print(f"Loaded state dictionary from '{model_load_path}'.")从加载的模型中,咱们提取了超参数(比如 vocab_size、d_model、n_layers 等)和字符映射表(char_to_int 和 int_to_char)。这些参数就像是模型的"基因",决定了它的行为和能力。

python

config = loaded_state_dict['config']

loaded_vocab_size = config['vocab_size']

d_model = config['d_model']

n_heads = config['n_heads']

n_layers = config['n_layers']

d_ff = config['d_ff']

loaded_block_size = config['block_size'] # 文本模型的最大序列长度

d_k = d_model // n_heads

char_to_int = loaded_state_dict['tokenizer']['char_to_int']

int_to_char = loaded_state_dict['tokenizer']['int_to_char']Step 0.3: 定义特殊标记并更新词汇表

为了让模型能够处理多模态数据,咱们需要添加一些特殊的标记:

<IMG>:用来表示图像输入的占位符。<PAD>:用来填充序列,让序列长度一致。<EOS>:表示句子结束的标记。

这就像是给模型的词汇表添加了一些新的"魔法单词",让模型能够理解新的概念。

python

img_token = "<IMG>"

pad_token = "<PAD>"

eos_token = "<EOS>" # 句子结束标记

special_tokens = [img_token, pad_token, eos_token]接下来,咱们把这些特殊标记添加到现有的字符映射表中,并更新词汇表的大小。这就像是给模型的词汇表扩容,让它能够容纳更多的"魔法单词"。

python

current_vocab_size = loaded_vocab_size

for token in special_tokens:

if token not in char_to_int:

char_to_int[token] = current_vocab_size

int_to_char[current_vocab_size] = token

current_vocab_size += 1

vocab_size = current_vocab_size

pad_token_id = char_to_int[pad_token] # 保存 PAD 标记的 ID,后面要用Step 0.4: 定义样本多模态数据

咱们创建了一个小的、虚拟的(图像,提示,回答)三元组数据集。为了简单起见,咱们用 PIL/Numpy 生成了一些虚拟图片(比如纯色的方块和圆形),并给它们配上了一些描述性的提示和回答。这就像是给模型准备了一些"练习题",让它能够学习如何处理图像和文本的组合。

python

sample_data_dir = "sample_multimodal_data"

os.makedirs(sample_data_dir, exist_ok=True)

image_paths = {

"red": os.path.join(sample_data_dir, "red_square.png"),

"blue": os.path.join(sample_data_dir, "blue_square.png"),

"green": os.path.join(sample_data_dir, "green_circle.png") # 加入形状变化

}

# 创建红色方块

img_red = Image.new('RGB', (64, 64), color = 'red')

img_red.save(image_paths["red"])

# 创建蓝色方块

img_blue = Image.new('RGB', (64, 64), color = 'blue')

img_blue.save(image_paths["blue"])

# 创建绿色圆形(用 PIL 的绘图功能近似绘制)

img_green = Image.new('RGB', (64, 64), color = 'white')

from PIL import ImageDraw

draw = ImageDraw.Draw(img_green)

draw.ellipse((4, 4, 60, 60), fill='green', outline='green')

img_green.save(image_paths["green"])接下来,咱们定义了一些数据样本,每个样本包括一个图片路径、一个提示和一个回答。这就像是给模型准备了一些"问答对",让它能够学习如何根据图片和提示生成正确的回答。

python

sample_training_data = [

{"image_path": image_paths["red"], "prompt": "What color is the shape?", "response": "red." + eos_token},

{"image_path": image_paths["blue"], "prompt": "Describe the image.", "response": "a blue square." + eos_token},

{"image_path": image_paths["green"], "prompt": "What shape is shown?", "response": "a green circle." + eos_token},

{"image_path": image_paths["red"], "prompt": "Is it a circle?", "response": "no, it is a square." + eos_token},

{"image_path": image_paths["blue"], "prompt": "What is the main color?", "response": "blue." + eos_token},

{"image_path": image_paths["green"], "prompt": "Describe this.", "response": "a circle, it is green." + eos_token}

]Step 0.5: 加载预训练的视觉模型(特征提取器)

咱们从 torchvision 加载了一个预训练的 ResNet-18 模型,并移除了它的最后分类层(fc)。这就像是给模型安装了一个"视觉眼睛",让它能够"看"图片并提取出有用的特征。

python

vision_model = torchvision.models.resnet18(weights=torchvision.models.ResNet18_Weights.DEFAULT)

vision_feature_dim = vision_model.fc.in_features # 获取原始 fc 层的输入维度

vision_model.fc = nn.Identity() # 替换分类器为恒等映射

vision_model = vision_model.to(device)

vision_model.eval() # 设置为评估模式Step 0.6: 定义图像预处理流程

在把图片喂给 ResNet 模型之前,咱们需要对图片进行预处理。这就像是给图片"化妆",让它符合模型的口味。咱们用 torchvision.transforms 来定义一个预处理流程,包括调整图片大小、裁剪、转换为张量并归一化。

python

image_transforms = transforms.Compose([

transforms.Resize(256), # 调整图片大小,短边为 256

transforms.CenterCrop(224), # 中心裁剪 224x224 的正方形

transforms.ToTensor(), # 转换为 PyTorch 张量(0-1 范围)

transforms.Normalize(mean=[0.485, 0.456, 0.406], # 使用 ImageNet 的均值

std=[0.229, 0.224, 0.225]) # 使用 ImageNet 的标准差

])Step 0.7: 定义新的超参数

咱们定义了一些新的超参数,专门用于多模态设置。这就像是给模型设置了一些新的"规则",让它知道如何处理图像和文本的组合。

python

block_size = 64 # 设置多模态序列的最大长度

num_img_tokens = 1 # 使用 1 个 <IMG> 标记来表示图像特征

learning_rate = 3e-4 # 保持 AdamW 的学习率不变

batch_size = 4 # 由于可能占用更多内存,减小批量大小

epochs = 2000 # 增加训练周期

eval_interval = 500最后,咱们重新创建了一个因果掩码,以适应新的序列长度。这就像是给模型的注意力机制设置了一个"遮挡板",让它只能看到它应该看到的部分。

python

causal_mask = torch.tril(torch.ones(block_size, block_size, device=device)).view(1, 1, block_size, block_size)Step 1: 数据准备用于多模态训练

Step 1.1: 提取样本数据的图像特征

咱们遍历 sample_training_data,对于每个唯一的图像路径,加载图像,应用定义的变换,并通过冻结的 vision_model 获取特征向量。这就像是给每个图像提取了一个"特征指纹",让模型能够理解图像的内容。

python

extracted_image_features = {} # 用来存储 {image_path: feature_tensor}

unique_image_paths = set(d["image_path"] for d in sample_training_data)

print(f"Found {len(unique_image_paths)} unique images to process.")

for img_path in unique_image_paths:

try:

img = Image.open(img_path).convert('RGB') # 确保图像是 RGB 格式

except FileNotFoundError:

print(f"Error: Image file not found at {img_path}. Skipping.")

continue

img_tensor = image_transforms(img).unsqueeze(0).to(device) # 应用预处理并添加批量维度

with torch.no_grad():

feature_vector = vision_model(img_tensor) # 提取特征向量

extracted_image_features[img_path] = feature_vector.squeeze(0) # 去掉批量维度并存储

print(f" Extracted features for '{os.path.basename(img_path)}', shape: {extracted_image_features[img_path].shape}")Step 1.2: 对提示和回答进行分词

咱们用更新后的 char_to_int 映射(现在包括 <IMG>、<PAD>、<EOS>)将文本提示和回答转换为整数 ID 序列。这就像是把文本翻译成模型能够理解的"数字语言"。

python

tokenized_samples = []

for sample in sample_training_data:

prompt_ids = [char_to_int[ch] for ch in sample["prompt"]]

response_text = sample["response"]

if response_text.endswith(eos_token):

response_text_without_eos = response_text[:-len(eos_token)]

response_ids = [char_to_int[ch] for ch in response_text_without_eos] + [char_to_int[eos_token]]

else:

response_ids = [char_to_int[ch] for ch in response_text]

tokenized_samples.append({

"image_path": sample["image_path"],

"prompt_ids": prompt_ids,

"response_ids": response_ids

})Step 1.3: 创建填充的输入/目标序列和掩码

咱们把图像表示、分词后的提示和分词后的回答组合成一个输入序列,为 Transformer 准备。这就像是把图像和文本"打包"成一个序列,让模型能够同时处理它们。

python

prepared_sequences = []

ignore_index = -100 # 用于 CrossEntropyLoss 的忽略索引

for sample in tokenized_samples:

img_ids = [char_to_int[img_token]] * num_img_tokens

input_ids_no_pad = img_ids + sample["prompt_ids"] + sample["response_ids"][:-1] # 输入预测回答

target_ids_no_pad = ([ignore_index] * len(img_ids)) + ([ignore_index] * len(sample["prompt_ids"])) + sample["response_ids"]

current_len = len(input_ids_no_pad)

pad_len = block_size - current_len

if pad_len < 0:

print(f"Warning: Sample sequence length ({current_len}) exceeds block_size ({block_size}). Truncating.")

input_ids = input_ids_no_pad[:block_size]

target_ids = target_ids_no_pad[:block_size]

pad_len = 0

current_len = block_size

else:

input_ids = input_ids_no_pad + ([pad_token_id] * pad_len)

target_ids = target_ids_no_pad + ([ignore_index] * pad_len)

attention_mask = ([1] * current_len) + ([0] * pad_len)

prepared_sequences.append({

"image_path": sample["image_path"],

"input_ids": torch.tensor(input_ids, dtype=torch.long),

"target_ids": torch.tensor(target_ids, dtype=torch.long),

"attention_mask": torch.tensor(attention_mask, dtype=torch.long)

})最后,咱们把所有的序列组合成张量,方便后续的批量处理。这就像是把所有的"练习题"打包成一个整齐的"试卷"。

python

all_input_ids = torch.stack([s['input_ids'] for s in prepared_sequences])

all_target_ids = torch.stack([s['target_ids'] for s in prepared_sequences])

all_attention_masks = torch.stack([s['attention_mask'] for s in prepared_sequences])

all_image_paths = [s['image_path'] for s in prepared_sequences]Step 2: 模型调整和初始化

Step 2.1: 重新初始化嵌入层和输出层

由于咱们添加了特殊标记(<IMG>、<PAD>、<EOS>),词汇表大小发生了变化。这就像是给模型的词汇表"扩容",咱们需要重新初始化嵌入层和输出层,以适应新的词汇表大小。

python

new_token_embedding_table = nn.Embedding(vocab_size, d_model).to(device)

original_weights = loaded_state_dict['token_embedding_table']['weight'][:loaded_vocab_size, :]

with torch.no_grad():

new_token_embedding_table.weight[:loaded_vocab_size, :] = original_weights

token_embedding_table = new_token_embedding_table输出层也需要重新初始化,以适应新的词汇表大小。

python

new_output_linear_layer = nn.Linear(d_model, vocab_size).to(device)

original_out_weight = loaded_state_dict['output_linear_layer']['weight'][:loaded_vocab_size, :]

original_out_bias = loaded_state_dict['output_linear_layer']['bias'][:loaded_vocab_size]

with torch.no_grad():

new_output_linear_layer.weight[:loaded_vocab_size, :] = original_out_weight

new_output_linear_layer.bias[:loaded_vocab_size] = original_out_bias

output_linear_layer = new_output_linear_layerStep 2.2: 初始化视觉投影层

咱们创建了一个新的线性层,用来将提取的图像特征投影到 Transformer 的隐藏维度(d_model)。这就像是给图像特征和文本特征之间架起了一座"桥梁",让它们能够互相理解。

python

vision_projection_layer = nn.Linear(vision_feature_dim, d_model).to(device)Step 2.3: 加载现有的 Transformer 块层

咱们从加载的状态字典中重新加载 Transformer 块的核心组件(LayerNorms、QKV/Output Linears for MHA、FFN Linears)。这就像是把之前训练好的模型的"核心部件"重新组装起来。

python

layer_norms_1 = []

layer_norms_2 = []

mha_qkv_linears = []

mha_output_linears = []

ffn_linear_1 = []

ffn_linear_2 = []

for i in range(n_layers):

ln1 = nn.LayerNorm(d_model).to(device)

ln1.load_state_dict(loaded_state_dict['layer_norms_1'][i])

layer_norms_1.append(ln1)

qkv_linear = nn.Linear(d_model, 3 * d_model, bias=False).to(device)

qkv_linear.load_state_dict(loaded_state_dict['mha_qkv_linears'][i])

mha_qkv_linears.append(qkv_linear)

output_linear_mha = nn.Linear(d_model, d_model).to(device)

output_linear_mha.load_state_dict(loaded_state_dict['mha_output_linears'][i])

mha_output_linears.append(output_linear_mha)

ln2 = nn.LayerNorm(d_model).to(device)

ln2.load_state_dict(loaded_state_dict['layer_norms_2'][i])

layer_norms_2.append(ln2)

lin1 = nn.Linear(d_model, d_ff).to(device)

lin1.load_state_dict(loaded_state_dict['ffn_linear_1'][i])

ffn_linear_1.append(lin1)

lin2 = nn.Linear(d_ff, d_model).to(device)

lin2.load_state_dict(loaded_state_dict['ffn_linear_2'][i])

ffn_linear_2.append(lin2)最后,咱们加载了最终的 LayerNorm 和位置编码。这就像是给模型的"大脑"安装了最后的"保护层"。

python

final_layer_norm = nn.LayerNorm(d_model).to(device)

final_layer_norm.load_state_dict(loaded_state_dict['final_layer_norm'])

positional_encoding = loaded_state_dict['positional_encoding'].to(device)Step 2.4: 定义优化器和损失函数

咱们收集了所有需要训练的参数,包括新初始化的视觉投影层和重新调整大小的嵌入/输出层。这就像是给模型的"训练引擎"添加了所有的"燃料"。

python

all_trainable_parameters = list(token_embedding_table.parameters())

all_trainable_parameters.extend(list(vision_projection_layer.parameters()))

for i in range(n_layers):

all_trainable_parameters.extend(list(layer_norms_1[i].parameters()))

all_trainable_parameters.extend(list(mha_qkv_linears[i].parameters()))

all_trainable_parameters.extend(list(mha_output_linears[i].parameters()))

all_trainable_parameters.extend(list(layer_norms_2[i].parameters()))

all_trainable_parameters.extend(list(ffn_linear_1[i].parameters()))

all_trainable_parameters.extend(list(ffn_linear_2[i].parameters()))

all_trainable_parameters.extend(list(final_layer_norm.parameters()))

all_trainable_parameters.extend(list(output_linear_layer.parameters()))接下来,咱们定义了 AdamW 优化器来管理这些参数,并定义了 Cross-Entropy 损失函数,确保忽略填充标记和非目标标记(比如提示标记)。这就像是给模型的训练过程设置了一个"指南针",让它知道如何朝着正确的方向前进。

python

optimizer = optim.AdamW(all_trainable_parameters, lr=learning_rate)

criterion = nn.CrossEntropyLoss(ignore_index=ignore_index)Step 3: 多模态训练循环(内联)

Step 3.1: 训练循环结构

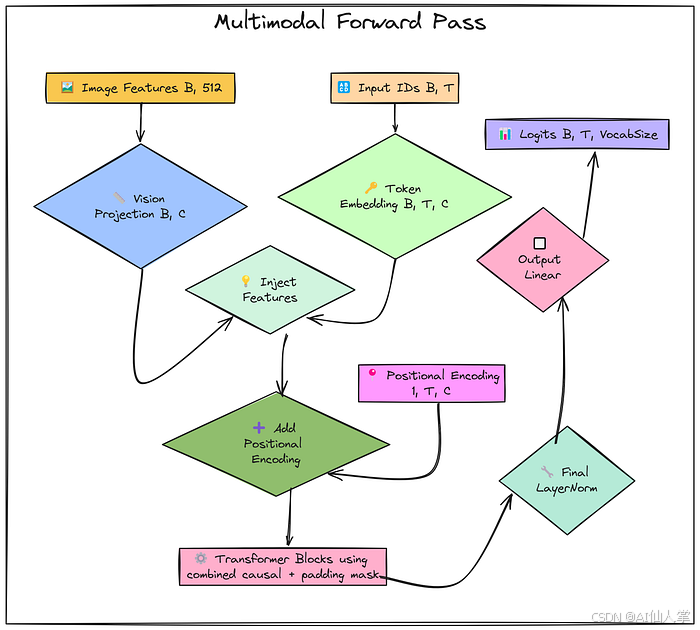

咱们开始训练模型啦!在每个训练周期中,咱们随机选择一批数据,提取对应的图像特征、输入 ID、目标 ID 和注意力掩码,然后进行前向传播、计算损失、反向传播和参数更新。这就像是让模型在"训练场"上反复练习,直到它能够熟练地处理图像和文本的组合。

python

losses = []

for epoch in range(epochs):

indices = torch.randint(0, num_sequences_available, (batch_size,))

xb_ids = all_input_ids[indices].to(device)

yb_ids = all_target_ids[indices].to(device)

batch_masks = all_attention_masks[indices].to(device)

batch_img_paths = [all_image_paths[i] for i in indices.tolist()]

try:

batch_img_features = torch.stack([extracted_image_features[p] for p in batch_img_paths]).to(device)

except KeyError as e:

print(f"Error: Missing extracted feature for image path {e}. Ensure Step 1.1 completed correctly. Skipping epoch.")

continue

B, T = xb_ids.shape

C = d_model

projected_img_features = vision_projection_layer(batch_img_features)

projected_img_features = projected_img_features.unsqueeze(1)

text_token_embeddings = token_embedding_table(xb_ids)

combined_embeddings = text_token_embeddings.clone()

combined_embeddings[:, 0:num_img_tokens, :] = projected_img_features

pos_enc_slice = positional_encoding[:, :T, :]

x = combined_embeddings + pos_enc_slice

padding_mask_expanded = batch_masks.unsqueeze(1).unsqueeze(2)

combined_attn_mask = causal_mask[:,:,:T,:T] * padding_mask_expanded

for i in range(n_layers):

x_input_block = x

x_ln1 = layer_norms_1[i](x_input_block)

qkv = mha_qkv_linears[i](x_ln1)

qkv = qkv.view(B, T, n_heads, 3 * d_k).permute(0, 2, 1, 3)

q, k, v = qkv.chunk(3, dim=-1)

attn_scores = (q @ k.transpose(-2, -1)) * (d_k ** -0.5)

attn_scores_masked = attn_scores.masked_fill(combined_attn_mask == 0, float('-inf'))

attention_weights = F.softmax(attn_scores_masked, dim=-1)

attention_weights = torch.nan_to_num(attention_weights)

attn_output = attention_weights @ v

attn_output = attn_output.permute(0, 2, 1, 3).contiguous().view(B, T, C)

mha_result = mha_output_linears[i](attn_output)

x = x_input_block + mha_result

x_input_ffn = x

x_ln2 = layer_norms_2[i](x_input_ffn)

ffn_hidden = ffn_linear_1[i](x_ln2)

ffn_activated = F.relu(ffn_hidden)

ffn_output = ffn_linear_2[i](ffn_activated)

x = x_input_ffn + ffn_output

final_norm_output = final_layer_norm(x)

logits = output_linear_layer(final_norm_output)

B_loss, T_loss, V_loss = logits.shape

if yb_ids.size(1) != T_loss:

if yb_ids.size(1) > T_loss:

targets_reshaped = yb_ids[:, :T_loss].contiguous().view(-1)

else:

padded_targets = torch.full((B_loss, T_loss), ignore_index, device=device)

padded_targets[:, :yb_ids.size(1)] = yb_ids

targets_reshaped = padded_targets.view(-1)

else:

targets_reshaped = yb_ids.view(-1)

logits_reshaped = logits.view(-1, V_loss)

loss = criterion(logits_reshaped, targets_reshaped)

optimizer.zero_grad()

if not torch.isnan(loss) and not torch.isinf(loss):

loss.backward()

optimizer.step()

else:

print(f"Warning: Invalid loss detected (NaN or Inf) at epoch {epoch+1}. Skipping optimizer step.")

loss = None

if loss is not None:

current_loss = loss.item()

losses.append(current_loss)

if epoch % eval_interval == 0 or epoch == epochs - 1:

print(f" Epoch {epoch+1}/{epochs}, Loss: {current_loss:.4f}")

elif epoch % eval_interval == 0 or epoch == epochs - 1:

print(f" Epoch {epoch+1}/{epochs}, Loss: Invalid (NaN/Inf)")最后,咱们绘制了训练损失曲线,以直观地展示模型的训练过程。这就像是给模型的训练过程拍了一张"进度照",让咱们能够清楚地看到它的进步。

python

import matplotlib.pyplot as plt

plt.figure(figsize=(20, 3))

plt.plot(losses)

plt.title("Training Loss Over Epochs")

plt.xlabel("Epoch")

plt.ylabel("Loss")

plt.grid(True)

plt.show()Step 4: 多模态生成(内联)

Step 4.1: 准备输入图像和提示

咱们选择了一个图像(比如绿色圆形)和一个文本提示(比如"Describe this image:"),对图像进行预处理,提取其特征,并将其投影到训练好的视觉投影层。这就像是给模型准备了一个"输入套餐",让它能够根据图像和提示生成回答。

python

test_image_path = image_paths["green"]

test_prompt_text = "Describe this image: "接下来,咱们对图像进行预处理,提取特征,并将其投影到训练好的视觉投影层。

python

try:

test_img = Image.open(test_image_path).convert('RGB')

test_img_tensor = image_transforms(test_img).unsqueeze(0).to(device)

with torch.no_grad():

test_img_features_raw = vision_model(test_img_tensor)

vision_projection_layer.eval()

with torch.no_grad():

test_img_features_projected = vision_projection_layer(test_img_features_raw)

print(f" Processed image: '{os.path.basename(test_image_path)}'")

print(f" Projected image features shape: {test_img_features_projected.shape}")

except FileNotFoundError:

print(f"Error: Test image not found at {test_image_path}. Cannot generate.")

test_img_features_projected = None最后,咱们对提示进行分词,并将其与图像特征组合成初始上下文。这就像是把图像和提示"打包"成一个序列,让模型能够开始生成回答。

python

img_id = char_to_int[img_token]

prompt_ids = [char_to_int[ch] for ch in test_prompt_text]

initial_context_ids = torch.tensor([[img_id] * num_img_tokens + prompt_ids], dtype=torch.long, device=device)

print(f" Tokenized prompt: '{test_prompt_text}' -> {initial_context_ids.tolist()}")Step 4.2: 生成循环(自回归解码)

咱们开始生成回答啦!在每个步骤中,咱们准备当前的输入序列,提取嵌入,注入图像特征,添加位置编码,创建注意力掩码,然后通过 Transformer 块进行前向传播。这就像是让模型根据当前的"输入套餐"生成下一个"魔法单词"。

python

generated_sequence_ids = initial_context_ids

with torch.no_grad():

for _ in range(max_new_tokens):

current_ids_context = generated_sequence_ids[:, -block_size:]

B_gen, T_gen = current_ids_context.shape

C_gen = d_model

current_token_embeddings = token_embedding_table(current_ids_context)

gen_combined_embeddings = current_token_embeddings

if img_id in current_ids_context[0].tolist():

img_token_pos = 0

gen_combined_embeddings[:, img_token_pos:(img_token_pos + num_img_tokens), :] = test_img_features_projected

pos_enc_slice_gen = positional_encoding[:, :T_gen, :]

x_gen = gen_combined_embeddings + pos_enc_slice_gen

gen_causal_mask = causal_mask[:,:,:T_gen,:T_gen]

for i in range(n_layers):

x_input_block_gen = x_gen

x_ln1_gen = layer_norms_1[i](x_input_block_gen)

qkv_gen = mha_qkv_linears[i](x_ln1_gen)

qkv_gen = qkv_gen.view(B_gen, T_gen, n_heads, 3 * d_k).permute(0, 2, 1, 3)

q_gen, k_gen, v_gen = qkv_gen.chunk(3, dim=-1)

attn_scores_gen = (q_gen @ k_gen.transpose(-2, -1)) * (d_k ** -0.5)

attn_scores_masked_gen = attn_scores_gen.masked_fill(gen_causal_mask == 0, float('-inf'))

attention_weights_gen = F.softmax(attn_scores_masked_gen, dim=-1)

attention_weights_gen = torch.nan_to_num(attention_weights_gen)

attn_output_gen = attention_weights_gen @ v_gen

attn_output_gen = attn_output_gen.permute(0, 2, 1, 3).contiguous().view(B_gen, T_gen, C_gen)

mha_result_gen = mha_output_linears[i](attn_output_gen)

x_gen = x_input_block_gen + mha_result_gen

x_input_ffn_gen = x_gen

x_ln2_gen = layer_norms_2[i](x_input_ffn_gen)

ffn_hidden_gen = ffn_linear_1[i](x_ln2_gen)

ffn_activated_gen = F.relu(ffn_hidden_gen)

ffn_output_gen = ffn_linear_2[i](ffn_activated_gen)

x_gen = x_input_ffn_gen + ffn_output_gen

final_norm_output_gen = final_layer_norm(x_gen)

logits_gen = output_linear_layer(final_norm_output_gen)

logits_last_token = logits_gen[:, -1, :]

probs = F.softmax(logits_last_token, dim=-1)

next_token_id = torch.multinomial(probs, num_samples=1)

generated_sequence_ids = torch.cat((generated_sequence_ids, next_token_id), dim=1)

if next_token_id.item() == eos_token_id:

print(" <EOS> token generated. Stopping.")

break

else:

print(f" Reached max generation length ({max_new_tokens}). Stopping.")Step 4.3: 解码生成的序列

最后,咱们把生成的序列 ID 转换回人类可读的字符串。这就像是把模型生成的"魔法单词"翻译回人类的语言。

python

final_ids_list = generated_sequence_ids[0].tolist()

decoded_text = ""

for id_val in final_ids_list:

if id_val in int_to_char:

decoded_text += int_to_char[id_val]

else:

decoded_text += f"[UNK:{id_val}]"

print(f"--- Final Generated Output ---")

print(f"Image: {os.path.basename(test_image_path)}")

response_start_index = num_img_tokens + len(test_prompt_text)

print(f"Prompt: {test_prompt_text}")

print(f"Generated Response: {decoded_text[response_start_index:]}")Step 6: 保存模型状态(可选)

为了保存咱们训练好的多模态模型,咱们需要把所有模型组件和配置保存到一个字典中,然后用 torch.save() 保存到文件中。这就像是给模型拍了一张"全家福",让它能够随时被加载和使用。

python

save_dir = 'saved_models'

os.makedirs(save_dir, exist_ok=True)

save_path = os.path.join(save_dir, 'multimodal_model.pt')

multimodal_state_dict = {

'config': {

'vocab_size': vocab_size,

'd_model': d_model,

'n_heads': n_heads,

'n_layers': n_layers,

'd_ff': d_ff,

'block_size': block_size,

'num_img_tokens': num_img_tokens,

'vision_feature_dim': vision_feature_dim

},

'tokenizer': {

'char_to_int': char_to_int,

'int_to_char': int_to_char

},

'token_embedding_table': token_embedding_table.state_dict(),

'vision_projection_layer': vision_projection_layer.state_dict(),

'positional_encoding': positional_encoding,

'layer_norms_1': [ln.state_dict() for ln in layer_norms_1],

'mha_qkv_linears': [l.state_dict() for l in mha_qkv_linears],

'mha_output_linears': [l.state_dict() for l in mha_output_linears],

'layer_norms_2': [ln.state_dict() for ln in layer_norms_2],

'ffn_linear_1': [l.state_dict() for l in ffn_linear_1],

'ffn_linear_2': [l.state_dict() for l in ffn_linear_2],

'final_layer_norm': final_layer_norm.state_dict(),

'output_linear_layer': output_linear_layer.state_dict()

}

torch.save(multimodal_state_dict, save_path)

print(f"Multi-modal model saved to {save_path}")加载保存的多模态模型

加载保存的模型状态字典后,咱们可以根据配置和 tokenizer 重建模型组件,并加载它们的状态字典。这就像是把之前保存的"全家福"重新组装起来,让模型能够随时被使用。

python

model_load_path = 'saved_models/multimodal_model.pt'

loaded_state_dict = torch.load(model_load_path, map_location=device)

print(f"Loaded state dictionary from '{model_load_path}'.")

config = loaded_state_dict['config']

vocab_size = config['vocab_size']

d_model = config['d_model']

n_heads = config['n_heads']

n_layers = config['n_layers']

d_ff = config['d_ff']

block_size = config['block_size']

num_img_tokens = config['num_img_tokens']

vision_feature_dim = config['vision_feature_dim']

d_k = d_model // n_heads

char_to_int = loaded_state_dict['tokenizer']['char_to_int']

int_to_char = loaded_state_dict['tokenizer']['int_to_char']

causal_mask = torch.tril(torch.ones(block_size, block_size, device=device)).view(1, 1, block_size, block_size)

token_embedding_table = nn.Embedding(vocab_size, d_model).to(device)

token_embedding_table.load_state_dict(loaded_state_dict['token_embedding_table'])

vision_projection_layer = nn.Linear(vision_feature_dim, d_model).to(device)

vision_projection_layer.load_state_dict(loaded_state_dict['vision_projection_layer'])

positional_encoding = loaded_state_dict['positional_encoding'].to(device)

layer_norms_1 = []

mha_qkv_linears = []

mha_output_linears = []

layer_norms_2 = []

ffn_linear_1 = []

ffn_linear_2 = []

for i in range(n_layers):

ln1 = nn.LayerNorm(d_model).to(device)

ln1.load_state_dict(loaded_state_dict['layer_norms_1'][i])

layer_norms_1.append(ln1)

qkv_dict = loaded_state_dict['mha_qkv_linears'][i]

has_qkv_bias = 'bias' in qkv_dict

qkv = nn.Linear(d_model, 3 * d_model, bias=has_qkv_bias).to(device)

qkv.load_state_dict(qkv_dict)

mha_qkv_linears.append(qkv)

out_dict = loaded_state_dict['mha_output_linears'][i]

has_out_bias = 'bias' in out_dict

out = nn.Linear(d_model, d_model, bias=has_out_bias).to(device)

out.load_state_dict(out_dict)

mha_output_linears.append(out)

ln2 = nn.LayerNorm(d_model).to(device)

ln2.load_state_dict(loaded_state_dict['layer_norms_2'][i])

layer_norms_2.append(ln2)

ff1_dict = loaded_state_dict['ffn_linear_1'][i]

has_ff1_bias = 'bias' in ff1_dict

ff1 = nn.Linear(d_model, d_ff, bias=has_ff1_bias).to(device)

ff1.load_state_dict(ff1_dict)

ffn_linear_1.append(ff1)

ff2_dict = loaded_state_dict['ffn_linear_2'][i]

has_ff2_bias = 'bias' in ff2_dict

ff2 = nn.Linear(d_ff, d_model, bias=has_ff2_bias).to(device)

ff2.load_state_dict(ff2_dict)

ffn_linear_2.append(ff2)

final_layer_norm = nn.LayerNorm(d_model).to(device)

final_layer_norm.load_state_dict(loaded_state_dict['final_layer_norm'])

output_dict = loaded_state_dict['output_linear_layer']

has_output_bias = 'bias' in output_dict

output_linear_layer = nn.Linear(d_model, vocab_size, bias=has_output_bias).to(device)

output_linear_layer.load_state_dict(output_dict)

print("Multi-modal model components loaded successfully.")使用加载的模型进行推理

加载模型后,咱们可以用它来进行推理。这就像是让模型根据图像和提示生成回答,就像一个超级智能的机器人一样。

python

def generate_with_image(image_path, prompt, max_new_tokens=50):

"""Generate text response for an image and prompt"""

token_embedding_table.eval()

vision_projection_layer.eval()

for i in range(n_layers):

layer_norms_1[i].eval()

mha_qkv_linears[i].eval()

mha_output_linears[i].eval()

layer_norms_2[i].eval()

ffn_linear_1[i].eval()

ffn_linear_2[i].eval()

final_layer_norm.eval()

output_linear_layer.eval()

image = Image.open(image_path).convert('RGB')

img_tensor = image_transforms(image).unsqueeze(0).to(device)

with torch.no_grad():

img_features_raw = vision_model(img_tensor)

img_features_projected = vision_projection_layer(img_features_raw)

img_id = char_to_int[img_token]

prompt_ids = [char_to_int[ch] for ch in prompt]

context_ids = torch.tensor([[img_id] + prompt_ids], dtype=torch.long, device=device)

for _ in range(max_new_tokens):

context_ids = context_ids[:, -block_size:]

# [Generation logic goes here - follow the same steps as in Step 4.2]

# [Logic to get next token]

# [Logic to check for EOS and break]

# [Logic to decode and return the result]结语

通过这篇文章,咱们实现了一个端到端的多模态 Transformer 模型,能够处理图像和文本的组合,并生成有趣的回答。虽然这个实现比较基础,但它展示了如何将视觉和语言信息融合在一起,为更复杂的应用奠定了基础。希望这篇文章能激发你对多模态人工智能的兴趣,让你也能创造出自己的超级智能模型!