💡💡💡本专栏所有程序均经过测试,可成功执行💡💡💡

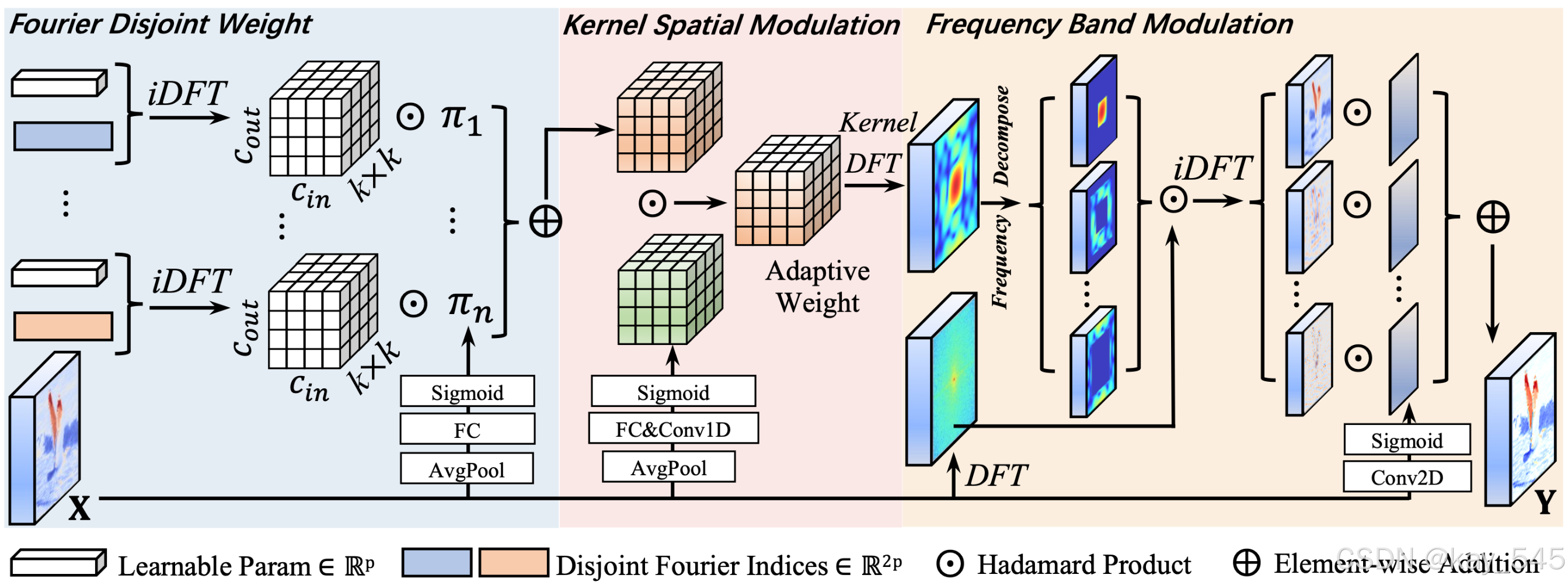

本文给大家带来的教程是将YOLO26的Conv替换为FDConv来提取特征。文章在介绍主要的原理后,将手把手教学如何进行模块的代码添加和修改,并将修改后的完整代码放在文章的最后,方便大家一键运行,小白也可轻松上手实践。以帮助您更好地学习深度学习目标检测YOLO系列的挑战。

专栏地址:YOLO26改进-论文涨点------点击跳转看所有内容,关注不迷路!****

目录

[2. FDConv代码实现](#2. FDConv代码实现)

[2.1 将FDConv添加到YOLO26中](#2.1 将FDConv添加到YOLO26中)

[2.2 更改init.py文件](#2.2 更改init.py文件)

[2.3 添加yaml文件](#2.3 添加yaml文件)

[2.4 在task.py中进行注册](#2.4 在task.py中进行注册)

[2.5 执行程序](#2.5 执行程序)

[3. 完整代码分享](#3. 完整代码分享)

[4. GFLOPs](#4. GFLOPs)

[5. 进阶](#5. 进阶)

1.论文

论文地址: Frequency Dynamic Convolution for Dense Image Prediction

官方代码: 官方代码仓库点击即可跳转

2. FDConv代码实现

2.1 将FDConv添加到YOLO26中

**关键步骤一:**在ultralytics\ultralytics\nn\modules下面新建文件夹models,在文件夹下新建FDConv.py,粘贴下面代码

python

import torch

import torch.nn as nn

import torch.nn.functional as F

from ultralytics.nn.modules.conv import autopad

class StarReLU(nn.Module):

"""

StarReLU: s * relu(x) ** 2 + b

"""

def __init__(self, scale_value=1.0, bias_value=0.0,

scale_learnable=True, bias_learnable=True,

inplace=False):

super().__init__()

self.relu = nn.ReLU(inplace=inplace)

self.scale = nn.Parameter(torch.tensor(scale_value), requires_grad=scale_learnable)

self.bias = nn.Parameter(torch.tensor(bias_value), requires_grad=bias_learnable)

def forward(self, x):

return self.scale * self.relu(x).pow(2) + self.bias

def get_fft2freq(d1, d2, use_rfft=False):

freq_h = torch.fft.fftfreq(d1)

freq_w = torch.fft.rfftfreq(d2) if use_rfft else torch.fft.fftfreq(d2)

grid = torch.stack(torch.meshgrid(freq_h, freq_w), dim=-1)

dist = torch.norm(grid, dim=-1)

flat = dist.view(-1)

_, idx = torch.sort(flat)

if use_rfft:

d2_ = d2 // 2 + 1

else:

d2_ = d2

coords = torch.stack([idx // d2_, idx % d2_], dim=1)

return coords.t(), grid

class KernelSpatialModulation_Global(nn.Module):

def __init__(self, in_planes, out_planes, kernel_size,

reduction=0.0625, kernel_num=4, min_channel=16,

temp=1.0, kernel_temp=None, att_multi=2.0,

ksm_only_kernel_att=False, stride=1,

spatial_freq_decompose=False, act_type='sigmoid'):

super().__init__()

attention_channel = max(int(in_planes * reduction), min_channel)

self.kernel_size = kernel_size

self.temperature = temp

self.kernel_temp = kernel_temp

self.att_multi = att_multi

self.ksm_only_kernel_att = ksm_only_kernel_att

self.act_type = act_type

self.fc = nn.Conv2d(in_planes, attention_channel, 1, bias=False)

self.bn = nn.BatchNorm2d(attention_channel)

self.relu = StarReLU()

# Channel

if ksm_only_kernel_att:

self.func_channel = lambda x: 1.0

else:

out_c = in_planes * 2 if spatial_freq_decompose and kernel_size>1 else in_planes

self.channel_fc = nn.Conv2d(attention_channel, out_c, 1)

self.func_channel = self._get_channel

# Filter

if in_planes==out_planes or ksm_only_kernel_att:

self.func_filter = lambda x: 1.0

else:

out_f = out_planes * 2 if spatial_freq_decompose and stride>1 else out_planes

self.filter_fc = nn.Conv2d(attention_channel, out_f, 1, stride=stride)

self.func_filter = self._get_filter

# Spatial

if kernel_size==1 or ksm_only_kernel_att:

self.func_spatial = lambda x: 1.0

else:

self.spatial_fc = nn.Conv2d(attention_channel, kernel_size*kernel_size,1)

self.func_spatial = self._get_spatial

# Kernel mixing

if kernel_num==1:

self.func_kernel = lambda x: 1.0

else:

self.kernel_fc = nn.Conv2d(attention_channel, kernel_num,1)

self.func_kernel = self._get_kernel

def _get_channel(self, x):

att = self.channel_fc(x).view(x.size(0),1,1,-1,1,1)

if self.act_type=='sigmoid': return torch.sigmoid(att/self.temperature)*self.att_multi

elif self.act_type=='tanh': return 1+torch.tanh(att/self.temperature)

raise

def _get_filter(self,x):

att = self.filter_fc(x).view(x.size(0),1,-1,1,1,1)

if self.act_type=='sigmoid': return torch.sigmoid(att/self.temperature)*self.att_multi

elif self.act_type=='tanh': return 1+torch.tanh(att/self.temperature)

raise

def _get_spatial(self,x):

att = self.spatial_fc(x).view(x.size(0),1,1,1,self.kernel_size,self.kernel_size)

if self.act_type=='sigmoid': return torch.sigmoid(att/self.temperature)*self.att_multi

elif self.act_type=='tanh': return 1+torch.tanh(att/self.temperature)

raise

def _get_kernel(self,x):

att = self.kernel_fc(x).view(x.size(0),-1,1,1,1,1)

return F.softmax(att/self.kernel_temp,dim=1)

def forward(self,x):

g = self.relu(self.bn(self.fc(x)))

return self.func_channel(g), self.func_filter(g), self.func_spatial(g), self.func_kernel(g)

class KernelSpatialModulation_Local(nn.Module):

def __init__(self, channel, kernel_num=1, out_n=1, k_size=3, use_global=False):

super().__init__()

self.kn, self.out_n, self.use_global = kernel_num, out_n, use_global

self.conv1d = nn.Conv1d(1, kernel_num*out_n, k_size, padding=(k_size-1)//2)

self.norm = nn.LayerNorm(channel)

if use_global:

self.complex_w = nn.Parameter(torch.randn(1,channel//2+1,2)*1e-6)

def forward(self,x):

y = x.mean(-1,keepdim=True).transpose(-1,-2)

if self.use_global:

fft = torch.fft.rfft(y.float(),dim=-1)

real = fft.real*self.complex_w[...,0]

imag = fft.imag*self.complex_w[...,1]

y = y+torch.fft.irfft(torch.complex(real,imag),dim=-1)

y = self.norm(y)

att = self.conv1d(y).reshape(y.size(0),self.kn,self.out_n,-1)

return att.permute(0,1,3,2)

class FrequencyBandModulation(nn.Module):

def __init__(self,in_channels,k_list=[2,4,8],lowfreq_att=False,fs_feat='feat',act='sigmoid',spatial='conv',spatial_group=1,spatial_kernel=3,init='zero'):

super().__init__()

self.k_list,self.lowfreq_att,self.act= k_list,lowfreq_att,act

# n_freq_groups used here

self.spatial_group = spatial_group

self.convs = nn.ModuleList()

for _ in range(len(k_list)+int(lowfreq_att)):

conv=nn.Conv2d(in_channels, self.spatial_group, spatial_kernel, padding=spatial_kernel//2, groups=self.spatial_group)

if init=='zero': nn.init.normal_(conv.weight, std=1e-6); nn.init.zeros_(conv.bias)

self.convs.append(conv)

def forward(self,x):

b,c,h,w=x.shape

x_fft=torch.fft.rfft2(x,norm='ortho')

out=0

for i,f in enumerate(self.k_list):

mask=torch.zeros_like(x_fft[:,:1])

coords,_=get_fft2freq(h,w,use_rfft=True)

mask[:,:,coords.max(-1)[0]<0.5/f]=1

low=torch.fft.irfft2(x_fft*mask,s=(h,w),norm='ortho')

high=x-low; x=low

w_att=self.convs[i](x)

w_att = (w_att.sigmoid()*2) if self.act=='sigmoid' else (1+w_att.tanh())

out+= (w_att.reshape(b,self.spatial_group,-1,h,w)*high.reshape(b,self.spatial_group,-1,h,w)).reshape(b,-1,h,w)

if self.lowfreq_att:

w_att=self.convs[-1](x)

w_att=(w_att.sigmoid()*2) if self.act=='sigmoid' else (1+w_att.tanh())

out+= (w_att.reshape(b,self.spatial_group,-1,h,w)*x.reshape(b,self.spatial_group,-1,h,w)).reshape(b,-1,h,w)

else:

out+=x

return out

class FDConv(nn.Conv2d):

def __init__(self, in_channels, out_channels, kernel_size,

stride=1, padding=None, dilation=1, groups=1, bias=True,

reduction=0.0625, kernel_num=4, n_freq_groups=1,

kernel_temp=1.0, temp=None, att_multi=2.0,

param_ratio=1, param_reduction=1.0,

ksm_only_kernel_att=False, spatial_freq_decompose=False,

use_ksm_local=True, ksm_local_act='sigmoid', ksm_global_act='sigmoid',

fbm_cfg=None):

padding = (kernel_size-1)//2 if padding is None else padding

super().__init__(in_channels, out_channels, kernel_size,

stride, padding, dilation, groups, bias)

self.kernel_num = kernel_num

self.att_multi = att_multi

self.param_ratio = param_ratio

self.param_reduction = param_reduction

self.n_freq_groups = n_freq_groups

# 全域調製模組

if temp is None:

temp = kernel_temp

self.KSMG = KernelSpatialModulation_Global(

in_planes=in_channels,

out_planes=out_channels,

kernel_size=kernel_size,

reduction=reduction,

kernel_num=kernel_num,

temp=temp,

kernel_temp=kernel_temp,

att_multi=att_multi,

ksm_only_kernel_att=ksm_only_kernel_att,

spatial_freq_decompose=spatial_freq_decompose,

act_type=ksm_global_act

)

# 局部調製模組

self.use_local = use_ksm_local

if use_ksm_local:

self.KSML = KernelSpatialModulation_Local(

channel=in_channels,

kernel_num=1,

out_n=out_channels * kernel_size * kernel_size

)

# 頻帶調製模組

if fbm_cfg is None:

fbm_cfg = {}

fbm_cfg['spatial_group'] = n_freq_groups

self.FBM = FrequencyBandModulation(in_channels, **fbm_cfg)

# 準備 DFT 權重

self._prepare_dft()

def _prepare_dft(self):

# 1) 計算 out×in 大矩陣的 DFT

d1, d2 = self.out_channels, self.in_channels

k = self.kernel_size[0]

flat = self.weight.permute(0,2,1,3).reshape(d1*k, d2*k)

freq = torch.fft.rfft2(flat, dim=(0,1))

w = torch.stack([freq.real, freq.imag], dim=-1) # shape = (d1*k, d2*k, 2)

# 2) 將它做成可訓練參數

# shape → (param_ratio, d1*k, d2*k, 2)

self.dft_weight = nn.Parameter(

w[None].repeat(self.param_ratio, 1, 1, 1) // (min(d1, d2)//2)

)

# **不要再刪除 self.weight**

# if self.bias is not None:

# self.bias = nn.Parameter(torch.zeros_like(self.bias))

def forward(self, x):

# fallback to plain conv if小channel 或 非1/3kernel

if min(self.in_channels, self.out_channels) <= 16 or self.kernel_size[0] not in [1,3]:

# 這裡 super().forward 會呼叫 nn.Conv2d.forward,必須有 self.weight

return super().forward(x)

b, _, h, w = x.shape

# 計算各種 attention map

ch, fl, sp, kn = self.KSMG(F.adaptive_avg_pool2d(x, 1))

hr = 1

if self.use_local:

local = self.KSML(F.adaptive_avg_pool2d(x, 1))

hr = (local.sigmoid() * self.att_multi) if self.KSML.use_global else (1 + local.tanh())

# 建 DFT map 並還原到 spatial domain

coords, _ = get_fft2freq(

self.out_channels * self.kernel_size[0],

self.in_channels * self.kernel_size[1],

use_rfft=True

)

DFTmap = torch.zeros(

(b, self.out_channels * self.kernel_size[0], self.in_channels * self.kernel_size[1]//2+1, 2),

device=x.device

)

for i in range(self.param_ratio):

w = self.dft_weight[i][coords[0], coords[1]][None]

DFTmap[...,0] += w[...,0] * kn[:, i]

DFTmap[...,1] += w[...,1] * kn[:, i]

adapt = torch.fft.irfft2(torch.view_as_complex(DFTmap), dim=(1,2))

adapt = adapt.reshape(

b, 1, self.out_channels, self.kernel_size[0],

self.in_channels, self.kernel_size[1]

).permute(0,1,2,4,3,5)

# 頻帶模組

x_fbm = self.FBM(x)

x_in = x_fbm if hasattr(self, 'FBM') else x

# 聚合所有 attention

agg = sp * ch * fl * adapt * hr

agg = agg.sum(1).view(

-1,

self.in_channels // self.groups,

self.kernel_size[0],

self.kernel_size[1]

)

# Depthwise batch conv trick

xcat = x_in.reshape(1, -1, h, w)

out = F.conv2d(

xcat, agg, None,

self.stride, self.padding,

self.dilation, self.groups * b

)

out = out.view(b, self.out_channels, out.size(-2), out.size(-1))

if self.bias is not None:

out += self.bias.view(1, -1, 1, 1)

return out

class FDConv_cfg(nn.Module):

"""Standard convolution Block with Batch Norm and Activation, using FDConv."""

default_act = nn.SiLU() # Default activation

def __init__(self, c1, c2, k=1, s=1, freq_groups=1, p=None, g=1, d=1, act=True):

"""

Args:

c1 (int): input channels

c2 (int): output channels

k (int): kernel size

s (int): stride

p (int or None): padding; if None uses autopad

g (int): groups

d (int): dilation

act (bool or nn.Module): activation to use (True→default_act, False→Identity, or custom)

"""

super().__init__()

padding = autopad(k, p, d)

# replace nn.Conv2d with FDConv

self.conv = FDConv(

in_channels=c1,

out_channels=c2,

kernel_size=k,

stride=s,

n_freq_groups=freq_groups,

padding=padding,

dilation=d,

groups=g,

bias=False

)

self.bn = nn.BatchNorm2d(c2)

self.act = (

self.default_act

if act is True

else (act if isinstance(act, nn.Module) else nn.Identity())

)

def forward(self, x):

"""Conv → BatchNorm → Activation"""

x = self.conv(x)

x = self.bn(x)

return self.act(x)

def forward_fuse(self, x):

"""Conv only (for fused deployments) → Activation"""

return self.act(self.conv(x))

if __name__ == "__main__":

# Usage example:

fd = FDConv(in_channels=64, out_channels=64, kernel_size=3, n_freq_groups=64)

print(fd)

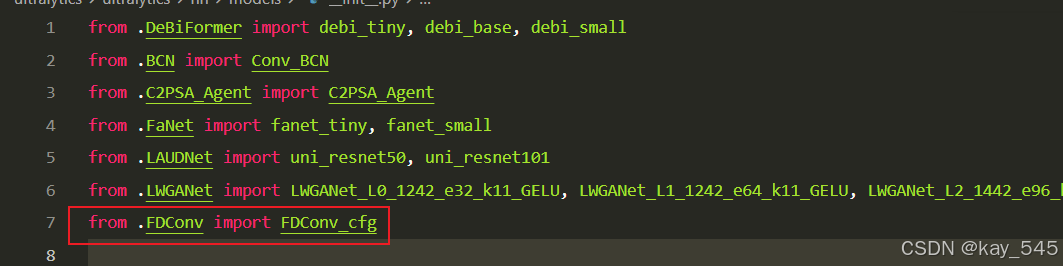

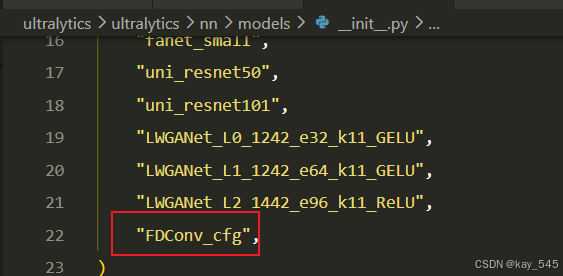

2.2 更改init.py文件

**关键步骤二:**在文件ultralytics\ultralytics\nn\modules\models文件夹下新建__init__.py文件,先导入函数

然后在下面的__all__中声明函数

2.3 添加yaml文件

**关键步骤三:**在/ultralytics/ultralytics/cfg/models/26下面新建文件yolo26_FDConv.yaml文件,粘贴下面的内容

- 目标检测

python

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs

# Model docs: https://docs.ultralytics.com/models/yolo26

# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters

nc: 80 # number of classes

end2end: True # whether to use end-to-end mode

reg_max: 1 # DFL bins

scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'

# [depth, width, max_channels]

n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs

s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs

m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs

l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs

x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone

backbone:

# [from, repeats, module, args]

- [-1, 1, FDConv_cfg, [64, 3, 2, 3]] # 0-P1/2

- [-1, 1, FDConv_cfg, [128, 3, 2, 16]] # 1-P2/4

- [-1, 2, C3k2, [256, False, 0.25]]

- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- [-1, 2, C3k2, [512, False, 0.25]]

- [-1, 1, Conv, [512, 3, 2]] # 5-P4/16

- [-1, 2, C3k2, [512, True]]

- [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32

- [-1, 2, C3k2, [1024, True]]

- [-1, 1, SPPF, [1024, 5]] # 9

- [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head

head:

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 6], 1, Concat, [1]] # cat backbone P4

- [-1, 2, C3k2, [512, False]] # 13

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 4], 1, Concat, [1]] # cat backbone P3

- [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

- [-1, 1, Conv, [256, 3, 2]]

- [[-1, 13], 1, Concat, [1]] # cat head P4

- [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

- [-1, 1, Conv, [512, 3, 2]]

- [[-1, 10], 1, Concat, [1]] # cat head P5

- [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

- [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)- 语义分割

python

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs

# Model docs: https://docs.ultralytics.com/models/yolo26

# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters

nc: 80 # number of classes

end2end: True # whether to use end-to-end mode

reg_max: 1 # DFL bins

scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'

# [depth, width, max_channels]

n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs

s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs

m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs

l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs

x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone

backbone:

# [from, repeats, module, args]

- [-1, 1, FDConv_cfg, [64, 3, 2, 3]] # 0-P1/2

- [-1, 1, FDConv_cfg, [128, 3, 2, 16]] # 1-P2/4

- [-1, 2, C3k2, [256, False, 0.25]]

- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- [-1, 2, C3k2, [512, False, 0.25]]

- [-1, 1, Conv, [512, 3, 2]] # 5-P4/16

- [-1, 2, C3k2, [512, True]]

- [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32

- [-1, 2, C3k2, [1024, True]]

- [-1, 1, SPPF, [1024, 5]] # 9

- [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head

head:

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 6], 1, Concat, [1]] # cat backbone P4

- [-1, 2, C3k2, [512, False]] # 13

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 4], 1, Concat, [1]] # cat backbone P3

- [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

- [-1, 1, Conv, [256, 3, 2]]

- [[-1, 13], 1, Concat, [1]] # cat head P4

- [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

- [-1, 1, Conv, [512, 3, 2]]

- [[-1, 10], 1, Concat, [1]] # cat head P5

- [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

- [[16, 19, 22], 1, Segment, [nc, 32, 256]]- 旋转目标检测

python

# Ultralytics 🚀 AGPL-3.0 License - https://ultralytics.com/license

# Ultralytics YOLO26 object detection model with P3/8 - P5/32 outputs

# Model docs: https://docs.ultralytics.com/models/yolo26

# Task docs: https://docs.ultralytics.com/tasks/detect

# Parameters

nc: 80 # number of classes

end2end: True # whether to use end-to-end mode

reg_max: 1 # DFL bins

scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'

# [depth, width, max_channels]

n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs

s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs

m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs

l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs

x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs

# YOLO26n backbone

backbone:

# [from, repeats, module, args]

- [-1, 1, FDConv_cfg, [64, 3, 2, 3]] # 0-P1/2

- [-1, 1, FDConv_cfg, [128, 3, 2, 16]] # 1-P2/4

- [-1, 2, C3k2, [256, False, 0.25]]

- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- [-1, 2, C3k2, [512, False, 0.25]]

- [-1, 1, Conv, [512, 3, 2]] # 5-P4/16

- [-1, 2, C3k2, [512, True]]

- [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32

- [-1, 2, C3k2, [1024, True]]

- [-1, 1, SPPF, [1024, 5]] # 9

- [-1, 2, C2PSA, [1024]] # 10

# YOLO26n head

head:

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 6], 1, Concat, [1]] # cat backbone P4

- [-1, 2, C3k2, [512, False]] # 13

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 4], 1, Concat, [1]] # cat backbone P3

- [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

- [-1, 1, Conv, [256, 3, 2]]

- [[-1, 13], 1, Concat, [1]] # cat head P4

- [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

- [-1, 1, Conv, [512, 3, 2]]

- [[-1, 10], 1, Concat, [1]] # cat head P5

- [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

- [[16, 19, 22], 1, OBB, [nc, 1]]温馨提示:本文只是对yolo26基础上添加模块,如果要对yolo26 n/l/m/x进行添加则只需要指定对应的depth_multiple 和 width_multiple

python

end2end: True # whether to use end-to-end mode

reg_max: 1 # DFL bins

scales: # model compound scaling constants, i.e. 'model=yolo26n.yaml' will call yolo26.yaml with scale 'n'

# [depth, width, max_channels]

n: [0.50, 0.25, 1024] # summary: 260 layers, 2,572,280 parameters, 2,572,280 gradients, 6.1 GFLOPs

s: [0.50, 0.50, 1024] # summary: 260 layers, 10,009,784 parameters, 10,009,784 gradients, 22.8 GFLOPs

m: [0.50, 1.00, 512] # summary: 280 layers, 21,896,248 parameters, 21,896,248 gradients, 75.4 GFLOPs

l: [1.00, 1.00, 512] # summary: 392 layers, 26,299,704 parameters, 26,299,704 gradients, 93.8 GFLOPs

x: [1.00, 1.50, 512] # summary: 392 layers, 58,993,368 parameters, 58,993,368 gradients, 209.5 GFLOPs2.4 在task.py中进行注册

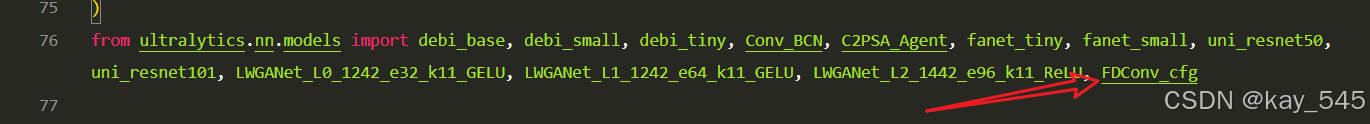

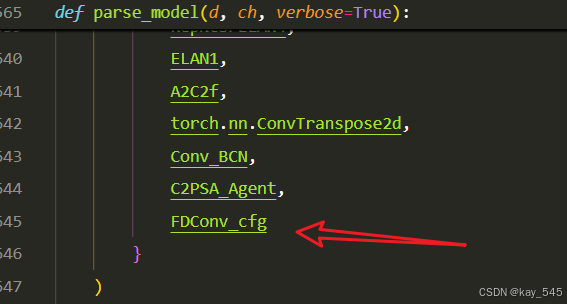

**关键步骤四:**在parse_model函数中进行注册,添加FDConv

先在task.py导入函数

然后在task.py文件下找到parse_model这个函数,如下图,添加FDConv

2.5 执行程序

关键步骤五: 在ultralytics文件中新建train.py,将model的参数路径设置为yolo26_FDConv .yaml的路径即可 【注意是在外边的Ultralytics下新建train.py】

python

from ultralytics import YOLO

import warnings

warnings.filterwarnings('ignore')

from pathlib import Path

if __name__ == '__main__':

# 加载模型

model = YOLO("ultralytics/cfg/26/yolo26.yaml") # 你要选择的模型yaml文件地址

# Use the model

results = model.train(data=r"你的数据集的yaml文件地址",

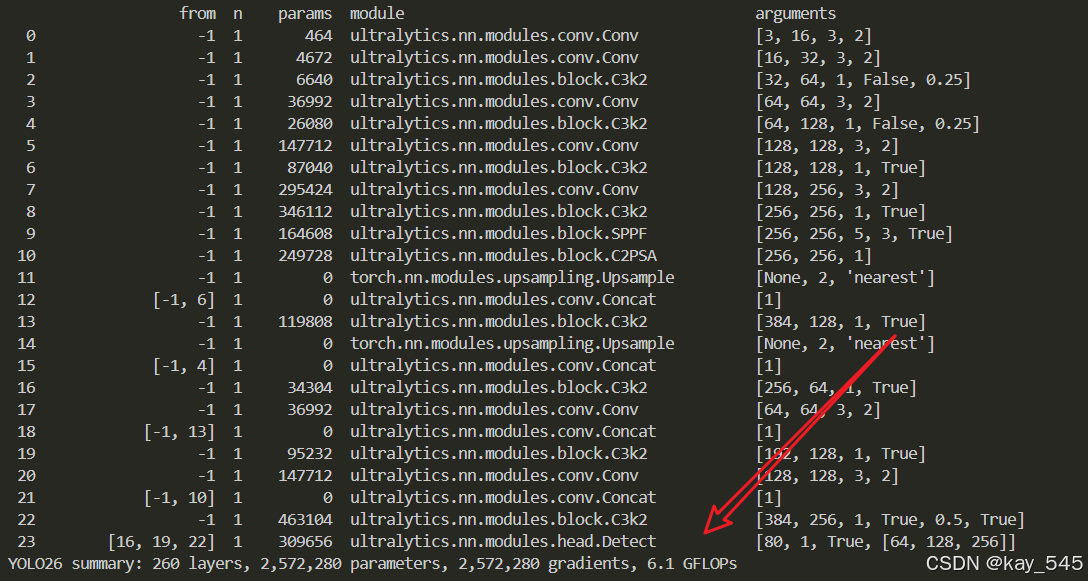

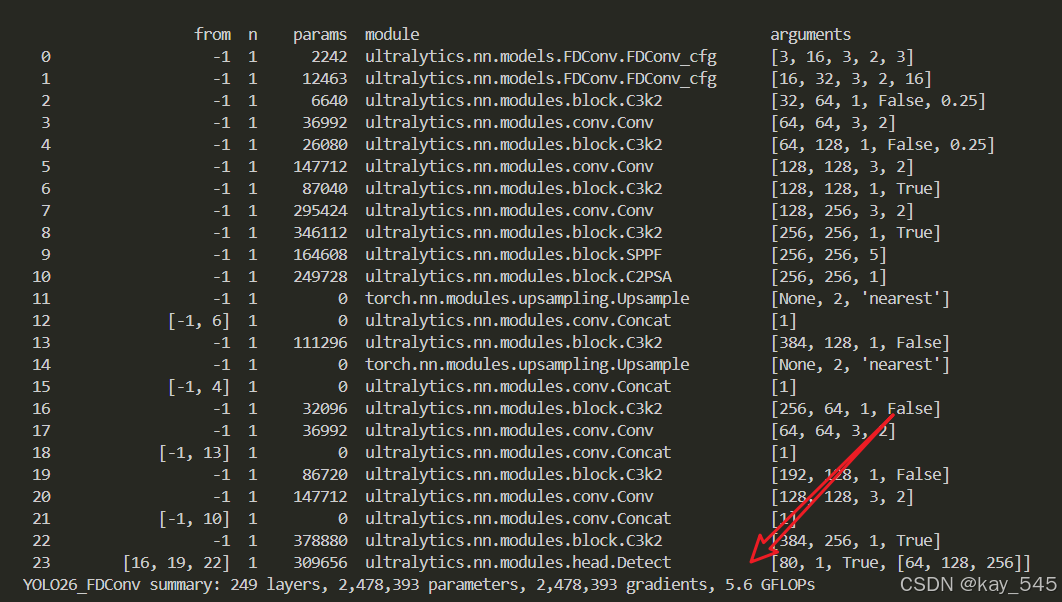

epochs=100, batch=16, imgsz=640, workers=4, name=Path(model.cfg).stem) # 训练模型🚀运行程序,如果出现下面的内容则说明添加成功🚀

python

from n params module arguments

0 -1 1 2242 ultralytics.nn.models.FDConv.FDConv_cfg [3, 16, 3, 2, 3]

1 -1 1 12463 ultralytics.nn.models.FDConv.FDConv_cfg [16, 32, 3, 2, 16]

2 -1 1 6640 ultralytics.nn.modules.block.C3k2 [32, 64, 1, False, 0.25]

3 -1 1 36992 ultralytics.nn.modules.conv.Conv [64, 64, 3, 2]

4 -1 1 26080 ultralytics.nn.modules.block.C3k2 [64, 128, 1, False, 0.25]

5 -1 1 147712 ultralytics.nn.modules.conv.Conv [128, 128, 3, 2]

6 -1 1 87040 ultralytics.nn.modules.block.C3k2 [128, 128, 1, True]

7 -1 1 295424 ultralytics.nn.modules.conv.Conv [128, 256, 3, 2]

8 -1 1 346112 ultralytics.nn.modules.block.C3k2 [256, 256, 1, True]

9 -1 1 164608 ultralytics.nn.modules.block.SPPF [256, 256, 5]

10 -1 1 249728 ultralytics.nn.modules.block.C2PSA [256, 256, 1]

11 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

12 [-1, 6] 1 0 ultralytics.nn.modules.conv.Concat [1]

13 -1 1 111296 ultralytics.nn.modules.block.C3k2 [384, 128, 1, False]

14 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

15 [-1, 4] 1 0 ultralytics.nn.modules.conv.Concat [1]

16 -1 1 32096 ultralytics.nn.modules.block.C3k2 [256, 64, 1, False]

17 -1 1 36992 ultralytics.nn.modules.conv.Conv [64, 64, 3, 2]

18 [-1, 13] 1 0 ultralytics.nn.modules.conv.Concat [1]

19 -1 1 86720 ultralytics.nn.modules.block.C3k2 [192, 128, 1, False]

20 -1 1 147712 ultralytics.nn.modules.conv.Conv [128, 128, 3, 2]

21 [-1, 10] 1 0 ultralytics.nn.modules.conv.Concat [1]

22 -1 1 378880 ultralytics.nn.modules.block.C3k2 [384, 256, 1, True]

23 [16, 19, 22] 1 309656 ultralytics.nn.modules.head.Detect [80, 1, True, [64, 128, 256]]

YOLO26_FDConv summary: 249 layers, 2,478,393 parameters, 2,478,393 gradients, 5.6 GFLOPs3. 完整代码分享

++主页侧边++

4. GFLOPs

关于GFLOPs的计算方式可以查看 :百面算法工程师 | 卷积基础知识------Convolution

未改进的YOLO26n GFLOPs

改进后的GFLOPs

5. 进阶

可以与其他的注意力机制或者损失函数等结合,进一步提升检测效果

6.总结

通过以上的改进方法,我们成功提升了模型的表现。这只是一个开始,未来还有更多优化和技术深挖的空间。在这里,我想隆重向大家推荐我的专栏------<专栏地址: YOLO26改进-论文涨点------点击跳转看所有内容,关注不迷路!>。这个专栏专注于前沿的深度学习技术,特别是目标检测领域的最新进展,不仅包含对YOLO26的深入解析和改进策略,还会定期更新来自各大顶会(如CVPR、NeurIPS等)的论文复现和实战分享。

为什么订阅我的专栏? ------专栏地址:YOLO26改进-论文涨点------点击跳转看所有内容,关注不迷路!****

-

前沿技术解读:专栏不仅限于YOLO系列的改进,还会涵盖各类主流与新兴网络的最新研究成果,帮助你紧跟技术潮流。

-

详尽的实践分享 :所有内容实践性也极强。每次更新都会附带代码和具体的改进步骤,保证每位读者都能迅速上手。

-

问题互动与答疑 :订阅我的专栏后,你将可以随时向我提问,获取及时的答疑。

-

实时更新,紧跟行业动态:不定期发布来自全球顶会的最新研究方向和复现实验报告,让你时刻走在技术前沿。

专栏适合人群:

-

对目标检测、YOLO系列网络有深厚兴趣的同学

-

希望在用YOLO算法写论文的同学

-

对YOLO算法感兴趣的同学等