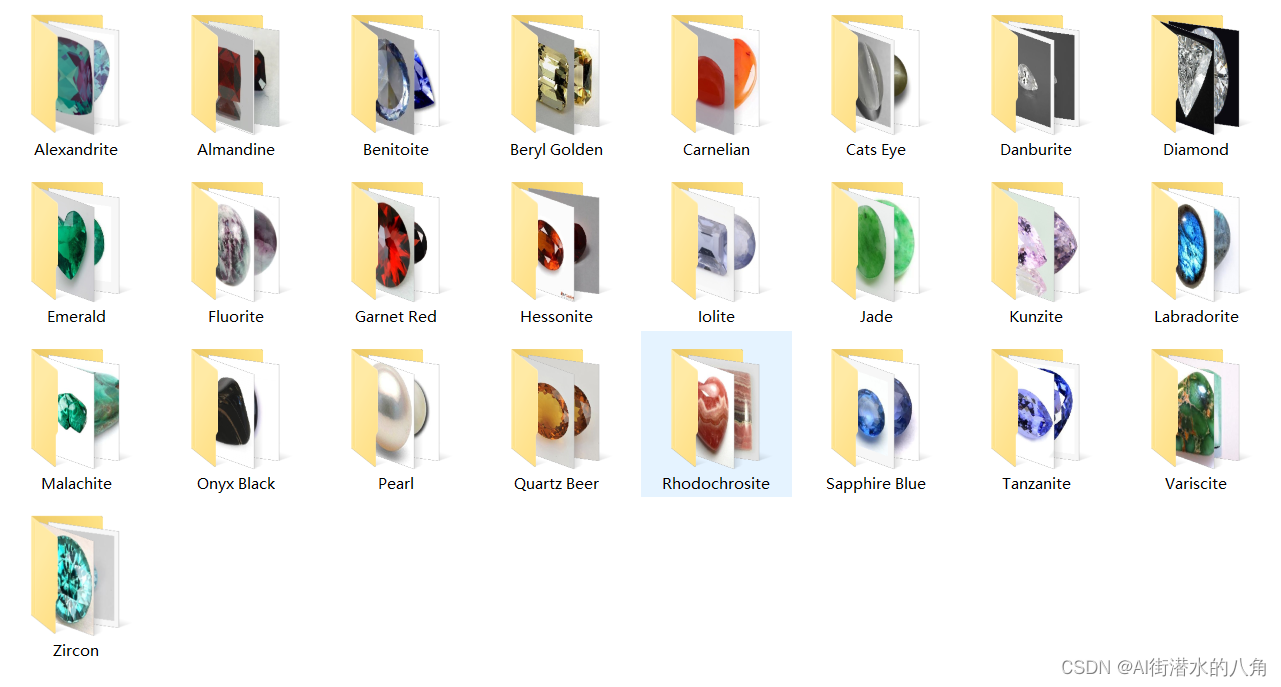

Step 1: Prepare the data

The dataset covers 25 gemstone classes, 800 images in total. The class indices are:

{ "0": "Alexandrite","1": "Almandine","2": "Benitoite","3": "Beryl Golden","4": "Carnelian", "5": "Cats Eye","6": "Danburite", "7": "Diamond","8": "Emerald","9": "Fluorite","10": "Garnet Red","11": "Hessonite","12": "Iolite","13": "Jade","14": "Kunzite","15": "Labradorite","16": "Malachite","17": "Onyx Black","18": "Pearl","19": "Quartz Beer","20": "Rhodochrosite","21": "Sapphire Blue","22": "Tanzanite","23": "Variscite","24": "Zircon"}
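The index order above is simply the alphabetical order of the 25 class names, which is what you get by sorting one folder name per class. The helper `read_split_data` that the training script uses is not shown in the article. Below is a minimal, hypothetical sketch of what such a step can look like, assuming one list of image files per class. It builds the name-to-index mapping by sorting the class names and splits each class separately with a fixed seed, so that every gemstone appears in both the training and the validation set. The names `build_class_indices` and `split_per_class` and the default `val_rate=0.2` are illustrative assumptions, not the project's code.

```python
import json
import random


def build_class_indices(class_names):
    """Map class (folder) names to integer labels in alphabetical order."""
    return {name: i for i, name in enumerate(sorted(class_names))}


def split_per_class(files_by_class, val_rate=0.2, seed=0):
    """Hypothetical split: hold out val_rate of each class's files for validation."""
    rng = random.Random(seed)  # fixed seed -> reproducible split
    class_indices = build_class_indices(files_by_class)  # sorted() on a dict sorts its keys
    train, val = [], []
    for name, files in files_by_class.items():
        label = class_indices[name]
        files = sorted(files)  # deterministic order before sampling
        n_val = int(len(files) * val_rate)
        val_files = set(rng.sample(files, n_val))
        for f in files:
            (val if f in val_files else train).append((f, label))
    return train, val, class_indices


# Toy example with 3 of the 25 classes and 10 fake file names each
files = {c: [f"{c}/{i}.jpg" for i in range(10)] for c in ["Zircon", "Alexandrite", "Diamond"]}
train, val, class_indices = split_per_class(files)
# Index -> name JSON in the same shape as the mapping above
print(json.dumps({str(v): k for k, v in class_indices.items()}))
# → {"0": "Alexandrite", "1": "Diamond", "2": "Zircon"}
```

Run with all 25 class names, the sorted order reproduces exactly the indices listed above (0 = Alexandrite, ..., 24 = Zircon).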

Step 2: Build the model

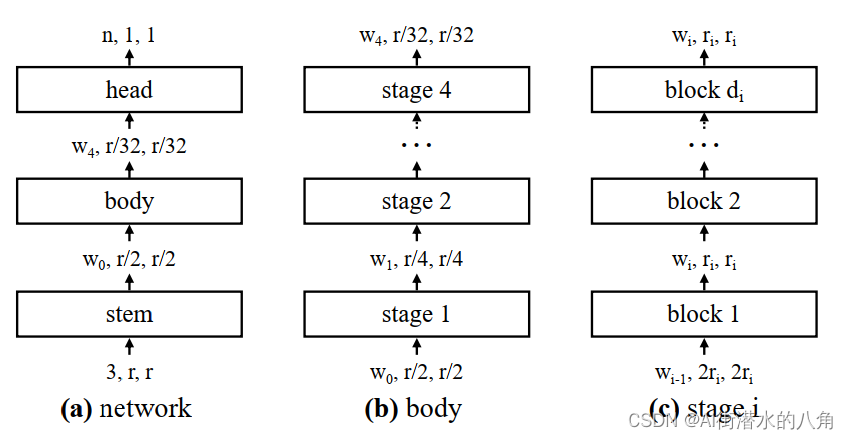

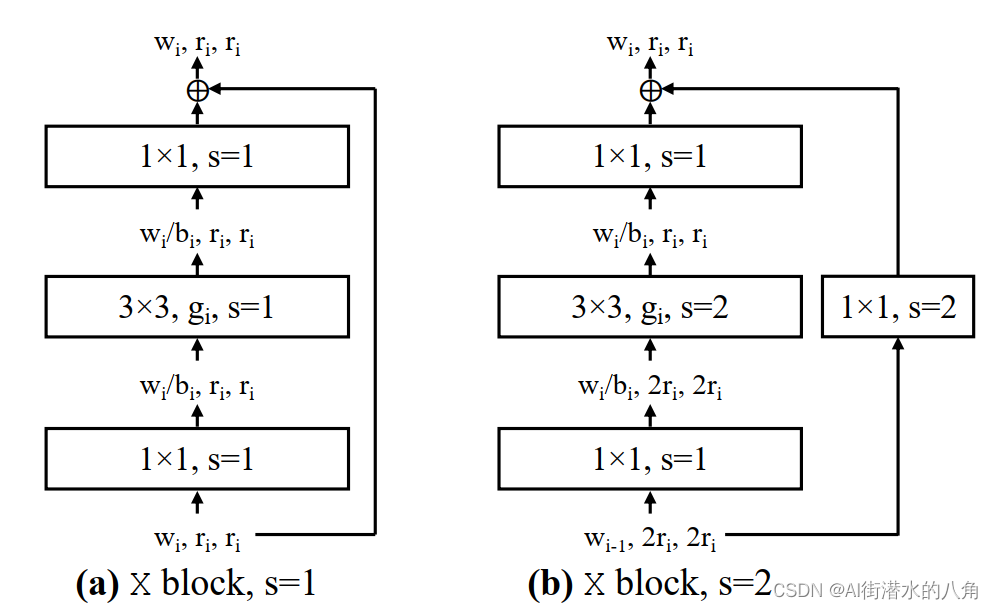

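This article uses a RegNet network (from the paper "Designing Network Design Spaces", Radosavovic et al., 2020). Its principle is summarized below: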

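The paper proposes a new network design paradigm: instead of focusing on designing individual network instances, it designs network design spaces. The overall process resembles classic manual network design, but it is raised to the level of design spaces. Using this methodology, the authors explore the structural aspects of network design and arrive at a low-dimensional design space made up of simple, regular networks, which they call RegNet. The RegNet design space provides simple, fast networks across a wide range of flop regimes. Under comparable training settings and flops, RegNet models outperform EfficientNet while being up to 5× faster on GPUs.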
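To make "simple, regular networks" concrete: in the paper, a RegNet is described by a handful of parameters, namely an initial width `w_0`, a slope `w_a`, a width multiplier `w_m` and a depth `d`. Block widths start from the linear rule `u_j = w_0 + w_a * j`, which is then quantized to powers of `w_m` so that consecutive blocks with equal width form a stage. The sketch below implements that rule in plain Python. Rounding widths to a multiple of `q = 8` and the parameter values `w_0=48, w_a=27.89, w_m=2.09, d=16` follow my understanding of the pycls reference configuration for RegNetY-400MF (the default `--model-name` in the training script); treat them as assumptions and check them against the project's `model.py`. The function name `regnet_stages` is hypothetical, and the group-width adjustment done in the reference implementation is omitted.

```python
import math


def regnet_stages(w_0, w_a, w_m, depth, q=8):
    """Return (stage_widths, stage_depths) from RegNet's quantized linear width rule."""
    assert w_a >= 0 and w_0 > 0 and w_m > 1 and w_0 % q == 0
    widths = []
    for j in range(depth):
        u_j = w_0 + w_a * j                                 # linear width for block j
        s_j = round(math.log(u_j / w_0) / math.log(w_m))   # quantize the exponent
        w_j = w_0 * w_m ** s_j                              # piecewise-constant width
        widths.append(int(round(w_j / q)) * q)              # round to a multiple of q
    # Consecutive blocks with the same width form one stage
    stage_widths, stage_depths = [], []
    for w in widths:
        if stage_widths and stage_widths[-1] == w:
            stage_depths[-1] += 1
        else:
            stage_widths.append(w)
            stage_depths.append(1)
    return stage_widths, stage_depths


# Assumed RegNetY-400MF parameters (pycls reference config)
print(regnet_stages(w_0=48, w_a=27.89, w_m=2.09, depth=16))
# → ([48, 104, 208, 440], [1, 3, 6, 6])
```

Four parameters therefore fix the widths and depths of all four stages, which is what keeps the design space low-dimensional.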

Step 3: Training code

1) Loss function: cross-entropy loss

2) Training code:

python

import os

import math

import argparse

import torch

import torch.optim as optim

from torch.utils.tensorboard import SummaryWriter

from torchvision import transforms

import torch.optim.lr_scheduler as lr_scheduler

from model import create_regnet

from my_dataset import MyDataSet

from utils import read_split_data, train_one_epoch, evaluate

def main(args):

device = torch.device(args.device if torch.cuda.is_available() else "cpu")

print(args)

print('Start Tensorboard with "tensorboard --logdir=runs", view at http://localhost:6006/')

tb_writer = SummaryWriter()

if os.path.exists("./weights") is False:

os.makedirs("./weights")

train_images_path, train_images_label, val_images_path, val_images_label = read_split_data(args.data_path)

data_transform = {

"train": transforms.Compose([transforms.RandomResizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])]),

"val": transforms.Compose([transforms.Resize(256),

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])])}

# 实例化训练数据集

train_dataset = MyDataSet(images_path=train_images_path,

images_class=train_images_label,

transform=data_transform["train"])

# 实例化验证数据集

val_dataset = MyDataSet(images_path=val_images_path,

images_class=val_images_label,

transform=data_transform["val"])

batch_size = args.batch_size

nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8]) # number of workers

print('Using {} dataloader workers every process'.format(nw))

train_loader = torch.utils.data.DataLoader(train_dataset,

batch_size=batch_size,

shuffle=True,

pin_memory=True,

num_workers=nw,

collate_fn=train_dataset.collate_fn)

val_loader = torch.utils.data.DataLoader(val_dataset,

batch_size=batch_size,

shuffle=False,

pin_memory=True,

num_workers=nw,

collate_fn=val_dataset.collate_fn)

# 如果存在预训练权重则载入

model = create_regnet(model_name=args.model_name,

num_classes=args.num_classes).to(device)

# print(model)

if args.weights != "":

if os.path.exists(args.weights):

weights_dict = torch.load(args.weights, map_location=device)

load_weights_dict = {k: v for k, v in weights_dict.items()

if model.state_dict()[k].numel() == v.numel()}

print(model.load_state_dict(load_weights_dict, strict=False))

else:

raise FileNotFoundError("not found weights file: {}".format(args.weights))

# 是否冻结权重

if args.freeze_layers:

for name, para in model.named_parameters():

# 除最后的全连接层外,其他权重全部冻结

if "head" not in name:

para.requires_grad_(False)

else:

print("train {}".format(name))

pg = [p for p in model.parameters() if p.requires_grad]

optimizer = optim.SGD(pg, lr=args.lr, momentum=0.9, weight_decay=5E-5)

# Scheduler https://arxiv.org/pdf/1812.01187.pdf

lf = lambda x: ((1 + math.cos(x * math.pi / args.epochs)) / 2) * (1 - args.lrf) + args.lrf # cosine

scheduler = lr_scheduler.LambdaLR(optimizer, lr_lambda=lf)

for epoch in range(args.epochs):

# train

mean_loss = train_one_epoch(model=model,

optimizer=optimizer,

data_loader=train_loader,

device=device,

epoch=epoch)

scheduler.step()

# validate

acc = evaluate(model=model,

data_loader=val_loader,

device=device)

print("[epoch {}] accuracy: {}".format(epoch, round(acc, 3)))

tags = ["loss", "accuracy", "learning_rate"]

tb_writer.add_scalar(tags[0], mean_loss, epoch)

tb_writer.add_scalar(tags[1], acc, epoch)

tb_writer.add_scalar(tags[2], optimizer.param_groups[0]["lr"], epoch)

torch.save(model.state_dict(), "./weights/model-{}.pth".format(epoch))

if __name__ == '__main__':

parser = argparse.ArgumentParser()

parser.add_argument('--num_classes', type=int, default=25)

parser.add_argument('--epochs', type=int, default=100)

parser.add_argument('--batch-size', type=int, default=4)

parser.add_argument('--lr', type=float, default=0.001)

parser.add_argument('--lrf', type=float, default=0.01)

# 数据集所在根目录

# https://storage.googleapis.com/download.tensorflow.org/example_images/flower_photos.tgz

parser.add_argument('--data-path', type=str,

default=r"G:\demo\data\gemstone\archive_train")

parser.add_argument('--model-name', default='RegNetY_400MF', help='create model name')

# 预训练权重下载地址

# 链接: https://pan.baidu.com/s/1XTo3walj9ai7ZhWz7jh-YA 密码: 8lmu

parser.add_argument('--weights', type=str, default='regnety_400mf.pth',

help='initial weights path')

parser.add_argument('--freeze-layers', type=bool, default=False)

parser.add_argument('--device', default='cuda:0', help='device id (i.e. 0 or 0,1 or cpu)')

opt = parser.parse_args()

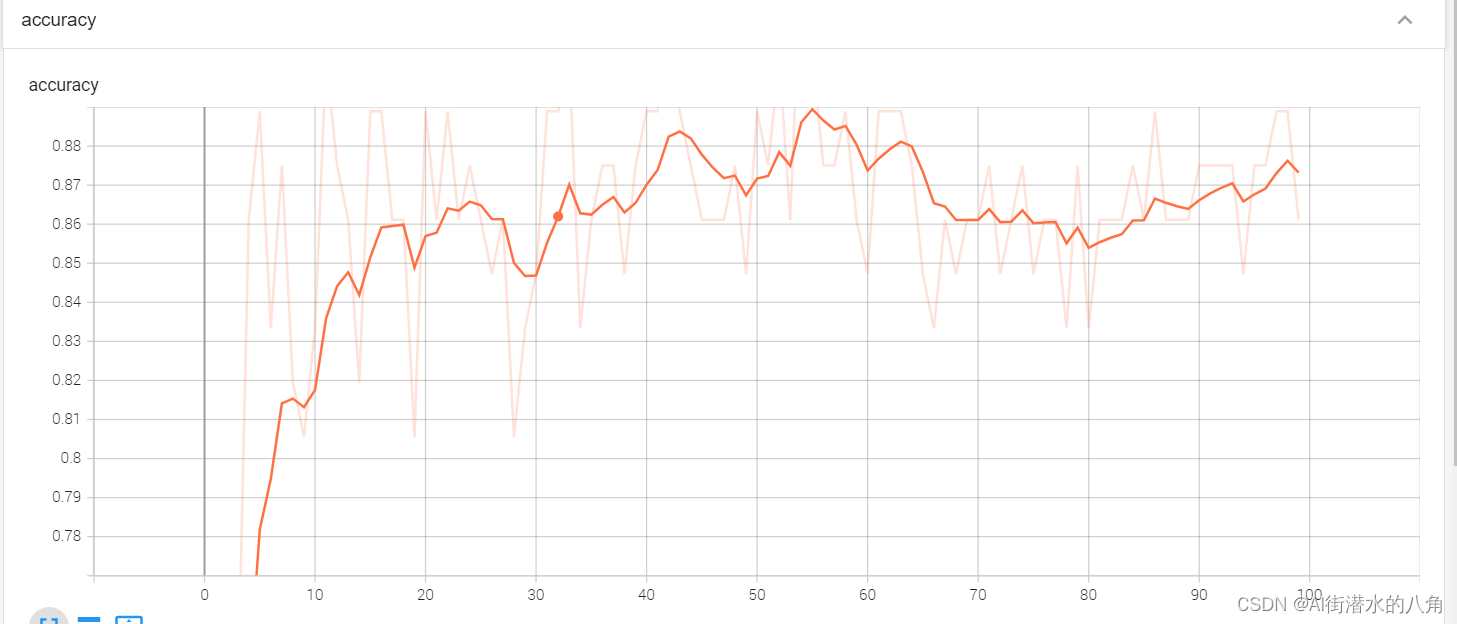

main(opt)第四步:统计正确率

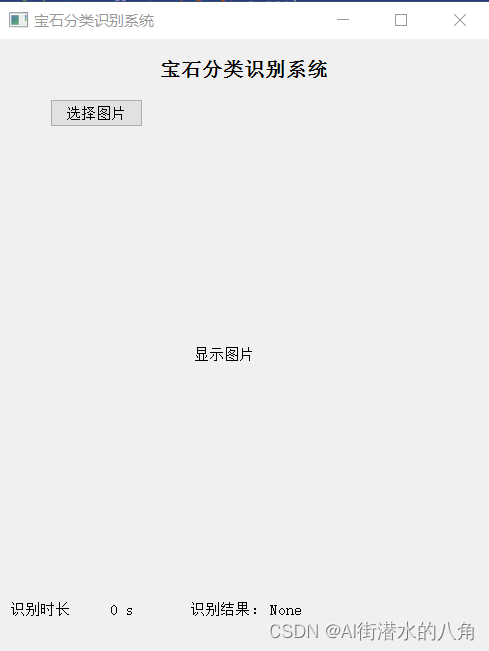

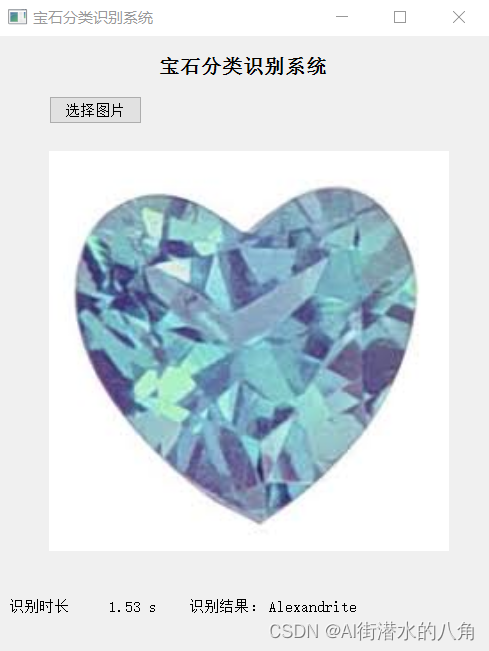
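The `evaluate` helper in `utils` is not shown and the training loop only logs a single overall accuracy per epoch. A single number can hide gemstones that are systematically confused with each other, so it can help to also look at accuracy per class. Below is a minimal, hypothetical sketch, assuming you have already collected the predicted labels (the argmax of the model's outputs) and the true labels over the validation set; `accuracy_report` is an illustrative name, not part of the project.

```python
def accuracy_report(preds, labels, class_names):
    """Overall top-1 accuracy plus per-class accuracy (recall) for each class."""
    if len(preds) != len(labels) or not labels:
        raise ValueError("preds and labels must be non-empty and equally long")
    correct = sum(p == y for p, y in zip(preds, labels))
    per_class = {}
    for idx, name in enumerate(class_names):
        hits = [p == y for p, y in zip(preds, labels) if y == idx]
        if hits:  # skip classes that have no validation samples
            per_class[name] = sum(hits) / len(hits)
    return correct / len(labels), per_class


# Toy example with the first three class indices from Step 1
overall, per_class = accuracy_report([0, 1, 1, 2], [0, 1, 2, 2],
                                     ["Alexandrite", "Almandine", "Benitoite"])
print(overall, per_class)
# → 0.75 {'Alexandrite': 1.0, 'Almandine': 1.0, 'Benitoite': 0.5}
```

Here the overall accuracy of 0.75 comes entirely from one Benitoite image being predicted as Almandine, which the per-class view makes visible.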

Step 5: Build the GUI

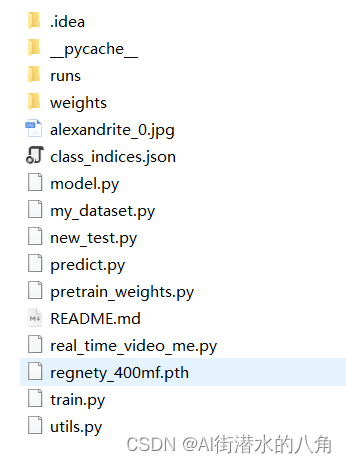
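The GUI code itself is not shown in the article. Whatever toolkit is used, the interface ultimately has to turn the model's 25 raw outputs for one image into readable text. A minimal sketch, under the assumption that `logits` is the output of the trained model for a single image (model in eval mode, image preprocessed with the `"val"` transform from the training script): apply a numerically stable softmax, rank the classes and format the top candidates. `top_k_predictions` and `format_for_label` are hypothetical helper names.

```python
import math

# Class names in label order, as in the index mapping from Step 1
CLASS_NAMES = ["Alexandrite", "Almandine", "Benitoite", "Beryl Golden", "Carnelian",
               "Cats Eye", "Danburite", "Diamond", "Emerald", "Fluorite",
               "Garnet Red", "Hessonite", "Iolite", "Jade", "Kunzite",
               "Labradorite", "Malachite", "Onyx Black", "Pearl", "Quartz Beer",
               "Rhodochrosite", "Sapphire Blue", "Tanzanite", "Variscite", "Zircon"]


def top_k_predictions(logits, class_names=CLASS_NAMES, k=3):
    """Convert raw model outputs into (class name, probability) pairs, best first."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]  # subtract the max for a stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(class_names[i], probs[i]) for i in ranked[:k]]


def format_for_label(preds):
    """One line per candidate, e.g. for the text of a GUI label widget."""
    return "\n".join(f"{name}: {p:.1%}" for name, p in preds)


logits = [0.0] * 25
logits[7] = 3.0  # pretend the model is most confident about index 7
print(format_for_label(top_k_predictions(logits, k=2)))
```

Showing the top few candidates with probabilities, rather than only the argmax, makes visually similar stones (for example the red garnets) easier to judge in the interface.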

Step 6: Contents of the whole project

The project includes the training code, the trained model and the training logs, as well as the dataset and the GUI code.

Code download: Source code of a RegNet deep-learning system for recognizing and classifying 25 kinds of gemstones, built on the PyTorch framework

If you have any questions, send a private message or leave a comment; every question will be answered.